Best Thunderbolt 5 Docking Stations: Future-Proof Your Desk Setup in 2025

July 29, 2025

Udio AI Music Generator July Update: Inpainting and Section-Level Editing That Change Everything

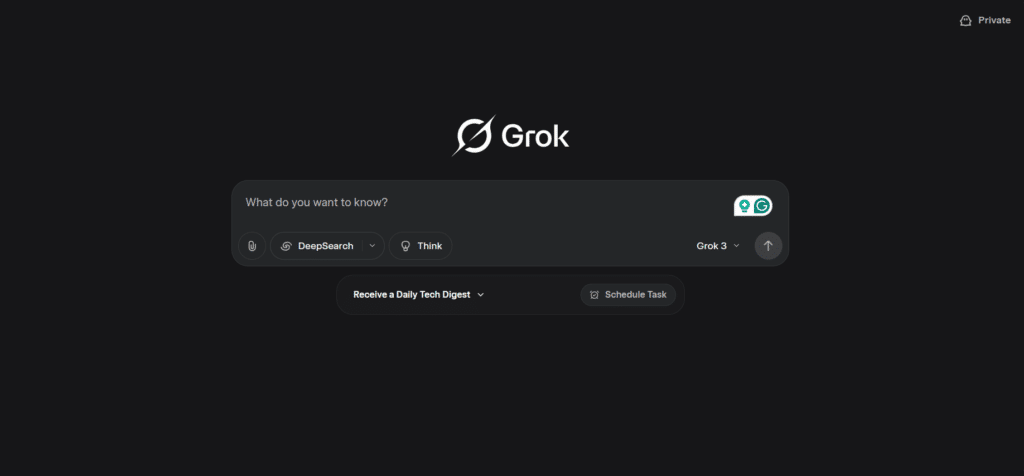

July 30, 2025

An AI model that can reason through your question while simultaneously pulling live data from X, the web, and breaking news feeds is no longer a roadmap slide. xAI Grok 4, launched July 9, 2025, is pitched by xAI as the first frontier model with real-time data integration built directly into its reasoning loop, and the benchmarks suggest this isn’t just marketing.

What Makes xAI Grok 4 Different from Every Other Model

Let’s cut through the noise. Every major AI lab has been shipping reasoning models in 2025: OpenAI’s o3, Google’s Gemini 2.5 Pro, Anthropic’s Claude. What xAI emphasizes about Grok 4 is that it was trained from the ground up to use tools as part of its reasoning process, rather than having tool use bolted on after pretraining.

Built on version 6 of xAI’s foundation model, Grok 4 used 100x more training compute than Grok 2 and 10x more reinforcement learning compute than Grok 3, according to xAI. Training ran on Colossus, xAI’s roughly 200,000-GPU supercomputer in Memphis, and the model learned to interleave web searches, code execution, and data retrieval directly within its chain-of-thought reasoning. xAI presents this as a paradigm shift: instead of generalizing tool usage from examples, Grok 4 was explicitly trained through RL to decide when and how to reach for external tools mid-thought. (OpenAI has said o3 was also trained with reinforcement learning to decide when to use tools, so xAI is not alone in this approach.)

What this looks like in practice: when you ask Grok 4 a complex multi-step question (say, analyzing the financial implications of a recent merger announcement), the model doesn’t just generate text from its training data. It recognizes mid-reasoning that it needs current stock data, fires off a Live Search query, integrates the results into its chain of thought, then continues reasoning with the fresh context. None of this requires orchestration on the user’s side: the model decides autonomously which tools to invoke and when.
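The control flow described above can be sketched as a generic agent loop. This is a minimal illustration, not xAI’s implementation: `toy_model_step` is a hypothetical stand-in for the model that either requests a tool or returns an answer, and `live_search` here is a stub that returns a placeholder string rather than calling any real search API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple, Union


@dataclass
class ToolCall:
    """A request from the model to run a named tool with one argument."""
    name: str
    argument: str


# A "model step" reads the transcript so far and returns either a ToolCall
# (reasoning continues after the tool runs) or a plain string (the final answer).
ModelStep = Callable[[List[str]], Union[ToolCall, str]]


def run_agent(model_step: ModelStep,
              tools: Dict[str, Callable[[str], str]],
              question: str,
              max_steps: int = 5) -> Tuple[str, List[str]]:
    transcript = [f"question: {question}"]
    for _ in range(max_steps):
        action = model_step(transcript)
        if isinstance(action, ToolCall):
            # Run the requested tool and feed its result back into the context.
            result = tools[action.name](action.argument)
            transcript.append(f"tool {action.name}({action.argument!r}) -> {result}")
        else:
            transcript.append(f"answer: {action}")
            return action, transcript
    raise RuntimeError("model did not produce an answer within max_steps")


def toy_model_step(transcript: List[str]) -> Union[ToolCall, str]:
    """Hypothetical model: searches once, then answers from the latest tool result."""
    tool_results = [line for line in transcript if line.startswith("tool ")]
    if not tool_results:
        return ToolCall("live_search", "latest merger announcement")
    return f"answer grounded in: {tool_results[-1]}"


# Hypothetical stub tool: returns a placeholder instead of querying a real service.
TOOLS = {"live_search": lambda query: f"stub result for {query!r}"}

if __name__ == "__main__":
    answer, steps = run_agent(toy_model_step, TOOLS, "What does the merger imply?")
    for step in steps:
        print(step)
```

Keeping each tool result in the transcript is what lets the next model step reason over fresh data; a production loop would also handle tool errors and budget how many tool calls a single question may trigger.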

Real-Time Data: xAI Grok 4’s Secret Weapon

The Live Search API is where xAI’s position in the Elon Musk ecosystem pays off. While ChatGPT’s search has leaned on Bing and Gemini grounds its answers in Google Search, Grok 4 taps directly into X’s firehose: real-time posts, trending topics, media attachments, and social signals that no rival AI lab has native access to.

Here’s what that means in practice: ask Grok 4 about a breaking event, and it doesn’t just search the web. It cross-references live X posts from verified journalists, official accounts, and eyewitnesses, then synthesizes that with traditional web sources. The Live Search API supports domain filtering (up to 5 domains), image understanding during browsing, and automatic citation generation — all running in the background while the model reasons through your query.
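To make the domain-filtering limit concrete, here is a sketch of a Live Search request body with the five-domain cap enforced on the client side. The `search_parameters`, `sources`, `allowed_websites`, `mode`, and `return_citations` field names follow xAI’s Live Search documentation as we understand it, and the `grok-4` model name is an assumption; xAI’s search options have been evolving, so check the current API reference before relying on any of them. The code only builds the JSON body; it does not send it.

```python
import json
from typing import Any, Dict, Sequence

# The article states that domain filtering supports up to 5 domains.
MAX_ALLOWED_WEBSITES = 5


def build_live_search_request(prompt: str,
                              allowed_websites: Sequence[str] = (),
                              model: str = "grok-4") -> Dict[str, Any]:
    """Build a chat-completions body with Live Search enabled (field names assumed)."""
    if len(allowed_websites) > MAX_ALLOWED_WEBSITES:
        raise ValueError(
            f"at most {MAX_ALLOWED_WEBSITES} domains allowed, got {len(allowed_websites)}")
    web_source: Dict[str, Any] = {"type": "web"}
    if allowed_websites:
        # Restrict web results to these domains only.
        web_source["allowed_websites"] = list(allowed_websites)
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "search_parameters": {
            "mode": "auto",            # let the model decide whether to search
            "sources": [web_source, {"type": "x"}],
            "return_citations": True,  # ask for source URLs alongside the answer
        },
    }


if __name__ == "__main__":
    body = build_live_search_request(
        "Summarize today's coverage of the merger.",
        allowed_websites=["reuters.com", "apnews.com"],
    )
    print(json.dumps(body, indent=2))
```

The resulting body would be POSTed to `https://api.x.ai/v1/chat/completions` with an `Authorization: Bearer` header carrying your API key. Validating the domain count locally turns an API error into an immediate, descriptive exception.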

The API launched as a free beta, signaling xAI’s aggressive push to build developer adoption before competitors can replicate its real-time advantage. For developers building agents that need current information (financial analysis tools, news aggregators, social monitoring dashboards), it makes search a native part of the model API instead of a separate service to cobble together, and its X data is something rival built-in search tools don’t offer.

Benchmark Results: The Numbers That Matter

Grok 4 scored 73 on the Artificial Analysis Intelligence Index, ahead of OpenAI o3 (70), Google Gemini 2.5 Pro (70), and Anthropic Claude 4 Opus (64). But the individual benchmarks tell a more nuanced story.

On GPQA Diamond, the graduate-level science reasoning benchmark, Grok 4 hit 88%, an all-time high in Artificial Analysis’s testing. On Humanity’s Last Exam (HLE), which its creators describe as possibly the final closed-ended academic benchmark of its kind, Artificial Analysis measured the base model at 24%. Impressive, but the real headline is Grok 4 Heavy, the multi-agent configuration in which multiple Grok 4 instances collaborate on a problem: xAI reports it at 50.7% on HLE’s text-only subset, roughly double the tool-free scores.

xAI claims the model achieves PhD-level performance across every academic discipline, with perfect SAT scores and near-perfect GRE results. In Artificial Analysis’s coding evaluations (LiveCodeBench and SciCode), Grok 4 leads the field, and in math (AIME24 and MATH-500) it’s similarly dominant.

Grok 4 Heavy: When One Model Isn’t Enough

Grok 4 Heavy deserves special attention because it previews where the entire industry is heading. Instead of a single model pass, Heavy orchestrates multiple Grok 4 agents that independently reason about a problem, then synthesize their findings. On HLE’s text-only subset, xAI reports that Grok 4 without tools scores 26.9%. With tools enabled (code execution, web search), it reaches 41.0%. In the Heavy multi-agent configuration, it climbs to 50.7%.
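The stage-by-stage gains are easier to compare as arithmetic. This sketch uses only the three HLE figures quoted above (which xAI reports for the benchmark’s text-only subset) and computes the absolute gain in percentage points and the ratio between consecutive configurations.

```python
from typing import Dict, List, Tuple

# HLE text-only subset scores in percent, in the order the configurations were described.
HLE_SCORES: Dict[str, float] = {
    "no_tools": 26.9,
    "with_tools": 41.0,
    "heavy": 50.7,
}


def stage_gains(scores: Dict[str, float]) -> List[Tuple[str, float, float]]:
    """Return (transition, gain in points, ratio) for each consecutive pair of stages."""
    stages = list(scores.items())
    gains = []
    for (prev_name, prev), (name, cur) in zip(stages, stages[1:]):
        gains.append((f"{prev_name} -> {name}", round(cur - prev, 1), round(cur / prev, 2)))
    return gains


if __name__ == "__main__":
    for transition, points, ratio in stage_gains(HLE_SCORES):
        print(f"{transition}: +{points} points, {ratio}x")
    overall = HLE_SCORES["heavy"] / HLE_SCORES["no_tools"]
    print(f"heavy vs no_tools: {overall:.2f}x")
```

Tools contribute the larger absolute jump (+14.1 points, 1.52x), multi-agent orchestration adds another +9.7 points (1.24x), and Heavy ends at about 1.88x the tool-free score: close to, but not quite, double.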

Heavy’s 50.7% is a milestone: xAI presents it as the first time an AI system has crossed 50% on a benchmark explicitly designed to stump AI, albeit on the text-only subset and as a vendor-reported number. The jump from single-agent to multi-agent (41% → 50.7%) suggests that orchestration matters as much as raw intelligence. For anyone building AI applications, this is a clear signal: the future is multi-agent, not monolithic.
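xAI has not published how Heavy’s agents coordinate, so the following is only a generic illustration of the pattern: several independent agents answer in parallel, then a synthesis step combines their outputs. Here the synthesis step is a simple majority vote (in the style of self-consistency sampling), which is our stand-in rather than xAI’s method, and the agents are hypothetical deterministic stubs in place of real model calls.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, Tuple

Agent = Callable[[str], str]


def orchestrate(question: str, agents: Sequence[Agent]) -> Tuple[str, int, List[str]]:
    """Run agents in parallel, then synthesize their answers by majority vote.

    Returns (winning answer, its vote count, all individual answers).
    """
    if not agents:
        raise ValueError("need at least one agent")
    # Each agent works independently; pool.map returns results in the agents' order.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(question), agents))
    # Synthesis step: the most common answer wins (ties go to the first one seen).
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes, answers


if __name__ == "__main__":
    # Hypothetical stub agents; real agents would each call a model.
    stub_agents = [lambda q: "B", lambda q: "A", lambda q: "B", lambda q: "C"]
    print(orchestrate("Which option is correct?", stub_agents))  # ('B', 2, ['B', 'A', 'B', 'C'])
```

Majority voting only helps when the agents’ errors are at least partly independent. A richer synthesis step, such as letting agents read and critique each other’s reasoning before answering, is closer to how xAI has informally described Heavy, but it requires a model in the loop to do the merging.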

Pricing, Speed, and Practical Considerations

Grok 4 is priced at $3.00 per million input tokens and $15.00 per million output tokens: competitive with frontier reasoning models, but not cheap. Artificial Analysis measured its output speed at 75 tokens per second, which places it behind o3 (188 tok/s), Gemini 2.5 Pro (142 tok/s), and Claude 4 Sonnet Thinking (85 tok/s), but ahead of Claude 4 Opus Thinking (66 tok/s).
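Here is a quick way to turn those list prices and speeds into per-request numbers. This sketch assumes every output token, including any hidden reasoning tokens, is billed at the output rate and streamed at the measured speed. It ignores time-to-first-token, any long-context pricing tiers, and any Live Search charges, and the 10,000-input / 2,000-output request is a made-up example.

```python
# Prices in USD per million tokens and output speeds in tokens/second,
# as quoted in the article.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00
OUTPUT_TOKENS_PER_SECOND = {
    "grok-4": 75,
    "o3": 188,
    "gemini-2.5-pro": 142,
    "claude-4-sonnet-thinking": 85,
    "claude-4-opus-thinking": 66,
}


def grok4_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Grok 4 cost in USD for one request at the quoted per-million-token rates."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def generation_seconds(output_tokens: int, model: str) -> float:
    """Seconds to stream the output at the model's measured speed (ignores time-to-first-token)."""
    return output_tokens / OUTPUT_TOKENS_PER_SECOND[model]


if __name__ == "__main__":
    cost = grok4_request_cost(10_000, 2_000)
    print(f"cost: ${cost:.2f}")  # cost: $0.06
    for model in ("grok-4", "o3"):
        print(f"{model}: {generation_seconds(2_000, model):.1f} s")  # 26.7 s, then 10.6 s
```

In this example the 2,000 output tokens cost as much as the 10,000 input tokens, and Grok 4 takes roughly 2.5x as long as o3 to stream the same answer, which matters more for interactive agents than for batch jobs.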

Context windows span 128K tokens in the consumer app and 256K through the API. The knowledge cutoff sits at December 2024, but the Live Search integration effectively makes this less relevant — the model can bridge the gap with real-time web and X data when it recognizes its training data might be stale.
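Before sending a long document, it helps to check it against those limits. This sketch treats "128K" and "256K" as 128,000 and 256,000 tokens and estimates token counts with a rough four-characters-per-token rule of thumb for English text; both are assumptions, as is the 8,000-token reply budget, and a real check should rely on the token counts xAI reports.

```python
CONTEXT_LIMITS = {"app": 128_000, "api": 256_000}  # assumed exact token counts
CHARS_PER_TOKEN = 4  # rough heuristic for English text, not Grok's actual tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, surface: str, reserved_output: int = 8_000) -> bool:
    """True if the prompt plus a (hypothetical) reply budget fits the surface's window."""
    return estimate_tokens(text) + reserved_output <= CONTEXT_LIMITS[surface]


if __name__ == "__main__":
    report = "x" * 600_000  # stand-in for a document of roughly 150K tokens
    for surface in ("app", "api"):
        print(surface, fits_in_context(report, surface))  # app False, then api True
```

Reserving room for the reply matters because the context window is shared between the prompt and the model’s output, including any reasoning it produces.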

At launch, Grok 4 became available to X Premium+ and SuperGrok subscribers and through the xAI API, while Grok 4 Heavy is reserved for the pricier SuperGrok Heavy tier. This tiered approach mirrors what OpenAI did with o1, but xAI’s X integration means Premium+ subscribers get something competitors can’t match: an AI assistant that understands the social conversation happening in real time.

What This Means for the AI Industry

Grok 4’s launch crystallizes three trends that will define the second half of 2025. First, reasoning models are the new frontier: raw language generation is table stakes, and the competition has shifted to multi-step problem solving. Second, real-time data integration is becoming a differentiator rather than a checkbox feature. Google has Search, xAI has X, and other labs will need to find their own data moats. Third, multi-agent orchestration (Grok 4 Heavy) is graduating from research curiosity to production architecture.

For developers, the implications are immediate. The Live Search API opens up use cases that were previously impossible without complex multi-service architectures: agents that can monitor X for breaking developments, cross-reference them with web sources, and reason about implications — all in a single API call. Whether you’re building financial analysis tools, news intelligence platforms, or social monitoring systems, Grok 4’s native real-time integration removes an entire layer of infrastructure complexity.

Consider a practical scenario: a developer building a market intelligence agent previously needed to wire together a search API (Serper, Tavily), a social media API (X API v2), a news aggregator, and an LLM — managing authentication, rate limits, and data normalization across four different services. With Grok 4 and Live Search, that entire stack collapses into a single API call. The model handles source selection, query formulation, result synthesis, and reasoning in one unified pipeline. That’s not incremental improvement; it’s an architectural simplification that changes what solo developers and small teams can realistically build.
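From the developer’s side, the collapsed stack might look something like this: one request that enables web, X, and news sources together, and one helper that pulls the answer and citation URLs out of the response. As with any Live Search example, the `search_parameters` layout, the `news` source type, the `"on"` mode, and a top-level `citations` list in the response are assumptions based on xAI’s documentation at the time of writing. The topic string and the mock response are invented placeholders, not real API output.

```python
from typing import Any, Dict, List, Tuple


def build_market_intel_request(topic: str, model: str = "grok-4") -> Dict[str, Any]:
    """One request replacing separate search, social, and news integrations (fields assumed)."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a market intelligence analyst. Cite sources."},
            {"role": "user", "content": f"What happened today with {topic}, and why does it matter?"},
        ],
        "search_parameters": {
            "mode": "on",  # force a search instead of letting the model decide
            "sources": [{"type": "web"}, {"type": "x"}, {"type": "news"}],
            "return_citations": True,
        },
    }


def extract_answer_and_citations(response: Dict[str, Any]) -> Tuple[str, List[str]]:
    """Pull the assistant text and any citation URLs out of a chat-completions response."""
    answer = response["choices"][0]["message"]["content"]
    citations = list(response.get("citations", []))  # empty if no citations were returned
    return answer, citations


if __name__ == "__main__":
    request = build_market_intel_request("a hypothetical merger")
    print([source["type"] for source in request["search_parameters"]["sources"]])
    # Invented response shaped like an OpenAI-compatible chat completion.
    mock_response = {
        "choices": [{"message": {"role": "assistant", "content": "Summary..."}}],
        "citations": ["https://example.com/a", "https://example.com/b"],
    }
    print(extract_answer_and_citations(mock_response))
```

Authentication and rate limits don’t disappear, but they now apply to one provider instead of four, and citations arrive alongside the answer instead of being stitched together from separate services.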

The AI race in 2025 isn’t about who has the biggest model anymore. It’s about who can connect reasoning with reality in real time. With Grok 4, xAI just made a very convincing case that they understand what comes next.

Interested in building AI-powered automation pipelines or need help evaluating AI models for your business? Let’s talk.
