June 3, 2025

Six days. That is all that stands between us and what could be the most consequential WWDC keynote since 2014. With WWDC 2025 set to kick off on June 9, leaks and rumors have reached a fever pitch, and the loudest signal points squarely at iOS 19 AI features that could redefine how we interact with our iPhones. Apple is no longer just catching up in the AI race. Based on everything we have seen so far, Cupertino appears ready to leap ahead with a fundamentally different playbook.

Why WWDC 2025 iOS 19 AI Features Matter More Than Any Previous Update

When Apple Intelligence debuted at WWDC 2024, the reception was mixed. Google and Samsung had been pushing cloud-based AI for years, and Apple’s on-device approach felt cautious by comparison. But here is the thing about Apple: they tend to wait until the technology is genuinely ready, and then they go all in.

This year, the foundation is finally in place. According to Apple’s own machine learning research documentation, the company has developed an on-device foundation model with approximately 3 billion parameters that can run on iPhone 15 Pro and later hardware. The numbers are impressive: a time-to-first-token latency of about 0.6 milliseconds per prompt token and a generation rate of 30 tokens per second on iPhone 15 Pro. For context, that is fast enough to generate roughly 60 tokens, a short paragraph, in about two seconds, all without sending a single byte to the cloud.
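To make those figures concrete, here is a rough back-of-the-envelope estimate in Python. It is only a sketch: it assumes the 0.6 millisecond figure applies to each prompt token before the first output token appears, and that generation then proceeds at a steady 30 tokens per second. The function name and the example token counts are illustrative, not anything Apple publishes.

```python
def estimate_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    ms_per_prompt_token: float = 0.6,   # latency figure quoted above (assumed per prompt token)
    tokens_per_second: float = 30.0,    # generation rate quoted above
) -> float:
    """Estimate total response time as prompt processing plus generation."""
    if prompt_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Time spent reading the prompt before the first output token appears.
    time_to_first_token = prompt_tokens * ms_per_prompt_token / 1000.0
    # Time spent producing the reply at a constant rate.
    generation_time = output_tokens / tokens_per_second
    return time_to_first_token + generation_time


if __name__ == "__main__":
    # Hypothetical request: a 500-token prompt and a 60-token reply.
    seconds = estimate_response_seconds(prompt_tokens=500, output_tokens=60)
    print(f"{seconds:.2f} s")
```

With a 500-token prompt and a 60-token reply, the estimate comes out to about 2.3 seconds, most of it spent generating the output rather than reading the prompt.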

The privacy implications alone make this significant. While competitors route your queries, photos, and conversations through remote servers, Apple’s approach keeps everything locked on your device. But privacy is just the starting point. The real story is what Apple is expected to do with this capability across iOS 19.

Foundation Models Framework: The Developer Game-Changer Nobody Is Talking About Enough

While Visual Intelligence and Smart Replies will grab the consumer headlines, the most transformative announcement expected at WWDC 2025 may be aimed squarely at developers. The anticipated Foundation Models framework would give third-party app developers direct access to Apple’s on-device large language model through a structured API.

Consider the implications. Right now, if a developer wants to add AI capabilities to their iOS app, they have two options: train and deploy their own model (expensive and complex) or integrate a cloud-based API from OpenAI, Google, or another provider (ongoing costs, network latency, privacy concerns). The Foundation Models framework would remove both barriers. Developers would get access to a capable on-device LLM that runs on the user’s hardware with no per-request fees, no network round trip, and no user data leaving the device.

This could trigger an explosion of AI-powered features across the App Store. Imagine journaling apps that provide thoughtful writing prompts based on your emotional patterns. Fitness apps that generate personalized workout plans through natural language conversation. Recipe apps that adapt instructions based on what ingredients your camera sees in your fridge. The possibilities scale with the creativity of millions of developers — not just Apple’s internal teams.

MacRumors has characterized the expected changes as potentially the biggest transformation since iOS 7. And that comparison extends beyond AI — rumors point to a complete visual redesign inspired by visionOS, introducing a glassmorphism aesthetic that would make iOS 19 look and feel dramatically different from anything that came before.

Beyond the Big Three: Live Translation, Enhanced Personal Voice, and Health Coaching

The AI expansion in iOS 19 is not limited to image understanding and messaging. Several additional features are rumored to round out what could be the most comprehensive AI update in mobile history.

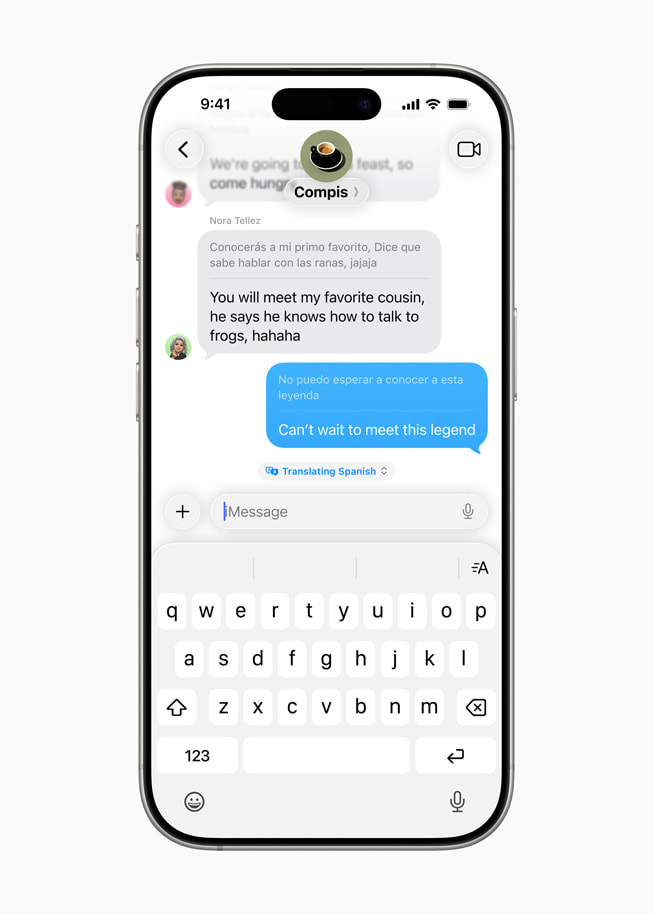

Live translation is expected to get a major upgrade, with AirPods integration enabling real-time translation during FaceTime calls, Messages conversations, and potentially even in-person interactions. The rumored expansion to support more than 10 languages would significantly broaden the feature’s utility for international users and travelers.

Enhanced Personal Voice stands out as particularly impressive from a technical standpoint. The current implementation requires an extensive recording session to create a synthetic version of your voice. iOS 19 is expected to cut this down dramatically — just 10 phrases and under one minute of total recording time. While positioned as an accessibility feature, the technology could eventually enable Siri to speak in your own voice, creating a truly personal AI assistant experience.

Apple Watch AI integration and an AI-powered health coaching tool are also in the rumor mix. These would analyze workout patterns, sleep data, and heart rate trends to deliver personalized health guidance — all processed on-device through the Neural Engine in the latest Apple Watch chips.

And then there is the AI battery management system. Rumors suggest iOS 19 could use machine learning to predict your daily usage patterns and intelligently manage power consumption, potentially extending battery life by learning when you need full performance and when background optimization is sufficient.

The Bigger Picture: Apple’s Privacy-First AI Philosophy Gets Its Proving Ground

Step back from the individual features for a moment and the strategic picture becomes clear. While Google and Microsoft have committed to a cloud-first AI architecture, powerful but dependent on server infrastructure and inherently involving data transfer, Apple has built its strategy around a different paradigm. On-device processing. Local data. Privacy by design.

For the first time, the hardware appears ready to fully deliver on that promise. The Neural Engine in Apple’s latest chips provides enough computational power to run meaningful AI models locally. The Foundation Models framework extends that capability to the entire developer ecosystem. And the hybrid on-device/cloud compute approach — where Apple’s Private Cloud Compute handles tasks too large for on-device processing while maintaining end-to-end encryption — fills the gap for heavier workloads.
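As a rough illustration of that hybrid split, the routing decision could be sketched like this in Python. Everything here is hypothetical: Apple has not published how requests are routed, and the `Task` type, the `choose_route` function, and the 4,096-token budget are placeholders chosen only to show the shape of the idea.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    PRIVATE_CLOUD = "private_cloud"


@dataclass(frozen=True)
class Task:
    name: str
    estimated_tokens: int  # hypothetical measure of how heavy the request is


def choose_route(task: Task, on_device_token_budget: int = 4096) -> Route:
    """Prefer local processing; fall back to the cloud only for oversized tasks.

    The budget is an illustrative placeholder, not a documented Apple limit.
    """
    if task.estimated_tokens <= on_device_token_budget:
        return Route.ON_DEVICE
    return Route.PRIVATE_CLOUD


if __name__ == "__main__":
    for task in (Task("summarize a note", 800), Task("analyze a long report", 20_000)):
        print(task.name, "->", choose_route(task).value)
```

The point of the sketch is the default: work stays on the device unless it cannot fit, and only then does the heavier path come into play.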

If everything the leaks suggest proves true on June 9, Apple will not just be adding AI features to iOS. It will be making a compelling case that you do not have to sacrifice privacy to get world-class AI capabilities. That is a proposition that resonates far beyond the tech enthusiast community — it speaks to every person who has ever felt uneasy about where their data goes.

This upcoming Monday could mark the moment Apple Intelligence stops being a promise and starts being a platform. Whether you are a developer planning your next app, a consumer deciding on your next phone, or simply someone who cares about the direction of technology, WWDC 2025 deserves your full attention. The countdown is on.
