The man who called AI-generated music ‘platform pollution’ just shook hands with AI. In October 2025, Universal Music Group CEO Sir Lucian Grainge sent a company-wide memo labeling AI-generated content as pollution flooding digital platforms. Three months later, on January 6, 2026, UMG announced from Santa Monica a strategic collaboration with NVIDIA, a company valued at $4.56 trillion. At its center is NVIDIA’s Music Flamingo, an AI model trained on approximately 2 million full songs across more than 100 genres. This isn’t an AI that makes music. It’s an AI that understands music, and that distinction shapes the entire deal.

What Is Music Flamingo? An AI That Reads Full Tracks, Not Just Metadata

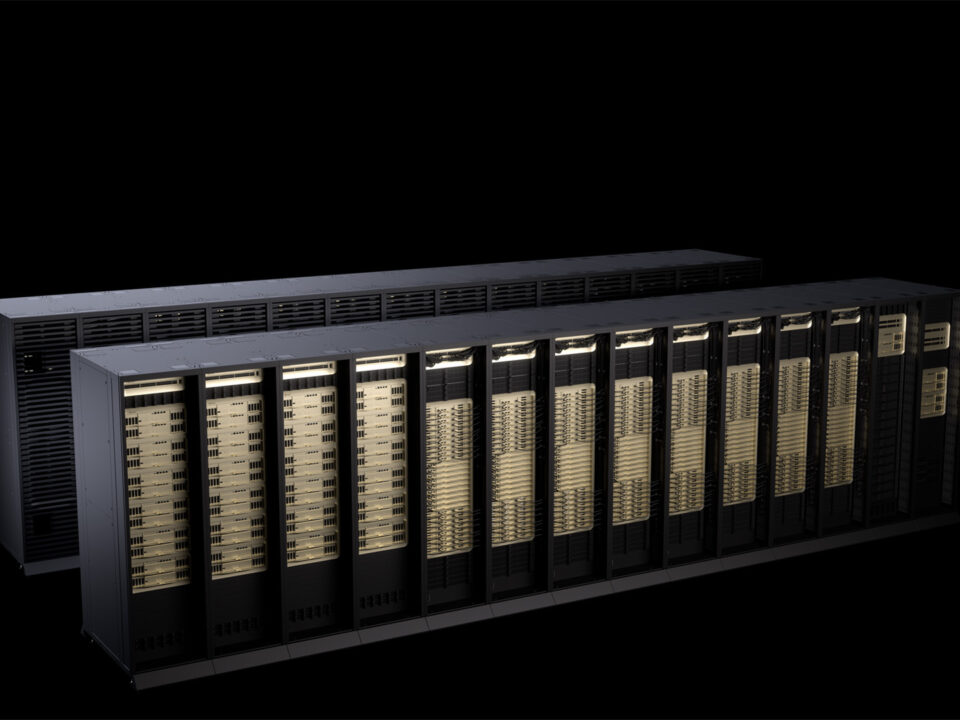

Music Flamingo is built on NVIDIA’s Audio Flamingo architecture but engineered specifically for music. Where previous music AI tools relied on 30-second clips, metadata tags, or user behavior patterns, Music Flamingo analyzes full-length tracks up to 15 minutes long. It doesn’t just hear the audio; it reads its structure: chord progressions, instrument timbres, lyrics in multiple languages, and, critically, cultural context, meaning the web of influences, traditions, and stylistic lineages that give a piece of music its identity.

The training data is staggering: roughly 2 million complete songs spanning 100+ genres. The model also uses chain-of-thought reasoning: instead of jumping straight to a label, it works through intermediate analytical steps, much as an experienced musicologist might, tracing a chord progression back to its possible influences, connecting a vocal melody’s phrasing to regional singing traditions, or identifying production techniques that place a track within a specific era or movement. That is fundamentally different from the shallow tagging systems behind most current recommendation algorithms.

Music Flamingo Performance: Beating GPT-4o and Other Models Across 10+ Benchmarks

Numbers tell the story. In Chinese lyric transcription testing, Music Flamingo achieved an error rate of 12.9%, compared to GPT-4o’s 53.7%. That’s not a marginal improvement: GPT-4o made roughly four times as many errors. Across more than 10 benchmarks, including music captioning, instrument recognition, and multilingual transcription, Music Flamingo consistently outperformed existing models.
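The article doesn’t specify how the error rate is calculated. Transcription benchmarks usually score output with an edit-distance metric (word error rate, or character error rate for languages like Chinese), so the minimal Python sketch below assumes a character error rate: the Levenshtein distance between the reference and transcribed text, divided by the reference length. The lyric strings are hypothetical, and the last lines simply check the ‘four-fold’ arithmetic against the reported figures.

```python
def levenshtein(ref: str, hyp: str) -> int:
    """Minimum substitutions, insertions, and deletions to turn ref into hyp."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # delete r
                            curr[j - 1] + 1,      # insert h
                            prev[j - 1] + cost))  # substitute (or match)
        prev = curr
    return prev[-1]


def char_error_rate(ref: str, hyp: str) -> float:
    """Assumed metric: character error rate = edit distance / reference length."""
    if not ref:
        raise ValueError("reference must be non-empty")
    return levenshtein(ref, hyp) / len(ref)


# Hypothetical reference line and transcription, for illustration only.
reference = "月亮代表我的心"
hypothesis = "月亮代表你的心"
print(f"CER: {char_error_rate(reference, hypothesis):.3f}")  # 1 substitution over 7 characters

# Error rates reported in the article.
gpt4o_rate, flamingo_rate = 53.7, 12.9
print(f"GPT-4o error rate is {gpt4o_rate / flamingo_rate:.2f}x Music Flamingo's")
```

With the reported figures the ratio works out to about 4.16, so ‘four-fold’ is a fair rounding. Note that a rate defined this way can exceed 100% when a transcription inserts many extra characters, because insertions are counted against the reference length.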

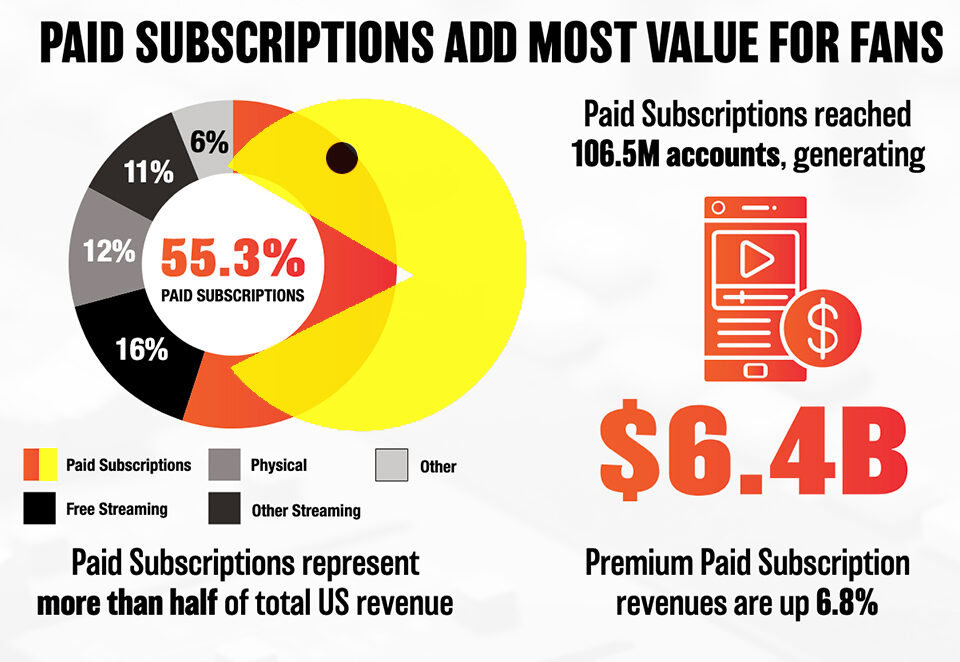

Why does this matter for the music industry? Because streaming platform recommendations have historically been built on behavioral data: play counts, skip patterns, playlist additions, listening time. These signals tell you what users did, but not why they liked something. Music Flamingo adds a layer of genuine musical understanding. Instead of recommending songs because “people who listened to Track A also listened to Track B” (the logic of collaborative filtering), it can recommend songs because they share harmonic DNA, similar timbral qualities, or parallel cultural roots. The gap between collaborative filtering and actual musical comprehension is the gap between an algorithm that tracks what sells and a record store owner who has spent 30 years listening to everything.
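To make the contrast between behavioral and content-based recommendation concrete, here is a minimal Python sketch on entirely hypothetical data. It ranks tracks two ways: item-item collaborative filtering from co-listening counts, and content-based similarity using cosine similarity over invented feature vectors. The feature values stand in for whatever representation a music-understanding model might produce; they are not Music Flamingo output, and none of the function names come from UMG or NVIDIA.

```python
from collections import Counter
from math import sqrt

# Hypothetical listening histories: each set holds the tracks one user played.
histories = [
    {"A", "B", "C"},
    {"A", "B"},
    {"A", "B", "D"},
    {"C", "D"},
]

# Hypothetical content features per track (say, harmonic, timbral, and rhythmic
# scores). These numbers are invented and are not Music Flamingo output.
features = {
    "A": [0.9, 0.1, 0.8],
    "B": [0.1, 0.9, 0.2],
    "C": [0.2, 0.8, 0.1],
    "D": [0.8, 0.2, 0.9],
}


def co_listen_neighbors(seed: str, histories: list[set[str]]) -> list[tuple[str, int]]:
    """Collaborative filtering signal: how often each track shares a history with the seed."""
    counts = Counter()
    for played in histories:
        if seed in played:
            counts.update(t for t in played if t != seed)
    return counts.most_common()


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))


def content_neighbors(seed: str, features: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Content signal: rank other tracks by similarity of their musical features."""
    scores = [(t, cosine(features[seed], f)) for t, f in features.items() if t != seed]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


print(co_listen_neighbors("A", histories)[0])  # ('B', 3): B is co-listened most often
print(content_neighbors("A", features)[0][0])  # D: closest to A in feature space
```

The two signals disagree here: people who play A mostly also play B, yet B’s invented features look nothing like A’s, while D sounds closest. Real recommenders often blend both kinds of signal rather than relying on one.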

Consider a practical example. A listener discovers a contemporary Malian artist and loves the guitar work. A behavioral algorithm might suggest other West African artists based on listener overlap. Music Flamingo could identify the specific tuning system and rhythmic pattern, trace their connection to desert blues traditions, and surface artists from entirely different regions who share those musical traits, perhaps a Turkish saz player or a Mississippi Delta blues guitarist with a similar modal approach. That’s music discovery at a level that metadata tags alone cannot reach.
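The Malian guitar scenario can be sketched as a contrast between filtering on a region tag and matching on shared musical traits. This minimal Python example assumes each track is described by a set of trait labels (standing in for the tuning system, rhythmic pattern, and modal approach mentioned above) and scores overlap with Jaccard similarity. The catalog, trait labels, and threshold are all hypothetical; the article does not say how Music Flamingo represents its analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    title: str
    region: str              # metadata tag
    traits: frozenset[str]   # hypothetical musical attributes inferred from the audio


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Fraction of traits two tracks share, from 0.0 (none) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def by_region(seed: Track, catalog: list[Track]) -> list[str]:
    """Metadata-style discovery: return other tracks with the same region tag."""
    return [t.title for t in catalog if t != seed and t.region == seed.region]


def by_traits(seed: Track, catalog: list[Track], min_score: float = 0.5) -> list[str]:
    """Trait-based discovery: ignore region, rank by shared musical traits."""
    scored = [(jaccard(seed.traits, t.traits), t.title) for t in catalog if t != seed]
    return [title for score, title in sorted(scored, reverse=True) if score >= min_score]


# Entirely hypothetical catalog and trait labels, for illustration only.
seed = Track("Malian guitar track", "West Africa",
             frozenset({"modal", "drone", "pentatonic", "repetitive riff"}))
wa_pop = Track("Other West African pop track", "West Africa",
               frozenset({"major key", "horn section", "four-on-the-floor"}))
saz = Track("Turkish saz piece", "Anatolia",
            frozenset({"modal", "drone", "repetitive riff", "microtonal"}))
delta = Track("Delta blues recording", "Mississippi Delta",
              frozenset({"modal", "pentatonic", "repetitive riff", "slide guitar"}))
catalog = [seed, wa_pop, saz, delta]

print(by_region(seed, catalog))  # ['Other West African pop track']
print(by_traits(seed, catalog))  # the saz piece and the Delta recording, each scoring 0.6
```

The region filter returns only the track that shares a tag, even though it shares no traits with the seed; the trait match ignores geography entirely and surfaces the two cross-regional recordings.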

The Three Pillars: Discovery, Engagement, and the Abbey Road Artist Incubator

UMG and NVIDIA structured their collaboration around three pillars, each addressing a different stakeholder in the music ecosystem.

Music Discovery is the most immediately impactful. By leveraging Music Flamingo’s contextual analysis capabilities, UMG aims to build recommendation systems that help billions of fans discover music they never knew existed — not through popularity metrics or demographic profiling, but through the essential musical characteristics of the tracks themselves. For independent and emerging artists on UMG’s roster, this could be transformative. Instead of competing purely on marketing spend and playlist placement, they can be discovered because their music genuinely connects to what a listener already loves, on a harmonic and cultural level that goes far deeper than genre labels.

Fan Engagement explores new forms of interaction between artists and audiences, mediated by AI. Imagine asking a natural language question about a song — “What instruments are used in the bridge section?” or “What genre traditions influenced this track?” — and receiving a detailed, musically literate answer. Music Flamingo’s analytical capabilities make this kind of deep, conversational engagement with music possible at scale, turning passive listening into active musical exploration.

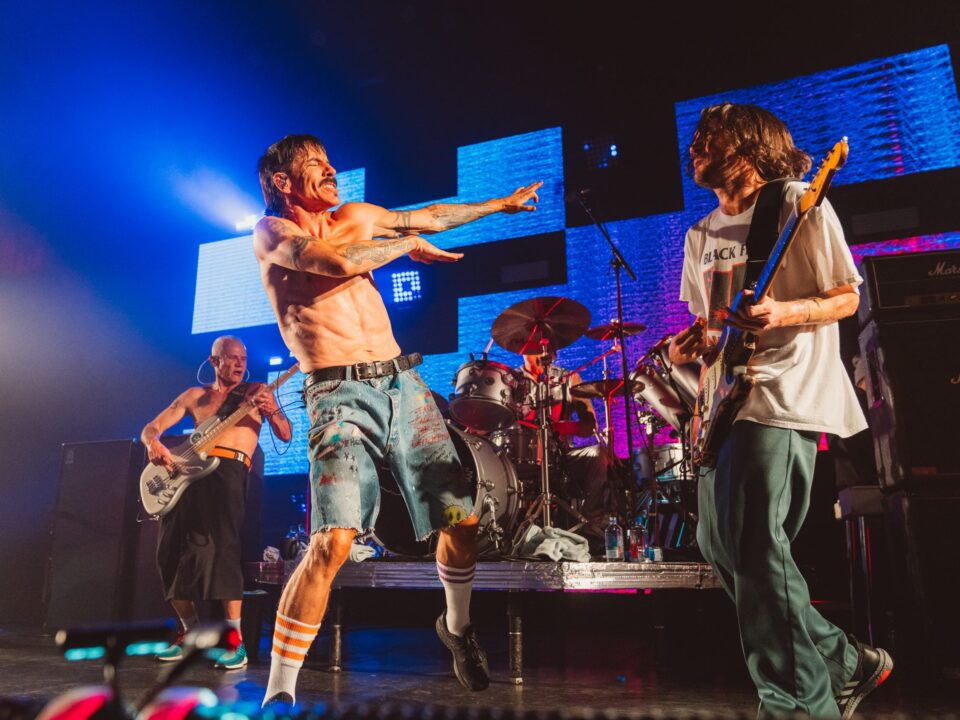

Artist Empowerment is where the partnership becomes tangible. UMG is launching an Artist Incubator program at Abbey Road Studios and Capitol Studios, two of the most storied recording facilities on the planet. UMG artists will get hands-on access to NVIDIA’s AI tools and can experiment with how these technologies might augment their creative process. This is a deliberate strategic choice: by placing AI tools inside legendary studios rather than Silicon Valley offices, UMG signals that the technology exists to serve artists, not replace them. The message is clear: AI walks into the studio as an assistant, not as the talent.

The Anti-Slop Strategy: How UMG Is Drawing the Line on Responsible AI

To understand this partnership, you need to see UMG’s full AI strategy. Sir Lucian Grainge’s October 2025 memo didn’t just criticize AI-generated content — it established a doctrine. The term ‘platform pollution’ was chosen deliberately, framing low-quality AI-generated music as an environmental problem for digital ecosystems, not merely a competitive one. UMG’s official position is unequivocal: “We will NOT license any model that uses an artist’s voice without consent.”

The NVIDIA collaboration is built directly on this foundation. Grainge stated that “NVIDIA choosing to take a leadership position in responsible AI principles is critically important.” NVIDIA’s VP Rev Lebaredian echoed this commitment, emphasizing the partnership would proceed “responsibly, with safeguards that protect artists’ work, ensure attribution, and respect copyright.”

UMG’s recent AI deal-making reinforces this pattern. The company has signed licensing agreements with Stability AI, settled its lawsuit with Udio, and forged partnerships with KLAY and Splice. Each of these deals operates within the framework of artist consent and rights protection. But here’s the notable gap: Warner Music Group settled with Suno in November 2025. UMG has not. This isn’t an oversight — it’s a signal. Suno represents the generative side of AI music, the tools that create new compositions. Music Flamingo represents the analytical side — AI that understands existing music without generating competing content. UMG is drawing a bright line between AI that supports human creation and AI that threatens to replace it.

This positioning is strategically brilliant. By partnering with NVIDIA on music understanding rather than music generation, UMG gets the commercial benefits of AI — better discovery, deeper engagement, new revenue streams — while maintaining its hardline stance against unauthorized AI-generated content. It’s not anti-AI. It’s anti-slop. And in an industry where artist trust is the most valuable currency, that distinction matters enormously.

Industry Implications: What the UMG-NVIDIA Music Flamingo Deal Means for Everyone

The ripple effects of this partnership extend far beyond UMG and NVIDIA. Here’s what it signals for the broader music industry.

- AI in music shifts from generation to understanding: Music Flamingo doesn’t create new songs. It deeply analyzes, discovers, and connects existing music. This is a strategic approach that maximizes AI’s value while avoiding the existential threat that generative AI poses to artists. Expect other major labels to pursue similar “understanding, not generating” partnerships.

- Rights-holder-centric AI ecosystems become the standard: When the world’s largest music rights company, backed by a tech partner valued at $4.56 trillion, insists on consent-based, attribution-respecting AI, it sets a precedent. Smaller labels, distributors, and platforms will face pressure to align with these standards.

- The Suno gap is a policy statement: UMG’s refusal to settle with Suno while actively partnering with NVIDIA on Music Flamingo draws the clearest line yet between acceptable and unacceptable AI applications in music. Generation without consent remains beyond the pale. Analysis with consent is welcomed with open arms.

- Streaming recommendation could get much smarter: If Music Flamingo delivers on its promise, the era of shallow recommendations built on genre tags and listening behavior alone could end. Culturally aware, harmonically literate discovery could fundamentally change how fans find new music, and how emerging artists get found.

- The artist incubator model matters: Placing AI tools in Abbey Road and Capitol Studios — rather than making artists come to tech conferences — sends a powerful message about who this technology is meant to serve. Other companies launching AI music tools should take note of this artist-first approach.

The music industry has spent the last two years oscillating between AI panic and AI hype. UMG’s partnership with NVIDIA offers something rarer and more valuable: a coherent strategy. Music Flamingo positions AI as a tool that amplifies human creativity rather than replacing it — understanding music at a depth that metadata tags never could, while explicitly refusing to generate content that competes with the artists who created the training data. Whether this model becomes the template for the entire industry depends on what happens next at GTC 2026 and how quickly Music Flamingo moves from research papers to production systems. But one thing is already clear: the company that drew the hardest line against AI slop has just shown the industry what responsible AI in music actually looks like. The question now isn’t whether AI belongs in the music industry — it’s whether everyone else can meet the standard UMG and NVIDIA just set.
