Pre-Holiday Tech Deals October 2025: 7 Early Black Friday Prices You Shouldn’t Wait On

October 27, 2025

Best Free Max for Live Devices: 15 Essential Downloads for Ableton (2025)

October 28, 202567 seconds of cold start time. If you’ve ever tried deploying torch.compile in production, you know exactly how painful that number is. PyTorch 2.5 just crushed it down to 9.6 seconds—a 7x improvement that changes the calculus for every team running compiled models in production. But that’s just the headline. This release also introduces FlexAttention, a revolutionary API that lets you define custom attention patterns in pure Python and have them compiled into optimized FlashAttention kernels. Add in a 75% SDPA speedup on H100 GPUs, Intel GPU support covering 40 million devices, and a Flight Recorder for debugging distributed training nightmares, and you’ve got one of the most consequential PyTorch releases in recent memory. Let’s break down what 504 contributors and 4,095 commits actually delivered.

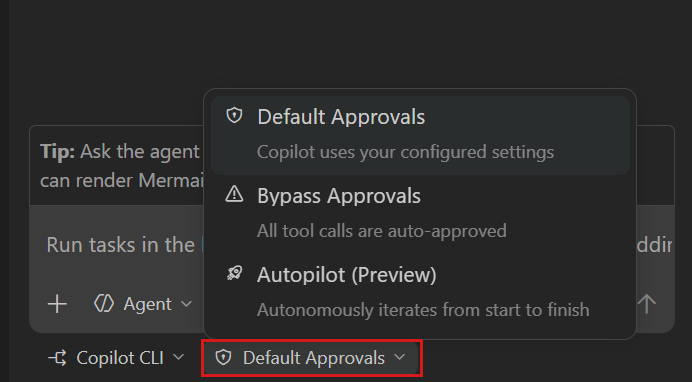

PyTorch 2.5 Regional Compilation: Solving the Cold Start Problem for Good

The single biggest barrier to adopting torch.compile in production has been cold start latency. When you compile an entire model at once, even on an H100 GPU, you’re looking at 67.4 seconds of compilation time before a single inference can happen. For serving environments with autoscaling, cold starts, or frequent model updates, this was a dealbreaker.

PyTorch 2.5’s regional compilation attacks this problem with an elegant insight: Transformer models are built from repeated, identical layers. Instead of compiling the entire model graph, you compile just one representative nn.Module—say, a single transformer layer—and reuse that compiled artifact for every identical layer in the model.

# Traditional approach: compile the whole model (67.4s cold start)

model = torch.compile(model)

# PyTorch 2.5: regional compilation (9.6s cold start)

for layer in model.transformer_layers:

layer = torch.compile(layer)The results speak for themselves. Cold start drops from 67.4 seconds to 9.6 seconds on H100, and with warm cache, it’s just 2.4 seconds. PyTorch 2.5 enables inline_inbuilt_nn_modules by default, so you get this optimization without any additional configuration. For teams that have been holding off on torch.compile adoption due to cold start concerns, this update fundamentally changes the equation.

To put this in practical terms: consider a Kubernetes-based model serving setup with autoscaling. When a new pod spins up, it needs to compile the model before serving its first request. A 67-second cold start means your autoscaler is essentially useless for handling traffic spikes—by the time the new pod is ready, the spike may have already passed. At 9.6 seconds, that same autoscaling setup becomes viable. And with warm cache at 2.4 seconds, you’re looking at near-instant scaling. This is the kind of improvement that changes deployment architectures, not just benchmark numbers.

FlexAttention API: Custom Attention Without Custom CUDA Kernels

If regional compilation is the pragmatic engineering win, FlexAttention is the research breakthrough. Attention mechanism researchers have long faced an uncomfortable choice: use the standard attention implementations that are fast but inflexible, or write custom CUDA kernels that are flexible but painful to develop and maintain.

FlexAttention eliminates this tradeoff entirely. You define a score modification function in pure Python—causal masking, sliding window attention, document masking, or any novel pattern you can imagine—and PyTorch compiles it into an optimized FlashAttention kernel automatically. The API is remarkably simple: you write a function that takes attention scores and returns modified scores, and torch.compile handles the rest.

The performance implications are significant. On H100 GPUs, Fused Flash Attention through FlexAttention delivers up to 75% speedup over FlashAttentionV2. This isn’t just a convenience feature—it fundamentally accelerates the experiment-to-deployment cycle for attention research. Researchers can prototype new attention patterns in minutes, benchmark them at production-grade performance, and deploy without rewriting a single line of CUDA.

Consider the practical workflow change this enables. A researcher exploring a novel sparse attention pattern previously faced weeks of work: designing the pattern, implementing it in CUDA or Triton, debugging memory issues, and then benchmarking against optimized baselines. With FlexAttention, that same researcher writes a Python function describing how attention scores should be modified, wraps the model in torch.compile, and immediately gets performance comparable to hand-tuned kernels. The barrier between having an idea and testing it at scale has effectively collapsed.

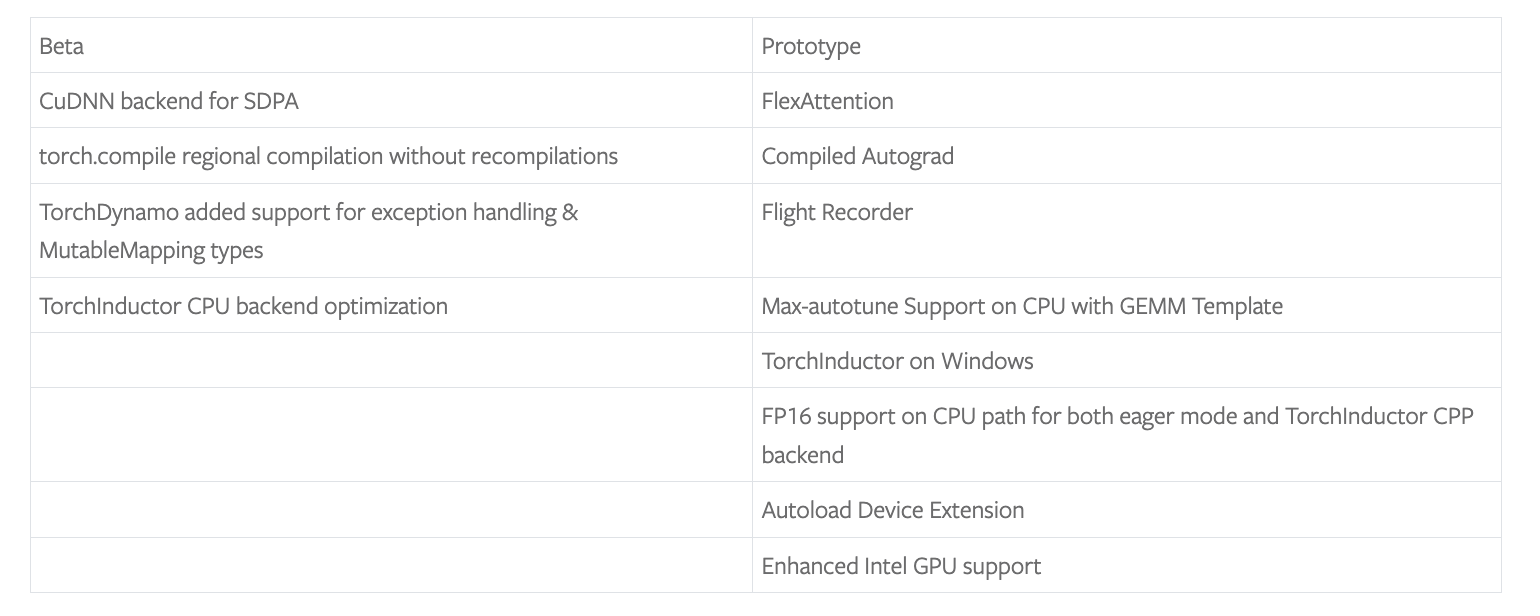

cuDNN SDPA Backend and TorchInductor CPU Optimization

PyTorch 2.5 introduces a new cuDNN-based Scaled Dot-Product Attention (SDPA) backend for NVIDIA H100 and newer GPUs. This backend leverages NVIDIA’s cuDNN library to deliver optimized attention computation that outperforms existing Flash Attention implementations. For large language model training and inference workloads, the cuDNN SDPA backend provides measurable throughput improvements that compound across thousands of training steps.

On the CPU side, TorchInductor’s new max-autotune mode represents a significant step forward. For GEMM (General Matrix Multiply) operations—the computational backbone of deep learning—the max-autotune mode automatically profiles multiple kernel implementations and selects the optimal combination for your specific hardware. Rather than using a one-size-fits-all kernel selection strategy, max-autotune benchmarks available implementations against your actual workload patterns and hardware configuration, then caches the optimal choices for subsequent runs.

Benchmarks show a 7% geomean speedup across the board, with LLM inference workloads seeing up to 20% improvement. That 20% figure is particularly noteworthy because CPU-based LLM inference is increasingly common for smaller models and edge deployments where GPU access is limited or cost-prohibitive. For teams running CPU-based inference pipelines or developing on machines without discrete GPUs, these optimizations make a meaningful difference in both iteration speed during development and serving costs in production.

Intel GPU Support: 40 Million New Deep Learning Devices

Perhaps the most broadly impactful change in PyTorch 2.5 is the expansion of Intel GPU support. According to InfoQ’s analysis, this update enables native PyTorch execution on approximately 40 million laptops and desktops equipped with Intel GPUs. This includes both Intel Arc discrete graphics and integrated graphics chipsets, with support for both eager mode and torch.compile mode.

The democratization angle here is genuinely significant and shouldn’t be understated. Students, hobbyists, and researchers working in resource-constrained environments can now train and run deep learning models on their existing Intel-based hardware without purchasing dedicated NVIDIA GPUs. Combined with the new Windows support for the Inductor CPU backend, PyTorch 2.5 substantially lowers the hardware barrier to entry for deep learning. You no longer need a Linux workstation with an NVIDIA GPU to access PyTorch’s full compilation and optimization capabilities.

Flight Recorder: Debugging Distributed Training at Scale

Anyone who has debugged a stuck distributed training job knows the pain. Hundreds of GPUs connected across multiple nodes, and somewhere in that mesh, a collective communication operation has gone wrong. Finding the root cause typically involves hours of log analysis, educated guessing, and a fair amount of profanity. Traditional debugging approaches—adding print statements, checking NCCL logs, manually comparing operation sequences across ranks—simply don’t scale when you’re dealing with hundreds or thousands of processes.

PyTorch 2.5’s Flight Recorder tackles this problem head-on. It maintains a ring buffer of collective communication events on each node, recording the sequence and timing of all distributed operations. When a job stalls, you can dump the Flight Recorder data and quickly identify which rank experienced a mismatch, which collective operation failed, and where the communication breakdown occurred.

The ring buffer design is particularly clever—it captures enough history to diagnose issues without the memory overhead of logging everything. For teams running FSDP (Fully Sharded Data Parallel) or pipeline parallelism across large GPU clusters, this tool can reduce debugging time from hours to minutes. When GPU hours cost real money and a stuck job means burning thousands of dollars in idle compute, having Flight Recorder in your toolkit pays for itself almost immediately. It’s the kind of infrastructure tooling that doesn’t make headlines but saves thousands of engineering hours and prevents significant wasted compute costs.

What PyTorch 2.5 Signals: The Compile Era Is Here

Stepping back and looking at PyTorch 2.5 holistically, the strategic direction is unmistakable. PyTorch is fully committing to the compiled execution paradigm while maintaining the eager mode development experience that made it the framework of choice for researchers. Regional compilation lowers the adoption barrier, FlexAttention preserves researcher freedom within the compiled world, and Intel GPU plus Windows support expand the ecosystem’s reach.

The contribution statistics—504 contributors, 4,095 commits—reflect a thriving community that continues to drive the framework forward. For production ML teams, the message is clear: if you’ve been waiting for torch.compile to be “ready,” PyTorch 2.5 is the version that makes the case definitively. The cold start problem that kept many teams on eager mode is largely solved, the performance gains are substantial, and the tooling for debugging and optimization has matured significantly.

Whether you’re running large-scale distributed training, deploying models in latency-sensitive serving environments, or experimenting with novel attention mechanisms, PyTorch 2.5 delivers concrete improvements that translate directly to faster iteration cycles and lower infrastructure costs. For organizations navigating these kinds of technical challenges, having the right expertise and automation in place makes the difference between a smooth upgrade path and weeks of debugging.

Need tech consulting or automation system architecture? We provide hands-on consulting backed by real-world experience.

Get weekly AI, music, and tech trends delivered to your inbox.