72.1% on the first try. That’s what OpenAI’s codex-1 model scored on SWE-Bench Verified, a benchmark where AI agents must resolve real GitHub issues from open-source repositories. Not autocomplete suggestions. Not code snippets. Full autonomous software engineering: reading entire repos, understanding architecture, writing fixes, running tests, and submitting pull requests. Launched on May 16, 2025, OpenAI’s Codex represents the most significant leap from code completion to autonomous coding we’ve seen yet. Here are the five technologies that make it work.

The codex-1 Model: What Happens When You Optimize o3 for Software Engineering

At its core, codex-1, the model that powers OpenAI Codex, is a specialized version of the o3 reasoning model, fine-tuned through reinforcement learning on real-world software engineering tasks. According to OpenAI’s system card, codex-1 achieves 72.1% on SWE-Bench Verified with a single attempt and 83.8% when given eight tries. For comparison, the base o3 model in high-effort mode scores 69.7%.

The training methodology is what sets codex-1 apart. Rather than learning from static code corpora, OpenAI trained the model using reinforcement learning on actual engineering workflows — implementing features, fixing bugs, and iterating until test suites pass. The result is a model that doesn’t just generate syntactically correct code but produces output that follows human conventions for pull requests, commit messages, and code organization. As OpenAI noted, codex-1 generates “human-style code and PR preferences,” a subtle but crucial distinction from earlier code models.

The performance gap between codex-1 and base o3 might seem modest (72.1% vs 69.7%), but on SWE-Bench Verified’s 500 tasks, that 2.4 percentage point improvement works out to roughly a dozen additional real-world issues resolved autonomously. Each of those issues involves reading multiple files, understanding interconnected systems, and producing production-ready patches.
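As a quick check on the arithmetic, here is a minimal sketch that converts a gap in percentage points into an approximate number of benchmark tasks. It assumes the full 500-task SWE-Bench Verified set was scored; the function name `extra_issues` is purely illustrative.

```python
TOTAL_TASKS = 500  # size of SWE-Bench Verified; assumes the full set was scored


def extra_issues(score_a: float, score_b: float, total: int = TOTAL_TASKS) -> int:
    """Approximate number of additional tasks resolved at score_a vs score_b.

    Both scores are percentages, e.g. 72.1 for 72.1%.
    """
    return round((score_a - score_b) / 100 * total)


if __name__ == "__main__":
    # codex-1 (single attempt) vs base o3 in high-effort mode
    print(extra_issues(72.1, 69.7))  # prints 12
```

If the evaluated subset was smaller than 500 tasks, the count shrinks proportionally, so treat the result as an order-of-magnitude figure.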

Cloud Sandbox Architecture: Isolation as a Feature, Not a Limitation

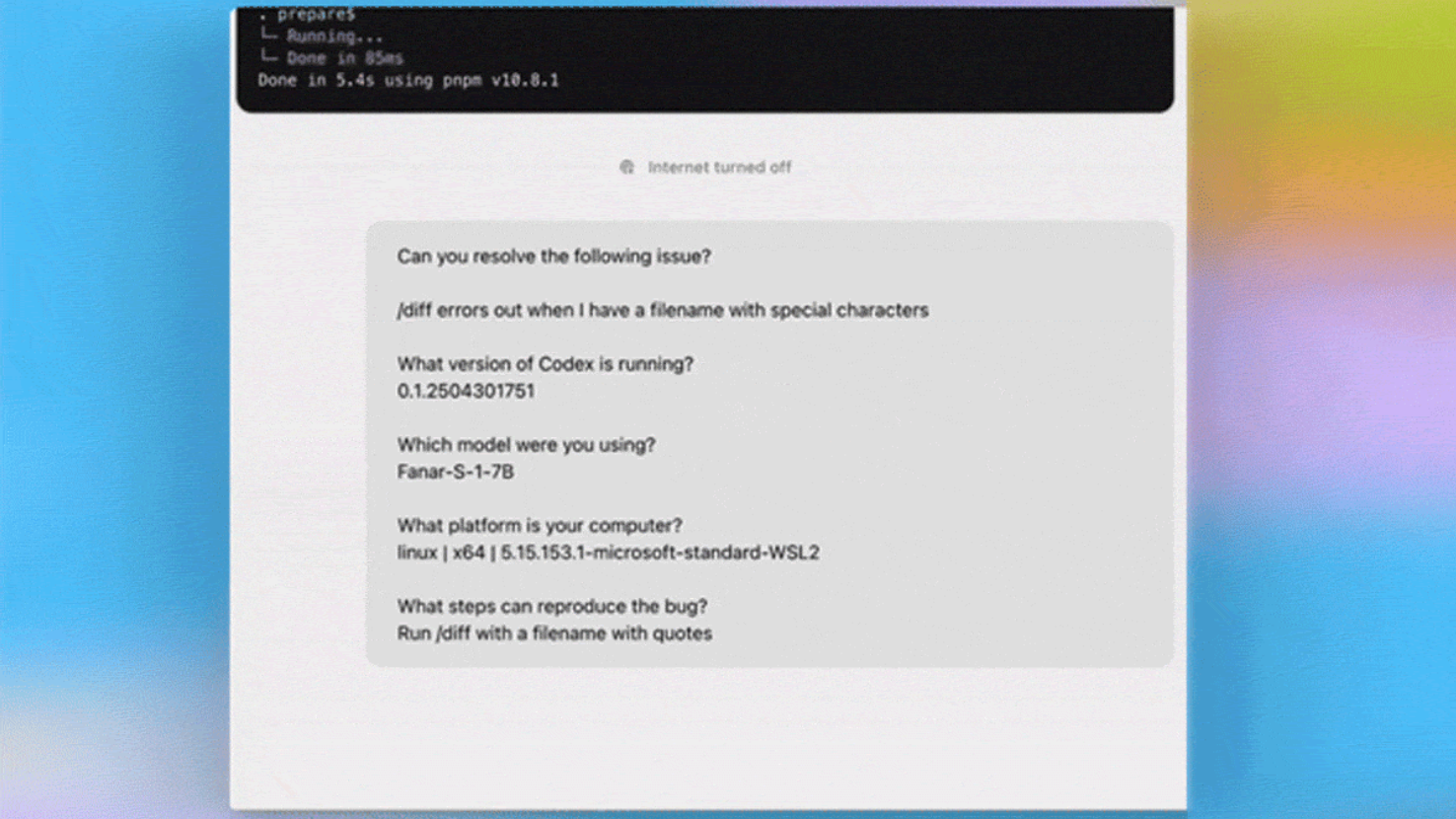

Every task Codex handles runs inside a dedicated cloud sandbox: an isolated container pre-loaded with the user’s repository. According to OpenAI’s official blog, these sandboxes have no internet access by design. The agent works exclusively with what’s already in the repository, including dependencies, test suites, linters, and type checkers.

This architecture delivers three critical advantages. First, security: with no network access during execution, the agent has no outbound path for exfiltrating code and cannot pull a look-alike package from a public registry mid-task, which sharply reduces exposure to dependency confusion attacks. Second, reproducibility: identical sandbox environments make results far more consistent across runs. Third, parallelism: because each sandbox is fully isolated, multiple tasks can run simultaneously without interference, so a team can submit ten different issues and have Codex work on all of them concurrently.
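To make the link between isolation and parallelism concrete, here is a toy sketch, not OpenAI’s implementation. It assumes a "sandbox" can be approximated by a private temporary copy of the repository; `run_in_sandbox`, `run_all`, and the `CHANGE.txt` file are hypothetical names invented for this illustration.

```python
import shutil
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def run_in_sandbox(repo: Path, task: str) -> str:
    """Copy the repo into a private temp dir and apply a simulated change there."""
    with tempfile.TemporaryDirectory() as tmp:
        workdir = Path(tmp) / "repo"
        shutil.copytree(repo, workdir)
        # Stand-in for the agent's work: every task writes the same file name,
        # which would collide if the tasks shared one working directory.
        (workdir / "CHANGE.txt").write_text(task)
        return (workdir / "CHANGE.txt").read_text()


def run_all(repo: Path, tasks: list[str]) -> list[str]:
    """Run every task concurrently; results come back in submission order."""
    with ThreadPoolExecutor(max_workers=max(1, len(tasks))) as pool:
        return list(pool.map(lambda t: run_in_sandbox(repo, t), tasks))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as src:
        repo = Path(src)
        (repo / "app.py").write_text("print('hello')\n")
        tasks = [f"fix issue #{n}" for n in range(1, 11)]
        results = run_all(repo, tasks)
        print(results == tasks)                # True: each copy saw only its own change
        print((repo / "CHANGE.txt").exists())  # False: the original repo is untouched
```

Because no task can see another task’s working copy, the ten runs need no locking or coordination, which is the property the sandbox design relies on.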

As InfoQ reported, each sandbox supports the project’s complete toolchain. If your repository uses pytest, ESLint, mypy, or any other verification tool, Codex runs them. The agent iteratively executes tests, reads failures, adjusts code, and reruns until tests pass — mimicking the actual development loop that human engineers follow. Tasks typically complete in 1 to 30 minutes depending on complexity.
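The run, read, adjust, rerun cycle can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Codex’s actual control loop: `fix_until_green` and the `propose_fix` hook are hypothetical names, and the "agent" in the demo is a hard-coded string replacement standing in for a model.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable


def run_checks(cmd: list[str], cwd: str) -> tuple[bool, str]:
    """Run a verification command and return (passed, combined output)."""
    proc = subprocess.run(cmd, cwd=cwd, capture_output=True, text=True)
    return proc.returncode == 0, proc.stdout + proc.stderr


def fix_until_green(
    cmd: list[str],
    cwd: str,
    propose_fix: Callable[[str], None],
    max_rounds: int = 5,
) -> int:
    """Rerun checks, handing each failure log to propose_fix, until they pass.

    Returns the number of check runs used; raises if the budget runs out.
    """
    for round_no in range(1, max_rounds + 1):
        passed, output = run_checks(cmd, cwd)
        if passed:
            return round_no
        if round_no < max_rounds:
            propose_fix(output)  # hypothetical hook: where an agent would edit code
    raise RuntimeError("checks still failing after max_rounds")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as repo:
        target = Path(repo) / "calc.py"
        # A deliberately buggy file whose own assertion acts as the test.
        target.write_text("def add(a, b):\n    return a - b\n\nassert add(2, 3) == 5\n")

        def fake_agent(failure_log: str) -> None:
            # Stand-in for the model; a real agent would inspect failure_log.
            target.write_text(target.read_text().replace("a - b", "a + b"))

        print(fix_until_green([sys.executable, "calc.py"], repo, fake_agent))  # prints 2
```

In a real repository the command would be whatever the project already uses, such as its pytest or ESLint invocation, and the round budget is what keeps a stuck task from looping indefinitely.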

Multi-Repository Code Understanding: Reading Architecture, Not Just Lines

Perhaps the most impressive capability of OpenAI Codex codex-1 is its ability to understand codebases at the architectural level. The OpenAI Developers documentation reveals several specific code understanding tasks that Codex performs routinely:

- Request flow mapping: Tracing how requests move through the system from API endpoints through middleware, service layers, and database queries across multiple modules

- Module architecture analysis: Identifying what each module owns, what it depends on, and what depends on it — creating a mental map of the codebase’s structure

- Hidden dependency surfacing: Discovering implicit dependencies that aren’t obvious from import statements or project configuration — the kind of connections that cause unexpected breakages

- Risk assessment: Evaluating what a proposed change might break across the system before writing a single line of code

This matters because commonly cited estimates hold that developers spend 60-70% of their time reading and understanding existing code rather than writing new code. Codex’s ability to rapidly build a comprehensive understanding of complex codebases attacks the biggest productivity bottleneck in software engineering. A new team member who would take weeks to understand a legacy system can now use Codex to get an architectural overview, trace critical paths, and identify risk areas in minutes.
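For a feel of what module architecture analysis and risk assessment involve, here is a deliberately simple static sketch for a flat directory of Python files. It is an illustration only and says nothing about how Codex works internally; `imports_of`, `dependency_maps`, and `blast_radius` are hypothetical names. It sees only explicit `import` statements, which is exactly why hidden dependencies are the hard part.

```python
import ast
from collections import defaultdict
from pathlib import Path


def imports_of(source: str) -> set[str]:
    """Top-level module names imported by a piece of Python source."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            found.add(node.module.split(".")[0])
    return found


def dependency_maps(root: Path) -> tuple[dict[str, set[str]], dict[str, set[str]]]:
    """Return (depends_on, depended_on_by) for the .py files directly under root."""
    modules = {path.stem: path for path in root.glob("*.py")}
    local = set(modules)
    depends_on = {
        name: (imports_of(path.read_text()) & local) - {name}
        for name, path in modules.items()
    }
    depended_on_by: dict[str, set[str]] = defaultdict(set)
    for name, deps in depends_on.items():
        for dep in deps:
            depended_on_by[dep].add(name)
    return depends_on, dict(depended_on_by)


def blast_radius(module: str, depended_on_by: dict[str, set[str]]) -> set[str]:
    """Every module that directly or transitively depends on the given module."""
    seen: set[str] = set()
    stack = [module]
    while stack:
        for parent in depended_on_by.get(stack.pop(), ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen
```

Given a hypothetical layout where `api.py` imports `service` and `service.py` imports `db`, calling `blast_radius("db", depended_on_by)` returns both `service` and `api`: a rough answer to "what might this change break?" before any code is written.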

AGENTS.md: The Rise of Repository-Level Agent Instructions

Alongside OpenAI Codex codex-1, OpenAI introduced the AGENTS.md convention — a file that lives in your repository and provides project-specific instructions to the AI agent. According to OpenAI’s developer guide, AGENTS.md serves as a README for AI agents, covering project-specific practices, naming conventions, business logic, known quirks, and testing commands.

The concept isn’t entirely new — Anthropic’s Claude Code uses CLAUDE.md files for the same purpose — but OpenAI’s adoption solidifies this as an emerging industry standard. The implications are significant for team-based development. Instead of each developer individually prompting the AI with context, the entire team shares a single source of truth for agent behavior. When a new engineer joins, the AGENTS.md file immediately brings their AI tools up to speed on project conventions.

Practically, a well-crafted AGENTS.md file can include instructions like which test framework to use, how to name branches, what directories contain sensitive business logic, which patterns to follow for new endpoints, and which legacy modules require special handling. This transforms AI coding agents from generic tools into project-aware collaborators.
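As a starting point, the sketch below renders a starter AGENTS.md from a plain dictionary. AGENTS.md is free-form Markdown, so nothing here is a required schema: the section names, the commands, and the directory names (`billing/`, `legacy_sync/`) are all hypothetical placeholders to replace with your project’s real conventions.

```python
# Hypothetical sections and contents; adapt them to your own project.
SECTIONS = {
    "Testing": ["Run `pytest -q` before proposing a change."],
    "Branch naming": ["Use `fix/<issue-id>-short-description`."],
    "Sensitive areas": ["`billing/` holds business-critical logic; flag any edits."],
    "Legacy modules": ["`legacy_sync/` has no tests; keep changes minimal."],
}


def render_agents_md(sections: dict[str, list[str]]) -> str:
    """Render a mapping of section title -> bullet points as Markdown."""
    lines = ["# AGENTS.md", ""]
    for title, bullets in sections.items():
        lines.append(f"## {title}")
        lines.extend(f"- {bullet}" for bullet in bullets)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    # Print rather than write, so running the sketch never clobbers a real file.
    print(render_agents_md(SECTIONS))
```

Keeping the source of truth in one reviewed file means a convention change lands through a normal pull request, the same way code does.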

The Competitive Landscape: Codex vs Claude Code vs GitHub Copilot

The AI coding agent market in 2025-2026 has crystallized into three distinct paradigms. OpenAI’s Codex represents the cloud-isolated approach: tasks run in sandboxed containers with no internet access, optimized for security and parallelism. Anthropic’s Claude Code takes the local-first approach: running directly in the developer’s terminal with a 1-million-token context window for massive codebase understanding. On SWE-Bench Verified, the Claude model behind Claude Code scores 72.5%, essentially neck-and-neck with codex-1’s 72.1%.

GitHub Copilot remains the most widely adopted tool but focuses primarily on inline code completion rather than autonomous task execution. The three tools serve overlapping but distinct use cases:

- Codex: Best for enterprise teams needing secure, parallel autonomous task execution with audit trails and zero network exposure during agent operation

- Claude Code: Best for developers who need deep local integration, real-time file system access, and the flexibility to interact with external APIs and services during development

- Copilot: Best for real-time coding assistance within IDEs, with the broadest user base and deepest editor integration

The adoption numbers tell their own story. By March 2026, Codex had surpassed 2 million weekly active users, a 5x increase since January 2026. ChatGPT Plus users gained access in June 2025, and desktop apps launched in February 2026, driving mainstream adoption far beyond the initial Pro/Team/Enterprise tier.

My Take: Choosing Between Sandboxed and Local AI Coding Agents

I currently run a multi-agent pipeline built on Claude Code that automates this very blog — six agents handle research, writing, image generation, publishing, review, and reporting in sequence. From that hands-on experience, examining OpenAI Codex’s architecture reveals a fundamental philosophical split that every developer needs to understand.

Codex’s cloud isolation approach is elegant for security and reproducibility. But in practice, the “no internet” constraint is a bigger limitation than it might seem at first glance. My pipeline alone communicates with the WordPress API, Cloudinary for image hosting, Notion for content management, and Telegram for notifications, all in real time. A sandboxed agent with no network access simply cannot support this kind of integrated workflow. For pure code-on-code tasks (bug fixes, feature implementations, refactoring), Codex’s approach is arguably superior. For anything involving external service integration, the local approach wins.

That said, Codex’s parallel task execution is genuinely compelling. After 28 years working in studios where running multiple signal chains simultaneously is second nature, I appreciate the value of parallelism. My current pipeline processes topics sequentially, but if independent tasks like researching multiple topics could run concurrently, throughput would multiply. The choice between Codex and Claude Code ultimately comes down to the same principle I’ve applied to every tool decision in my career: which one integrates most naturally into your existing workflow? For enterprise teams with strict security requirements and well-contained codebases, Codex is the clear choice. For individual developers and small teams that need flexibility and external service integration, Claude Code’s local-first approach is more practical.

The Bottom Line: Autonomous Coding Agents Are Here

OpenAI Codex codex-1 isn’t just another incremental improvement in AI coding tools. It represents the decisive shift from code completion to autonomous software engineering — agents that read entire repositories, understand system architecture, and deliver production-ready pull requests. The 72.1% SWE-Bench score matters less than what it demonstrates: AI that can navigate the messy, interconnected reality of real codebases. With AGENTS.md establishing a new convention for human-agent collaboration and cloud sandboxes enabling secure parallel execution, the infrastructure for AI-powered development teams is now in place. The question is no longer whether AI coding agents work — it’s how quickly your team adapts to working alongside them.

Interested in building AI-powered automation pipelines or integrating coding agents into your workflow? Sean Kim offers hands-on consulting from 28 years of experience.
