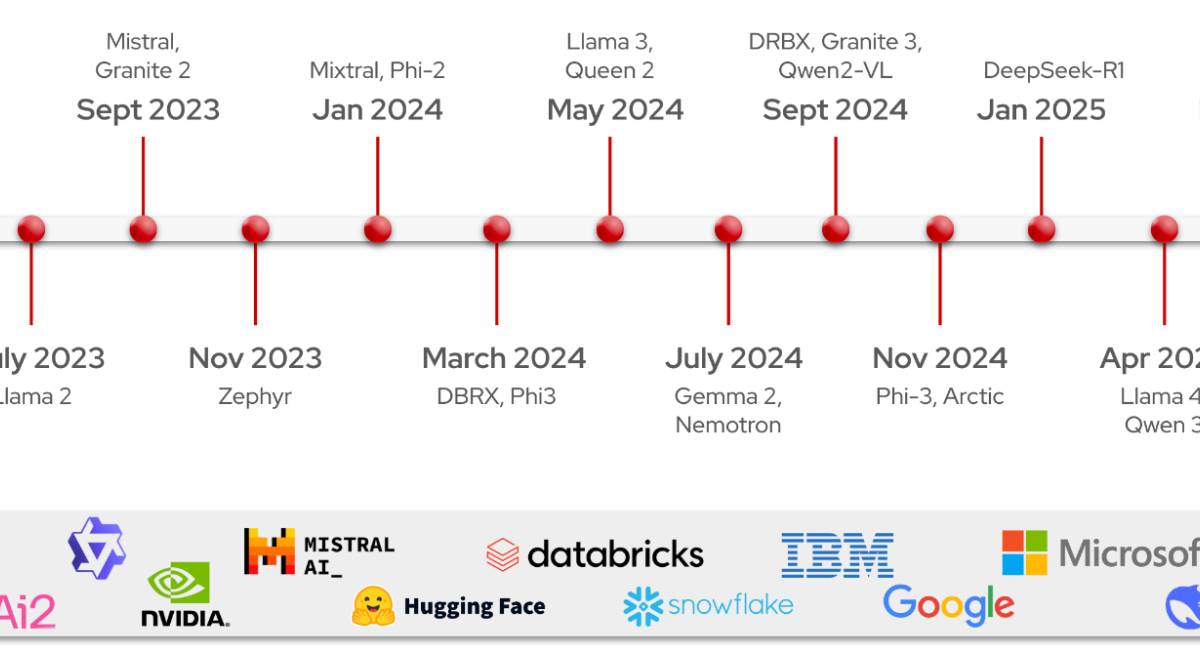

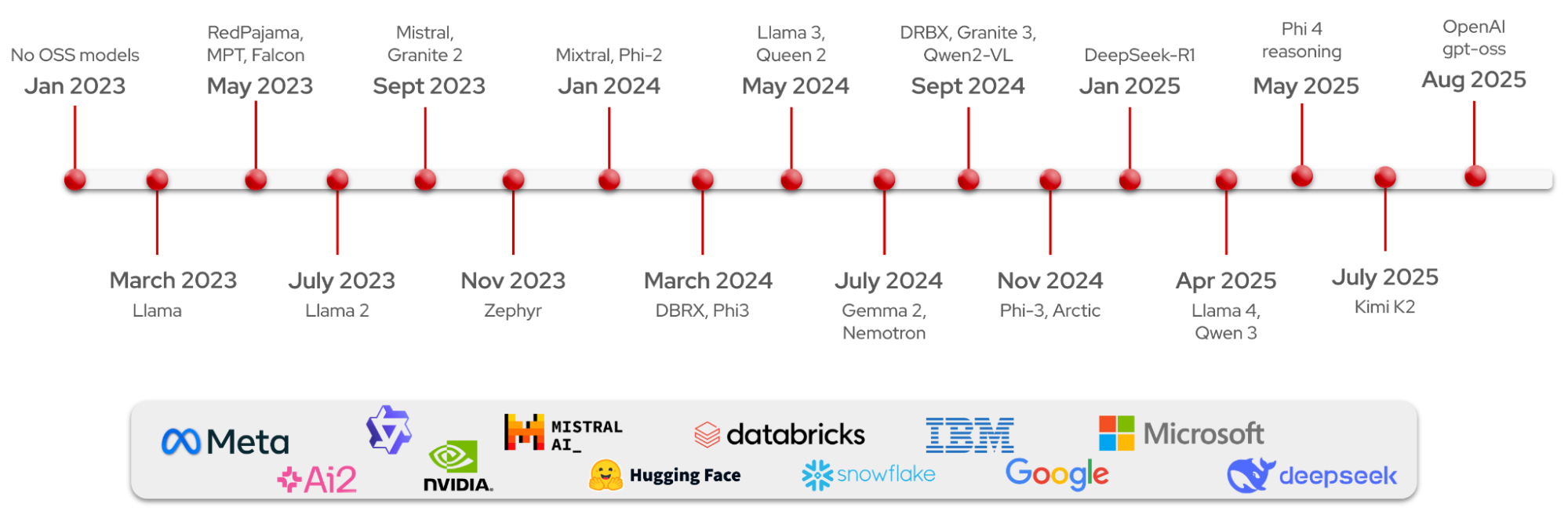

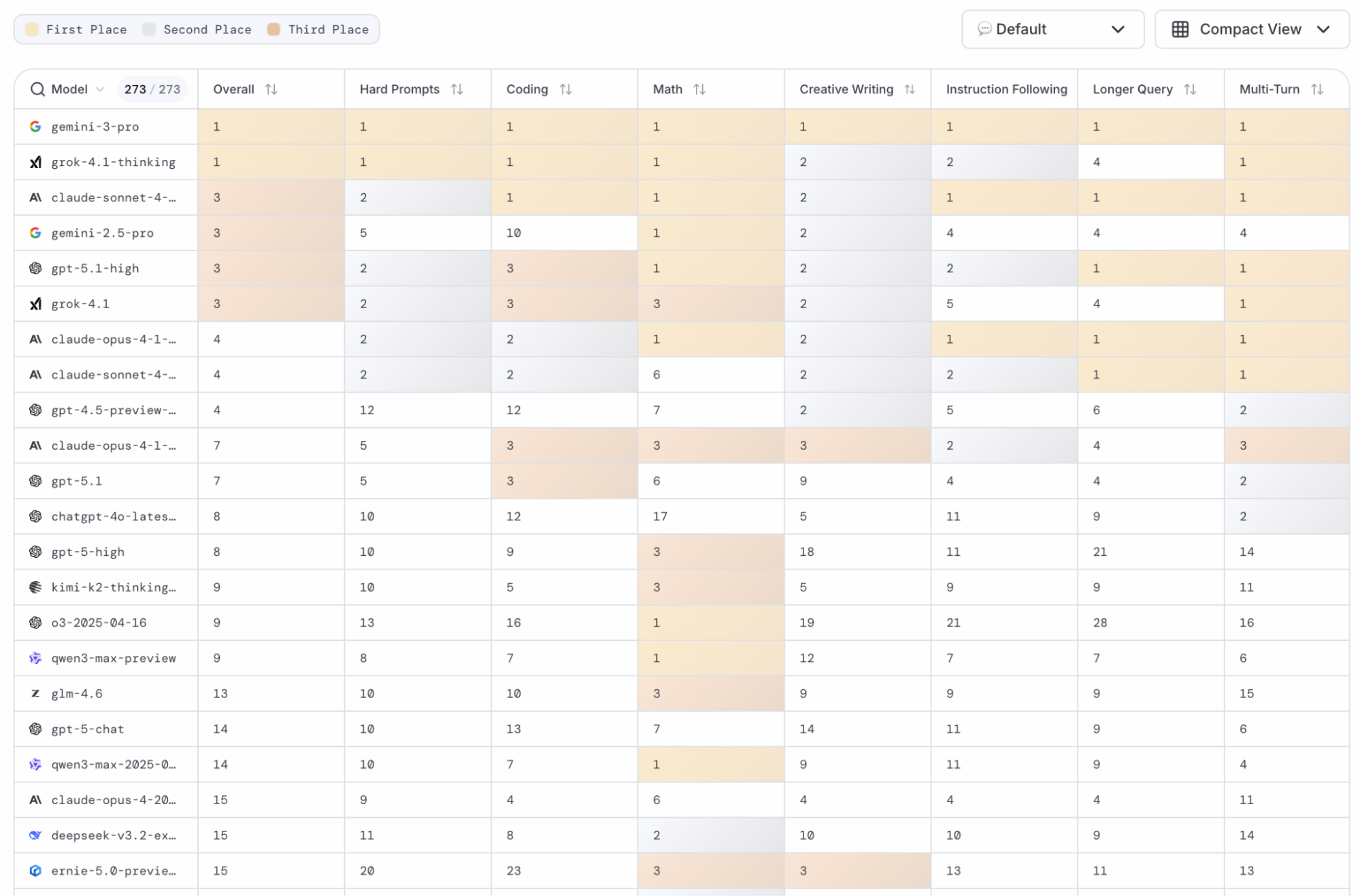

December 19, 2025

A year ago, if you wanted frontier-level AI reasoning, you paid OpenAI. Full stop. Then January 2025 happened, and the open-source AI ecosystem exploded in ways nobody predicted. As we close out what might be the most transformative year in open-weight AI history, it’s time to name the winners.

I’ve spent the past 12 months tracking every major release, from the DeepSeek R1 bombshell that kicked off the year to the Qwen 3 family quietly overtaking Meta’s Llama in total downloads. Here are the six open-source AI models of 2025 that didn’t just compete with proprietary systems; they redefined what’s possible without writing a single check to OpenAI or Anthropic.

1. DeepSeek R1: The Model That Shook the Industry

When DeepSeek dropped R1 on January 20, 2025, under an MIT license, the AI world collectively did a double-take. Here was a 671-billion-parameter Mixture-of-Experts model from a Chinese startup, delivering reasoning competitive with OpenAI’s o1 at roughly one-thirtieth of o1’s API price.
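“Mixture-of-Experts” means each token activates only a few expert sub-networks, so most of the 671 billion parameters sit idle on any given step (DeepSeek reports about 37 billion active per token). The snippet below is a generic top-k softmax router in NumPy, meant only to illustrate the idea; it is not DeepSeek’s actual routing scheme, and the dimensions, expert count, and random weights are arbitrary assumptions.

```python
import numpy as np

def make_expert(W: np.ndarray):
    """Each toy expert is a single tanh layer with its own weight matrix."""
    return lambda v: np.tanh(v @ W)

def moe_forward(x, router_w, experts, k=2):
    """Route one token through the top-k of len(experts) experts.

    x: (d,) hidden state; router_w: (d, n_experts) router projection.
    """
    logits = x @ router_w                       # one score per expert
    top = np.argsort(logits)[-k:]               # indices of the k highest scores
    w = np.exp(logits[top] - logits[top].max())
    w /= w.sum()                                # softmax over the chosen experts only
    # Only the selected experts are evaluated; the others cost nothing for this token.
    y = sum(wi * experts[i](x) for wi, i in zip(w, top))
    return y, top

rng = np.random.default_rng(0)
d, n_experts = 8, 16
experts = [make_expert(rng.normal(size=(d, d))) for _ in range(n_experts)]
router_w = rng.normal(size=(d, n_experts))
y, chosen = moe_forward(rng.normal(size=d), router_w, experts, k=2)
print(y.shape, sorted(chosen.tolist()))
```

Here only 2 of 16 experts run per token; production MoE models apply the same principle at far larger scale, with extra machinery such as load balancing that this sketch omits.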

DeepSeek R1 didn’t just prove that open-weight models could match proprietary reasoning. It triggered a domino effect: Chinese labs that had kept their models private started releasing them. The shift was seismic; by summer, total model downloads on Hugging Face had flipped from US-dominant to China-dominant. The MIT license meant anyone could build on R1 commercially, no strings attached.

What made R1 truly special wasn’t just benchmarks; it was the distillation strategy. DeepSeek released smaller R1-Distill variants built on Qwen and Llama base models, bringing frontier-style reasoning to hardware that working developers can afford. This is how you change a landscape: not by hoarding capability, but by distributing it.
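The DeepSeek-R1 paper describes the distilled variants as ordinary supervised fine-tuning of Qwen and Llama checkpoints on roughly 800k samples curated with R1, rather than logit matching. The sketch below covers only the data-building half of that idea under loudly stated assumptions: the `Answer:` marker, the `fake_teacher` function, and the keep-first-correct-trace rule are all hypothetical stand-ins, not DeepSeek’s pipeline.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

@dataclass
class SFTRecord:
    prompt: str
    completion: str  # the teacher's full reasoning trace, final answer included

def extract_answer(trace: str) -> str:
    """Hypothetical convention: the final answer follows the last 'Answer:' marker."""
    marker = "Answer:"
    idx = trace.rfind(marker)
    return trace[idx + len(marker):].strip() if idx != -1 else ""

def build_distill_set(
    problems: Iterable[tuple[str, str]],   # (prompt, reference answer) pairs
    teacher: Callable[[str], str],         # stand-in for sampling the large reasoning model
    samples_per_prompt: int = 4,
) -> list[SFTRecord]:
    records = []
    for prompt, reference in problems:
        for _ in range(samples_per_prompt):
            trace = teacher(prompt)
            # Rejection sampling: keep a trace only if its final answer is correct.
            if extract_answer(trace) == reference:
                records.append(SFTRecord(prompt, trace))
                break  # one verified trace per prompt is enough for this sketch
    return records

def fake_teacher(prompt: str) -> str:
    """Toy deterministic 'teacher' that shows its work on a+b problems."""
    a, b = map(int, prompt.split("+"))
    return f"Add {a} and {b}. Answer: {a + b}"

data = build_distill_set([("2+3", "5"), ("10+7", "17"), ("1+1", "3")], fake_teacher)
print(len(data))  # 2: the "1+1" item has a wrong reference, so every trace is rejected
```

The surviving records would then feed a standard fine-tuning run on the smaller student model; the filtering step is what keeps wrong reasoning out of the student’s training data.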

2. Qwen 3: The Quiet Giant That Overtook Everyone

If DeepSeek R1 was the year’s loudest debut, Alibaba’s Qwen 3 was its most consequential slow burn. By the end of 2025, Qwen had overtaken Meta’s Llama as the most downloaded model family on Hugging Face and the most popular base model for fine-tuning, a distinction that would have been unthinkable 18 months earlier.

The numbers speak volumes: the family supports 119 languages, its largest open-weight MoE models run to hundreds of billions of parameters (the trillion-plus Qwen3-Max is available only through Alibaba’s API), and its top reasoning variant hits 92.3% on AIME25. The Apache 2.0 license made enterprise adoption frictionless. Airbnb CEO Brian Chesky publicly noted that the company uses Qwen in production because it is faster and cheaper than OpenAI’s models.

Then came the NeurIPS 2025 cherry on top. The Qwen (Tongyi Qianwen) team won a Best Paper Award for its research on gated attention, a technique already applied in the Qwen3-Next model series. When your research wins top-tier academic prizes and your models lead production downloads, you’re not competing anymore. You’re defining the category.
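As we read the award-winning paper, its best-performing variant multiplies the output of scaled dot-product attention by a sigmoid gate computed from the same token’s hidden state. The single-head NumPy sketch below shows only that idea; the shapes, random weights, and the absence of multi-head splitting and an output projection are simplifying assumptions, not the Qwen3-Next implementation.

```python
import numpy as np

def softmax(z: np.ndarray, axis: int = -1) -> np.ndarray:
    z = z - z.max(axis=axis, keepdims=True)   # stabilise before exponentiating
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def gated_attention(X, Wq, Wk, Wv, Wg):
    """Single-head causal attention with a sigmoid gate on the attention output.

    X: (T, d) token states; Wq, Wk, Wv, Wg: (d, d_h) projections.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    T, d_h = Q.shape
    scores = Q @ K.T / np.sqrt(d_h)
    future = np.triu(np.ones((T, T), dtype=bool), k=1)  # True above the diagonal
    scores = np.where(future, -np.inf, scores)           # causal mask
    attn_out = softmax(scores) @ V                       # plain SDPA output, (T, d_h)
    gate = 1.0 / (1.0 + np.exp(-(X @ Wg)))               # per-token, per-channel gate in (0, 1)
    return attn_out * gate                               # gating applied after SDPA

rng = np.random.default_rng(0)
T, d, d_h = 5, 16, 8
X = rng.normal(size=(T, d))
Wq, Wk, Wv, Wg = (rng.normal(size=(d, d_h)) / np.sqrt(d) for _ in range(4))
out = gated_attention(X, Wq, Wk, Wv, Wg)
print(out.shape)  # (5, 8)
```

Because the gate depends on the querying token, each position can scale down attention output it doesn’t need, which is the source of the non-linearity and sparsity the paper credits for its gains.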

3. Llama 3.3 70B: Meta’s Efficiency Masterclass

Meta’s contribution to 2025’s open-source AI revolution was less about raw capability and more about brutal efficiency. Llama 3.3 70B, released in December 2024 and a workhorse throughout 2025, delivers performance comparable to the 405-billion-parameter Llama 3.1 on many benchmarks, at a fraction of the computational cost. For teams running inference at scale, this wasn’t incremental. It was transformative.

Llama maintained its position as the backbone of RAG workflows and production deployments throughout 2025. The ecosystem built around it — fine-tuned variants, adapter libraries, inference optimizations — remained unmatched in depth. While Qwen surpassed it in raw download numbers, Llama’s community license and massive developer base kept it central to the industry’s infrastructure.

The real story here is what 70B-class models mean for the future: you no longer need a cluster of A100s for near-frontier inference. With 4-bit quantization, a single high-end GPU can serve a model that matches what required a datacenter rack a year ago. Meta democratized deployment, even if it didn’t win every benchmark.
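The single-GPU claim is easiest to check with weight-memory arithmetic: parameters times bytes per parameter. This sketch counts weights only (KV cache, activations, and framework overhead all add to it), and the three-entry precision table is a simplification of real quantization formats, which carry some extra metadata.

```python
# Weight-only footprint: parameters x bytes per parameter.
# KV cache, activations and runtime overhead come on top of these numbers.
BYTES_PER_PARAM = {"fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_gb(params_billion: float, precision: str) -> float:
    """Billions of parameters x bytes each = gigabytes (10^9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]

for name, size in [("Llama 3.1 405B", 405), ("Llama 3.3 70B", 70)]:
    row = ", ".join(f"{p}: {weight_gb(size, p):.1f} GB" for p in BYTES_PER_PARAM)
    print(f"{name:<15} {row}")
```

At 16-bit precision a 70B model needs about 140 GB for weights alone, more than one 80 GB GPU holds; at 4-bit it drops to about 35 GB, leaving room for the KV cache on a single 80 GB card, while the 405B model still needs roughly 200 GB even at 4-bit.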

4. Mistral Small 3: Europe’s Apache 2.0 Champion

Mistral AI’s approach in 2025 was refreshingly pragmatic. While competitors raced to trillion-parameter counts, Mistral Small 3 aimed to cover 80% of real-world use cases with a 24-billion-parameter model under the Apache 2.0 license, which permits full commercial use subject only to attribution and notice requirements.

For European enterprises navigating AI sovereignty concerns and GDPR compliance, Mistral became a natural choice. The model slots neatly into environments where self-hosting isn’t optional but required. And at 24B parameters, it runs on hardware many companies already own; Mistral says a quantized build fits on a single RTX 4090.

Mistral also made waves with Devstral 2, its coding-specialized model, which hit 72.2% on SWE-bench Verified, a state-of-the-art result for open-weight code agents at release. When your “small” model handles most tasks and your specialist model leads coding benchmarks, you’ve built a complete ecosystem without billion-dollar compute bills.
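For context on that 72.2% figure: SWE-bench Verified is a 500-task, human-validated subset of SWE-bench, and a task counts as resolved only if the model’s patch makes the issue’s FAIL_TO_PASS tests pass without breaking any PASS_TO_PASS tests. The sketch below encodes that scoring rule; the per-task outcomes are placeholders chosen solely to reproduce the headline percentage, not Devstral 2’s actual results.

```python
from dataclasses import dataclass

@dataclass
class InstanceResult:
    fail_to_pass_ok: bool  # the tests the issue's fix should make pass now pass
    pass_to_pass_ok: bool  # tests that passed before the patch still pass

    @property
    def resolved(self) -> bool:
        # A task counts only if the fix works AND nothing regressed.
        return self.fail_to_pass_ok and self.pass_to_pass_ok

def resolve_rate(results: list[InstanceResult]) -> float:
    """Percentage of benchmark instances resolved."""
    return 100.0 * sum(r.resolved for r in results) / len(results)

# Placeholder outcomes chosen only to reproduce the headline figure:
# 361 resolved out of SWE-bench Verified's 500 tasks.
results = [InstanceResult(True, True)] * 361 + [InstanceResult(True, False)] * 139
print(f"{resolve_rate(results):.1f}%")  # 72.2%
```

In other words, 72.2% means roughly 361 of 500 real GitHub issues fixed end to end, with no regressions allowed.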

5. Gemma 3: Google’s Multilingual Dark Horse

Google’s Gemma 3, whose largest variant has 27 billion parameters, punched well above its weight class in 2025. The model’s standout feature was multilingual capability combined with robust vision support, a combination that made it the go-to choice for teams building applications that need to work across languages and modalities simultaneously.

In a year dominated by Chinese and French models, Gemma 3 represented Google’s commitment to keeping the open-source community engaged. It wasn’t trying to be the biggest or the fastest — it was trying to be the most useful for developers who need a reliable, well-documented, multimodal foundation model that doesn’t require a PhD to deploy.

6. OLMo 3: The Gold Standard for Open Science

AI2’s OLMo 3 might not grab headlines like DeepSeek or Qwen, but it earned something arguably more valuable in 2025: trust. OLMo 3 is one of the very few major models that release everything (training data, code, model weights, and complete training logs). In an era where “open source” often means “open weights with everything else closed,” OLMo 3 set the bar for genuine transparency.

For researchers, this matters enormously. You can’t reproduce science you can’t see. OLMo 3 made it possible to actually understand why a model behaves the way it does — not just that it does. As AI regulation tightens globally, this kind of radical openness may transition from “nice to have” to “required by law.”

The Bigger Picture: What 2025 Proved About Open Source AI

Three trends defined the 2025 open-source AI landscape, and no one should ignore them heading into 2026:

- The China factor is real. Hugging Face download data shows a clear shift: Chinese labs now lead in both model releases and total downloads. DeepSeek, Qwen, and others didn’t just compete; they set the pace.

- Small models won the practical war. While headlines chased trillion-parameter counts, the actual revolution happened at 7B-70B scale. Models running on consumer hardware — or a single GPU — became the backbone of production AI in 2025.

- The inference stack matured. vLLM hitting 60,000+ GitHub stars with 1,700+ contributors tells the full story. Open-source inference infrastructure is now production-grade. Self-hosting isn’t a compromise anymore — it’s a competitive advantage.
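Part of what makes self-hosting practical is that vLLM can serve models behind an OpenAI-compatible HTTP API, so client code barely changes when you swap providers. The sketch below only builds a chat-completions request with the Python standard library; it assumes a server started with `vllm serve` on the default port 8000, and the model name is an example choice, not a recommendation.

```python
import json
import urllib.request

# Assumes a local server started with something like `vllm serve <model-name>`,
# which exposes an OpenAI-compatible API on port 8000 by default.
BASE_URL = "http://localhost:8000/v1"
MODEL = "Qwen/Qwen3-8B"  # example only; use whatever model your server loaded

def chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0.7,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

req = chat_request("Summarize vLLM in one sentence.")
# With a server running, send it and read the reply:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Because the request shape matches OpenAI’s, pointing an existing client at `BASE_URL` is often the only change needed to move a workload onto self-hosted weights.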

NeurIPS 2025 reflected this shift. Submissions more than doubled, from 9,467 in 2020 to 21,575 in 2025, and much of the research community’s attention has moved from “bigger models” to “smarter systems.” Inference economics matter. Routing by task complexity matters. And open weights are no longer a concession; they’re the default starting point for serious AI development.
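“Routing by task complexity” means sending each request to the cheapest model likely to handle it. Real routers typically use a trained classifier; the sketch below swaps in a crude keyword-and-length heuristic, and the model names, cue words, and threshold are all hypothetical.

```python
import re
from dataclasses import dataclass

@dataclass
class Route:
    model: str
    reason: str

# Hypothetical model tiers; substitute whatever you actually deploy.
SMALL_MODEL = "small-24b"
LARGE_MODEL = "reasoning-671b"
# Crude stand-in for a trained complexity classifier: whole-word cues for hard tasks.
HARD_HINTS = {"prove", "proof", "derive", "debug", "optimize"}

def route(prompt: str, max_small_words: int = 200) -> Route:
    words = re.findall(r"[a-z]+", prompt.lower())
    if HARD_HINTS.intersection(words):
        return Route(LARGE_MODEL, "reasoning cue")
    if len(words) > max_small_words:
        return Route(LARGE_MODEL, "long prompt")
    return Route(SMALL_MODEL, "default: cheapest tier")

print(route("Translate 'good morning' into French").model)  # small-24b
print(route("Prove that sqrt(2) is irrational").model)      # reasoning-671b
print(route("How can I improve my essay?").model)           # small-24b
```

Matching whole words rather than substrings keeps “improve” from tripping the “prove” cue; defaulting to the small tier is the design choice that makes routing pay off economically.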

The proprietary AI providers aren’t going anywhere. But 2025 showed that you don’t need them for most tasks. This year’s open-source winners didn’t just narrow the gap; for the majority of real-world applications, they closed it. And that changes how we build, deploy, and think about AI in 2026.
