Next.js 16.2 Deep Dive: 87% Faster Dev Startup, Agent DevTools, and 200+ Turbopack Fixes

March 25, 2026

Atlassian Layoffs AI: 1,600 Jobs Cut as the Jira Giant Splits Its CTO Role for an AI-First Future

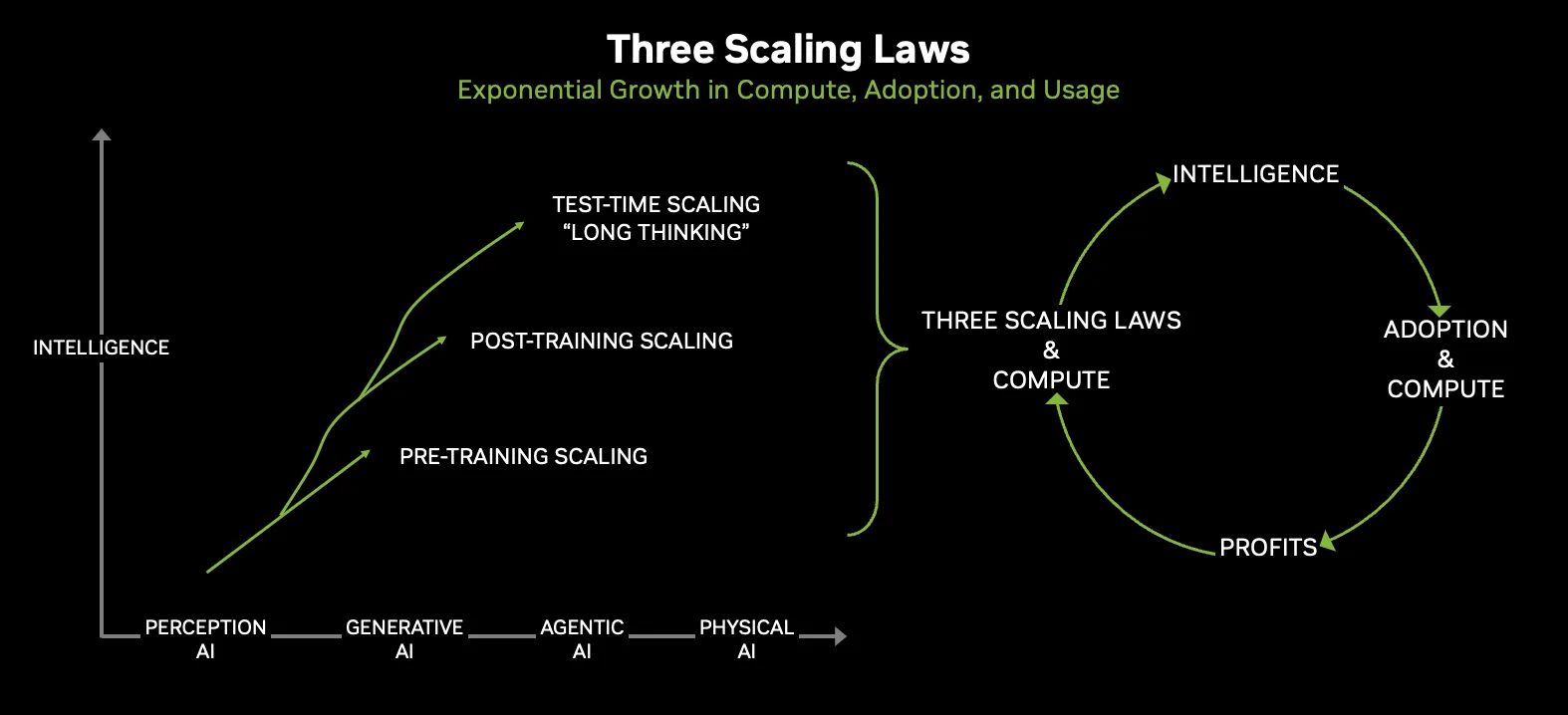

March 25, 202650 PFLOPS from a single GPU. 336 billion transistors. 22 TB/s of memory bandwidth. And when you rack 72 of them together, you get more bandwidth than the entire internet. These are not projections — these are the production specs NVIDIA revealed for the Vera Rubin platform at GTC 2026. The NVIDIA Vera Rubin platform is the most ambitious vertically integrated AI system ever built, and it ships this year. Here’s a complete technical breakdown of every chip, every spec, and why this matters for the next era of AI infrastructure.

NVIDIA Vera Rubin — Six Chips, One Supercomputer

On March 16, 2026, at the SAP Center in San Jose, NVIDIA CEO Jensen Huang declared: “Vera Rubin is a generational leap — seven breakthrough chips, five racks, one giant supercomputer — built to power every phase of AI.” The platform comprises six core chips: the Rubin GPU, Vera CPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet Switch.

At GTC 2026, a seventh chip joined the family — the NVIDIA Groq 3 LPU (Language Processing Unit), coming from the $20 billion Groq acquisition. This isn’t just a GPU launch. It’s an entire AI factory in a box.

Rubin GPU — The 336 Billion Transistor Beast

The heart of the NVIDIA Vera Rubin platform is the Rubin GPU, manufactured on TSMC’s 3nm process with a dual-die design. Two reticle-sized compute chiplets house a combined 336 billion transistors — a 1.6x increase over Blackwell’s 208 billion. The architectural leap is real. Moving from Blackwell’s TSMC 4NP to a full 3nm node shrink, NVIDIA has packed significantly more compute density per square millimeter while reducing power consumption per transistor.

Performance numbers tell the story: 50 PFLOPS of NVFP4 inference, 35 PFLOPS of NVFP4 training, 130 TFLOPS FP32 vector, 400 TFLOPS FP32 matrix, 33 TFLOPS FP64 vector, and 200 TFLOPS FP64 matrix compute. With 224 fifth-generation Tensor Cores optimized for low-precision execution, this GPU covers everything from scientific simulation to trillion-parameter model inference. The inclusion of robust FP64 matrix capabilities at 200 TFLOPS makes Rubin a serious contender for HPC workloads as well — Grace Hopper users can expect up to 3.2x improvement in simulation performance.

Memory is where things get truly wild. Each GPU carries 288GB of HBM4 delivering 22 TB/s of bandwidth — that’s a 2.8x jump from Blackwell’s 8 TB/s on HBM3e. For context, Blackwell was already considered bandwidth-rich. Rubin nearly triples it. This massive memory bandwidth is critical for serving the increasingly large KV caches that long-context reasoning models demand, and for handling the dynamic routing patterns in Mixture of Experts architectures where each token may activate different expert subsets.

Vera CPU — 88 Custom Olympus Cores

NVIDIA’s custom-designed Vera CPU packs 88 Olympus cores with full Arm v9.2 compatibility. Spatial multithreading delivers 176 threads, backed by 2MB L2 cache per core and a unified 162MB L3 cache. Memory support goes up to 1.5TB of power-efficient LPDDR5X via SOCAMM modules, with up to 1.2 TB/s of memory bandwidth.

The real differentiator is the CPU-GPU interconnect. NVLink-C2C provides 1.8 TB/s bidirectional bandwidth between each Vera CPU and its paired Rubin GPUs, enabling a unified address space for KV-cache offloading and multi-model execution. NVIDIA claims 3x the memory bandwidth per core and 2x the energy efficiency compared to x86 CPUs. Add PCIe Gen 6 and CXL 3.1 support, and you have a CPU purpose-built for AI infrastructure. The SOCAMM LPDDR5X memory modules are specifically chosen for power efficiency — critical when you’re running 36 of these CPUs in a single rack alongside 72 power-hungry GPUs.

NVLink 6 — More Bandwidth Than the Entire Internet

Sixth-generation NVLink delivers 3.6 TB/s bidirectional bandwidth per GPU — a 2x improvement over Blackwell. This matters enormously for Mixture of Experts models, where expert routing demands massive all-to-all communication patterns. According to NVIDIA’s technical blog, NVLink 6 achieves 2x higher throughput specifically for these MoE communication patterns.

Each NVLink 6 switch tray aggregates 28.8 TB/s with FP8 in-network compute (SHARP) delivering 14.4 TFLOPS per tray. SHARP reduces all-reduce traffic by up to 50% and improves collective operation time by up to 20%. The entire 72-GPU rack connects via a single-hop, full all-to-all fabric — no multi-hop latency penalties. For variable all-to-all operations common in MoE workloads, this translates to 3x faster job completion compared to standard Ethernet configurations.

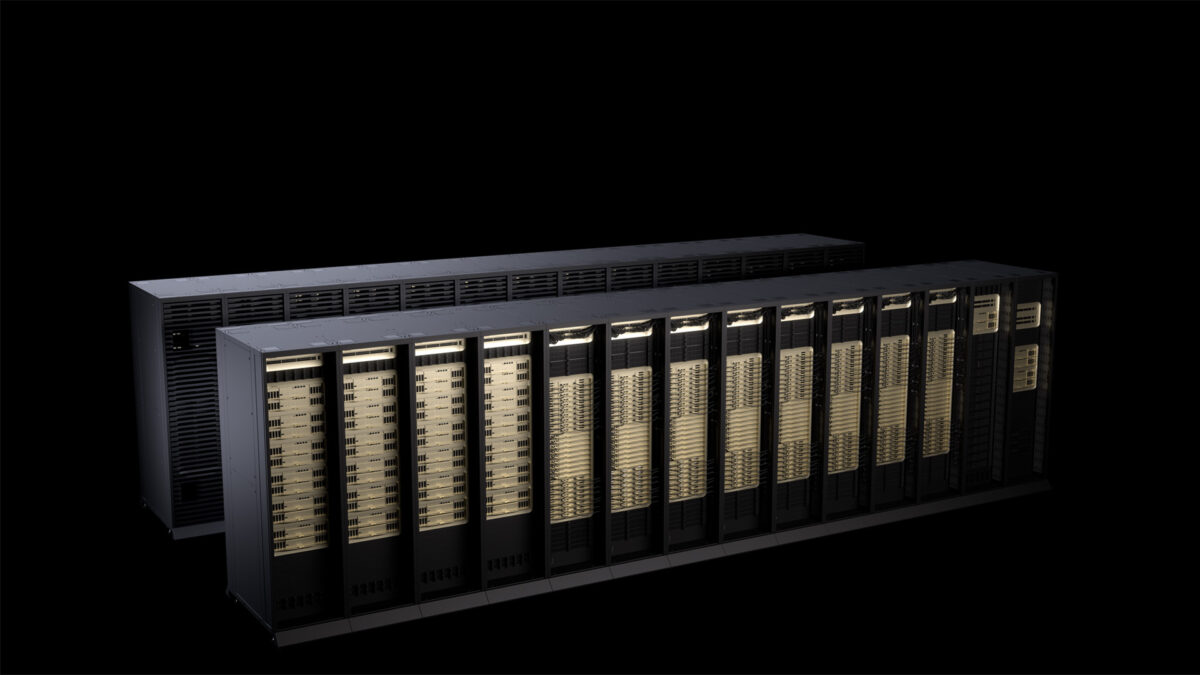

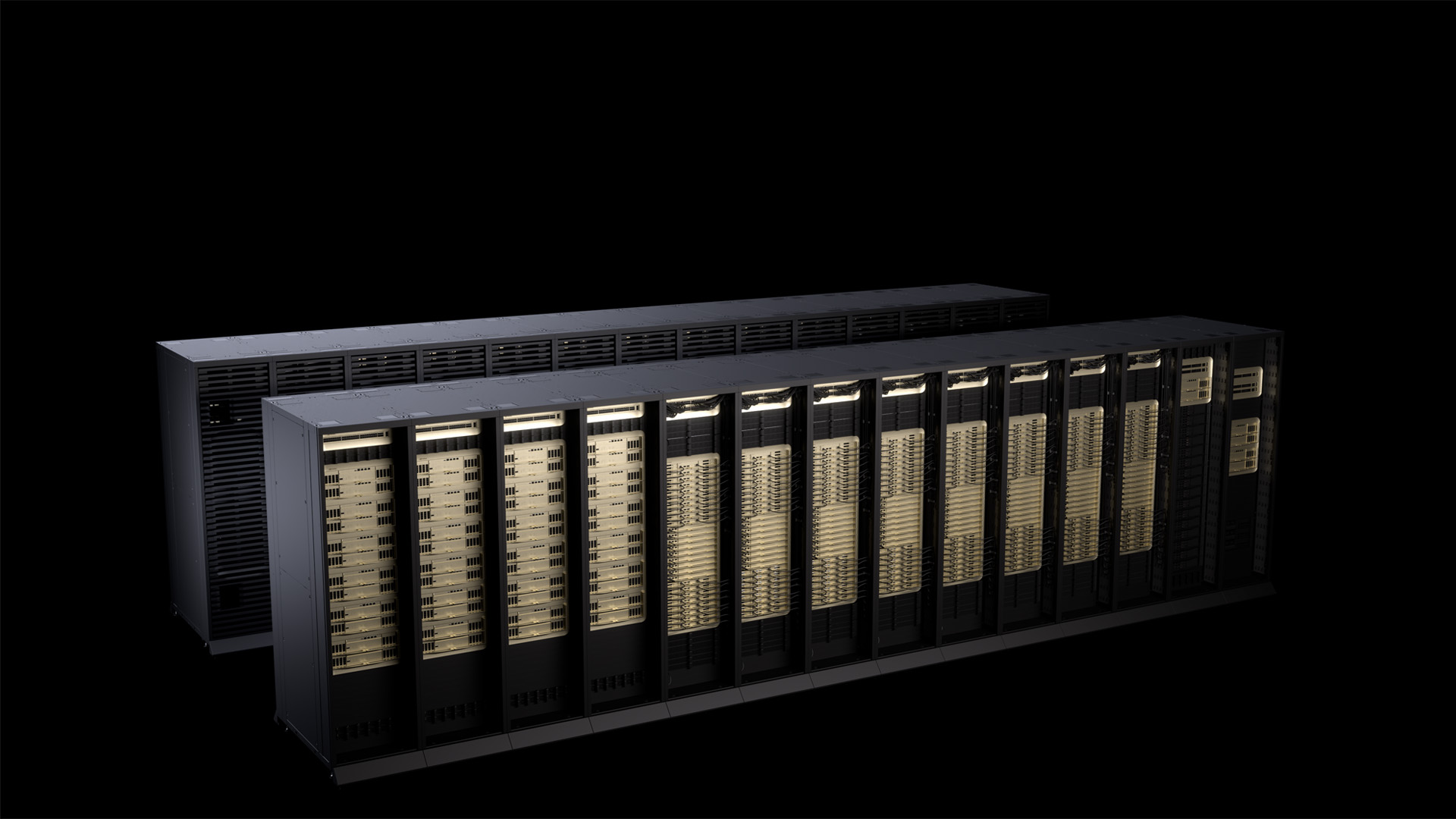

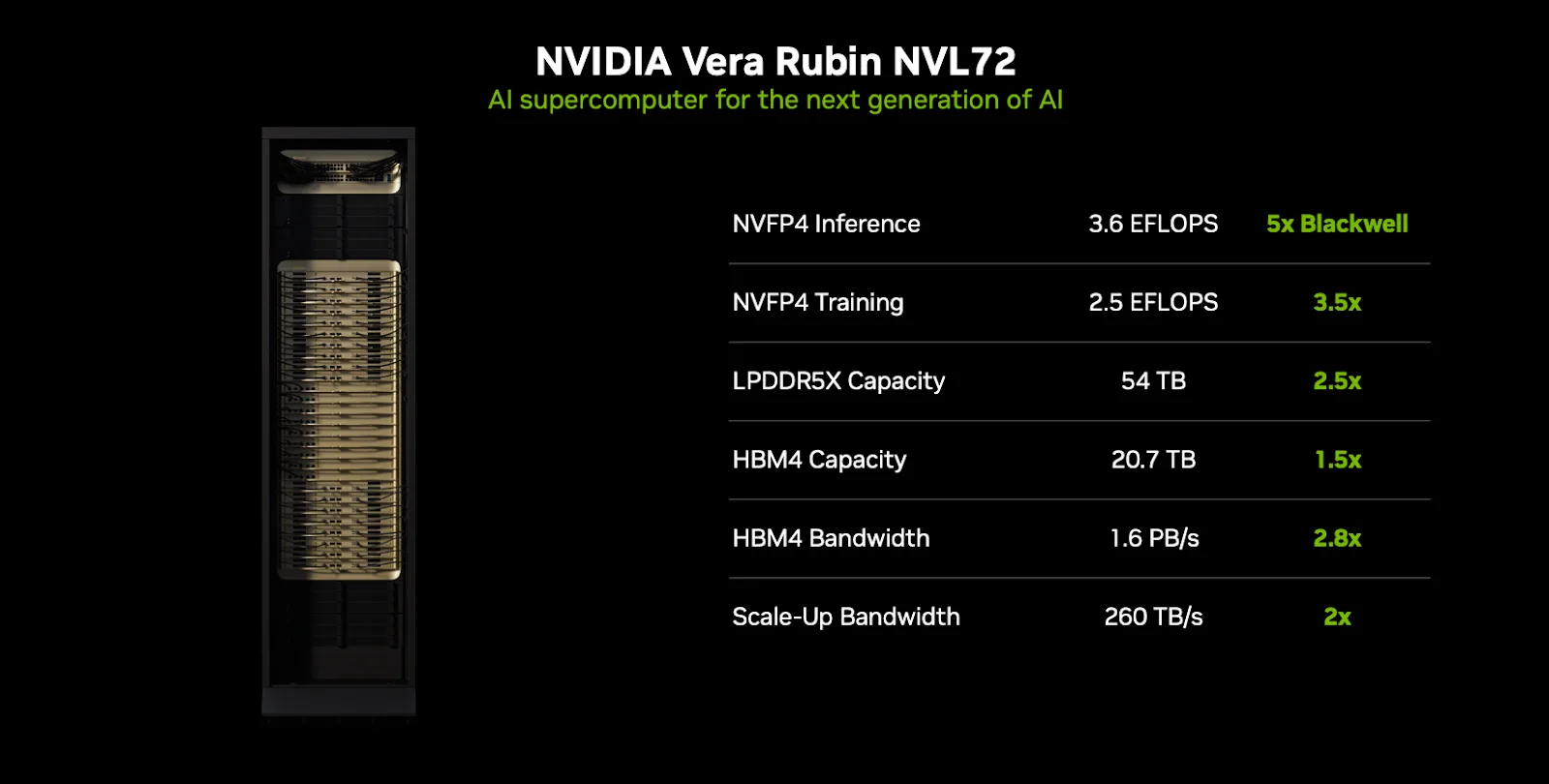

Vera Rubin NVL72 — The 3.6 ExaFLOPS Rack

The Vera Rubin NVL72 unifies 72 Rubin GPUs and 36 Vera CPUs into a single rack. That’s 18 compute trays, 9 NVLink switch trays, 1.3 million individual components, and roughly 1,300 chips packed into a third-generation MGX liquid-cooled rack weighing approximately 4,000 pounds. Every compute tray houses 2 Rubin Superchips (each pairing one Rubin GPU with one Vera CPU), ConnectX-9 SuperNICs providing 1.6 Tb/s per GPU, and a BlueField-4 DPU for infrastructure offload.

The aggregate numbers are staggering: 3.6 exaFLOPS of NVFP4 inference, 2.52 exaFLOPS of training, 20.7TB of HBM4 with 1.6 PB/s bandwidth, 54TB of LPDDR5X connected to the Vera CPUs, and 260 TB/s of NVLink 6 scale-up bandwidth. Each compute tray delivers 200 PFLOPS of NVFP4 performance with 2TB of fast memory and 14.4 TB/s of NVLink 6 bandwidth.

The efficiency gains over Blackwell are transformative: train large MoE models with one-quarter the GPUs, up to 10x higher inference throughput per watt, and one-tenth the cost per token. Compared to the Hopper H200, that’s roughly 50x more tokens per watt. As Tom’s Hardware reported, this isn’t incremental improvement — it’s a redefinition of AI infrastructure economics.

The Full Networking Stack — ConnectX-9, BlueField-4, Spectrum-6

ConnectX-9 SuperNIC provides 800 Gb/s per port and 1.6 Tb/s per GPU of network bandwidth with programmable congestion control, traffic shaping, and IPsec/PSP data-in-transit encryption.

BlueField-4 DPU integrates 64 Grace CPU cores (Neoverse V2) with 128GB LPDDR5X memory, handling 800 Gb/s AES-XTS encryption, 128K-host cloud networking, and 20 million IOPs of NVMe storage disaggregation. The Spectrum-6 Ethernet switch delivers 102.4 Tb/s per chip with 32 silicon photonics engines, achieving 64x better signal integrity than traditional transceivers and approximately 5x better network power efficiency than pluggable-based systems.

Groq 3 LPU — The Seventh Chip

The surprise addition at GTC 2026 was the NVIDIA Groq 3 LPU. The LPX rack houses 256 LPU processors with 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth. NVIDIA claims up to 35x higher inference throughput per megawatt and up to 10x more revenue opportunity for trillion-parameter models. This chip specifically targets the latency-sensitive inference workloads that define the agentic AI era. The integration of Groq technology — acquired for roughly $20 billion in NVIDIA’s largest deal ever — signals that NVIDIA sees deterministic, low-latency inference as a fundamentally different compute problem from training, one that requires purpose-built silicon rather than general-purpose GPU adaptations.

Blackwell vs. Vera Rubin — The Generational Leap

The numbers speak clearly: up to 5x inference throughput, 3.5x training throughput, 2.8x memory bandwidth, 2x NVLink bandwidth, and 1.6x transistor count. But the most important metric isn’t raw performance — it’s efficiency. At 10x performance per watt and 1/10th cost per token, Vera Rubin fundamentally changes the economics of running AI at scale.

Jensen Huang now projects “at least $1 trillion” in orders for Blackwell and Vera Rubin systems through 2027 — more than doubling the previous $500 billion estimate. When Anthropic CEO Dario Amodei says the platform “gives us the compute, networking and system design to keep delivering while advancing the safety,” and OpenAI CEO Sam Altman says they’ll “run more powerful models and agents at massive scale” — the industry has clearly placed its bets.

Availability and What Comes Next

NVIDIA Vera Rubin is in full production now. Partner-based products ship in H2 2026. Looking further ahead, Vera Rubin Ultra — featuring a Kyber design with 144 GPUs arranged vertically for higher density and lower latency — is expected in 2027. The NVIDIA Inference Context Memory Storage (ICMS) system, powered by BlueField-4 with Ethernet-attached flash, will also be available to manage KV caches for long-context inference, delivering up to 5x tokens-per-second improvement and 5x power efficiency gains over traditional storage approaches.

Vera Rubin isn’t just a faster GPU — it’s a vertically integrated AI infrastructure platform where CPU, GPU, networking, storage, and security are co-designed as a single system. With agentic AI, long-context reasoning, and trillion-parameter models becoming production realities in 2026-2027, this platform is positioned to become the standard unit of the AI factory. The question isn’t whether your organization needs this level of compute — it’s when. And with Mistral AI CTO Timothee Lacroix noting that the storage architecture is “ideally positioned to ensure that our models can maintain coherence and speed when reasoning across massive datasets,” even the storage layer of this platform is being designed for the agentic future.

Get weekly AI, music, and tech trends delivered to your inbox.