April 25, 2025

Six months ago, getting your hands on an NVIDIA H200 GPU instance felt like winning the lottery. Fast forward to Q2 2025, and NVIDIA H200 cloud GPU availability has expanded dramatically: 28 providers now offer HBM3e instances. But here’s the catch: on-demand prices just jumped 26% in a single month. The GPU shortage isn’t over; it’s just changing shape.

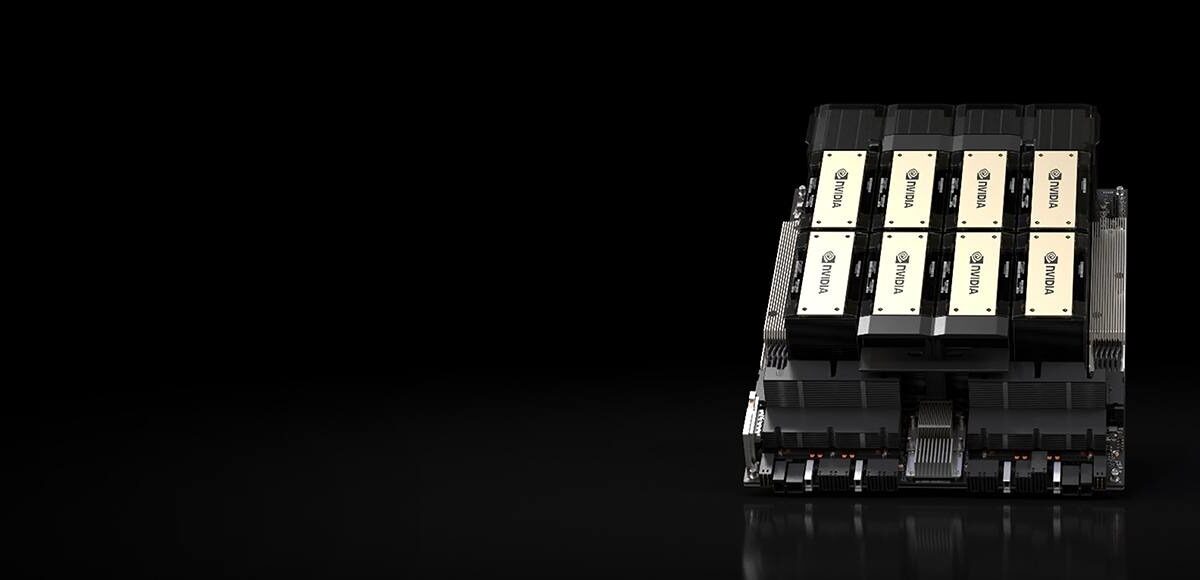

What Makes the NVIDIA H200 Cloud GPU a Big Deal

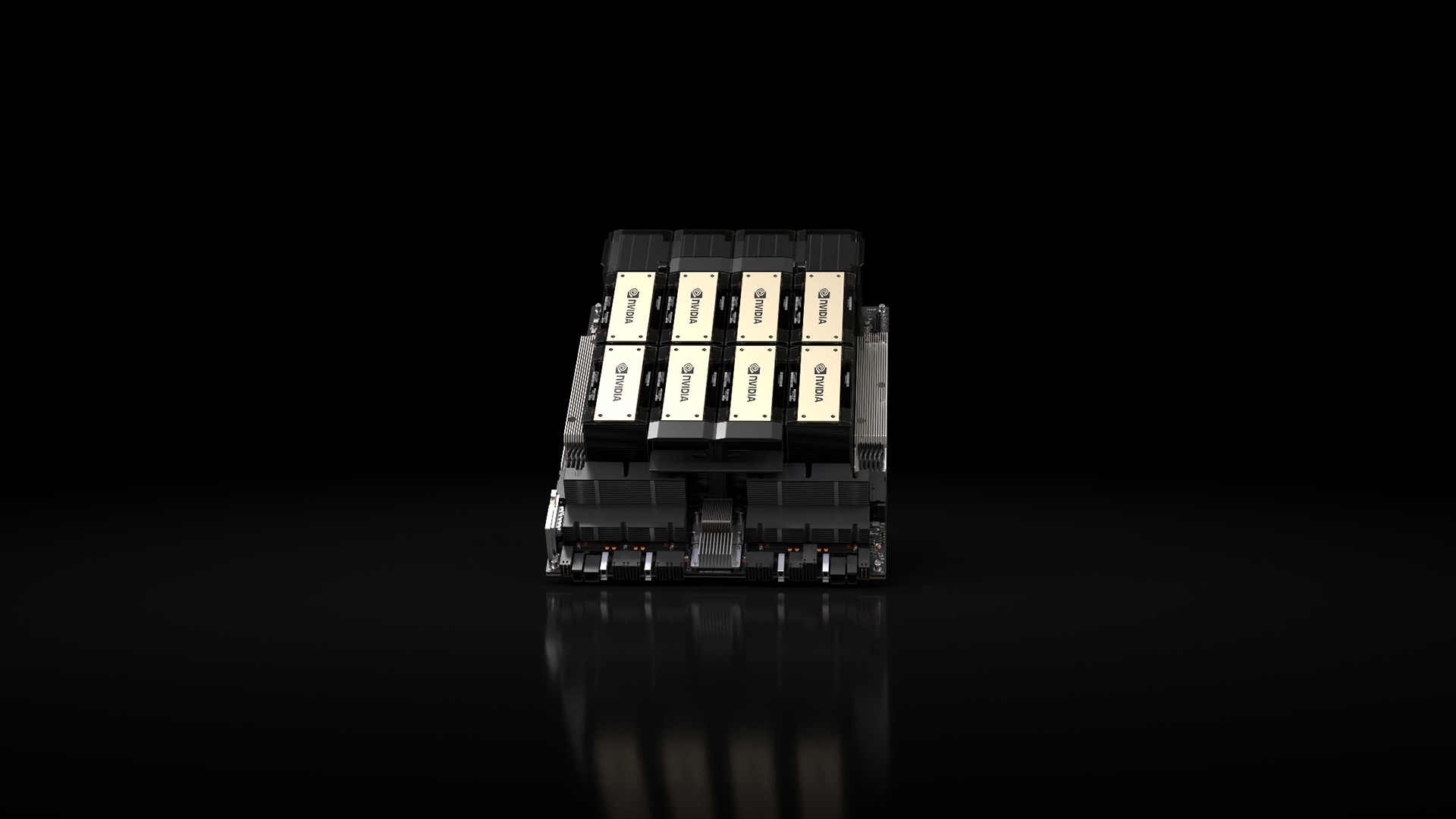

The NVIDIA H200, built on the Hopper architecture, represents a significant memory upgrade over its predecessor. While the H100 ships with 80GB of HBM3 at 3.35TB/s bandwidth, the H200 packs 141GB of HBM3e at 4.8TB/s — nearly doubling the memory capacity and delivering a 43% bandwidth increase. This isn’t just a spec-sheet improvement; it fundamentally changes what you can do with a single GPU.
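A quick check of the two headline ratios, using only the spec figures quoted above (the variable names are illustrative):

```python
# Per-GPU memory specs quoted above (H100 SXM vs H200 SXM).
H100 = {"memory_gb": 80, "bandwidth_tb_s": 3.35}
H200 = {"memory_gb": 141, "bandwidth_tb_s": 4.8}

# Capacity: how many times more HBM the H200 carries.
capacity_ratio = H200["memory_gb"] / H100["memory_gb"]

# Bandwidth: percentage increase over the H100.
bandwidth_gain_pct = (H200["bandwidth_tb_s"] / H100["bandwidth_tb_s"] - 1) * 100

print(f"Memory capacity: {capacity_ratio:.2f}x")        # 1.76x
print(f"Memory bandwidth: +{bandwidth_gain_pct:.0f}%")  # +43%
```

So "nearly doubling" works out to about 1.76x the capacity, alongside a 43% bandwidth gain.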

For large language model inference, the numbers tell the story clearly. According to NVIDIA’s official benchmarks, the H200 delivers 1.9x faster inference on Llama 2 70B and 1.6x faster inference on GPT-3 175B compared to the H100. An 8-way HGX H200 system delivers more than 32 PFLOPS of FP8 compute and 1.1TB of aggregate HBM, enough to fit models on a single server that previously required multi-node setups.

The key specifications that matter for cloud deployments:

- Compute: 4 PFLOPS of FP8 Tensor Core performance (matching the H100 on compute, winning on memory)

- Power: a 700W TDP in the SXM form factor

- Configurations: HGX systems with 4 or 8 GPUs, MGX NVL setups with up to 8 GPUs, and the GH200 Grace Hopper Superchip for integrated CPU-GPU workloads
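For capacity planning it helps to see how the per-GPU figures scale to a node. A naive sketch that multiplies per-GPU specs by GPU count for the 4- and 8-GPU HGX configurations; it ignores everything outside the GPUs, and the class and function names are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    memory_gb: float   # HBM capacity per GPU
    fp8_pflops: float  # peak FP8 Tensor Core throughput per GPU
    tdp_w: int         # maximum board power per GPU (SXM)


# Per-GPU H200 SXM figures as quoted above.
H200_SXM = GpuSpec(memory_gb=141, fp8_pflops=4.0, tdp_w=700)


def node_totals(gpu: GpuSpec, count: int) -> dict[str, float]:
    """Naive per-node aggregate: each per-GPU figure multiplied by GPU count.

    Ignores host CPUs, NVSwitch, NICs, and cooling, so real node power is
    higher, and peak FLOPS are never fully realized in practice.
    """
    return {
        "memory_tb": gpu.memory_gb * count / 1000,
        "fp8_pflops": gpu.fp8_pflops * count,
        "gpu_power_kw": gpu.tdp_w * count / 1000,
    }


for n in (4, 8):
    totals = node_totals(H200_SXM, n)
    print(f"{n}x H200: {totals['memory_tb']:.3f} TB HBM, "
          f"{totals['fp8_pflops']:.0f} PFLOPS FP8, "
          f"{totals['gpu_power_kw']:.1f} kW GPU power")
```

The 8-GPU row reproduces the 1.1TB and 32 PFLOPS figures quoted earlier; actual node power draw is higher once CPUs, switches, and networking are included.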

Cloud GPU Availability: 28+ Providers and Counting

The most notable shift in Q2 2025 is just how many providers now offer H200 instances. According to cloud GPU pricing comparison data, over 28 providers list H200 availability — a dramatic expansion from the handful of early access partners in late 2024.

Hyperscaler Offerings

The major cloud platforms have integrated H200 into their GPU instance lineups:

- AWS: p5e.48xlarge and p5en.48xlarge instances with 8x H200 GPUs

- Google Cloud: a3-ultragpu-8g instances offering 8x H200 with high-bandwidth networking

- Microsoft Azure: ND H200 v5 instances with 8x H200 GPUs, now generally available

- Oracle Cloud: BM.GPU.H200.8 bare metal instances for maximum performance

Specialized GPU Cloud Providers

Where things get interesting is the specialized provider segment. Companies like CoreWeave, Lambda, RunPod, Nebius AI Cloud, GMI Cloud, Hyperstack, Genesis Cloud, and Jarvislabs are offering H200 instances at significantly lower price points — typically 30-60% cheaper than hyperscaler equivalents. CoreWeave’s 8x H200 configuration runs at approximately $50.44/hr, while Jarvislabs offers entry at around $3.80/hr per GPU.
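Node-level and per-GPU prices are easy to mix up when comparing providers. A small sketch that normalizes the quoted CoreWeave node price to a per-GPU rate before comparing it with the Jarvislabs entry price (function names are illustrative):

```python
def per_gpu_hourly(node_price_per_hr: float, gpus_per_node: int) -> float:
    """Normalize a whole-node hourly price to a per-GPU hourly price."""
    return node_price_per_hr / gpus_per_node


def percent_cheaper(price: float, reference: float) -> float:
    """How much cheaper `price` is than `reference`, as a percentage."""
    return (1 - price / reference) * 100


# Approximate figures quoted above.
coreweave_per_gpu = per_gpu_hourly(50.44, 8)  # 8x H200 node
jarvislabs_per_gpu = 3.80                     # single-GPU entry price

print(f"CoreWeave: ${coreweave_per_gpu:.2f} per GPU-hour")
print(f"Jarvislabs entry price is "
      f"{percent_cheaper(jarvislabs_per_gpu, coreweave_per_gpu):.0f}% below that")
```

Treat the percentage loosely: a single on-demand GPU and a full 8-GPU node with high-speed interconnect are not equivalent products, so this only puts both prices in the same units.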

NVIDIA H200 Cloud GPU Pricing: The 26% Spike Explained

Here’s where the story gets complicated. On-demand pricing for H200 instances currently ranges from $3.72 to $10.60 per GPU per hour. The average on-demand price has risen approximately 26% since March 2025, climbing from $2.97/hr to $3.73/hr. That’s a significant jump in just one month.
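The 26% figure follows directly from the two averages. A quick check, plus what that rise means for a hypothetical 8-GPU fleet running 24 hours a day:

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100


# Average on-demand $/GPU-hour figures quoted above (March 2025 vs current).
march_avg, current_avg = 2.97, 3.73
print(f"{pct_change(march_avg, current_avg):.1f}%")  # 25.6%

# Hypothetical: the same per-GPU rise applied to 8 GPUs billed for a full day.
extra_per_day = (current_avg - march_avg) * 8 * 24
print(f"${extra_per_day:.2f} more per day")
```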

Several factors are driving this increase:

- Demand surge: H200 demand has grown approximately 40% quarter-over-quarter, outpacing supply expansion

- Blackwell transition uncertainty: Organizations are securing H200 capacity as a reliable bridge while waiting for B200/B300 availability

- AI inference scaling: The shift from training-heavy to inference-heavy workloads favors the H200’s memory advantage

- Reserved instance competition: Providers are raising on-demand rates to push customers toward committed-use contracts

For teams planning budgets, the pricing landscape breaks down roughly as follows:

- Hyperscalers: $6-$10+ per GPU/hr on-demand, with reserved discounts of 20-40%

- Specialized providers: $3.72-$5.50 per GPU/hr on-demand, with more flexible commitment terms

Reserved instances across all providers offer additional savings, making long-term planning critical for cost management.
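A rough monthly budget sketch using the ranges above. The $8.00 rate, the 8-GPU fleet, and the 730-hour month are assumptions chosen for illustration, not provider quotes:

```python
HOURS_PER_MONTH = 730  # ~24 * 365 / 12; a common cloud billing approximation


def monthly_cost(price_per_gpu_hr: float, gpus: int, discount: float = 0.0) -> float:
    """Estimated monthly spend for GPUs billed around the clock.

    discount is a fraction: 0.30 means 30% off the on-demand rate.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return price_per_gpu_hr * (1 - discount) * gpus * HOURS_PER_MONTH


# Hypothetical 8-GPU node at an $8.00/GPU-hr hyperscaler on-demand rate,
# compared with the 20% and 40% ends of the reserved-discount range.
rate, gpus = 8.00, 8
for label, d in (("on-demand", 0.0), ("reserved, 20% off", 0.20), ("reserved, 40% off", 0.40)):
    print(f"{label:>18}: ${monthly_cost(rate, gpus, d):,.0f}/month")
```

Reserved capacity is usually billed whether or not it is used, so a reservation at discount `d` only beats on-demand when utilization stays above `1 - d` (60% for a 40% discount).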

H200 vs H100: When the Upgrade Actually Matters

The H200 isn’t a universal upgrade — its advantages are workload-specific. The compute performance is essentially identical to the H100 at 4 PFLOPS FP8. Where the H200 pulls ahead is memory-bound workloads:

- LLM inference: 1.9x faster on Llama 2 70B, 1.6x on GPT-3 175B — the 141GB HBM3e eliminates memory bottlenecks for large models

- Training large transformers: Up to 1.4x faster training for transformer architectures thanks to reduced memory swapping

- Multi-model serving: The 141GB capacity lets you serve multiple models simultaneously without splitting across GPUs

- RAG pipelines: Larger embedding caches fit entirely in GPU memory, reducing latency for retrieval-augmented generation

For compute-bound tasks like smaller model training or image generation where you’re not hitting memory walls, H100 instances remain a cost-effective option. The price premium for H200 only makes sense when you’re genuinely bottlenecked on memory bandwidth or capacity.
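Why does bandwidth matter so much for inference? A common back-of-envelope model: when decoding one sequence at a time, each new token requires reading every weight from HBM, so bandwidth divided by weight size bounds tokens per second. The sketch below applies that rule of thumb to a hypothetical 70B model; it is an idealized ceiling, not a benchmark:

```python
def decode_tokens_per_sec_ceiling(params_billion: float, bytes_per_param: float,
                                  bandwidth_tb_s: float) -> float:
    """Idealized single-stream decode ceiling for a memory-bandwidth-bound model.

    Assumes every weight is streamed from HBM once per generated token and that
    nothing else costs time: no KV-cache reads, no compute stalls, perfect
    bandwidth utilization. Real single-stream numbers land below this bound.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes


# Hypothetical 70B model with FP8 weights (1 byte per parameter), which fits on both GPUs.
for name, bandwidth in (("H100", 3.35), ("H200", 4.8)):
    ceiling = decode_tokens_per_sec_ceiling(70, 1, bandwidth)
    print(f"{name}: <= {ceiling:.1f} tokens/s per sequence")
```

Under this model the H200's advantage equals the bandwidth ratio, about 1.43x. NVIDIA's larger 1.9x figure is a throughput benchmark; the extra capacity allowing bigger batches and KV caches is a plausible contributor, though that is an interpretation rather than a breakdown NVIDIA publishes.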

Supply Chain Reality Check: Q2 2025 and Beyond

Despite the improved availability, the H200 supply chain remains constrained. Lead times currently sit at 8-20 weeks depending on order volume and channel, according to industry reports. Two key factors are shaping the supply picture:

HBM3e production capacity: Memory makers including SK hynix and Micron supply the six HBM3e stacks in each H200. HBM3e demand is exceeding expectations across the industry, for NVIDIA and for competing AI accelerators alike. This memory bottleneck is the primary constraint on H200 production.

The China variable: As Tom’s Hardware reported, NVIDIA is preparing shipments of approximately 82,000 H200 GPUs to China, with initial orders filled from existing stock: 5,000-10,000 chip modules, or 40,000-80,000 individual chips. These shipments carry a 25% tariff, and NVIDIA is opening orders for new production capacity in Q2. This diverts meaningful supply from the global market.

According to industry analysis, NVIDIA’s high-end GPU shipments are projected to grow 55% year-over-year in 2025. However, much of that growth is Blackwell-driven. NVIDIA is unlikely to increase H-series wafer starts since Blackwell commands higher average selling prices. The H200 supply will expand, but at a measured pace.

TrendForce noted that H200 orders began shipping in Q3 2024, with strong demand from hyperscalers driving supply chain momentum. Server OEMs have been ramping production capacity, but the combination of sustained demand growth and HBM3e constraints means spot availability remains inconsistent.

The Blackwell Bridge: Why H200 Demand Stays Strong

One of the more interesting dynamics in Q2 2025 is the H200’s role as a bridge GPU. The Blackwell B200 and B300 represent the next generation, but their ramp is still in early stages. Organizations that need GPU capacity now — for production inference, fine-tuning, or scaling existing AI services — can’t afford to wait 6-12 months for Blackwell availability to mature.

This creates a self-reinforcing demand cycle for the H200. Companies secure H200 instances today, build their infrastructure around them, and plan to migrate to Blackwell when it becomes cost-effective. The result is sustained H200 demand even as the next generation approaches — which explains why prices are rising despite expanded availability.

My Take: What This Means for AI Teams Planning Infrastructure

Having watched GPU infrastructure cycles for years — from the CUDA compute boom through the crypto-driven shortages to today’s AI gold rush — I see a pattern that most teams are missing. The H200 supply expansion feels like progress, and in absolute terms it is. But the 26% price increase in a single month tells you everything about where the market actually stands: demand is growing faster than supply.

For teams building production AI systems, my practical recommendation is this: don’t wait for prices to drop. The Blackwell transition will eventually shift demand away from H200, but that’s a Q4 2025 or Q1 2026 story at the earliest. If you need inference capacity now, lock in reserved pricing with specialized providers, where the cost differential is substantial. A 30-60% savings over hyperscaler pricing, accumulated over months of production workloads, adds up to real money.
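To put the savings claim in numbers, a sketch assuming a hypothetical 16-GPU fleet, an $8.00/GPU-hr hyperscaler rate, and round-the-clock use for six months:

```python
HOURS_PER_MONTH = 730  # ~24 * 365 / 12


def cumulative_savings(hyperscaler_rate: float, savings_pct: float,
                       gpus: int, months: int) -> float:
    """Total USD saved by running `gpus` GPUs around the clock for `months`
    months at a provider that is `savings_pct` percent cheaper per GPU-hour."""
    saved_per_gpu_hr = hyperscaler_rate * savings_pct / 100
    return saved_per_gpu_hr * gpus * HOURS_PER_MONTH * months


# Hypothetical inputs: 16 GPUs, $8.00/GPU-hr hyperscaler rate, 6 months.
for pct in (30, 60):
    print(f"{pct}% cheaper: ${cumulative_savings(8.00, pct, 16, 6):,.0f} saved")
```

Savings scale linearly with fleet size and duration, so the gap between the 30% and 60% ends of the range matters more the longer the workload runs.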

The more interesting question is the architectural one. The H200’s 141GB of HBM3e doesn’t just make existing workloads faster; it enables workloads that weren’t practical on the H100. Running a 70B parameter model for inference on a single GPU with minimal quantization (FP8 weights take roughly 70GB, leaving room for a large KV cache), serving multiple specialized models from one instance, keeping massive embedding tables in GPU memory for RAG: these are qualitatively different capabilities, not just performance improvements. Teams that think of the H200 as “a faster H100” are leaving its most valuable features on the table.
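The single-GPU 70B case is easy to check with arithmetic. A sketch assuming a Llama-2-70B-like shape (80 layers, 8 grouped-query KV heads, head dimension 128, parameter count rounded to 70B), a 16-bit KV cache, decimal gigabytes, and no runtime overhead unless you pass one; the class and function names are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    params_billion: float
    layers: int
    kv_heads: int   # key/value heads (grouped-query attention)
    head_dim: int


# Assumed Llama-2-70B-like shape; real checkpoints differ slightly.
LLAMA_70B_LIKE = ModelShape(params_billion=70, layers=80, kv_heads=8, head_dim=128)


def weight_gb(m: ModelShape, bytes_per_param: float) -> float:
    """Weight footprint in decimal GB (1 GB = 1e9 bytes)."""
    return m.params_billion * bytes_per_param


def kv_bytes_per_token(m: ModelShape, bytes_per_elem: int = 2) -> int:
    """One K and one V vector per layer per KV head, 16-bit by default."""
    return 2 * m.layers * m.kv_heads * m.head_dim * bytes_per_elem


def max_cached_tokens(m: ModelShape, gpu_gb: float, bytes_per_param: float,
                      overhead_gb: float = 0.0) -> int:
    """Tokens of KV cache that fit after the weights and a fixed overhead."""
    free_bytes = (gpu_gb - weight_gb(m, bytes_per_param) - overhead_gb) * 1e9
    return max(0, int(free_bytes // kv_bytes_per_token(m)))


for label, bpp in (("BF16", 2), ("FP8", 1)):
    print(f"{label}: {weight_gb(LLAMA_70B_LIKE, bpp):.0f} GB of weights, "
          f"room for ~{max_cached_tokens(LLAMA_70B_LIKE, 141, bpp):,} cached tokens on 141 GB")
```

Under these assumptions, 16-bit weights leave room for only about 3,000 cached tokens, less than a single 4,096-token Llama 2 context before any framework overhead, while FP8 weights leave room for over 200,000. That is why the practical single-GPU 70B setup leans on FP8.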

And one more thing about the China shipments: 82,000 H200 GPUs is a non-trivial portion of global supply. When those chips leave the pool, everyone else feels it. Factor that into your procurement timeline.

Practical Recommendations for Q2 2025

Based on the current market dynamics, here are the key takeaways for teams evaluating H200 cloud GPU options:

- For memory-bound inference workloads: H200 is the clear choice. On 70B-class models, the 1.9x inference speedup lowers cost per token whenever the H200’s hourly rate is less than 1.9x the H100’s

- For training workloads under 80GB memory requirements: H100 instances remain cost-effective. Don’t pay the H200 premium if you’re not using the extra memory

- For budget-conscious teams: Specialized providers like Lambda, RunPod, and Jarvislabs offer entry points at $3.72-$3.80 per GPU/hr, so compare carefully before defaulting to hyperscaler instances

- For long-term planning: Secure reserved pricing now. On-demand prices are trending upward and unlikely to reverse before Blackwell matures

- For Blackwell-curious teams: Treat H200 as your production platform for the next 6-9 months and begin Blackwell evaluation in parallel when instances become available
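With hourly cloud rentals there is no upfront premium to pay back; the useful comparison is cost per token. A minimal sketch, where the H100 baseline throughput and both hourly prices are hypothetical placeholders and only the 1.9x speedup comes from the figures above:

```python
def cost_per_million_tokens(price_per_gpu_hr: float, tokens_per_sec: float) -> float:
    """USD per one million generated tokens for a single GPU at steady throughput."""
    return price_per_gpu_hr / (tokens_per_sec * 3600) * 1_000_000


def h200_cheaper_per_token(h100_price: float, h200_price: float, speedup: float) -> bool:
    """The H200 wins on cost per token whenever its price ratio is below its speedup."""
    return h200_price / h100_price < speedup


# Hypothetical inputs: baseline throughput and both prices are placeholders;
# the 1.9x speedup is NVIDIA's Llama 2 70B inference figure.
H100_TPS, SPEEDUP = 1_000.0, 1.9
h100_price, h200_price = 4.00, 5.00

h100_cost = cost_per_million_tokens(h100_price, H100_TPS)
h200_cost = cost_per_million_tokens(h200_price, H100_TPS * SPEEDUP)
print(f"H100: ${h100_cost:.2f}/M tokens, H200: ${h200_cost:.2f}/M tokens")
print("H200 cheaper per token:", h200_cheaper_per_token(h100_price, h200_price, SPEEDUP))
```

The break-even rule falls out of the arithmetic: the H200 is cheaper per token exactly when its price ratio to the H100 is below its throughput ratio. Swap in measured throughput for your own model and batch size before relying on it.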

The AI GPU landscape is evolving rapidly, but in Q2 2025, the NVIDIA H200 represents the sweet spot between proven reliability and cutting-edge capability. The question isn’t whether to adopt H200 — it’s how to do it cost-effectively while the supply-demand balance continues to shift.
