
March 16, 2026

NVIDIA GTC 2026 Vera Rubin just rewrote every assumption we had about where AI compute is heading, and the biggest shock was not the GPU. For the first time in NVIDIA’s history, the CPU stole the show. Jensen Huang spent nearly as much time on the 88-core Vera CPU as he did on the 336-billion-transistor Rubin GPU, and that tells you everything about where the industry is pivoting.

NVIDIA GTC 2026 Vera Rubin: The Numbers That Matter

Let me lay out the raw specs first, because they are staggering. The Rubin GPU is a dual-die monster fabricated on TSMC’s 3nm process node, packing 336 billion transistors — a 1.6x increase over Blackwell. It houses 224 Streaming Multiprocessors equipped with fifth-generation Tensor Cores, optimized for NVFP4 and FP8 execution. The headline performance: 50 PFLOPS of NVFP4 inference and 35 PFLOPS of NVFP4 training. That is a 5x inference improvement and 3.5x training improvement over the Blackwell generation.
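To put those multipliers in context, here is a quick sketch that backs out the Blackwell baselines they imply. The dictionary layout and function name are my own, and the derived baselines are simply what the quoted ratios imply, not official Blackwell specifications.

```python
# Back out the Blackwell baselines implied by the Rubin figures quoted above.
# These are derived values, not official Blackwell specifications.

RUBIN = {
    # metric: (Rubin value, claimed multiple over Blackwell)
    "transistors_billion": (336, 1.6),
    "nvfp4_inference_pflops": (50, 5.0),
    "nvfp4_training_pflops": (35, 3.5),
}


def implied_baseline(value, multiple):
    """Return the previous-generation value implied by an 'Nx' claim."""
    return value / multiple


implied = {name: implied_baseline(v, m) for name, (v, m) in RUBIN.items()}

for name, base in implied.items():
    print(f"{name}: implied Blackwell baseline ~ {base:.1f}")
```

Under these figures, the implied baselines come out to roughly 210 billion transistors and about 10 PFLOPS of NVFP4 for both inference and training, so the quoted ratios are at least internally consistent.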

But the memory story is equally dramatic. Rubin is the first GPU to ship with HBM4 memory, delivering up to 288GB per GPU and 22 TB/s of bandwidth — a 2.8x improvement over Blackwell’s HBM3e. When you are running trillion-parameter models, that bandwidth advantage translates directly into tokens per second.
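Here is a rough way to see why bandwidth maps to tokens per second. The sketch uses a simple bandwidth-bound decode model: at batch size 1, every generated token has to read all of the weights once, so the ceiling is bandwidth divided by model size. The 400B-parameter model and 4-bit weights are hypothetical choices that fit in 288GB, and the estimate ignores KV cache traffic, batching, and compute limits.

```python
# Bandwidth-bound decode estimate (batch size 1):
# each generated token reads every weight once, so
#   tokens/s <= memory_bandwidth / model_bytes
# Model size and precision are hypothetical, chosen to fit in 288 GB.

def max_decode_tokens_per_s(bandwidth_tb_s, params_billion, bytes_per_param):
    """Upper bound on single-GPU decode rate under a pure bandwidth limit."""
    model_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / model_bytes


RUBIN_BW_TB_S = 22.0                              # quoted HBM4 bandwidth
IMPLIED_BLACKWELL_BW_TB_S = RUBIN_BW_TB_S / 2.8   # implied by the 2.8x claim

PARAMS_BILLION = 400     # hypothetical dense model
BYTES_PER_PARAM = 0.5    # 4-bit weights

rubin_tps = max_decode_tokens_per_s(RUBIN_BW_TB_S, PARAMS_BILLION, BYTES_PER_PARAM)
blackwell_tps = max_decode_tokens_per_s(
    IMPLIED_BLACKWELL_BW_TB_S, PARAMS_BILLION, BYTES_PER_PARAM
)

print(f"Rubin ceiling:     {rubin_tps:.0f} tokens/s")
print(f"Blackwell ceiling: {blackwell_tps:.0f} tokens/s")
print(f"Ratio:             {rubin_tps / blackwell_tps:.1f}x")
```

In this simplified model the speedup equals the bandwidth ratio exactly, which is the point: when decode is memory-bound, tokens per second scales with bandwidth rather than with peak FLOPS.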

Why the CPU Is the Real Star of GTC 2026

Here is the part most coverage is missing. NVIDIA quietly admitted something fundamental at this keynote: GPU performance alone is no longer sufficient to sustain throughput at AI factory scale. The bottleneck has shifted. When you deploy thousands of GPUs across NVL72 racks, the CPU becomes the data movement engine, orchestrating memory, managing control flow, and feeding those hungry Tensor Cores without stalling the pipeline.

Enter the Vera CPU. This is not a rebadged Arm chip. NVIDIA designed 88 custom “Olympus” cores from scratch on the Armv9.2 architecture, delivering 176 threads via spatial multithreading. The memory subsystem is where Vera flexes: 1.2 TB/s of memory bandwidth (2.4x higher than Grace), up to 1.5TB of LPDDR5X capacity (3x greater than Grace), and a 1.8 TB/s NVLink-C2C link for coherent CPU-GPU data access. That last number is critical: it means the CPU and GPU can share memory space without the latency penalty that crippled previous heterogeneous compute architectures.
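A few derived numbers make the Vera figures easier to reason about. The sketch below uses only the values quoted above. The 288GB transfer is a hypothetical working set, and the transfer time is an idealized peak-rate figure that ignores protocol overhead and assumes the full 1.8 TB/s is available in one direction.

```python
# Derived figures from the Vera CPU numbers quoted above.
CORES = 88
THREADS = 176
MEM_BW_TB_S = 1.2
MEM_CAPACITY_TB = 1.5
C2C_BW_TB_S = 1.8

threads_per_core = THREADS // CORES
bw_per_core_gb_s = MEM_BW_TB_S * 1000 / CORES

# Grace baselines implied by the "2.4x" and "3x" claims (derived, not official).
implied_grace_bw_tb_s = MEM_BW_TB_S / 2.4
implied_grace_capacity_tb = MEM_CAPACITY_TB / 3


def transfer_seconds(size_gb, link_tb_s):
    """Idealized time to move size_gb over a link running at its peak rate."""
    return size_gb / (link_tb_s * 1000)


# Hypothetical example: stream a 288 GB working set over NVLink-C2C.
c2c_seconds = transfer_seconds(288, C2C_BW_TB_S)

print(f"threads per core:         {threads_per_core}")
print(f"memory bandwidth per core: {bw_per_core_gb_s:.1f} GB/s")
print(f"implied Grace bandwidth:   {implied_grace_bw_tb_s:.2f} TB/s")
print(f"implied Grace capacity:    {implied_grace_capacity_tb:.2f} TB")
print(f"288 GB over NVLink-C2C:    {c2c_seconds:.2f} s")
```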

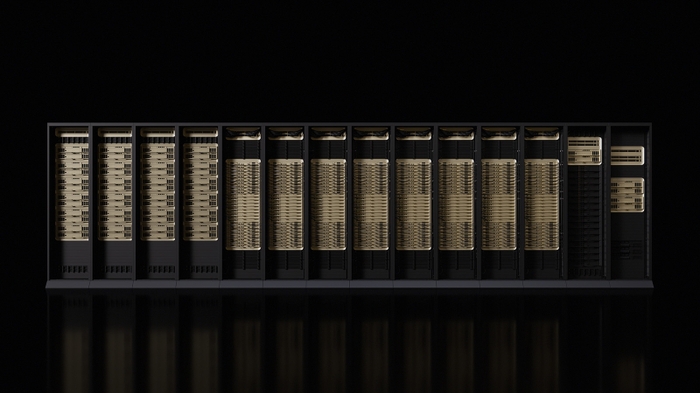

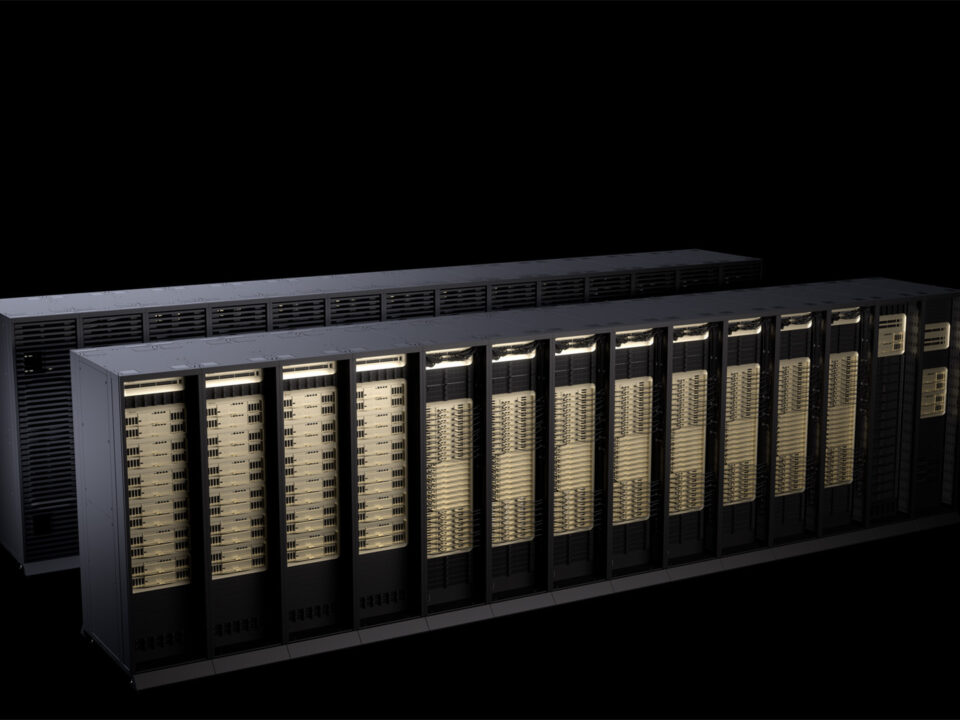

The NVL72 Rack: 72 GPUs, 36 CPUs, One AI Supercomputer

The Vera Rubin NVL72 is where all six co-designed chips come together: Vera CPU, Rubin GPU, NVLink 6 switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet switch. A single rack houses 72 Rubin GPUs and 36 Vera CPUs connected via 260 TB/s of aggregate scale-up bandwidth. Each GPU gets 3.6 TB/s of NVLink 6 bandwidth — double the previous generation.
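The rack-level totals follow from the per-GPU figures by simple multiplication. The sketch below checks that arithmetic using only numbers quoted in this article. The variable names are my own, and the totals are naive sums that assume every GPU reaches its peak figure at once.

```python
# Rack-level totals for the NVL72, derived from the per-GPU figures above.
GPUS = 72
CPUS = 36
NVLINK_PER_GPU_TB_S = 3.6
HBM_PER_GPU_GB = 288
NVFP4_INFERENCE_PFLOPS_PER_GPU = 50

aggregate_nvlink_tb_s = GPUS * NVLINK_PER_GPU_TB_S          # ~259.2, quoted as 260
gpus_per_cpu = GPUS // CPUS                                  # GPUs served by each Vera CPU
total_hbm_tb = GPUS * HBM_PER_GPU_GB / 1000                  # total HBM4 in the rack
total_inference_eflops = GPUS * NVFP4_INFERENCE_PFLOPS_PER_GPU / 1000

print(f"aggregate NVLink bandwidth: {aggregate_nvlink_tb_s:.1f} TB/s")
print(f"GPUs per CPU:               {gpus_per_cpu}")
print(f"total HBM4 capacity:        {total_hbm_tb:.1f} TB")
print(f"peak NVFP4 inference:       {total_inference_eflops:.1f} EFLOPS")
```

The 72 x 3.6 TB/s product lands at about 259 TB/s, which matches the 260 TB/s aggregate figure after rounding.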

The result? NVIDIA claims up to 10x lower cost per token compared to Blackwell. For cloud providers and enterprise AI teams, that number will drive purchasing decisions for the next two years. When your inference costs drop by as much as an order of magnitude, use cases that were economically impossible suddenly become viable: real-time agentic AI, persistent world simulations, always-on reasoning engines.

Post-Blackwell: What This Means for AI Infrastructure

The broader message from GTC 2026 is that the era of “just add more GPU cores” is over. NVIDIA is now designing complete systems, not just processors. The six-chip co-design approach means every bottleneck — from memory bandwidth to network fabric to CPU orchestration — gets addressed simultaneously. It is a fundamentally different philosophy from the Ampere and Hopper era, where the GPU was the platform and everything else was an afterthought.

The Vera CPU with its 88 Olympus cores represents NVIDIA’s bet that AI workloads are becoming more CPU-bound at the system level, even as individual operations remain GPU-accelerated. Preprocessing, tokenization, scheduling, memory management, and inter-node communication all run on the CPU, and if that CPU cannot keep up, even the fastest GPU on earth will sit idle waiting for data. That is why NVIDIA invested this heavily in custom CPU silicon rather than relying on off-the-shelf Arm designs.
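The "GPU sits idle" argument can be captured with a toy two-stage pipeline model, where the CPU stage prepares work and the GPU stage consumes it. This is a minimal sketch, not a model of any real NVIDIA system. The request rates are hypothetical, and it assumes steady state with no buffering effects.

```python
# Toy two-stage pipeline: the CPU prepares requests, the GPU processes them.
# In steady state the slower stage sets throughput, and the GPU is busy only
# for the fraction of time the CPU can keep it fed.

def pipeline_throughput(cpu_items_per_s, gpu_items_per_s):
    """Steady-state throughput is limited by the slower stage."""
    return min(cpu_items_per_s, gpu_items_per_s)


def gpu_utilization(cpu_items_per_s, gpu_items_per_s):
    """Fraction of time the GPU is busy when fed by the CPU stage."""
    if cpu_items_per_s <= 0 or gpu_items_per_s <= 0:
        raise ValueError("rates must be positive")
    return min(1.0, cpu_items_per_s / gpu_items_per_s)


# Hypothetical rates: the GPU could handle 10,000 requests/s,
# but the CPU can only prepare 4,000 requests/s.
GPU_RATE = 10_000
SLOW_CPU_RATE = 4_000
FAST_CPU_RATE = 12_000

slow_util = gpu_utilization(SLOW_CPU_RATE, GPU_RATE)
fast_util = gpu_utilization(FAST_CPU_RATE, GPU_RATE)

print(f"slow CPU: {pipeline_throughput(SLOW_CPU_RATE, GPU_RATE)} req/s, "
      f"GPU busy {slow_util:.0%}")
print(f"fast CPU: {pipeline_throughput(FAST_CPU_RATE, GPU_RATE)} req/s, "
      f"GPU busy {fast_util:.0%}")
```

With the slower CPU in this example, the GPU is busy only 40% of the time no matter how fast it is, which is the system-level bottleneck the paragraph above describes.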

Production Timeline and What Comes Next

NVIDIA announced that Rubin is already in full production as of Q1 2026, with partner system availability expected in H2 2026. That is an aggressive timeline, and it puts pressure on AMD and Intel to respond with competitive offerings in both the GPU and CPU space. The real race is no longer about peak FLOPS — it is about system-level efficiency, cost per token, and the ability to scale to millions of concurrent inference requests without the infrastructure becoming the bottleneck.

GTC 2026 marked a turning point. NVIDIA is no longer a GPU company that happens to make CPUs. It is an AI infrastructure company that co-designs every chip in the rack, and the Vera Rubin platform is the clearest expression of that vision yet. The CPU taking center stage was not a departure from NVIDIA’s identity — it was the inevitable conclusion of where AI compute demands are heading.

Navigating the rapidly evolving AI hardware landscape for your infrastructure or creative pipeline? Sean Kim brings 28+ years of tech expertise to help you make the right decisions.