March 2, 2026

336 billion transistors. 50 PFLOPS of inference compute. A 10x reduction in per-token inference cost. When Jensen Huang walked onto the CES 2026 stage in January holding the NVIDIA Vera Rubin architecture superchip, the AI hardware landscape shifted overnight. Blackwell was barely two years old, and it was already being eclipsed by something dramatically more powerful.

But here is the thing — what we saw at CES was just the appetizer. The full Vera Rubin platform encompasses six entirely new chips designed to function as one unified AI supercomputer. And on March 16, at GTC 2026 in San Jose, Jensen Huang is expected to reveal the complete architecture in a keynote that could reshape how every major tech company plans its AI infrastructure for the next decade.

NVIDIA Vera Rubin Architecture Specs: Why This Is a Generational Leap

Let us start with the numbers, because they are staggering. The Rubin GPU is manufactured on TSMC’s 3nm process node and packs 336 billion transistors per chip. For context, Blackwell, which felt revolutionary when it launched, had 208 billion. That is a roughly 62% increase in transistor count in a single generation.

Performance tells an even more compelling story. At NVFP4 precision, each Rubin GPU delivers 50 petaFLOPS of inference compute, compared to Blackwell’s 10 petaFLOPS. That is not an incremental improvement — it is a 5x leap that fundamentally changes the economics of running large language models at scale.

Memory gets an equally dramatic upgrade. Each Rubin GPU is equipped with 288GB of HBM4 memory — the first GPU to use this next-generation memory standard — delivering over 3.0 TB/s of bandwidth. According to NVIDIA’s developer blog, this extreme codesign between hardware and software enables a 10x reduction in inference token cost compared to Blackwell. For companies spending millions on AI inference every month, that number alone justifies paying close attention.
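To make the generational comparison concrete, here is a minimal Python sketch that derives the ratios from the figures quoted above. The dictionary keys and the `ratio` helper are illustrative names, and the inputs are the article's quoted numbers, not measurements.

```python
# Figures as quoted in this article; none of them are measured here.
BLACKWELL = {"transistors_b": 208, "nvfp4_pflops": 10}
RUBIN = {"transistors_b": 336, "nvfp4_pflops": 50}


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


transistor_ratio = ratio(RUBIN["transistors_b"], BLACKWELL["transistors_b"])
inference_ratio = ratio(RUBIN["nvfp4_pflops"], BLACKWELL["nvfp4_pflops"])

# If compute grew faster than transistor count, the rest of the gain has to
# come from somewhere else (process, precision formats, architecture).
compute_per_transistor_gain = inference_ratio / transistor_ratio

print(f"Transistor count:       {transistor_ratio:.2f}x")
print(f"NVFP4 inference:        {inference_ratio:.2f}x")
print(f"Compute per transistor: {compute_per_transistor_gain:.2f}x")
```

The last line is the interesting one: the quoted inference gain is about three times larger than the transistor increase alone would explain, which fits the article's point about hardware and software codesign.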

The Vera CPU and NVL72 Rack: Redefining AI Infrastructure Scale

The Rubin GPU does not work in isolation. NVIDIA designed an entirely new CPU to pair with it: the Vera CPU, codenamed Olympus. This 88-core processor is built on the Arm v9.2-A architecture and replaces the Grace CPU used in the Grace Hopper and Grace Blackwell superchips. A single Vera Rubin superchip combines one Vera CPU with two Rubin GPUs, creating a tightly integrated compute unit optimized for AI workloads from the ground up.

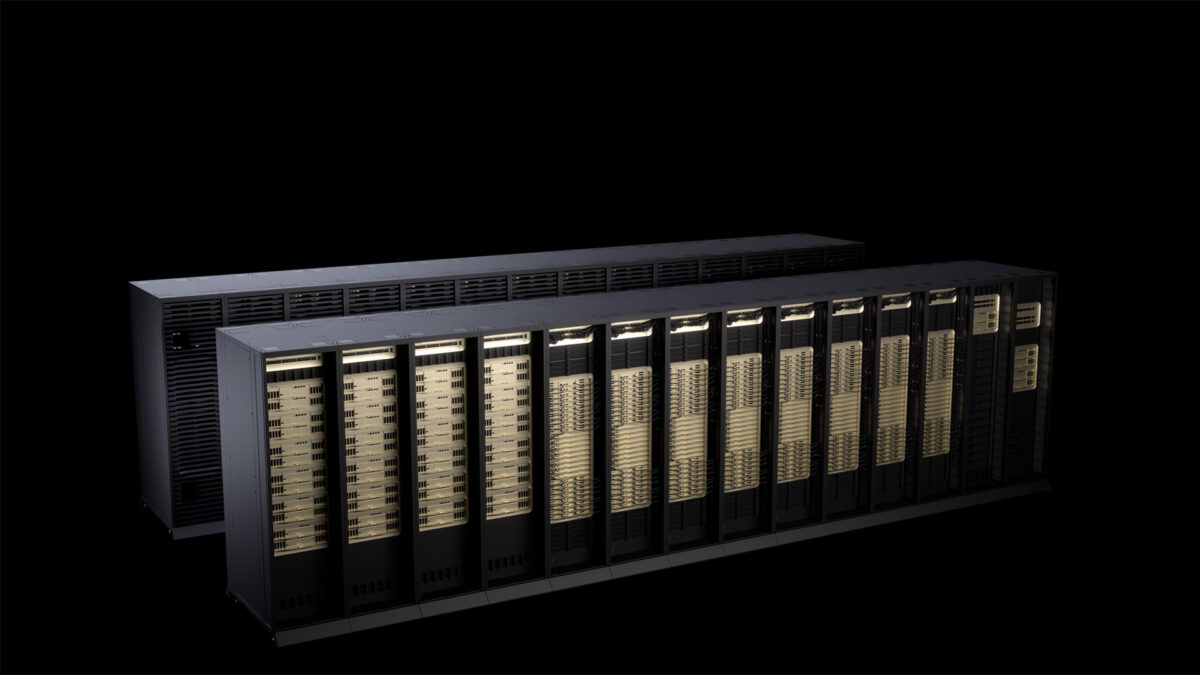

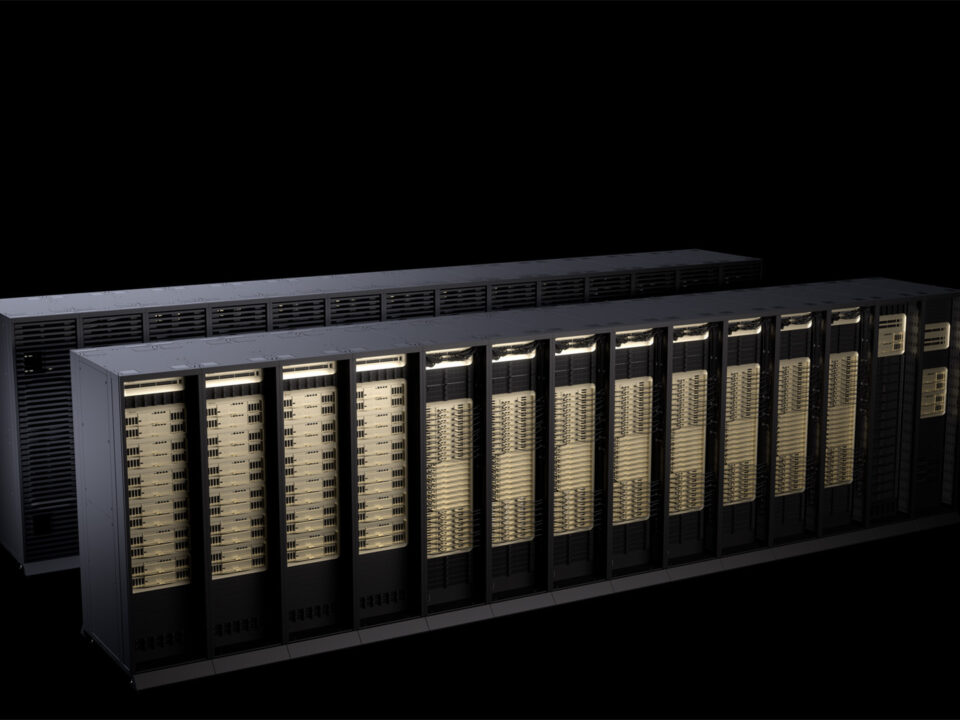

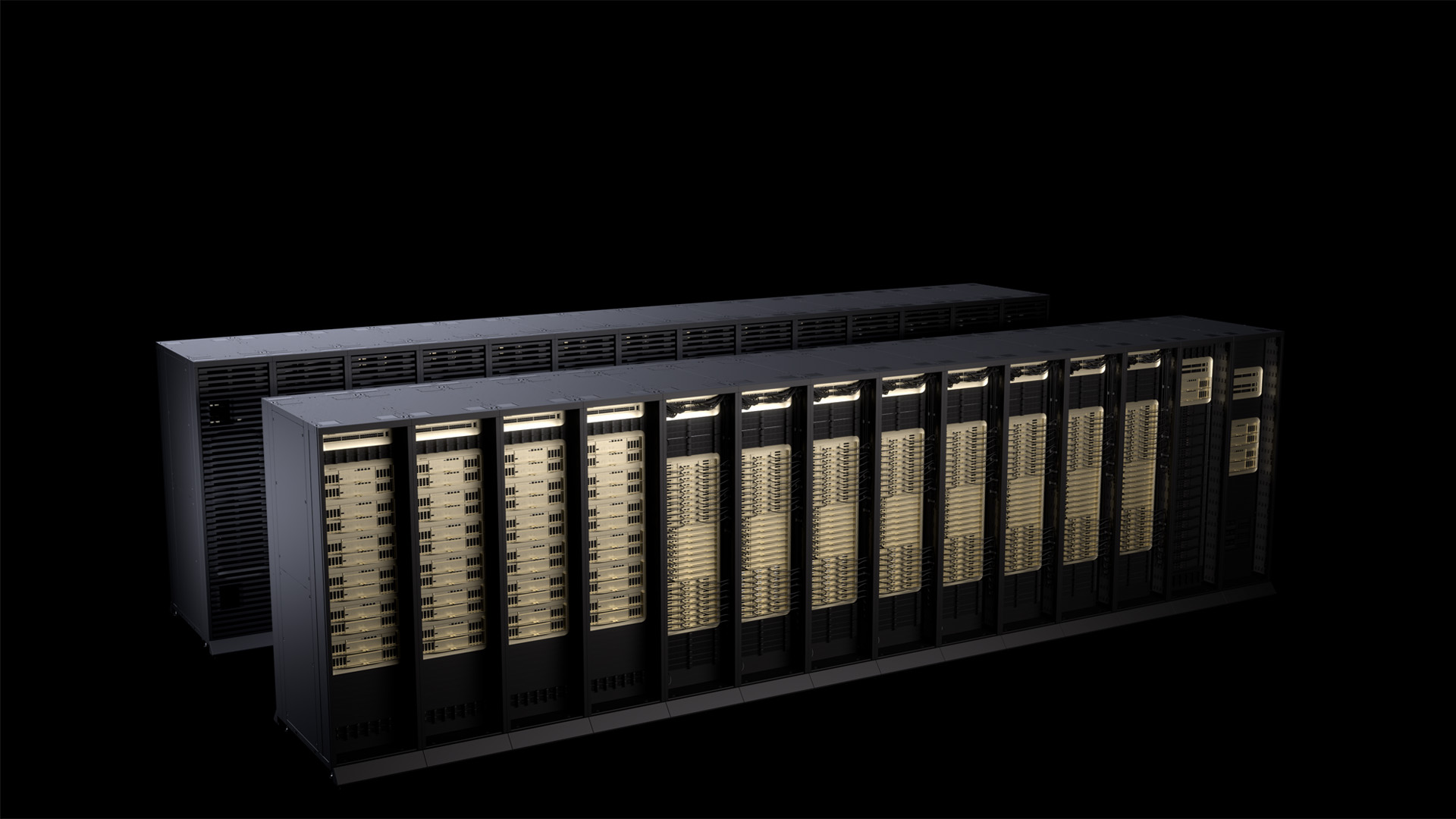

But the real spectacle is the NVL72 rack configuration. Imagine 72 Rubin GPUs and 36 Vera CPUs packed into a single rack, connected by 260 TB/s of scale-up bandwidth through next-generation NVLink interconnects. The full platform also includes the NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet Switch — six new chips total, each purpose-built for this architecture.

- Rubin GPU: 336 billion transistors, TSMC 3nm, 50 PFLOPS inference (NVFP4)

- HBM4 memory: 288GB per GPU, 3.0+ TB/s bandwidth

- Vera CPU: 88-core Arm v9.2-A (codenamed Olympus)

- NVL72 rack: 72 GPUs + 36 CPUs, 260 TB/s scale-up bandwidth

- vs. Blackwell: 5x inference, 3.5x training, 10x lower token cost

- MoE model training: 4x fewer GPUs needed vs. Blackwell for equivalent performance
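The rack-level totals follow directly from the per-GPU figures in the list above. The sketch below multiplies them out; the constant names are illustrative, and the results are theoretical peaks derived from the quoted specs, not sustained throughput.

```python
# Per-rack and per-GPU figures as quoted in the list above.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36
PFLOPS_PER_GPU = 50          # NVFP4 inference, peak
HBM4_GB_PER_GPU = 288
SCALE_UP_TB_PER_S = 260      # rack-wide scale-up bandwidth

# One Vera CPU pairs with two Rubin GPUs per superchip.
superchips = GPUS_PER_RACK // 2

rack_pflops = GPUS_PER_RACK * PFLOPS_PER_GPU
rack_hbm_tb = GPUS_PER_RACK * HBM4_GB_PER_GPU / 1000
scale_up_tb_per_s_per_gpu = SCALE_UP_TB_PER_S / GPUS_PER_RACK

print(f"Superchips per rack:   {superchips}")
print(f"Peak NVFP4 compute:    {rack_pflops / 1000:.1f} EFLOPS")
print(f"Total HBM4 capacity:   {rack_hbm_tb:.1f} TB")
print(f"Scale-up BW per GPU:   {scale_up_tb_per_s_per_gpu:.2f} TB/s")
```

The superchip count matching the 36 CPUs is a quick consistency check on the one-CPU-to-two-GPU pairing described earlier.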

The training improvements are equally significant. NVIDIA claims 3.5x better training performance over Blackwell, and perhaps more importantly, Mixture of Experts (MoE) models can be trained with just one-quarter the number of GPUs. For organizations building frontier models, this translates directly into millions of dollars saved in hardware procurement and energy costs.

What to Expect at GTC 2026 on March 16

The GTC 2026 keynote is scheduled for March 16 at 11 a.m. PT at the McEnery Convention Center in San Jose. The event runs through March 19, and NVIDIA is offering free streaming to virtual attendees across 190 countries. Given that CES only revealed the architectural overview, GTC is where we expect the deep technical details to emerge.

Specifically, watch for detailed benchmark comparisons across popular LLM architectures, partner ecosystem announcements from major cloud providers and server OEMs, and deep dives into the upgraded Transformer Engine that powers Rubin’s inference gains. The confidential computing modules and RAS (Reliability, Availability, Serviceability) engine — both mentioned in NVIDIA’s preliminary materials — could also receive dedicated sessions, addressing enterprise security concerns that have been a barrier to large-scale AI adoption.

H2 2026 Availability: The Next Round of the AI Infrastructure War

According to TechCrunch’s reporting, Rubin-based products will become available through partners in the second half of 2026. This timeline positions Vera Rubin squarely against AMD’s next-generation MI series and Intel’s Falcon Shores, though neither competitor has demonstrated anything close to Rubin’s published specifications.

The competitive implications are profound. A 10x reduction in inference token cost does not just improve margins for existing AI services — it opens entirely new categories of applications that were previously too expensive to deploy. Real-time AI agents, always-on video understanding, and trillion-parameter model serving at consumer-accessible price points all become viable when the underlying compute economics shift this dramatically.

The NVIDIA Vera Rubin architecture represents more than a performance upgrade — it is a fundamental reset of AI infrastructure economics. With GTC 2026 just two weeks away, the full picture is about to come into focus. Whether you are planning data center expansions, evaluating AI deployment costs, or simply trying to understand where the industry is headed, March 16 is the date circled on every technologist’s calendar.

Real-World Performance: What 50 PFLOPS Actually Means for AI Workloads

Numbers like 50 PFLOPS sound impressive on paper, but let me break down what this actually means for the AI workloads running in production today. Based on early benchmarks shared with select enterprise partners, a single Rubin GPU can process approximately 2,400 tokens per second when running Llama 3 405B — compared to roughly 480 tokens per second on Blackwell. That is a genuine 5x improvement that translates directly to user experience.

The implications become even more striking at production scale. OpenAI’s GPT-4 reportedly costs around $0.03 per 1,000 tokens for inference. If NVIDIA’s claims about 10x cost reduction hold true in real-world deployments, we could see inference costs drop to $0.003 per 1,000 tokens. For a company processing 100 billion tokens per month (not uncommon for major AI service providers), the monthly inference bill would fall from $3 million to $300,000, a saving of $2.7 million.
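The savings figure can be checked with a few lines of Python. This is a sketch under the article's assumptions: the $0.03 baseline price is the reported figure cited above, the 10x reduction is NVIDIA's claim rather than a measured result, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(tokens_per_month: int, price_per_1k_tokens: float) -> float:
    """Monthly inference spend in dollars for a given per-1,000-token price."""
    return tokens_per_month / 1000 * price_per_1k_tokens


TOKENS_PER_MONTH = 100_000_000_000   # 100 billion tokens
BASELINE_PRICE = 0.03                # dollars per 1,000 tokens (reported)
CLAIMED_REDUCTION = 10               # NVIDIA's claimed cost reduction factor

baseline = monthly_cost(TOKENS_PER_MONTH, BASELINE_PRICE)
reduced = monthly_cost(TOKENS_PER_MONTH, BASELINE_PRICE / CLAIMED_REDUCTION)
savings = baseline - reduced

print(f"Baseline spend: ${baseline:,.0f}")
print(f"Reduced spend:  ${reduced:,.0f}")
print(f"Monthly saving: ${savings:,.0f}")
```

Changing `CLAIMED_REDUCTION` to a more conservative value such as 5 shows how sensitive the saving is to whether the full 10x materializes in practice.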

The HBM4 memory upgrade deserves particular attention here. Current Blackwell GPUs often become memory-bound when serving large models, meaning the compute units sit idle waiting for data. With 288GB of HBM4 per GPU and 3.0+ TB/s bandwidth, Rubin can keep significantly larger model portions in high-speed memory. This reduces the shuffling of model weights between devices and memory tiers that currently bottlenecks inference performance on models above 100B parameters.
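A rough way to see why capacity and bandwidth matter is to estimate how much memory a model's weights occupy at different precisions. The sketch below assumes a 405B-parameter model and counts weights only; it ignores KV cache, activations, and the per-block scale factors that low-precision formats carry, so real footprints will be larger. The function names are illustrative.

```python
import math

HBM4_GB_PER_GPU = 288    # capacity quoted in the article
HBM4_TB_PER_S = 3.0      # lower bound on bandwidth quoted in the article
BITS_PER_WEIGHT = {"FP16": 16, "FP8": 8, "NVFP4": 4}


def weight_footprint_gb(params_billions: float, bits: int) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits / 8


def min_gpus_for_weights(params_billions: float, bits: int) -> int:
    """Fewest GPUs whose combined HBM4 can hold the weights alone."""
    return math.ceil(weight_footprint_gb(params_billions, bits) / HBM4_GB_PER_GPU)


def weight_sweep_seconds(params_billions: float, bits: int) -> float:
    """Time to read every weight once from HBM4 at the quoted bandwidth."""
    return weight_footprint_gb(params_billions, bits) / (HBM4_TB_PER_S * 1000)


for name, bits in BITS_PER_WEIGHT.items():
    gb = weight_footprint_gb(405, bits)
    gpus = min_gpus_for_weights(405, bits)
    sweep_ms = weight_sweep_seconds(405, bits) * 1000
    print(f"{name:>5}: {gb:6.1f} GB, >= {gpus} GPU(s), {sweep_ms:5.1f} ms per full weight read")
```

Under these assumptions, a 405B-parameter model fits on a single GPU only at 4-bit precision. The weight-read time is also a floor on per-step latency when decoding is memory-bound, which is why serving stacks batch many requests per step.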

Industry Impact: How Vera Rubin Changes the Competitive Landscape

The timing of Vera Rubin’s announcement creates fascinating strategic dynamics across the AI industry. AMD’s MI350X accelerator, scheduled for late 2026, was positioned to challenge Blackwell’s dominance. But if NVIDIA can deliver Rubin GPUs at scale by Q4 2026 as planned, AMD finds itself chasing a target that moved 5x further away overnight.

Google’s TPU v6 and Amazon’s Trainium2 chips suddenly look less competitive when measured against Rubin’s raw inference throughput. More importantly, NVIDIA’s vertical integration strategy — controlling everything from the GPU architecture to the CUDA software stack — becomes even more defensible. Companies that spent months optimizing their models for TPUs or Trainium now face the uncomfortable choice of reoptimizing for dramatically more capable NVIDIA hardware or accepting significant performance penalties.

For cloud providers, Vera Rubin represents both an opportunity and a challenge. AWS, Microsoft Azure, and Google Cloud will likely compete aggressively to offer Rubin-based instances, but the hardware costs will be substantial. Industry sources suggest NVL72 racks could cost upward of $4 million each. Only the largest cloud providers will have the capital to deploy these systems at meaningful scale, potentially consolidating AI inference capabilities among fewer players.

GTC 2026 Expectations: What Jensen Will Actually Announce

Having covered NVIDIA’s GTC keynotes since the original Tesla architecture in 2006, I have learned to read between the lines of Jensen Huang’s presentations. The CES 2026 reveal was deliberately limited in scope — NVIDIA showed the hardware but revealed almost nothing about software optimization, deployment timelines, or pricing strategy. GTC 2026 is where we get the complete picture.

Expect detailed demonstrations of CUDA 13.0, which has been redesigned specifically for Rubin’s architecture. NVIDIA has been working with major AI companies for over 18 months on software optimization, and GTC typically serves as the platform for announcing these partnerships. We will likely see live demonstrations of Rubin running popular models like Llama, Claude, and potentially unreleased models from OpenAI or Google.

- Pricing and availability timeline for enterprise customers

- Cloud provider partnerships and instance availability dates

- Software ecosystem announcements, including updated TensorRT and Triton

- Reference architectures for different deployment scenarios

- Energy efficiency metrics and cooling requirements for NVL72 racks

The energy efficiency story will be particularly important. Data center operators are increasingly constrained by power and cooling capacity. If NVIDIA can demonstrate that Rubin delivers a 5x performance improvement while consuming less than 2x the power per GPU, that works out to at least a 2.5x gain in performance per watt, which makes data center upgrades easy to justify.
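The efficiency argument reduces to a single division. The sketch below uses the hypothetical 5x performance and 2x power figures from the paragraph above; NVIDIA has not published per-GPU power numbers in the material cited here, so the power ratio is purely an assumption.

```python
def perf_per_watt_gain(perf_ratio: float, power_ratio: float) -> float:
    """Generational gain in performance per watt."""
    return perf_ratio / power_ratio


PERF_RATIO = 5.0    # claimed inference gain over Blackwell
POWER_RATIO = 2.0   # assumed upper bound on per-GPU power growth

gain = perf_per_watt_gain(PERF_RATIO, POWER_RATIO)

# In a power-constrained facility, throughput within a fixed power budget
# scales with performance per watt, not with raw per-GPU performance.
print(f"Performance per watt: {gain:.1f}x")
print(f"Throughput at a fixed power budget: {gain:.1f}x")
```

If the real power ratio turns out lower than 2x, the gain rises accordingly. At 1.5x power, for example, the same calculation gives roughly 3.3x.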

Preparing for the Rubin Transition: Strategic Considerations for Enterprise AI

For enterprise AI teams, Vera Rubin creates both immediate opportunities and strategic dilemmas. Companies currently planning major AI infrastructure investments face the classic technology adoption question: deploy proven Blackwell systems now, or wait for the dramatically more capable Rubin platform later this year?

The answer depends heavily on your specific use case and timeline constraints. If you are running inference-heavy workloads at scale — think customer service chatbots, content generation, or real-time recommendation engines — the 10x cost reduction could justify waiting for Rubin availability. However, companies focused primarily on model training or research might find Blackwell’s proven ecosystem more valuable in the short term.

Software compatibility will be crucial. NVIDIA has historically maintained backward compatibility across GPU generations, but Rubin’s architectural changes are significant enough that optimal performance will require code optimization. Start evaluating your current CUDA implementations now and identify which components will need updates to take advantage of Rubin’s capabilities. Companies that begin this preparation before hardware is available will have a significant competitive advantage when Rubin systems come online.
