July 29, 2025

Here’s a number that should make every ML engineer reconsider their cloud bill: 100 inference requests, 2 seconds each, $0.06 total. That same workload on AWS? $1.10, because you’re paying for the full hour whether the GPU is working or gathering dust. Modal Labs GPU cloud is built around one radical idea: you should never pay for an idle GPU.

What Makes Modal Labs GPU Cloud Different

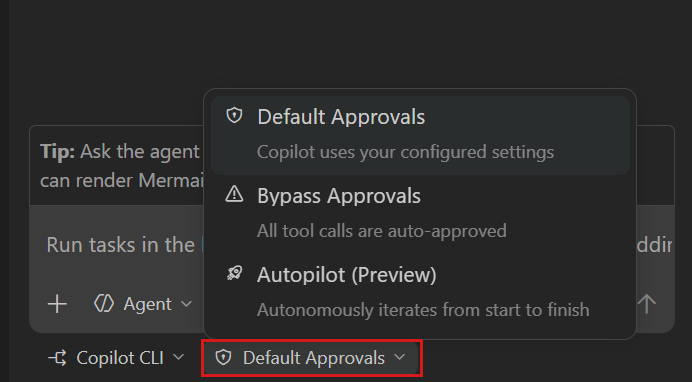

Modal isn’t just another GPU cloud provider slapping a dashboard on rented NVIDIA hardware. It’s a serverless compute platform designed from the ground up for AI workloads — inference, training, fine-tuning, and batch processing — with a Python-native SDK that eliminates the YAML-and-Dockerfile circus that plagues traditional ML deployment.

The core proposition is deceptively simple: write a Python function, decorate it with @app.function(gpu="...") to specify the GPU you need, and deploy. Modal handles container orchestration, GPU provisioning, autoscaling, and teardown. No Kubernetes manifests. No Terraform configs. No 47-step CI/CD pipeline to serve a single model.

What separates Modal from competitors is sub-second cold starts. GPU containers spin up in under a second — a figure that matters enormously when your autoscaler needs to handle traffic spikes without pre-warming instances. Most serverless GPU platforms quote cold starts of 10–30 seconds; Modal’s architecture, built on Oracle Cloud Infrastructure bare metal instances, cuts that by an order of magnitude.

Modal Labs GPU Cloud Pricing: The Per-Second Advantage

Modal’s pricing model is the real story here. Every GPU, from the budget T4 to the flagship B200, bills per second. Here’s the current lineup:

- NVIDIA T4: $0.000164/sec (~$0.59/hr)

- NVIDIA L4: $0.000222/sec (~$0.80/hr)

- NVIDIA A10G: $0.000306/sec (~$1.10/hr)

- NVIDIA L40S: $0.000542/sec (~$1.95/hr)

- NVIDIA A100 40GB: $0.000583/sec (~$2.10/hr)

- NVIDIA A100 80GB: $0.000694/sec (~$2.50/hr)

- NVIDIA H100: $0.001097/sec (~$3.95/hr)

- NVIDIA H200: $0.001261/sec (~$4.54/hr)

- NVIDIA B200: $0.001736/sec (~$6.25/hr)

The Starter plan is free with $30 in monthly credits, enough to run roughly 50 hours of T4 inference or about 7.5 hours of H100 compute without spending a cent. The Team plan at $250/month includes $100 in credits and unlocks 1,000 concurrent containers with 50 GPU concurrency slots.
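The hourly figures and free-credit estimates are plain arithmetic on the per-second rates. Here is a minimal sketch using only rates quoted in this article; the dictionary and helper names are illustrative and not part of any Modal API.

```python
# Per-second rates as quoted in the pricing list above (USD).
RATES_PER_SEC = {
    "T4": 0.000164,
    "A10G": 0.000306,
    "H100": 0.001097,
}


def hourly_rate(gpu: str) -> float:
    """Convert a quoted per-second rate into an hourly rate."""
    return RATES_PER_SEC[gpu] * 3600


def hours_from_credits(gpu: str, credits_usd: float) -> float:
    """GPU-hours that a credit balance buys at the quoted rate."""
    return credits_usd / hourly_rate(gpu)


for gpu in RATES_PER_SEC:
    print(
        f"{gpu}: ${hourly_rate(gpu):.2f}/hr, "
        f"{hours_from_credits(gpu, 30):.1f} h on $30 of credits"
    )
```

At these rates, $30 of credits works out to roughly 51 T4-hours, 27 A10G-hours, or 7.6 H100-hours, before any CPU or memory charges that may apply on top of the GPU rate.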

Real Cost Comparison: Modal Labs GPU Cloud vs AWS vs Azure vs Lambda

Let’s break down what the same NVIDIA A10G workload costs across providers:

- Modal: $1.10/hr (per-second billing — pay only for what you use)

- AWS G5.xlarge On-Demand: $1.10/hr (billed for as long as the instance is running, idle or not)

- AWS G5.xlarge Spot: ~$0.43/hr (can be interrupted)

- AWS 1-Year Reserved: ~$0.70/hr (upfront commitment)

- Lambda Labs: $0.75/hr (on-demand, no serverless)

- Azure NVads A10 v5 Spot: ~$0.60/hr (can be interrupted)

- Azure On-Demand: $3.20/hr

On paper, Modal’s hourly rate matches AWS on-demand. But the per-second billing changes the math entirely for inference workloads. If your model processes requests in bursts (200ms of compute here, 3 seconds there), you’re paying for those exact seconds, not for an instance that sits provisioned and idle between requests. According to Modal’s own cost analysis, a workload handling 100 requests at 2 seconds each costs $0.06 on Modal (200 GPU-seconds at $0.000306/sec) versus $1.10 for an A10G instance kept up for the hour on AWS. That’s a 94.5% cost reduction for bursty inference patterns.
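The bursty-workload comparison can be reproduced in a few lines. This is a rough sketch under stated assumptions: the always-on instance is kept up for exactly one hour, and the per-second side ignores container startup time and any idle time before a container scales down. The function names are illustrative only.

```python
A10G_PER_SEC = 0.000306  # per-second A10G rate quoted above (USD)
A10G_HOURLY = 1.10       # on-demand A10G hourly rate quoted above (USD)


def per_second_cost(requests: int, seconds_each: float,
                    rate: float = A10G_PER_SEC) -> float:
    """Cost when only active compute seconds are billed."""
    return requests * seconds_each * rate


def always_on_cost(hours_up: float, rate: float = A10G_HOURLY) -> float:
    """Cost when an instance stays up for the whole window."""
    return hours_up * rate


burst = per_second_cost(100, 2.0)   # 200 GPU-seconds of real work
always_on = always_on_cost(1.0)     # assumption: instance kept up for one hour
savings = 1 - burst / always_on

print(f"per-second: ${burst:.4f}")
print(f"always-on:  ${always_on:.2f}")
print(f"savings:    {savings:.1%}")
```

Unrounded, the per-second cost is $0.0612 and the savings come to about 94.4%; the 94.5% figure follows from rounding the cost to $0.06 first. The gap narrows quickly as the GPU spends more of the hour busy.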

Even against AWS Spot instances (which can be reclaimed mid-inference), Modal’s serverless model provides reliability without the cost overhead of reserved instances. As Modal’s analysis notes: “Unless your GPU runs consistently for three years, serverless beats reserved pricing.”

The Developer Experience That Actually Ships

Modal Labs GPU cloud targets a specific pain point: the gap between writing ML code locally and running it in production. Their Python SDK lets you define everything — GPU requirements, container images, environment variables, cron schedules — in pure Python. No YAML. No Dockerfiles (unless you want them). No separate infrastructure-as-code repo.

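```python
import modal

app = modal.App("inference-service")

# Container image with the ML dependencies installed.
image = modal.Image.debian_slim().pip_install("torch", "transformers")


@app.function(gpu="A10G", image=image)
def predict(text: str) -> str:
    # Imported here so the dependency resolves inside the remote container.
    from transformers import pipeline

    classifier = pipeline("sentiment-analysis")
    # The pipeline returns a list of {"label", "score"} dicts; return the label.
    return classifier(text)[0]["label"]
```

That’s a complete, deployable GPU function. Run modal deploy and it’s live with autoscaling and scale-to-zero built in; exposing it over HTTPS takes one additional web endpoint decorator. The feedback loop from local development to production deployment shrinks from hours to seconds.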

For teams running batch processing, model training, or scheduled inference jobs, Modal supports cron-scheduled functions, parallel map operations across thousands of containers, and shared volumes for model weights and datasets. It’s the kind of platform that makes you wonder why you ever wrote a Kubernetes deployment manifest by hand.

Modal vs RunPod vs Beam: Where Each Wins

Modal isn’t the only serverless GPU platform in the market. Here’s how it stacks up against the two strongest alternatives:

Modal Labs GPU cloud wins for: Python-native developer experience, sub-second cold starts, infrastructure-as-code approach, complex orchestration (multi-step pipelines, fan-out/fan-in patterns). Best for teams that want maximum control with minimum DevOps.

RunPod wins for: Lowest raw GPU pricing (H100 at $2.69/hr vs Modal’s $3.95/hr), Quick Deploy templates for popular models, persistent volumes, and steady-state workloads. Cold starts can hit under 200ms for 48% of requests, though tail latency is higher. Better for teams that need simple, cost-optimized inference endpoints.

Beam wins for: Simplicity and quick prototyping. Good for solo developers shipping their first inference endpoint. Limited at scale compared to Modal’s orchestration capabilities.

The competitive landscape is telling. Modal closed a major funding round, RunPod raised $20 million for expansion, and Lambda Labs pivoted away from serverless entirely to focus on traditional VM instances. The market is voting with capital: serverless GPU is the future, and the question is whether you want Modal’s code-first approach or RunPod’s deploy-first simplicity.

Who Should (and Shouldn’t) Use Modal Labs GPU Cloud

Use Modal if: You’re a Python-heavy team running inference workloads with variable traffic. You want to eliminate GPU idle costs. You value developer experience and don’t want to manage Kubernetes. You need complex orchestration — multi-step ML pipelines, batch jobs, scheduled training runs.

Skip Modal if: You need the absolute lowest per-hour GPU rate (RunPod is cheaper for sustained workloads). You’re running 24/7 training jobs where reserved instances make more economic sense. You need multi-language support — Modal is Python-first, with TypeScript/Go SDKs still in alpha.
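Where the line between "use" and "skip" falls depends mostly on utilization. The sketch below estimates the break-even point using the two H100 rates quoted in this article. It assumes the cheaper instance is billed around the clock and the per-second platform is billed only while busy; the helper names are illustrative only.

```python
MODAL_H100_HOURLY = 3.95      # per-second billing, quoted as an hourly equivalent
ALWAYS_ON_H100_HOURLY = 2.69  # RunPod H100 rate quoted above, assumed billed 24/7


def break_even_utilization(serverless_hourly: float,
                           always_on_hourly: float) -> float:
    """Fraction of time the GPU must be busy before always-on is cheaper."""
    return always_on_hourly / serverless_hourly


def monthly_cost(utilization: float, hours: float = 730.0) -> tuple[float, float]:
    """Return (serverless, always_on) monthly cost at a given utilization."""
    serverless = MODAL_H100_HOURLY * hours * utilization
    always_on = ALWAYS_ON_H100_HOURLY * hours
    return serverless, always_on


threshold = break_even_utilization(MODAL_H100_HOURLY, ALWAYS_ON_H100_HOURLY)
print(f"break-even utilization: {threshold:.0%}")

for u in (0.10, 0.50, 0.90):
    s, a = monthly_cost(u)
    print(f"{u:.0%} busy: serverless ${s:,.0f} vs always-on ${a:,.0f}")
```

With these two rates the break-even sits at about 68% utilization: below that, paying only for busy seconds wins even at the higher rate, and above it the cheaper always-on GPU comes out ahead.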

The bottom line: for teams spending more than a few hundred dollars monthly on cloud GPUs for inference, the per-second billing model alone could cut their bill in half. And with sub-second cold starts, the performance trade-off that typically comes with serverless GPU platforms barely exists here. Modal Labs GPU cloud isn’t just a cheaper option — it’s a fundamentally different model for how AI infrastructure should work.

Building AI infrastructure or need help optimizing your cloud GPU costs? Sean Kim has 28+ years of experience in tech and audio engineering.
