Apple Mac Mini M5 Launch: $599 Desktop Packs Pro-Level AI Performance

October 3, 2025

PreSonus Studio One 7: AI Stem Separation, Mix Engine FX, and 30+ Features That Redefine the DAW Game

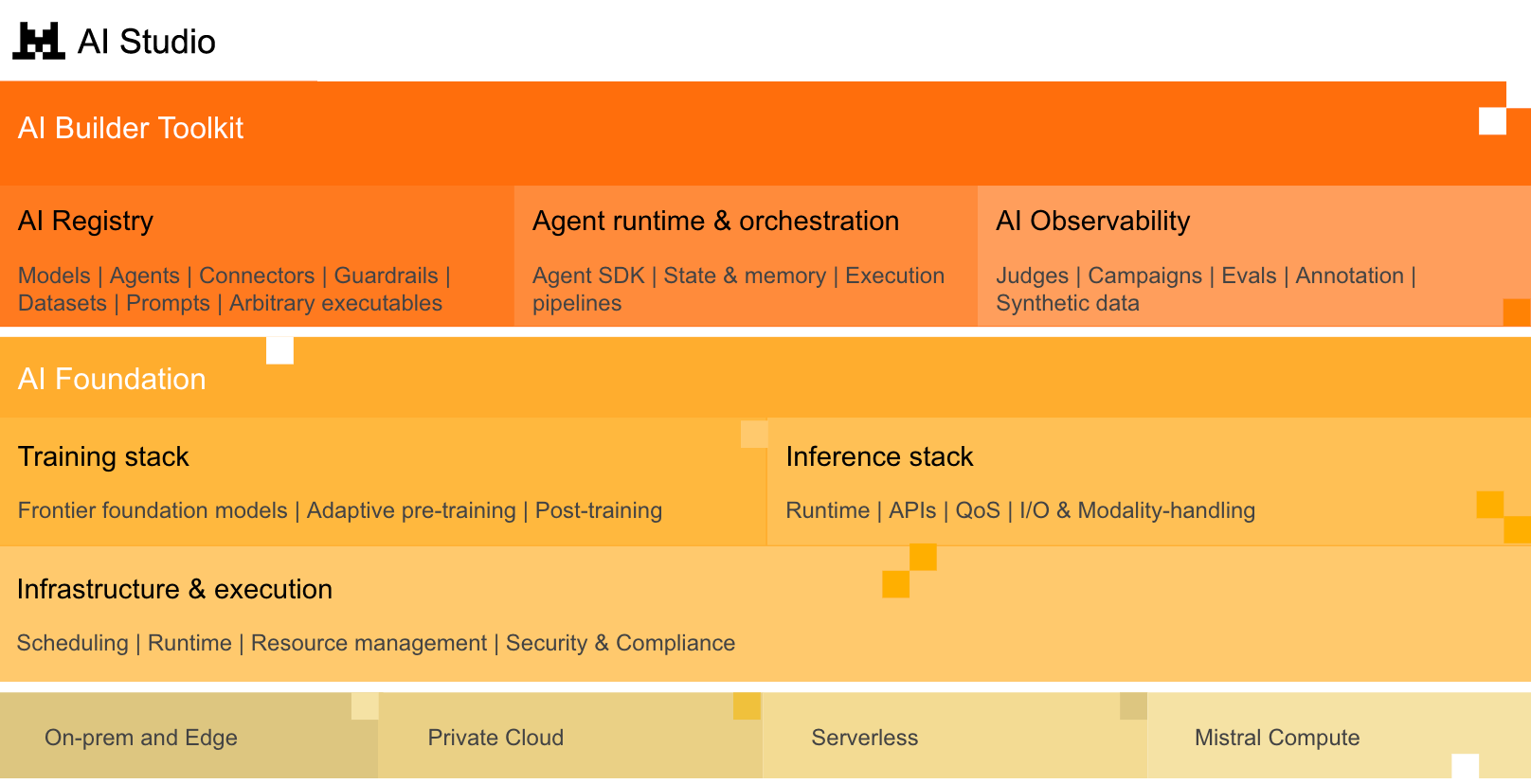

Mistral AI Studio is finally here, and it’s not just another API wrapper. On October 24, 2025, the French AI company unveiled a production-grade platform that replaces its earlier La Plateforme service and is built on three core pillars: Observability, Agent Runtime, and AI Registry. This is Mistral’s clearest signal yet that it is no longer just a model provider: it is building the infrastructure layer for enterprise AI.

If you’ve been watching the enterprise AI platform space, you know the stakes. Google has Vertex AI. AWS has Bedrock. Microsoft has Azure AI. Now Mistral AI Studio enters the ring with a distinctly European proposition: data sovereignty, regulatory compliance, and on-premises deployment as first-class features, not afterthoughts. Let’s break down what this platform actually delivers and why it matters for teams deploying AI agents in production.

What Is Mistral AI Studio? From API Service to Production Platform

Mistral AI launched La Plateforme in late 2023 as a straightforward API service for accessing its language models. It worked well for prototyping and development, but enterprise customers quickly hit a wall: deploying AI agents to production requires far more than API calls. You need monitoring, fault tolerance, version control, access management, and audit trails.

According to Mistral’s official announcement, AI Studio provides what the company calls a “production fabric” — a unified environment that connects creation, observability, and governance. The platform supports both proprietary models (like Mistral Large) and open-source models (like Mistral 7B and Mixtral), giving teams flexibility in choosing the right model for each use case.

Deployment options span four tiers: fully hosted, hybrid cloud, self-deployment, and on-premises. As VentureBeat reports, this flexibility is a direct response to European enterprise requirements around data residency and regulatory compliance — something US-based platforms have historically treated as a secondary concern.

The Three Pillars of Mistral AI Studio

1. Observability — Opening the AI Agent Black Box

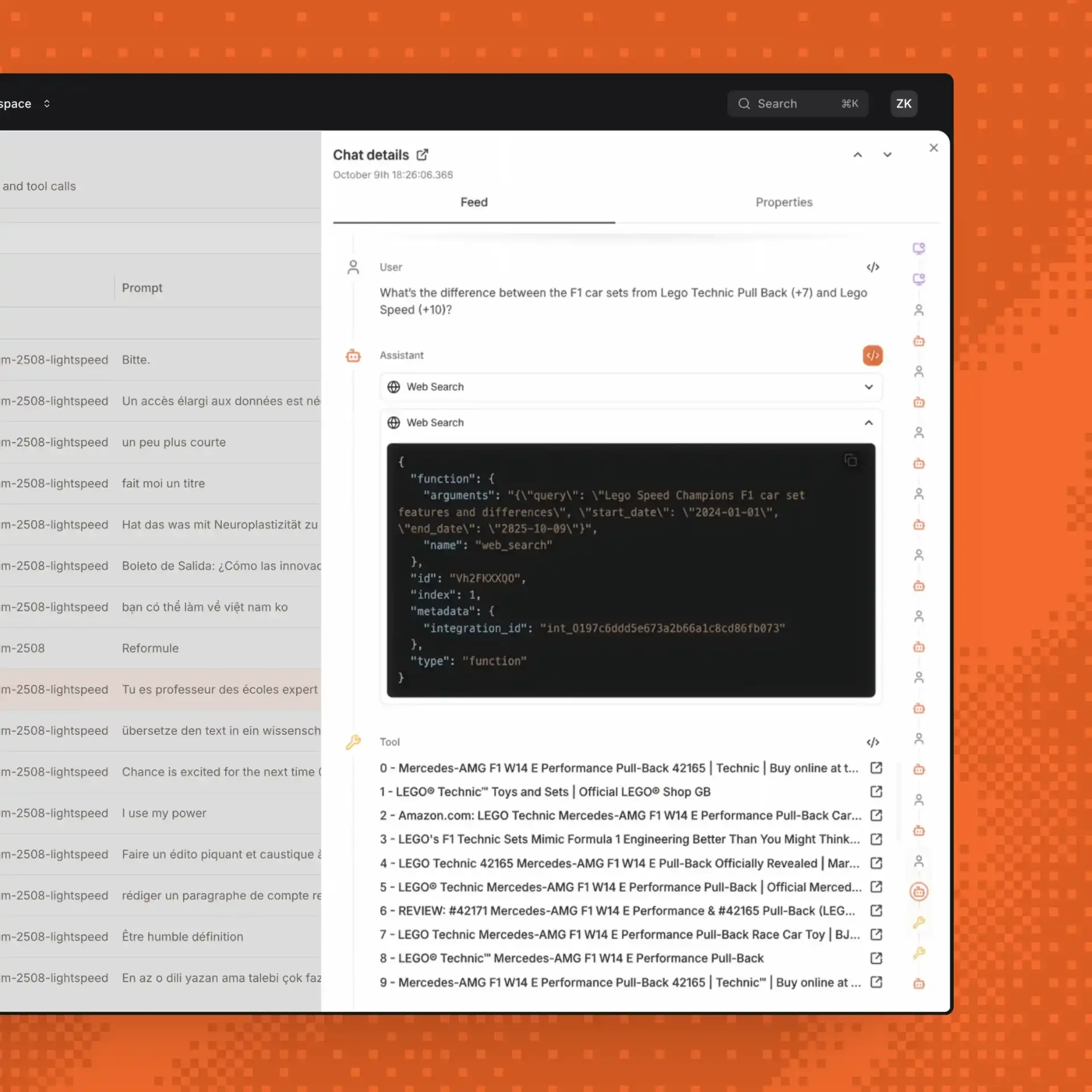

The biggest pain point in production AI isn’t building agents — it’s understanding what they’re doing once deployed. Ask any ML engineering team running AI agents at scale, and they’ll tell you the same story: the agent works perfectly in development, passes all tests, and then behaves unpredictably in production. Mistral AI Studio’s Observability Explorer tackles this head-on with a suite of monitoring and analysis tools built directly into the platform.

The Observability Explorer lets teams filter and inspect all agent traffic in real time. But it goes well beyond simple logging or basic request tracking. The system automatically detects regressions — performance drops that occur after model updates, prompt changes, or configuration modifications. This is critical because AI systems can degrade silently in ways that traditional application monitoring tools like Datadog or New Relic miss entirely. A model that starts hallucinating 5% more frequently won’t trigger a CPU alert, but it will erode user trust quickly.
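To make the idea of a silent regression concrete, here is a minimal sketch of one way such a check could work. It is not Mistral’s implementation: the function name `flag_rate_regression`, the boolean "flagged" inputs, and the z-score threshold are all assumptions chosen for illustration. It compares the share of flagged responses (for example, suspected hallucinations) in a baseline window against a current window using a pooled two-proportion z-test.

```python
import math


def flag_rate_regression(baseline_flags, current_flags, z_threshold=2.0):
    """Compare failure-flag rates between two traffic windows.

    Each argument is a list of booleans; True means an evaluator flagged
    that response. Returns (is_regression, z_score). All names here are
    hypothetical and not part of any Mistral API.
    """
    n1, n2 = len(baseline_flags), len(current_flags)
    if n1 == 0 or n2 == 0:
        raise ValueError("both windows need at least one sample")

    p1 = sum(baseline_flags) / n1
    p2 = sum(current_flags) / n2

    # Pooled two-proportion z-test: is the current rate significantly higher?
    pooled = (sum(baseline_flags) + sum(current_flags)) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        # No flags at all (or all flagged) in both windows: nothing to compare.
        return False, 0.0

    z = (p2 - p1) / se
    return z > z_threshold, z


# Example: 2% flagged before a prompt change, 7% flagged after it.
baseline = [True] * 20 + [False] * 980
current = [True] * 70 + [False] * 930
is_regression, z = flag_rate_regression(baseline, current)
print(is_regression, round(z, 2))
```

A check like this runs on output quality signals rather than CPU or latency, which is why it can catch degradation that infrastructure monitoring never sees.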

Perhaps most valuable for iterative improvement, the observability layer can automatically build datasets from production traffic. Instead of manually curating evaluation sets, a process that can take ML teams weeks of labeling and validation, teams can extract patterns from real-world usage to create fine-tuning data or benchmark suites. This closes the feedback loop between production performance and model improvement in a way that previously required stitching together multiple third-party tools like LangSmith, Langfuse, or Weights & Biases, each with its own data formats and integration overhead.
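As a rough illustration of turning production traffic into an evaluation set, the sketch below filters logged traces by a feedback score and removes duplicate prompts. The `Trace` record, its `user_rating` field, and the output format are assumptions made for this example; the article does not describe the schema Mistral AI Studio actually uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    """Hypothetical record of one logged agent interaction."""
    prompt: str
    response: str
    user_rating: int  # assumed 1-5 feedback score


def build_eval_set(traces, min_rating=4):
    """Keep well-rated traces, deduplicated by whitespace/case-normalized prompt."""
    seen = set()
    dataset = []
    for trace in traces:
        key = " ".join(trace.prompt.lower().split())
        if trace.user_rating >= min_rating and key not in seen:
            seen.add(key)
            dataset.append({"input": trace.prompt, "expected": trace.response})
    return dataset


traces = [
    Trace("Summarize invoice 42", "Invoice 42 totals 300 EUR.", 5),
    Trace("summarize   invoice 42", "Invoice 42: 300 EUR.", 4),  # duplicate prompt
    Trace("Classify this ticket", "Billing", 2),                 # rated too low
    Trace("Route this email", "Forward to support", 4),
]
eval_set = build_eval_set(traces)
print(len(eval_set))
```

In a real pipeline the rating would likely come from automated evaluators as well as user feedback, but the shape of the loop (filter, normalize, deduplicate, export) stays the same.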

2. Agent Runtime — Fault-Tolerant Execution Built on Temporal

Under the hood, Mistral AI Studio’s Agent Runtime is built on Temporal, the distributed workflow engine used by companies like Netflix and Stripe for mission-critical operations. This is a significant architectural choice that reveals how seriously Mistral is treating production reliability. Temporal grew out of Cadence, the workflow engine its creators originally built at Uber, and it has been battle-tested at large scale. Choosing it as the foundation for AI agent execution signals enterprise-grade ambitions.

Temporal provides two capabilities that are essential for production AI agents: fault tolerance and stateful execution. When an AI agent performs a complex multi-step task — say, processing a document through extraction, classification, summarization, and routing — and fails at step three, a Temporal-based runtime can resume from that exact point rather than starting over. For enterprise workflows where a single execution might involve dozens of API calls, external service integrations, and branching decisions, this resilience is non-negotiable. Without it, teams are forced to build their own retry logic, state management, and error recovery — infrastructure work that distracts from the actual AI development.

- Execution Graph Capture: Every agent execution path is recorded as a graph, enabling detailed debugging, auditing, and knowledge sharing across teams.

- Stateful Long-Running Agents: Agents that operate over hours or days maintain their state reliably, eliminating the fragility of stateless architectures.

- Shareable Execution History: Team members can replay, inspect, and share specific execution traces, making collaborative debugging practical.
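The resume-from-failure behavior described above can be sketched in a few lines of plain Python. This is deliberately not the Temporal SDK and not Mistral’s runtime: `run_workflow`, the in-memory `checkpoint` dict (standing in for durable storage), and the four step functions are all hypothetical, chosen to mirror the extraction, classification, summarization, and routing example.

```python
def run_workflow(steps, payload, checkpoint):
    """Run named steps in order, recording each result in `checkpoint`.

    Steps whose results are already recorded are skipped on a re-run,
    so a retry resumes at the first step that has not completed.
    """
    value = payload
    for name, fn in steps:
        if name in checkpoint:
            value = checkpoint[name]  # completed earlier: reuse the stored result
            continue
        value = fn(value)             # may raise; earlier checkpoints stay intact
        checkpoint[name] = value
    return value


calls = []                      # records which steps actually executed
attempts = {"summarize": 0}     # lets the third step fail once, then succeed


def extract(doc):
    calls.append("extract")
    return doc.strip()


def classify(text):
    calls.append("classify")
    return {"text": text, "label": "invoice"}


def summarize(item):
    calls.append("summarize")
    attempts["summarize"] += 1
    if attempts["summarize"] == 1:
        raise RuntimeError("transient failure in step three")
    return {**item, "summary": item["text"][:10]}


def route(item):
    calls.append("route")
    return f"queue:{item['label']}"


STEPS = [("extract", extract), ("classify", classify),
         ("summarize", summarize), ("route", route)]

checkpoint = {}
try:
    run_workflow(STEPS, "  Invoice 42 for ACME  ", checkpoint)
except RuntimeError:
    pass  # first run fails at step three; steps one and two are checkpointed

result = run_workflow(STEPS, "  Invoice 42 for ACME  ", checkpoint)
print(result, calls)
```

On the second run, `extract` and `classify` are not executed again. A durable execution engine such as Temporal provides this guarantee across process crashes and machine restarts, which an in-memory dict cannot.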

3. AI Registry — Governance as a Platform Feature

According to a detailed breakdown by AGIyes, the AI Registry serves as a centralized catalog for all AI assets — models, prompts, agent configurations, and workflows. Think of it as a Git-like version control system specifically designed for AI artifacts, combined with enterprise-grade access controls and promotion gates. In practice, this means every prompt template, every model configuration, and every agent workflow lives in one governed repository rather than scattered across team members’ local environments or random cloud storage buckets.

The promotion gate concept is particularly relevant for regulated industries. Before any AI asset moves from development to staging to production, it must pass through defined verification steps — automated tests, human reviews, compliance checks, or any combination thereof. Every change is tracked with a complete audit trail: who modified what, when, and why. For financial services firms that need to demonstrate model governance to regulators, or healthcare organizations bound by HIPAA requirements, this kind of built-in compliance infrastructure can save months of custom development. Without a centralized registry, enterprises typically end up building ad-hoc governance layers that are fragile, inconsistent, and expensive to maintain.

- Version Control: Full change history for every AI asset with rollback capability.

- Promotion Gates: Enforced verification stages before production deployment.

- Role-Based Access Control: Granular permissions for reading, modifying, and deploying AI assets.

- Audit Trails: Complete records satisfying regulatory compliance requirements.
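A promotion gate with an audit trail can be modeled very simply. The sketch below is an illustration under stated assumptions, not the AI Registry’s API: the `Asset` class, the `promote` function, the stage names, and the check callables are all invented for this example. Each promotion attempt is logged with who, when, and why, whether or not it succeeds.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

STAGES = ["development", "staging", "production"]  # assumed stage names


@dataclass
class Asset:
    """Hypothetical registry entry for a prompt, model config, or workflow."""
    name: str
    version: int
    stage: str = "development"
    audit_log: list = field(default_factory=list)


def promote(asset, actor, reason, checks):
    """Advance `asset` one stage if every check passes; log the attempt either way.

    `checks` maps a check name to a callable taking the asset and
    returning True (pass) or False (fail).
    """
    current = STAGES.index(asset.stage)
    if current == len(STAGES) - 1:
        raise ValueError("asset is already in production")
    target = STAGES[current + 1]

    failed = [name for name, check in checks.items() if not check(asset)]
    asset.audit_log.append({
        "who": actor,
        "when": datetime.now(timezone.utc).isoformat(),
        "why": reason,
        "from": asset.stage,
        "to": target,
        "failed_checks": failed,
        "approved": not failed,
    })
    if not failed:
        asset.stage = target
    return not failed


prompt = Asset("support-triage-prompt", version=3)
promote(prompt, "alice", "ship v3",
        {"tests_pass": lambda a: True, "human_review": lambda a: False})
promote(prompt, "alice", "review completed",
        {"tests_pass": lambda a: True, "human_review": lambda a: True})
print(prompt.stage, len(prompt.audit_log))
```

Logging rejected attempts alongside approved ones is a deliberate choice here: for regulated teams, the record of what was blocked and why is often as important as the record of what shipped.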

The Competitive Landscape: Why This Matters Now

Mistral AI Studio’s launch timing is strategic. The enterprise AI platform market is entering a critical consolidation phase in late 2025. Google continues expanding Vertex AI with agent-centric features. AWS Bedrock has added agent builder capabilities. Microsoft’s Azure AI is pushing Copilot Studio for enterprise adoption.

Mistral’s differentiation is clear and intentional. As Dataconomy’s analysis highlights, being a European company isn’t just a geographic detail — it’s a competitive advantage. GDPR compliance, data sovereignty guarantees, and the option to run entirely on-premises address concerns that many European enterprises have about US-based cloud platforms.

There’s also a compelling community-to-enterprise pipeline at play. Mistral built significant developer mindshare through its open-source models — Mistral 7B and Mixtral became go-to choices for teams wanting powerful models without vendor lock-in. AI Studio converts that community adoption into enterprise revenue, following a playbook similar to what Red Hat achieved with Linux or what Elastic did with Elasticsearch. Developers who prototyped with Mistral’s open-source models can now scale those projects to production within the same ecosystem, reducing migration friction significantly.

The timing also coincides with growing enterprise frustration around AI agent reliability. A recent wave of production AI failures — chatbots going off-script, automated workflows producing incorrect outputs, agent loops consuming expensive API credits — has made organizations acutely aware that model quality alone doesn’t guarantee production success. You need the surrounding infrastructure: monitoring, fault tolerance, governance, and reproducibility. This is exactly what Mistral AI Studio promises to deliver as an integrated package.

Realistic Challenges and What to Watch

It’s worth noting the practical limitations. Mistral AI Studio launched in private beta, meaning production-scale performance is still unproven. Google, AWS, and Microsoft have years of enterprise infrastructure experience and global data center networks that Mistral cannot match overnight.

The observability and agent runtime spaces also have mature incumbents. Tools like LangSmith, Langfuse, and CrewAI have built deep functionality in their respective niches. Whether platform-level integration, meaning the convenience of having everything in one place, can outweigh the depth and flexibility of specialized tools is a question the market will answer over the coming months. History suggests that integrated platforms tend to win in enterprise contexts (see: AWS, Azure, GCP absorbing specialized tools), but that consolidation takes time.

There’s also the question of ecosystem maturity. Google Vertex AI and AWS Bedrock have extensive third-party integrations, marketplace offerings, and certified partner networks. Mistral AI Studio will need to build this ecosystem layer to compete effectively for large enterprise deals where integration capabilities often matter more than raw platform features.

But one thing is increasingly clear: as AI moves from experimentation to production in 2025, the infrastructure for deploying, monitoring, and governing AI agents is becoming just as important as the models themselves. Mistral AI Studio’s three-pillar approach — observability, runtime, and registry — represents a coherent and well-architected answer to this challenge. For organizations where data sovereignty and regulatory compliance are dealbreakers, a European-native platform may be exactly the alternative they’ve been waiting for. The private beta phase will be the proving ground — watch for early adopter case studies from European financial institutions and healthcare providers, which are the most likely first movers.
