TAL-J8X Review: 7 Reasons This $69 Plugin Outshines the $2,000 Roland JX-8P Original

April 2, 2026

AirPods Max 2 vs Sony WH-1000XM6: 7 Critical Differences That Decide Which $500 Headphone Wins in 2026

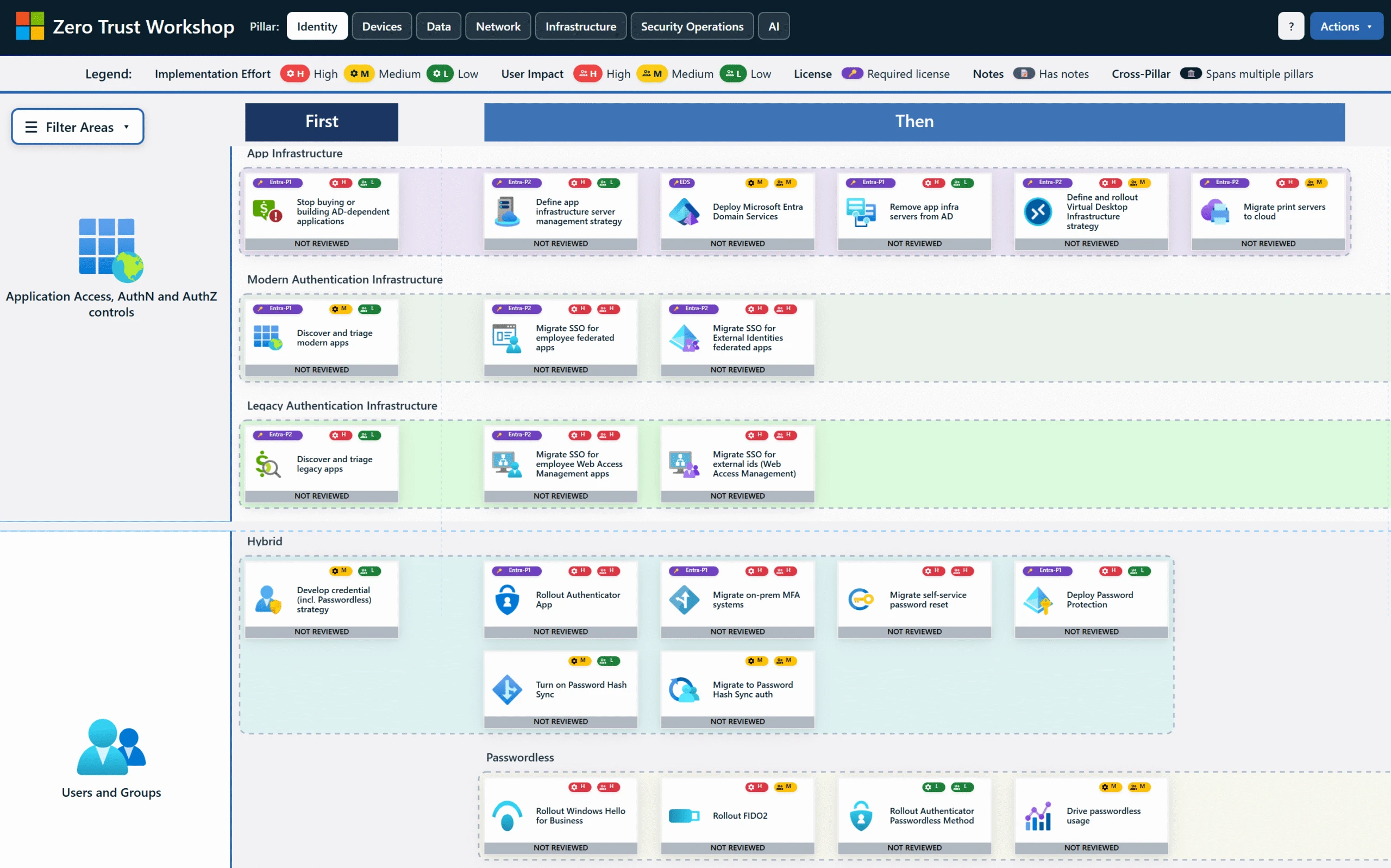

April 2, 2026700 security controls. 116 logical groups. 33 functional swim lanes. Those are the numbers Microsoft Zero Trust for AI dropped at RSAC 2026 — and if you’re deploying AI agents without a security framework this comprehensive, you’re essentially leaving the front door of your enterprise wide open. Right now, organizations worldwide are shipping AI agents into production environments, but until this announcement, there was no industry-standard framework specifically designed to secure them. ZT4AI changes that.

Why Microsoft Zero Trust for AI Matters Right Now

The AI agent revolution is no longer theoretical. In 2026, enterprises are deploying AI agents that autonomously write code, analyze customer data, execute cross-system workflows, and make decisions that directly impact business operations. Recent industry surveys suggest that over 78% of Fortune 500 companies have deployed some form of AI agent in production. The problem? Traditional security frameworks were designed for a world where humans log in, click around, and log out. AI agents don’t follow that pattern — not even close.

They call APIs without human oversight. They communicate with other agents across system boundaries. They process thousands of data transactions in seconds. And the old perimeter-based security model — firewalls, VPNs, network segmentation — simply wasn’t built for autonomous digital entities that operate at machine speed. A human user might trigger a dozen authentication events per day. An AI agent can trigger thousands per minute.

Microsoft recognized this gap and responded with a fundamental extension of its Zero Trust architecture. Announced on March 19, 2026, the Zero Trust for AI (ZT4AI) framework adds a dedicated AI pillar to the existing Zero Trust Workshop, giving enterprises a systematic way to assess their security maturity before deploying AI agents. This isn’t just a whitepaper or a set of recommendations — it’s an actionable framework with reference architectures and assessment tooling.

The Three Pillars of Microsoft Zero Trust for AI

Microsoft’s ZT4AI framework stands on three pillars: agent governance, data protection, and prompt defense. Each covers a critical dimension of the AI agent lifecycle, and neglecting any one of them creates a security gap that attackers will exploit. Understanding this structure is the first step toward making 700 controls manageable.

Pillar 1: Agent Governance

AI agents are no longer just software tools — they’re autonomous actors that make decisions, take actions, and interact with systems on their own. ZT4AI mandates that every agent receives a unique identity and that its access to resources and permissible actions are controlled through policy-based mechanisms. Microsoft’s end-to-end security guide walks through applying Zero Trust principles across the entire pipeline — from data collection through model training, deployment, and agent execution.

The core principle is the same one that has always driven Zero Trust: never trust, always verify. The Least Privilege principle that enterprises apply to human users now extends to AI agents. If Agent A has no business accessing HR data, that access path is blocked entirely — not monitored, not flagged, but blocked. Every agent action is logged to an audit trail, enabling both compliance reporting and forensic analysis when incidents occur. This is particularly critical for regulated industries like finance and healthcare, where audit trails aren’t optional.

Pillar 2: Data Protection

Data leakage is the single biggest risk with AI agents. Whether it’s sensitive information embedded in training data or an agent inadvertently transmitting internal data to external services, the scenarios are not hypothetical — they’re happening right now. Reports indicate that AI-related data breach incidents increased by over 340% in 2025 alone. The attack surface expands every time a new agent gets access to another data source.

ZT4AI enforces data classification, encryption, and access logging at the agent level. It includes mechanisms for automatically applying tiered security policies based on the sensitivity of the data each agent processes. An agent summarizing public documentation operates under different constraints than one analyzing financial records containing customer PII. This tiered approach prevents the common mistake of applying one-size-fits-all security that’s either too loose for sensitive data or too restrictive for routine operations.

Pillar 3: Prompt Defense

Prompt injection attacks are among the most significant AI security threats in 2026. Attackers craft malicious inputs to manipulate agent behavior, extract system prompts, or bypass safety guardrails. These attacks are growing more sophisticated by the month. Indirect prompt injection — where malicious instructions are hidden in documents or web pages that agents read — is particularly dangerous because the agent processes the attack vector as legitimate input.

ZT4AI standardizes input validation at the prompt layer, output filtering, and data integrity verification for inter-agent communications. In multi-agent environments, messages passed from Agent A to Agent B are also subject to validation. This prevents what is essentially the AI equivalent of a supply chain attack — where a compromised upstream agent feeds manipulated data to downstream agents, cascading the breach across the entire system.

Making Sense of 700 Controls: A Practical Roadmap

Seven hundred security controls sounds overwhelming — and it should. The scale reflects how complex the AI agent security landscape actually is. But Microsoft structured them into 116 logical groups and 33 functional swim lanes for a reason: so enterprises can prioritize based on their specific AI deployment profile rather than attempting to implement everything at once. You don’t eat an elephant in one bite.

Microsoft’s frontier AI security strategy identifies identity-based access control as the starting point. Assign unique identities to every AI agent. Define each agent’s permission boundaries explicitly. This single step — knowing exactly who (or what) is accessing your systems and what they’re allowed to do — forms the foundation that everything else builds on. Integration with Microsoft Entra ID allows organizations to connect agent identities to their existing IAM infrastructure without building parallel systems.

At RSAC 2026, Microsoft unveiled Agent 365 alongside ZT4AI, signaling that AI agent security is no longer optional — it’s infrastructure. An AI Assessment tool is also expected to ship in summer 2026, enabling enterprises to automatically measure their AI security maturity against the framework, identify gaps, and receive prioritized recommendations for which controls to implement first. This diagnostic-first approach makes the 700-control list far more actionable than a raw checklist would be.

How ZT4AI Differs from Traditional Zero Trust

Traditional Zero Trust was designed around three entities: users, devices, and networks. ZT4AI introduces a fourth — AI agents — and this addition forces a paradigm shift in how organizations think about trust boundaries. Treating ZT4AI as a simple extension of existing Zero Trust misses the fundamental changes it requires.

- Expanded identity scope: AI agents join human users as first-class identity subjects requiring authentication, authorization, and lifecycle management. Agents must undergo the same rigor of identity verification that humans do.

- Behavioral unpredictability: Humans use systems in relatively predictable patterns. AI agents act autonomously and may access resources in unexpected ways during inference, requiring fundamentally different anomaly detection baselines.

- Speed and scale: Agents execute thousands of API calls in milliseconds. Real-time monitoring must operate at a precision level far beyond what human-centric systems require, with automated response mechanisms that can act at machine speed.

- Agent-to-agent communication: In multi-agent systems, agents exchange data with each other. These inter-agent trust boundaries must also fall under Zero Trust governance — an entirely new layer that didn’t exist in traditional models.

- Lifecycle management: Agents require security management across their entire lifecycle — creation, updates, and decommissioning. Orphaned agent credentials with residual permissions become attack surfaces if not properly managed.

My Take: A Perspective from Running Multi-Agent Systems Daily

When Microsoft announced ZT4AI, my first reaction was: finally. I design and operate multi-agent systems every day — AI agents calling APIs, processing data, communicating with external services, running automated pipelines that produce real output with real consequences. Throughout this work, security has always been something I’ve had to architect myself, without an industry-standard framework to lean on. Every decision about agent permissions, data access boundaries, and inter-agent communication protocols was a judgment call with no reference standard.

After 28 years in the music, audio, and tech industries, one thing I’ve learned is that security frameworks only matter if they’re actually usable in the field. Having 700 controls documented in a PDF that nobody reads is worthless. What makes ZT4AI genuinely promising is the practical structure — 33 swim lanes that let you start where your risk is highest, plus an automated assessment tool coming this summer that can diagnose your current state and tell you what to prioritize. The identity-first approach especially resonates with me. In my own multi-agent pipeline, every agent has a defined scope of access, communication between agents happens through fixed interfaces, and the principle of least privilege isn’t a nice-to-have — it’s the default architecture. Seeing Microsoft formalize this pattern validates the approach.

My concern, however, is accessibility. This framework appears designed for enterprise-scale organizations with dedicated security teams and substantial budgets. Startups and small teams — ironically, the ones most likely to move fast and skip security considerations — may find 700 controls impractical to adopt without significant investment. A 10-person dev team without a dedicated security engineer will hit a wall trying to implement even a fraction of these controls. Whether Microsoft provides tiered guidance for organizations of different sizes — something like an “Essential 20 for Startups” subset — will likely determine ZT4AI’s real-world impact beyond Fortune 500 companies.

AI Agent Security Is Now a Non-Negotiable

Microsoft’s Zero Trust for AI framework represents the industry’s first systematic approach to AI agent security. The three pillars — agent governance, data protection, and prompt defense — cover the essential territory that every organization deploying AI agents must address. If 700 controls feel overwhelming, start with identity management. Assign unique IDs to every agent, explicitly define what each one can access, and build your security posture incrementally from there.

The age of AI agents is already here. Deploying them without a security framework is like leaving your office unlocked in a high-crime neighborhood. ZT4AI is the blueprint for that lock — and the time to install it is before you open the door, not after someone has already walked in and helped themselves to your data.

Looking to architect secure AI agent systems or build automation pipelines? With 28 years of industry experience, I provide hands-on consulting for enterprise AI deployment.

Get weekly AI, music, and tech trends delivered to your inbox.