One photo. Thirty milliseconds. A full 3D reconstruction. That’s Meta SAM 3D in a nutshell, and it just changed the game for spatial AI.

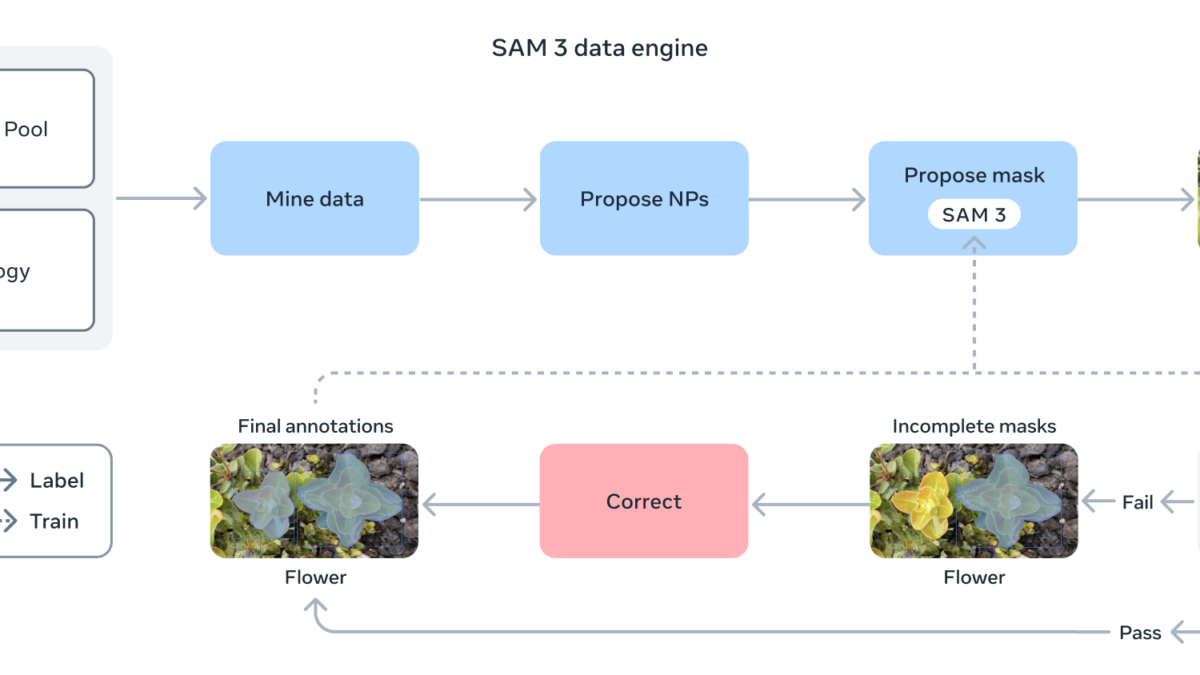

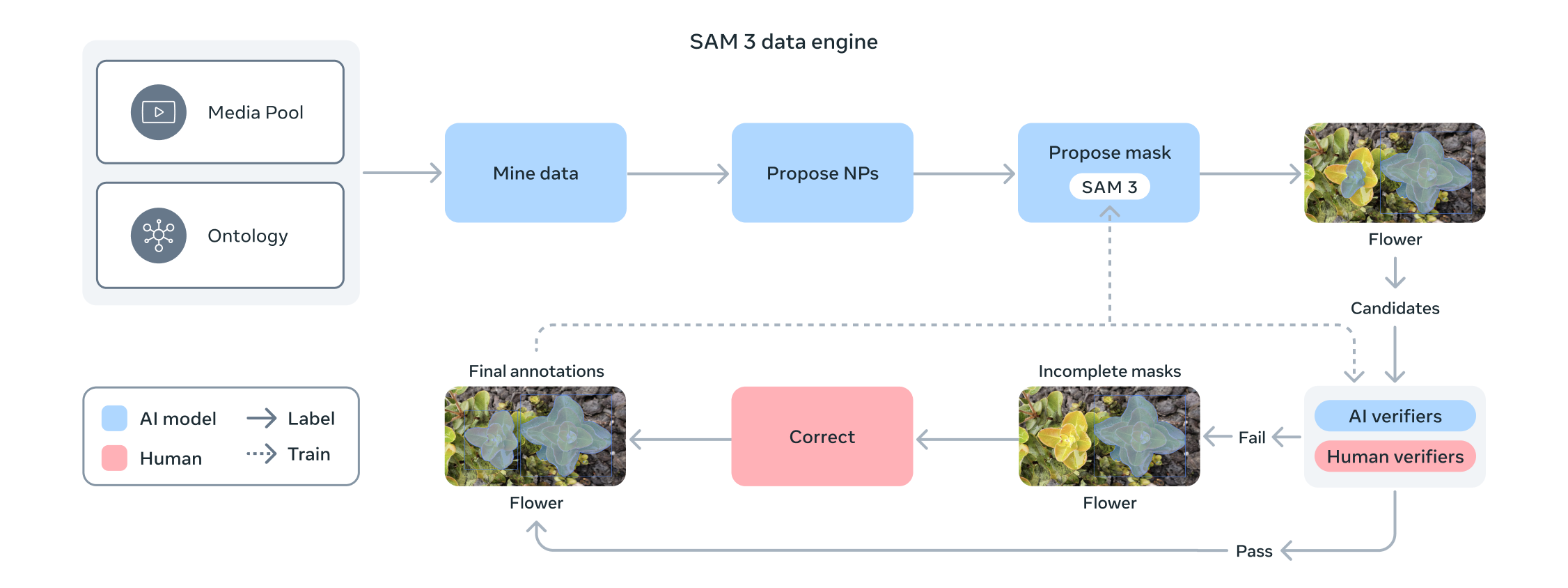

When Meta released SAM 1 in 2023, it fundamentally reset expectations for image segmentation. SAM 2 in 2024 extended that to video with real-time tracking. But what Meta just dropped in November 2025 is something else entirely: SAM 3 and Meta SAM 3D together form a unified ecosystem that goes from 2D pixel understanding all the way to full 3D spatial reconstruction. These aren’t incremental updates. They represent a fundamental expansion of what visual AI can do. Let’s break down what’s actually new, what the numbers look like, and why this matters for anyone building with computer vision.

SAM 3: Text Prompts, Unified Detection, and 30ms Inference

The biggest limitation of previous SAM versions was the prompting mechanism. You had to click points or draw bounding boxes around what you wanted to segment. That works fine for research demos, but it’s a non-starter for production applications where you need automated, scalable object detection. SAM 3 removes that friction entirely by supporting text prompts, exemplar images, and visual prompts in a single unified model.

The practical impact is immediate. You can type “red car” and the model detects, segments, and tracks every matching object in the image or video. SAM 3 itself is built around short noun phrases like this; longer referring expressions such as “the person wearing a blue jacket on the left” are handled by pairing it with a multimodal language model that breaks the request down into simpler prompts. Either way, the text-based interface means SAM 3 can be integrated into automated pipelines without human-in-the-loop pointing and clicking. For any team building computer vision products, that’s a massive reduction in workflow complexity.

But the really impressive part is the performance. On Meta’s SA-Co benchmark, SAM 3 delivers a 2x improvement over the previous state of the art. Speed-wise, it processes images containing 100+ objects in just 30 milliseconds on an NVIDIA H200 GPU. For video, it handles near real-time tracking for approximately 5 concurrent objects. To put that in context: 30ms per image works out to about 33 images per second (1000 ÷ 30), which just fits inside the frame budget of a 30 fps feed. Keep in mind that the 30ms figure is for single images on an H200, and the video figure holds for roughly five tracked objects at a time. Even with those caveats, that puts real-time applications in autonomous vehicles, security systems, live sports analytics, and industrial quality inspection within reach.
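The latency-to-frame-rate arithmetic is easy to check for your own hardware and target frame rate. Here’s a minimal sketch; the function names are made up for illustration, and it assumes frames are processed strictly one at a time with no batching or pipelining.

```python
def frames_per_second(latency_ms: float) -> float:
    """Upper bound on throughput when frames are processed one at a time."""
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return 1000.0 / latency_ms


def fits_frame_budget(latency_ms: float, target_fps: float) -> bool:
    """True if one inference finishes within a single frame interval."""
    return latency_ms <= 1000.0 / target_fps


print(f"{frames_per_second(30.0):.1f} fps")  # 33.3 fps
print(fits_frame_budget(30.0, 30.0))         # True: 30 ms fits in a 33.3 ms frame
print(fits_frame_budget(30.0, 60.0))         # False: 30 ms exceeds a 16.7 ms frame
```

The margin at 30 fps is only about 3ms per frame, so any decoding or postprocessing overhead eats into it quickly.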

What makes this architecturally significant is the unification. Detection, segmentation, and tracking used to require separate models stitched together in a pipeline. Each model had its own preprocessing, inference, and postprocessing steps. Data passed between models introduced latency and potential error propagation. SAM 3 collapses all of that into a single model with a single forward pass. For production systems, this means fewer moving parts, lower latency, simpler deployment, and dramatically reduced infrastructure costs.

Meta SAM 3D Objects: Full 3D Reconstruction From a Single Photo

SAM 3D is a separate model family focused specifically on 3D spatial understanding, and SAM 3D Objects is the headline feature. Give it a single 2D photograph and it generates a complete 3D mesh of the objects or scene. No multi-angle captures, no depth sensors, no LiDAR — just one regular photo from a smartphone camera. If you’ve ever spent hours in a 3D modeling tool trying to reconstruct a real-world object, you understand why this is a big deal.

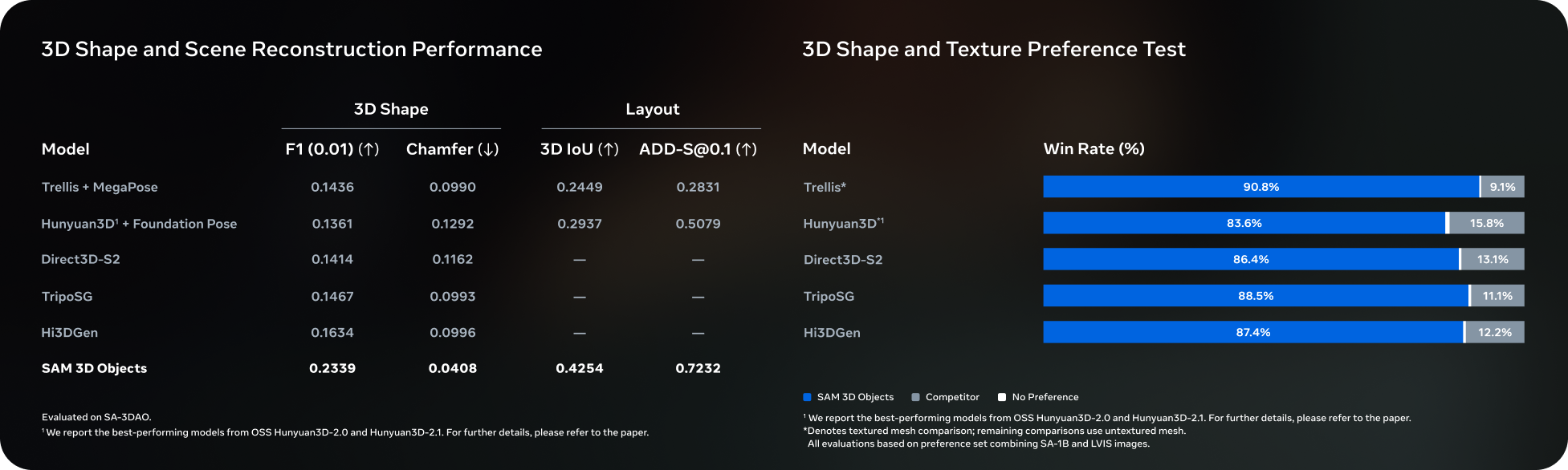

The training scale behind this is substantial. Meta trained SAM 3D Objects on 3.14 million model-generated meshes and 8 million images. That’s an enormous dataset, and the quality of the training data directly impacts the quality of the outputs. The results speak for themselves: in human preference testing, SAM 3D Objects achieved a 5:1 win rate over leading competing models. That means when real people compared the 3D outputs side by side, they preferred SAM 3D’s reconstruction five times out of six. The model doesn’t just estimate rough shapes — it generates high-fidelity meshes with texture and material details that look convincing from multiple angles.

This isn’t theoretical, either. It’s already shipping in a consumer product. Facebook Marketplace’s “View in Room” feature uses SAM 3D Objects to let buyers preview furniture in their own space via AR before purchasing. When a seller uploads a photo of a couch, the system automatically generates a 3D model that buyers can place and rotate in their living room through their phone camera. For e-commerce, this has direct implications for reducing return rates — buyers who can visualize products in their actual space are far less likely to be disappointed when the item arrives.
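To make the “place and rotate” step concrete, here’s a rough sketch of what positioning a reconstructed mesh in a room involves geometrically. This is not Meta’s implementation or any SAM 3D API; `place_in_room` is a hypothetical helper, and it assumes the mesh is just a list of vertices in a coordinate system where y points up.

```python
import math

Vec3 = tuple[float, float, float]


def place_in_room(vertices: list[Vec3], yaw_degrees: float, position: Vec3) -> list[Vec3]:
    """Rotate vertices about the vertical (y) axis, then translate them."""
    yaw = math.radians(yaw_degrees)
    c, s = math.cos(yaw), math.sin(yaw)
    px, py, pz = position
    placed = []
    for x, y, z in vertices:
        # Standard rotation about +y: x' = x*cos + z*sin, z' = -x*sin + z*cos
        rx = c * x + s * z
        rz = -s * x + c * z
        placed.append((rx + px, y + py, rz + pz))
    return placed


# Hypothetical example: turn one vertex by 90 degrees and move it 2 units along z.
corner = place_in_room([(1.0, 0.0, 0.0)], 90.0, (0.0, 0.0, 2.0))
print(corner)  # approximately [(0.0, 0.0, 1.0)], up to floating-point error
```

A real AR viewer would also handle scale, floor detection, and lighting, but the core of dragging and spinning an object comes down to this kind of rigid transform.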

Beyond e-commerce, the implications for game development and content creation are significant. Artists and developers can photograph real-world objects and get usable 3D assets in seconds rather than hours. Architecture and interior design workflows could be transformed by photographing existing furniture and fixtures, then rearranging them in 3D space. The barrier to creating 3D content just dropped dramatically.

SAM 3D Body: Human Pose and Shape Estimation Without Motion Capture

The second component of the SAM 3D family tackles the human body specifically. SAM 3D Body estimates human pose and body shape from a single 2D image, outputting data in Meta’s new Momentum Human Rig (MHR) format. This isn’t just skeleton tracking — it captures the full volumetric shape of the human body with precise joint positions, limb proportions, and body geometry. The MHR format is designed to be more expressive than traditional skeleton-based representations, encoding both the pose and the physical shape of the person.

The application space here is enormous and crosses multiple industries. In sports medicine, coaches and therapists can analyze athlete biomechanics from standard video footage without expensive motion capture setups. Knee angles, spinal alignment, weight distribution, stride patterns — all measurable from a single camera view. This democratizes biomechanical analysis that previously required six-figure motion capture labs.
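Once you have 3D joint positions, a measurement like knee angle is a few lines of vector math. The sketch below assumes you’ve already extracted hip, knee, and ankle coordinates as plain (x, y, z) tuples; how you pull those out of the MHR output isn’t shown here, and `joint_angle_degrees` is an illustrative helper rather than part of any Meta library.

```python
import math

Point3 = tuple[float, float, float]


def joint_angle_degrees(a: Point3, b: Point3, c: Point3) -> float:
    """Angle at joint b formed by the segments b->a and b->c, in degrees."""
    u = tuple(ai - bi for ai, bi in zip(a, b))
    v = tuple(ci - bi for ci, bi in zip(c, b))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("joint positions must be distinct")
    cos_theta = sum(x * y for x, y in zip(u, v)) / (norm_u * norm_v)
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))


# Made-up coordinates for a fully extended leg: hip, knee, ankle on one line.
hip, knee, ankle = (0.0, 1.0, 0.0), (0.0, 0.5, 0.0), (0.0, 0.0, 0.0)
print(joint_angle_degrees(hip, knee, ankle))  # 180.0
```

Run this per frame and you get a knee-flexion curve over time, which is the kind of output a motion capture lab would traditionally provide.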

In gaming and AR/VR, developers can map user movements to 3D avatars without dedicated tracking hardware. Instagram Edits is already integrating SAM 3D Body to give creators more sophisticated video editing tools, enabling body-aware effects and overlays. In robotics, understanding human pose and shape from a single camera enables safer human-robot interaction in warehouses, healthcare facilities, and service environments. A robot that can accurately estimate where a person’s limbs are — including limbs partially occluded from view — can navigate around humans far more safely.

Open Source Strategy and the Developer Ecosystem

True to form, Meta is releasing this as open source, and the scope of the release matters. For SAM 3, the full package is available: model weights, checkpoints, fine-tuning code, and the SA-Co benchmark. This means developers don’t just get a model to run inference on; they can fine-tune it for their specific domain, whether that’s medical imaging, satellite analysis, or industrial inspection. For SAM 3D, the release is partial: checkpoints and inference code are public alongside the SA-3DAO benchmark, though full training code is not yet available. Still, fine-tuning for SAM 3 and inference for SAM 3D cover the vast majority of production use cases.

Anyone can try the models right now through the Segment Anything Playground, Meta’s browser-based demo environment. Developers can download the weights, integrate them into their own applications, and benchmark performance against the released datasets. The open benchmarks are particularly valuable — SA-Co for segmentation quality and SA-3DAO for 3D reconstruction quality give the community standardized ways to compare approaches and track progress.
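If you do benchmark your own pipeline, the basic building block for comparing a predicted mask against ground truth is intersection over union (IoU). The snippet below is a generic sketch of that one calculation, not the official SA-Co or SA-3DAO scoring code, which defines its own metrics; masks here are assumed to be nested lists of booleans.

```python
def mask_iou(pred: list[list[bool]], truth: list[list[bool]]) -> float:
    """Intersection over union of two same-sized binary masks."""
    if len(pred) != len(truth) or any(len(p) != len(t) for p, t in zip(pred, truth)):
        raise ValueError("masks must have the same shape")
    intersection = 0
    union = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            intersection += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Two empty masks agree perfectly; define IoU as 1.0 in that case.
    return intersection / union if union else 1.0


# Toy 2x2 masks: one pixel overlaps, three pixels are covered in total.
pred = [[True, True], [False, False]]
truth = [[True, False], [True, False]]
print(round(mask_iou(pred, truth), 3))  # 0.333
```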

One especially noteworthy application is wildlife conservation. Meta released the SA-FARI dataset specifically to enable researchers to use SAM 3 for identifying and tracking individual animals in the wild. Camera trap images can now be automatically processed to identify species and individual animals, dramatically accelerating ecological research that previously required manual photo review. It’s a powerful demonstration that these models have applications far beyond the typical tech use cases.

The strategic calculus behind the open source approach is clear. By making SAM 3 and SAM 3D freely available, Meta ensures that the developer ecosystem builds on its architecture rather than on competitors’. Every fine-tuned model, every benchmark comparison, every production deployment reinforces the SAM ecosystem as the de facto standard for visual AI. It’s the same playbook that made PyTorch the dominant deep learning framework: give away the tool, own the ecosystem.

The SAM Timeline: From Point-Click to 3D Understanding in Two Years

It’s worth stepping back and appreciating just how fast this field is moving. The Segment Anything lineage tells the story clearly:

- SAM 1 (2023) — Foundation model for image segmentation. Point and box prompts only. Revolutionized the field but required manual interaction.

- SAM 2 (2024) — Extended to video. Real-time object tracking with prompt-based segmentation. Introduced temporal understanding.

- SAM 3 + SAM 3D (November 2025) — Text and exemplar prompts, 3D object reconstruction from single images, human body estimation, unified detection-segmentation-tracking pipeline. Full spatial understanding.

In just two years, we went from clicking on pixels to telling a model what to find with plain language, and from flat 2D masks to full 3D mesh reconstructions with texture. That trajectory shows no signs of slowing down. If the pattern holds, we can expect SAM 4 to push into real-time 3D scene understanding from video, potentially enabling instant spatial mapping from moving cameras.

The simultaneous launch of SAM 3 and SAM 3D isn’t just a product update. It’s Meta’s declaration that it intends to own the full stack of visual AI, from 2D pixel understanding to 3D spatial reasoning. With the open source release, the ball is now in the developer community’s court. Robotics, AR/VR, e-commerce, healthcare, game development, wildlife conservation: any field that needs 3D spatial understanding from visual inputs now has a serious, open source foundation to build on. The models are live, the code is public, and the Segment Anything Playground is open. The question isn’t whether Meta SAM 3D will impact your field. It’s how fast you’ll integrate it.
