Steinberg Dorico 6 Review: AI-Powered Proofreading Panel Catches 100+ Score Errors Automatically

February 9, 2026

NVIDIA Blackwell B300 Enters Volume Production: 288GB HBM3e Changes the AI Server Game

February 10, 2026A 17-billion-parameter model running on a single GPU that matches proprietary giants costing ten times more — sound too good to be true? Meta Llama 4 Scout made it reality. In the ten months since its April 2025 launch, enterprise open-source AI adoption has surged from 23% to 67%, and organizations processing 500 million or more tokens per month are reporting 60-80% cost reductions compared to proprietary API providers. This is not a marginal improvement. It is a structural shift in how enterprises think about AI infrastructure.

Why Meta Llama 4 Scout Changes Everything

The secret behind Scout’s efficiency lies in its Mixture-of-Experts (MoE) architecture. The model contains 109 billion total parameters spread across 16 expert networks, but only 17 billion parameters are active during any given inference pass. The router selects the most relevant experts for each query, which means you get the reasoning capacity of a much larger model at a fraction of the computational cost. With INT4 quantization, the entire model fits into roughly 55GB of VRAM — comfortably within range of a single NVIDIA H100 GPU.

Then there is the context window. Meta Llama 4 Scout supports a 10-million-token context window — the largest of any open-source model available today. That is not a theoretical number. In long-context retrieval benchmarks, Scout maintains over 95% accuracy through 8 million tokens and achieves 89% on needle-in-a-haystack tests at the full 10 million token range. For enterprises dealing with massive legal documents, multi-year financial records, or comprehensive medical histories, this capability is transformative.

Benchmark Performance: Meta Llama 4 Scout by the Numbers

Numbers do not lie, and Scout’s benchmark results tell a compelling story. On MMLU-Pro, the model scores 74.3%. On HumanEval, it reaches 79.6%. These figures place Scout well ahead of competing models in the same parameter class and, on several benchmarks, within striking distance of proprietary models like GPT-5.3 and Claude Opus 4.6. The MoE architecture has dramatically compressed the performance gap between open-source and proprietary, while keeping inference costs a fraction of what the closed-source alternatives charge.

But the benchmark story that matters most to enterprise buyers is not MMLU — it is industry-specific performance. In the legal sector, Scout delivers a 22% improvement in clause extraction accuracy. Financial services teams are seeing a 25% improvement in entity extraction from unstructured documents. In healthcare, the model achieves 19% better medical entity recognition while maintaining full HIPAA compliance. These are not synthetic benchmarks. They are results from production deployments measured against the proprietary baselines that enterprises were paying premium prices to use.

What makes these results even more remarkable is the fine-tuning economics. Using LoRA (Low-Rank Adaptation), enterprises can achieve an additional 15-25% accuracy improvement on domain-specific tasks for just $50-200 in compute costs. Custom model training that once required five-figure budgets is now accessible to startups and mid-market companies. This democratization of performance is one of the key drivers behind the 340% year-over-year growth in the open-source AI market.

The Cost Economics That Are Forcing Enterprise Migration

Cost has always been the primary barrier to enterprise AI adoption at scale. Proprietary APIs charge per token, and at enterprise volumes — hundreds of millions to billions of tokens per month — those costs become a significant line item. Meta Llama 4 Scout fundamentally restructures this equation.

For self-hosted deployments, the math is straightforward. A single H100 GPU running Scout at INT4 quantization delivers 55-75 tokens per second. At scale, this translates to 60-80% cost savings compared to equivalent proprietary API usage. Even organizations that prefer not to manage their own infrastructure have attractive options. Together AI offers Scout inference at $0.10 per million input tokens and $0.30 per million output tokens. Fireworks AI prices it at $0.12 and $0.35, respectively. AWS Bedrock provides on-demand access with no long-term commitment.

To put this in perspective: an enterprise processing one billion tokens per month through a proprietary API might spend $15,000-$30,000 monthly. The same workload through Together AI’s Scout endpoint costs roughly $1,500-$3,000. Self-hosted, the cost drops even further once the initial infrastructure investment is amortized. These are not theoretical projections — they are the economics that drove enterprise open-source AI adoption from 23% to 67% in less than a year.

Production Deployment: From Single GPU to Hybrid Architecture

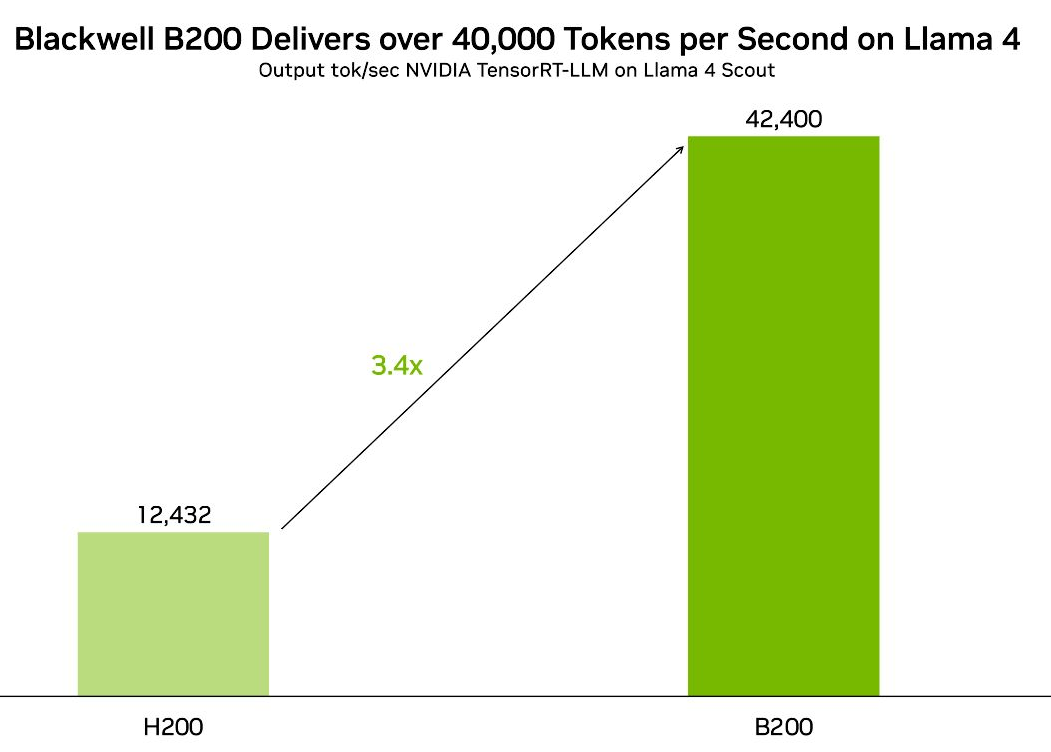

One of Scout’s most compelling advantages is deployment flexibility. NVIDIA’s TensorRT-LLM optimization and NIM (NVIDIA Inference Microservice) integration provide battle-tested infrastructure for production deployments. Organizations can start with a single H100 running INT4 and scale horizontally as demand grows, all while following established best practices from NVIDIA’s deployment documentation.

The deployment pattern gaining the most traction in enterprise environments is the hybrid approach. Route 90-95% of queries through self-hosted Scout, and reserve proprietary APIs (GPT, Claude, or Meta’s own Llama 4 Maverick for complex reasoning) for the 5-10% of queries that require maximum capability. This strategy captures most of the cost savings from open-source while maintaining quality guarantees for edge cases.

- Tier 1 — Edge and Device Deployment: Scout INT4 on a single GPU for low-latency inference and data sovereignty requirements

- Tier 2 — Complex Reasoning: Maverick or proprietary APIs for high-difficulty tasks that exceed Scout’s capabilities

- Tier 3 — Batch Processing at Scale: Scout clusters for high-volume document processing and data pipeline workloads

This tiered approach also addresses a common concern about open-source models: consistency. By establishing clear routing rules based on query complexity, token count, or domain sensitivity, enterprises can ensure that each request is handled by the most cost-effective model capable of producing acceptable output. The router itself can be a lightweight classifier — often another instance of Scout — making the entire system self-contained and cost-efficient.

Meta’s Bigger Picture: 1.5 Million GPUs and the MTIA v3 Chip

Scout’s success does not exist in a vacuum. It is one piece of Meta’s broader strategy to become what industry analysts are calling “the Linux of AI.” By early 2026, Meta had amassed over 1.5 million GPU units and deployed its custom MTIA v3 AI chip on a 3nm process. This compute infrastructure powers not only Meta’s own products but also the training runs that produce models like Scout, Maverick, and the forthcoming 2-trillion-parameter Llama 4 Behemoth — which remains in preview but promises another paradigm shift upon release.

The strategic implications are significant. By open-sourcing models that rival proprietary competitors, Meta forces price compression across the entire AI inference market. Proprietary providers are already cutting prices in response. As open-source models expand to native multimodal capabilities — text, image, audio, and video in a single model — the premium that closed-source providers can command continues to shrink. For enterprises, the question is no longer whether to use open-source AI, but what ratio of open-source to proprietary best serves their workload profile.

Practical Migration: What Enterprise Teams Are Actually Doing

The shift from proprietary to open-source is not happening overnight, and the most successful enterprise migrations follow a deliberate pattern. Teams typically begin with a pilot project — often a high-volume, lower-stakes workload like document summarization or internal knowledge retrieval — and measure Scout’s output quality against their existing proprietary baseline. Once the quality gap is confirmed to be negligible (or nonexistent), the migration expands to cover additional use cases.

Data sovereignty is another accelerator. Organizations in regulated industries — finance, healthcare, government — are increasingly uncomfortable routing sensitive data through third-party API endpoints. Self-hosted Scout eliminates this concern entirely. The data never leaves the organization’s infrastructure, and compliance teams gain full auditability over how the model processes information. Combined with the cost savings, this privacy advantage is converting even the most cautious enterprise AI teams into open-source advocates.

The tooling ecosystem has matured rapidly to support these migrations. NVIDIA’s NIM provides containerized deployment that works across cloud and on-premises environments. Monitoring solutions like vLLM and text-generation-inference offer production-grade serving with built-in metrics. Model registries track fine-tuned versions across teams. The operational overhead that once made self-hosted LLMs impractical for enterprise teams has been engineered away, piece by piece, over the past ten months.

What This Means for Your AI Strategy in 2026

The ten months since Meta Llama 4 Scout’s release have made one thing clear: open-source AI is no longer the “cheaper but weaker” alternative. It is now a mainstream, enterprise-grade option that delivers competitive performance at dramatically lower cost. The combination of 17 billion active parameters, a 10-million-token context window, and single-GPU deployment has created a cost structure that proprietary providers cannot match without fundamentally changing their business models.

For organizations evaluating their AI infrastructure, the playbook is becoming clearer. Start with Scout for the bulk of your inference workload. Use LoRA fine-tuning to optimize for your specific domain. Route complex edge cases to proprietary APIs. Measure cost per quality-adjusted output, not just raw benchmark scores. The enterprises that adopted this hybrid approach early are already seeing returns that make the ROI case undeniable.

Whether you are building a new AI pipeline from scratch or optimizing an existing one, the economics now favor a hybrid architecture anchored by open-source models like Scout. The infrastructure tooling is mature, the deployment patterns are proven, and the cost savings are measurable from day one. The window for gaining competitive advantage through early adoption is narrowing — but it is still open.

Building an AI infrastructure, optimizing LLM deployment, or designing automation pipelines? Let’s talk.

Get weekly AI, music, and tech trends delivered to your inbox.