
December 23, 2025

One billion downloads. That was the milestone Meta’s Llama models hit in March 2025, and it was only the beginning. A month later, Llama 4 arrived and rewrote the rules of open-source AI. As 2025 draws to a close, it is worth looking back at what has been, without exaggeration, the most consequential year in Meta’s AI journey. Here is the full picture: the breakthroughs, the numbers, and the strategic tensions lurking beneath the surface.

The Llama 3.3 Prelude: Efficiency as a Weapon

The year technically started with a December 2024 release. Llama 3.3 70B proved something the industry had debated for years — that you could match 405B-parameter performance with a model less than one-fifth the size. With a 128K context window and dramatically lower operational costs, Llama 3.3 obliterated the assumption that bigger always meant better.

By December 2024, Llama and its derivatives had been downloaded more than 650 million times, roughly double the total from three months earlier. Over 85,000 Llama derivatives had been published on Hugging Face, a fivefold increase in a single year. Monthly token volume on cloud partners was growing more than 50% month-over-month. Llama was no longer just a model; it was an ecosystem.
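To put those growth rates in perspective, the arithmetic can be sketched in a few lines of Python. This is an illustrative calculation only: the function names are made up for this example, and it assumes smooth compound growth, which real download and token figures rarely follow.

```python
import math


def monthly_rate_from_doubling(months_to_double: float) -> float:
    """Monthly growth rate implied by doubling over the given number of months."""
    return 2 ** (1 / months_to_double) - 1


def growth_multiple(monthly_rate: float, months: int) -> float:
    """Total multiple after compounding monthly_rate for the given number of months."""
    return (1 + monthly_rate) ** months


def months_to_multiply(monthly_rate: float, multiple: float) -> float:
    """Months needed to grow by `multiple` at a constant monthly rate."""
    return math.log(multiple) / math.log(1 + monthly_rate)


if __name__ == "__main__":
    # Downloads: "doubled in three months"
    print(f"Implied monthly growth: {monthly_rate_from_doubling(3):.1%}")
    # Token volume: "more than 50% month-over-month"
    print(f"Months to double at 50%/month: {months_to_multiply(0.5, 2):.2f}")
    print(f"Multiple after 12 months at 50%/month: {growth_multiple(0.5, 12):.1f}x")
```

Under that assumption, doubling in three months works out to about 26% growth per month, while 50% month-over-month growth would double volume in under two months and compound to roughly 130x over a year if it were sustained.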

This was the foundation for everything that followed. When Llama 3.3 demonstrated that cost-efficiency and frontier performance could coexist, it signaled that Meta’s open-source strategy was not charity — it was competitive strategy. For startups and enterprises that could not afford to run 405B-parameter models, Llama 3.3 was a game-changer. The cost of deploying frontier-quality AI dropped by roughly 80%, opening the door for thousands of new applications that would have been economically unfeasible just months earlier.

The trajectory from Llama 3 through 3.1, 3.2, and 3.3 followed a clear pattern: each release either pushed the performance frontier higher (3.1’s 405B model) or made that frontier accessible to more people (3.2’s edge-optimized variants, 3.3’s cost efficiency). This was not random iteration — it was a deliberate strategy to build the widest possible moat through ecosystem adoption.

Llama 4: The Multimodal MoE Revolution

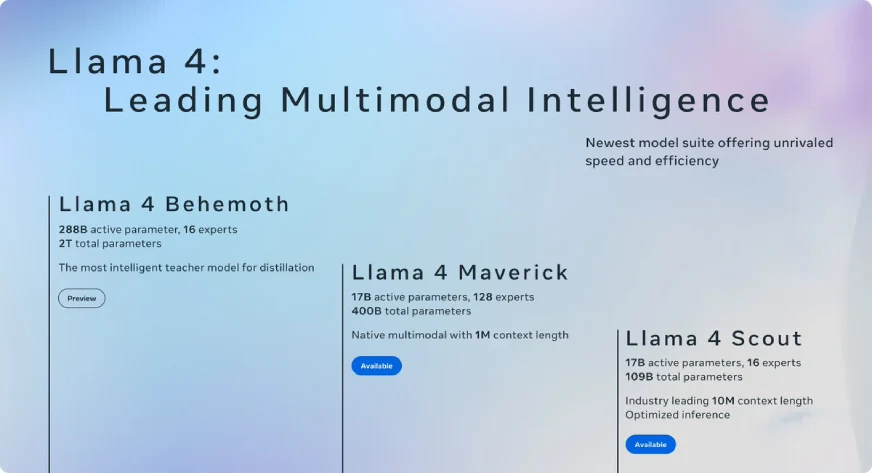

On April 5, 2025, Meta unveiled the Llama 4 family, and the story of Meta’s AI year shifted from incremental progress to architectural leap. Three models were announced together, with Scout and Maverick available for download and Behemoth previewed while still in training:

- Llama 4 Scout — 17B active parameters, 16 experts, 109B total parameters. The headline number: a 10 million token context window, the largest in the industry at launch. Runs on a single NVIDIA H100 GPU.

- Llama 4 Maverick — 17B active parameters, 128 experts, 400B total parameters. 1M context window. Beat GPT-4o and Gemini 2.0 Flash across broad benchmarks.

- Llama 4 Behemoth — 288B active parameters, approximately 2 trillion total parameters. A teacher model designed for distillation, outperforming GPT-4.5 and Claude Sonnet 3.7 on STEM tasks.
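The parameter counts in the list above can be turned into two quick back-of-the-envelope numbers: the share of parameters active per token, and the approximate memory needed just to hold the weights. The sketch below is a rough estimate, not a sizing guide. It assumes an 80 GB H100, counts weights only (no KV cache or activations), and uses generic bytes-per-parameter figures for each weight format.

```python
# Parameter counts in billions, as quoted in the list above.
MODELS = {
    "Scout": {"active_b": 17, "total_b": 109},
    "Maverick": {"active_b": 17, "total_b": 400},
    "Behemoth": {"active_b": 288, "total_b": 2000},  # "approximately 2 trillion"
}

# Assumption: generic storage cost per parameter for common weight formats.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

H100_MEMORY_GB = 80  # Assumption: the 80 GB H100 variant.


def active_fraction(name: str) -> float:
    """Share of total parameters that are active for each token."""
    model = MODELS[name]
    return model["active_b"] / model["total_b"]


def weight_memory_gb(name: str, fmt: str) -> float:
    """Approximate weight storage in GB (1e9 bytes); ignores KV cache and activations."""
    return MODELS[name]["total_b"] * BYTES_PER_PARAM[fmt]


def fits_on_one_h100(name: str, fmt: str) -> bool:
    """True if the weights alone fit within a single 80 GB H100."""
    return weight_memory_gb(name, fmt) <= H100_MEMORY_GB


if __name__ == "__main__":
    for name in MODELS:
        sizes = ", ".join(
            f"{fmt}: {weight_memory_gb(name, fmt):.1f} GB" for fmt in BYTES_PER_PARAM
        )
        print(f"{name}: {active_fraction(name):.1%} active per token; {sizes}")
```

On these assumptions, Scout’s weights need about 218 GB in bf16 but about 54.5 GB in int4, which is why the single-GPU claim depends on quantization rather than on the expert architecture alone.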

The most significant architectural decision was adopting Mixture of Experts (MoE), a first for Meta’s open models. MoE activates only a subset of parameters for each token during inference, which sharply reduces the compute needed per token. Every expert still has to be held in memory, though, so MoE alone does not shrink the memory footprint: Scout’s 109B parameters fit on a single H100 because Meta pairs the architecture with Int4 quantization. The same sparsity is how Maverick, with 400B total parameters, keeps per-token inference compute close to that of much smaller models.

To understand why MoE matters, consider the economics. A traditional dense model with 400B parameters activates all 400B parameters for every single token generated. With MoE, Maverick activates only 17B parameters per token, roughly 4% of the total. The remaining experts sit idle for that token, ready to be called upon when their specialized knowledge is needed. The result is a model with the capacity of 400B parameters but per-token compute closer to that of a 17B model, although all 400B parameters must still be stored and served. For organizations running AI at scale, this translates to less compute per request, faster response times, and more accessible deployment options.
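The routing idea can be illustrated with a toy example. The sketch below is a generic top-k MoE layer written in plain Python, not Meta’s implementation: the expert shape, the router, and all names (`make_expert`, `moe_forward`) are assumptions chosen for readability, and Llama 4’s actual layer design is not reproduced here.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(seed, dim):
    """A toy expert: one dim x dim weight matrix applied to the token vector."""
    rng = random.Random(seed)
    w = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]

    def expert(x):
        return [sum(w[i][j] * x[j] for j in range(dim)) for i in range(dim)]

    return expert


def moe_forward(x, router_weights, experts, top_k=1):
    """Route one token vector to its top_k experts and mix their outputs."""
    # One router logit per expert, then a probability per expert.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    probs = softmax(logits)
    # Keep only the top_k most probable experts for this token.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    out = [0.0] * len(x)
    for i in chosen:
        y = experts[i](x)  # only the chosen experts are evaluated
        gate = probs[i] / norm  # renormalized gate weight
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


if __name__ == "__main__":
    dim, n_experts = 4, 16
    rng = random.Random(0)
    experts = [make_expert(seed, dim) for seed in range(n_experts)]
    router = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(n_experts)]
    _, chosen = moe_forward([1.0, 0.5, -0.5, 2.0], router, experts, top_k=1)
    print(f"Evaluated {len(chosen)} of {n_experts} experts: {chosen}")
```

All sixteen toy experts exist in memory, but each token pays the compute cost of only the ones the router selects, which is the trade-off described above.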

Natively Multimodal: Beyond Text-Only AI

Llama 4 was not just bigger — it was fundamentally different. Previous models bolted image understanding onto a text backbone after pre-training. Llama 4 used early fusion architecture, integrating text, image, and video data from the very first stage of pre-training. This is the difference between a bilingual person who learned both languages from birth versus someone who picked up a second language later in life — the former has deeper, more intuitive fluency.
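As a rough illustration of what early fusion means in practice, the toy sketch below maps text tokens and image patches into vectors of the same width and joins them into one sequence for a single shared backbone. Everything in it is hypothetical: the tiny vocabulary, the embedding width, the random weights, and the choice to place image patches before text are assumptions for this example, not details of Llama 4.

```python
import random

DIM = 8           # hypothetical shared embedding width
PATCH_PIXELS = 4  # hypothetical number of values per flattened image patch

rng = random.Random(0)
VOCAB = {"a": 0, "photo": 1, "of": 2, "cat": 3}

# Toy lookup table for text tokens and a toy linear projection for image patches.
text_table = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in VOCAB]
patch_proj = [[rng.uniform(-1, 1) for _ in range(PATCH_PIXELS)] for _ in range(DIM)]


def embed_text(words):
    """Look up one DIM-wide vector per word."""
    return [text_table[VOCAB[w]] for w in words]


def embed_patches(patches):
    """Project each flattened patch to a DIM-wide vector."""
    return [
        [sum(row[j] * patch[j] for j in range(PATCH_PIXELS)) for row in patch_proj]
        for patch in patches
    ]


def early_fusion_sequence(words, patches):
    """Build one mixed sequence (image patches, then text) for a shared backbone."""
    return embed_patches(patches) + embed_text(words)


if __name__ == "__main__":
    seq = early_fusion_sequence(
        ["a", "photo", "of", "cat"],
        [[0.1, 0.2, 0.3, 0.4], [0.5, 0.6, 0.7, 0.8]],
    )
    print(f"{len(seq)} positions, each {len(seq[0])} wide")
```

The point of the sketch is only that both modalities land in the same sequence from the start, so one model learns from them jointly instead of a separate vision component being attached after text pre-training.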

The training data scale matched the ambition: over 30 trillion tokens (double Llama 3), spanning 200 languages. Meta also introduced the MetaP technique for reliable hyperparameter transfer across model scales, making the development pipeline itself more efficient. These are not flashy features for press releases — they are the kind of infrastructure improvements that compound over years.

From Research to Product: 600 Million Users

Technology without distribution is just a research paper. Meta had distribution — and in 2025, it used it. The second half of the year saw the launch of the Meta AI app powered by Llama 4, a personalized AI assistant integrated across WhatsApp, Messenger, and Instagram Direct. By year-end, it had reached nearly 600 million monthly active users.

Think about what that number means. OpenAI’s ChatGPT, after two years of explosive growth, reported roughly 300 million weekly active users in late 2024. Meta’s AI assistant, leveraging its existing social platforms, reached a comparable order of magnitude in a fraction of the time, though monthly and weekly active users are different metrics and the comparison is only approximate. The “Vibes” AI video feed within the Meta AI app added another dimension: generative AI as entertainment, not just utility.

On the research front, Meta continued releasing advanced models throughout the year: SAM 3 and SAM 3D for segmentation, SAM Audio for audio understanding, and V-JEPA for video representation learning. Each represented a building block for the multimodal future Meta envisions.

LlamaCon 2025: A Developer Ecosystem Comes of Age

On April 29, 2025, Meta held LlamaCon — the first-ever dedicated Llama developer event. The significance was not just in the announcements made that day, but in the fact that the event existed at all. When a model family gets its own developer conference, it has crossed from being a product into being a platform. LlamaCon brought together the community of developers, researchers, and enterprises building on top of Llama, and it signaled that Meta views its open-source AI ecosystem as a long-term strategic asset, not a one-off marketing gesture.

The Infrastructure Bet: Data Centers and Nuclear Power

Behind every AI breakthrough is a massive infrastructure investment. In 2025, Meta broke ground on three new data centers, including a 1GW AI-optimized facility in El Paso, Texas. The company also signed a nuclear energy agreement with Constellation Energy, securing sustainable power for its expanding AI compute needs.

Mark Zuckerberg articulated a vision for “personal superintelligence” — AI that provides customized intelligence to every individual. Whether or not you buy the terminology, the investments backing it are real. You do not spend billions on nuclear-powered data centers for a side project.

The Tensions Beneath: Strategy Debates and Llama 5

No year-end review would be complete without acknowledging the challenges. CNBC reported in December that Llama 5, codenamed “Avocado,” is under development, but internal tensions around the speed and direction of AI development have surfaced. Some insiders described Meta’s approach as “scattershot,” with the company’s open-source obsession giving way to broader strategic shifts and organizational restructuring.

This is worth watching closely. Meta’s competitive advantage in 2025 was being one of the few major labs offering frontier-class open-weight models. If internal strategy debates slow the pace or shift the open-source commitment, the ecosystem of 85,000+ community derivatives could fragment. The balance between open research and commercial product strategy is one of the most consequential decisions Meta faces heading into 2026.

There is also the talent dimension. Meta has been aggressively hiring top AI researchers as part of an organizational restructure, which is both a sign of ambition and a potential source of cultural friction. Integrating new talent while maintaining the velocity that produced four major Llama releases in 18 months is no small feat. The companies that navigate this challenge well tend to be the ones that dominate the next cycle.

What Meta AI 2025 Means for the Broader Industry

Meta’s 2025 performance did not happen in a vacuum. It reshaped how the entire AI industry thinks about open versus closed models. When Llama 4 Maverick outperformed GPT-4o on benchmarks while being freely downloadable, it raised uncomfortable questions for every company selling proprietary API access. Why pay per token when a comparable model is available for free? The answer, of course, is nuanced — reliability, support, fine-tuning infrastructure, and safety guardrails all matter — but the competitive pressure is real and growing.
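The "why pay per token" question comes down to a break-even calculation. The sketch below is one hedged way to frame it: the function name and every number in the usage example are hypothetical placeholders rather than real prices, and it ignores the reliability, support, and safety factors mentioned above.

```python
def breakeven_tokens_per_month(
    fixed_monthly_cost: float,
    api_price_per_million: float,
    self_host_price_per_million: float,
):
    """Monthly token volume above which self-hosting is cheaper than an API.

    fixed_monthly_cost: hypothetical fixed cost of self-hosting (hardware, staff).
    api_price_per_million: hypothetical API price per million tokens.
    self_host_price_per_million: hypothetical marginal self-hosting cost per million tokens.
    Returns None when self-hosting never breaks even.
    """
    saving_per_million = api_price_per_million - self_host_price_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly_cost / saving_per_million * 1_000_000


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    tokens = breakeven_tokens_per_month(10_000, 5.0, 1.0)
    print(f"Break-even volume: {tokens:,.0f} tokens per month")
```

With those placeholder inputs the break-even point is 2.5 billion tokens per month; below that volume, the fixed costs of self-hosting outweigh the per-token savings.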

For developers and businesses, Meta’s open-source strategy has created a viable third path between building from scratch and being locked into a proprietary API. The ability to download, customize, and deploy Llama models on your own infrastructure gives organizations control over their AI stack in a way that was not possible even two years ago. This is particularly valuable for enterprises with strict data governance requirements or those operating in regulated industries where sending data to third-party APIs is not an option.

Meta AI 2025 Timeline at a Glance

- December 2024: Llama 3.3 70B released — 405B-class performance at a fraction of the cost

- March 2025: 1 billion cumulative Llama downloads

- April 5, 2025: Llama 4 family launched — Scout (109B, 10M context), Maverick (400B, 1M context), Behemoth (~2T, teacher model)

- April 29, 2025: LlamaCon 2025 — first dedicated Llama developer event

- H2 2025: Meta AI app launched with Llama 4 — nearly 600M MAU by year-end

- December 2025: Llama 5 “Avocado” in development; internal strategy debates reported

The Bottom Line

2025 was the year Meta cemented its position as the open-source AI leader. From Llama 3.3’s efficiency breakthrough to Llama 4’s multimodal MoE architecture, from 1 billion downloads to 600 million active users, the numbers tell a clear story. Internal tensions and strategic questions remain, but the ecosystem Meta has built is now too large and too deeply embedded in the industry to unwind easily. As Llama 5 “Avocado” takes shape behind the scenes, the entire AI industry is watching — because what Meta does with open-source AI in 2026 will shape the competitive landscape for years to come.
