July 28, 2025

LangChain v0.3 didn’t just ship new features; it fundamentally rewired how we build AI agents. Since the initial release in September 2024, the framework has marched steadily to v0.3.26, deprecating legacy memory classes, overhauling tool definitions, completing the Pydantic v2 migration, and positioning LangGraph as the definitive agent runtime. If you’ve been putting off the upgrade, here’s your comprehensive guide to everything that changed and why it matters.

What Changed in LangChain v0.3: The Big Picture

When LangChain announced v0.3 in September 2024, the changelog was deceptively long. But the core philosophy boils down to three shifts: modular packages, native Pydantic v2, and LangGraph-first architecture. Let’s break each one down.

The Pydantic v2 Migration

The most foundational change is the complete migration from Pydantic v1 to v2. Every internal schema, tool definition, and output parser in LangChain now uses Pydantic v2 natively. This isn’t just a version bump—Pydantic v2’s Rust-backed core delivers up to 50x faster validation, which compounds quickly when your agent is making dozens of tool calls per interaction.

    # Before (v0.2: Pydantic v1 compatibility layer)
    from langchain.pydantic_v1 import BaseModel, Field

    class SearchInput(BaseModel):
        query: str = Field(description="The search query")

    # After (v0.3: native Pydantic v2)
    from pydantic import BaseModel, Field

    class SearchInput(BaseModel):
        query: str = Field(description="The search query")

If your codebase imports from langchain.pydantic_v1 (or langchain_core.pydantic_v1), those imports need to be updated. Python 3.8 support has also been officially dropped, so make sure you’re on 3.9 or higher.
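Before touching any code, it helps to know where the old shim is still imported. The following is a minimal sketch of such an audit using only the standard library; the function name find_v1_imports and the sample source are made up for illustration, and a real audit would read your project files instead of a string.

```python
import re

# Matches `from langchain.pydantic_v1 import ...`,
# `from langchain_core.pydantic_v1 import ...`, and the plain `import` form.
V1_IMPORT = re.compile(r"^\s*(?:from|import)\s+(langchain(?:_core)?\.pydantic_v1)\b")


def find_v1_imports(source: str) -> list[tuple[int, str]]:
    """Return (line_number, stripped_line) pairs that still import the v1 shim."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        if V1_IMPORT.match(line):
            hits.append((lineno, line.strip()))
    return hits


# Hypothetical file contents used only to demonstrate the scan
sample = """\
from langchain.pydantic_v1 import BaseModel, Field
from pydantic import BaseModel
from langchain_core.pydantic_v1 import validator
"""

for lineno, line in find_v1_imports(sample):
    print(f"line {lineno}: {line}")
```

Every hit is a candidate for a plain `from pydantic import ...` replacement, although validators and config classes may need Pydantic v2 syntax changes beyond the import line.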

Modular Integration Packages

Gone are the days of installing one massive langchain package that ships every provider integration. In v0.3, integrations live in dedicated packages: langchain-openai, langchain-anthropic, langchain-google-genai, and so on. You install only what you need.

    # Install only what you need (init_chat_model lives in the langchain package)
    pip install langchain langchain-openai langchain-anthropic

Then construct models through a single entry point:

    # Universal chat model constructor
    from langchain.chat_models import init_chat_model

    # Infers the provider from the model name; the matching integration
    # package (langchain-openai, langchain-anthropic) must be installed
    openai_llm = init_chat_model("gpt-4o", temperature=0)
    claude_llm = init_chat_model("claude-sonnet-4-20250514", temperature=0)

The init_chat_model universal constructor is a small but powerful addition. It inspects the model name, resolves the provider, and returns the appropriate chat model instance. This is especially useful in multi-provider setups where you want a single code path regardless of backend.
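To make “inspects the model name, resolves the provider” concrete, here is a rough sketch of how prefix-based resolution could work. This is not LangChain’s implementation: infer_provider and the two-entry prefix table are assumptions for illustration, and the real library maintains its own, larger mapping.

```python
from typing import Optional

# Illustrative prefix table only (assumption); not the library's actual mapping
PREFIX_TO_PROVIDER = {
    "gpt-": "openai",
    "claude": "anthropic",
}


def infer_provider(model: str) -> tuple[str, Optional[str]]:
    """Return (model_name, provider), honoring an explicit 'provider:model' prefix."""
    if ":" in model:
        provider, name = model.split(":", 1)
        return name, provider
    for prefix, provider in PREFIX_TO_PROVIDER.items():
        if model.startswith(prefix):
            return model, provider
    return model, None  # unknown name: the caller has to name the provider


print(infer_provider("gpt-4o"))
print(infer_provider("anthropic:claude-sonnet-4-20250514"))
print(infer_provider("my-local-model"))
```

When a model name cannot be inferred, init_chat_model accepts an explicit model_provider argument or a "provider:model" string, which is the safer choice for less common models.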

The Death of Legacy Memory: LangGraph Checkpointers Take Over

If there’s one change that will force you to rework existing agents, it’s this: ConversationBufferMemory, ConversationSummaryMemory, ConversationTokenBufferMemory, and the rest of the legacy memory class hierarchy are now deprecated. They still import for now, but they emit deprecation warnings. Their replacement? LangGraph’s checkpointer system.

This isn’t just an API reshuffle. It’s a philosophical shift in how agent state is managed. Instead of bolting memory onto a chain as an afterthought, LangGraph treats state persistence as a first-class concern of the execution graph itself.

Short-Term Memory: Thread-Scoped Checkpointing

In LangGraph, short-term memory is managed per thread. Each conversation thread gets its own checkpoint, and state is automatically persisted after every node execution.

    from langchain_core.tools import tool
    from langchain_openai import ChatOpenAI
    from langgraph.checkpoint.memory import InMemorySaver
    from langgraph.prebuilt import create_react_agent

    # Placeholder tools so the example is self-contained; swap in real ones
    @tool
    def search_tool(query: str) -> str:
        """Search the web for a query."""
        return f"Results for: {query}"

    @tool
    def calculator_tool(a: float, b: float) -> float:
        """Add two numbers."""
        return a + b

    checkpointer = InMemorySaver()

    agent = create_react_agent(
        model=ChatOpenAI(model="gpt-4o"),
        tools=[search_tool, calculator_tool],
        checkpointer=checkpointer,
    )

    # Conversation persists within a thread
    config = {"configurable": {"thread_id": "session-abc"}}

    response = agent.invoke(
        {"messages": [{"role": "user", "content": "What's the weather in NYC?"}]},
        config=config,
    )

    # Same thread_id = automatic context recall
    response = agent.invoke(
        {"messages": [{"role": "user", "content": "What about tomorrow?"}]},
        config=config,
    )

For production, swap InMemorySaver for SqliteSaver or PostgresSaver (shipped in the separate langgraph-checkpoint-sqlite and langgraph-checkpoint-postgres packages) to get durable persistence across restarts. You also get time-travel debugging out of the box: rewind to any checkpoint and replay from there.
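If the checkpointer idea feels abstract, a toy model may help. The sketch below is not LangGraph code: ToyCheckpointer and step are hypothetical names, state is a plain dict, and rewinding simply discards later snapshots, which is a simplification of how real checkpoint history works.

```python
import copy
from collections import defaultdict


class ToyCheckpointer:
    """Keeps an append-only list of state snapshots per thread_id."""

    def __init__(self):
        self._history = defaultdict(list)

    def save(self, thread_id: str, state: dict) -> int:
        # Deep-copy so later mutations do not alter stored snapshots
        self._history[thread_id].append(copy.deepcopy(state))
        return len(self._history[thread_id]) - 1  # index of the new checkpoint

    def latest(self, thread_id: str) -> dict:
        history = self._history[thread_id]
        return copy.deepcopy(history[-1]) if history else {"messages": []}

    def rewind(self, thread_id: str, index: int) -> dict:
        # Toy "time travel": keep checkpoints up to `index` and resume from there
        self._history[thread_id] = self._history[thread_id][: index + 1]
        return self.latest(thread_id)


saver = ToyCheckpointer()


def step(thread_id: str, user_text: str) -> dict:
    """Load the thread's state, append a message, and checkpoint the result."""
    state = saver.latest(thread_id)
    state["messages"].append(user_text)
    saver.save(thread_id, state)
    return state


step("session-abc", "What's the weather in NYC?")
step("session-abc", "What about tomorrow?")
step("session-xyz", "Unrelated thread")

print(saver.latest("session-abc")["messages"])     # both messages from this thread
print(saver.rewind("session-abc", 0)["messages"])  # back to the first checkpoint
```

The point to take away is that the thread_id is the only key tying a request to its history, which is why reusing the same config gives you context recall for free.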

LangMem SDK: Long-Term Memory for AI Agents

Short-term memory handles conversation continuity within a session. But what about remembering user preferences across sessions? Or learning from past interactions to improve future responses? That’s where LangMem SDK comes in, launched in February 2025.

LangMem organizes long-term memory into three distinct types:

- Semantic Memory: Structured facts and knowledge about users. Think “User prefers TypeScript over Python” or “User works at a fintech startup.”

- Episodic Memory: Summarized records of past interactions that serve as few-shot examples or contextual references.

- Procedural Memory: Optimizations to the agent’s system prompt and behavioral patterns, refined through experience. Your agent literally gets better with use.

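    pip install langmem

    from langgraph.prebuilt import create_react_agent
    from langgraph.store.memory import InMemoryStore
    from langmem import create_manage_memory_tool, create_search_memory_tool

    # Store with an embedding index so memories can be searched by meaning
    store = InMemoryStore(
        index={"dims": 1536, "embed": "openai:text-embedding-3-small"}
    )

    # Memories are namespaced per user; {langgraph_user_id} is filled from config
    namespace = ("memories", "{langgraph_user_id}")

    agent = create_react_agent(
        "openai:gpt-4o",
        tools=[
            create_manage_memory_tool(namespace=namespace),
            create_search_memory_tool(namespace=namespace),
        ],
        store=store,
    )

    config = {"configurable": {"langgraph_user_id": "dev-jane"}}

    # The agent decides what to store as semantic memory from the conversation
    agent.invoke(
        {"messages": [{"role": "user", "content": "I mainly work with FastAPI and PostgreSQL"}]},
        config=config,
    )

    # Retrieve relevant memories via semantic search
    memories = store.search(
        ("memories", "dev-jane"),
        query="What tech stack does this user prefer?",
    )
    # Each result is an item whose .value holds the stored memory content

The semantic search capability, added to LangGraph’s long-term memory in December 2024, is the key enabler here. Instead of exact keyword matching, it finds memories by meaning. It is available in PostgresStore, InMemoryStore, and across all LangGraph Platform deployments.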
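“Finds memories by meaning” comes down to comparing embedding vectors. Here is a deliberately tiny sketch of that ranking step; the three-dimensional vectors and the memory texts are hand-written stand-ins (assumptions), where a real store would call an embedding model.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Made-up memories with toy vectors (axes: tech stack, food, travel)
memories = [
    ("User works mainly with FastAPI and PostgreSQL", [0.9, 0.1, 0.0]),
    ("User is vegetarian", [0.0, 1.0, 0.1]),
    ("User is planning a trip to Lisbon", [0.1, 0.1, 0.9]),
]


def search(query_vector: list[float], limit: int = 1) -> list[str]:
    """Return the `limit` memory texts most similar to the query vector."""
    ranked = sorted(memories, key=lambda m: cosine(query_vector, m[1]), reverse=True)
    return [text for text, _ in ranked[:limit]]


# A question about the user's tech stack would embed close to the first axis
print(search([1.0, 0.0, 0.0]))
```

Note that the query shares no keywords with the stored text; the match comes purely from vector proximity, which is the behavior the store’s index configuration enables.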

Simplified Tool Definitions and Chat Model Utilities

LangChain v0.3 streamlined how tools are defined. With Pydantic v2 integration, the @tool decorator now auto-generates schemas from type hints and annotations—no more manually specifying argument schemas.

    from typing import Annotated

    from langchain_core.tools import tool

    @tool
    def get_stock_price(
        ticker: Annotated[str, "Stock ticker symbol (e.g., AAPL)"],
        currency: Annotated[str, "Currency unit"] = "USD",
    ) -> dict:
        """Fetch real-time stock price."""
        # Hardcoded placeholder price; call a real market-data API here
        return {"ticker": ticker, "price": 150.25, "currency": currency}

    # Schema auto-generated from annotations
    print(get_stock_price.args_schema.model_json_schema())

The release also introduced a suite of chat model utilities that solve real-world pain points:

- trim_messages: Truncates message history to fit within a token limit, using strategy="last" to keep the most recent messages or strategy="first" to keep the earliest.

- filter_messages: Extracts only specific message types (e.g., system + human messages, excluding tool responses).

- merge_message_runs: Combines consecutive messages from the same role into a single message.

- Built-in rate limiter (InMemoryRateLimiter): Manages API call throttling natively, no more external rate-limiting libraries.
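Of these, merging message runs is the easiest to picture in plain Python. The sketch below is not LangChain’s merge_message_runs; merge_runs is a hypothetical stand-in that works on simple role/content dicts and joins text with a newline, which is an assumption about the separator.

```python
def merge_runs(messages: list[dict]) -> list[dict]:
    """Collapse consecutive messages that share a role into one message."""
    merged: list[dict] = []
    for msg in messages:
        if merged and merged[-1]["role"] == msg["role"]:
            # Same speaker as the previous message: append the text
            merged[-1]["content"] += "\n" + msg["content"]
        else:
            merged.append(dict(msg))  # copy so the input list is not mutated
    return merged


history = [
    {"role": "user", "content": "Hi"},
    {"role": "user", "content": "Are you there?"},
    {"role": "assistant", "content": "Yes."},
    {"role": "user", "content": "Great."},
]

for msg in merge_runs(history):
    print(msg["role"], "->", repr(msg["content"]))
```

This matters in practice because some providers reject back-to-back messages from the same role, so merging before the call avoids a class of request errors.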

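    from langchain_core.messages import (
        AIMessage,
        HumanMessage,
        SystemMessage,
        filter_messages,
        trim_messages,
    )
    from langchain_openai import ChatOpenAI

    # Sample history so the example is self-contained
    messages = [
        SystemMessage("You are a helpful assistant."),
        HumanMessage("What's the weather in NYC?"),
        AIMessage("Let me check that for you."),
    ]

    # Trim to fit context window
    trimmed = trim_messages(
        messages,
        max_tokens=4096,
        token_counter=ChatOpenAI(model="gpt-4o"),
        strategy="last",  # Keep most recent messages
    )

    # Filter to specific message types
    filtered = filter_messages(
        messages,
        include_types=["system", "human"],
    )

LangGraph as the Agent Runtime Standard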

Perhaps the most significant meta-trend in the LangChain v0.3 era is the ascent of LangGraph. Where LangChain originally centered on sequential chains, the framework has decisively pivoted to graph-based agent architectures.

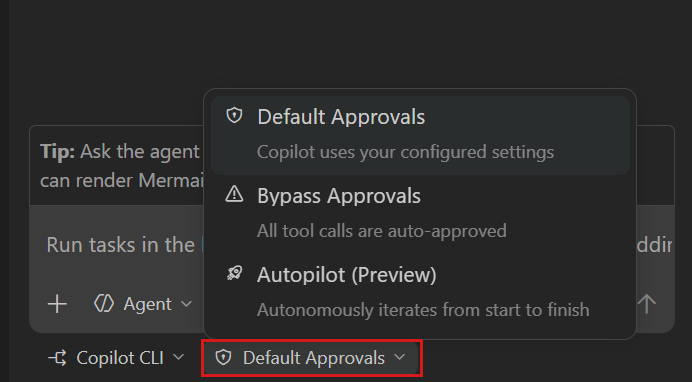

LangGraph expresses agent workflows as directed graphs where nodes perform actions and edges define routing logic. State management, checkpointing, human-in-the-loop approvals, and parallel execution are all native primitives.
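The node-and-edge model is easier to reason about once you have seen how small the core loop is. What follows is a toy executor in plain Python, not LangGraph itself: run_graph, the fake agent, and the fake tool are all hypothetical, and there is no checkpointing or parallelism here.

```python
END = "__end__"


def run_graph(nodes, edges, routers, start, state, max_steps=20):
    """Run a node, then follow a fixed edge or a router's decision, until END."""
    current = start
    for _ in range(max_steps):
        state = nodes[current](state)
        if current in routers:
            route_fn, mapping = routers[current]
            current = mapping[route_fn(state)]  # conditional edge
        else:
            current = edges[current]  # fixed edge
        if current == END:
            return state
    raise RuntimeError("graph did not reach END")


def agent(state):
    # Pretend model: request a tool once, then answer with its result
    if "tool_result" not in state:
        return {**state, "wants_tool": True}
    return {**state, "wants_tool": False, "answer": f"Result is {state['tool_result']}"}


def tools(state):
    # Pretend tool call
    return {**state, "tool_result": 2 + 2}


final = run_graph(
    nodes={"agent": agent, "tools": tools},
    edges={"tools": "agent"},
    routers={
        "agent": (
            lambda s: "continue" if s["wants_tool"] else "end",
            {"continue": "tools", "end": END},
        )
    },
    start="agent",
    state={},
)
print(final["answer"])
```

The agent node runs twice: once to request the tool and once to answer, with the router deciding each time whether to loop through the tools node or stop.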

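    from langchain_openai import ChatOpenAI
    from langgraph.checkpoint.memory import InMemorySaver
    from langgraph.graph import StateGraph, MessagesState, START, END
    from langgraph.prebuilt import ToolNode

    # search and calculator are assumed to be @tool functions defined elsewhere
    tools = [search, calculator]
    model = ChatOpenAI(model="gpt-4o").bind_tools(tools)

    def call_model(state: MessagesState):
        # Ask the model for the next step given the conversation so far
        return {"messages": [model.invoke(state["messages"])]}

    def should_use_tool(state: MessagesState):
        # Route to the tool node only if the last AI message requested a tool call
        return "continue" if state["messages"][-1].tool_calls else "end"

    workflow = StateGraph(MessagesState)
    workflow.add_node("agent", call_model)
    workflow.add_node("tools", ToolNode(tools=tools))
    workflow.add_edge(START, "agent")
    workflow.add_conditional_edges(
        "agent",
        should_use_tool,
        {"continue": "tools", "end": END},
    )
    workflow.add_edge("tools", "agent")

    app = workflow.compile(checkpointer=InMemorySaver())

Looking ahead, v1.0 anticipation is building in the community. Previews suggest a create_agent high-level abstraction, a middleware system for human-in-the-loop, conversation summarization, and PII redaction, plus structured output integrated directly into the agent’s main execution loop. The direction is clear: LangGraph is the runtime, and everything else orbits around it.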

Migration Checklist: v0.2 to LangChain v0.3

If you’re still on v0.2, here’s what you need to address:

- Python version: Upgrade to 3.9+ (3.8 is no longer supported)

- Pydantic migration: Replace all langchain.pydantic_v1 imports with native pydantic

- Memory overhaul: Replace ConversationBufferMemory and friends with LangGraph checkpointers

- Package restructuring: Install provider-specific packages (langchain-openai, langchain-anthropic, etc.)

- Deprecated API audit: Check for langchain_core.pydantic_v1, BaseTool.run, and other deprecated interfaces
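The first and fourth items on this checklist can be verified mechanically. Here is a small preflight sketch using only the standard library; python_ok and missing_packages are hypothetical helper names, and the module list is an assumption you should adjust to the providers you actually use.

```python
import sys
from importlib import util


def python_ok(version: tuple[int, int] = sys.version_info[:2]) -> bool:
    """LangChain v0.3 requires Python 3.9 or newer."""
    return version >= (3, 9)


def missing_packages(modules: list[str]) -> list[str]:
    """Return the import names that cannot be found in this environment."""
    return [name for name in modules if util.find_spec(name) is None]


# Import names use underscores, unlike the hyphenated pip package names
wanted = ["langchain_core", "langchain_openai", "langgraph"]

print("Python OK:", python_ok())
print("Missing:", missing_packages(wanted))
```

Running this in CI before the upgrade gives you a quick signal about the environment, while the import and memory changes still need a code-level review.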

The most common migration headache is Pydantic v1/v2 coexistence. If a downstream dependency still requires v1, you can use pydantic.v1 compatibility mode as a bridge—but the long-term path is upgrading all dependencies to v2.

Bottom Line

LangChain v0.3 marks the framework’s transition from “chain-first” to “agent-first.” The three takeaways that matter:

- LangGraph is the standard runtime. Stateful graph-based agents are now the default, not an advanced option.

- Memory is unified. Short-term (checkpointers) and long-term (LangMem) memory have clear, complementary roles.

- Modular by design. Install only what you need, with a consistent Pydantic v2 type system across the board.

With v1.0 on the horizon, now is the best time to get comfortable with LangGraph and LangMem. The agents you build today on v0.3 will have a smooth upgrade path to v1.0—and the developers who understand these patterns will have a significant edge in the rapidly evolving LLM application landscape.
