March 18, 2026

One socket. 288 cores. 38% less power than the dual-socket system it replaces. Intel just dropped the most significant server CPU in years at MWC 2026, and it’s built on the 18A process node that the entire company is betting its future on.

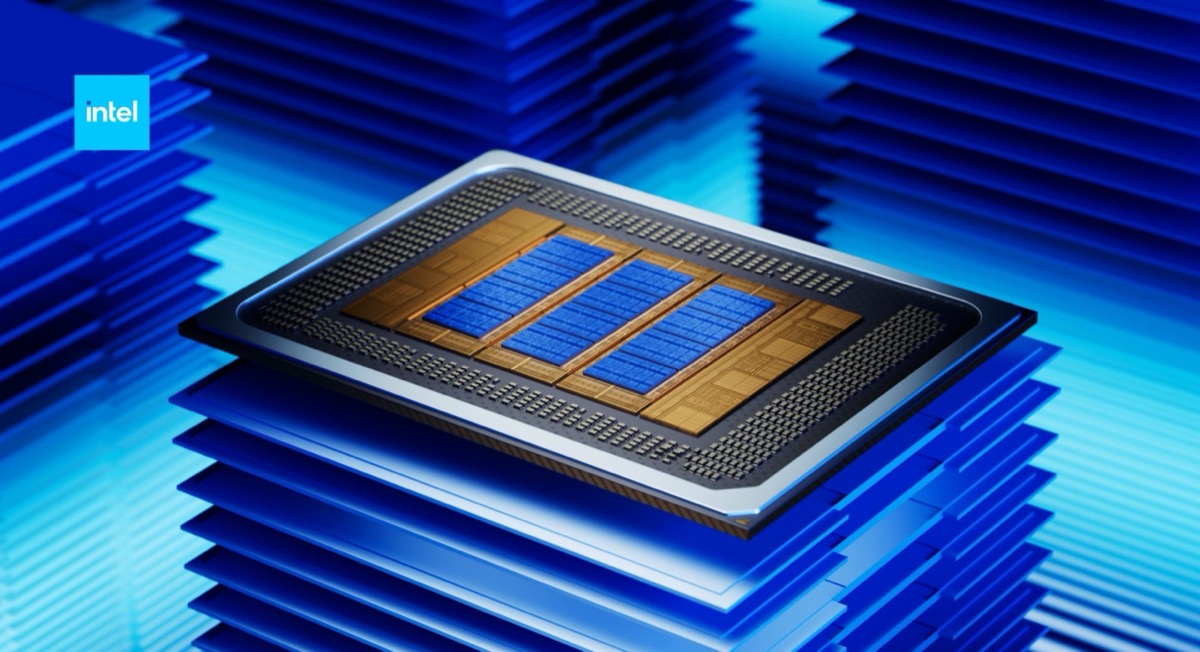

The Intel Clearwater Forest Xeon 6+ isn’t just another chip refresh. It’s the first high-volume data center CPU manufactured on Intel 18A, the 1.8nm-class process featuring RibbonFET gate-all-around transistors and PowerVia backside power delivery. If this chip succeeds, Intel’s foundry ambitions stay alive. If it doesn’t, the pressure from AMD’s EPYC Turin and Arm-based server alternatives only intensifies.

Intel Clearwater Forest Xeon 6+ Architecture: A Multi-Chip Masterpiece

Clearwater Forest is Intel’s most ambitious chiplet design to date. The flagship 288-core configuration stacks 12 compute chiplets on top of three active base tiles, connected by two I/O tiles. Each compute chiplet houses 24 Darkmont E-cores organized into six modules of four cores each. Here’s the full breakdown:

- Compute Tiles (12): Intel 18A process — 24 Darkmont E-cores per tile, 288 total

- Base Tiles (3): Intel 3 process — active silicon interposer with power delivery and cache

- I/O Tiles (2): Intel 7 process — PCIe 5.0, CXL 2.0, DDR5 controllers, 8 accelerators each
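As a quick check on the tile arithmetic above, here is a minimal Python sketch. The constant names are illustrative, and the even split of compute tiles across base tiles is an assumption the article does not state.

```python
# Tile counts as described in the article; constant names are illustrative.
COMPUTE_TILES = 12
MODULES_PER_TILE = 6
CORES_PER_MODULE = 4
BASE_TILES = 3
IO_TILES = 2
ACCELERATORS_PER_IO_TILE = 8

cores_per_tile = MODULES_PER_TILE * CORES_PER_MODULE
total_cores = COMPUTE_TILES * cores_per_tile
total_accelerators = IO_TILES * ACCELERATORS_PER_IO_TILE

# Assumption: compute tiles are spread evenly over the base tiles.
compute_tiles_per_base_tile = COMPUTE_TILES // BASE_TILES

print(f"{cores_per_tile} cores per tile, {total_cores} cores per socket")
print(f"{total_accelerators} accelerators per socket")
print(f"{compute_tiles_per_base_tile} compute tiles per base tile (assumed even split)")
```

The totals line up with the headline figures: 288 cores and 16 accelerators per socket.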

The magic glue holding this together is Foveros Direct 3D packaging — Intel’s most advanced 3D stacking technology with 9-micrometer bump pitch and copper-to-copper hybrid bonding. This isn’t the older thermocompression Foveros from Lakefield. The direct copper bonds deliver dramatically lower resistance, enabling higher bandwidth between compute and base tiles at lower power. Lateral tile-to-tile connections use Intel’s EMIB (Embedded Multi-die Interconnect Bridge) 2.5D technology.

288 Darkmont E-Cores: Why Efficiency Cores Dominate the Data Center

The Darkmont microarchitecture powering Clearwater Forest delivers a 17% IPC uplift over the Crestmont cores used in Sierra Forest. That might sound modest, but when you multiply it across 288 cores running cloud-native, throughput-optimized workloads, the aggregate improvement is massive.

Darkmont features a wider 3×3 decode engine (nine-wide), deeper out-of-order execution windows, and enhanced branch prediction. Each four-core cluster shares 4 MB of L2 cache, totaling 288 MB of L2 across the entire package. The Last Level Cache (LLC) reaches a staggering 576 MB, bringing the combined on-package cache to 864 MB — nearly a gigabyte of cache on a single socket.
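The cache totals follow directly from the cluster layout. A short sketch of the arithmetic, using only the figures quoted above (variable names are illustrative):

```python
# Cache figures as quoted in the article.
CORES = 288
CORES_PER_CLUSTER = 4
L2_PER_CLUSTER_MB = 4
LLC_MB = 576

clusters = CORES // CORES_PER_CLUSTER
l2_total_mb = clusters * L2_PER_CLUSTER_MB
total_cache_mb = l2_total_mb + LLC_MB

# Per-core shares, plus how close the total comes to 1 GiB (1024 MB).
l2_per_core_mb = l2_total_mb / CORES
llc_per_core_mb = LLC_MB / CORES
fraction_of_gib = total_cache_mb / 1024

print(f"{clusters} clusters, {l2_total_mb} MB L2, {total_cache_mb} MB total")
print(f"{l2_per_core_mb:.1f} MB L2 and {llc_per_core_mb:.1f} MB LLC per core")
print(f"{fraction_of_gib:.0%} of a GiB")
```

That works out to 1 MB of L2 and 2 MB of LLC per core, with the combined 864 MB landing at roughly 84% of a gibibyte.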

Unlike AMD’s approach with EPYC Turin, which uses Zen 5C cores with simultaneous multithreading (SMT) to hit 192 cores / 384 threads, Intel’s Darkmont E-cores have no SMT. Each core handles one thread, so 288 cores means 288 threads. For throughput-heavy workloads like web serving, microservices, and 5G/6G telecom infrastructure, raw core count often matters more than thread count.

Memory, I/O, and the Numbers That Matter

Clearwater Forest doesn’t just impress on core count. The platform specifications read like a wish list for hyperscale data center architects:

- Memory: 12-channel DDR5-8000 MT/s — the highest memory bandwidth in Intel’s server lineup

- PCIe: 96 PCIe Gen 5.0 lanes (48 per I/O tile)

- CXL: 64 CXL 2.0 lanes for memory expansion and pooling

- UPI: 192 UPI 2.0 lanes for dual-socket interconnect

- TDP: 450W for the 288-core flagship (300–500W range across SKUs)

- Accelerators: 16 per socket — Intel QAT, DLB, DSA, IAA

- Security: Intel SGX and TDX for confidential computing
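To put the memory line in perspective, the sketch below estimates theoretical peak bandwidth. It assumes the standard 64-bit (8-byte) data width per DDR5 channel, which the article does not spell out. Sustained bandwidth in real workloads will be lower.

```python
# Memory configuration from the spec list above.
CHANNELS = 12
TRANSFER_RATE_MT_S = 8000   # mega-transfers per second
CORES = 288

# Assumption: each DDR5 channel moves 8 bytes of data per transfer
# (64-bit data width, ECC bits excluded).
BYTES_PER_TRANSFER = 8

per_channel_gb_s = TRANSFER_RATE_MT_S * BYTES_PER_TRANSFER / 1000
peak_gb_s = per_channel_gb_s * CHANNELS
per_core_gb_s = peak_gb_s / CORES

print(f"{per_channel_gb_s:.0f} GB/s per channel")
print(f"{peak_gb_s:.0f} GB/s theoretical peak per socket")
print(f"{per_core_gb_s:.2f} GB/s per core if shared evenly")
```

Under that assumption the socket peaks at 768 GB/s, or a little under 2.7 GB/s per core when all 288 cores compete for memory at once.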

For context, the previous 288-core Sierra Forest flagship required a 500W TDP. Clearwater Forest’s 288-core SKU drops to 450W, a 10% TDP reduction, while delivering 17% more IPC per core. That’s the power of moving from Intel 3 to 18A.
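The generational comparison reduces to a few lines of arithmetic. This is a rough sketch only: it assumes both parts run at their rated TDP and at equal clocks, neither of which the article confirms, so the combined figure is an estimate rather than a measured result.

```python
def percent_reduction(old: float, new: float) -> float:
    """Percentage by which `new` is lower than `old`."""
    return 100 * (old - new) / old

# Figures quoted in the article.
SIERRA_FOREST_TDP_W = 500
CLEARWATER_FOREST_TDP_W = 450
CORES = 288
IPC_UPLIFT = 0.17

tdp_reduction_pct = percent_reduction(SIERRA_FOREST_TDP_W, CLEARWATER_FOREST_TDP_W)
watts_per_core_old = SIERRA_FOREST_TDP_W / CORES
watts_per_core_new = CLEARWATER_FOREST_TDP_W / CORES

# Assumption: equal clock speeds and both chips drawing their full TDP.
relative_power = CLEARWATER_FOREST_TDP_W / SIERRA_FOREST_TDP_W
ipc_per_watt_gain_pct = 100 * ((1 + IPC_UPLIFT) / relative_power - 1)

print(f"TDP reduction: {tdp_reduction_pct:.1f}%")
print(f"Watts per core: {watts_per_core_old:.2f} -> {watts_per_core_new:.2f}")
print(f"Estimated IPC-per-watt gain: {ipc_per_watt_gain_pct:.0f}%")
```

With these inputs, the TDP drop is 10% and the per-core budget falls from about 1.74W to 1.56W.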

Foveros Direct 3D and EMIB: The Packaging Technology Advantage

Intel’s advanced packaging story is arguably the most underappreciated aspect of Clearwater Forest. While competitors rely primarily on organic substrate interposers or silicon bridges, Intel combines two cutting-edge approaches:

Foveros Direct 3D uses copper-to-copper hybrid bonding at 9µm bump pitch to stack compute chiplets vertically on base tiles. This eliminates the solder microbumps used in previous Foveros generations, reducing interconnect resistance by over 10x and enabling significantly higher power efficiency between stacked dies.

EMIB (Embedded Multi-die Interconnect Bridge) connects tiles laterally in a 2.5D configuration. Unlike TSMC’s CoWoS, which requires a full silicon interposer, EMIB embeds small silicon bridges only where needed, reducing cost and package complexity. Clearwater Forest is the first production CPU to combine both Foveros Direct 3D and EMIB in a single package — a technology statement that no competitor currently matches.

Performance Claims: 38% Less Power, 60% Better Perf/Watt

Intel’s headline performance numbers come from real-world validation by Ericsson, one of the world’s largest telecom infrastructure providers. Their testing compared a single 288-core Clearwater Forest socket against a dual-socket Sierra Forest system (two 144-core Xeon 6780E processors, 288 cores in total):

- 38% reduction in runtime rack power consumption

- 60%+ improvement in performance per watt

- 30% higher overall performance from a single socket vs. dual socket

These numbers are remarkable because they represent a socket consolidation story. One Clearwater Forest socket replaces two Sierra Forest sockets while using less power and delivering more performance. For data center operators paying millions in electricity bills, that math changes procurement decisions immediately.
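A rough consistency check on the three figures is sketched below. The article does not say whether they come from the same measurement, so combining them this way is an assumption, and the result should be read as a sanity check, not a benchmark.

```python
# Headline figures from the Ericsson comparison.
POWER_REDUCTION = 0.38      # 38% lower rack power
PERF_GAIN = 0.30            # 30% higher performance
CLAIMED_PERF_PER_WATT_GAIN = 0.60

relative_power = 1 - POWER_REDUCTION
relative_perf = 1 + PERF_GAIN

# Assumption: both figures describe the same run on the same workload.
implied_perf_per_watt = relative_perf / relative_power
implied_gain = implied_perf_per_watt - 1
claim_is_within_implied = implied_gain >= CLAIMED_PERF_PER_WATT_GAIN

print(f"Implied perf/watt ratio: {implied_perf_per_watt:.2f}x")
print(f"Implied gain: {implied_gain:.0%}")
print(f"Covers the claimed 60%+: {claim_is_within_implied}")
```

Combined naively, the power and performance figures imply roughly a 2.1x perf-per-watt ratio, which sits comfortably above the quoted "60%+" floor.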

The 20% power reduction per transistor enabled by Intel 18A’s RibbonFET technology is a key contributor. Gate-all-around transistors wrap the gate electrode around all four sides of the channel, providing better electrostatic control and reducing leakage current compared to FinFET designs. Combined with PowerVia backside power delivery, which separates power routing from signal routing, Intel achieves both higher transistor density and lower operating voltage.

Intel Clearwater Forest Xeon 6+ vs AMD EPYC Turin: Different Philosophies

The server CPU market in 2026 presents two fundamentally different architectural approaches:

AMD EPYC 9965 Turin: 192 Zen 5C cores with SMT (384 threads), full AVX-512 support, built from multiple compute chiplets (CCDs) on TSMC 3nm. AMD’s approach favors wider, more capable cores with thread-level parallelism. The Zen 5 architecture excels in workloads that benefit from SMT and wide SIMD, such as scientific computing, HPC, and traditional enterprise applications.

Intel Clearwater Forest: 288 Darkmont E-cores (288 threads, no SMT), 16 on-die accelerators, Foveros Direct 3D packaging. Intel’s approach maximizes core density for throughput-per-watt, targeting cloud-native microservices, CDN, web serving, and telecom workloads where independent task parallelism dominates.

Neither approach is universally superior. AMD leads in per-core performance and workloads needing AVX-512 heavy lifting. Intel counters with 50% more cores, lower power per core, and a richer set of on-die accelerators (QAT for encryption, DSA for data movement, IAA for analytics). The right choice depends entirely on the workload profile.
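The core-versus-thread trade-off can be made concrete with a small sketch. The `ServerCpu` class is hypothetical and exists only for this illustration. The core and thread counts are the ones quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerCpu:
    """Hypothetical container for the counts quoted in the article."""
    name: str
    cores: int
    threads_per_core: int

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core


turin = ServerCpu("AMD EPYC 9965", cores=192, threads_per_core=2)
clearwater = ServerCpu("Intel Clearwater Forest", cores=288, threads_per_core=1)

# Intel leads on physical cores; AMD leads on hardware threads.
core_advantage = clearwater.cores / turin.cores - 1
thread_advantage = turin.threads / clearwater.threads - 1

for cpu in (turin, clearwater):
    print(f"{cpu.name}: {cpu.cores} cores, {cpu.threads} threads")
print(f"Intel core advantage: {core_advantage:.0%}")
print(f"AMD thread advantage: {thread_advantage:.0%}")
```

So Intel's 50% lead in physical cores coexists with AMD's roughly 33% lead in hardware threads, which is why the workload profile decides the comparison.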

Target Markets: From 5G to Edge AI

Intel is explicitly positioning Clearwater Forest for the network-to-cloud continuum. Key target workloads include:

- 5G/6G RAN Infrastructure: vRAN Boost enables inline acceleration of telecom workloads, reducing the need for external accelerator cards

- Edge AI Inference: High core density enables running hundreds of concurrent AI inference tasks at the network edge

- Cloud-Native Microservices: Each core can independently handle a container or VM, maximizing density per socket

- CDN and Web Serving: Throughput-optimized workloads that scale linearly with core count

- High-Density Virtualization: Dual-socket systems reach 576 cores, enabling hundreds of VMs per server

The Ericsson partnership highlights telecom as the initial beachhead. As mobile operators build out 5G standalone networks and begin early 6G research, the demand for power-efficient, high-density compute at cell towers and regional data centers is accelerating. Clearwater Forest’s ability to replace dual-socket deployments with single-socket solutions directly addresses the space and power constraints of edge installations.

What This Means for Intel’s Foundry Ambitions

Clearwater Forest isn’t just a product launch — it’s a proof of concept for Intel 18A, the process node that Intel Foundry needs to attract external customers. If 18A delivers on its efficiency and density promises in a complex, high-volume server product, it validates the node for potential customers like Qualcomm, MediaTek, and others reportedly evaluating Intel’s foundry services.

The combination of RibbonFET, PowerVia, Foveros Direct 3D, and EMIB in a single production chip demonstrates manufacturing capability that goes beyond what any other foundry currently offers in an integrated package. TSMC may lead in raw transistor density, but Intel’s advanced packaging portfolio — particularly the 3D stacking with copper hybrid bonding — represents a differentiated offering.

The timeline matters here. Intel Diamond Rapids, the performance-core (P-core) counterpart built on the same 18A process as Clearwater Forest, is expected to follow in 2027 with Panther Cove cores targeting HPC and AI training workloads. Together, Clearwater Forest and Diamond Rapids will give Intel a complete 18A server portfolio covering both efficiency-optimized and performance-optimized segments.

For hyperscalers like Microsoft Azure, Google Cloud, and AWS, the Intel Clearwater Forest Xeon 6+ socket consolidation story is particularly compelling. Reducing dual-socket deployments to single-socket cuts not just power consumption but also motherboard cost, cooling requirements, and rack density constraints. When you’re deploying hundreds of thousands of servers, even small per-unit savings compound into massive operational budget reductions.

Systems featuring Clearwater Forest are expected to ship in H2 2026. For data center operators evaluating their next infrastructure refresh, the combination of 288 cores, 864 MB of on-package cache, 38% power reduction, and Intel’s broadest accelerator suite makes the Xeon 6+ a compelling option that demands serious evaluation alongside AMD’s EPYC lineup.
