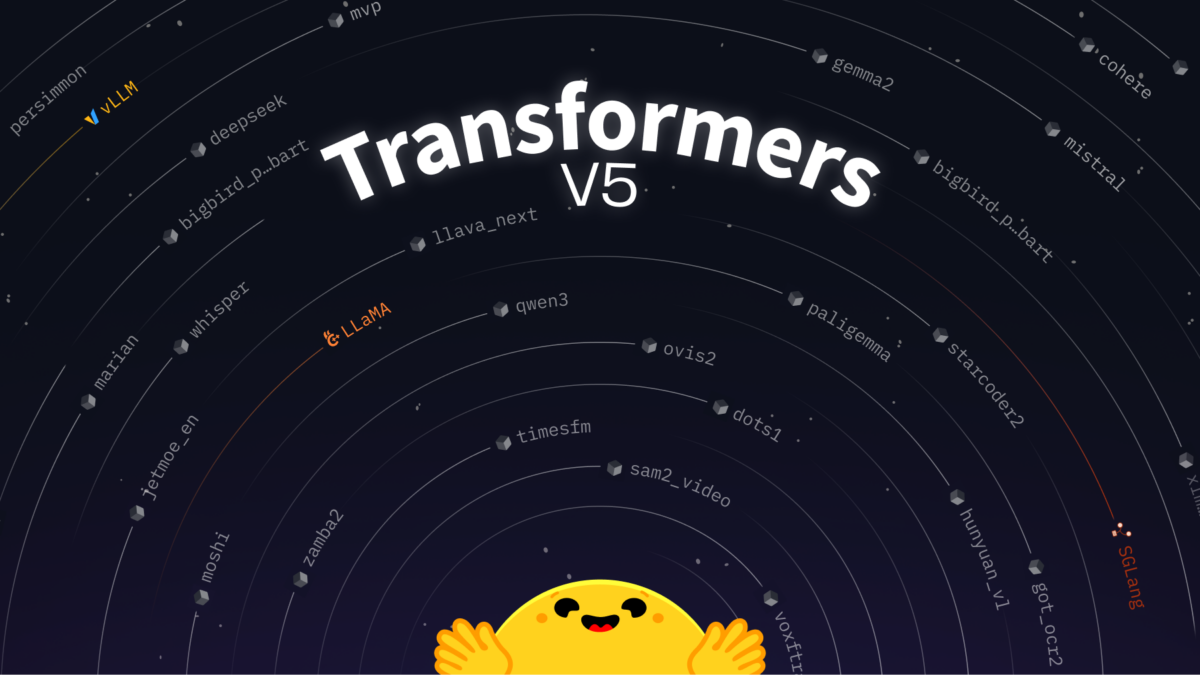

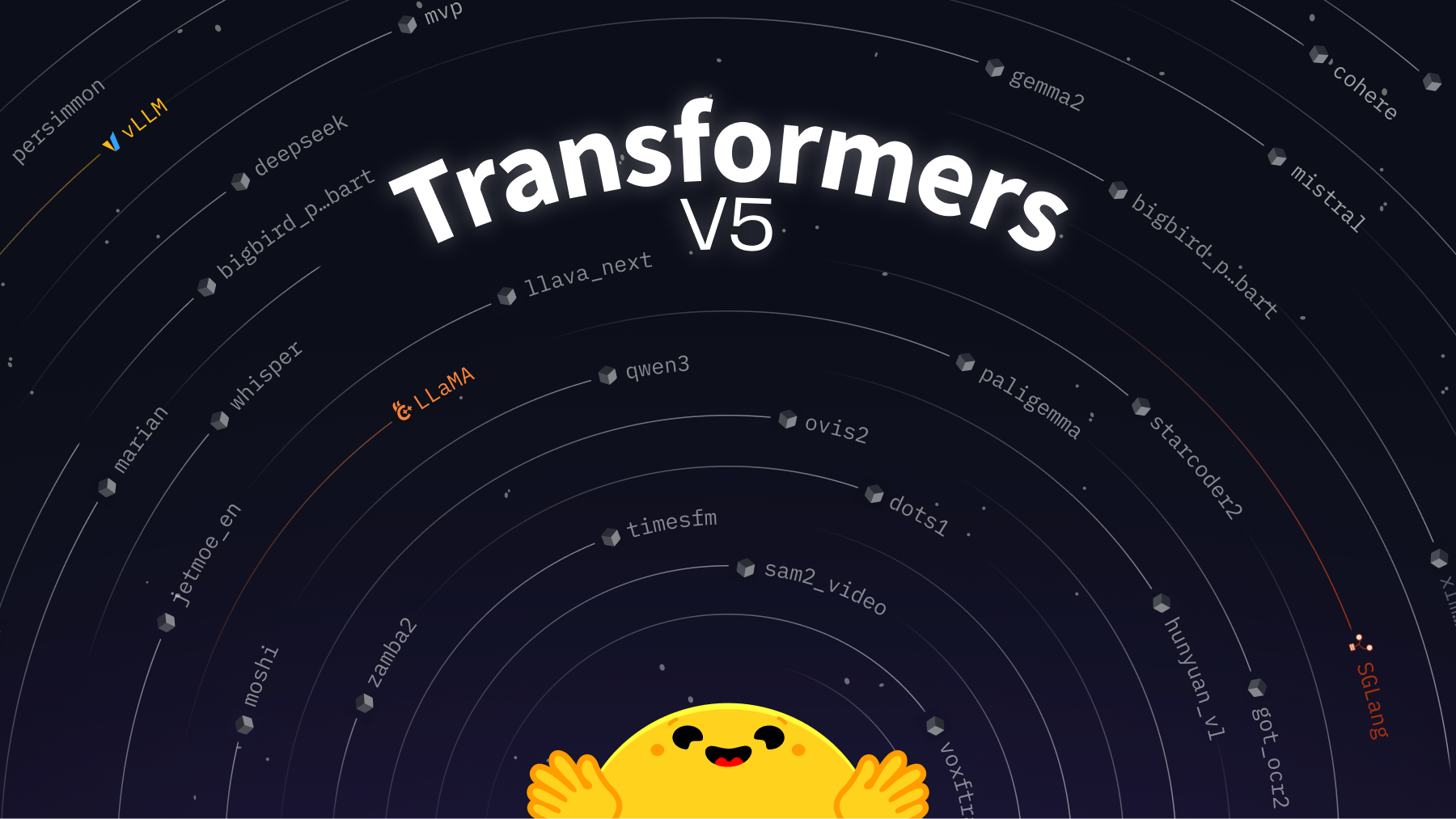

September 18, 2025

On September 11th, a single GitHub issue sent ripples through the entire AI open-source ecosystem. “Welcome v5” was the official starting gun for Hugging Face Transformers v5 development. When a library with over 3 million daily installs, 400+ model architectures, and 750,000+ checkpoints announces a major version bump, this isn’t just an update. It’s an infrastructure-level paradigm shift for machine learning.

And the Hugging Face Transformers v5 announcement doesn’t pull any punches. TensorFlow and JAX backends — gone. The entire tokenizer system — redesigned from scratch. A new AttentionInterface abstraction for modular model definitions. A WeightConverter API for standardized checkpoint management. SafeTensors as the mandatory default format. Let’s break down what all of this means, why these decisions were made, and exactly how it’s going to affect your projects.

The Biggest Call: Hugging Face Transformers v5 Goes PyTorch-Only

Multi-framework support has been a defining feature of the Transformers library since its early days. The promise was simple: write once, run on PyTorch, TensorFlow, or JAX via TFAutoModel and FlaxAutoModel. It was an ambitious vision. And now, with v5, Hugging Face is walking away from it entirely.

The reasoning is pragmatic, not ideological. The overwhelming majority of the 750,000+ checkpoints on Hugging Face Hub are PyTorch-native. The three new architectures added to the library every week almost always start as PyTorch implementations. Maintaining TF/JAX parity meant tripling the code for every architecture, creating a massive maintenance burden that directly slowed down the pace of new model support. With inference engines like vLLM and SGLang now adopting Transformers as their backend, the calculus shifted: concentrating on PyTorch benefits the broadest possible user base.

For teams currently using TensorFlow or JAX with Transformers, this is a significant change that demands planning. The good news is that a dedicated v4 maintenance branch will be created, so you won’t be forced to migrate overnight. But the writing is on the wall — if you’re building new ML pipelines in 2025, PyTorch is now the unambiguous default for the Transformers ecosystem. Projects that have been straddling multiple frameworks will need to commit, and the sooner that decision happens, the smoother the transition will be.

This move also has broader implications for the ecosystem beyond Transformers itself. As Hugging Face outlined in their blog post on standardizing model definitions, Transformers has evolved from a model zoo into a central pivot point for the entire ML ecosystem. GGUF interoperability, MLX compatibility, integration with inference frameworks — all of these become easier when the library can focus engineering resources on a single backend rather than spreading them across three.

The Tokenizer Overhaul: No More Fast vs. Slow

If you’ve worked with Transformers for any length of time, you’ve probably encountered the tokenizer confusion. BertTokenizer versus BertTokenizerFast. The use_fast=True parameter. SentencePiece-based tokenizers that behave differently from Rust-based ones. Two parallel implementations for the same model, sometimes with subtle behavioral differences. It’s been one of the library’s most consistent sources of developer friction.

Transformers v5 addresses this head-on with a completely redesigned tokenizer architecture based on explicit backends. Instead of the ambiguous Fast/Slow distinction, v5 introduces clearly labeled backend classes: TokenizersBackend (Rust-based tokenizers library), SentencePieceBackend, PythonBackend (pure Python fallback), and MistralCommonBackend (for Mistral-family models). The underlying implementation is no longer hidden behind a boolean flag — it’s architecturally transparent.

The breaking changes here are real. According to the official migration guide, encode_plus is being deprecated in favor of the standard __call__ method. apply_chat_template will return BatchEncoding objects instead of raw lists. The special_tokens_map.json file gets merged into tokenizer_config.json, simplifying the file structure. If you have custom tokenizer code, wrapper classes, or rely on encode_plus specifically, you’ll need to update your implementations.
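As a rough sketch of the encode_plus change: in v4, calling the tokenizer object directly generally accepts the same arguments as encode_plus, so the update is often a mechanical rename. To keep the snippet runnable without downloading a model, it uses a hypothetical `StubTokenizer` that only mimics the call shape; the `encode` helper is likewise an illustrative name, not part of the library.

```python
class StubTokenizer:
    """Hypothetical stand-in for a real tokenizer: splits on whitespace."""

    def __init__(self):
        self.vocab = {}

    def __call__(self, text, max_length=None, truncation=False):
        # Assign each new word the next free integer id.
        ids = [self.vocab.setdefault(w, len(self.vocab)) for w in text.split()]
        if truncation and max_length is not None:
            ids = ids[:max_length]
        return {"input_ids": ids, "attention_mask": [1] * len(ids)}

    def encode_plus(self, text, **kwargs):
        # Legacy entry point, kept only so the "before" call below runs.
        return self(text, **kwargs)


def encode(tokenizer, text, **kwargs):
    """Call the tokenizer directly instead of going through encode_plus."""
    return tokenizer(text, **kwargs)


tok = StubTokenizer()
# Old style: explicit encode_plus call.
before = tok.encode_plus("hello v5 hello", truncation=True, max_length=2)
# New style: the tokenizer object is itself the entry point.
after = encode(tok, "hello v5 hello", truncation=True, max_length=2)
```

With a real tokenizer you would pass the object returned by `AutoTokenizer.from_pretrained(...)` in place of the stub. Routing calls through one helper also gives you a single place to adapt if return types change.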

While the migration effort is non-trivial for projects with deep tokenizer customizations, the end result is a much cleaner mental model. One tokenizer class per model, one clear backend, no more guessing about behavior differences between Fast and Slow variants. Newcomers to the library will no longer need to understand an accidental historical artifact just to tokenize text properly. For the long-term health of the ecosystem and for developer onboarding, this is the right call.

Modular Model Architecture and the AttentionInterface Abstraction

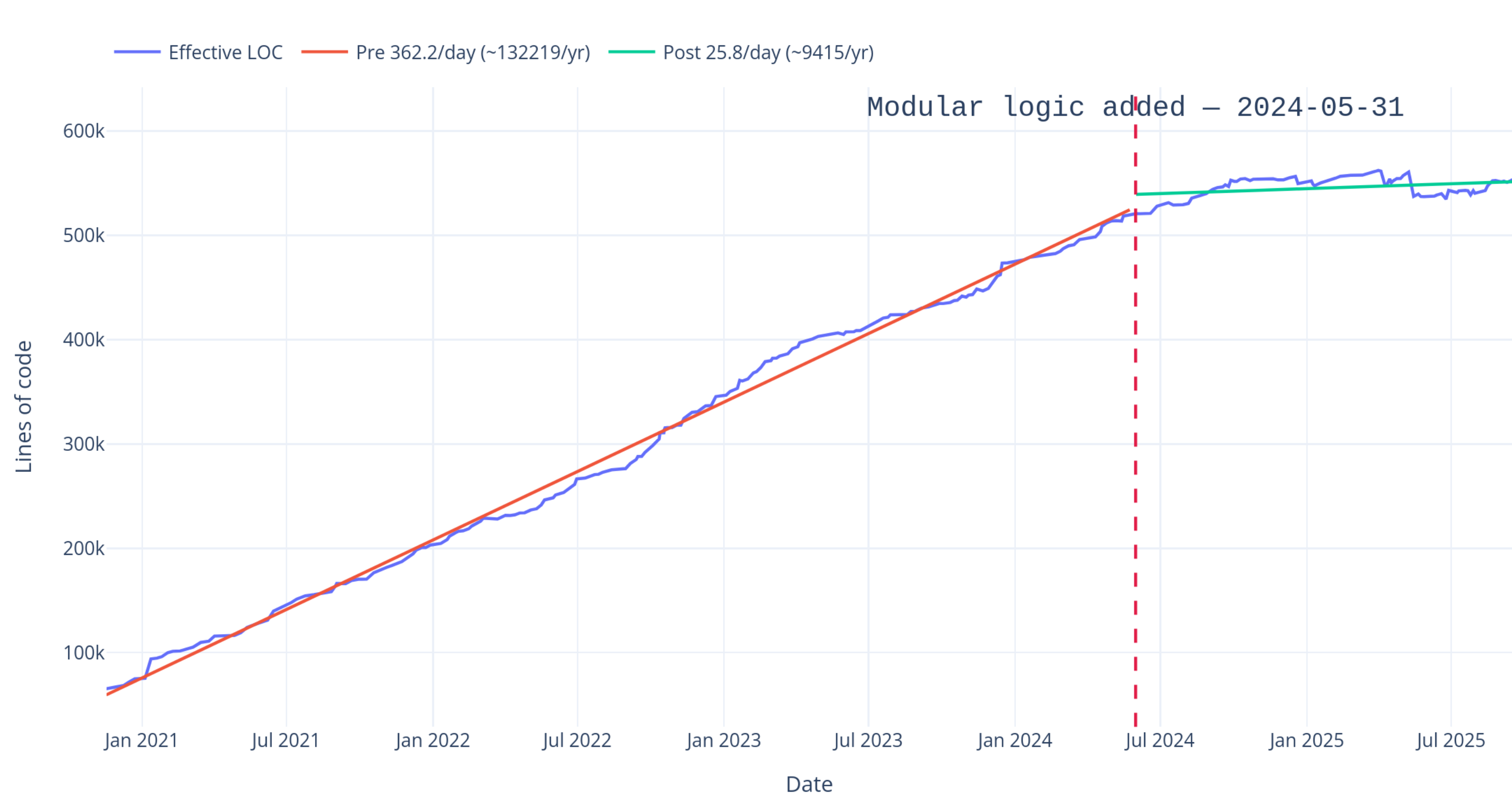

Here’s where things get particularly interesting for model developers and inference engineers. If you’ve ever looked at the source code of a Transformers model implementation, you’ll notice a pattern: every architecture has its own attention implementation. SDPA (Scaled Dot-Product Attention), Flash Attention, vanilla attention — each copied and slightly modified across hundreds of model files. With 400+ architectures, this copy-paste approach had become a maintenance nightmare.

The v5 solution is the AttentionInterface — a standardized abstraction layer that all models implement. Instead of each model defining its own attention variants, models now plug into a common interface. When a new attention algorithm drops (say, Flash Attention 3 or some future innovation), updating the interface once propagates the optimization to every supported model automatically.
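The idea can be illustrated with a toy registry in plain Python. This is a conceptual sketch only: `ATTENTION_FUNCTIONS`, `register_attention`, and `ToyLayer` are made-up names and do not reflect the actual Transformers API. It shows two interchangeable attention implementations selected by name, so a layer never hard-codes its own variant.

```python
import math

# Toy registry; names are illustrative and not the Transformers API.
ATTENTION_FUNCTIONS = {}


def register_attention(name):
    """Decorator that stores an attention function under a lookup name."""
    def decorator(fn):
        ATTENTION_FUNCTIONS[name] = fn
        return fn
    return decorator


@register_attention("eager")
def eager_attention(query, keys, values):
    """Scaled dot-product attention for one query over lists of keys/values."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    dim = len(values[0])
    return [sum(e / total * v[i] for e, v in zip(exps, values)) for i in range(dim)]


@register_attention("streaming")
def streaming_attention(query, keys, values):
    """Same result in a single pass, using a running max (online softmax)."""
    scale = 1.0 / math.sqrt(len(query))
    running_max, denom = float("-inf"), 0.0
    acc = [0.0] * len(values[0])
    for key, value in zip(keys, values):
        score = scale * sum(q * k for q, k in zip(query, key))
        new_max = max(running_max, score)
        correction = math.exp(running_max - new_max)  # rescale earlier terms
        weight = math.exp(score - new_max)
        denom = denom * correction + weight
        acc = [a * correction + weight * v for a, v in zip(acc, value)]
        running_max = new_max
    return [a / denom for a in acc]


class ToyLayer:
    """A layer that looks up its attention function instead of defining one."""

    def __init__(self, attn_implementation="eager"):
        self.attn = ATTENTION_FUNCTIONS[attn_implementation]

    def forward(self, query, keys, values):
        return self.attn(query, keys, values)


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
outputs = {name: ToyLayer(name).forward(query, keys, values)
           for name in ATTENTION_FUNCTIONS}
```

Registering a third implementation would make it available to every `ToyLayer` without touching the layer code, which is the property the shared interface is meant to provide.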

The practical implications are significant across several dimensions. Researchers implementing custom architectures can write less boilerplate code while getting access to more attention strategies out of the box. Inference engineers can apply optimizations at the interface level rather than patching individual model implementations. And inference frameworks that use Transformers as a backend — vLLM, SGLang, and others — get a consistent, predictable API to build against.

The Hugging Face v5 blog post describes this as “simple model definitions powering the AI ecosystem,” and that framing is accurate. By reducing the amount of code each model needs to define, the library becomes more maintainable, more consistent, and more amenable to ecosystem-wide optimizations. This is particularly important as the Transformers library transitions from being primarily a training/fine-tuning tool to also being a core inference backend.

WeightConverter API and SafeTensors as the Default

Checkpoint management is another area getting a major upgrade. The new WeightConverter API standardizes the process of converting between different checkpoint formats. Converting from a research codebase’s original checkpoints to Transformers format, exporting to GGUF for local inference, or transforming weight layouts — all of these operations now go through a unified interface rather than ad-hoc conversion scripts.

More importantly, SafeTensors becomes the mandatory default format in v5. The security implications alone make this worthwhile. Traditional pickle-based .bin checkpoint files can execute arbitrary code on load — a well-documented security vulnerability that has been a persistent concern in the ML community. Malicious model files disguised as legitimate checkpoints have been discovered on public hubs in the past. SafeTensors eliminates this entire attack vector by design, since it only stores tensor data and metadata without any executable code paths. In v5, loading non-SafeTensors checkpoints will require an explicit opt-in, making secure-by-default the standard rather than the exception.
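To make the security argument concrete, here is a small standard-library sketch. The first part shows that unpickling can invoke an arbitrary callable (a harmless `eval` here). The second part writes and reads a file in the SafeTensors layout (an 8-byte little-endian header length, a JSON header, then raw bytes) to show that loading involves only JSON parsing and byte slicing. The helper names `write_tensor_file` and `read_tensor_file` are illustrative and are not the safetensors library API.

```python
import json
import pickle
import struct


class Payload:
    """Any object can tell pickle to call a function at load time."""

    def __reduce__(self):
        # Harmless here, but the callable could be anything importable.
        return (eval, ("6 * 7",))


# Loading runs eval() and returns its result rather than a Payload object.
pickled_result = pickle.loads(pickle.dumps(Payload()))


def write_tensor_file(tensors):
    """Serialize {name: (dtype, shape, raw_bytes)} in the SafeTensors layout."""
    header, buffer = {}, b""
    for name, (dtype, shape, raw) in tensors.items():
        header[name] = {
            "dtype": dtype,
            "shape": shape,
            "data_offsets": [len(buffer), len(buffer) + len(raw)],
        }
        buffer += raw
    header_bytes = json.dumps(header).encode("utf-8")
    return struct.pack("<Q", len(header_bytes)) + header_bytes + buffer


def read_tensor_file(blob):
    """Parse the JSON header and slice the byte buffer; nothing is executed."""
    (header_len,) = struct.unpack("<Q", blob[:8])
    header = json.loads(blob[8:8 + header_len])
    data = blob[8 + header_len:]
    return {
        name: (meta["dtype"], meta["shape"], data[slice(*meta["data_offsets"])])
        for name, meta in header.items()
        if name != "__metadata__"
    }


weights = struct.pack("<2f", 0.5, -1.5)
blob = write_tensor_file({"linear.weight": ("F32", [2], weights)})
loaded = read_tensor_file(blob)
```

In practice you would use the safetensors library itself for conversion; the point of the sketch is only that the format has no mechanism for embedding executable code.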

Quantization is also being elevated to a first-class citizen in v5. Rather than being an afterthought bolted on through separate libraries, quantization support is being built directly into the core library architecture. This means more consistent quantization behavior across models, better integration with inference optimization pipelines, and lower barriers to deploying efficient models in production. For teams running inference at scale, this alone could be worth the migration effort.

The Migration Checklist: What to Do Right Now

While v5 is still in active development — the main branch just switched to v5 development this week — it’s not too early to start preparing. Based on the official migration guide and the announced changes, here’s what you should be checking right now.

- Audit TF/JAX dependencies: Search your codebase for `TFAutoModel`, `FlaxAutoModel`, and any TensorFlow/JAX-specific Transformers imports. Start planning the PyTorch migration path for each usage.
- Review tokenizer API usage: Check for `encode_plus` calls, custom tokenizer subclasses, and any code that depends on the Fast/Slow tokenizer distinction. The new backend-based architecture will require updates.
- Convert checkpoints to SafeTensors: If you’re storing or distributing pickle-based `.bin` checkpoints, start converting them now. The `safetensors` library already supports this.
- Check attention customizations: If you’ve implemented custom attention mechanisms by modifying model source code, the new `AttentionInterface` will likely require restructuring those modifications.
- Prepare CI/CD testing: Set up a parallel test branch with `transformers>=5.0` pinning so you can test compatibility as soon as the RC drops.
- Pin v4 if needed: For production systems that can’t risk breaking changes, add `transformers<5.0` to your requirements now and plan the upgrade on your own timeline.
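The first few checklist items amount to a text search, which can be sketched with the standard library. The patterns below are assumptions drawn from the names mentioned in this article (`TFAutoModel`, `FlaxAutoModel`, `encode_plus`, `use_fast`, `.bin` files); treat them as a starting point and expect some false positives.

```python
import re
from pathlib import Path

# Patterns taken from the checklist; extend them for your own codebase.
PATTERNS = {
    "tf_or_flax_model": re.compile(r"\b(?:TF|Flax)AutoModel\w*"),
    "encode_plus": re.compile(r"\.encode_plus\s*\("),
    "use_fast_flag": re.compile(r"\buse_fast\s*="),
    "pickle_checkpoint": re.compile(r"\w+\.bin\b"),
}


def scan_source(text):
    """Return (line_number, label, line) for every match in one file's text."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((number, label, line.strip()))
    return hits


def scan_tree(root):
    """Scan every .py file under root; unreadable files are skipped."""
    report = {}
    for path in Path(root).rglob("*.py"):
        try:
            hits = scan_source(path.read_text(encoding="utf-8"))
        except (OSError, UnicodeDecodeError):
            continue
        if hits:
            report[str(path)] = hits
    return report


sample = '''from transformers import TFAutoModel, AutoTokenizer
tok = AutoTokenizer.from_pretrained("bert-base-uncased", use_fast=True)
enc = tok.encode_plus("hello")
'''
sample_hits = scan_source(sample)
```

Running `scan_tree(".")` from a project root returns a mapping of file paths to flagged lines, which can serve as the initial migration worklist.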

One important note: since the main branch has already switched to v5 development, anyone using nightly or dev builds of Transformers may start encountering breaking changes immediately. If you’re on a stable release (v4.x), you’re fine for now, but be mindful of your install source.
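One way to stay mindful of the install source is a startup check that reports which major version is actually installed. This is a minimal sketch: the function names are hypothetical, and it assumes the version string begins with an integer major version followed by a dot, as in `4.56.1` or `5.0.0.dev0`.

```python
from importlib import metadata


def major_version(version_string):
    """Return the leading integer of a version such as '4.56.1'."""
    return int(version_string.split(".", 1)[0])


def check_transformers(max_major=4):
    """Return a status message for the installed transformers package."""
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return "transformers is not installed"
    if major_version(installed) > max_major:
        return f"transformers {installed} is newer than the tested v{max_major}.x line"
    return f"transformers {installed} is within the tested v{max_major}.x line"


status = check_transformers()
```

Logging `status` at application start, or failing a CI step on the "newer than" message, makes an accidental jump to a v5 dev build visible before it causes confusing downstream errors.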

What This Signals for the Broader AI Ecosystem

Stepping back from the technical details, the Transformers v5 announcement tells us something important about where the open-source AI ecosystem is heading. The era of “support everything, everywhere” is giving way to an era of standardization and optimization. Hugging Face is making deliberate, opinionated choices — PyTorch only, SafeTensors mandatory, modular architectures — because the ecosystem has matured enough that these bets are now safe to make.

The library is also clearly positioning itself as more than a model zoo. With the transformers serve command and deep integration with inference frameworks, Transformers is becoming an end-to-end platform spanning from model definition through training to production serving. The v5 architecture changes are designed to support this expanded role.

For developers and ML engineers, the message is clear: invest in understanding the new abstractions now, while v5 is still in development. The breaking changes are significant but well-documented, and the migration guide is already available. By the time the v5 RC lands, teams that have done their homework will transition smoothly. Those that haven’t will be scrambling.

The Transformers library has earned its position as the backbone of the open-source AI stack through years of consistent execution and community trust. Version 5 is about making that backbone stronger, cleaner, and more efficient — even if it means breaking some things along the way. The decisions are bold but well-reasoned, and the migration path is clearly documented. Start preparing your migration today. When the RC drops, you’ll be ready instead of reactive. Your future self will thank you.

Need help with ML pipeline architecture, model deployment automation, or navigating the Transformers v5 migration? Sean Kim can help.
