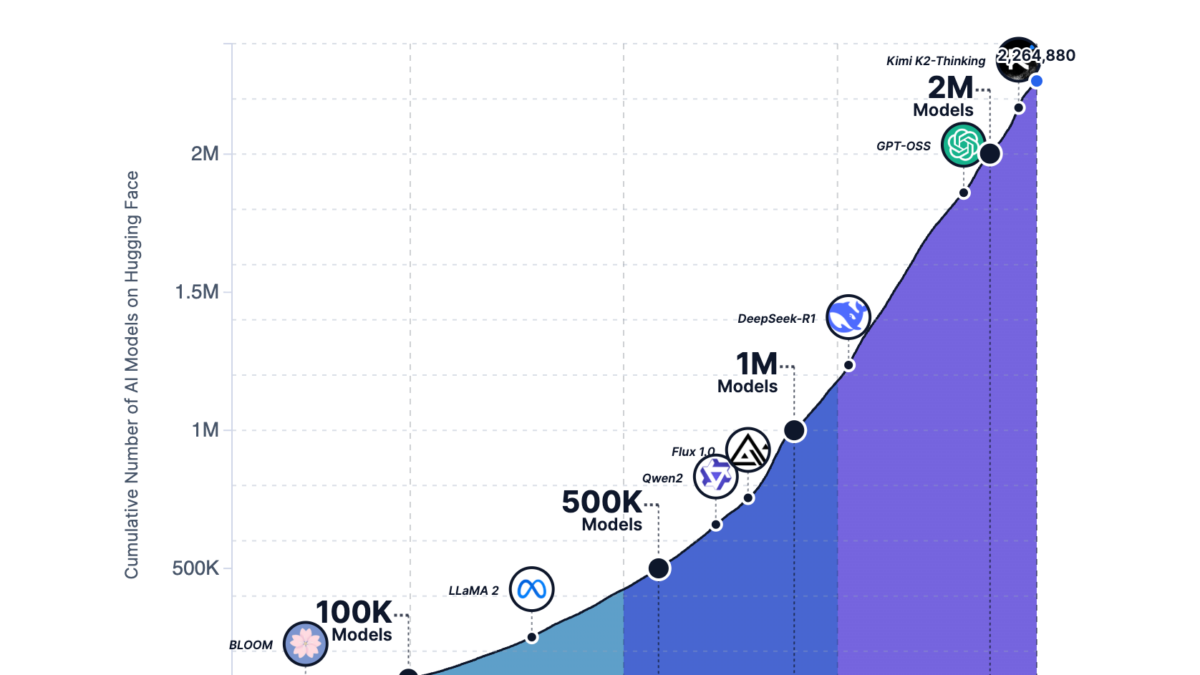

October 21, 2025

Two million models. That is the milestone the Hugging Face Hub just crossed, and the pace is only accelerating. With 1,000 to 2,000 new models uploaded every single day, Hugging Face has become the epicenter of open source AI. But October 2025 stands out even by those standards. NVIDIA pushed its open model count on the platform past 650, Meta co-launched a new hub for agentic environments, and China’s AI community posted staggering growth numbers. Here is everything worth paying attention to.

This is not just a trending list. I am going to break down the Hugging Face community models that matter most this month, why they matter, and what the broader patterns tell us about where open source AI is headed.

The Hugging Face Community Models Ecosystem by the Numbers

Before diving into specific models, let us set the stage with the macro picture. Hugging Face now hosts 13 million users, over 2 million public models, and more than 500,000 public datasets. The platform has evolved from a niche NLP repository into the GitHub of AI — a place where everyone from independent developers to Fortune 500 companies ships their work.

The numbers reveal fascinating dynamics. The mean model size on the platform in 2025 has grown to 20.8 billion parameters, but the median sits at just 406 million. That gap tells a clear story: a few massive frontier models coexist with a vast ocean of practical, deployable smaller models. The majority of real-world usage gravitates toward efficiency, not scale.
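To see how a handful of giant models can pull the mean far above the median, here is a minimal sketch. The parameter counts below are hypothetical values I made up for illustration, not real Hub data; only the shape of the distribution matters.

```python
from statistics import mean, median

# HYPOTHETICAL parameter counts in millions for a toy catalog:
# mostly small models plus two very large ones. Not real Hub data.
sizes_m = [66, 110, 125, 350, 406, 560, 1_300, 7_000, 70_000, 405_000]

def summarize(sizes):
    """Return (mean, median) for a list of model sizes."""
    return mean(sizes), median(sizes)

avg, mid = summarize(sizes_m)
print(f"mean:   {avg:,.0f}M parameters")
print(f"median: {mid:,.0f}M parameters")
```

In this toy catalog the two largest entries account for almost all of the mean, while the median stays with the small models. The same skew would explain a 20.8B mean sitting next to a 406M median.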

China now accounts for 41% of all Hugging Face downloads, a seismic shift in the geographic distribution of open source AI consumption. Independent developers represent 39% of downloads, proving that the platform is not just a playground for big tech. Baidu went from zero to over 100 model releases. ByteDance and Tencent saw 8 to 9x increases in their contributions. The open source AI race is truly global now.

Top 6 Hugging Face Community Models Trending in October 2025

1. Qwen2.5-VL Series and Qwen3-VL-32B-Instruct (Alibaba)

Alibaba’s Qwen team continues to dominate the open source vision-language model space. The Qwen2.5-VL series launched in three sizes (3B, 7B, and 72B parameters), making multimodal AI accessible at every scale. The newer Qwen3-VL-32B-Instruct model claimed the number one trending spot on Hugging Face in October, and for good reason. It delivers strong performance on both text and image understanding tasks at a sweet-spot parameter count that many developers can actually deploy.

What makes the Qwen ecosystem particularly impressive is its community momentum. Over 113,000 derivative models based on Qwen now exist on Hugging Face. That is not just adoption — that is an entire ecosystem building on top of a single model family. Fine-tuned variants, quantized versions, domain-specific adaptations — the Qwen tree keeps branching.

2. DeepSeek-OCR — Document Digitization Gets a Major Upgrade

DeepSeek-OCR tackles one of the most practical problems in enterprise AI: converting scanned documents, handwritten notes, and complex PDF layouts into accurate digital text. What sets it apart from existing OCR solutions is both its accuracy on challenging layouts and its permissive license, which makes commercial deployment straightforward. In a world where most enterprise data still lives in unstructured documents, a model like this has immediate and obvious value.

The model handles multi-column layouts, mixed-language documents, and even degraded scans with impressive fidelity. For developers building document processing pipelines, DeepSeek-OCR is worth evaluating as a direct replacement for traditional OCR engines.
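If you do run that evaluation, character error rate (CER) is a common way to compare OCR engines on the same pages. The sketch below is a generic metric written with the standard library; it is not part of DeepSeek-OCR, and the function names are my own.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]

def char_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance normalized by the length of the ground-truth text."""
    if not reference:
        raise ValueError("reference text must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)

# Hypothetical ground truth vs. OCR output for one line of a scan.
print(char_error_rate("Invoice total: 1,250.00", "Invoice tota1: 1,250.00"))
```

Run each candidate engine over the same hand-transcribed sample pages and compare the average CER. Lower is better, and a layout-heavy test set will show where each engine breaks down.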

3. Krea Realtime 14B — Video Generation at Unprecedented Speed

Krea Realtime 14B is an autoregressive video generation model that compresses the typical 30-step inference pipeline down to just 4 steps. That is not an incremental improvement — it is a fundamental rethinking of how video generation models should work. The result is near-real-time video synthesis that was simply not possible a few months ago.

For creative professionals and content producers, this kind of efficiency breakthrough moves AI video from “interesting experiment” to “production-viable tool.” The 14B parameter size also means it can run on high-end consumer hardware, not just data center GPUs.
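The arithmetic behind the step count is worth spelling out. This is a back-of-the-envelope sketch, assuming every sampling step costs about the same and that nothing else (decoding, I/O) dominates runtime. Real speedups will be lower than the ideal figure.

```python
def step_reduction(baseline_steps: int, new_steps: int) -> float:
    """Fraction of sampling steps removed relative to the baseline."""
    return 1 - new_steps / baseline_steps

def ideal_speedup(baseline_steps: int, new_steps: int) -> float:
    """Upper-bound speedup, ASSUMING equal cost per step and no other overhead."""
    return baseline_steps / new_steps

reduction = step_reduction(30, 4)
speedup = ideal_speedup(30, 4)
print(f"steps removed: {reduction:.0%}")
print(f"ideal speedup: {speedup:.1f}x")
```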

4. SmolVLM (Hugging Face + Stanford) — Proof That Size Is Not Everything

SmolVLM might be the most intellectually exciting model on this list. At just 256 million parameters, it outperforms Idefics-80B, a model roughly 300 times its size, on vision-language benchmarks. Born from a collaboration between Hugging Face and Stanford, SmolVLM represents the cutting edge of efficient model design.

The implications are enormous. A 256M parameter model can run on a smartphone. It can run on edge devices. It can be deployed at a fraction of the cost of its larger competitors. If you are building products that need vision-language capabilities but cannot afford to serve a 7B+ model, SmolVLM just changed your calculus entirely.
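A rough memory estimate shows why the smartphone claim is plausible. The sketch below counts weights only and assumes standard bytes-per-parameter for each precision. It ignores activations, caches, and runtime overhead, so treat the numbers as a lower bound.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

small = 256e6  # SmolVLM's parameter count from the text
large = 80e9   # the 80B comparison model from the text

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: {weight_memory_gb(small, dtype):.2f} GB "
          f"vs {weight_memory_gb(large, dtype):.0f} GB")
print(f"size ratio: {large / small:.1f}x")
```

At half precision the small model's weights fit in about half a gigabyte, while the 80B model needs well over a hundred gigabytes before any computation starts.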

5. Hunyuan-MoE-A52B (Tencent) — Mixture of Experts Goes Open

Tencent’s Hunyuan-MoE-A52B is a Mixture of Experts model. The “A52B” in the name follows the usual convention for activated parameters, so the 52 billion figure most likely describes what is active per token rather than the full parameter count. The MoE architecture activates only a subset of parameters for each input, dramatically reducing inference costs while maintaining large-model-class performance. With Chinese big tech increasingly choosing Hugging Face as their global distribution channel, models like Hunyuan represent a fascinating convergence of enterprise-grade capability and open source accessibility.
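To make "activates only a subset" concrete, here is a toy top-k routing sketch. It is a generic illustration of the idea, not Hunyuan's actual architecture: the experts are plain functions, and the gate scores are passed in by hand, whereas a real model computes them with a learned router for every token.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, gate_scores, experts, k=2):
    """Send x to the k highest-scoring experts and mix their outputs."""
    # Indices of the k experts with the highest gate scores.
    top = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)[:k]
    # Renormalize the gate over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    # Only the selected experts are evaluated; the others are never called.
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top

# Four HYPOTHETICAL experts standing in for feed-forward blocks.
EXPERTS = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
output, used = moe_forward(3.0, [0.1, 2.0, 2.0, -1.0], EXPERTS, k=2)
print(f"experts used: {used}, output: {output}")
```

With k=2 out of four experts, half of the expert computation is skipped for this input. Scale that up to dozens of experts and you get the cost profile that makes MoE attractive.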

6. NVIDIA Nemotron Series — Enterprise Open Source Done Right

On October 28, NVIDIA released Nemotron Speech, Nemotron RAG, Nemotron Safety, and Cosmos models on Hugging Face. This brings NVIDIA’s total open model count to over 650, with 250+ datasets alongside them. These are not toy research releases — they are production-grade models designed for enterprise deployment.

Nemotron RAG is particularly noteworthy. It is purpose-built for retrieval-augmented generation pipelines, the architecture pattern that is rapidly becoming standard for enterprise AI applications. Nemotron Safety addresses the critical need for guardrail models in production systems. And Cosmos pushes into the physical AI space with world foundation models. NVIDIA is not just making chips anymore — they are building the open source software layer that runs on them.
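For readers new to the pattern, retrieval-augmented generation boils down to two steps: find the most relevant documents, then hand them to the model alongside the question. The sketch below shows that flow with a toy word-count similarity. It does not use any Nemotron API; the documents, function names, and scoring are illustrative assumptions, and a production pipeline would swap in a trained embedding model and a vector index.

```python
import math
from collections import Counter

def embed(text):
    """Toy bag-of-words vector; a real pipeline would use an embedding model."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query, docs, top_k=1):
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:top_k]

def build_prompt(query, docs, top_k=1):
    """Assemble retrieved context and the question into one prompt string."""
    context = "\n".join(retrieve(query, docs, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"

# HYPOTHETICAL knowledge base for illustration.
DOCS = [
    "refund policy allows returns within 30 days",
    "shipping takes five business days",
    "support is available by email",
]
print(build_prompt("how many days for a refund", DOCS))
```

The prompt that comes out is what you would send to the generator model. Everything upstream of that call is retrieval, which is why purpose-built retrieval models matter so much in this architecture.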

October’s Landmark Events: OpenEnv and the Robotics Data Explosion

Beyond individual models, two developments from October deserve special attention. On October 23 at the PyTorch Conference, Meta and Hugging Face jointly announced OpenEnv Hub — a platform for sharing environments where AI agents can learn and be tested. This is infrastructure for the agentic AI era. As agents move beyond text generation into real-world interaction, standardized training and evaluation environments become essential plumbing.

The other standout trend is the explosive growth of robotics datasets on Hugging Face. The count went from 1,145 to 26,991, roughly a 24x increase, which made robotics the fastest-growing dataset category on the entire platform. Physical AI is no longer a distant research frontier. The data infrastructure is being built right now, and Hugging Face is where it is happening.

What These Hugging Face Community Models Tell Us About the Future

Stepping back from individual models and events, several clear patterns emerge from October 2025’s Hugging Face landscape.

Multimodal is now the default. The days of text-only models dominating the trending charts are over. Qwen2.5-VL, SmolVLM, and others prove that vision-language capability is table stakes for any serious new model release. If your model only handles text, it is already a generation behind.

Efficiency is the new frontier. SmolVLM outperforming a 300x larger model and Krea Realtime cutting inference steps by 87% both point in the same direction. The “make it bigger” era is giving way to “make it smarter.” This has massive implications for deployment costs, energy consumption, and accessibility.

China’s open source AI footprint is expanding rapidly. Alibaba, Tencent, Baidu, ByteDance, and DeepSeek are all aggressively using Hugging Face as their global distribution channel. The 41% download share from China is not just a statistic — it represents a fundamental shift in who is building and consuming open source AI.

Open source AI is moving from research to production. NVIDIA releasing enterprise-grade RAG and safety models, DeepSeek offering OCR under permissive licenses, thousands of independent developers shipping specialized models daily — this is not a research community anymore. This is an industry.

The accessibility of open source AI models keeps increasing, and the ability to integrate them into your own workflows is becoming a core competitive advantage. Hugging Face is no longer just a model repository — it is the central infrastructure of AI innovation. What comes next month will be just as worth watching.
