March 19, 2026

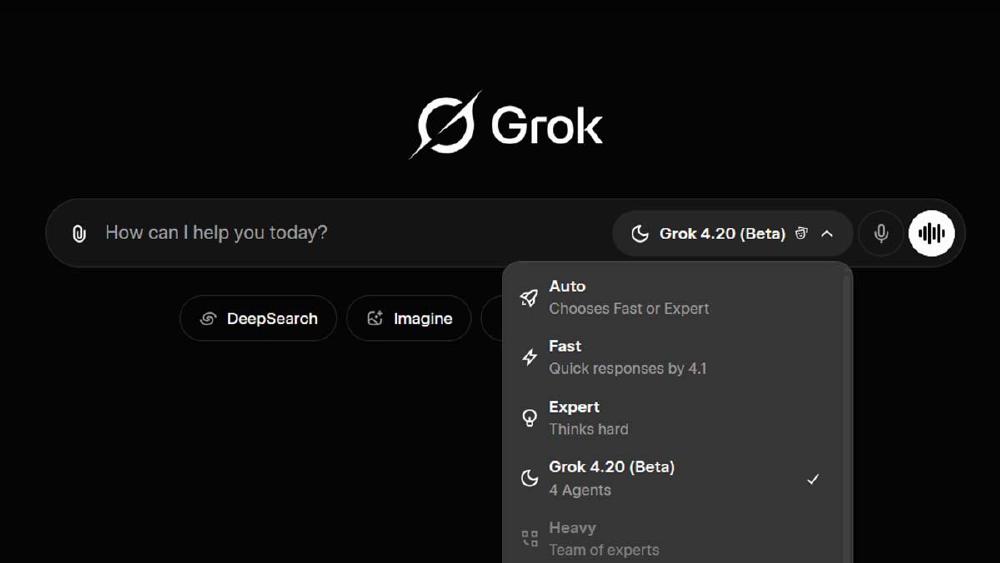

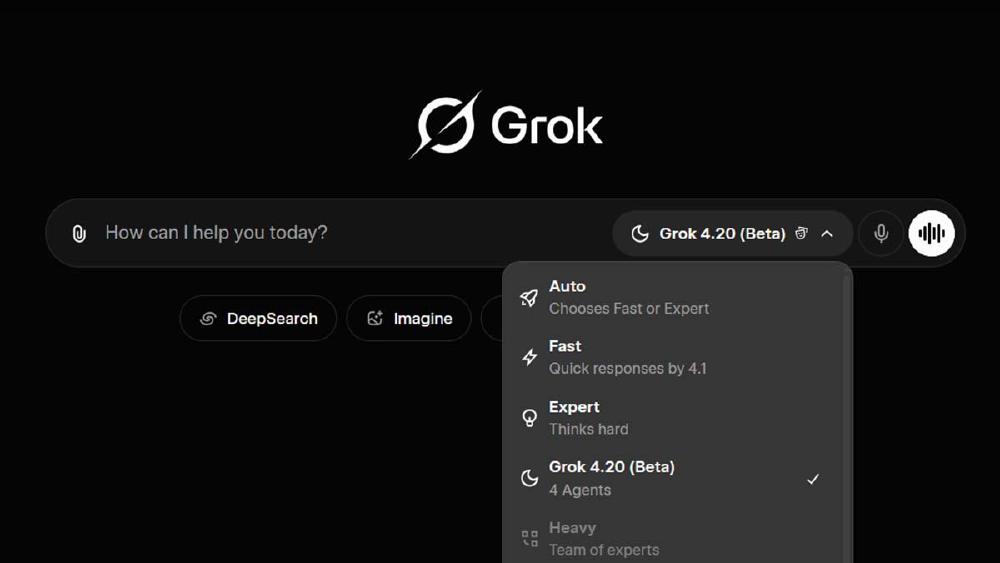

What if every time you asked an AI a question, four specialists argued about it in real time and gave you an answer only once they reached consensus? That’s not a thought experiment. It’s how xAI describes Grok 4.20’s multi-agent architecture. Launched by xAI in February 2026, the system cut hallucinations by 65% in xAI’s early testing and was the only AI to turn a profit in a live stock trading competition. The single-model era may have just hit a wall.

What Is the Grok 4.20 Multi-Agent Architecture?

Traditional AI models work solo. One massive neural network receives your question, thinks about it, and responds. Grok 4.20 fundamentally breaks this pattern. When you send a query, four specialized agents activate simultaneously, each analyzing the problem from its domain of expertise. They exchange intermediate results, challenge each other’s conclusions in real time, and only deliver a response once they’ve reached consensus.

xAI calls this the “think → debate → synthesize” pipeline. This isn’t simple model ensembling where you run the same prompt through multiple models and average the outputs. The critical difference is real-time inter-agent interaction. When one agent confidently asserts something incorrect, another agent immediately pushes back with counter-evidence. This internal adversarial process happens behind the scenes, dramatically improving the accuracy and reliability of the final output.
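To make the pipeline concrete, here is a minimal sketch of what a think, debate, synthesize loop could look like if you built it yourself at the application layer. xAI has not published the internals, so `Draft`, `run_pipeline`, and the two toy agents are hypothetical names, and a simple majority vote stands in for whatever consensus rule the real system uses.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Draft:
    agent: str
    answer: str


# Hypothetical agent signature: (query, peer drafts) -> answer text.
Agent = Callable[[str, List[Draft]], str]


def run_pipeline(query: str, agents: Dict[str, Agent], max_rounds: int = 3) -> str:
    # Think: each agent answers alone, with no peer drafts to read.
    drafts = [Draft(name, fn(query, [])) for name, fn in agents.items()]
    # Debate: each agent revises after reading its peers, until all agree
    # or the round budget runs out.
    for _ in range(max_rounds):
        if len({d.answer for d in drafts}) == 1:
            break
        drafts = [
            Draft(name, fn(query, [d for d in drafts if d.agent != name]))
            for name, fn in agents.items()
        ]
    # Synthesize: majority vote (an assumption, standing in for real mediation).
    answers = [d.answer for d in drafts]
    return max(answers, key=answers.count)


def confident(query: str, peers: List[Draft]) -> str:
    return "4"  # toy agent that never changes its mind


def persuadable(query: str, peers: List[Draft]) -> str:
    if not peers:
        return "5"  # wrong first guess
    peer_answers = [p.answer for p in peers]
    return max(peer_answers, key=peer_answers.count)  # adopts the peer majority


result = run_pipeline(
    "What is 2 + 2?",
    {"Benjamin": confident, "Harper": confident, "Lucas": persuadable},
)
print(result)
```

In this toy run the persuadable agent starts with a wrong answer and drops it after one debate round, which is the pushback behavior described above in miniature.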

The approach draws from a well-established principle in organizational psychology: diverse teams with genuine disagreement produce better decisions than homogeneous groups that agree too quickly. xAI has essentially encoded this principle into their AI architecture.

Meet the Four Agents: Grok, Harper, Benjamin, and Lucas

Each of Grok 4.20’s agents has a clearly defined role. Think of them as a four-person startup team where everyone brings a different superpower to the table — except each “person” is a specialized model with hundreds of millions of parameters.

Grok — The Captain and Coordinator

The Grok agent serves as the team’s project manager. It analyzes your query, decomposes it into sub-tasks, and assigns each sub-task to the most appropriate agent. When results come back, Grok mediates disagreements and assembles the final response. If Harper’s data contradicts Benjamin’s calculations, Grok evaluates the strength of each argument before making the final call.
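As a rough illustration of the decompose-and-assign step, the sketch below routes tagged sub-tasks to whichever agent's skills overlap most. The skill tags are loosely based on the role descriptions in this article, and `SKILLS` and `assign` are hypothetical names; the real coordinator's routing logic is not public.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical skill tags per specialist (illustrative only).
SKILLS: Dict[str, Set[str]] = {
    "Harper": {"research", "facts", "news"},
    "Benjamin": {"math", "code", "logic"},
    "Lucas": {"creative", "alternatives", "style"},
}


def assign(subtasks: List[Tuple[str, Set[str]]]) -> Dict[str, str]:
    """Map each sub-task to the agent whose skills overlap its tags the most."""
    plan: Dict[str, str] = {}
    for description, tags in subtasks:
        # On a tie, max() keeps the first agent in SKILLS order.
        plan[description] = max(SKILLS, key=lambda agent: len(SKILLS[agent] & tags))
    return plan


plan = assign([
    ("pull the latest figures", {"research", "news"}),
    ("check the ratios", {"math"}),
    ("suggest alternative scenarios", {"alternatives"}),
])
print(plan)
```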

Harper — Research and Fact-Checking Specialist

Harper is the team’s investigative journalist. This agent handles real-time data gathering and fact verification, with a massive advantage that no other AI company can match: direct access to X’s firehose — approximately 68 million English-language posts per day — with millisecond-level latency. Harper cross-references web searches, academic databases, and news sources to verify claims made by other agents in real time.

This is arguably xAI’s strongest differentiator. While other AI companies rely on web crawling with significant latency, Harper can access breaking information on X almost instantly. For time-sensitive queries — market moves, breaking news, emerging trends — this real-time data pipeline gives Grok 4.20 an information advantage that’s hard to replicate.

Benjamin — Math, Code, and Logic Specialist

Benjamin is the team’s quality assurance engineer for logic. This agent handles mathematical proofs, code generation and verification, and step-by-step reasoning chains. When other agents propose strategies or present numerical data, Benjamin stress-tests the logic for consistency and flags computational errors or logical leaps. The Grok 4 family’s strong performance on SWE-bench (75%) — competitive with GPT-5.4’s 74.9% — is largely attributed to Benjamin’s rigorous verification process.

Lucas — Creativity and Balance Specialist

Lucas is the team’s contrarian. This agent deliberately proposes alternative viewpoints, explores blind spots the other agents might miss, and applies divergent thinking to generate novel hypotheses. Lucas also optimizes the final output for readability and human relevance — ensuring the response isn’t just accurate, but actually useful and well-written.

Without Lucas, the remaining three agents would likely produce responses that are technically correct but rigid and one-dimensional. Lucas adds the creative tension that transforms a committee report into something genuinely insightful. In practice, Lucas is also the agent most likely to flag when a query has ethical implications or when the consensus answer might be technically accurate but misleading in context.

How the Debate Mechanism Cuts Hallucinations by 65%

The core value proposition of this multi-agent system is internal adversarial checking. A single model struggles to recognize its own errors — it can’t easily distinguish between confident correctness and confident hallucination. But when four agents simultaneously tackle the same problem, one agent’s mistake gets caught by another almost immediately.

Here’s a concrete example of how this plays out. Imagine asking Grok 4.20 for Tesla’s Q1 2026 earnings outlook:

- Harper pulls the latest financial data, analyst reports, and real-time market sentiment from X

- Benjamin validates the numerical consistency of Harper’s data and calculates key financial ratios

- Lucas considers macro-economic variables, market psychology, and alternative scenarios that pure quantitative analysis might overlook

- Grok synthesizes everything, but if agents disagree, it weighs the strength of evidence behind each position before making the final call

If Harper pulls a data point that’s outdated or incorrect, Benjamin catches it during numerical verification. If Benjamin’s analysis is overly conservative, Lucas flags the limitation and proposes alternative scenarios. This multi-directional checking is what drives the 65% reduction in hallucinations that xAI reported in early testing.
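The kind of check attributed to Benjamin in this example can be sketched as a small verifier that flags stale figures and recomputes a derived ratio. Everything here is an assumption for illustration: the `DataPoint` type, the `verify` function, the 120-day staleness threshold, and the placeholder numbers, which are not real financial data.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class DataPoint:
    name: str
    value: float
    as_of: date  # when the source published the figure


def verify(points: List[DataPoint], today: date, max_age_days: int = 120) -> List[str]:
    """Return human-readable objections; an empty list means no objection."""
    objections = []
    for p in points:
        age = (today - p.as_of).days
        if age > max_age_days:
            objections.append(f"{p.name}: stale ({age} days old)")
    by_name = {p.name: p.value for p in points}
    # Consistency check: a reported margin should match profit / revenue.
    if {"revenue", "profit", "margin"} <= by_name.keys() and by_name["revenue"] != 0:
        expected = by_name["profit"] / by_name["revenue"]
        if abs(expected - by_name["margin"]) > 0.005:
            objections.append(
                f"margin: reported {by_name['margin']:.3f}, recomputed {expected:.3f}"
            )
    return objections


# Placeholder figures, deliberately inconsistent so the checks fire.
points = [
    DataPoint("revenue", 100.0, date(2026, 3, 1)),
    DataPoint("profit", 10.0, date(2026, 3, 1)),
    DataPoint("margin", 0.25, date(2025, 6, 1)),
]
objections = verify(points, today=date(2026, 3, 19))
for line in objections:
    print(line)
```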

Real-World Performance: The Only Profitable AI in Alpha Arena

Benchmarks matter, but real-world performance matters more. The most compelling proof of Grok 4.20’s multi-agent architecture came from Alpha Arena Season 1.5 — a live stock trading competition where AI models manage virtual portfolios against real market data.

Each AI model started with $10,000 in virtual capital and made trading decisions based on real-time market conditions. The results were striking: Grok 4.20 was the only model to finish in profit, growing its portfolio to approximately $11,000–$13,500, while competing models from OpenAI and Google all finished in the red.
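For scale, the dollar figures above translate into percentage returns as follows. This is plain arithmetic on the numbers quoted in this article; `pct_return` is just a helper name.

```python
def pct_return(start: float, end: float) -> float:
    """Simple return over the period, as a fraction of starting capital."""
    return (end - start) / start


START = 10_000
low = pct_return(START, 11_000)
high = pct_return(START, 13_500)
print(f"{low:.0%} to {high:.0%}")  # 10% to 35%
```

In other words, the reported range corresponds to a gain of between 10% and 35% on the starting capital.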

Why trading? Because it’s the ultimate test of multi-dimensional judgment under uncertainty. You need real-time data (Harper), quantitative rigor (Benjamin), creative scenario analysis (Lucas), and smart synthesis (Grok) — all working together. The fact that this distributed approach outperformed monolithic models in such a demanding, real-world task is the strongest argument for the multi-agent paradigm.

Grok 4.20 Heavy: Scaling to 16 Agents for Research-Grade Problems

Four agents not enough? Grok 4.20 Heavy mode scales up to 16 specialized agents for research-grade problems. Complex academic analysis, large-scale codebase refactoring, multivariate data analysis — Heavy mode deploys additional specialists to tackle problems that would overwhelm even a strong 4-agent team.

Heavy mode is available through SuperGrok subscriptions ($30/month) or X Premium+ membership. Combined with the 256K context window, this creates a genuinely powerful research tool. Imagine pointing 16 specialized agents at a massive codebase for a comprehensive security audit, or having them collaboratively analyze a complex regulatory filing. The applications for enterprise and research use cases are significant.

Scaling from 4 to 16 agents isn’t just about throwing more compute at a problem. Each additional agent brings a new specialization: deeper expertise in areas like regulatory compliance, statistical modeling, or domain-specific code patterns. The coordination overhead increases, but for problems complex enough to warrant it, the added depth of analysis can justify the cost. Early reports from researchers using Heavy mode for academic paper reviews suggest it catches methodological flaws that single-model systems miss.
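One way to get a feel for that coordination overhead is to count how many distinct agent pairs could exchange messages. This assumes every agent can address every other agent directly, which may not match how Heavy mode is actually wired; `pairwise_channels` is a hypothetical helper.

```python
from math import comb


def pairwise_channels(n_agents: int) -> int:
    """Number of distinct agent pairs, assuming all-to-all communication."""
    return comb(n_agents, 2)


for n in (4, 16):
    print(n, "agents ->", pairwise_channels(n), "pairs")
# 4 agents -> 6 pairs
# 16 agents -> 120 pairs
```

Under that assumption, four times as many agents means twenty times as many pairs, which is why a coordinator or some hierarchy becomes more important as the team grows.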

The Competitive Landscape: What Makes This Different

As of March 2026, the major AI players are pursuing distinctly different strategies. Anthropic’s Claude focuses on long-context understanding and code agents. OpenAI’s GPT series doubles down on general-purpose reasoning. Google’s Gemini pushes multimodal integration across its ecosystem.

xAI’s Grok 4.20 has carved out a unique position with its native multi-agent architecture. Other models offer agent-like features, but they orchestrate agents externally, at the application layer. Grok 4.20’s inter-agent debate structure is built into the model itself, which is a fundamentally different approach.

There are real limitations to acknowledge. Running four agents in parallel can increase response latency. If agents fail to reach consensus, the output might be ambiguous rather than decisive. And the API isn’t publicly available yet (expected Q2 2026), which limits developer adoption. But the direction this architecture points toward — breaking through single-model limitations via agent collaboration — is setting the agenda for the entire industry.
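The latency point can be illustrated with a small `asyncio` simulation. When agents run concurrently, one round costs roughly as much as the slowest agent instead of the sum of all four, but every extra debate round adds another such wait. The delays are made-up numbers and `call_agent` is a stand-in for a real model call.

```python
import asyncio
import time
from typing import Dict, List, Tuple


async def call_agent(name: str, delay_s: float) -> str:
    # Stand-in for a model call; the sleep simulates inference time.
    await asyncio.sleep(delay_s)
    return f"{name}: done"


async def run_all(delays: Dict[str, float]) -> Tuple[List[str], float]:
    start = time.perf_counter()
    # gather() runs the calls concurrently and returns results in input order.
    results = await asyncio.gather(*(call_agent(n, d) for n, d in delays.items()))
    return list(results), time.perf_counter() - start


# Hypothetical per-agent latencies in seconds.
delays = {"Grok": 0.1, "Harper": 0.2, "Benjamin": 0.1, "Lucas": 0.2}
results, elapsed = asyncio.run(run_all(delays))
print(results)
print(f"{elapsed:.2f}s elapsed vs {sum(delays.values()):.2f}s if run one after another")
```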

What Multi-Agent AI Means for the Future

Grok 4.20’s approach reveals a new axis of AI progress. Until now, performance gains have primarily come from scaling up — more parameters, more training data, more compute. But this approach is hitting diminishing returns against skyrocketing costs and energy consumption.

Multi-agent architecture offers a structural innovation that may sidestep these scaling limits. Instead of one monolithic model trying to do everything, specialized smaller models collaborate, mirroring how human organizations work. The bet is that a diverse team of experts outperforms a single generalist, however talented.

As this paradigm spreads, expect a shift from “asking AI a question” to “assigning a project to an AI team.” For developers, this means designing systems that orchestrate specialized agents rather than relying on a single omniscient model. For businesses, it means rethinking how AI integrates into decision-making workflows — not as a single oracle, but as a collaborative intelligence layer.

Grok 4.20 is the most concrete implementation of this vision to date. Available through SuperGrok ($30/month) or X Premium+, it’s worth testing if you’re seriously building AI-powered workflows. The multi-agent future isn’t theoretical anymore — it’s here, debating itself into better answers right now.
