
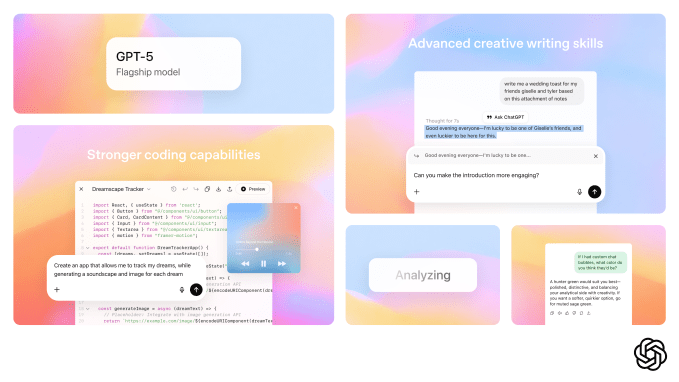

94.6% on AIME 2025 math. About 45% fewer factual errors than GPT-4o, and about 80% fewer than o3 in thinking mode, by OpenAI’s own measurements. GPT-5’s benchmark numbers are staggering, but the real revolution isn’t about scores. For the first time, OpenAI has delivered on the promise of a single model that handles everything: quick chat responses, deep multi-step reasoning, image analysis, and code generation, all without you ever touching a model selector dropdown.

What Is GPT-5 — The First Truly Unified AI Model

On August 7, 2025, OpenAI unveiled GPT-5 during its LIVE5TREAM event. Sam Altman called it “the best model in the world.” Bold claim? Sure. But GPT-5 is genuinely different from everything that came before it — not because it’s bigger or faster, but because it fundamentally changes how you interact with AI.

Until now, using OpenAI’s models meant making choices. Need a quick answer? GPT-4o. Need deep reasoning? Switch to o3. Working with images? Maybe GPT-4 Vision. GPT-5 eliminates all of that. It’s a three-part architecture working seamlessly behind the scenes:

- Fast Efficient Model: Handles routine queries with minimal latency — your everyday questions, simple translations, quick lookups.

- GPT-5 Thinking Mode: Engages deep, multi-step reasoning for complex problems — math proofs, architectural decisions, nuanced analysis.

- Real-Time Router: The brain of the operation. It analyzes your query’s complexity, conversation context, and tool requirements to decide which mode to invoke — instantly and invisibly.

You don’t have to choose. You don’t have to switch. You just ask, and GPT-5 figures out how hard it needs to think. That’s the paradigm shift.
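To make the router idea concrete, here is a toy sketch in Python. It is not OpenAI’s router, whose internals are not public; the cue words, the length threshold, and the scoring weights are all hypothetical, chosen only to illustrate how signals like query complexity, conversation context, and tool needs could combine into a fast-or-thinking decision.

```python
from dataclasses import dataclass

# Hypothetical cue words suggesting a query needs multi-step reasoning.
# OpenAI's real router is unpublished; this list is purely illustrative.
REASONING_CUES = ("prove", "step by step", "debug", "trade-off", "architecture", "derive")


@dataclass
class Query:
    text: str
    needs_tools: bool = False  # e.g. web search or code execution requested
    prior_turns: int = 0       # how much conversation context already exists


def route(query: Query) -> str:
    """Return "thinking" or "fast" using a hypothetical additive score."""
    score = 0
    lowered = query.text.lower()
    if any(cue in lowered for cue in REASONING_CUES):
        score += 2  # explicit complexity cue: the strongest signal in this toy
    if len(lowered.split()) > 80:
        score += 1  # long prompts tend to carry more constraints
    if query.needs_tools:
        score += 1  # tool use usually implies multi-step work
    if query.prior_turns > 10:
        score += 1  # long conversations accumulate context to reconcile
    return "thinking" if score >= 2 else "fast"


if __name__ == "__main__":
    print(route(Query("What's the capital of France?")))
    print(route(Query("Prove that the square root of 2 is irrational.")))
```

Substring matching like this is brittle (a question that mentions "architecture" in passing would be sent to thinking mode), which hints at why routing mistakes are hard to eliminate entirely.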

GPT-5 Benchmarks — What the Numbers Actually Mean

Let’s break down the benchmarks, because raw percentages without context are meaningless. What matters is what these numbers translate to in real-world capability.

Mathematics (AIME 2025): 94.6%. This is the American Invitational Mathematics Examination, an invitation-only competition for top U.S. high-school students whose problems stump most humans. GPT-5 solves nearly all of them without external tools. For reference, OpenAI’s earlier reasoning models, including o3, scored lower on the same exam without tools.

Software Engineering (SWE-bench Verified): 74.9%. SWE-bench tests models against real GitHub issues: actual bugs in actual open-source Python codebases. Scoring 74.9% means GPT-5 resolves roughly three out of four of the benchmark’s tasks. For developers, this translates to a genuinely useful coding partner that can debug, refactor, and implement features with meaningful accuracy.

Polyglot Coding (Aider Polyglot): 88%. This benchmark draws on 225 of the hardest Exercism coding exercises across C++, Go, Java, JavaScript, Python, and Rust, and checks whether a model can edit source files so that each exercise’s tests pass. An 88% score means GPT-5’s coding ability holds up consistently across languages rather than being strong only in Python.

Multimodal Understanding (MMMU): 84.2%. The Massive Multi-discipline Multimodal Understanding benchmark tests comprehension of images, charts, diagrams, and text simultaneously. GPT-5’s score here reflects a genuinely integrated vision-language model, not a bolted-on afterthought.

Medical Reasoning (HealthBench Hard): 46.2%. Medical AI is notoriously difficult, and while 46.2% might seem modest, it represents substantial progress on questions that require nuanced clinical reasoning. This isn’t consumer medical advice territory — it’s research-grade capability advancement.

Perhaps the most impressive stat isn’t a benchmark at all: GPT-5 achieves comparable or better performance than o3 while using 50-80% fewer output tokens. That’s not just efficiency — it’s a fundamental architectural win that translates directly to lower costs and faster responses.

GPT-5 Specifications and API Pricing — The Developer’s Perspective

The specifications are impressive on paper, and they matter even more in practice. Through the API, GPT-5 offers a 400,000-token context window, split into up to 272K input tokens and 128K output tokens. Using the common rule of thumb of roughly 0.75 English words per token, 272K input tokens is about 200,000 words: several hundred pages of a typical book. Lengthy legal documents, long conversation histories, and many complete small-to-medium codebases can now fit in a single prompt.
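A quick way to sanity-check whether a set of documents fits is to estimate tokens and compare them against the limits above. This sketch assumes the common heuristic of about four characters per English token (an approximation, not GPT-5’s actual tokenizer) plus the 272K-input / 128K-output split; `estimate_tokens` and `fits_in_context` are hypothetical helper names.

```python
import math

# Limits as stated for the GPT-5 API: 400K total, split 272K in / 128K out.
CONTEXT_WINDOW = 400_000
MAX_INPUT_TOKENS = 272_000
MAX_OUTPUT_TOKENS = CONTEXT_WINDOW - MAX_INPUT_TOKENS  # 128,000

# Rough English heuristic (~4 characters per token). Use a real tokenizer
# for exact counts; this is only a planning estimate.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounded up."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], planned_output: int = 8_000) -> bool:
    """Check that the documents fit the input limit and the planned output fits the output limit."""
    if planned_output > MAX_OUTPUT_TOKENS:
        return False
    input_tokens = sum(estimate_tokens(doc) for doc in documents)
    return input_tokens <= MAX_INPUT_TOKENS


if __name__ == "__main__":
    contract = "x" * 600_000  # about 150K tokens by the heuristic
    print(fits_in_context([contract]))            # one contract fits
    print(fits_in_context([contract, contract]))  # about 300K tokens does not
```

For a portfolio that fails the check, the usual fallback is to split the documents into several requests, each under the input limit.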

But the pricing is where things get really interesting. According to OpenAI’s official announcement, GPT-5’s API costs just $1.25 per million input tokens and $10.00 per million output tokens. That’s half of GPT-4o’s $2.50 input price and the same $10.00 output price, for a substantially more capable model. Add the 90% discount on cached input tokens (about $0.125 per million), and production workloads that reuse prompts become remarkably affordable.
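The arithmetic behind "remarkably affordable" is easy to sketch. This minimal Python function uses the prices quoted above and assumes the 90% discount applies only to the cached portion of input tokens, never to output; the token counts in the example are illustrative, not measured.

```python
# GPT-5 API list prices in USD per million tokens, as quoted above.
INPUT_PER_M = 1.25
CACHED_INPUT_PER_M = 0.125  # 90% discount on cached input tokens
OUTPUT_PER_M = 10.00


def request_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Dollar cost of one request; cached_tokens is the cached share of the input."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_tokens
    total = (fresh * INPUT_PER_M
             + cached_tokens * CACHED_INPUT_PER_M
             + output_tokens * OUTPUT_PER_M)
    return total / 1_000_000


if __name__ == "__main__":
    # A 100K-token prompt producing 2K output tokens, uncached:
    print(round(request_cost(100_000, 2_000), 5))                        # 0.145
    # The same request when a 90K-token shared prefix is served from cache:
    print(round(request_cost(100_000, 2_000, cached_tokens=90_000), 5))  # 0.04375
```

In this example caching cuts the per-request cost by roughly 70%, and output tokens then account for nearly half of what remains, so the output price matters more than it first appears.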

The model comes in three sizes to match different use cases:

- gpt-5: The full-power model for complex tasks requiring maximum capability.

- gpt-5-mini: A balanced option for production workloads that need strong performance at lower cost.

- gpt-5-nano: Ultra-lightweight for high-volume, latency-sensitive applications such as classification, summarization, or real-time chat. Like the other sizes, it runs in OpenAI’s cloud rather than on edge devices.
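To see how much the size choice matters, this sketch prices the same hypothetical workload on all three models. The gpt-5 figures come from the text above; the gpt-5-mini ($0.25 in / $2.00 out) and gpt-5-nano ($0.05 in / $0.40 out) figures are OpenAI’s launch list prices per million tokens as we understand them, so verify them against the current pricing page before budgeting. The workload numbers are invented for illustration.

```python
# USD per million tokens: (input, output). gpt-5 prices are from the article;
# mini and nano values are assumed launch list prices; check current pricing.
PRICES = {
    "gpt-5": (1.25, 10.00),
    "gpt-5-mini": (0.25, 2.00),
    "gpt-5-nano": (0.05, 0.40),
}


def workload_cost(model: str, requests: int, input_tokens: int, output_tokens: int) -> float:
    """Total USD cost for `requests` calls with the given per-call token counts."""
    input_price, output_price = PRICES[model]
    per_call = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return requests * per_call


if __name__ == "__main__":
    # Hypothetical month: 1M chat requests, 500 input + 200 output tokens each.
    for name in PRICES:
        print(f"{name:<11} ${workload_cost(name, 1_000_000, 500, 200):,.2f}")
```

Under these assumptions the same traffic costs about $2,625 on gpt-5, $525 on gpt-5-mini, and $105 on gpt-5-nano: a 25x spread, which is why matching task difficulty to model size pays off.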

TechCrunch’s analysis suggests this aggressive pricing strategy could trigger an industry-wide price war, forcing competitors like Google (Gemini), Anthropic (Claude), and Meta (Llama) to slash their own API prices. For developers and businesses, this competition is unequivocally good news.

GPT-5 Multimodal Capabilities — Beyond Text

GPT-5’s multimodal capabilities deserve special attention because they represent a fundamentally different approach from previous models. Earlier iterations treated vision as an add-on — you could send an image, and the model would describe it. GPT-5 was built from the ground up as a multimodal system, meaning visual understanding is deeply integrated into its reasoning process.

The MMMU score of 84.2% reflects this integration. GPT-5 doesn’t just identify objects in images — it understands relationships, reads embedded text, interprets charts and graphs, and reasons about spatial layouts. Combined with the 400K-token context window, this means you can feed GPT-5 dozens of screenshots, architectural diagrams, or data visualizations alongside detailed text instructions, and it will process everything coherently.

Practical applications include automated code review with screenshot-based bug reports, medical image preliminary analysis, architectural plan review, financial chart interpretation, and document processing that handles mixed text-and-image content natively. The unified model approach means these capabilities work seamlessly with GPT-5’s reasoning — it can look at a chart, think deeply about what the data implies, and produce a nuanced analysis in a single pass.
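Mixing screenshots and instructions in one request is mostly a matter of packaging. This sketch builds a request body in the shape used by OpenAI’s Responses API, where a user message’s content is a list of `input_text` and `input_image` parts and images can be passed as base64 data URLs. It only constructs the dictionary and sends nothing; the helper names are hypothetical, the byte strings are placeholders rather than real PNGs, and the field names should be confirmed against the current API reference.

```python
import base64
import json


def image_part(data: bytes, mime: str = "image/png") -> dict:
    """Wrap raw image bytes as an input_image part using a base64 data URL."""
    encoded = base64.b64encode(data).decode("ascii")
    return {"type": "input_image", "image_url": f"data:{mime};base64,{encoded}"}


def build_multimodal_request(instructions: str, images: list[bytes], model: str = "gpt-5") -> dict:
    """One user message: the text instructions first, then every image."""
    content = [{"type": "input_text", "text": instructions}]
    content.extend(image_part(img) for img in images)
    return {"model": model, "input": [{"role": "user", "content": content}]}


if __name__ == "__main__":
    screenshots = [b"placeholder-screenshot-1", b"placeholder-screenshot-2"]
    body = build_multimodal_request(
        "Compare these two dashboards and explain what changed in the revenue chart.",
        screenshots,
    )
    # Truncate the printout; real base64 image payloads are long.
    print(json.dumps(body, indent=2)[:400])
```

With the official `openai` Python package, a body like this would typically be sent as `client.responses.create(**body)`.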

Real-World Reception — The Gap Between Benchmarks and Reality

Here’s where intellectual honesty matters. GPT-5’s launch wasn’t all smooth sailing. Early adopters reported issues with the router — sometimes it would engage deep thinking mode for trivial questions, adding unnecessary latency. Other times, basic tasks that GPT-4o handled effortlessly would produce unexpected failures.

Sam Altman acknowledged these growing pains publicly, and OpenAI temporarily brought back GPT-4o as a fallback option. The company subsequently pushed updates to make GPT-5 “warmer and friendlier” — addressing complaints about overly clinical or verbose responses.

Independent reviewers painted a nuanced picture. One tester confirmed that GPT-5’s Thinking Mode excelled at senior-level mathematics, working through complex problems quickly and accurately. However, the same reviewer cautioned that “GPT-5 and its rivals are not general intelligences and can fail at novel problems.” Factual accuracy has improved by OpenAI’s own measurements, including the 45% reduction in errors versus GPT-4o, but fact-checking remains essential. No AI model has earned the right to be trusted blindly, and GPT-5 is no exception.

Who Benefits Most from GPT-5 — And How

Developers and API users gain the most immediate advantage, with one nuance: the automatic router is part of ChatGPT. In the API, developers call GPT-5 directly and choose how hard it thinks with the reasoning effort setting, which now includes a minimal level for fast responses. That still collapses multi-model pipelines into a single model family. The three model sizes (gpt-5, gpt-5-mini, gpt-5-nano) provide granular control over the cost-performance tradeoff, and the 90% discount on cached input tokens makes production deployments significantly cheaper than before.
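Since API callers set reasoning depth per request rather than relying on ChatGPT’s router, a pipeline can encode that choice per task. This is a minimal sketch under stated assumptions: the task names and the task-to-settings table are hypothetical, while the effort levels (`minimal`, `low`, `medium`, `high`) and the `reasoning` field follow OpenAI’s Responses API as documented at GPT-5’s launch, so check them against the current reference.

```python
# Reasoning effort levels GPT-5 accepted at launch ("medium" is the default).
VALID_EFFORTS = ("minimal", "low", "medium", "high")

# Hypothetical per-task policy: (model size, reasoning effort).
TASK_POLICY = {
    "classify_ticket": ("gpt-5-nano", "minimal"),
    "draft_reply": ("gpt-5-mini", "low"),
    "fix_failing_test": ("gpt-5", "high"),
}


def build_request(prompt: str, model: str = "gpt-5", effort: str = "medium") -> dict:
    """Build a Responses API-style body with an explicit reasoning effort."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"unsupported reasoning effort: {effort}")
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


def request_for_task(task: str, prompt: str) -> dict:
    """Look up the hypothetical policy for a task and build its request body."""
    model, effort = TASK_POLICY[task]
    return build_request(prompt, model=model, effort=effort)


if __name__ == "__main__":
    print(request_for_task("classify_ticket", "Customer says the app crashes on login."))
    # With the official SDK, a body like this would be sent as:
    #   from openai import OpenAI
    #   OpenAI().responses.create(**request_for_task(...))
```

Keeping the policy in one table makes the cost-quality tradeoff explicit and easy to tune; it is the manual counterpart of what ChatGPT’s router does automatically.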

Enterprises benefit from reduced complexity in AI adoption. Instead of evaluating which model fits which use case, they can deploy GPT-5 as a single solution and let the router handle optimization. The 400K context window opens up use cases that were previously impractical — analyzing entire contract portfolios, processing lengthy regulatory documents, or maintaining context across extended customer service interactions.

General users get perhaps the most welcome change: GPT-5 is available to all ChatGPT users, including the free tier, though free users have usage limits and fall back to a smaller mini version once they reach them. No more agonizing over which model to select. No more wondering if you’re using the “right” AI for your question. You just ask, and GPT-5 handles the rest. This democratization of access, top-tier AI capability at no cost within those limits, is arguably GPT-5’s most significant achievement.

The Bottom Line — A New Era of Unified AI

GPT-5 isn’t just a model upgrade. It’s a philosophical shift in how AI models are delivered and consumed. The question “which model should I use?” is becoming obsolete, replaced by a system intelligent enough to make that decision for you. The unified approach, aggressive pricing, 400K context window, and genuine multimodal integration collectively represent the most significant single release in OpenAI’s history.

Is it perfect? No. The launch hiccups, router inconsistencies, and the persistent gap between benchmarks and real-world performance remind us that we’re still in the early chapters of AI development. But the direction is unmistakable: AI is getting simpler to use, cheaper to deploy, and accessible to everyone. GPT-5 is the clearest embodiment of that trajectory yet.

Whether you’re building AI-powered automation pipelines, integrating language models into production systems, or exploring how GPT-5’s unified architecture could transform your workflows, a single model family with 400K context, $1.25-per-million-token input pricing, and adjustable reasoning depth unlocks genuinely new possibilities. If you’re considering how to leverage these capabilities for your organization, having an experienced perspective on architecture and implementation can make all the difference.

Need tech consulting or automation? Let’s design the optimal GPT-5-powered pipeline for your use case.
