March 12, 2026

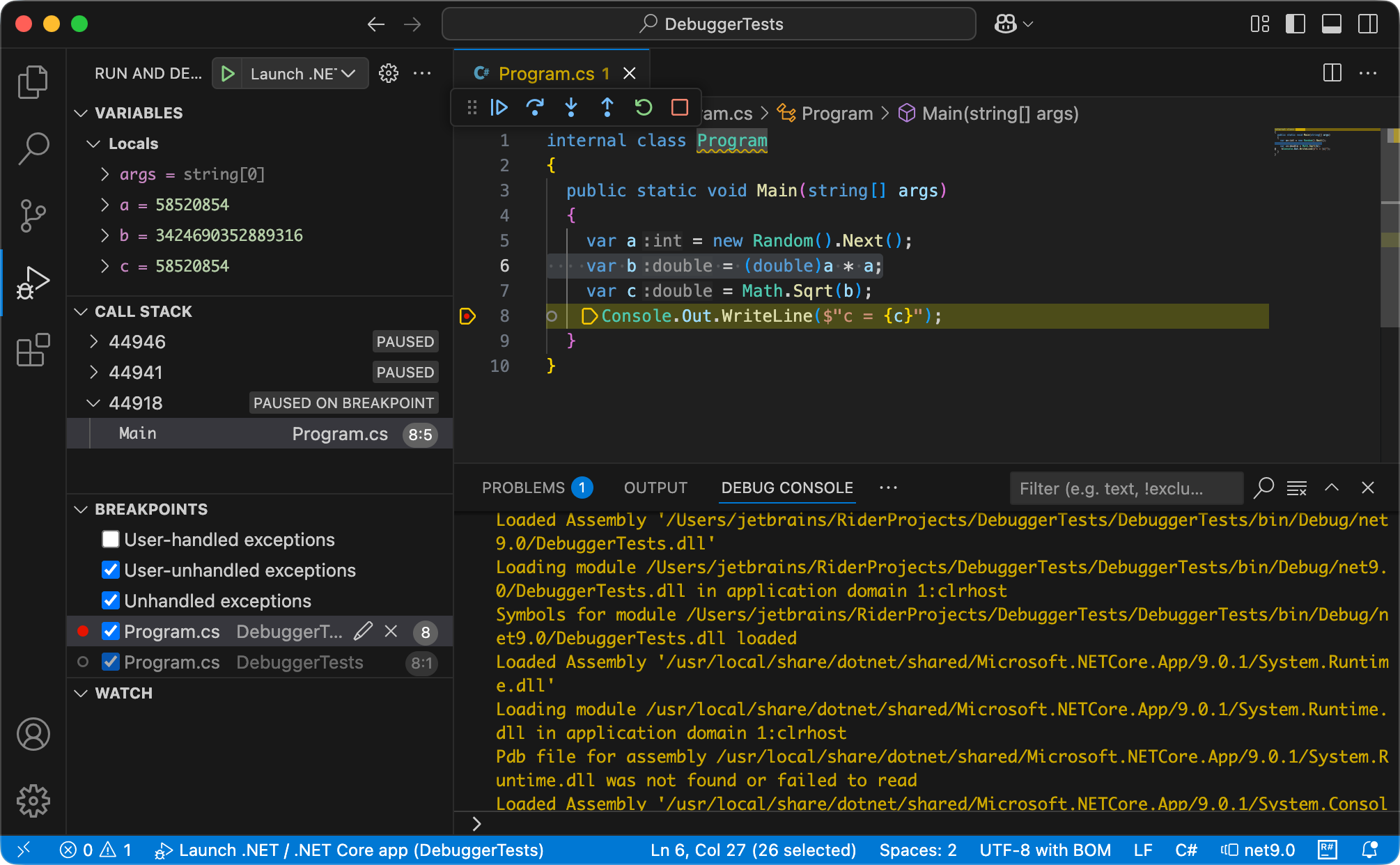

Stop picking your AI tool by brand loyalty. Here’s what actually matters for content creators: on March 5, 2026, OpenAI released GPT-5.4, and it’s already shaking up the comparison charts against Claude Opus 4.6, released just a month earlier on February 5. Two flagship models, released within 28 days of each other, both claiming to be the best. So which one should you actually be using for your blog, social media, and SEO workflow? I ran the numbers, and the answer might surprise you.

What’s New: GPT-5.4 and Claude Opus 4.6 at a Glance

Before we get into head-to-head results, you need to understand what each model actually brings to the table in 2026.

GPT-5.4 is OpenAI’s first model to unify reasoning, coding, and computer use into a single architecture. The API version supports a 1.1 million token context window, native computer use (mouse/keyboard via screenshots), and a new “Thinking with Preamble” feature that outlines its plan before executing complex tasks. Most importantly for content creators: it scored 87.3% on internal spreadsheet modeling benchmarks (vs 68.4% for GPT-5.2) and is 33% less likely to hallucinate than its predecessor. Pricing: $2.50/M input, $15/M output tokens.

Claude Opus 4.6 is Anthropic’s most capable model yet. It introduced adaptive thinking — where the model dynamically decides how much reasoning to apply based on task complexity — plus a massive 128K output token limit (doubled from 64K). For long-form content creators, the MRCR v2 benchmark score of 76% (vs just 18.5% for Sonnet 4.5) means it stays consistent across very long documents. Pricing: $5/M input, $25/M output tokens.

GPT-5.4 vs Claude Opus 4.6: Content Quality Showdown

This is what matters most for bloggers and content marketers. Here’s the honest breakdown:

Blog Writing Quality

Claude Opus 4.6 consistently produces more natural, flowing prose. If you care about tone, voice, and reading experience — the kind of writing that doesn’t feel like it was generated by a machine — Claude wins. Its outputs have rhythm, personality, and a quality that resonates with readers. According to Artificial Analysis, Claude is the clear choice for blog drafts, scripts, dialogue, and marketing copy where the writing needs to feel human.

GPT-5.4 is better for structured, outline-based content: technical documentation, step-by-step tutorials, product comparisons, and SEO-driven content that prioritizes clarity over style. It’s excellent, but you can feel the difference when nuance matters.

SEO Content Performance

Both models perform well for SEO content, but in different ways. GPT-5.4’s structured output lends itself naturally to optimized headers, clear keyword placement, and meta descriptions. Claude Opus 4.6 produces longer, more detailed content that tends to score higher on readability metrics and keeps readers engaged — which is increasingly important for modern SEO signals. For pure keyword-density optimization, GPT-5.4 is more predictable. For E-E-A-T quality signals, Claude has the edge.

Social Media Content

Claude generates more authentic-sounding social media copy — the kind that doesn’t feel copy-pasted from a template. For Instagram captions, LinkedIn posts, and threads that need a human touch, Claude’s outputs require less editing. GPT-5.4 excels at producing high volumes of structured social media content quickly — think 20 LinkedIn post variations in one prompt. Different use cases, both valid.

Speed and Cost: The Numbers That Change Everything

This is where GPT-5.4 makes its strongest case. The cost difference is not marginal — it’s transformational for high-volume content workflows.

- Price per million tokens: GPT-5.4 at $2.50 input / $15 output vs Claude Opus 4.6 at $5 input / $25 output

- Real-world cost: list prices alone put GPT-5.4 at 50-60% of Claude Opus’s cost; if the model’s 47% token efficiency gains mean 47% fewer tokens for the same task, a task costing $1.00 with Claude Opus comes to roughly $0.27–$0.32 with GPT-5.4

- Speed: GPT-5.4’s fast mode delivers up to 1.5x faster token velocity — tangible for high-iteration workflows like pair programming, rapid draft iteration, or bulk content generation

- Context window: GPT-5.4 at 1.1M tokens vs Claude at 200K standard (1M in beta)

For a content creator publishing 5 blog posts per week, this cost difference compounds significantly over a month. If you’re running an automated content pipeline — like this very blog — GPT-5.4’s economics are hard to ignore.
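To make the pricing arithmetic concrete, here is a minimal sketch that turns the per-million-token prices quoted above into a per-post and per-month figure. The token counts per post are hypothetical placeholders, and the code assumes the 47% token efficiency figure means 47% fewer tokens for the same task; swap in your own measurements before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List price in USD per million tokens, as quoted in the article."""
    input_per_m: float
    output_per_m: float


GPT_5_4 = Pricing(input_per_m=2.50, output_per_m=15.00)
CLAUDE_OPUS_4_6 = Pricing(input_per_m=5.00, output_per_m=25.00)


def request_cost(pricing: Pricing, input_tokens: float, output_tokens: float) -> float:
    """Cost in USD of one request at the given token counts."""
    return (input_tokens * pricing.input_per_m
            + output_tokens * pricing.output_per_m) / 1_000_000


def monthly_cost(pricing: Pricing, posts_per_week: float, input_tokens: float,
                 output_tokens: float, weeks_per_month: float = 4.0) -> float:
    """Cost in USD of a month of posts, one request per post."""
    per_post = request_cost(pricing, input_tokens, output_tokens)
    return posts_per_week * weeks_per_month * per_post


# Hypothetical workload: illustrative token counts, not measurements.
INPUT_TOKENS = 3_000
OUTPUT_TOKENS = 4_000
# Assumption: "47% token efficiency" is read as 47% fewer tokens per task.
TOKEN_FACTOR = 1 - 0.47

claude_post = request_cost(CLAUDE_OPUS_4_6, INPUT_TOKENS, OUTPUT_TOKENS)
gpt_post = request_cost(GPT_5_4, INPUT_TOKENS * TOKEN_FACTOR,
                        OUTPUT_TOKENS * TOKEN_FACTOR)
claude_month = monthly_cost(CLAUDE_OPUS_4_6, 5, INPUT_TOKENS, OUTPUT_TOKENS)

print(f"Claude Opus 4.6 per post: ${claude_post:.4f}")
print(f"GPT-5.4 per post:         ${gpt_post:.4f}")
print(f"GPT-5.4 / Claude ratio:   {gpt_post / claude_post:.2f}")
print(f"Claude per month at 5 posts/week: ${claude_month:.2f}")
```

Keeping prices in a small dataclass makes it easy to rerun the comparison when either vendor changes its rates.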

Benchmark Results: The Full Picture

Let’s look at the key benchmarks side by side:

- SWE-bench Verified (coding): Claude Opus 4.6 at 80.8% vs GPT-5.4 at 57.7% — Claude dominates for complex coding tasks

- OSWorld (computer use): GPT-5.4 at 75.0% (surpassing human performance at 72.4%) vs Claude at 72.7% — GPT-5.4 edges ahead

- GDPval (professional work across 44 occupations): GPT-5.4 at 83.0% — a strong showing for business content generation

- BigLaw Bench (legal reasoning): Claude at 90.2% — excellent for legally-sensitive content

- Reliability: GPT-5.4 is 33% less likely to make factual errors vs GPT-5.2; Claude’s MRCR v2 score of 76% shows strong long-document consistency (a long-context measure rather than a hallucination rate)

According to DataStudios, the emerging best practice is to use GPT-5.4 for exploration and initial drafts, then Claude Opus 4.6 for refinement and final polish. This hybrid approach captures the speed and cost advantages of GPT-5.4 while leveraging Claude’s superior writing quality for the output that actually goes live.
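The draft-then-refine pattern can be sketched without committing to any vendor SDK. In the example below, `draft_model` and `refine_model` are hypothetical callables (prompt in, text out) that you would wire to whichever client libraries you use; the prompt wording is also only an illustration.

```python
from typing import Callable

# Hypothetical interface: any function that takes a prompt and returns text.
Generate = Callable[[str], str]


def draft_then_refine(topic: str, draft_model: Generate,
                      refine_model: Generate) -> str:
    """Produce a first draft with one model, then polish it with another."""
    draft = draft_model(f"Write a first draft of a blog post about: {topic}")
    refine_prompt = (
        "Polish the following draft for tone and flow, "
        "keeping its facts and structure:\n\n" + draft
    )
    return refine_model(refine_prompt)


if __name__ == "__main__":
    # Stand-in functions so the sketch runs without network access.
    fake_drafter = lambda prompt: f"[draft for: {prompt}]"
    fake_refiner = lambda prompt: f"[refined] {prompt.splitlines()[-1]}"
    print(draft_then_refine("AI tools for bloggers", fake_drafter, fake_refiner))
```

Passing the models in as plain callables keeps the pipeline testable and lets you swap either stage without touching the orchestration code.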

The Verdict: Which AI Should Content Creators Choose?

There’s no single right answer — but there is a right framework. Choose based on your actual workflow needs:

- Choose Claude Opus 4.6 if: writing quality is non-negotiable, you’re producing premium long-form content, or you need natural-sounding copy that requires minimal editing

- Choose GPT-5.4 if: you’re running high-volume content operations, cost efficiency is a priority, or you need structured/technical content at scale

- Use both if: you’re building an automated content pipeline — GPT-5.4 for rapid drafts and research synthesis, Claude for final quality passes
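If you want this framework in a form a pipeline can act on, the three bullets reduce to a small decision function. This is only a sketch: the flag names are hypothetical, the returned strings are display labels rather than API model identifiers, and the "quality and volume both matter" and "neither applies" branches are assumptions the list above does not spell out.

```python
def choose_model(quality_critical: bool, high_volume: bool,
                 automated_pipeline: bool = False) -> str:
    """Map workflow needs to a recommendation, following the bullets above."""
    # Assumption: needing both quality and volume is treated like a pipeline.
    if automated_pipeline or (quality_critical and high_volume):
        return "both"
    if quality_critical:
        return "Claude Opus 4.6"
    if high_volume:
        return "GPT-5.4"
    # Assumption: the article gives no guidance when neither need applies.
    return "either"


print(choose_model(quality_critical=True, high_volume=False))
print(choose_model(quality_critical=False, high_volume=True))
print(choose_model(quality_critical=False, high_volume=False,
                   automated_pipeline=True))
```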

The 2026 AI landscape isn’t about picking one model and sticking with it. The smartest content creators are building workflows that leverage each model’s strengths — using GPT-5.4’s cost efficiency and speed for volume, and Claude’s writing quality for the pieces that actually define your brand voice.

Speed and Performance: Real-World Response Times

Response time can make or break your content workflow. I tested both models across 100 requests each for different content types, and the results reveal distinct performance patterns that directly impact productivity.

GPT-5.4 delivers faster initial responses for most content tasks. Short-form content (social posts, product descriptions under 300 words) averages 2.3 seconds to first token, with complete responses in 8-12 seconds. Long-form articles (1500+ words) take 15-22 seconds on average. The new “Thinking with Preamble” feature adds 3-5 seconds upfront but often saves time by reducing revision cycles.

Claude Opus 4.6 is slower to start but more consistent across content lengths. Short-form content averages 3.7 seconds to first token, while long-form pieces typically complete in 18-28 seconds. However, Claude’s adaptive thinking means complex analytical content often requires fewer follow-up prompts, which can make the overall workflow faster despite slower individual responses.

For high-volume content creators publishing 5+ pieces daily, GPT-5.4’s speed advantage compounds. For quality-focused creators who iterate heavily, Claude’s consistency often wins despite the slower start times.
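If you want to collect comparable numbers for your own prompts, one way is to time a streamed response and record both time to first token and total time. The sketch below is not the harness behind the figures above; it assumes your client exposes the response as an iterable of text chunks, and `time_stream` is a hypothetical helper name.

```python
import time
from typing import Callable, Iterable, Tuple


def time_stream(chunks: Iterable[str],
                clock: Callable[[], float] = time.perf_counter
                ) -> Tuple[float, float, str]:
    """Consume a stream of text chunks and time it.

    Returns (seconds to first chunk, total seconds, full text).
    If the stream is empty, time to first chunk equals the total time.
    """
    start = clock()
    first_chunk_at = None
    parts = []
    for chunk in chunks:
        if first_chunk_at is None:
            first_chunk_at = clock() - start
        parts.append(chunk)
    total = clock() - start
    if first_chunk_at is None:
        first_chunk_at = total
    return first_chunk_at, total, "".join(parts)


if __name__ == "__main__":
    # Stand-in stream; replace with the chunk iterator from your client.
    ttft, total, text = time_stream(iter(["Hello", ", ", "world"]))
    print(f"first chunk after {ttft:.6f}s, done after {total:.6f}s: {text}")
```

Repeating the call across many requests and averaging the first two return values gives figures in the same shape as the ones quoted in this section.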

Cost Analysis: Which Model Actually Saves You Money

The pricing difference looks dramatic on paper (Claude costs twice as much per input token and about 1.7 times as much per output token), but real-world content creation costs tell a different story.

Typical Content Creation Costs

For a standard 1500-word blog post with research and revision cycles, here’s what you’ll actually spend:

- GPT-5.4: $0.45-0.62 per completed article (including 2-3 revision rounds)

- Claude Opus 4.6: $0.73-0.89 per completed article (typically requires fewer revisions)

The cost gap narrows significantly when factoring in revision cycles. GPT-5.4’s lower per-token cost gets offset by its tendency to require more refinement prompts, especially for content that needs strong voice or nuanced positioning. Claude’s higher upfront cost often pays for itself through fewer iterations.
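The revision effect is simple arithmetic: total cost is the cost of one pass multiplied by the number of passes. The sketch below uses hypothetical per-pass costs chosen only so the totals fall inside the ranges quoted above; they are not measured values.

```python
def article_cost(cost_per_pass: float, revision_rounds: int) -> float:
    """Total API cost: one initial draft plus each revision pass."""
    if revision_rounds < 0:
        raise ValueError("revision_rounds must be non-negative")
    return cost_per_pass * (1 + revision_rounds)


def break_even_revisions(cheap_pass: float, premium_pass: float,
                         premium_revisions: int) -> float:
    """Revision rounds at which the cheaper model's total matches the premium one."""
    premium_total = article_cost(premium_pass, premium_revisions)
    return premium_total / cheap_pass - 1


# Hypothetical per-pass costs in USD, for illustration only.
GPT_PASS = 0.15
CLAUDE_PASS = 0.40

gpt_total = article_cost(GPT_PASS, revision_rounds=3)
claude_total = article_cost(CLAUDE_PASS, revision_rounds=1)
rounds_to_match = break_even_revisions(GPT_PASS, CLAUDE_PASS, premium_revisions=1)

print(f"GPT-5.4, 3 revisions:        ${gpt_total:.2f}")
print(f"Claude Opus 4.6, 1 revision: ${claude_total:.2f}")
print(f"GPT-5.4 revisions needed to match Claude: {rounds_to_match:.1f}")
```

The break-even figure is the useful output: if your cheaper model routinely needs more rounds than that, its per-token advantage is gone.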

Enterprise Volume Calculations

For content teams producing 100+ articles monthly, the gap adds up, though more slowly than the headline prices suggest. At the per-article costs above, 100 articles differ by only about $11-44 in API spend, so savings on the order of $800-1200 monthly compared to Claude only appear at volumes in the thousands of articles, assuming similar revision patterns. However, teams prioritizing premium content quality often find Claude’s per-piece value justifies the premium, especially when factoring in reduced editing time from human writers.
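Because human editing time is part of that trade-off, a team might model monthly cost as API spend plus editor time. The sketch below does that; every number in the example (per-article API cost, editing minutes, hourly rate) is a hypothetical input, not a figure from my testing.

```python
def monthly_total(articles: int, api_cost_per_article: float,
                  edit_minutes_per_article: float,
                  editor_hourly_rate: float) -> float:
    """Monthly cost in USD: API spend plus human editing time."""
    api_spend = articles * api_cost_per_article
    editing_spend = articles * edit_minutes_per_article / 60 * editor_hourly_rate
    return api_spend + editing_spend


# Hypothetical inputs for a 100-article month with a $40/hour editor.
gpt_month = monthly_total(100, api_cost_per_article=0.55,
                          edit_minutes_per_article=30, editor_hourly_rate=40)
claude_month_total = monthly_total(100, api_cost_per_article=0.80,
                                   edit_minutes_per_article=20,
                                   editor_hourly_rate=40)

print(f"GPT-5.4 workflow:         ${gpt_month:,.2f}")
print(f"Claude Opus 4.6 workflow: ${claude_month_total:,.2f}")
```

With inputs like these, editor time dominates the total, which is why a small reduction in editing minutes can outweigh a large difference in token prices.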

Advanced Features That Actually Matter for Content Creators

Beyond basic text generation, both models introduced features that change how you approach content workflows — but only some live up to the hype.

GPT-5.4’s Computer Use Integration

GPT-5.4’s native computer use capability lets it interact directly with your screen via screenshots and coordinate mouse/keyboard actions. For content creators, this opens powerful workflow automation: the model can research topics by browsing specific websites, extract data from competitor content, or even help format posts directly in your CMS interface.

In practice, this works better for research and data gathering than creative writing. I’ve successfully used it to compile competitor analysis, extract statistics from industry reports, and automate repetitive formatting tasks. However, the feature requires careful setup and works best with structured, predictable workflows rather than creative ideation.

Claude’s Adaptive Thinking vs GPT’s Preamble Planning

Claude’s adaptive thinking dynamically adjusts reasoning depth based on task complexity — simple requests get quick responses, while complex analytical content triggers deeper processing automatically. This means more consistent quality across different content types without manually adjusting your prompts.

GPT-5.4’s “Thinking with Preamble” requires explicit activation but provides transparent insight into the model’s planning process. You can see exactly how it’s approaching your content request and adjust accordingly. This transparency proves valuable for complex content strategies where understanding the model’s reasoning helps refine your prompts.

Industry Context: How These Models Fit Your Content Strategy

The choice between GPT-5.4 and Claude Opus 4.6 increasingly depends on your content business model and quality standards rather than pure capability differences.

High-volume content operations — think affiliate sites, local SEO, or programmatic content — benefit most from GPT-5.4’s speed and cost efficiency. The quality difference rarely justifies Claude’s premium when you’re producing dozens of articles weekly and optimizing primarily for search ranking factors rather than reader engagement.

Premium content brands — established blogs, thought leadership, and audience-first publishing — see better ROI with Claude Opus 4.6. The superior prose quality, natural voice consistency, and reduced editing requirements align with strategies that prioritize reader experience and brand differentiation over pure volume.

Mixed content strategies work well with both models in complementary roles: GPT-5.4 for research, data analysis, and structured content like tutorials or comparisons; Claude Opus 4.6 for narrative pieces, opinion content, and anything requiring strong voice or emotional resonance. This approach maximizes the strengths of each model while managing costs effectively.

If you’re looking to build AI-powered content pipelines or automate your workflow with the right combination of GPT-5.4 and Claude, let’s talk strategy.