A mysterious model called “nano-banana” just appeared on LMArena’s AI benchmark leaderboard, and it is outscoring every other image generation model on the board. DALL-E 3, Midjourney v6.1, Flux.1: none of them come close. The AI community is scrambling to figure out who built it, and the answer might surprise everyone.

Google Nano Banana Emerges from the Shadows

In early August 2025, AI researchers and enthusiasts began noticing something extraordinary on the LMSYS Chatbot Arena, the crowdsourced platform where users rate anonymous AI models in blind tests. A new contender labeled simply “nano-banana” was posting results nobody expected. Within days, it had reached the highest Elo rating in the arena’s history, surpassing every established image generation model in categories including prompt adherence, spatial reasoning, and text rendering accuracy.

The numbers are staggering. According to testing data compiled by researchers, Google Nano Banana delivers 95%+ character consistency across multiple images, a 40% improvement over the nearest competitor. Generation times clock in at 1-2 seconds, while models like Midjourney and DALL-E typically take 10-15 seconds for comparable quality. Usage of the testing platform surged by 500% as word spread through AI communities on X, Reddit, and Discord.

What Makes Google Nano Banana Different from DALL-E and Midjourney

What sets this model apart isn’t just speed — it’s the combination of capabilities that no single competitor currently matches. While DALL-E 3 excels at following complex text prompts and Midjourney v6.1 produces aesthetically stunning images, Google Nano Banana appears to do both simultaneously while adding a layer of image editing intelligence that’s genuinely new.

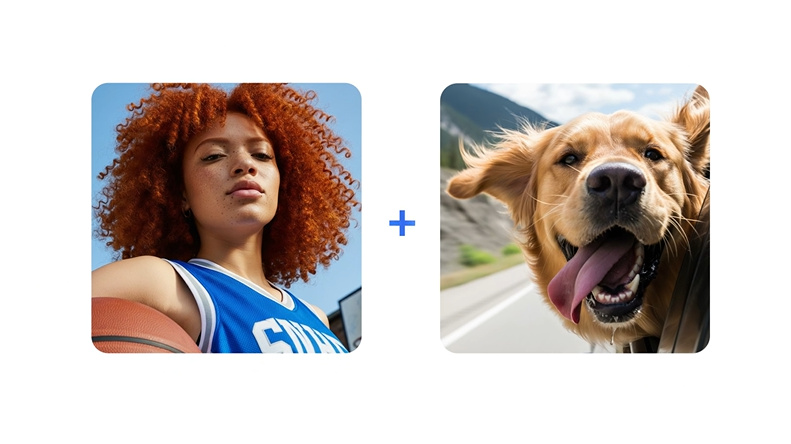

Multi-Image Blending and Character Consistency

The model can blend multiple source images into a single coherent composition while maintaining consistent character identity across different prompts and edits. This is the capability generating the most excitement in the creative AI community. Character consistency has been one of the biggest pain points in AI image generation: you could generate a perfect character in one image, but recreating that exact same character in a different pose or setting was nearly impossible. Google Nano Banana appears to have largely solved this problem, with reported consistency rates above 95%.

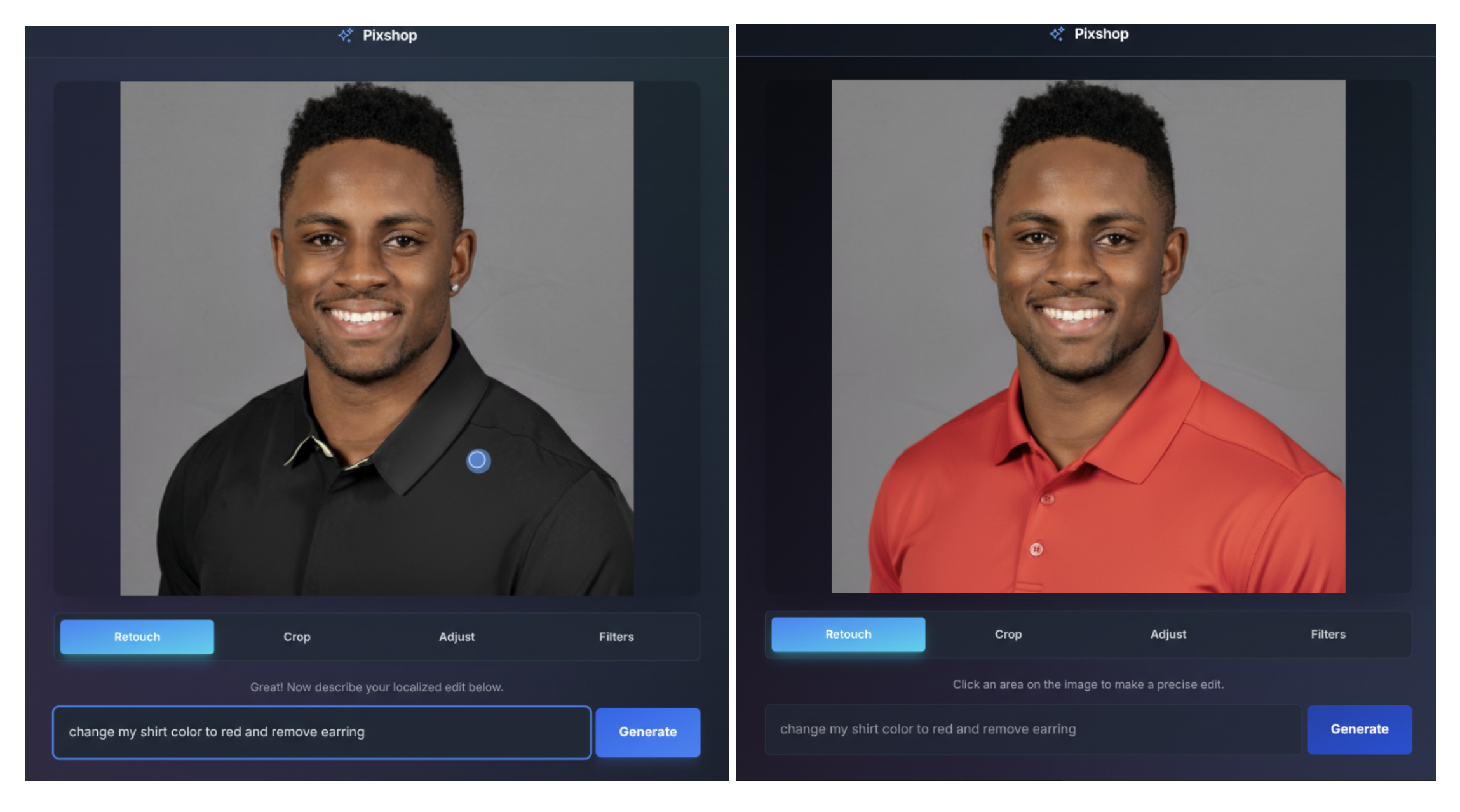

Natural Language Image Editing

Rather than requiring complex mask selections or inpainting workflows, the model understands plain English instructions for targeted edits. “Blur the background,” “remove the person on the left,” “change the lighting to golden hour” — these natural language commands produce precise, localized edits that previously required Photoshop-level expertise. The model even understands hand-drawn diagrams as editing instructions, a feature that bridges the gap between professional designers and casual users.

The LMArena Benchmark Dominance

The LMSYS Chatbot Arena (now LMArena) operates on a simple but powerful principle: users compare outputs from anonymous models side by side without knowing which model produced which result. This blind evaluation method eliminates brand bias and measures output quality as users actually perceive it. Google Nano Banana didn’t just win; it dominated.

- Elo Rating: Highest in arena history at the time of testing

- Prompt Adherence: Outperformed DALL-E 3 and Midjourney v6.1 in following complex multi-part prompts

- Spatial Reasoning: Superior understanding of object placement, perspective, and scene composition

- Text in Images: Significantly better text rendering accuracy — a traditionally weak point for all AI image models

- Speed: 1-2 second generation vs. 10-15 seconds for leading competitors

In just two weeks on the platform, the anonymous model attracted over 5 million total votes and won over 2.5 million direct comparisons — setting a record for the highest participation ever on a single model evaluation.
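Arena ratings are built up from exactly these blind pairwise votes. The sketch below shows the classic Elo update that gives the rating its name: each vote nudges the preferred model up and the other down, by more when the result was unexpected. It is illustrative only and rests on assumptions of our own: the K-factor of 32, the starting rating of 1000, and the model names and vote log are hypothetical. LMArena has said its leaderboards fit a Bradley-Terry model over all votes rather than running this online update, so its published scores will not match this code.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the Elo logistic model (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a: float, rating_b: float, a_won: bool,
               k: float = 32.0) -> tuple[float, float]:
    """Return updated (rating_a, rating_b) after one blind head-to-head vote.

    The K-factor of 32 is an illustrative assumption, not LMArena's setting.
    """
    e_a = expected_score(rating_a, rating_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)
    # Zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Hypothetical models starting level at a hypothetical rating of 1000.
    ratings = {"model_x": 1000.0, "model_y": 1000.0}
    # Hypothetical vote log: True means voters preferred model_x's image.
    votes = [True, True, False, True]
    for x_won in votes:
        ratings["model_x"], ratings["model_y"] = elo_update(
            ratings["model_x"], ratings["model_y"], x_won
        )
    print(ratings)
```

Because the expected score shrinks as a model pulls ahead, a leader has to keep winning most comparisons just to hold its rating, which is why a sustained lead over millions of votes is meaningful.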

The Identity Reveal: Gemini 2.5 Flash Image

On August 26, 2025, Google DeepMind officially confirmed what many had suspected: “nano-banana” was the internal codename for Gemini 2.5 Flash Image, their new state-of-the-art image generation and editing model. The strategic choice to debut anonymously on a benchmark platform — letting performance speak before branding — was a masterclass in product marketing that Silicon Valley is still talking about.

The model integrates directly into Google’s existing Gemini ecosystem, available through the Gemini API, Google AI Studio, and Vertex AI for enterprise customers. At approximately $0.039 per image (with each image counting as 1,290 tokens at $30.00 per million output tokens), it’s also one of the most cost-effective options for developers building AI-powered creative tools.
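The quoted per-image price follows directly from the token accounting. A minimal sketch, assuming the launch-time figures stated above (1,290 output tokens per image, $30.00 per million output tokens) and ignoring input-token charges for the prompt and any source images, which this article does not price:

```python
# Launch-time figures quoted in this article; treat them as list prices that may change.
TOKENS_PER_IMAGE = 1_290
USD_PER_MILLION_OUTPUT_TOKENS = 30.00


def cost_per_image(tokens: int = TOKENS_PER_IMAGE,
                   usd_per_million: float = USD_PER_MILLION_OUTPUT_TOKENS) -> float:
    """Output-token cost of one generated image, in US dollars."""
    return tokens * usd_per_million / 1_000_000


def batch_cost(n_images: int) -> float:
    """Output-token cost of n images (assumption: input/prompt tokens are ignored)."""
    return n_images * cost_per_image()


if __name__ == "__main__":
    print(f"per image: ${cost_per_image():.4f}")      # per image: $0.0387
    print(f"1,000 images: ${batch_cost(1_000):.2f}")  # 1,000 images: $38.70
```

The exact figure is $0.0387, which rounds to the $0.039 quoted above; at that rate a 1,000-image batch costs about $38.70 in output tokens. Check Google’s current pricing page before budgeting, since list prices can change.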

Why Google Nano Banana Matters for Creators and Developers

The implications extend far beyond benchmark scores. For content creators, designers, and marketers, Google Nano Banana represents a fundamental shift in what’s possible with AI image generation:

- Brand Consistency: Maintaining character identity across marketing campaigns without manual rework

- Rapid Prototyping: 1-2 second generation means real-time creative iteration during brainstorming sessions

- Accessible Editing: Natural language commands eliminate the learning curve for complex image manipulation

- Cost Efficiency: At $0.039 per image, batch generation for A/B testing becomes economically viable at scale

- SynthID Watermarking: Every generated or edited image carries an invisible SynthID digital watermark that marks it as AI-generated, addressing growing concerns about authenticity

The viral 3D figurine trend that’s already exploding across social media — where users create photorealistic action figure versions of themselves and celebrities — is just the tip of the iceberg. Someone generated a little banana character with arms and legs using the model, and the internet ran with it. Within days, memes and short videos featuring the Nano Banana character spread across Instagram, LinkedIn, and X, creating the kind of organic viral moment that money can’t buy.

What This Means for the AI Image Generation Landscape

Google’s entry with Nano Banana reshapes the competitive landscape significantly. Midjourney, which has dominated the aesthetic quality space, now faces a competitor that matches its visual fidelity while offering dramatically faster generation and superior editing capabilities. OpenAI’s DALL-E, which led in prompt comprehension, is now outperformed in that exact category. Black Forest Labs’ Flux models, whose open-weight versions offered cost advantages, now compete against a model that’s both cheaper and more capable.

The speed advantage alone could be transformative. When generation drops from 10-15 seconds to 1-2 seconds, the workflow changes from “generate and wait” to “generate and iterate.” Creative professionals can explore five to fifteen variations in the time it previously took to generate a single image. This isn’t an incremental improvement; it’s a qualitative shift in how AI-assisted creation works.
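The speed comparison can be checked with simple arithmetic on the ranges quoted in this article (10-15 seconds for competitors, 1-2 seconds for Nano Banana). The sketch below computes how many new images fit into one old generation, and how many fit into a hypothetical 10-minute brainstorming session; the session length is our assumption, and the count ignores time spent writing prompts and reviewing results, which would lower the real numbers.

```python
# Hypothetical 10-minute brainstorming session (an illustrative assumption).
SESSION_SECONDS = 10 * 60


def speedup_range(old: tuple[float, float] = (10.0, 15.0),
                  new: tuple[float, float] = (1.0, 2.0)) -> tuple[float, float]:
    """Min and max new-model images produced in the time of one old-model generation.

    Worst case pairs the fastest old model with the slowest new one; best case the reverse.
    """
    return old[0] / new[1], old[1] / new[0]


def images_per_session(seconds_per_image: float,
                       session_seconds: float = SESSION_SECONDS) -> int:
    """Whole images that fit into a session, ignoring prompt-writing and review time."""
    return int(session_seconds // seconds_per_image)


if __name__ == "__main__":
    print(speedup_range())                                     # (5.0, 15.0)
    print(images_per_session(15.0), images_per_session(1.0))  # 40 600
```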

Google Nano Banana’s anonymous debut on LMArena wasn’t just a clever marketing move — it was a statement. In an industry increasingly dominated by hype cycles and vaporware announcements, Google let the technology speak first. The result? A model that earned its reputation through blind evaluation before anyone knew its name. Whether you’re a developer integrating image generation into your app, a designer exploring AI-assisted workflows, or simply curious about where visual AI is headed, Nano Banana has set a new standard that every competitor will now be measured against.

