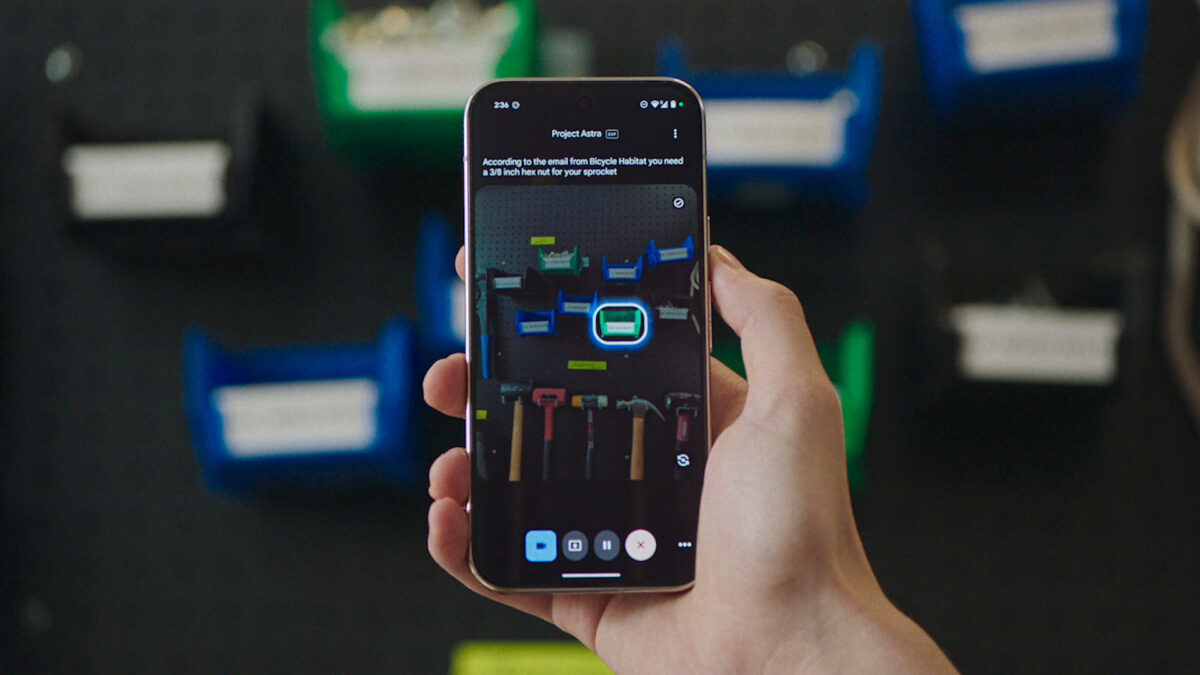

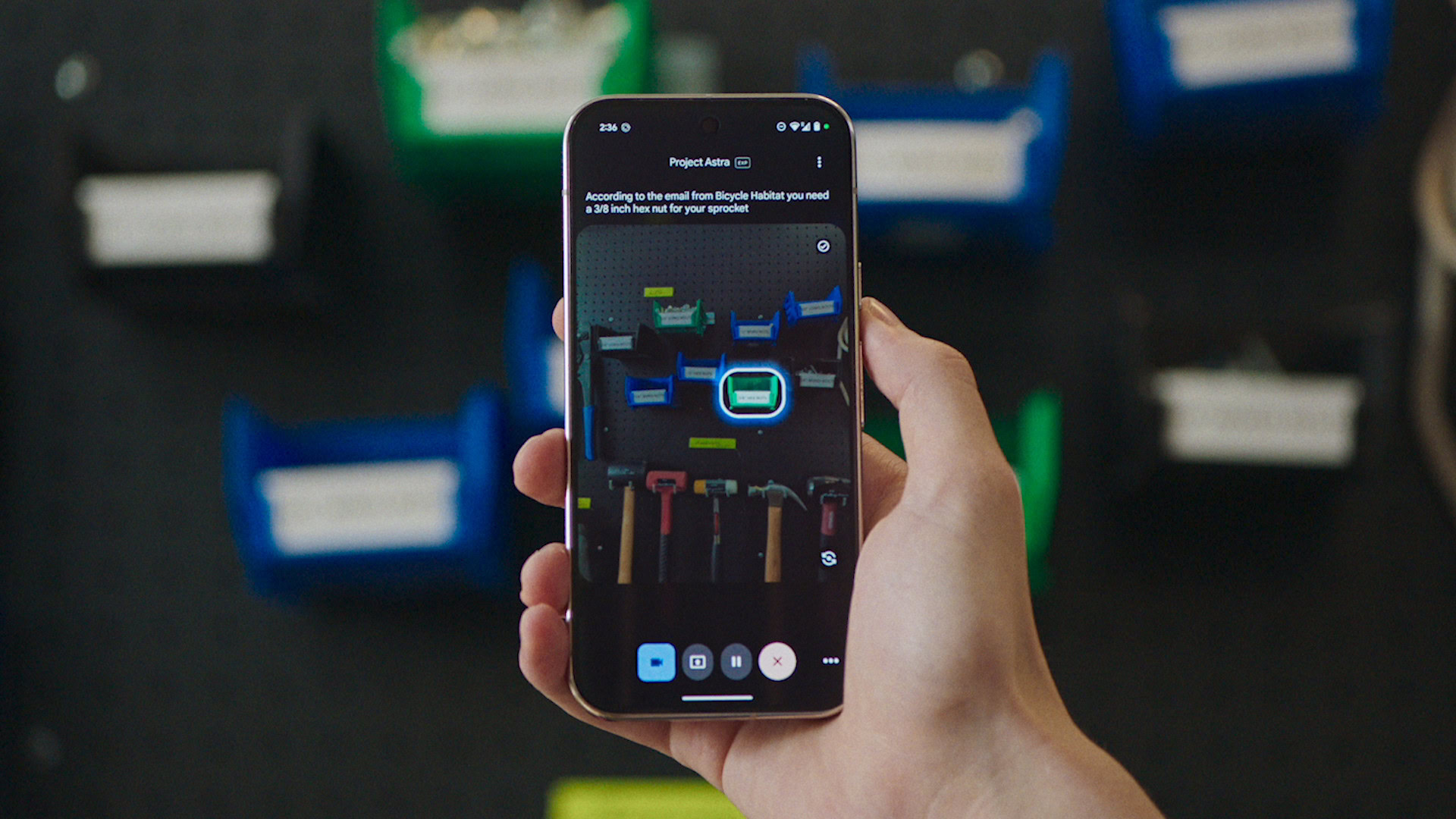

May 2, 2025

Google just showed us what a real AI assistant looks like, and it’s not a chatbot sitting in a text box. At Google I/O 2025, Project Astra streamed live video through a smartphone camera, identified a specific bike part in a cluttered garage, pulled up the repair manual, guided the user through the fix, called a nearby bike shop, and handled an interruption from another person, all without missing a beat.

This isn’t a concept video. Google demoed these capabilities live at the I/O keynote, and some of them are already rolling out to Gemini Live users on Android and iOS. Project Astra represents Google’s most aggressive move yet toward building what Demis Hassabis calls a “universal AI assistant” — one that sees, hears, and acts in the real world through your phone.

What Is Google Project Astra? The Technical Foundation

At its core, Project Astra is a research prototype from Google DeepMind that explores how to build an AI assistant capable of understanding and interacting with the physical world in real time. The name “Astra” signals Google’s ambition: this isn’t an incremental update to Google Assistant — it’s a fundamentally different approach to human-AI interaction.

The technical foundation is Gemini 2.5 Pro, Google DeepMind’s most advanced multimodal transformer. Unlike text-only large language models, Gemini models are built to be natively multimodal, trained on video, audio, and text together rather than on text alone. This means it doesn’t just understand language; it can relate what it sees, hears, and reads across space and time.

The key technical breakthrough is Astra’s continuous encoding pipeline: it processes video frames in real time, combines visual and audio input into a unified timeline of events, and caches this information for fast recall. The result is a response quick enough to feel conversational: you ask a question about what the camera sees, and Astra answers with no perceptible delay. Google has not published latency figures, so that describes how the demo felt, not a measured benchmark.
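To make the "timeline of events" idea concrete, here is a minimal Python sketch of a rolling cache that stores time-stamped video and audio events and recalls the most recent match. It is an illustration under stated assumptions, not Google's implementation: the `Event` and `Timeline` names, the 60-second window, and the keyword-based recall are all hypothetical stand-ins for encoded frames and learned retrieval.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    t: float        # seconds since the session started
    modality: str   # "video" or "audio"
    summary: str    # stand-in for an encoded frame or speech segment


class Timeline:
    """Rolling, time-ordered cache of recent multimodal events (hypothetical)."""

    def __init__(self, window_s: float = 60.0):
        self.window_s = window_s
        self._events = deque()

    def add(self, event: Event) -> None:
        # Events are assumed to arrive in time order.
        self._events.append(event)
        # Evict anything older than the window, measured from the newest event.
        while self._events and event.t - self._events[0].t > self.window_s:
            self._events.popleft()

    def recall(self, keyword: str) -> Optional[Event]:
        # Scan newest-first so the most recent matching observation wins.
        for event in reversed(self._events):
            if keyword.lower() in event.summary.lower():
                return event
        return None


timeline = Timeline(window_s=60.0)
timeline.add(Event(0.0, "video", "toolbox on the garage floor"))
timeline.add(Event(12.5, "audio", "user asks about the brake cable"))
timeline.add(Event(70.0, "video", "bike frame with a loose brake lever"))

print(timeline.recall("brake"))    # the event at t=70.0
print(timeline.recall("toolbox"))  # None: that event aged out of the window
```

The design choice worth noticing is that video and audio share one ordered structure, so a question about "the thing I showed you a moment ago" can be answered from the cache without re-processing the stream.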

6 Project Astra Features That Stole the Show at Google I/O 2025

The I/O keynote demo wasn’t a scripted marketing clip — it was a continuous, unbroken workflow showing Astra handling increasingly complex real-world tasks. According to BGR’s analysis, here are the six standout capabilities:

1. Real-Time Document Discovery and Navigation

In the demo, the user pointed the camera at a bike and asked Astra to find the repair manual. Astra browsed the web, located the correct PDF manual, opened it, and then scrolled to the exact section about brake repair — all through voice commands while the user’s hands stayed on the bike. This isn’t web search; it’s an AI agent that navigates documents the way a human research assistant would.

2. YouTube Video Discovery and Playback

When the text manual wasn’t enough, the user asked Astra to find a video tutorial. Astra launched the YouTube app, searched for relevant repair tutorials, selected the most appropriate result, and played it — all without the user touching the screen. The AI made judgment calls about which video best matched the specific repair task.

3. Multimodal Cross-Reference Retrieval

This was the most technically impressive feature. Astra simultaneously analyzed the live camera feed showing the bike part, searched Gmail for purchase confirmation emails about that specific part, and highlighted the correct component in the video feed. It fused real-time vision, email search, and spatial understanding into a single coherent response.

4. Background Phone Calls with Task Delegation

The user asked Astra to find the nearest bike shop and call them. Astra located the shop, placed the call, and asked about part availability, all while the user kept working on the bike and talking to Astra about other things. When the call finished, Astra reported back with the results. This is genuine multitasking: handling a phone conversation and a face-to-face conversation at the same time.
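The delegation pattern described here (start a long-running task, keep serving the user, report back when it finishes) maps naturally onto cooperative concurrency. The sketch below uses Python's standard `asyncio`. The function names, the part name, and the simulated delays are assumptions made for illustration; nothing here reflects how Astra is actually built.

```python
import asyncio


async def call_bike_shop(part: str) -> str:
    # Stand-in for a real phone call; the sleep simulates time on the line.
    await asyncio.sleep(0.05)
    return f"Shop has the {part} in stock"


async def chat_with_user(questions: list) -> list:
    answers = []
    for question in questions:
        await asyncio.sleep(0.01)  # simulate thinking and speaking time
        answers.append(f"answer to: {question}")
    return answers


async def session() -> tuple:
    # Start the call in the background, then keep talking in the foreground.
    call = asyncio.create_task(call_bike_shop("brake pad"))
    answers = await chat_with_user(["which hex key?", "how tight?"])
    # Report back once the delegated call has finished.
    report = await call
    return answers, report


answers, report = asyncio.run(session())
print(answers)
print(report)
```

Because the call runs as a task, the foreground conversation is never blocked on it; the assistant only waits for the result at the point where it reports back.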

5. Multi-Speaker Context Awareness

Mid-conversation, another person interrupted the user with a question. Astra detected the interruption, paused automatically, and when the user returned, it resumed exactly where it left off — without asking “where were we?” or losing any context. This demonstrates sophisticated speaker diarization and conversational state management.
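Conversational state management of this kind can be pictured as a small state machine. The sketch below assumes diarization has already happened upstream, so each utterance arrives with a speaker label. The `Conversation` class, the "next" command, and the repair steps are hypothetical, chosen only to show pause-and-resume without losing position.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    primary_speaker: str
    steps: list
    position: int = 0
    paused: bool = False
    log: list = field(default_factory=list)

    def hear(self, speaker: str, utterance: str) -> None:
        # Anyone other than the primary speaker counts as an interruption.
        if speaker != self.primary_speaker:
            self.paused = True
            self.log.append("(paused)")
            return
        # The primary speaker is back: resume from the saved position.
        if self.paused:
            self.paused = False
            self.log.append("(resumed)")
        if utterance == "next" and self.position < len(self.steps):
            self.log.append(self.steps[self.position])
            self.position += 1


convo = Conversation(
    primary_speaker="user",
    steps=["Loosen the caliper bolt", "Align the pads", "Retighten the bolt"],
)
convo.hear("user", "next")
convo.hear("guest", "want a coffee?")
convo.hear("user", "next")
print(convo.log)
```

The interruption changes only the `paused` flag, never `position`, which is why the assistant can pick up at the second step instead of starting over.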

6. Context-Aware Shopping Integration

Astra recognized the user’s pet from Google Photos and surfaced relevant product suggestions. It then facilitated a purchase through Google Shopping integration. The implication is clear: Astra builds a persistent understanding of your life context — your possessions, your preferences, your relationships — and uses it proactively.

Google Project Astra vs OpenAI GPT-4o: The Multimodal AI Race

The obvious comparison is OpenAI’s GPT-4o, which also handles text, audio, and video input. But the approaches differ fundamentally. According to OpenAI, GPT-4o can respond to audio input in as little as 232 milliseconds, with an average of 320 milliseconds, which is similar to human response time in conversation. Google hasn’t published specific latency numbers for Project Astra, but the I/O demo showed effectively zero perceptible lag.

The critical difference is in agent capabilities. GPT-4o excels at understanding and generating content across modalities, but Project Astra goes further by actively controlling your device — launching apps, making calls, navigating documents, and executing multi-step workflows. It’s the difference between an AI that answers questions and an AI that takes actions.
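The distinction between answering and acting can be sketched as a plan of tool calls that the assistant executes step by step. This is a generic agent-loop illustration, not Astra's architecture. The tool names, the `run_plan` helper, and the plan itself are all hypothetical.

```python
from typing import Callable


# Hypothetical tools; each returns a short observation string.
def search_web(query: str) -> str:
    return f"found: {query}"


def open_document(name: str) -> str:
    return f"opened: {name}"


def place_call(contact: str) -> str:
    return f"calling: {contact}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search_web": search_web,
    "open_document": open_document,
    "place_call": place_call,
}


def run_plan(plan: list) -> list:
    """Run each (tool, argument) step in order and collect the observations."""
    observations = []
    for tool_name, argument in plan:
        if tool_name not in TOOLS:
            # Refuse anything that is not an explicitly registered tool.
            observations.append(f"unknown tool: {tool_name}")
            continue
        observations.append(TOOLS[tool_name](argument))
    return observations


plan = [
    ("search_web", "bike brake repair manual"),
    ("open_document", "repair manual PDF"),
    ("place_call", "nearest bike shop"),
    ("unlock_door", "garage"),
]
observations = run_plan(plan)
print(observations)
```

A question-answering model stops after producing text; an agent of this shape turns its output into calls against a fixed registry, which is also where permission checks would naturally live.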

Google also has a significant advantage in ecosystem integration. Project Astra taps into Search, Gmail, Google Photos, Google Maps, Calendar, Tasks, Keep, and YouTube — a depth of personal context that no competitor can match. When Astra identifies your bike part from a Gmail receipt while looking at a live camera feed, that’s the Google ecosystem advantage in action.

Search Live and Developer API: Project Astra Beyond the Demo

The consumer-facing integration that matters most is Search Live. When using Google’s AI Mode search or Google Lens, users can now tap a “Live” button to ask questions about what their camera sees. Project Astra streams the video and audio to Gemini 2.5 Pro and returns answers with low enough latency to hold a spoken back-and-forth. This transforms Google Search from a text-input/text-output tool into an ambient, multimodal information layer.

For developers, Google announced that Project Astra powers a new feature in the Live API — a developer-facing endpoint that enables low-latency voice interactions with Gemini. Starting from I/O day, developers can build experiences that support audio and visual input with native audio output. This is Google democratizing the same technology that powered the keynote demo.

Gemini Live’s camera and screen-sharing features — previously limited to Gemini Advanced subscribers — are now free for all Android and iOS users. Integration with Google Maps, Calendar, Tasks, and Keep is planned for the coming months.

My Take: What 28 Years in Audio Taught Me About This

I’ve spent nearly three decades watching technology promises fail to deliver — remember when Siri was supposed to change everything? But Project Astra is different, and here’s why: it demonstrated continuous, multi-step, real-world task completion without a single failure or awkward pause in a live demo. That’s not marketing — that’s a technology that actually works.

As someone who runs automated pipelines and manages multiple AI agents daily, I see the implications clearly. Project Astra’s ability to handle interruptions, maintain context across modalities, and delegate tasks (like the phone call) mirrors the multi-agent architectures we build in software — but packaged for everyday consumers. Google is essentially bringing agentic AI to the mainstream.

The music production angle isn’t obvious, but it’s real. Imagine pointing your phone at a hardware synthesizer and having Astra pull up the manual, find a tutorial video, and even call the manufacturer’s support line — all hands-free while you’re patching cables. For studio professionals, a real-time multimodal assistant that understands physical context could eliminate the constant screen-switching that breaks creative flow.

My concern? Privacy. Astra’s power comes from deep integration with your Google data — emails, photos, location, search history. That same depth of context that makes it useful also makes it the most intimate AI surveillance tool ever built. Google needs to get the privacy controls right, or this becomes a dealbreaker for professional users who handle confidential client work.

What This Means for the AI Industry

Project Astra marks a clear inflection point. We’re moving from AI assistants that respond to queries to AI agents that perceive, reason, and act in the physical world. Google’s advantage isn’t just the AI model — it’s the combination of Gemini 2.5 Pro’s multimodal capabilities with Google’s unmatched ecosystem of services and data.

For competitors, the message is urgent. Apple Intelligence, Samsung Galaxy AI, and Microsoft Copilot are all working on similar capabilities, but none has demonstrated anything close to Project Astra’s level of integrated, real-time agent behavior. OpenAI’s GPT-4o is the closest rival, but it lacks the device control and ecosystem integration that make Astra practical for everyday use.

The next 12 months will determine whether Project Astra’s I/O demo becomes the default way people interact with their phones, or joins the graveyard of impressive Google demos that never shipped at scale. Given that Gemini Live camera features are already free for all users, Google seems serious about the former.
