March 14, 2026

Google just handed every producer on the planet a free AI sample engine, along with the full source code to hack it apart. On March 9, 2026, Google’s open source release of Infinite Crate landed on GitHub under an Apache 2.0 license, giving you a VST3/AU plugin that streams 48kHz stereo audio generated in real time by Lyria RealTime, the interactive music model built by Google DeepMind. Type “slow jazz piano, rainy night” and hear it materialize inside your session. No bouncing. No file management. Just sound, on demand.

This is not another toy demo. Magenta’s previous tools — Magenta Studio and DDSP VST — racked up over one million downloads combined. Infinite Crate builds on that momentum with a fundamentally different proposition: real-time generative audio running as a plugin, not an app. And because it is open source, the community can now reshape it into something Google never imagined.

What Google’s Open Source Infinite Crate Actually Does

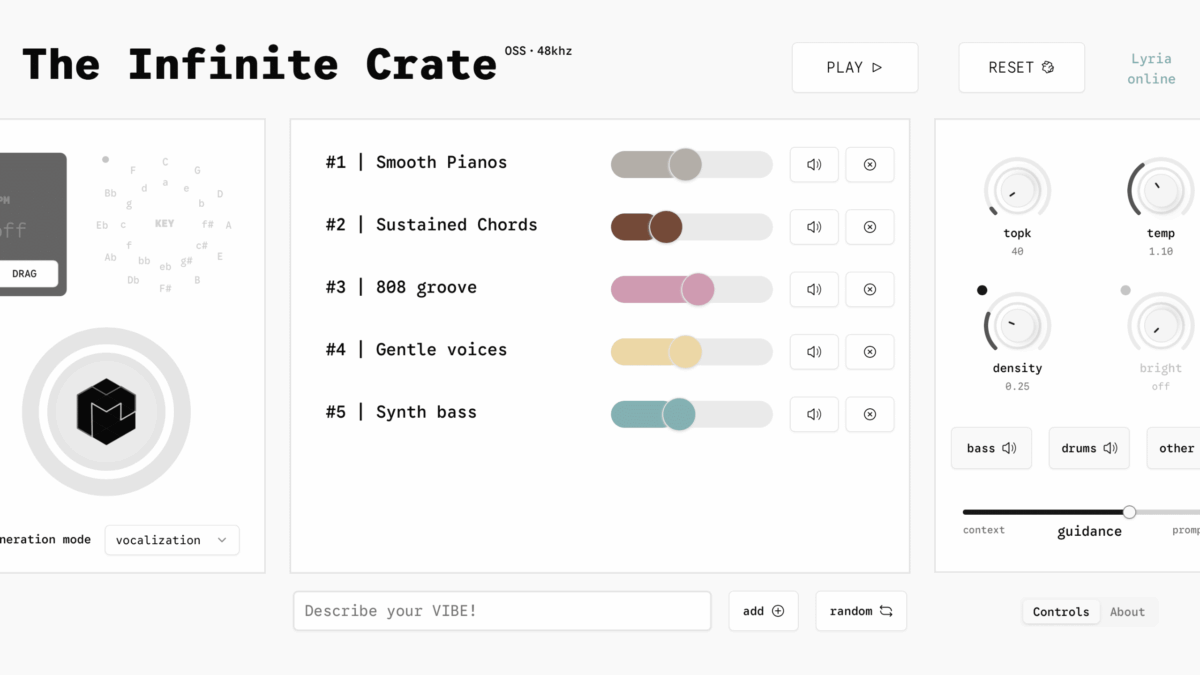

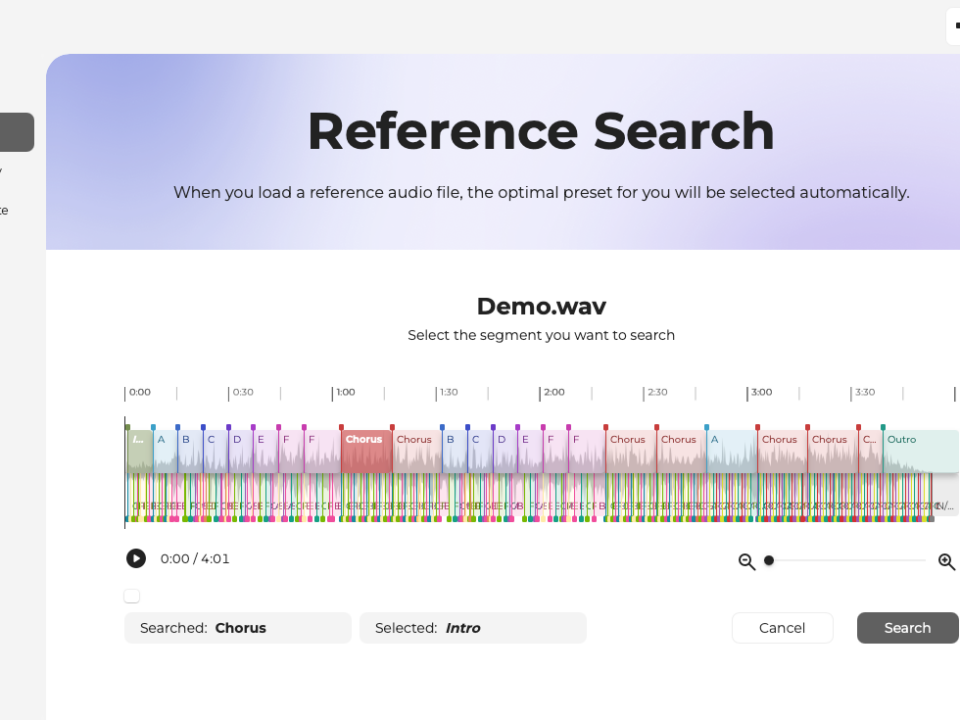

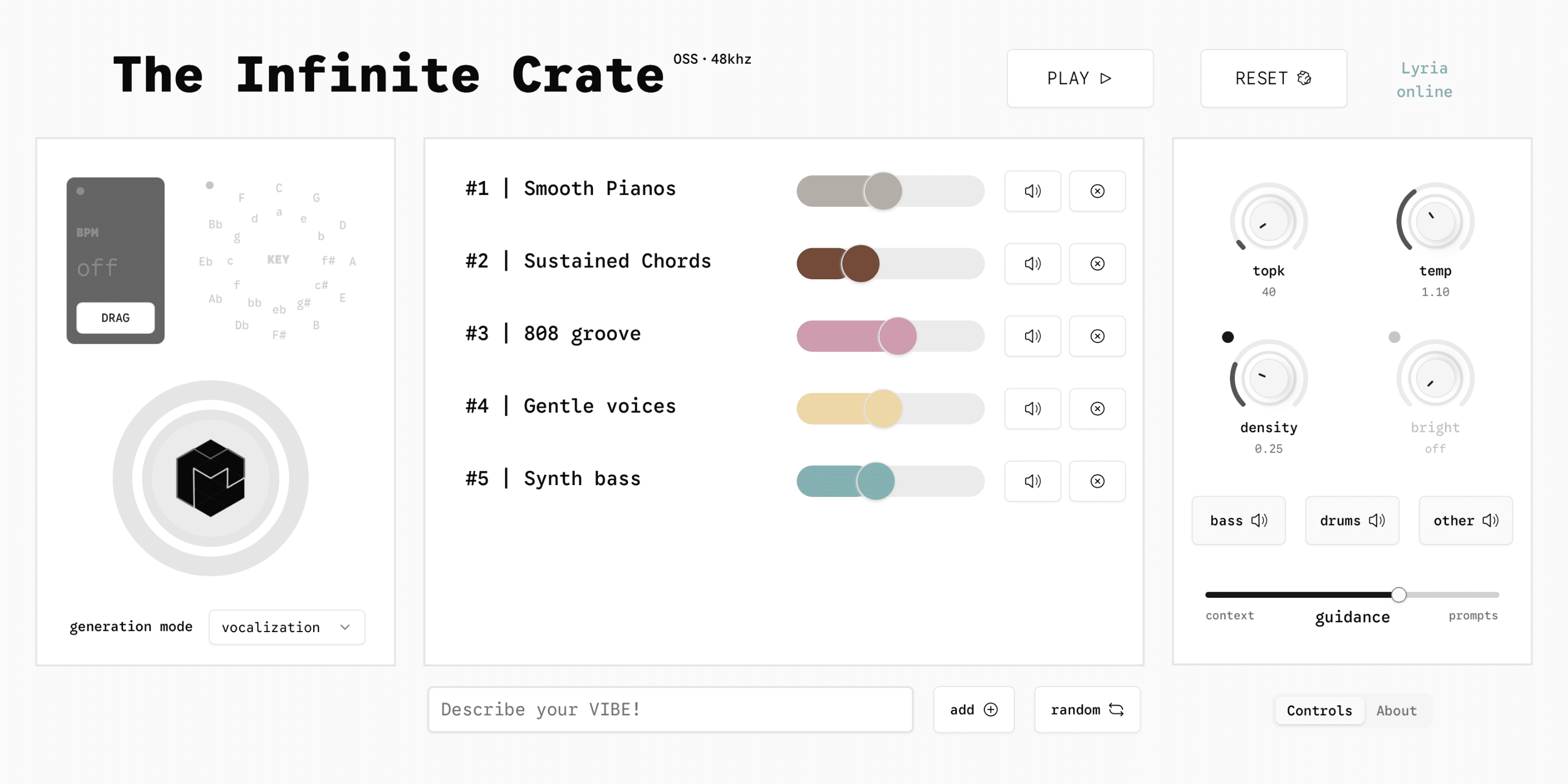

At its core, Infinite Crate is a bridge between Google’s cloud-based Lyria RealTime model and your digital audio workstation. You install the VST3 or AU plugin on Mac or Windows, grab a free Gemini API key from AI Studio, and the plugin appears on an instrument or bus track like any other virtual instrument. Instead of loading preset patches or samples, you type text prompts.

The generation controls go deeper than a simple text box. You get sliders for Guidance (how literally the model follows your prompt) and TopK (how much randomness to allow; in standard top-k sampling, the model picks from only the K most likely candidates at each step), plus BPM sync and key selection. There is even element-level muting: toggle bass or drums on and off independently within the generated stream. Want “driving techno, no hi-hats”? That works, both as a text prompt and as a mute toggle.
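
To make the TopK slider less abstract, here is a minimal sketch of generic top-k sampling in Python. It is not taken from the Infinite Crate codebase or from Lyria RealTime's internals; the function name `top_k_sample` and the example scores are assumptions for illustration. It only shows why a small K gives predictable output and a large K lets more unlikely choices through.

```python
import math
import random


def top_k_sample(logits, k, rng):
    """Sample an index from `logits`, considering only the k highest scores."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and len(logits)")
    # Keep the indices of the k largest scores and discard the rest.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the surviving candidates (subtract the max for stability).
    peak = max(logits[i] for i in top)
    weights = [math.exp(logits[i] - peak) for i in top]
    return rng.choices(top, weights=weights, k=1)[0]


rng = random.Random(0)
logits = [2.0, 1.5, 0.3, -1.0, -2.5]  # made-up scores for five candidates

narrow = {top_k_sample(logits, 1, rng) for _ in range(200)}
medium = {top_k_sample(logits, 3, rng) for _ in range(200)}
wide = {top_k_sample(logits, 5, rng) for _ in range(200)}
print(narrow, medium, wide)
```

With K set to 1 the sampler always returns the single most likely candidate. Raising K widens the pool it can draw from, which is the "more randomness" end of the slider.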

Three Workflow Modes

Infinite Crate ships with three distinct creative modes, each targeting a different stage of the production process:

- Digging the Crate — Browse and audition AI-generated loops and textures the way you would dig through a sample library. Generate, preview, drag what works onto your arrangement.

- Jamming — Set BPM and key, then let Lyria RealTime play alongside your session in real time. Tweak prompts and parameters on the fly while recording the output to an audio track.

- Prompt DJing — Chain prompts sequentially to create evolving sets. Transition from “ambient pads, cathedral reverb” to “breakbeat jungle, vinyl crackle” and the model morphs between them live.
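
One way to picture the Prompt DJing transition is as a crossfade between two weighted prompts. The sketch below assumes the model accepts several prompts with relative weights, which this article does not confirm; the function name `crossfade_prompts` and the dictionary layout are hypothetical.

```python
def crossfade_prompts(start, end, steps):
    """Return steps + 1 weighted-prompt pairs moving linearly from start to end."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    schedule = []
    for i in range(steps + 1):
        t = i / steps  # 0.0 at the first step, 1.0 at the last
        schedule.append([
            {"text": start, "weight": round(1.0 - t, 3)},
            {"text": end, "weight": round(t, 3)},
        ])
    return schedule


schedule = crossfade_prompts(
    "ambient pads, cathedral reverb",
    "breakbeat jungle, vinyl crackle",
    steps=4,
)
for pair in schedule:
    print(pair)
```

Each entry in `schedule` could be sent at a regular interval, for example once per bar, so the first prompt fades out while the second fades in.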

Under the Hood: Lyria RealTime and the Gemini API

The engine behind Infinite Crate is Lyria RealTime, Google DeepMind’s interactive music generation model. It outputs 48kHz stereo audio with low enough latency for real-time monitoring, which is impressive given that the computation happens on Google’s cloud servers, not on your local machine. The plugin sends the prompt and parameter state to the Gemini API endpoint, the model generates audio frames, and those frames stream back into the plugin’s buffer.
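
The streaming step can be pictured as a simple first-in, first-out buffer: the network side pushes samples in, and the host's audio callback pulls them out. The class below is an illustrative sketch only. `StreamBuffer`, `push`, and `pull` are hypothetical names, and a real-time plugin would typically use a preallocated, lock-free ring buffer rather than a Python `deque`.

```python
from collections import deque


class StreamBuffer:
    """FIFO of audio samples filled by the network and drained by the host."""

    def __init__(self):
        self._samples = deque()
        self.underruns = 0

    def push(self, chunk):
        """Store a chunk of samples that just arrived from the network."""
        self._samples.extend(chunk)

    def pull(self, n):
        """Return n samples for the host, padding with silence if we run dry."""
        available = min(n, len(self._samples))
        out = [self._samples.popleft() for _ in range(available)]
        if available < n:
            # Not enough audio arrived in time: count it and fill with zeros.
            self.underruns += 1
            out.extend([0.0] * (n - available))
        return out


buf = StreamBuffer()
buf.push([0.1, 0.2, 0.3])
first = buf.pull(2)   # enough data is buffered
second = buf.pull(4)  # only one sample is left, so the rest is silence
print(first, second, buf.underruns)
```

The silence padding is what an audible dropout looks like from the plugin's side: the host still gets its block of samples on time, but part of it is empty.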

This cloud-dependent architecture is both the feature and the catch. The feature: you do not need a beefy GPU or any specialized hardware. A MacBook Air handles it. The catch: you need a stable internet connection, and sessions currently cap at 10 minutes. After that, you re-trigger generation. Latency also depends on your connection quality, so producers in regions with less reliable internet infrastructure may encounter buffer underruns.
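
If you plan to capture more than one session's worth of audio, it helps to work out the takes in advance. This is a small arithmetic sketch, assuming the 10-minute cap applies per session exactly as described here; `plan_takes` is a hypothetical helper, not part of the plugin.

```python
import math

SESSION_CAP_SECONDS = 10 * 60  # the 10-minute cap described in this article


def plan_takes(total_seconds, cap_seconds=SESSION_CAP_SECONDS):
    """Split a target recording length into (start, end) spans of one session each."""
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    count = math.ceil(total_seconds / cap_seconds)
    return [
        (i * cap_seconds, min((i + 1) * cap_seconds, total_seconds))
        for i in range(count)
    ]


takes = plan_takes(25 * 60)  # a 25-minute ambient bed
print(takes)
```

A 25-minute target needs three takes here: two full 10-minute sessions and one 5-minute remainder.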

What Apache 2.0 Really Means for Producers and Developers

Apache 2.0 is one of the most widely used permissive open source licenses. It means you can modify the plugin code, fork it into your own project, redistribute it, and even sell products built on it, as long as you include a copy of the license, keep the existing copyright and attribution notices, and mark any files you changed. It also includes an explicit patent grant from contributors. For the music production community, the practical implications are huge:

- MIDI input integration — Developers can wire up MIDI note data to influence generation parameters, letting you play keys to steer the AI output.

- Hardware controller mapping — Map Guidance, TopK, BPM, and mute toggles to physical knobs and faders on controllers like Push or Launch Control.

- Custom forks — Specialized versions for specific genres, educational tools, or accessibility interfaces are now possible without waiting for Google to build them.

- Local model exploration — Google’s Magenta team has already confirmed that it is exploring locally runnable variants with Magenta RealTime, which could eventually eliminate the cloud dependency altogether.
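
As a rough idea of what the hardware controller mapping could involve, the sketch below scales 7-bit MIDI CC values into parameter ranges. The CC numbers, the parameter names, and the numeric ranges are all assumptions chosen for illustration; they are not read from the plugin source.

```python
def cc_to_range(cc_value, low, high):
    """Scale a 7-bit MIDI CC value (0-127) linearly into [low, high]."""
    if not 0 <= cc_value <= 127:
        raise ValueError("MIDI CC values are 0-127")
    return low + (high - low) * cc_value / 127


# Hypothetical CC assignments and ranges, for illustration only.
CC_MAP = {
    21: ("guidance", 0.0, 6.0),
    22: ("top_k", 1, 1000),
    23: ("bpm", 60, 200),
}


def apply_cc(params, cc_number, cc_value):
    """Return a copy of params updated by one incoming CC message."""
    updated = dict(params)
    if cc_number not in CC_MAP:
        return updated  # unmapped knobs leave the state untouched
    name, low, high = CC_MAP[cc_number]
    value = cc_to_range(cc_value, low, high)
    # Integer-ranged parameters are rounded; float ranges are kept as-is.
    updated[name] = round(value) if isinstance(low, int) else value
    return updated


state = {"guidance": 4.0, "top_k": 40, "bpm": 120}
state = apply_cc(state, 23, 127)  # BPM knob turned fully clockwise
print(state)
```

Returning a fresh dictionary on each message keeps the previous state intact, which makes it easier to debounce or undo knob movements in a fork.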

Limitations You Need to Know Before Diving In

Infinite Crate is not production-ready in the “replace your sample library” sense — at least not yet. Here is what to watch out for:

- Cloud dependency — No internet, no sound. Every generation call hits Google’s servers.

- 10-minute session cap — Sessions time out. For long arrangement work, you will need to record the output to audio tracks and re-trigger as needed.

- Imprecise key control — The model follows key suggestions loosely. If you need exact harmonic accuracy, you will still need to pitch-correct or re-generate until it lands.

- No Linux, no AAX — The plugin currently ships for Mac and Windows only as VST3 and AU. Pro Tools users and Linux-based studio setups are out of luck for now.

- Audio quality variance — Some prompts produce remarkably convincing results; others sound recognizably synthetic. Results are inconsistent across genres.

Where This Fits in a Modern Production Workflow

The most practical use case right now is not replacement — it is ideation. When you are staring at an empty arrangement and need a starting point, typing “lo-fi hip-hop beat, vinyl warmth, detuned keys” gets you something to react to in seconds. Record the output, chop it, process it, and build from there. The Jamming mode works particularly well for this: set your session tempo, let Infinite Crate generate alongside a rough arrangement, and capture the moments that spark ideas.

For sound designers and scoring composers, the Prompt DJing mode opens up interesting territory. You can create evolving ambient beds, transition between sonic palettes live, and capture hours of raw material for further processing. This is where the tool genuinely saves time — not by replacing deliberate composition, but by generating raw sonic material at a speed that manual synthesis cannot match.

The open source release changes the long-term calculus significantly. Producers who are also developers — and there are more of us every year — can now build the exact integration they need. Imagine a version that reads your MIDI clips and generates complementary parts, or one that analyzes your mix bus and suggests textures to fill frequency gaps. Apache 2.0 makes all of this possible without waiting for Google’s product roadmap.

Whether Infinite Crate becomes a staple production tool depends on two things: the community’s ability to extend it through the open source codebase, and Google’s progress toward locally-runnable models that eliminate the cloud bottleneck. For now, it is the most accessible real-time AI music tool we have ever seen — free, open, and already inside your DAW.

Integrating AI-generated audio into your production workflow — or building a hybrid studio setup that puts these tools to work — takes some planning. If you want help designing a workflow that actually fits your creative process, let’s talk.