
January 12, 2026


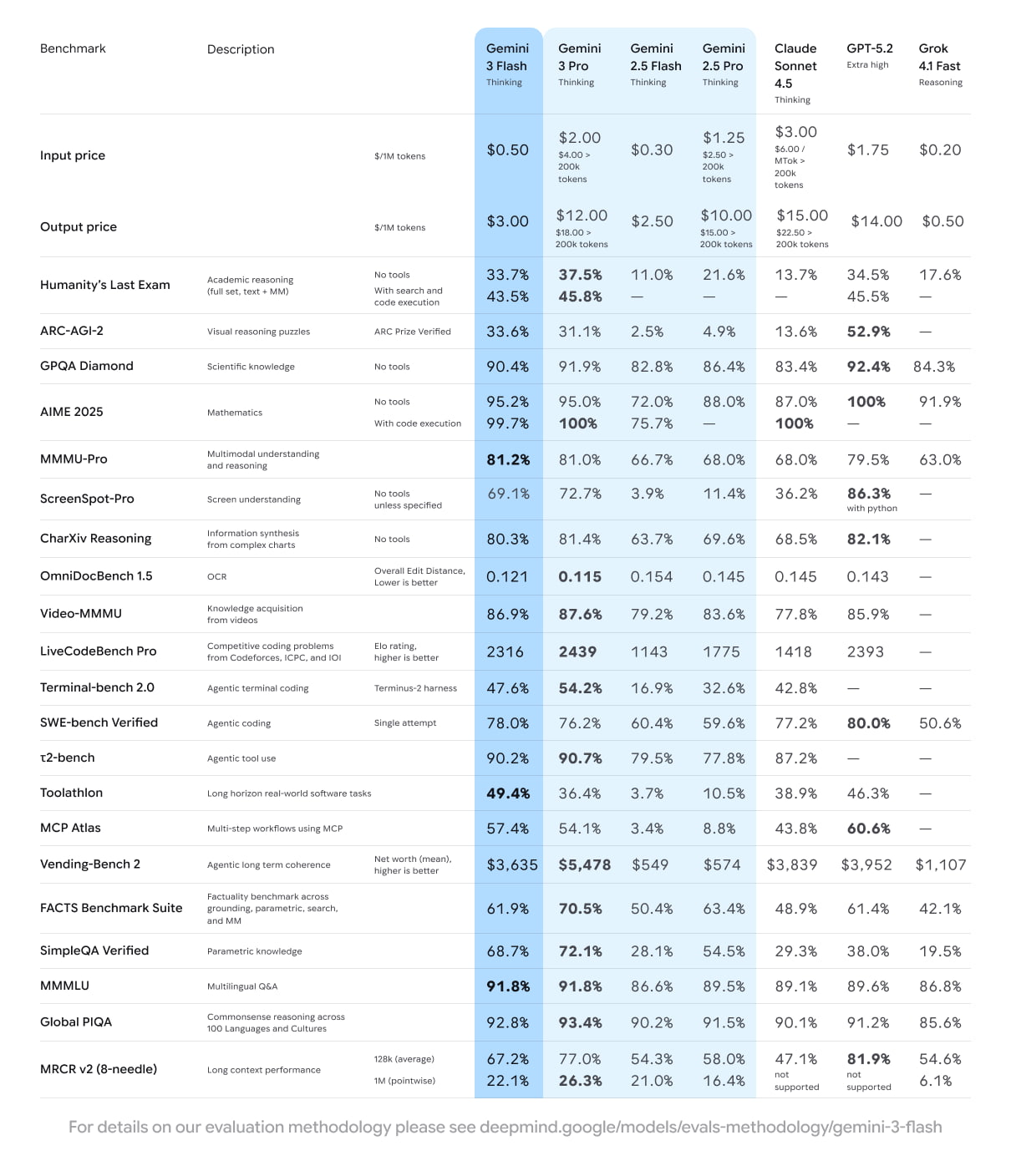

GPQA Diamond 93.8%. Humanity’s Last Exam 41.0%. ARC-AGI-2 45.1%. These are the numbers posted by the Deep Think mode of Google’s Gemini 3 Pro Preview, and accessing them costs $250 a month. After spending weeks with the complete Gemini 3 model family since its rollout in late 2025, I’ve developed a detailed perspective on what these numbers actually mean in practice. Here’s my honest breakdown of whether the premium AI Ultra tier is justified, or whether the nearly free Flash model is the real story everyone should be paying attention to.

Gemini 3 Pro Preview Deep Think: How Parallel Reasoning Changes Everything

On November 18, 2025, Google unveiled Gemini 3 Pro as its most advanced model yet. The launch was accompanied by record-breaking benchmark scores and a brand-new dedicated coding application, sending immediate shockwaves across the AI industry. Developers, researchers, and enterprise teams scrambled to test the model against their most challenging use cases. But the real paradigm shift arrived roughly two weeks later, and it went far beyond incremental improvements.

In December 2025, Google began rolling out Gemini 3 Deep Think mode exclusively to AI Ultra subscribers paying $250 per month. The fundamental innovation behind Deep Think is advanced parallel reasoning: instead of following a single chain of thought sequentially, as most AI models do today, Deep Think explores multiple hypotheses simultaneously. Think of it as the difference between a chess player considering one move at a time and a grandmaster evaluating dozens of potential lines in parallel, weighing probabilities and counter-strategies before committing to the best path.

This approach represents a meaningful departure from how reasoning models have traditionally worked. Many current-generation systems, including competitors from OpenAI and Anthropic in their standard modes, rely on chain-of-thought reasoning that follows a single logical thread. Deep Think’s parallel hypothesis exploration means it can identify dead ends faster, discover non-obvious connections between concepts, and arrive at more robust conclusions, especially on problems where the correct approach isn’t immediately apparent.
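Google has not published how Deep Think implements parallel reasoning, so the sketch below is only an analogy under stated assumptions. It uses a hypothetical toy graph of "reasoning steps" with made-up plausibility scores: a single greedy chain commits to the most promising first step and dead-ends, while a beam search that keeps several hypotheses alive at once still reaches the goal. The graph, scores, and beam width are illustrative, not Google’s method.

```python
from typing import Optional

# Hypothetical toy search space: each node lists (next step, plausibility score).
# The scores are made up so that the locally "best" first step leads to a dead end.
GRAPH: dict[str, list[tuple[str, float]]] = {
    "start": [("A", 0.9), ("B", 0.6)],
    "A": [("A1", 0.8)],
    "A1": [],  # dead end: no way forward from here
    "B": [("goal", 0.7)],
    "goal": [],
}


def single_chain(start: str, goal: str, max_steps: int = 10) -> Optional[list[str]]:
    """Follow one line of reasoning, always taking the highest-scoring next step."""
    path = [start]
    for _ in range(max_steps):
        node = path[-1]
        if node == goal:
            return path
        options = GRAPH.get(node, [])
        if not options:
            return None  # stuck, and no alternative hypotheses were kept
        best_next, _score = max(options, key=lambda opt: opt[1])
        path.append(best_next)
    return None


def parallel_hypotheses(
    start: str, goal: str, width: int = 3, max_steps: int = 10
) -> Optional[list[str]]:
    """Beam search: keep up to `width` partial paths alive at every step."""
    beam: list[tuple[float, list[str]]] = [(1.0, [start])]
    for _ in range(max_steps):
        candidates: list[tuple[float, list[str]]] = []
        for score, path in beam:
            if path[-1] == goal:
                return path
            for nxt, p in GRAPH.get(path[-1], []):
                # A path's score is the product of its step scores.
                candidates.append((score * p, path + [nxt]))
        if not candidates:
            return None
        # Dead-end paths produce no candidates, so they simply drop out here.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beam = candidates[:width]
    return None


print(single_chain("start", "goal"))         # None
print(parallel_hypotheses("start", "goal"))  # ['start', 'B', 'goal']
```

With `width=1` the beam search degenerates into the single greedy chain and fails the same way. That is the intuition behind the chess analogy: keeping more hypotheses alive costs extra compute (and, for Deep Think, minutes of latency) in exchange for robustness.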

This depth comes at a practical cost: each response takes several minutes to generate. For quick everyday questions — summarizing an article, drafting an email, explaining a concept — Deep Think is dramatic overkill. But for complex scientific reasoning, advanced mathematics, multi-step logical proofs, and high-difficulty coding challenges where correctness matters more than speed, the approach pays enormous dividends. According to 9to5Google’s detailed coverage, Deep Think posted these benchmark numbers upon release:

- GPQA Diamond: 93.8%, a set of graduate-level science questions written by domain experts and designed so that skilled non-experts cannot simply look up the answers

- Humanity’s Last Exam: 41.0% (no tools), a benchmark of expert-written questions intended to be among the hardest closed-ended academic tests for AI systems

- ARC-AGI-2: 45.1% (with code execution), a benchmark of novel abstract-reasoning puzzles that are easy for humans but hard for AI, widely used to gauge fluid reasoning

These numbers deserve careful context. GPQA Diamond is the hardest subset of GPQA: its physics, chemistry, and biology questions require multi-step scientific deduction and were validated so that domain experts answer them correctly while most skilled non-experts, even with web access, do not. A score of 93.8% would have looked far out of reach when the benchmark was published in 2023. The Humanity’s Last Exam score of 41.0% is perhaps even more impressive, given that the benchmark’s questions were selected precisely because frontier AI models of the time could not answer them.

Gemini 3 Flash: Pro-Level Intelligence at a Fraction of the Cost

While Deep Think targets the premium end of the market at $250 a month, Google attacked from the opposite direction just as aggressively. On December 17, 2025, it launched Gemini 3 Flash with a value proposition that’s genuinely difficult to ignore: frontier-level intelligence delivered at Flash speed and pricing.

The headline claim is striking: Gemini 3 Flash outperforms the previous-generation flagship, Gemini 2.5 Pro, while running 3x faster. To put that in perspective, Gemini 2.5 Pro was considered one of the most capable AI models in the world just months earlier, and now a budget-tier successor beats it on many benchmarks. The pricing is equally aggressive: $0.50 per million input tokens and $3.00 per million output tokens, which makes it accessible to individual developers, startups, and hobbyists alike. Per 9to5Google’s report, Flash delivered these benchmark scores at launch:

- GPQA Diamond: 90.4% — just 3.4 percentage points behind the $250/month Deep Think mode

- SWE-Bench Verified: 78% — measuring performance on real-world software engineering tasks pulled from actual GitHub repositories

- MMMU Pro: 81.2% — testing multimodal understanding across visual, textual, and reasoning domains

Let those numbers sink in for a moment: on GPQA Diamond, the gap between the $250/month Deep Think mode and the nearly free Flash tier is only 3.4 percentage points. For the vast majority of practical use cases (content generation, general-purpose coding assistance, document summarization, language translation, data analysis), Flash delivers more than enough capability to do the job. The fact that a budget-tier model released in late 2025 surpasses what was the pinnacle of AI performance just six months earlier shows how fast the field is moving.
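To make the Flash pricing concrete, here is a small calculator built on the list prices quoted above ($0.50 per million input tokens, $3.00 per million output tokens). It assumes text-only usage at those flat rates; the monthly token volumes in the example are hypothetical, and real bills can differ (other modalities, discounts, or later price changes). AI Ultra is a consumer subscription rather than API credit, so comparing the result with $250 only gives a sense of scale.

```python
# Gemini 3 Flash list prices as quoted in this article, in USD per 1M tokens.
# Assumption: flat text pricing, no discounts, no other-modality rates.
INPUT_USD_PER_M = 0.50
OUTPUT_USD_PER_M = 3.00


def flash_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the API cost in USD for the given token counts."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_M
    return input_cost + output_cost


# Hypothetical heavy month: 20M tokens in, 4M tokens out.
monthly = flash_cost_usd(20_000_000, 4_000_000)
print(f"${monthly:.2f} per month")  # $22.00 per month
```

Under these assumptions, even that fairly heavy month costs less than a tenth of the $250 AI Ultra price.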

The Complete Gemini 3 Family in January 2026: Google’s Strategic Three-Tier Approach

By January 2026, the Gemini 3 family had taken its complete form with three distinct offerings: Pro (Preview), Flash, and Deep Think. Google continued investing in the surrounding infrastructure during this period. On January 21, the gemini-pro-latest alias was updated to point to gemini-3-pro-preview, making the new model the default target of that alias even though it still carries the preview label. Then on January 29, Computer Use tool support was extended to both gemini-3-pro-preview and gemini-3-flash-preview, enabling AI-driven browser and desktop automation across the model family.

Analyzing this lineup through a competitive lens against OpenAI’s GPT series and Anthropic’s Claude family reveals Google’s deliberate and well-executed three-tier strategy:

- Flash: Mass market dominance — fast, affordable, and surprisingly powerful enough to replace last-gen flagships for most workflows

- Pro (Preview): Developer and professional tier — balanced high performance with robust tool integration and the best cost-to-capability ratio for serious production applications

- Deep Think: Elite reasoning tier — for scenarios where accuracy on complex, multi-step problems is worth any cost and any amount of latency

This segmentation mirrors what we’ve seen in other technology markets. Just as cloud computing evolved from one-size-fits-all instances to purpose-built compute tiers (general purpose, compute-optimized, memory-optimized), AI models are now being deliberately stratified to match diverse workload requirements. Google appears to understand this dynamic better than its competitors, at least in terms of model lineup breadth.
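The three-tier split can be read as a simple routing policy. Here is one hypothetical way to encode it in Python; the decision rules and the 300-second threshold are assumptions for illustration, not Google guidance. The two model IDs are the ones named in the January updates above, while "deep-think" is only a placeholder label, since Deep Think is described here as an AI Ultra mode rather than an API model ID.

```python
from dataclasses import dataclass

# Model IDs as named in this article.
FLASH = "gemini-3-flash-preview"
PRO = "gemini-3-pro-preview"
# Placeholder label only: not an API model ID.
DEEP_THINK = "deep-think"

# Hypothetical cut-off, reflecting that Deep Think answers take several minutes.
DEEP_THINK_MIN_LATENCY_S = 300.0


@dataclass
class Task:
    hard_multistep_reasoning: bool     # proofs, hard scientific deduction, tricky debugging
    latency_budget_s: float            # how long the caller can wait for an answer
    production_tooling: bool = False   # heavy tool use inside a production application


def pick_tier(task: Task) -> str:
    """Default to Flash; escalate only when the task's needs call for it."""
    if task.hard_multistep_reasoning and task.latency_budget_s >= DEEP_THINK_MIN_LATENCY_S:
        return DEEP_THINK
    if task.hard_multistep_reasoning or task.production_tooling:
        return PRO
    return FLASH


print(pick_tier(Task(hard_multistep_reasoning=False, latency_budget_s=2.0)))   # gemini-3-flash-preview
print(pick_tier(Task(hard_multistep_reasoning=True, latency_budget_s=30.0)))   # gemini-3-pro-preview
```

The rules fall through to Flash and escalate only when a task flags hard reasoning or production tooling, mirroring the argument that most workloads belong on the cheapest tier.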

Who Actually Needs the $250 AI Ultra Subscription?

Let me be direct about this: the $250/month Google AI Ultra subscription is excessive for the overwhelming majority of users. Deep Think’s 93.8% GPQA Diamond score is undeniably impressive on paper, but the real-world gap between it and Flash’s 90.4% only manifests in a narrow set of highly specific scenarios. Most people will never encounter a use case where that 3.4 percentage point difference changes the outcome of their work.
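Whether 3.4 points is "small" depends on framing. A quick calculation on the GPQA Diamond scores quoted above turns the percentage-point gap into error rates: Deep Think misses 6.2% of questions and Flash misses 9.6%, so Deep Think makes roughly a third fewer errors on this benchmark. This is benchmark arithmetic only; it says nothing directly about error rates on any particular real-world workload.

```python
def accuracy_gap(better_pct: float, worse_pct: float) -> tuple[float, float]:
    """Return (gap in percentage points, relative reduction in error rate)."""
    gap_pp = better_pct - worse_pct
    error_better = 100.0 - better_pct  # share of questions the stronger model misses
    error_worse = 100.0 - worse_pct    # share of questions the weaker model misses
    relative_error_reduction = (error_worse - error_better) / error_worse
    return gap_pp, relative_error_reduction


# GPQA Diamond scores quoted in this article: Deep Think vs. Flash.
gap, rel = accuracy_gap(93.8, 90.4)
print(f"{gap:.1f} points, {rel:.0%} fewer errors")  # 3.4 points, 35% fewer errors
```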

Based on the benchmark data, published use cases, and practical testing observations, here’s where AI Ultra with Deep Think genuinely earns its substantial price tag:

- Academic researchers who routinely tackle complex multi-step scientific reasoning problems, the kind of challenge where a 3.4-percentage-point accuracy improvement can make the difference between arriving at a correct proof and hitting a dead end that wastes weeks of work

- Enterprise development teams working on high-stakes architecture decisions or debugging mission-critical production systems, where Deep Think’s parallel hypothesis exploration can surface subtle edge cases and race conditions that single-path sequential reasoning can miss

- Data-driven decision makers in specialized fields like quantitative finance, pharmaceutical research, or materials science, where a few percentage points of improved reasoning accuracy translates directly into business outcomes worth millions of dollars

For everyone else — and that includes content creators, everyday software developers, students, small business owners, and casual AI enthusiasts — Gemini 3 Flash represents what might be the most extraordinary value proposition in AI history. It delivers performance that would have been considered flagship-tier just six months ago, at speeds and prices that make it practical for constant daily use. That’s the genuinely revolutionary development here, even if the headline-grabbing benchmark numbers understandably belong to Deep Think.

Beyond Gemini 3 Pro Deep Think: The 2026 AI Reasoning Race Accelerates

Google’s ambitions didn’t stop with the initial Gemini 3 lineup. On February 19, 2026, the company announced Gemini 3.1 Pro, pushing complex problem-solving to a level few observers expected so soon. According to the official announcement, its ARC-AGI-2 score reached a remarkable 77.1%, about 1.7 times the 45.1% achieved by the original Gemini 3 Pro with Deep Think roughly three months earlier. An improvement of that magnitude in such a compressed timeframe is rare in the history of AI model development.
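For the record, the ARC-AGI-2 jump works out as follows, using only the two scores cited here (45.1% for Gemini 3 Pro with Deep Think, 77.1% for Gemini 3.1 Pro):

```python
def benchmark_change(old_pct: float, new_pct: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, ratio of new score to old)."""
    return new_pct - old_pct, new_pct / old_pct


# ARC-AGI-2: Gemini 3 Pro with Deep Think vs. Gemini 3.1 Pro, as cited here.
gain, ratio = benchmark_change(45.1, 77.1)
print(f"+{gain:.1f} points, {ratio:.2f}x")  # +32.0 points, 1.71x
```

The two scores come from different configurations (a Deep Think mode versus a newer Pro model), so the ratio describes the headline numbers rather than a controlled comparison.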

This rapid pace of iteration carries profound implications for anyone making purchasing decisions about AI subscriptions today. If a core reasoning benchmark can jump by 32 percentage points in a single quarter, the premium that Deep Think commands at $250 per month is almost certainly temporary. The capabilities that justify that price tag today will inevitably trickle down to cheaper and faster model tiers, likely within months rather than years. For budget-conscious users and organizations, patience may be the most financially sound AI strategy in early 2026.

What Google ultimately demonstrated with the Gemini 3 family is a clear and compelling vision for the future of AI: it’s not about building one monolithic model to rule them all. It’s about constructing an ecosystem where users can select the right model for their specific use case, budget, and latency requirements. Deep Think sits at the apex of that ecosystem for pure reasoning power and scientific problem-solving, while Flash democratizes access to capabilities that were elite-tier exclusives just months before. Whether you’re seriously evaluating AI Ultra for your research lab or happily running Flash queries at well under a dollar per million input tokens for your startup, the Gemini 3 family has dramatically raised the floor for what we should expect from AI reasoning systems. And given the trajectory from 3 Pro to 3.1 Pro, the ceiling is still rising fast.

Need AI automation systems or tech consulting? Sean Kim brings 28+ years of music, audio, and technology expertise to every project.
