1501 Elo. That single number just rewrote the AI leaderboard, and Google Gemini 3 is the model behind it. After months of leaks, vague social media teases, and a “gemini-3-pro-preview-11-2025” model ID spotted in Vertex AI code, Google has officially unveiled its most capable AI system to date, and the benchmarks are not subtle.

Google Gemini 3 Pro: The Numbers That Matter

Google Gemini 3 Pro arrives as the centerpiece of a sweeping rollout across Search, the Gemini app, AI Studio, Vertex AI, the Gemini CLI, and Google’s brand-new Antigravity IDE. Unlike previous Gemini releases that trickled out to a handful of products first, Gemini 3 hit everything simultaneously — a signal of just how confident Google is in this generation.

The benchmark results back that confidence. On LMArena, Gemini 3 Pro tops the leaderboard at 1501 Elo, surpassing Gemini 2.5 Pro’s 1451. On GPQA Diamond — a graduate-level science reasoning benchmark — it scores 91.9%. Humanity’s Last Exam, designed to push frontier models to their absolute limits, yields 37.5% without any tool usage, compared to GPT-5.1’s 26.5%. And on the mathematical front, AIME 2025 sees a perfect 100% score with code execution enabled.
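To put the 50-point LMArena gap in perspective, a rating difference can be converted into an expected head-to-head preference rate. The sketch below assumes the standard Elo logistic formula with a 400-point scale. LMArena's exact rating methodology may differ, so treat the result as a rough guide rather than an official figure.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that model A is preferred over model B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# LMArena scores quoted in the paragraph above.
gemini_3_pro = 1501
gemini_2_5_pro = 1451

rate = expected_win_rate(gemini_3_pro, gemini_2_5_pro)
print(f"Expected preference rate for Gemini 3 Pro: {rate:.1%}")
```

Under these assumptions, a 50-point lead corresponds to being preferred in roughly 57% of head-to-head comparisons: a clear edge, but far from a sweep.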

Multimodal Reasoning: Text, Code, and Video in a Single Request

What makes Google Gemini 3 genuinely different from its predecessors is not just faster text generation — it is the unified multimodal architecture. Gemini 3 Pro processes text, images, video, audio, and PDFs in a single request. No separate pipelines. No stitching together vision and language models. You send a 200-page PDF, three screenshots, and a 10-minute video clip, and the model reasons across all of them at once.

The context window stretches to 1,048,576 tokens on the input side with up to 65,536 tokens of output — enough to swallow entire codebases, hour-long meeting transcripts, or multi-document legal reviews in one pass. On multimodal benchmarks, Gemini 3 Pro scores 81% on MMMU-Pro and 87.6% on Video-MMMU, establishing new state-of-the-art marks for combined visual and textual reasoning.
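As a rough illustration of what those limits mean in practice, the sketch below checks whether a set of documents fits within the quoted input and output budgets. The per-document token counts are hypothetical estimates chosen for the example, not measurements; real counts depend on the tokenizer and the media type.

```python
INPUT_LIMIT = 1_048_576   # input tokens quoted above (2**20)
OUTPUT_LIMIT = 65_536     # output tokens quoted above (2**16)


def fits_in_context(input_token_counts, requested_output_tokens=0):
    """Return True if the combined inputs and the requested output stay within the limits."""
    total_input = sum(input_token_counts)
    return total_input <= INPUT_LIMIT and requested_output_tokens <= OUTPUT_LIMIT


def remaining_input_budget(input_token_counts):
    """Input tokens left over after the given documents are included."""
    return INPUT_LIMIT - sum(input_token_counts)


# Hypothetical token estimates for a single request.
estimates = {
    "codebase": 600_000,
    "meeting_transcript": 150_000,
    "legal_documents": 250_000,
}

counts = list(estimates.values())
print("Fits:", fits_in_context(counts, requested_output_tokens=8_000))
print("Input tokens to spare:", remaining_input_budget(counts))
```

A pre-flight check like this is cheap and avoids sending a request that would be rejected or silently truncated.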

For developers, this means applications that previously required separate OCR, speech-to-text, and vision model integrations can now be built around a single API call. Financial analysts can feed quarterly earnings PDFs alongside earnings call recordings. Legal teams can cross-reference contracts with video depositions. Workflows that once needed several specialized pipelines collapse into one request.
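A minimal sketch of what a single mixed-media request body might look like is shown below. It only builds the JSON payload and sends nothing. The field names (`contents`, `parts`, `inline_data`, `mime_type`) follow the publicly documented Gemini REST request shape as best understood here, and the model ID is an assumption, so both should be checked against the current API reference. Large media such as long videos would likely need a separate upload step rather than inline bytes.

```python
import base64
import json

MODEL_ID = "gemini-3-pro-preview"  # assumed model ID; verify against the current model list


def inline_part(data: bytes, mime_type: str) -> dict:
    """Wrap raw bytes as a base64-encoded inline part."""
    encoded = base64.b64encode(data).decode("ascii")
    return {"inline_data": {"mime_type": mime_type, "data": encoded}}


def build_request(prompt: str, attachments: list) -> dict:
    """Combine one text prompt and any number of (bytes, mime_type) attachments."""
    parts = [{"text": prompt}]
    parts += [inline_part(data, mime_type) for data, mime_type in attachments]
    return {"contents": [{"role": "user", "parts": parts}]}


# Placeholder bytes stand in for real files in this sketch.
payload = build_request(
    "Compare the figures in the report with the attached screenshot.",
    [
        (b"placeholder PDF bytes", "application/pdf"),
        (b"placeholder PNG bytes", "image/png"),
    ],
)

print(json.dumps(payload, indent=2)[:300])
```

The point of the design is that text, document, and image inputs travel as sibling parts of one message, so the model sees them together instead of receiving the output of separate preprocessing steps.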

Deep Think Mode: When Standard Reasoning Is Not Enough

Google positions Deep Think as a higher-intensity reasoning mode for the hardest problems — competitive programming, multi-step mathematical proofs, and long-horizon planning tasks that require sustained logical chains. Think of it as the model shifting into a deliberate, methodical problem-solving gear.

The Deep Think benchmarks are striking. Humanity’s Last Exam jumps from 37.5% to 41.0% in the initial release, later reaching 48.4% — setting a new standard for frontier model reasoning. GPQA Diamond climbs to 93.8%. Most impressively, ARC-AGI-2 — the benchmark specifically designed to test genuine reasoning rather than pattern matching — goes from Gemini 2.5 Pro’s 4.9% to 45.1% with code execution, eventually reaching 84.6% in subsequent updates, verified by the ARC Prize Foundation. On Codeforces competitive programming, Deep Think achieves 3455 Elo — a level that would place it among the top human competitive programmers globally.

Deep Think will be available to AI Ultra subscribers in the coming weeks, with enterprise access through Vertex AI. For most everyday tasks, standard Gemini 3 Pro more than suffices. Deep Think is the scalpel you reach for when the standard model hits its limits on truly complex problems.

Generative UI: The Feature Nobody Expected

Perhaps the most surprising element of the Gemini 3 launch is generative UI — the model’s ability to create entire custom user interfaces dynamically tailored to individual queries. Two experiments ship at launch:

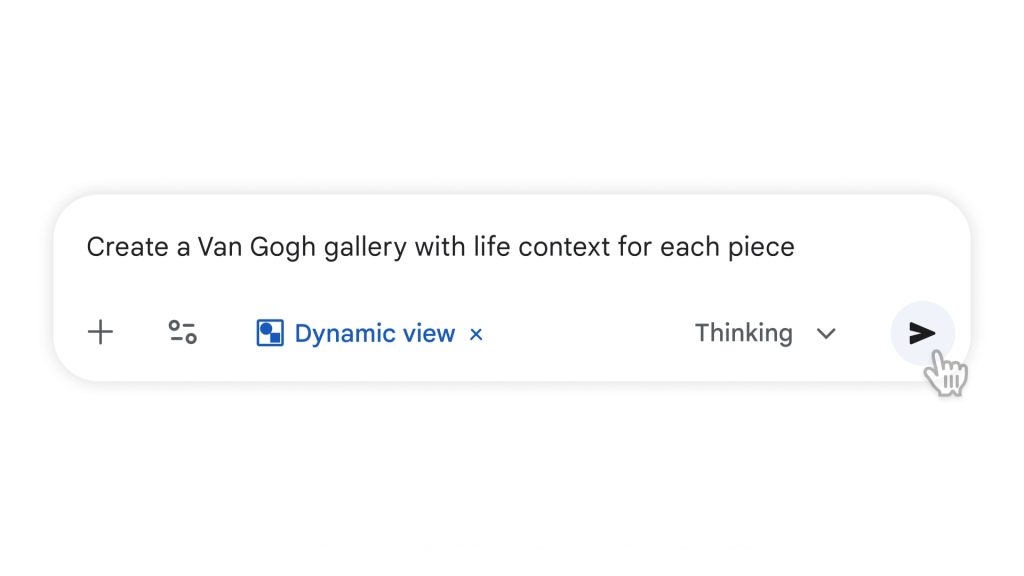

- Dynamic View creates customized interactive responses that adapt based on the audience. Ask it to explain quantum mechanics, and it generates a different experience for a physics PhD student versus a curious 12-year-old.

- Visual Layout produces immersive magazine-style interfaces complete with photos, sliders, and customizable filters — turning what would be a wall of text into an interactive exploration.

This is a departure from the chatbot paradigm. Instead of answering questions with paragraphs of text, Gemini 3 can build mortgage calculators, physics simulations, and interactive data dashboards on the fly. The system combines tool access (web search, image generation) with detailed instructions that cover goals, planning, and technical specifications; post-processors then clean up the output.

Coding and Agentic Capabilities: From IDE to Infrastructure

Google Gemini 3’s coding prowess is validated by a 76.2% score on SWE-bench Verified and a WebDev Arena Elo of 1487. But raw benchmarks only tell part of the story. The real shift is in how Gemini 3 integrates into developer workflows.

Through Code Assist, Gemini 3 handles multi-step coding tasks in agent mode across common IDEs. The Gemini CLI enables application scaffolding, refactoring, documentation generation, and lightweight autonomous agents — all from the terminal. And then there is Google Antigravity, a brand-new agentic development platform available on Mac, Windows, and Linux, where agents autonomously plan and execute complex software tasks while validating code.

For enterprise customers, the agentic capabilities extend beyond code. Google highlights financial analysis, supply-chain planning, and contract review as key use cases — tasks that require sustained reasoning across multiple documents, data sources, and decision trees. Terminal-Bench 2.0, which measures tool use and computer operation, sees Gemini 3 score 54.2%, suggesting the model is increasingly capable of operating as an autonomous agent rather than just a text generator.

Access, Pricing, and the Subscription Tiers

Google Gemini 3 Pro is available immediately in the Gemini app for all users, with AI Pro and AI Ultra subscribers accessing advanced features via a dropdown menu. In AI Mode in Google Search, subscribers can select “Thinking: 3 Pro reasoning and generative layouts” for the full experience. Free US users are set to gain AI Mode access as well, with tiered usage limits, as the rollout expands.

For developers, the Gemini API through Google AI Studio offers direct access to Gemini 3 Pro with structured JSON outputs and built-in tool integration. Enterprise customers get access through Vertex AI, which provides additional security, compliance, and data governance controls. Deep Think mode will be available exclusively to AI Ultra subscribers and through Vertex AI enterprise plans in the coming weeks.
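The structured JSON output mentioned above can be sketched as a request that declares a response schema, plus a helper that parses the reply. This is an offline illustration: the `generationConfig`, `responseMimeType`, and `responseSchema` fields and the `candidates` response layout reflect the public Gemini REST format as best understood here and should be verified against the official reference. The schema, the company name, and the sample response are all hand-written for the example.

```python
import json


def build_structured_request(prompt: str, schema: dict) -> dict:
    """Build a request body that asks for JSON conforming to the given schema."""
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {
            "responseMimeType": "application/json",
            "responseSchema": schema,
        },
    }


def parse_structured_response(response: dict) -> dict:
    """Extract and decode the JSON text from the first candidate."""
    text = response["candidates"][0]["content"]["parts"][0]["text"]
    return json.loads(text)


# Hypothetical schema for an earnings summary.
schema = {
    "type": "OBJECT",
    "properties": {
        "company": {"type": "STRING"},
        "revenue_usd_millions": {"type": "NUMBER"},
    },
    "required": ["company", "revenue_usd_millions"],
}

request_body = build_structured_request("Extract the headline figures.", schema)

# Hand-written mock of a response, used here instead of a live API call.
mock_response = {
    "candidates": [
        {"content": {"parts": [{"text": '{"company": "ExampleCorp", "revenue_usd_millions": 123.4}'}]}}
    ]
}

result = parse_structured_response(mock_response)
print(result["company"], result["revenue_usd_millions"])
```

Declaring the schema up front means downstream code can index into the result directly instead of scraping values out of free-form prose.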

The pricing model positions Gemini 3 competitively against OpenAI’s GPT-5.1 and Anthropic’s Claude Opus. With Google’s existing infrastructure advantages — including custom TPU hardware and tight integration with Google Cloud — the per-token costs for enterprise deployments could undercut competitors significantly, especially for high-volume multimodal processing workloads where separate vision, speech, and language model costs add up fast.

What This Means for the AI Landscape

The Google Gemini 3 launch represents more than an incremental upgrade. It is Google’s clearest statement yet that the era of AI as a “chatbot” is over. Between generative UI, agentic coding, Deep Think reasoning, and unified multimodal processing, Gemini 3 is positioned as an AI platform rather than just a model.

The competitive pressure on OpenAI, Anthropic, and Meta is immediate. GPT-5.1’s 26.5% on Humanity’s Last Exam looks modest next to Gemini 3’s 37.5% (and Deep Think’s 48.4%). Claude’s strength in coding and long-context tasks faces a direct challenge from Gemini 3’s 1M-token context and SWE-bench scores. And with simultaneous deployment across Search (which processes billions of queries daily), Google has a distribution advantage that no competitor can match.

For developers and enterprises evaluating AI platforms, the message is clear: multimodal reasoning is no longer a nice-to-have — it is the baseline. And Google Gemini 3 just set the new floor for what that baseline looks like.
