Suno AI v5 Review: AI Music Generation Takes a Massive Quality Leap

December 1, 2025

Best Tech Products of 2025: Editor’s Choice Awards Across All Categories

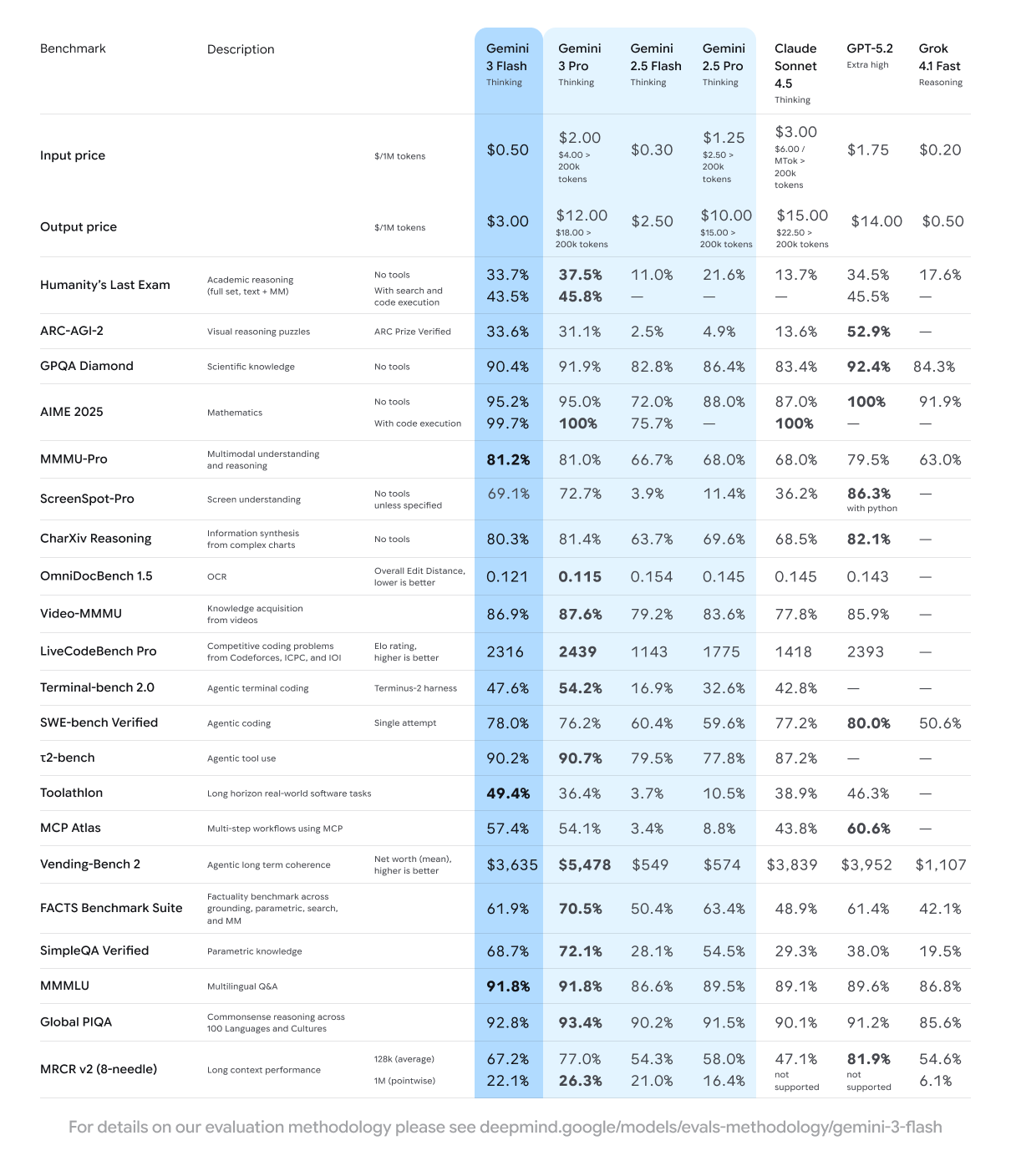

Finally, a model that doesn’t force you to choose between intelligence and speed. Google just dropped Gemini 3 Flash, and the numbers are frankly absurd: 90.4% on GPQA Diamond, 81.2% on MMMU Pro, all while running 3x faster than Gemini 2.5 Pro at a fraction of the cost. This isn’t incremental improvement. This is a fundamental shift in what “lightweight” AI can do.

Why Gemini 3 Flash Changes the Lightweight AI Game

Here’s the uncomfortable truth the AI industry doesn’t want to admit: for most real-world applications, you don’t need the biggest model — you need the fastest one that’s still smart enough. Google seems to have internalized this with Gemini 3 Flash, which launched on December 17, 2025, just one month after Gemini 3 Pro.

The model is now the default in the Gemini app globally, replacing Gemini 2.5 Flash. It is also rolling out as the default model for AI Mode in Google Search, which puts it in front of an enormous volume of daily queries. That’s not a beta test; that’s production at planetary scale.

Gemini 3 Flash Benchmark Breakdown: The Numbers That Matter

Let’s cut through the marketing and look at what Gemini 3 Flash actually achieves on standardized benchmarks:

- GPQA Diamond (Scientific Reasoning): 90.4% — outperforming several frontier-class models

- MMMU Pro (Multimodal Understanding): 81.2% — the highest score among all competitors at launch

- SWE-Bench Verified (Agentic Coding): 78% — matching dedicated coding models

- Toolathlon (Real-World Software Tasks): 49.4% — strong tool-use capabilities

- MCP Atlas (Multi-Step Workflows): 57.4% — critical for agent-based systems

- Humanity’s Last Exam: 33.7% without tools — a genuinely difficult benchmark

What makes these numbers remarkable isn’t any single score; it’s the combination. Gemini 3 Flash matches or exceeds Gemini 3 Pro on several benchmarks while running 3x faster than the previous-generation Gemini 2.5 Pro. According to 9to5Google’s analysis, this effectively makes the Pro tier redundant for many use cases.

Pricing: $0.50 Per Million Input Tokens Changes the Economics

Google priced Gemini 3 Flash aggressively:

- Input tokens: $0.50 per million

- Output tokens: $3.00 per million

- Audio input: $1.00 per million tokens

For context, Gemini 3 Pro costs $2.00/$12.00 per million tokens for prompts up to 200K tokens, which is 4x more for both input and output. The previous-generation Gemini 2.5 Flash was cheaper ($0.30/$2.50), but the performance gap between 2.5 Flash and 3 Flash is massive. You’re paying 67% more per input token and 20% more per output token, but getting frontier-class intelligence instead of mid-tier performance.

When you compare against OpenAI’s GPT-4o mini ($0.15/$0.60 per million) or Anthropic’s Claude 3 Haiku ($0.25/$1.25 per million), Gemini 3 Flash is more expensive on a per-token basis. But the benchmark scores tell a different story: you’re getting Pro-level intelligence at Flash-tier speeds. If a stronger model needs fewer retries and less human correction, the cost per useful output may actually be lower.
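To make the per-token comparison concrete, here is a minimal Kotlin sketch that prices a single request against the rates quoted in this section. The `Pricing` class, the `requestCost` helper, and the 8,000-in / 1,000-out workload are illustrative assumptions, not part of any SDK, and the prices are the ones cited in this article, so check current rate cards before budgeting.

```kotlin
// Per-million-token prices in USD, as quoted in this article (may be out of date).
data class Pricing(val name: String, val inputPerMillion: Double, val outputPerMillion: Double)

// Cost in USD of one request with the given input and output token counts.
fun requestCost(p: Pricing, inputTokens: Long, outputTokens: Long): Double =
    inputTokens / 1_000_000.0 * p.inputPerMillion +
        outputTokens / 1_000_000.0 * p.outputPerMillion

fun main() {
    val models = listOf(
        Pricing("Gemini 3 Flash", 0.50, 3.00),
        Pricing("Gemini 2.5 Flash", 0.30, 2.50),
        Pricing("GPT-4o mini", 0.15, 0.60),
        Pricing("Claude 3 Haiku", 0.25, 1.25),
    )
    // Hypothetical workload: 8,000 input tokens and 1,000 output tokens per request.
    for (m in models) {
        val cost = requestCost(m, inputTokens = 8_000, outputTokens = 1_000)
        println("%-18s \$%.5f per request".format(m.name, cost))
    }
}
```

Under these assumptions a request costs roughly $0.007 on Gemini 3 Flash versus about $0.0049 on Gemini 2.5 Flash. Even at a million requests a month, that gap is only around $2,100, which is why the quality difference tends to matter more than the sticker price.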

Multimodal Input: Text, Image, Video, Audio, and PDF in One Model

Gemini 3 Flash accepts five input modalities — text, image, video, audio, and PDF — with text output. The context window is enormous: 1,048,576 input tokens with up to 65,536 output tokens. That’s a million-token context window in a lightweight model.

The practical implications are significant. You can feed an entire video transcript, a set of product images, and a PDF specification sheet into a single prompt — and get a coherent analysis back in seconds. For developers building customer support agents, content moderation systems, or in-game assistants, this is exactly the kind of multimodal capability that eliminates the need for model chaining.
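Before stuffing everything into one prompt, it helps to check that the pieces fit. The sketch below is a rough pre-flight budget check using the limits quoted above. `PromptPart`, `remainingInputBudget`, and `clampOutputTokens` are hypothetical helpers, and the per-part token counts are made-up estimates; in practice you would get real counts from the API’s token-counting facilities.

```kotlin
// Limits quoted in this article for Gemini 3 Flash.
const val MAX_INPUT_TOKENS = 1_048_576
const val MAX_OUTPUT_TOKENS = 65_536

// Hypothetical description of one prompt part; the token count is a caller-supplied estimate.
data class PromptPart(val label: String, val estimatedTokens: Int)

// Input tokens left after all parts; a negative result means the prompt is too large.
fun remainingInputBudget(parts: List<PromptPart>): Int =
    MAX_INPUT_TOKENS - parts.sumOf { it.estimatedTokens }

// Clamps a requested output length to the model's output cap.
fun clampOutputTokens(requested: Int): Int = requested.coerceIn(1, MAX_OUTPUT_TOKENS)

fun main() {
    // Illustrative estimates only.
    val parts = listOf(
        PromptPart("video transcript", 120_000),
        PromptPart("product images", 6_000),
        PromptPart("PDF spec sheet", 40_000),
    )
    val remaining = remainingInputBudget(parts)
    if (remaining >= 0) {
        println("Fits, with $remaining input tokens to spare")
    } else {
        println("Over budget by ${-remaining} tokens; split the request")
    }
    println("Output cap applied: ${clampOutputTokens(100_000)}")
}
```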

Google also introduced two operating modes: Fast for quick answers and Thinking for complex problems. This dual-mode approach lets users (and developers) dynamically choose between speed and depth based on the task at hand.
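For developers, picking a mode per request usually comes down to a simple routing rule. The following is a purely illustrative heuristic: the `Mode` names mirror the two modes described above, but `Task`, `chooseMode`, and the thresholds are assumptions made up for this sketch, and how a mode maps onto an actual API setting is not covered here.

```kotlin
// Mode names follow the article; mapping a mode to an API parameter is out of scope.
enum class Mode { FAST, THINKING }

// Hypothetical request descriptor.
data class Task(
    val prompt: String,
    val attachments: Int = 0,
    val needsMultiStepReasoning: Boolean = false,
)

// Illustrative heuristic: default to FAST, escalate to THINKING when the task looks complex.
fun chooseMode(task: Task, longPromptChars: Int = 2_000, manyAttachments: Int = 3): Mode {
    val looksComplex = task.needsMultiStepReasoning ||
        task.attachments > manyAttachments ||
        task.prompt.length > longPromptChars
    return if (looksComplex) Mode.THINKING else Mode.FAST
}

fun main() {
    println(chooseMode(Task("What are your opening hours?")))
    println(chooseMode(Task("Why does this stack trace appear after the upgrade?", needsMultiStepReasoning = true)))
}
```

A rule like this keeps routine traffic on the cheaper, quicker path and reserves deeper reasoning for the requests that are likely to need it. The thresholds would need tuning against real traffic.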

Real-World Use Cases: Where Gemini 3 Flash Shines Brightest

The combination of multimodal input, massive context window, and aggressive pricing opens up use cases that were previously cost-prohibitive. Here are the scenarios where Gemini 3 Flash delivers the most value:

E-commerce product analysis: Feed product images, competitor listings, and customer reviews into a single prompt. At $0.50 per million input tokens, analyzing 10,000 product listings costs less than $5 in input as long as each listing averages under 1,000 tokens. Retailers can automate product categorization, description generation, and competitive pricing analysis at scale.

Customer support automation: With 78% on SWE-Bench Verified and strong multimodal capabilities, Gemini 3 Flash can handle complex support tickets that include screenshots, log files, and natural language descriptions. The Thinking mode handles edge cases while Fast mode keeps latency low on routine queries.

Content moderation at scale: Video, image, and text moderation in a single model eliminates the need for separate pipelines. The million-token context window means entire video transcripts can be analyzed in one pass rather than chunked into fragments.

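Document intelligence: PDF input support makes Gemini 3 Flash particularly effective for legal document review, financial report analysis, and academic research synthesis. At $0.50 per million input tokens, one cent buys only 20,000 tokens, so a 200-page PDF is more likely to cost a few cents in input tokens, depending on how densely each page tokenizes. That is still cheap enough to process documents in bulk.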
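The cost figures in these scenarios are easy to sanity-check by working backwards from a budget. Here is a small sketch that does the arithmetic at the $0.50 per million input price quoted earlier. The helper names are hypothetical, and the 500-tokens-per-page figure is an assumption for illustration, not a measured value.

```kotlin
// Gemini 3 Flash input price quoted in this article, in USD per million tokens.
const val INPUT_PRICE_PER_MILLION_USD = 0.50

// Number of input tokens a given budget buys at the quoted price.
fun tokensForBudget(budgetUsd: Double): Double =
    budgetUsd / INPUT_PRICE_PER_MILLION_USD * 1_000_000

// Average tokens each item may use if `items` items must fit within the budget.
fun tokensPerItem(budgetUsd: Double, items: Int): Double =
    tokensForBudget(budgetUsd) / items

// Input cost in USD for `items` items averaging `tokensEach` tokens apiece.
fun inputCost(items: Int, tokensEach: Int): Double =
    items.toLong() * tokensEach / 1_000_000.0 * INPUT_PRICE_PER_MILLION_USD

fun main() {
    println("10,000 listings within \$5: up to ${tokensPerItem(5.0, 10_000)} tokens per listing")
    println("200 pages within \$0.01: up to ${tokensPerItem(0.01, 200)} tokens per page")
    // Assumed figure: 500 tokens per page.
    println("200 pages at 500 tokens each: \$${inputCost(200, 500)}")
}
```

The useful habit here is to turn any "costs less than X" claim into a tokens-per-item allowance and ask whether your real inputs fit inside it. Image-heavy listings or dense PDF pages can blow past the allowance quickly.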

Firebase AI Logic SDK: Gemini 3 Flash on Mobile Without Server Infrastructure

Perhaps the most exciting development for mobile developers: Gemini 3 Flash is available through the Firebase AI Logic SDK, accessible directly from Android apps without complex server-side setup. The implementation is remarkably clean:

```kotlin
// Create a Gemini 3 Flash model handle via the Gemini Developer API backend
val model = Firebase.ai(backend = GenerativeBackend.googleAI())
    .generativeModel(modelName = "gemini-3-flash-preview")
```

A couple of lines of Kotlin and your Android app has access to a frontier-class multimodal AI model. Firebase also provides an AI Monitoring Dashboard for tracking latency, success rates, and costs in real time. Developers can store prompts securely as Server Prompt Templates, which prevents unauthorized prompt extraction while enabling rapid iteration without app updates.

Gemini 3 Flash is also free as the default model in Android Studio, lowering the barrier for developers who want to experiment before committing to production workloads.

Developer Ecosystem: Where Gemini 3 Flash Is Available

Google is making Gemini 3 Flash available across its entire developer platform:

- Google AI Studio — web-based prototyping and testing

- Google Antigravity — Google’s latest developer IDE

- Gemini CLI — command-line access for automation workflows

- Android Studio — free integration for mobile development

- Vertex AI — enterprise-grade deployment with SLA guarantees

- Gemini Enterprise — business tier with enhanced security and compliance

This breadth of access is strategic. Google wants Gemini 3 Flash to be the default choice for any developer who needs a fast, capable, multimodal model — regardless of their preferred development environment.

What This Means for the AI Model Market in 2026

Gemini 3 Flash represents a broader trend in the AI industry: the “good enough” tier is getting really, really good. When a lightweight model can score 90.4% on scientific reasoning and 78% on coding benchmarks, the justification for paying 3-10x more for a frontier model becomes increasingly narrow.

For startups and indie developers, this is liberating. The cost of building AI-powered products just dropped significantly — not because prices fell, but because the cheapest tier became genuinely capable. A chatbot running Gemini 3 Flash can handle complex multimodal queries, write production-quality code, and reason about scientific papers — tasks that required GPT-4 class models just 12 months ago.

As reported by TechCrunch, Google positioned this release explicitly to compete with OpenAI’s market dominance. The message is clear: you don’t need to pay premium prices for premium performance anymore. And with the Firebase AI Logic SDK making mobile integration trivial, the barrier to building AI-powered apps has never been lower.

The question isn’t whether Gemini 3 Flash is good enough for your next project. At these benchmarks and this price point, the real question is whether your current model stack is justified anymore.
