
April 3, 2026

You have 21 days. After April 24, every code snippet you type into GitHub Copilot, every filename, every repo structure, and even the context around your cursor becomes training data for Microsoft’s AI models. The way GitHub uses Copilot data for training is about to change fundamentally, and if you haven’t opted out yet, the clock is ticking.

What’s Happening: The GitHub Copilot Data Training Policy Shift

On March 25, 2026, GitHub published updates to its privacy statement and terms of service. The bottom line: starting April 24, Copilot Free, Pro, and Pro+ users’ interaction data will be used to train AI models. No explicit consent required — it’s opt-out, not opt-in.

GitHub’s CPO Mario Rodriguez explained in the official blog post that models trained on Microsoft employee data showed improved acceptance rates across multiple programming languages. Translation: your code makes Copilot smarter, and GitHub wants more of it.

The 8 Types of Data Being Collected — Private Repos Aren’t Safe

Here’s where it gets uncomfortable. Many developers assume their private repositories are off-limits. Technically, GitHub says it won’t collect “code at rest” from private repos — meaning the code sitting untouched in your repository. But the moment you open a file and Copilot is active? That code becomes fair game.

Here’s what GitHub collects during active Copilot sessions:

- Code snippets — Code you type or that Copilot suggests

- Input/output data — Your prompts and Copilot’s complete responses

- Cursor context — The code surrounding your current editing position

- Filenames — Names of files you’re working on

- Repository structure — Your project’s directory layout

- Navigation patterns — How you move between files

- Feedback ratings — Whether you accept or reject suggestions

- Edit events — Your code modification history

According to The Register, the distinction between “code at rest” and “active session data” is the critical detail most developers are missing. If you’re working in a private repo with Copilot enabled, your code snippets are collected — period. The “private” label on your repo doesn’t protect what flows through Copilot’s pipeline.

Who’s Affected and Who’s Protected

The impact breaks down clearly by subscription tier:

- Affected: Copilot Free, Pro, and Pro+ users (auto-enrolled unless you opt out)

- Protected: Business and Enterprise subscribers (contractual protections)

- Protected: Student and teacher accounts (GitHub Education)

- Previously opted out: If you disabled this setting before, your preference is preserved

The pattern is clear: individual developers and small teams bear the brunt. Enterprises have legal protections baked into their contracts, but freelancers, indie developers, and startup teams? They need to take action themselves.
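The tier breakdown above can be sketched as a small decision function. This is only an illustration of the rules as summarized in this post: the plan labels, the `CopilotAccount` class, and `used_for_training` are hypothetical names, not GitHub API values.

```python
from dataclasses import dataclass

# Plan labels follow this article's summary; they are illustrative strings,
# not identifiers from any GitHub API.
AFFECTED_PLANS = {"free", "pro", "pro+"}
PROTECTED_PLANS = {"business", "enterprise", "education"}


@dataclass
class CopilotAccount:
    plan: str
    opted_out: bool = False  # True if the data-usage setting is already off


def used_for_training(account: CopilotAccount) -> bool:
    """Return True if, per the summary above, interaction data would be used for training."""
    plan = account.plan.strip().lower()
    if plan in PROTECTED_PLANS:
        return False  # contractual or education protections apply
    if plan in AFFECTED_PLANS:
        return not account.opted_out  # auto-enrolled unless opted out
    raise ValueError(f"Unknown plan: {account.plan!r}")
```

For example, `used_for_training(CopilotAccount("Pro"))` is `True`, while the same account with `opted_out=True` returns `False`.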

Community Backlash: 59 Thumbs-Down and Counting

The developer community’s response has been overwhelmingly negative. The GitHub Community Discussion tells the story: 59 thumbs-down reactions versus 3 rocket emojis. Out of 39 comments, the only positive voice was GitHub VP Martin Woodward himself.

Security experts have been particularly vocal. Help Net Security’s analysis labeled the opt-out-by-default approach a “dark pattern” — a design choice that manipulates users into sharing data they wouldn’t voluntarily give. The argument is straightforward: if GitHub believed developers actually wanted this, they’d make it opt-in.

InfoQ’s technical analysis highlighted that this policy change potentially affects over 100 million GitHub users. Even developers who don’t use Copilot should pay attention — the broader implications for code ownership and data rights in the AI era affect everyone.

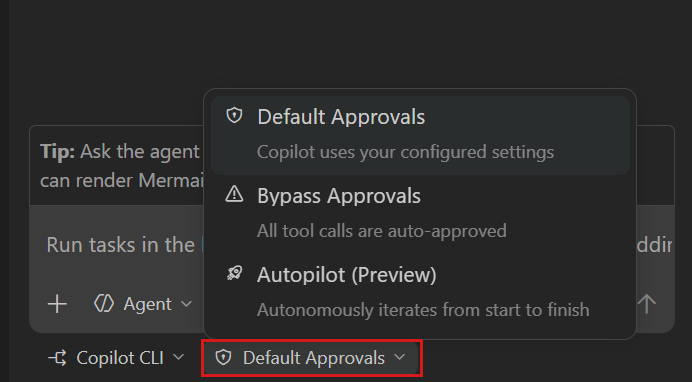

How to Opt Out: Step-by-Step Before the April 24 Deadline

The good news: opting out is straightforward. Here’s exactly what you need to do:

- Step 1: Log in to GitHub.com and click your profile icon in the top-right corner

- Step 2: Navigate to Settings → Copilot

- Step 3: Find the option “Allow GitHub to use my code snippets from Copilot for product improvements”

- Step 4: Toggle it OFF

- Step 5: If you work in organization repos, ask your org admin to verify the organization-level settings as well

Critical note: If you previously opted out, your setting is preserved. But if you’ve never checked this setting — and many developers haven’t — you need to verify now. Data collected after April 24 can’t be retroactively removed.
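If you want a reminder of how much time is left, the countdown is simple date arithmetic. A minimal sketch, assuming the April 24, 2026 date reported above; the function name is just for illustration.

```python
from datetime import date

# Deadline as stated in this article.
DEADLINE = date(2026, 4, 24)


def days_until_deadline(today: date) -> int:
    """Days remaining before the policy takes effect (negative once it has passed)."""
    return (DEADLINE - today).days


print(days_until_deadline(date(2026, 4, 3)))  # 21
```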

GitHub’s Defense vs Reality

GitHub has put forward several arguments to justify this change. Let’s examine each one.

“Training on Microsoft employee data improved acceptance rates.” This may be true. But Microsoft employees consented through their employment agreements. Applying the same logic to external users who signed up under different terms is a fundamentally different proposition.

“Anthropic, JetBrains, and Microsoft have similar opt-out policies.” Also true. But “everyone else does it” has never been a compelling ethical argument. GitHub occupies a unique position as the backbone of the global open-source ecosystem. With that position comes a higher standard of trust.

“We don’t collect private repo code.” Technically correct — for code at rest. But once you’re in an active Copilot session editing that private repo code, snippets flow into the collection pipeline. This technical distinction offers far less protection than it appears to on the surface.

The Bigger Picture: Developer Data Sovereignty in the AI Era

This isn’t just about GitHub Copilot data training. It’s about a pattern emerging across the entire tech industry. AI companies are discovering that the most valuable training data comes from active usage — real developers solving real problems in real codebases. And they’re finding ways to collect it with minimal friction.

The opt-out model creates an asymmetry of awareness. Tech-savvy developers who follow industry news will opt out. But the majority — working developers focused on shipping features and meeting deadlines — won’t even know about the change until it’s too late. That’s not informed consent; it’s manufactured compliance.

For teams handling sensitive code (financial systems, healthcare applications, proprietary algorithms), the stakes are even higher. Even if GitHub promises not to expose individual code snippets, the aggregate patterns a model learns from millions of developers’ code can still encode proprietary approaches and techniques.

My Take: What Every Developer Should Do Right Now

After 28 years working across music, audio, and tech, I’ve watched this exact pattern play out over and over. A platform builds a massive user base with generous terms, waits until switching costs are prohibitively high, then changes the deal. Spotify did it to artists with royalty restructuring. Adobe did it with the Creative Cloud subscription pivot. Now GitHub is doing it to developers.

I use Copilot actively across multiple automation projects — including the pipeline that publishes this blog. It’s a genuinely useful tool. But I’ll be opting out before April 24, and here’s the action plan I’d recommend for every developer reading this.

- First, go to GitHub Settings → Copilot right now and turn off the data usage toggle. Don’t bookmark it for later; do it today.

- Second, if you work on a team, message your org admin and make sure the organization-level settings are locked down too.

- Third, develop a habit of temporarily disabling Copilot when working on sensitive code such as authentication logic, API key handling, and proprietary algorithms.

- Fourth, start evaluating your GitHub dependency. Alternatives like GitLab and Codeberg exist, and diversifying your infrastructure is never a bad strategy.
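One way to make the third habit less reliant on memory is a small check that flags files you would rather not edit with an assistant running. The sketch below is only that: the patterns and the `is_sensitive` helper are hypothetical and should be adapted to your own project layout. It does not disable Copilot itself; it only tells you when you might want to.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns; replace with whatever counts as sensitive in your project.
SENSITIVE_PATTERNS = ["*.env", "*.pem", "*secret*", "auth/*", "*/auth/*"]


def is_sensitive(path: str) -> bool:
    """Return True if the path matches any of the sensitive patterns."""
    # Normalize separators and case so matching behaves the same on every OS.
    normalized = path.replace("\\", "/").lower()
    return any(fnmatchcase(normalized, pattern) for pattern in SENSITIVE_PATTERNS)


for candidate in ["src/auth/login.py", "config/prod.env", "README.md"]:
    print(candidate, is_sensitive(candidate))
```

Note that `*` in `fnmatch` patterns also matches path separators, so `*secret*` catches a match anywhere in the path, not just in the filename.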

This isn’t about rejecting AI coding assistants — they genuinely boost productivity. It’s about recognizing that the price you pay isn’t just the monthly subscription. It’s your code, your patterns, your intellectual output. As developers, we spend our careers building things. The least we can do is maintain sovereignty over what we’ve built.

The April 24 deadline is both a threat and a gift — at least there’s a window to act. The question is whether you’ll use it. GitHub Copilot data training is happening whether you pay attention or not. The only variable is whether your code is part of it.

