GDC 2026 AI game development demos left the audience speechless: an NPC understood a voice command it had never been programmed to handle and improvised a response with its own personality. That was not a cinematic trailer. It was running in real time on a Snowdrop engine build. And yet, the same week this happened, a survey revealed that 52% of game developers now view generative AI negatively. Welcome to the most fascinating contradiction in gaming right now.

GDC 2026, held March 9-13 in San Francisco, brought together the biggest names in game development (Google Cloud, EA, Unity, Sony, NVIDIA, Ubisoft, and Tencent), all converging on a single theme: AI is no longer a buzzword; it is shipping in production pipelines. But the gap between what the technology can do and what developers are willing to deploy is wider than ever. Let us break down what actually matters from this year’s show.

Ubisoft Teammates: The AI NPC Experiment That Changes Everything

The standout moment among GDC 2026’s AI presentations came from an unlikely source: not a tech giant’s keynote, but a Ubisoft R&D experiment called Teammates. Led by Xavier Manzanares, Ubisoft’s director of generative AI gameplay, an 80-person team built a playable experience where players interact with three AI NPCs (Jaspar, Pablo, and Sophia) using natural voice commands.

What makes Teammates different from every chatbot-meets-game-character demo we have seen before? Context and personality. Each NPC has a distinct personality profile and the ability to understand contextual intent. You do not just bark orders at them — they interpret what you mean, weigh it against their own behavioral parameters, and respond dynamically. The system runs on Google Gemini integrated into Ubisoft’s Snowdrop engine, the same engine powering The Division franchise.

Manzanares was careful to label this an R&D experiment, not a product announcement. But the implications are enormous. If NPCs can sustain coherent, personality-driven conversations in real time, scripted dialogue trees could become obsolete. Games would no longer need thousands of pre-written lines; they would need well-designed character models that an AI can inhabit. That would be a fundamental shift in how narrative designers, writers, and voice directors approach their craft.
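To make the contrast concrete, here is a minimal sketch of what a scripted dialogue tree looks like as a data structure. It is a toy illustration, not Ubisoft’s or any engine’s implementation; `DialogueNode`, `respond`, and the sample lines are all hypothetical names invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DialogueNode:
    """One scripted NPC line plus the player choices that lead onward."""
    line: str
    choices: dict[str, DialogueNode] = field(default_factory=dict)


def respond(node: DialogueNode, player_input: str) -> DialogueNode | None:
    """Return the next node, or None when the input was never scripted."""
    return node.choices.get(player_input)


# Hypothetical two-branch tree: every reachable line is authored by hand.
root = DialogueNode(
    "Need something?",
    {
        "ask_directions": DialogueNode("The bridge is north of here."),
        "ask_trade": DialogueNode("I have nothing to sell today."),
    },
)

print(respond(root, "ask_directions").line)      # a scripted branch
print(respond(root, "cover me while I flank"))   # unscripted input yields None
```

The second call is the limitation the Teammates demo targets: a tree can only answer inputs a writer anticipated, so anything else falls through to nothing.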

NVIDIA ACE and the New Era of AI Voice Acting in Games

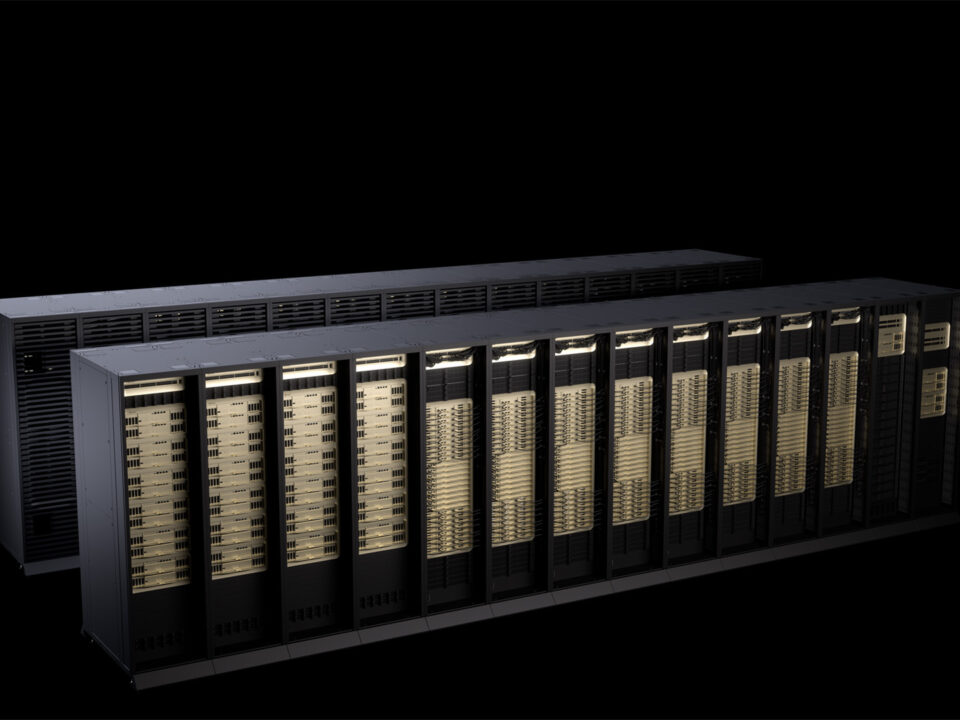

While Ubisoft showed what AI NPCs could feel like from a player’s perspective, NVIDIA’s ACE (Avatar Cloud Engine) demonstrated the infrastructure making it all possible. ACE now runs on-device — no cloud roundtrip required — and debuted its first production-grade text-to-speech model at GDC 2026.

The voice synthesis stack is genuinely impressive. Resemble.ai’s Chatterbox v1.0.0, a 350-million parameter model, enables zero-shot voice cloning. Give it a short audio sample, and it generates speech in that voice without any fine-tuning. Pair this with NVIDIA Riva v1.1 for multilingual automatic speech recognition (ASR), and you have a complete pipeline: the player speaks in their language, the system understands, the NPC responds in a cloned character voice — all in real time.
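The three-stage flow described above (speech recognition, a reply decision, then speech synthesis) can be sketched as a simple chain of callables. This is only an architectural sketch under stated assumptions: `VoicePipeline` and the `fake_*` stages are hypothetical stand-ins and do not reflect the actual Riva, Chatterbox, or ACE APIs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains three stages: speech-to-text, NPC reply, text-to-speech."""
    transcribe: Callable[[bytes], str]    # ASR stage
    decide: Callable[[str], str]          # language-model stage
    synthesize: Callable[[str], bytes]    # TTS stage (cloned voice in a real system)

    def handle(self, player_audio: bytes) -> bytes:
        text = self.transcribe(player_audio)
        reply = self.decide(text)
        return self.synthesize(reply)


# Stand-in stages so the sketch runs without any model or SDK installed.
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_npc(text: str) -> str:
    return f"Copy that: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = VoicePipeline(fake_asr, fake_npc, fake_tts)
print(pipeline.handle(b"hold the door"))
```

Keeping each stage behind a plain function signature means any one of them could be swapped for a real model without touching the other two, which is the main appeal of treating the stack as a pipeline.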

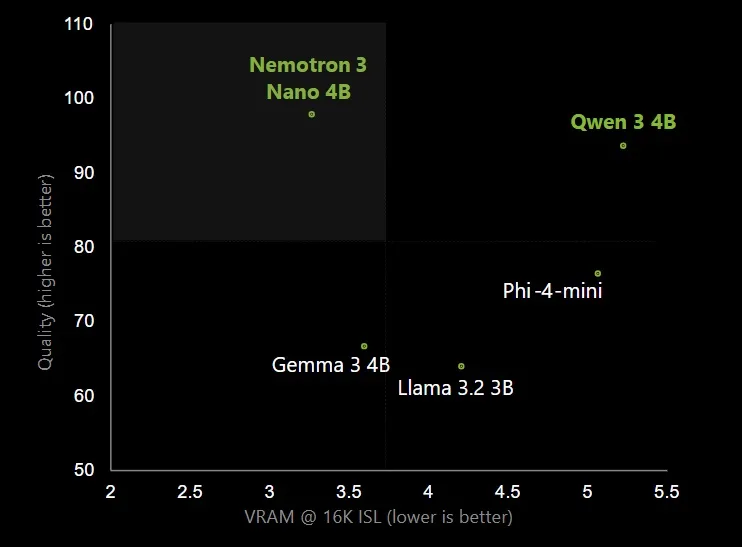

For NPC decision-making, NVIDIA introduced Nemotron 3 Nano, a 4-billion parameter small language model optimized for on-device inference. It handles the logic layer — deciding what an NPC should say or do based on game state, player behavior, and narrative context. Studios already using these tools include Creative Assembly for Total War and Krafton for both PUBG and inZOI.

The voice acting implications are the elephant in the room. Zero-shot cloning means a studio could theoretically generate unlimited dialogue variations from a single recording session. SAG-AFTRA, the union representing screen and voice performers, has already raised concerns, and the ethical boundaries here are far from settled. But technically, the capability is production-ready today.

Tencent Cloud’s GMES Suite: Procedural Content Generation Goes Full Stack

If NVIDIA and Ubisoft focused on NPCs and voice, Tencent Cloud went wide with its GMES (Game Multimedia Engine Suite) announcement, covering everything from asset creation to security. The headline feature is HY 3D AI Creation Engine, which converts text descriptions, images, or rough sketches into production-quality 3D assets in minutes — not hours, not days.

Procedural content generation has existed in games for decades. Minecraft’s world generation, No Man’s Sky’s planets, and Spelunky’s levels all use algorithmic approaches. But HY 3D represents a qualitative leap: instead of generating terrain or room layouts, it produces finished 3D objects with textures and geometry that are ready for engine integration. For indie studios with limited art budgets, this could be transformative.
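For readers unfamiliar with the classic algorithmic approach, the sketch below shows its core idea: a seed drives a deterministic generator, so the same seed always rebuilds the same layout. It is a toy example, not the algorithm used by any of the games named above; `generate_level` and its parameters are hypothetical.

```python
import random


def generate_level(seed: int, width: int = 16, height: int = 8,
                   wall_chance: float = 0.3) -> list[str]:
    """Build a grid of walls ('#') and floor ('.') from a seed.

    The same seed always yields the same level, so a generator can
    store a small seed instead of the level data itself.
    """
    rng = random.Random(seed)  # private generator, independent of global state
    rows = []
    for y in range(height):
        row = ""
        for x in range(width):
            on_border = x in (0, width - 1) or y in (0, height - 1)
            row += "#" if on_border or rng.random() < wall_chance else "."
        rows.append(row)
    return rows


for line in generate_level(seed=2026):
    print(line)
```

Output like this is layout data only. The shift the article describes is from generating arrangements of existing pieces to generating the textured 3D pieces themselves.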

Tencent’s suite also includes Magic Voice for real-time voice transformation, GVoice for advanced ASR with interruption handling, an Agent Development Platform for building dynamic NPC ecosystems, and EdgeOne AI for security. It is essentially a full-stack AI toolkit for game developers who want to adopt AI across their entire pipeline without stitching together a dozen different services.

The Agent Development Platform deserves particular attention. While NVIDIA and Ubisoft focused on individual NPC interactions, Tencent is thinking at the ecosystem level — how dozens or hundreds of AI-driven NPCs can coexist, interact with each other, and create emergent behaviors within a shared game world. If HY 3D handles the visual assets and ACE handles the voice, Tencent’s platform handles the orchestration layer that ties it all together. For massively multiplayer games, this kind of infrastructure could enable living worlds that evolve even when no human players are online.

The 52% Problem: Why Half of Game Developers Are Pushing Back

Here is the number that should give every AI evangelist pause. According to the GDC 2026 developer survey, 52% of game developers now view generative AI negatively, up from 30% just one year earlier. That is not a minor uptick. A 22-point jump in a single year is a seismic shift in sentiment.
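The size of that swing can be stated two ways, and it helps to keep them apart. The short sketch below computes both from the two survey figures quoted above; the function name `sentiment_shift` is just an illustrative choice.

```python
def sentiment_shift(previous_pct: float, current_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = current_pct - previous_pct
    relative_change = point_change / previous_pct * 100
    return point_change, relative_change


points, relative = sentiment_shift(30, 52)
print(f"{points:.0f} points, {relative:.0f}% relative increase")
# prints "22 points, 73% relative increase"
```

In other words, the negative share rose by 22 percentage points, which is roughly a 73% increase relative to last year’s 30%.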

The reasons are layered. Job displacement fears are obvious, especially among voice actors, concept artists, and narrative designers who see their roles directly threatened by the very tools being celebrated on the GDC show floor. But there is also a deeper philosophical objection: many developers believe that AI-generated content lacks the intentionality and craftsmanship that define great games.

The survey data also reveals where developers are actually using AI today, and it is almost exclusively behind the scenes: brainstorming ideas, generating placeholder assets, debugging code, and writing documentation. Player-facing AI features remain rare in shipped titles. The industry is using AI as a productivity tool while keeping it away from the player experience, which suggests that even AI adopters share some of the skeptics’ concerns about quality and reliability.

Microsoft’s Muse design tool exemplifies this cautious approach. It helps designers iterate faster on concepts without replacing the designer’s judgment. That is a fundamentally different proposition from Ubisoft’s Teammates, which puts AI directly in front of the player. The industry has not yet decided which path it wants to take — and GDC 2026 made that tension impossible to ignore.

What GDC 2026 made clear is that the technology is ready. NVIDIA ACE delivers production-grade voice synthesis on-device. Ubisoft proved that personality-driven AI NPCs can sustain real-time conversations. Tencent showed that procedural content generation now extends to finished 3D assets. The tools exist. The question is no longer whether AI can transform game development — it is whether the people who make games want it to. And right now, the answer from half the industry is a firm “not yet.” The studios that navigate this tension thoughtfully — investing in R&D without forcing half-baked AI into players’ hands — will be the ones that define the next generation of gaming.
