Companies that actively govern their AI put 12 times more projects into production. That single stat from the 2026 State of AI Agents report should make every data leader pause, because the gap between organizations shipping AI and those stuck in pilot purgatory increasingly comes down to one word: governance.

Databricks Unity Catalog AI is at the center of this shift. What started as a metadata catalog for lakehouse tables has evolved into a unified governance layer for every AI asset an organization creates — models, agents, features, prompts, metrics, and the data feeding all of them. If you’ve been treating AI governance as a compliance checkbox, it’s time to rethink. Here’s why.

From Data Catalog to AI Control Plane: What Databricks Unity Catalog AI Actually Does

Traditional data catalogs know about tables and views. Databricks Unity Catalog AI covers far more: tables, volumes, machine learning models, feature store entries, functions, agents, and even business metrics, all governed under a single security model with attribute-based access control (ABAC).

This isn’t incremental. When your catalog understands that a fine-tuned LLM depends on a specific training dataset, which itself derives from three upstream tables with PII columns, you get something most enterprises desperately need: end-to-end AI lineage. If a regulator questions your model’s output, you can trace the entire data history — from raw ingestion to inference result — inside one system.
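To make the lineage idea concrete, here is a minimal sketch of an upstream trace over a toy dependency graph. It does not use any Unity Catalog API; the asset names, the `UPSTREAM` edges, and the `PII_COLUMNS` tags are all hypothetical stand-ins for what a catalog would record.

```python
from collections import deque

# Hypothetical lineage edges: each asset maps to the assets it is derived from.
UPSTREAM = {
    "models.support_llm_ft": ["datasets.support_training"],
    "datasets.support_training": ["raw.tickets", "raw.customers", "raw.chat_logs"],
    "raw.tickets": [],
    "raw.customers": [],
    "raw.chat_logs": [],
}

# Hypothetical column tags marking which tables hold PII.
PII_COLUMNS = {
    "raw.customers": ["email", "phone"],
    "raw.chat_logs": ["customer_name"],
}


def trace_upstream(asset, upstream):
    """Return every asset upstream of `asset`, in breadth-first order."""
    seen = {asset}
    order = []
    queue = deque([asset])
    while queue:
        current = queue.popleft()
        for parent in upstream.get(current, []):
            if parent not in seen:
                seen.add(parent)
                order.append(parent)
                queue.append(parent)
    return order


def pii_exposure(asset, upstream, pii_columns):
    """Map each upstream table that has PII-tagged columns to those columns."""
    return {
        name: pii_columns[name]
        for name in trace_upstream(asset, upstream)
        if name in pii_columns
    }


lineage = trace_upstream("models.support_llm_ft", UPSTREAM)
exposure = pii_exposure("models.support_llm_ft", UPSTREAM, PII_COLUMNS)
```

Here `lineage` lists the training dataset followed by its three source tables, and `exposure` narrows that to the two tables carrying PII tags, which is the question a regulator would typically start with.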

The Data + AI Summit 2025 announcements made this vision concrete with three key additions:

- Unity Catalog Metrics (Public Preview) — Business metrics like revenue per user or churn rate become first-class catalog assets, reusable across dashboards, AI models, and data engineering jobs. No more metrics defined in one BI tool but invisible to your ML pipeline.

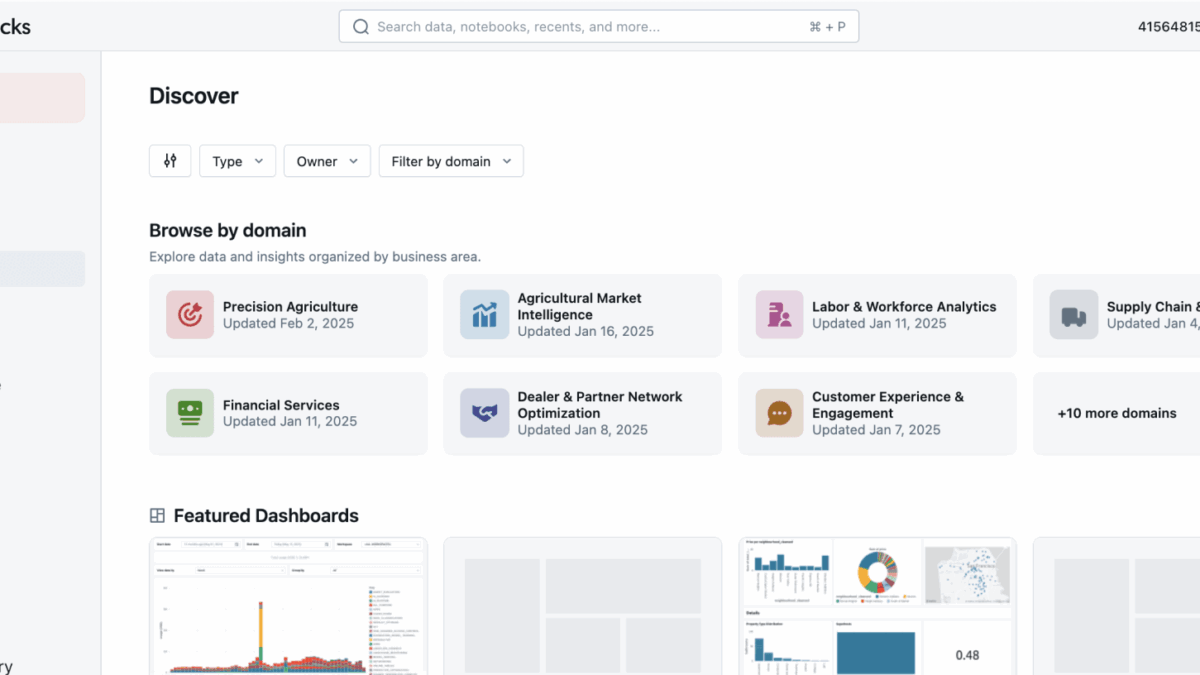

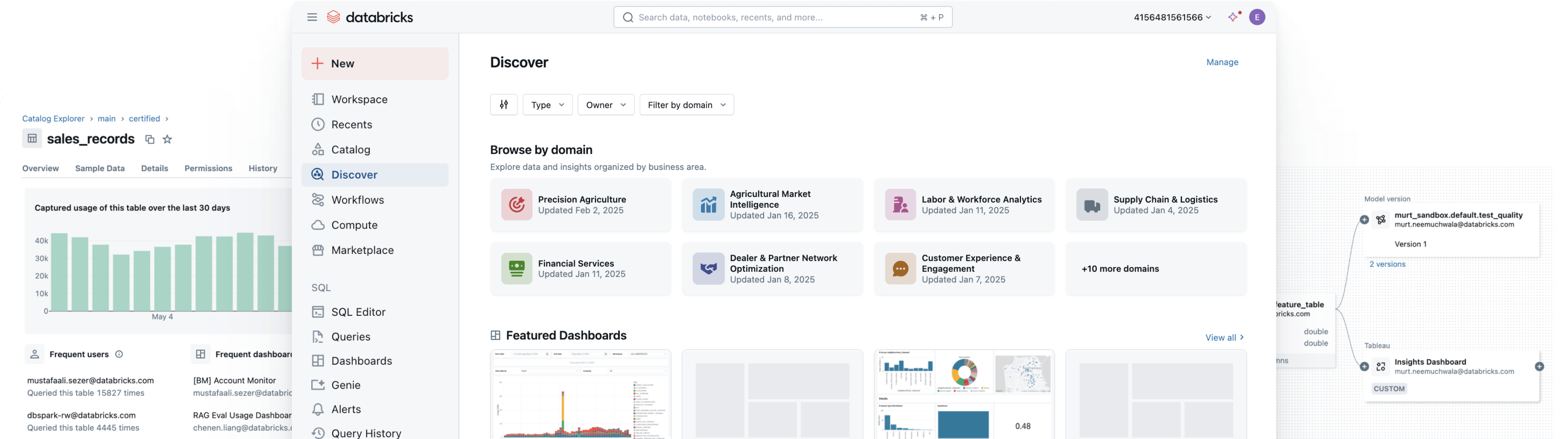

- Discover Experience (Private Preview) — A curated internal marketplace of certified data products organized by business domains (Sales, Marketing, Finance). AI-powered recommendations surface the highest-value assets enriched with documentation, ownership, and usage insights.

- Iceberg REST Catalog API — Read access is GA, write is in Public Preview. External engines like Spark, Flink, or Trino can access Unity Catalog–managed Iceberg tables directly, eliminating vendor lock-in.

Mosaic AI Gateway: One Endpoint to Rule All Your LLMs

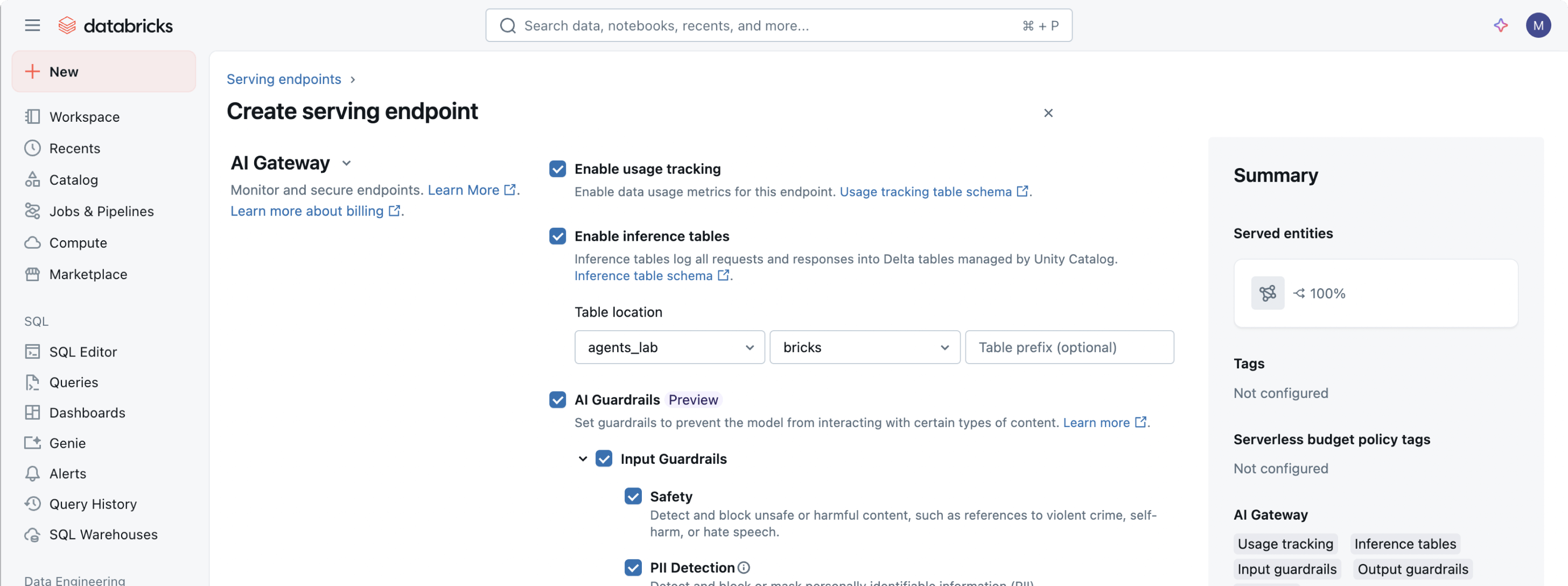

Here’s where Databricks Unity Catalog AI gets genuinely interesting for teams running multiple foundation models. The Mosaic AI Gateway, now generally available, provides a single OpenAI-compatible endpoint that routes to OpenAI, Anthropic Claude, Amazon Bedrock, Meta Llama, xAI Grok, or any custom-hosted model.

Think of it as a reverse proxy for LLMs — but one that actually understands governance. Every request flowing through the Gateway is automatically logged, standardized, and stored in Unity Catalog inference tables. Token usage, cost, latency, full payloads — all captured in a single system table for observability across your entire GenAI stack.

The practical features that matter most for production teams:

- Automatic provider fallbacks: If OpenAI is down, traffic automatically reroutes to Claude or Bedrock, so a single provider outage does not take your AI applications down with it.

- PII and safety guardrails: Enforce data masking and content filtering at the gateway level, before requests ever reach the model provider.

- Rate limiting policies: Control spend per team, per application, per user. No more surprise $50K bills from a runaway agent loop.

- Unified billing visibility: Compare cost per token across providers in one dashboard. Knowing that Claude Sonnet costs $3 per million input tokens (MTok) versus $2.50 for GPT-4o can change routing decisions.
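As a quick worked example of that cost comparison, the sketch below applies the two input prices quoted in the list above to a hypothetical monthly workload. The 40-million-token volume is an assumption chosen for illustration, and output-token prices are left out because the text does not give them.

```python
# Input prices in USD per million tokens (MTok), as quoted in the list above.
INPUT_PRICE_PER_MTOK = {"claude-sonnet": 3.00, "gpt-4o": 2.50}

# Hypothetical workload: 40 million input tokens per month.
MONTHLY_INPUT_TOKENS = 40_000_000


def input_cost(tokens, price_per_mtok):
    """Cost in USD of sending `tokens` input tokens at a per-MTok price."""
    return tokens / 1_000_000 * price_per_mtok


monthly = {
    model: input_cost(MONTHLY_INPUT_TOKENS, price)
    for model, price in INPUT_PRICE_PER_MTOK.items()
}
cheapest = min(monthly, key=monthly.get)
savings = max(monthly.values()) - min(monthly.values())
```

At this volume the input bill is $120 versus $100 per month, a $20 difference. Whether that gap justifies rerouting depends on output pricing and quality, which this sketch deliberately ignores.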

For engineering teams juggling three or four model providers, this alone can save weeks of custom plumbing. Before AI Gateway, teams were building bespoke routing layers with custom logging — maintaining separate SDKs for each provider, writing their own retry logic, and stitching together cost dashboards from disparate billing APIs. Mosaic AI Gateway collapses all of that into a managed service with governance built in from day one.
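For a sense of the plumbing a managed gateway takes off your hands, here is a minimal sketch of ordered provider fallback. It is not how Mosaic AI Gateway is implemented; `ProviderError`, `call_with_fallback`, and the two stand-in provider functions are hypothetical names invented for this example.

```python
class ProviderError(Exception):
    """Raised when a provider call fails (outage, timeout, rate limit)."""


def call_with_fallback(prompt, providers):
    """Try each (name, call) pair in order and return (name, response)
    from the first provider that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def openai_down(prompt):
    raise ProviderError("503 service unavailable")


def claude_ok(prompt):
    return f"[claude] {prompt}"


used, reply = call_with_fallback(
    "Summarize Q3 churn.",
    [("openai", openai_down), ("claude", claude_ok)],
)
```

A hand-rolled version like this still lacks logging, cost tracking, and guardrails, which is the part of the custom stack that tends to take weeks rather than hours.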

The governance logging is the strategic bonus — you get compliance-ready audit trails without building a separate observability stack. When your legal team asks “which customer data touched which model, and when?”, the answer lives in Unity Catalog inference tables, queryable with standard SQL.
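The kind of question legal might ask can be sketched as a plain SQL query. The example below uses Python's built-in `sqlite3` as a stand-in for the warehouse, and the `inference_log` table with its columns (including `source_table`) is a simplified, hypothetical schema rather than the actual layout of Unity Catalog inference tables.

```python
import sqlite3

# In-memory database standing in for the warehouse; schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE inference_log (
           request_time TEXT,
           requester    TEXT,
           model        TEXT,
           source_table TEXT,
           input_tokens INTEGER
       )"""
)
conn.executemany(
    "INSERT INTO inference_log VALUES (?, ?, ?, ?, ?)",
    [
        ("2025-06-01T09:00:00", "analyst_a", "claude-sonnet", "crm.customers", 1200),
        ("2025-06-01T09:05:00", "analyst_b", "gpt-4o", "crm.customers", 800),
        ("2025-06-02T14:30:00", "analyst_a", "claude-sonnet", "crm.customers", 500),
        ("2025-06-03T11:00:00", "analyst_c", "gpt-4o", "finance.ledger", 950),
    ],
)


def models_touching(conn, source_table):
    """Return (model, first_seen, last_seen, request_count) for every model
    that received data from `source_table`."""
    return conn.execute(
        "SELECT model, MIN(request_time), MAX(request_time), COUNT(*) "
        "FROM inference_log WHERE source_table = ? "
        "GROUP BY model ORDER BY model",
        (source_table,),
    ).fetchall()


report = models_touching(conn, "crm.customers")
```

The point is less the query itself than the fact that the audit answer is one `GROUP BY` away once every request lands in a single governed table.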

Agent Governance: The Missing Piece Most Platforms Ignore

AI agents are the hot topic of 2025, but almost nobody talks about governing them. An agent that can browse the web, execute code, query databases, and call APIs is powerful — and dangerous without guardrails. Databricks Unity Catalog AI addresses this with Agent Bricks Supervisor Agent, now GA.

The Supervisor Agent is a managed orchestration layer that ties together multiple agents and tools, all governed by Unity Catalog. The key innovation is On-Behalf-Of (OBO) authentication: every data fetch or tool execution is validated against the human user’s existing permissions. The agent doesn’t get a god-mode service account — it inherits exactly the access level of the person who triggered it.

Why this matters: imagine a financial analyst asks an agent to summarize portfolio performance. Without OBO, the agent might access datasets the analyst isn’t authorized to see — compensation data, board-level projections, or raw trading signals that should be restricted. With Unity Catalog’s permission model, the agent is constrained to the same data boundaries as the human — automatically, without custom code. This is fundamentally different from the “just give the agent an admin API key” approach that most teams default to today.
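The on-behalf-of idea can be illustrated with a small sketch in which every tool call is checked against the invoking user's grants instead of a shared service account. This is a conceptual model only: `User`, `OboAgent`, `PermissionDenied`, and the table names are hypothetical and do not correspond to Agent Bricks or Unity Catalog APIs.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when the invoking user lacks access to a requested table."""


@dataclass(frozen=True)
class User:
    name: str
    grants: frozenset  # table names this user may read


class OboAgent:
    """Agent whose data reads are validated against the caller's grants."""

    def __init__(self, tables):
        self._tables = tables  # table name -> list of rows

    def read_table(self, user, table):
        # The check uses the human user's grants, never the agent's own identity.
        if table not in user.grants:
            raise PermissionDenied(f"{user.name} has no read access to {table}")
        return self._tables[table]


agent = OboAgent(
    {
        "portfolio.performance": [("FUND_A", 0.071), ("FUND_B", -0.012)],
        "hr.compensation": [("cfo", 900_000)],
    }
)
analyst = User("analyst", frozenset({"portfolio.performance"}))

rows = agent.read_table(analyst, "portfolio.performance")
```

If the same analyst's request led the agent to call `agent.read_table(analyst, "hr.compensation")`, the call would raise `PermissionDenied`, which mirrors the boundary described in the portfolio example above.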

Franklin Templeton is already using this in production. They built a governed fund analysis agent using Agent Bricks that combines public fund documents with internal performance data, grounded entirely in approved enterprise sources through Unity Catalog governance.

The 5-Pillar AI Governance Framework: From Theory to Catalog Enforcement

Databricks didn’t just ship features — they published a comprehensive AI Governance Framework spanning 5 pillars and 43 key considerations. This matters because most organizations know they need AI governance but have no idea where to start.

The framework covers:

- Risk Management — Identifying and quantifying AI-specific risks across the model lifecycle

- Legal Compliance — Mapping to EU AI Act, NIST AI RMF, and emerging regulations

- Ethical Oversight — Bias detection, fairness metrics, transparency requirements

- Operational Monitoring — Drift detection, performance degradation alerts, cost tracking

- Organizational Readiness — Roles, responsibilities, and cultural adoption

What makes this more than a whitepaper exercise is Unity Catalog’s ability to enforce these pillars technically. Access controls map to legal compliance. Lineage tracking maps to risk management. Inference table logging maps to operational monitoring. The framework becomes actionable because the catalog already has the hooks.

The IDC MarketScape validated this approach by naming Databricks a Leader in Worldwide Unified AI Governance Platforms 2025-2026, with the highest Strategies placement among all vendors evaluated.

Unity Catalog vs Snowflake Polaris: The Governance War Is On

No discussion of Databricks Unity Catalog AI is complete without comparing it to Snowflake’s answer: Polaris Catalog (now part of Snowflake Horizon). The two take fundamentally different approaches.

Unity Catalog was open-sourced in mid-2024, can run outside Databricks, and supports the Iceberg REST Catalog API for true multi-engine access. It governs the full AI lifecycle — data, models, features, agents, prompts, metrics. Snowflake Horizon focuses on data governance within the Snowflake ecosystem, with strong data sharing and marketplace capabilities but narrower AI asset coverage.

For teams heavily invested in the Databricks lakehouse and running multiple AI workloads, Unity Catalog’s breadth is hard to match. For Snowflake-centric organizations primarily focused on data sharing and analytics, Horizon’s native integration may be more practical. The governance war between these two will shape enterprise AI infrastructure for the next decade.

MLflow 3 and the Prompt Registry: Versioning What Matters

A frequently overlooked announcement alongside the Unity Catalog updates: MLflow 3 now includes a prompt registry. This lets organizations register, version, test, and deploy different LLM prompts as managed artifacts — treating prompts with the same rigor as model weights or feature pipelines.

Why does this matter? Because prompt engineering in most enterprises is still chaotic — prompts live in notebooks, Slack threads, or individual developers’ heads. When a production agent starts hallucinating, nobody knows which prompt version is running, who changed it, or when.

A governed prompt registry changes this. You can A/B test prompt variants with controlled rollouts, roll back to previous versions the moment quality drops, and maintain complete audit trails of which prompt produced which output. Combined with Unity Catalog’s lineage capabilities, you get full traceability from prompt version to model response to downstream business decision. For regulated industries (finance, healthcare, government), this traceability is rarely optional; it is often a legal requirement.
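The versioning and rollback behavior described here can be sketched with a small in-memory registry. This is not the MLflow 3 API; `PromptRegistry` and its methods are hypothetical and exist only to show the mechanics of immutable versions, a movable "live" pointer, and an audit log.

```python
class PromptRegistry:
    """Toy prompt registry: versions are append-only, 'live' is a pointer."""

    def __init__(self):
        self._versions = {}   # prompt name -> list of templates (index + 1 = version)
        self._live = {}       # prompt name -> version currently served
        self.audit_log = []   # (action, name, version, author) tuples

    def register(self, name, template, author):
        """Store a new immutable version and return its version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        version = len(versions)
        self.audit_log.append(("register", name, version, author))
        return version

    def promote(self, name, version, author):
        """Point 'live' at an existing version; rollback is promoting an older one."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise KeyError(f"{name} has no version {version}")
        self._live[name] = version
        self.audit_log.append(("promote", name, version, author))

    def live(self, name):
        """Return (version, template) for the prompt currently in production."""
        version = self._live[name]
        return version, self._versions[name][version - 1]


registry = PromptRegistry()
v1 = registry.register("support_summary", "Summarize the ticket: {ticket}", "dana")
v2 = registry.register("support_summary", "Summarize briefly, cite sources: {ticket}", "lee")
registry.promote("support_summary", v2, "lee")
registry.promote("support_summary", v1, "dana")  # quality dropped, roll back
current = registry.live("support_summary")
```

After the rollback, `current` points at version 1 again, and `registry.audit_log` still records who registered and promoted each version, which is the trail an incident review would need.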

What This Means for Your AI Strategy

The 12x production gap between governed and ungoverned AI teams isn’t going to shrink. As regulations tighten (EU AI Act enforcement is underway, and US executive orders on AI safety keep multiplying), the organizations that built governance into their AI stack from the start will move faster, not slower.

Databricks Unity Catalog AI represents a bet that governance and velocity aren’t opposites — they’re multipliers. By treating LLMs, agents, prompts, and metrics as first-class governed assets alongside traditional data, Databricks is positioning the catalog as the operating system for enterprise AI. Whether you use Databricks or not, the pattern is clear: govern AI like data, or get left behind.

