Finally, the model we’ve been waiting for. On February 5, 2026, Anthropic released Claude Opus 4.6, and after spending the past few days pushing it to its limits, I can tell you this: the AI landscape just shifted. With a 1M-token context window, agent teams that coordinate like a real engineering squad, and benchmark scores that leave GPT-5.2 in the dust, Claude Opus 4.6 isn’t just an incremental upgrade. It’s the moment AI stops being a tool and starts becoming a collaborator.

Let me break down exactly what makes this release so significant, and why it matters for developers, enterprises, and anyone building with AI right now.

What Is Claude Opus 4.6? The Architecture Behind Anthropic’s Flagship

Claude Opus 4.6 is Anthropic’s most capable model to date, sitting at the top of their model lineup above Sonnet and Haiku. But calling it just “more capable” undersells what’s happening under the hood. This release represents a fundamental rethinking of how AI models handle complex, multi-step work.

The core architecture improvements center around three pillars: massive context handling (1M tokens in beta), parallel agent coordination (agent teams), and adaptive compute allocation (thinking effort controls). Each of these individually would be noteworthy. Together, they create something qualitatively different from what we’ve had before.

Pricing lands at $5 per million input tokens and $25 per million output tokens — competitive with other frontier models while delivering what Anthropic claims (and benchmarks suggest) is superior performance across virtually every category that matters.

Enterprise Availability: Azure, Google Cloud, and Amazon Bedrock

Anthropic is making Claude Opus 4.6 available through every major cloud platform from day one. Enterprise teams can deploy through Microsoft Azure Foundry, Google Vertex AI, and Amazon Bedrock — meaning you can integrate it into your existing cloud infrastructure without vendor lock-in.

Microsoft’s announcement specifically highlights enterprise workflow integration, while Google Cloud’s blog post emphasizes enterprise-grade deployment options. This multi-cloud strategy is critical for organizations that need flexibility and redundancy in their AI infrastructure.

Additionally, Anthropic has expanded its Microsoft Office integrations with improvements to Claude in Excel and a new research preview for Claude in PowerPoint. These integrations bring frontier AI capability directly into the tools that knowledge workers use every day — no API integration required. For organizations that aren’t ready to build custom AI pipelines, these Office integrations provide an accessible entry point to leverage Opus 4.6’s capabilities within familiar workflows.

Claude Opus 4.6 Pricing Breakdown: Is It Worth the Investment?

At $5/$25 per million tokens (input/output), Claude Opus 4.6 sits in the premium tier of frontier model pricing. But the value calculation is more nuanced than raw per-token cost. The adaptive thinking feature means you’re not paying for maximum reasoning depth on every interaction, only on the ones that need it. The per-token rates themselves stay the same; what changes is how many thinking tokens, which are billed as output, each request consumes. A well-architected application routing 70% of queries to “low” or “medium” effort can therefore bring its average cost per request significantly below what running everything at maximum effort would cost.
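To make that routing argument concrete, here is a rough back-of-the-envelope sketch in Python. The prices are the $5/$25 figures quoted above; the traffic mix, the input size, and the output token counts per effort level are made-up assumptions for illustration, not measurements or Anthropic figures.

```python
# Back-of-the-envelope cost model. Prices come from the article;
# every token count and traffic share below is a hypothetical assumption.
INPUT_PRICE_PER_MTOK = 5.00    # USD per million input tokens
OUTPUT_PRICE_PER_MTOK = 25.00  # USD per million output tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the quoted list prices."""
    return (input_tokens * INPUT_PRICE_PER_MTOK
            + output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


# Hypothetical profile: effort level -> (share of traffic, output tokens
# per request, thinking tokens included). Input size is held constant.
ASSUMED_INPUT_TOKENS = 2_000
ASSUMED_MIX = {
    "low": (0.40, 500),
    "medium": (0.30, 2_000),
    "high": (0.30, 8_000),
}


def blended_cost(mix: dict[str, tuple[float, int]], input_tokens: int) -> float:
    """Average cost per request, weighted by each effort level's traffic share."""
    return sum(share * request_cost(input_tokens, out_tokens)
               for share, out_tokens in mix.values())


all_high = request_cost(ASSUMED_INPUT_TOKENS, ASSUMED_MIX["high"][1])
blended = blended_cost(ASSUMED_MIX, ASSUMED_INPUT_TOKENS)
print(f"everything at high effort: ${all_high:.4f} per request")
print(f"70/30 routed mix:          ${blended:.4f} per request")
```

Under these assumed numbers the routed mix averages about $0.09 per request against $0.21 when everything runs at high effort. The real gap depends entirely on how many tokens your own workload uses at each effort level, so treat the profile as a placeholder to replace with measured usage.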

The 128K output token limit also changes the economics of certain use cases. Tasks that previously required multiple sequential API calls (generating comprehensive reports, producing complete codebases, or creating detailed documentation) can now often be completed in a single call. Fewer round trips mean lower latency, simpler error handling, and in many cases lower total cost, because the shared context is no longer re-sent and re-billed as input on every call.
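The single-call saving can be sketched with the same list prices. In this minimal Python example the context size, report length, and chunk count are hypothetical, and the chunked variant assumes each follow-up call re-sends the source context plus everything generated so far, with no prompt caching applied.

```python
# Compares one long generation with several shorter sequential calls.
# Prices are the article's; context size, output size, and the number
# of chunks are hypothetical assumptions. Prompt caching is ignored.
INPUT_PRICE_PER_MTOK = 5.00    # USD per million input tokens
OUTPUT_PRICE_PER_MTOK = 25.00  # USD per million output tokens


def cost_usd(input_tokens: int, output_tokens: int) -> float:
    """USD cost of one call at the quoted list prices."""
    return (input_tokens * INPUT_PRICE_PER_MTOK
            + output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


def single_call_cost(context_tokens: int, total_output_tokens: int) -> float:
    """The context is sent once and the whole output comes back in one call."""
    return cost_usd(context_tokens, total_output_tokens)


def chunked_cost(context_tokens: int, total_output_tokens: int, chunks: int) -> float:
    """Each call re-sends the context plus all output generated so far."""
    per_chunk = total_output_tokens // chunks
    total = 0.0
    for i in range(chunks):
        prior_output = i * per_chunk  # earlier chunks fed back as input
        total += cost_usd(context_tokens + prior_output, per_chunk)
    return total


CONTEXT = 50_000   # assumed shared source material, in tokens
OUTPUT = 100_000   # assumed report length, within the 128K output limit
one = single_call_cost(CONTEXT, OUTPUT)
four = chunked_cost(CONTEXT, OUTPUT, chunks=4)
print(f"single call: ${one:.2f}")
print(f"four calls:  ${four:.2f}")
```

With these placeholder numbers the single call comes to $2.75 and the four-call version to $4.25. The output cost is identical in both cases, so the whole difference is repeated input. Caching or a smaller shared context would narrow the gap.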

What This Means for Developers, Enterprises, and AI Power Users

Claude Opus 4.6 arrives at a moment CNBC is calling the “vibe working” era — where AI doesn’t just assist with tasks but actively collaborates on complex work. The combination of agent teams, massive context, and adaptive thinking makes this model particularly well-suited for three categories of users:

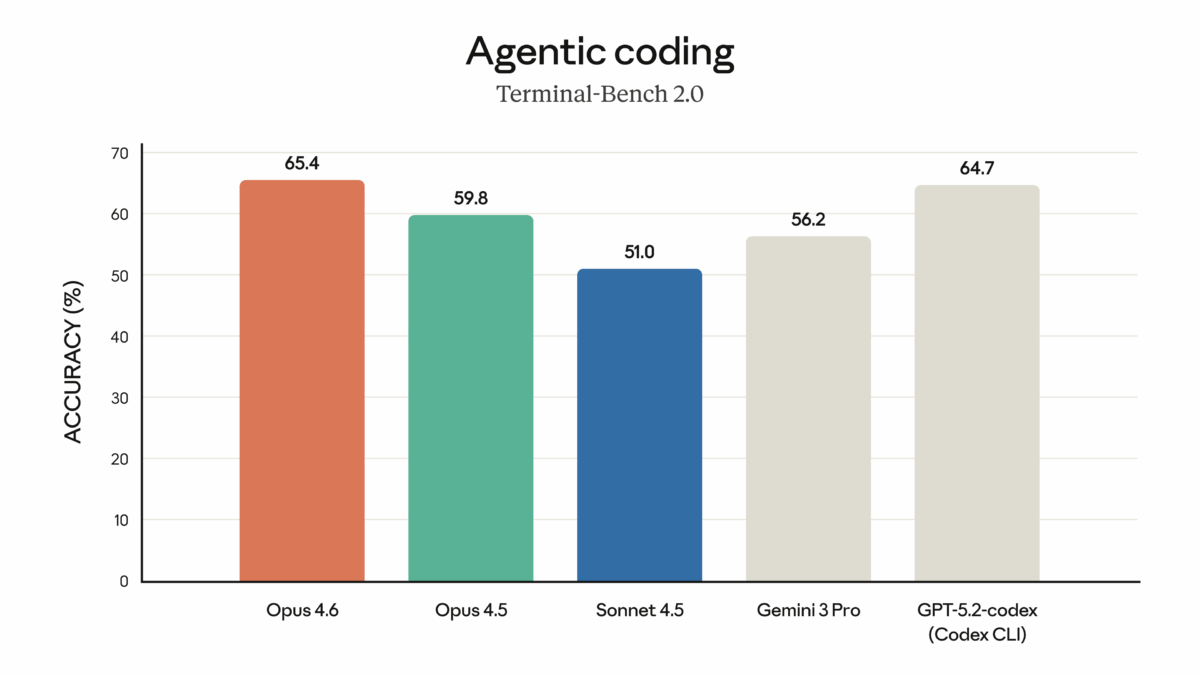

For developers, the Terminal-Bench 2.0 dominance and agent teams make Claude Opus 4.6 the strongest option for agentic coding workflows. Whether you’re building with Claude Code or integrating through the API, the ability to spin up coordinated agent teams for complex engineering tasks is a genuine productivity multiplier.

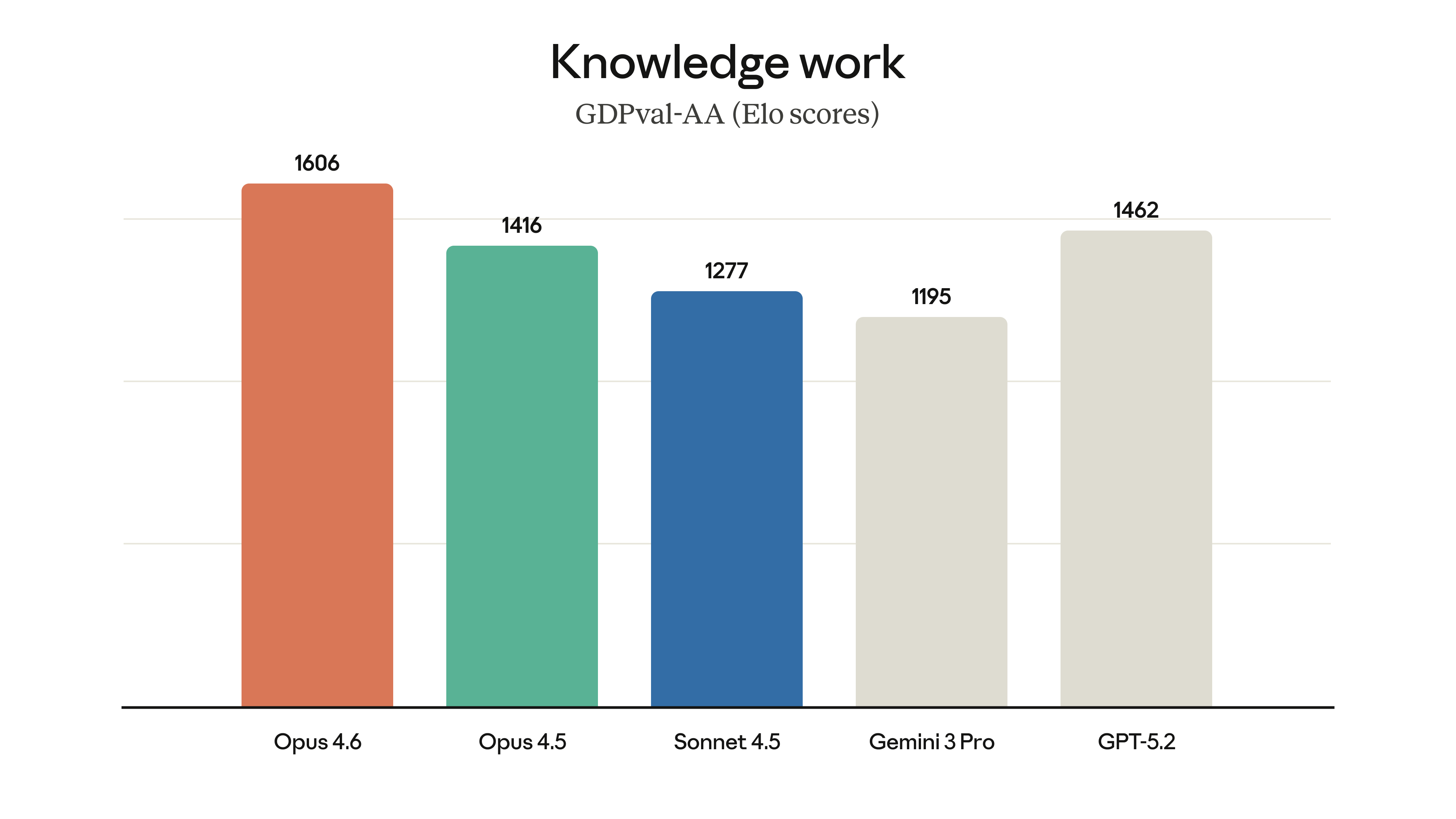

For enterprises, the multi-cloud availability, BigLaw Bench scores, and adaptive thinking controls address the three biggest concerns: deployment flexibility, domain-specific accuracy, and cost management. The 1M context window also opens new possibilities for document-heavy workflows in legal, financial, and research domains.

For AI power users and builders, this release sets a new baseline for what’s possible. The 128K output limit means longer, more complete generations. Context compaction means longer productive sessions. And agent teams mean you can architect more ambitious AI systems than ever before.

The AI model landscape in early 2026 is more competitive than ever, but Claude Opus 4.6 makes a strong case for being the most complete frontier model available. Whether the benchmarks translate perfectly to your specific use case will depend on testing — but the foundation Anthropic has built here is undeniably impressive. If you’re building anything serious with AI right now, this is the model to evaluate first.
