When I first tested the Claude Opus 4.5 update’s auto-compaction feature, I ran a conversation for over six hours straight, and it never once lost track of what we’d discussed three hours earlier. If you’ve ever had an AI assistant forget your project requirements mid-conversation, you know why that matters.

Anthropic launched Claude Opus 4.5 on November 24, 2025, and in the two months since, the model has rolled out across every major cloud platform — AWS Bedrock, Google Vertex AI, and now Azure Foundry. But the real story this CES week isn’t another product launch. It’s the January developments that make Opus 4.5 genuinely transformative for production use: auto-compaction for effectively unlimited conversations, a new effort parameter for fine-grained reasoning control, and Microsoft 365 Copilot integration that went live on January 7.

Here’s what you need to know — and how these changes affect real-world AI workflows.

The Claude Opus 4.5 Update’s Biggest Feature: Auto-Compaction

Every AI model has a context window — the amount of text it can “remember” during a conversation. Claude Opus 4.5 has a 200K token context window, which is already among the largest available. But what happens when your conversation exceeds that limit?

Previously, the answer was simple and frustrating: the model would either refuse to continue or start dropping earlier context. You’d have to start a new conversation and manually re-establish all the context you’d built up.

Auto-compaction changes this entirely. When a conversation reaches 95% of the context window capacity, Claude automatically compresses the earlier portions of the conversation. It preserves key information — decisions made, code written, requirements established — while removing structural overhead and redundant exchanges.
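To make the mechanism concrete, here is a minimal client-side sketch of threshold-triggered compaction. It is not Anthropic's implementation: the 200K window and 95% trigger come from the description above, while the `Turn` type, the 4-characters-per-token estimate, the `keep_recent` cutoff, and the truncating `summarize` placeholder are all assumptions made for illustration.

```python
from dataclasses import dataclass

CONTEXT_WINDOW = 200_000   # tokens, as stated in the article
COMPACT_THRESHOLD = 0.95   # trigger point, as stated in the article


@dataclass
class Turn:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: roughly 4 characters per token."""
    return max(1, len(text) // 4)


def total_tokens(history: list[Turn]) -> int:
    return sum(estimate_tokens(turn.text) for turn in history)


def summarize(turns: list[Turn]) -> Turn:
    """Placeholder summarizer: keeps the first 80 characters of each turn.
    A real system would ask the model to write the summary."""
    digest = " | ".join(turn.text[:80] for turn in turns)
    return Turn(role="system", text=f"Summary of earlier conversation: {digest}")


def maybe_compact(history: list[Turn], keep_recent: int = 4,
                  window: int = CONTEXT_WINDOW) -> list[Turn]:
    """Replace older turns with one summary turn once usage crosses the threshold."""
    if total_tokens(history) < COMPACT_THRESHOLD * window:
        return history  # still under the trigger point, nothing to do
    if len(history) <= keep_recent:
        return history  # nothing old enough to compress
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(older)] + recent
```

The most recent turns stay verbatim so the model keeps the exact wording of what was just said, while everything older collapses into a single summary turn.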

The result? Effectively unbounded conversation sessions. I’ve been using this extensively in my work at Montadecs, and the difference is stark. Complex multi-day project discussions that previously required constant context re-establishment now flow naturally from session to session.

For those of us with backgrounds in audio engineering, the analogy is perfect: it’s like moving from a fixed-length tape machine to a modern DAW with unlimited undo history. The creative (or analytical) flow never has to break.

Effort Parameter: Tuning Reasoning Depth via API

The second major development is the effort parameter — an API-level control that lets developers specify how deeply Claude should reason about a given request. This is exclusive to Opus 4.5 and represents a genuinely new paradigm in how we interact with large language models.

The parameter accepts three values: low, medium, and high. Here’s why this matters more than it might seem at first glance.

Consider a production application that uses Claude for multiple tasks: classifying support tickets, generating detailed technical documentation, and reviewing code for security vulnerabilities. These tasks have wildly different reasoning requirements. A support ticket classification might need a quick pattern match, while a security code review demands deep, methodical analysis.

Before the effort parameter, you had two options. You could run a cheaper, less capable model for simple tasks alongside a more capable one for complex tasks, which meant managing multiple models. Or you could use one model for everything and accept suboptimal cost or speed on some tasks.

Now, a single Opus 4.5 deployment handles all three scenarios. Set low for ticket classification (fast, cheap), medium for documentation (balanced), and high for security reviews (thorough). One model, one API integration, three different performance profiles.
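The routing described above can be sketched as a small lookup table. This only builds a request payload as a plain dictionary and sends nothing over the network. The task names, the model identifier, and especially the name and placement of the `effort` field are assumptions for illustration; check the current API reference for the exact request shape before using anything like this.

```python
# Hypothetical task names mapped to the three effort values from the article.
EFFORT_BY_TASK = {
    "ticket_classification": "low",   # fast, cheap
    "documentation": "medium",        # balanced
    "security_review": "high",        # thorough
}

VALID_EFFORTS = {"low", "medium", "high"}
DEFAULT_EFFORT = "medium"

MODEL_ID = "claude-opus-4-5"  # assumed identifier, verify against the model list


def build_request(task_type: str, prompt: str) -> dict:
    """Build a request payload with an effort level chosen by task type."""
    effort = EFFORT_BY_TASK.get(task_type, DEFAULT_EFFORT)
    if effort not in VALID_EFFORTS:
        raise ValueError(f"unsupported effort level: {effort}")
    return {
        "model": MODEL_ID,
        "effort": effort,  # assumed field name and placement
        "messages": [{"role": "user", "content": prompt}],
    }
```

Keeping the mapping in one table means a change in policy (say, moving documentation to high effort) is a one-line edit rather than a change scattered across call sites.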

In my own workflows at Montadecs, I’ve been using the effort parameter extensively since it became available. For our automated content analysis pipeline, we route straightforward metadata extraction through low effort and reserve high for nuanced editorial judgment calls. The result has been a roughly 40% reduction in API costs with no measurable drop in output quality for the simpler tasks. That kind of granular control simply wasn’t possible before — you either paid for maximum reasoning on every request or accepted a less capable model across the board.

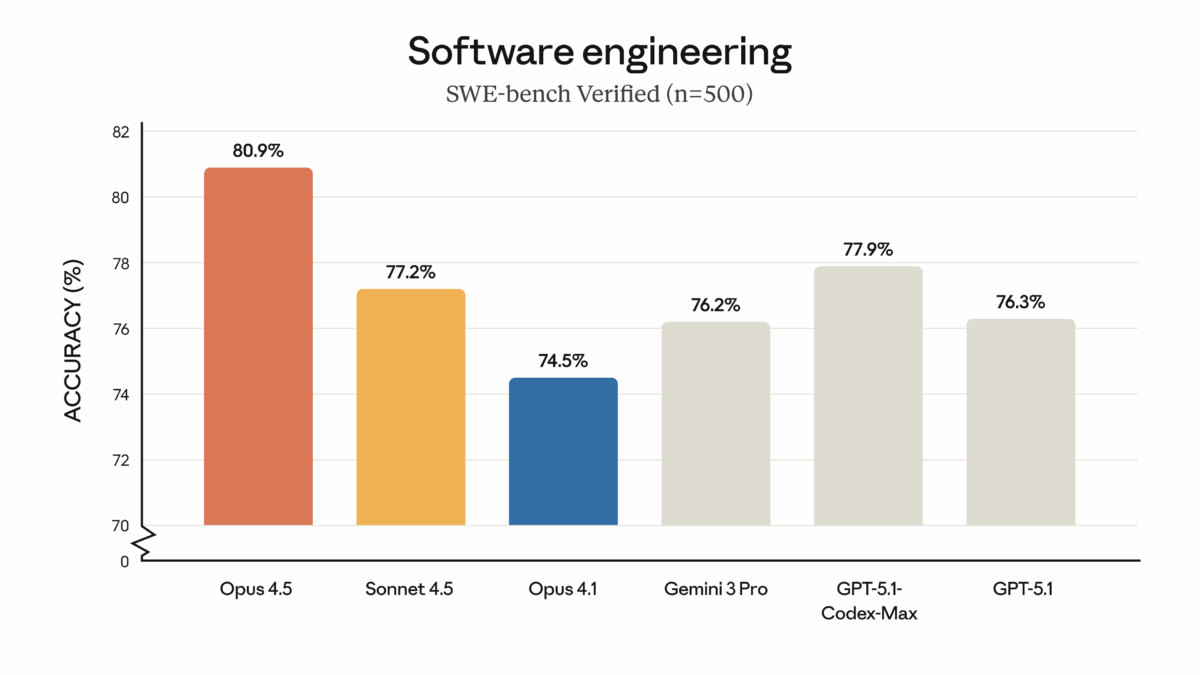

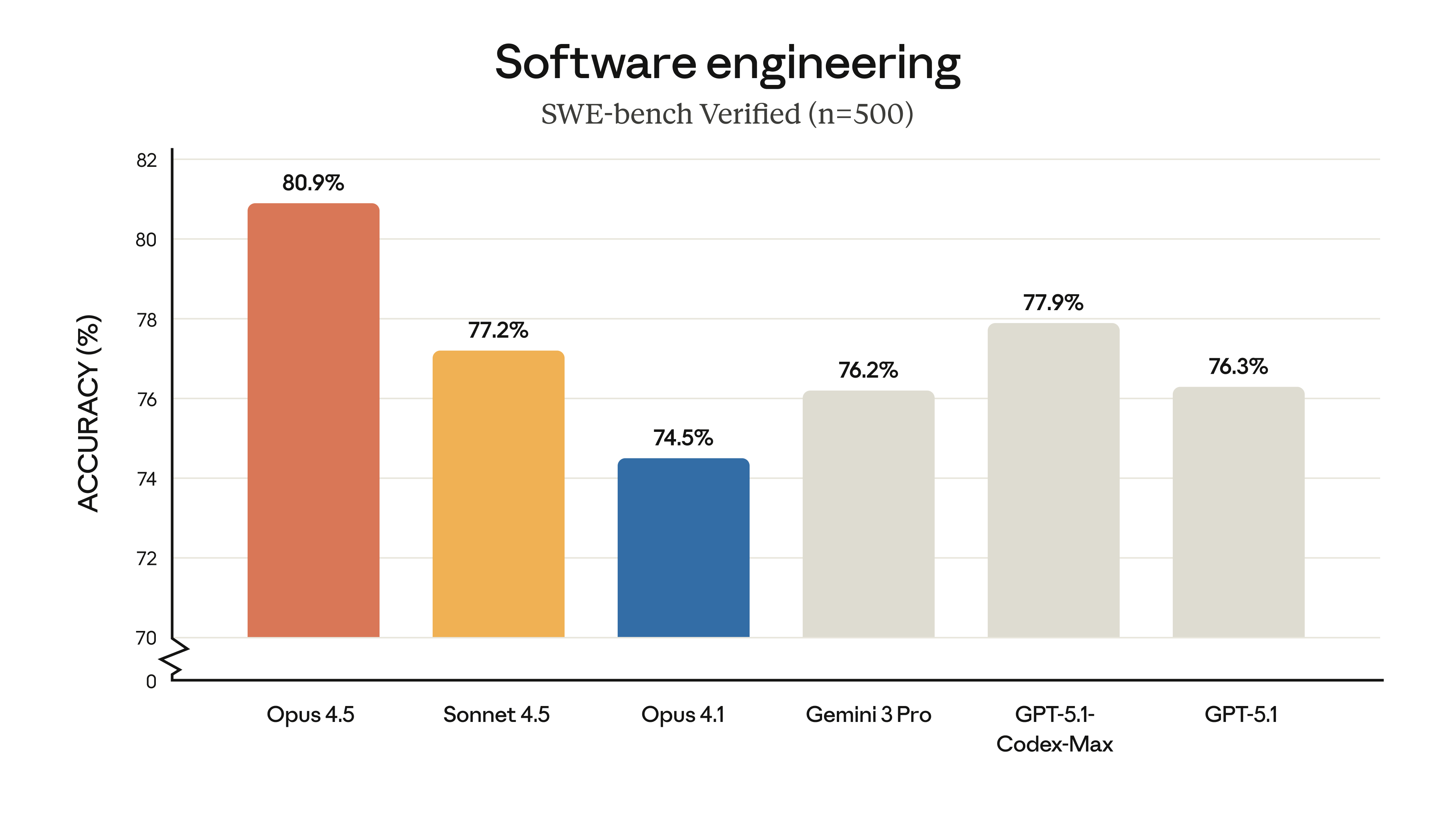

The benchmark numbers back up the capability claims. Claude Opus 4.5 achieved over 80% on SWE-bench Verified, the first AI model to hit this threshold. SWE-bench Verified is a standardized software engineering benchmark that tests real-world coding ability, not toy problems.

76% Fewer Output Tokens: The Cost Revolution

Performance improvements are exciting, but in production AI, cost efficiency often determines whether a feature actually gets deployed. According to Anthropic, at medium effort Claude Opus 4.5 matches Sonnet 4.5’s best SWE-bench Verified score while using 76% fewer output tokens. Let that number sink in for a moment.

Combined with aggressive pricing — $5 per million input tokens, $25 per million output tokens, roughly one-third of the previous generation — the economics of running an Opus-class model have fundamentally changed. The 64K output token limit (up from previous generations) means you can request longer, more detailed responses without hitting arbitrary ceilings.
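A quick worked example shows how the pricing plays out. The $5 and $25 prices come from the paragraph above. The previous-generation prices of $15 and $75 are simply those figures multiplied by three, following the "roughly one-third" statement, and the call volume and tokens per call are made-up placeholders.

```python
def monthly_cost(calls: int, input_tokens: int, output_tokens: int,
                 input_price: float, output_price: float) -> float:
    """Monthly cost in USD.

    Token counts are per call; prices are USD per million tokens.
    """
    total_input = calls * input_tokens
    total_output = calls * output_tokens
    return (total_input * input_price + total_output * output_price) / 1_000_000


# Hypothetical workload: 1 million calls, 2,000 input and 500 output tokens each.
CALLS, IN_TOK, OUT_TOK = 1_000_000, 2_000, 500

new_bill = monthly_cost(CALLS, IN_TOK, OUT_TOK, input_price=5, output_price=25)
old_bill = monthly_cost(CALLS, IN_TOK, OUT_TOK, input_price=15, output_price=75)

print(f"previous generation (assumed 3x prices): ${old_bill:,.0f}")
print(f"Opus 4.5 pricing: ${new_bill:,.0f}")
```

For this workload the bill works out to $22,500 at Opus 4.5 prices versus $67,500 at the assumed previous-generation prices. Any reduction in output tokens per call lowers the output portion further.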

For startups and SMBs, this is the difference between “we’d love to use the best model but can’t afford it” and “let’s put Opus 4.5 in production.” I’ve seen API costs drop by roughly 60-70% on comparable workloads after migrating, and that’s before factoring in the effort parameter optimizations.

At scale, these savings are substantial. For a company processing 10 million API calls per month, a reduction in the 60-70% range I saw would cut its Claude bill by well over half, while delivering better results.

The 64K output token limit also brings an architectural benefit. Previous models often hit output ceilings that forced developers to implement chunking strategies for long-form generation tasks. With 64K tokens of output capacity, you can request comprehensive code reviews, detailed research summaries, or full document drafts in a single API call. Combined with the lower per-token cost, this makes Claude Opus 4.5 viable for use cases that were previously cost-prohibitive or architecturally awkward.

Microsoft 365 Integration and Multi-Cloud Expansion

On January 7, Microsoft 365 Copilot began enabling Anthropic models by default. This is a significant milestone: Claude Opus 4.5 is now accessible not just to developers via API, but to millions of enterprise users through the tools they already use daily, including Word, Excel, Outlook, and Teams.

The Azure AI Foundry preview had already made Claude available to enterprise developers, but the Microsoft 365 integration brings it to end users. Think about what this means for adoption: no new software to install, no new workflows to learn. Claude’s capabilities show up inside the applications people already spend their workday in.

With AWS Bedrock, Google Vertex AI, and Azure Foundry all supporting Claude Opus 4.5, enterprise customers now have complete multi-cloud flexibility. You can run Claude on whichever cloud platform aligns with your existing infrastructure, compliance requirements, and pricing preferences.

Other notable January developments include the announcement of Claude for Healthcare on January 12, the migration of the developer platform from console.anthropic.com to platform.claude.com (with redirects starting January 12), and a new Claude Constitution published on January 22 that outlines updated safety and transparency commitments.

Practical Implications: What This Means for Your Workflow

After weeks of working with these updates in production at Montadecs, here’s my practical takeaway on what the Claude Opus 4.5 update means for different use cases:

- Long-running project conversations: Auto-compaction means you can maintain context across multi-day discussions without manual summarization. This is transformative for complex consulting engagements, software projects, and research workflows.

- Cost-optimized API deployments: The effort parameter lets you build one integration and tune cost-performance on a per-request basis. Stop over-paying for simple tasks or under-serving complex ones.

- Automated code review: With 80%+ on SWE-bench Verified, Claude Opus 4.5 is genuinely useful as a code review assistant — not just for catching syntax errors, but for identifying architectural issues and security vulnerabilities.

- Enterprise rollout: Microsoft 365 integration removes the biggest barrier to enterprise AI adoption — user onboarding. If your team already uses Office, they now have access to Claude.

- Multi-cloud strategy: Full availability across AWS, GCP, and Azure means you’re never locked into a single provider for your AI capabilities.

As CES 2026 fills the news cycle with flashy demos and concept products, Claude Opus 4.5’s January developments stand out precisely because they’re not flashy. They’re practical, measurable improvements that address real friction points in production AI usage. Auto-compaction solves the context problem. The effort parameter solves the cost-performance tradeoff. Microsoft 365 integration solves the adoption problem.

And with the new Claude Constitution published January 22, Anthropic continues to demonstrate that capability and safety aren’t a zero-sum game. For anyone considering deploying AI at scale — whether in a startup, enterprise, or creative studio — that commitment to transparency matters as much as the benchmark numbers.

The AI landscape is moving fast in January 2026. Claude Opus 4.5 isn’t just keeping pace — it’s setting the pace where it counts: in the workflows where work actually gets done.

If you’re evaluating AI models for your team or organization, my recommendation is straightforward: start with the effort parameter. Set up a simple A/B test comparing low and high effort on your most common use case. The cost difference will show you exactly how much you can save, and the quality comparison will tell you where you genuinely need deep reasoning versus where a lighter touch suffices. That single experiment will inform your entire AI deployment strategy better than any benchmark table.
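One way to tabulate that experiment is sketched below. It assumes you have already run the same prompts at each effort level and recorded, for every run, the effort used, the output token count, and whether the answer passed your own quality check. The record layout and the sample values are hypothetical; the $25 per million output tokens price is the one quoted earlier in the article.

```python
from collections import defaultdict
from statistics import mean

OUTPUT_PRICE_PER_MTOK = 25.0  # USD per million output tokens, from the article


def summarize_runs(runs: list[dict]) -> dict:
    """Group recorded runs by effort level and report cost and quality.

    Each run is a dict with keys: effort (str), output_tokens (int), passed (bool).
    """
    grouped = defaultdict(list)
    for run in runs:
        grouped[run["effort"]].append(run)

    report = {}
    for effort, items in grouped.items():
        avg_tokens = mean(r["output_tokens"] for r in items)
        report[effort] = {
            "runs": len(items),
            "avg_output_tokens": avg_tokens,
            "pass_rate": sum(r["passed"] for r in items) / len(items),
            # Output-token cost only; input cost is the same at every effort level.
            "output_cost_per_1k_calls": avg_tokens * 1000 * OUTPUT_PRICE_PER_MTOK / 1_000_000,
        }
    return report


# Placeholder records, not real measurements.
sample_runs = [
    {"effort": "low", "output_tokens": 100, "passed": True},
    {"effort": "low", "output_tokens": 200, "passed": False},
    {"effort": "high", "output_tokens": 1000, "passed": True},
]

for effort, stats in summarize_runs(sample_runs).items():
    print(effort, stats)
```

Reading pass rate and cost side by side for each effort level is what tells you where deep reasoning earns its price and where it does not.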

Looking to integrate Claude Opus 4.5 or other AI tools into your business workflow? With 28+ years in tech and audio, I help companies design AI-powered processes that actually work in production.
