January 5, 2026

AMD just fired the opening salvo of CES 2026. Today in Las Vegas, CEO Dr. Lisa Su took the stage to unveil the AMD Ryzen AI 400 Series, a lineup of processors that pairs Zen 5 CPU cores with a 60 TOPS XDNA 2 NPU and includes the industry's first Copilot+ certified desktop CPU. If you've been tracking the AI PC race between AMD, Intel, and Qualcomm, this is the moment the competition gets truly interesting.

AMD Ryzen AI 400 Series Flagship: The Ryzen AI 9 HX 475

At the top of the AMD Ryzen AI 400 Series sits the Ryzen AI 9 HX 475, and it’s a beast on paper. This chip packs 12 Zen 5 CPU cores with 24 threads and a generous 36MB of total cache. On the graphics side, 16 RDNA 3.5 compute units boost up to 3.1 GHz, delivering integrated GPU performance that should comfortably handle creative workloads and even casual gaming without a discrete card.

But the headline number is the NPU. Built on AMD's XDNA 2 architecture, it delivers 60 TOPS: 60 trillion operations per second dedicated to AI inference. For context, Microsoft's Copilot+ PC threshold is 40 TOPS, so AMD clears that bar by 50%. In practice, Windows 11's Copilot+ features, including Recall, Cocreator, and Live Captions with translation, can run on the NPU. That leaves the CPU and GPU largely free, with headroom for whatever Microsoft ships next.
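The threshold arithmetic can be made concrete with a short check. This minimal Python sketch tests a configuration against the three headline Copilot+ PC minimums Microsoft has published: a 40+ TOPS NPU, 16GB of RAM, and 256GB of storage. It also computes how far 60 TOPS clears the NPU floor. The `PcConfig` class and both helper functions are hypothetical. The RAM and storage values for the example laptop are assumptions, and real certification involves more than these three numbers.

```python
from dataclasses import dataclass

# Headline Copilot+ PC minimums (simplified; certification involves more).
MIN_NPU_TOPS = 40
MIN_RAM_GB = 16
MIN_STORAGE_GB = 256


@dataclass
class PcConfig:
    """Hypothetical description of a PC's AI-relevant specs."""
    npu_tops: float
    ram_gb: int
    storage_gb: int


def meets_copilot_plus_minimums(cfg: PcConfig) -> bool:
    """True if the config clears all three headline minimums."""
    return (
        cfg.npu_tops >= MIN_NPU_TOPS
        and cfg.ram_gb >= MIN_RAM_GB
        and cfg.storage_gb >= MIN_STORAGE_GB
    )


def npu_headroom_pct(npu_tops: float) -> float:
    """Percentage by which an NPU rating exceeds the 40 TOPS floor."""
    return (npu_tops / MIN_NPU_TOPS - 1) * 100


# Example: a laptop built on the Ryzen AI 9 HX 475 (RAM/storage assumed).
hx475_laptop = PcConfig(npu_tops=60, ram_gb=32, storage_gb=1024)
print(meets_copilot_plus_minimums(hx475_laptop))  # True
print(npu_headroom_pct(hx475_laptop.npu_tops))    # 50.0
```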

Memory support spans LPDDR5x-8533 for thin-and-light laptops and DDR5-5600 for desktop configurations. This dual-memory approach is key to AMD’s strategy of stretching the same silicon across form factors, from ultrabooks to compact desktops.
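Memory speed ratings such as 8533 and 5600 are given in mega-transfers per second, so peak bandwidth also depends on bus width. The sketch below computes theoretical peak bandwidth for both configurations. It assumes a 128-bit total memory bus in each case; that is an assumption, not an AMD-published figure for these SKUs. Sustained bandwidth in real workloads will be lower.

```python
def peak_bandwidth_gb_s(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal units).

    transfer_rate_mts: mega-transfers per second (the "8533" in LPDDR5x-8533)
    bus_width_bits: total data-bus width across all channels (assumed here)
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


# Assumption: 128-bit total bus for both the laptop and desktop memory setups.
print(round(peak_bandwidth_gb_s(8533, 128), 1))  # 136.5
print(round(peak_bandwidth_gb_s(5600, 128), 1))  # 89.6
```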

What 60 TOPS Actually Means for Real-World AI Workloads

Raw TOPS numbers are easy to quote but harder to contextualize. So what does 60 TOPS on the AMD Ryzen AI 400 Series NPU actually translate to in daily use? The most immediate benefit is Microsoft Copilot+ features running entirely on-device. Recall takes periodic snapshots of your screen activity and uses the NPU to index them for search, so the CPU and GPU remain free for your actual work. Cocreator generates and refines images in real time inside Paint, and Live Captions translates audio from over 40 languages. All of this is processed locally, without sending data to the cloud.

Beyond Copilot+ features, the 60 TOPS NPU opens the door to meaningful local AI inference. Quantized small to mid-size language models in the 7B to 8B parameter range, such as Mistral 7B or Llama 3 8B, can run locally at usable token generation speeds when offloaded to the NPU. This matters for developers testing AI applications, professionals who need offline AI assistants, and privacy-conscious users who want to keep their data entirely on-device. An NPU alone won't replace a dedicated GPU for training or for running 70B+ models. For everyday inference tasks, though, it provides an always-on AI accelerator that sips power instead of draining the battery.
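Token generation speed depends on more than TOPS. When a model produces text one token at a time, it typically has to stream all of its weights from memory for every token, so memory bandwidth sets a hard ceiling. This rough Python estimate makes three assumptions: a nominal 7B-parameter model, 8-bit or 4-bit quantization, and about 136.5 GB/s of peak bandwidth (LPDDR5x-8533 on a 128-bit bus). The helper names are hypothetical, and the results are upper bounds, not benchmarks.

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (ignores KV cache and runtime overhead)."""
    return params_billion * bits_per_weight / 8


def decode_ceiling_tokens_per_s(params_billion: float, bits_per_weight: float,
                                bandwidth_gb_s: float) -> float:
    """Upper bound on single-stream generation speed.

    Assumes every weight is read from memory once per generated token, so
    bandwidth, not NPU TOPS, caps the rate. Real throughput will be lower.
    """
    return bandwidth_gb_s / weight_gb(params_billion, bits_per_weight)


BANDWIDTH_GB_S = 136.5  # assumed peak for LPDDR5x-8533 on a 128-bit bus

for bits in (8, 4):
    size = weight_gb(7, bits)
    ceiling = decode_ceiling_tokens_per_s(7, bits, BANDWIDTH_GB_S)
    print(f"7B @ {bits}-bit: {size:.1f} GB of weights, <= {ceiling:.1f} tokens/s")
# 7B @ 8-bit: 7.0 GB of weights, <= 19.5 tokens/s
# 7B @ 4-bit: 3.5 GB of weights, <= 39.0 tokens/s
```

Halving the bits per weight roughly doubles the ceiling. This is one reason quantization matters so much for on-device language models.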

Image generation workloads also benefit. Stable Diffusion and similar diffusion models can offload certain inference steps to the NPU, particularly through ONNX Runtime with AMD's Vitis AI execution provider. Early developer previews suggest that generating a 512×512 image locally on a 60 TOPS NPU takes roughly 10 to 15 seconds. That is not as fast as a discrete RTX 4070, but it is remarkable for an integrated processor that fits in a laptop. For photographers and designers, AI-assisted features such as subject selection and noise reduction in Adobe Photoshop or Lightroom can run locally without taxing the main CPU, where the application supports NPU acceleration.
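For developers, targeting the NPU through ONNX Runtime usually comes down to listing execution providers in priority order. This sketch prefers AMD's Vitis AI execution provider (`VitisAIExecutionProvider`), then DirectML (`DmlExecutionProvider`), then the CPU. That ordering and the `choose_providers` helper are illustrative assumptions. Which providers are actually present depends on the installed ONNX Runtime build and drivers.

```python
from typing import Iterable, List, Sequence

# Illustrative priority order (assumption): NPU path, then GPU via DirectML, then CPU.
PREFERRED: Sequence[str] = (
    "VitisAIExecutionProvider",
    "DmlExecutionProvider",
    "CPUExecutionProvider",
)


def choose_providers(available: Iterable[str],
                     preferred: Sequence[str] = PREFERRED) -> List[str]:
    """Return the preferred providers that are available, in priority order.

    Always ends with CPUExecutionProvider so a session can still be created.
    """
    available_set = set(available)
    chosen = [p for p in preferred if p in available_set]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


print(choose_providers(["DmlExecutionProvider", "CPUExecutionProvider"]))
# ['DmlExecutionProvider', 'CPUExecutionProvider']
```

On a real machine, the input would come from `onnxruntime.get_available_providers()`. The result would then be passed as the `providers` argument of `onnxruntime.InferenceSession`.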

Seven Mobile SKUs and the Full Lineup Breakdown

AMD isn’t just dropping a flagship and calling it a day. The Ryzen AI 400 Series launches with seven mobile SKUs spanning multiple power and performance tiers. While AMD hasn’t published exhaustive spec sheets for every model yet, the lineup covers everything from ultrathin productivity notebooks to high-performance creator machines.

According to Tom’s Hardware’s detailed breakdown, the refresh brings modest clockspeed bumps across the CPU, GPU, and NPU compared to the outgoing Ryzen AI 300 Series. The architectural foundation remains Zen 5, RDNA 3.5, and XDNA 2 — this is an optimization and expansion of the platform, not a ground-up redesign.

That might sound underwhelming at first, but AMD’s real play here is platform breadth. The same architecture now extends from 15W ultrathin chips all the way up to desktop APUs, giving OEMs a consistent software and driver stack across every form factor. For IT departments and software developers targeting NPU acceleration, that consistency matters enormously.

The First Copilot+ Desktop CPU Changes the Game

Let's talk about what is arguably the most significant announcement of the day: the first Copilot+ certified desktop processor. Until today, Microsoft's Copilot+ PC program was exclusively a laptop affair. Qualcomm's Snapdragon X Elite, Intel's Core Ultra, and AMD's own Ryzen AI 300 Series were all mobile chips. Desktop users were locked out of Windows 11's most advanced AI features, waiting for a desktop processor that met the Copilot+ requirements.

AMD just ended that wait. By bringing Copilot+ certification to the AMD Ryzen AI 400 Series desktop APU, they’ve opened the door for the first wave of AI-capable Windows desktops. Mini PCs, compact workstations, all-in-ones — any system builder can now deliver the full Copilot+ experience on a desktop form factor.

This is a genuine differentiator. As PCWorld’s analysis noted, while the mobile refresh plays it relatively safe, the desktop expansion is the real story. It signals that the AI PC concept is maturing beyond the ultrabook niche into a platform-wide standard.

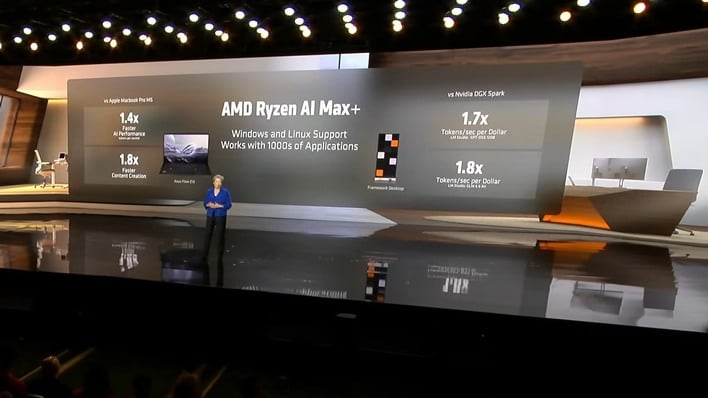

Strix Halo Unleashed: Ryzen AI Max+ 392 for Creator Workstations

AMD didn’t stop at the mainstream Ryzen AI 400 Series. Today’s announcements also included the Ryzen AI Max+ 392 and 388, codenamed Strix Halo. These are workstation-class mobile processors aimed at data scientists, 3D artists, and AI developers who need GPU horsepower that goes far beyond what a standard integrated solution can offer. Think of them as the professional tier — where the Ryzen AI 400 Series handles the mainstream, Strix Halo handles the heavy lifting.

The Ryzen AI Max+ 392 is particularly noteworthy. It pairs 16 Zen 5 cores with a massively expanded RDNA 3.5 GPU featuring up to 40 compute units, plus up to 128GB of unified memory shared by the CPU and GPU. That unified memory architecture is a direct challenge to Apple's M-series chips, which have used shared memory as a key selling point for creative professionals. Workflows like Blender rendering, DaVinci Resolve color grading, and Unreal Engine 5 scene editing benefit from a large GPU-accessible memory pool in a laptop. It reduces the bottleneck of shuttling data between discrete GPU VRAM and system RAM.

For AI developers specifically, the Strix Halo lineup is compelling because it can run much larger language models locally. The mainstream Ryzen AI 400 Series NPU handles 7B parameter models well. The Max+ 392, with its unified memory pool of up to 128GB, can load and run quantized 70B+ parameter models entirely on-device. That workload otherwise typically calls for a desktop workstation with high-end discrete GPUs. This positions AMD's Strix Halo as a serious mobile workstation platform for AI research and development.
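Whether a 70B model fits is mostly arithmetic: parameters times bits per weight, plus headroom for the KV cache and runtime. This Python sketch assumes a flat 20% overhead, an illustrative figure; real overhead grows with context length. It also treats the full 128GB as usable, which overstates the budget because the OS and applications need memory too. The helper names are hypothetical.

```python
def required_gb(params_billion: float, bits_per_weight: float,
                overhead_fraction: float = 0.2) -> float:
    """Weights (billions of params x bytes per param = GB) plus an assumed overhead."""
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * (1 + overhead_fraction)


def fits_in_memory(params_billion: float, bits_per_weight: float,
                   budget_gb: float, overhead_fraction: float = 0.2) -> bool:
    """True if the estimated requirement is within the memory budget."""
    return required_gb(params_billion, bits_per_weight, overhead_fraction) <= budget_gb


for bits in (16, 8, 4):
    need = required_gb(70, bits)
    print(f"70B @ {bits}-bit: ~{need:.0f} GB needed, fits in 128 GB: "
          f"{fits_in_memory(70, bits, 128)}")
# 70B @ 16-bit: ~168 GB needed, fits in 128 GB: False
# 70B @ 8-bit: ~84 GB needed, fits in 128 GB: True
# 70B @ 4-bit: ~42 GB needed, fits in 128 GB: True
```

Under these assumptions, the word "quantized" is doing real work. At 16-bit precision, a 70B model does not fit in 128GB at all.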

OEM Partner Announcements and Q1 2026 System Launches

On the enterprise front, AMD also unveiled the Ryzen AI PRO 400 Series for commercial PCs. These add the security features, manageability tools, and long-term support commitments that enterprise IT departments require. According to AMD’s official press release, OEM partners including Acer, ASUS, Dell, HP, GIGABYTE, and Lenovo will ship systems across all three product families starting in Q1 2026.

Several OEMs made specific announcements during CES week. ASUS confirmed new ProArt and Zenbook lines powered by the Ryzen AI 400 Series, targeting creators and business professionals respectively. Lenovo previewed ThinkPad and Yoga models with the Ryzen AI PRO 400 for enterprise deployments. HP signaled upcoming EliteBook and OmniBook refreshes featuring the new AMD silicon. GIGABYTE announced AERO and AORUS laptop updates featuring both the mainstream Ryzen AI 400 and the workstation-grade Strix Halo chips. On the desktop side, compact form factor systems from ASUS, GIGABYTE, and several boutique builders will bring Copilot+ desktop capability to market by March 2026.

The commercial angle is worth watching closely. Enterprise PC refresh cycles are massive revenue drivers, and with both Intel and AMD now pushing NPU-equipped chips for the commercial segment, the race to win corporate fleet deals will intensify throughout 2026. Gartner estimates that over 60% of enterprise PCs shipped in 2026 will include dedicated AI acceleration hardware, making the AMD Ryzen AI 400 Series a key contender in what could be the largest PC upgrade cycle since the Windows 10 transition.

AMD vs. Intel vs. Qualcomm: A Deeper Competitive Analysis

The AI PC market is a three-way battle, and each player brings a different value proposition. Intel's Core Ultra series integrates NPU capabilities within its familiar x86 platform. However, its Lunar Lake NPU tops out at 48 TOPS, and Arrow Lake's NPU is considerably smaller. That is competitive, but below AMD's 60 TOPS ceiling. Intel's strength lies in its established enterprise relationships and Thunderbolt connectivity ecosystem, but AMD's NPU advantage is difficult to dismiss in head-to-head AI workload comparisons.

Qualcomm's Snapdragon X Elite delivers impressive power efficiency on ARM-based Windows machines and boasts a 45 TOPS NPU, but it faces ongoing app compatibility questions. Windows on ARM has improved dramatically with Microsoft's Prism emulation layer. Even so, certain professional applications, particularly legacy x86 software in engineering, finance, and healthcare, still run best on native x86 hardware. The AMD Ryzen AI 400 Series offers native x86 compatibility, so legacy Windows applications run without emulation. It also carries a higher NPU rating than either the Snapdragon X Elite or Intel's Lunar Lake.
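Because all three NPUs are quoted as peak TOPS, the headline comparison reduces to simple ratios. This sketch uses only the vendor figures cited in this article (60, 48, and 45 TOPS). These are peak ratings rather than measured throughput, and `advantage_pct` is a hypothetical helper.

```python
# Peak NPU figures as cited in this article (vendor-quoted, not measured).
NPU_TOPS = {
    "AMD Ryzen AI 400 Series": 60,
    "Intel Core Ultra (Lunar Lake)": 48,
    "Qualcomm Snapdragon X Elite": 45,
}


def advantage_pct(a_tops: float, b_tops: float) -> float:
    """How much higher a's peak rating is than b's, in percent."""
    return (a_tops / b_tops - 1) * 100


amd = NPU_TOPS["AMD Ryzen AI 400 Series"]
for chip, tops in NPU_TOPS.items():
    if tops != amd:
        print(f"AMD vs {chip}: +{advantage_pct(amd, tops):.1f}% peak TOPS")
# AMD vs Intel Core Ultra (Lunar Lake): +25.0% peak TOPS
# AMD vs Qualcomm Snapdragon X Elite: +33.3% peak TOPS
```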

AMD shared its own benchmarks today showing advantages over Intel Core Ultra in Windows 11 productivity and AI workloads. These include up to 20% faster performance in Procyon AI benchmarks and measurable leads in content creation tasks. As always, vendor-supplied benchmarks should be taken with appropriate skepticism until independent reviewers can validate the claims. The raw 60 TOPS NPU spec does give AMD meaningful headroom over competitors for sustained local AI inference. Still, peak TOPS is only one factor: memory bandwidth and software support often determine real-world speed just as much, especially for LLM text generation.
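The roofline model is a standard way to see why peak TOPS and real-world speed diverge. Attainable throughput is the lower of two limits: the compute peak, or memory bandwidth multiplied by arithmetic intensity (operations per byte moved). This Python sketch makes three assumptions, none of them AMD figures: about 136.5 GB/s of bandwidth (LPDDR5x-8533 on a 128-bit bus), about 2 operations per byte for single-stream LLM decoding with 8-bit weights, and an illustrative 1000 ops/byte for a compute-heavy kernel.

```python
def attainable_ops_per_s(peak_ops_per_s: float, bandwidth_bytes_per_s: float,
                         ops_per_byte: float) -> float:
    """Roofline model: throughput is capped by compute or by memory traffic."""
    return min(peak_ops_per_s, bandwidth_bytes_per_s * ops_per_byte)


PEAK_OPS = 60e12       # 60 TOPS vendor peak
BANDWIDTH = 136.5e9    # assumed ~136.5 GB/s (LPDDR5x-8533, 128-bit bus)

# Single-stream LLM decode, 8-bit weights: each weight byte feeds one
# multiply and one add, so roughly 2 ops per byte (assumption).
decode = attainable_ops_per_s(PEAK_OPS, BANDWIDTH, 2)
# Compute-heavy kernel reusing each byte ~1000 times (illustrative).
dense = attainable_ops_per_s(PEAK_OPS, BANDWIDTH, 1000)

print(f"decode ceiling: {decode / 1e12:.3f} TOPS")  # decode ceiling: 0.273 TOPS
print(f"dense ceiling:  {dense / 1e12:.1f} TOPS")   # dense ceiling:  60.0 TOPS
```

Under these assumptions, token-by-token generation can use well under 1% of the NPU's peak, while a compute-heavy kernel can saturate it. That is why a TOPS comparison on its own says little about LLM chat speed.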

The competitive picture also extends beyond raw silicon. AMD’s ROCm software platform for AI developers got significant updates today, and partnerships with OpenAI, Luma AI, Liquid AI, and World Labs suggest that AMD is working to build the software ecosystem that historically has been NVIDIA’s moat. Whether ROCm can close the CUDA gap remains an open question, but AMD is clearly investing heavily.

“AI Everywhere, for Everyone” — What CES 2026 Means for Buyers

For consumers, the practical takeaway is straightforward. Acer, ASUS, Dell, HP, GIGABYTE, and Lenovo start shipping AMD Ryzen AI 400 Series systems in Q1 2026. Buyers will then have a compelling x86 option with the highest NPU rating among the chips compared here, broad software compatibility, and the first desktop Copilot+ option on the market. Whether you're upgrading a laptop or building a compact AI-ready desktop, AMD's CES 2026 lineup gives you options that didn't exist yesterday.

The AI PC era is no longer a concept — it’s shipping hardware. And after today’s keynote, AMD is making sure it ships everywhere.
