Finally, the dust has settled. AWS re:Invent 2025 wrapped up in Las Vegas on December 5th, and after five days of keynotes, breakout sessions, and hands-on labs, the sheer volume of announcements can feel overwhelming. I spent the week sorting through every major reveal, and here are the seven AI and ML announcements from AWS re:Invent 2025 that will actually reshape how we build, deploy, and scale intelligent applications in 2026 and beyond.

[Image: AWS re:Invent 2025 keynote stage with AI announcements]

As AWS CEO Matt Garman put it during his keynote: “AI assistants are starting to give way to AI agents that can perform tasks and automate on your behalf. This is where we’re starting to see material business returns from your AI investments.” That theme — the shift from passive AI to agentic AI — ran through virtually every major announcement at the conference, held December 1-5, 2025 in Las Vegas.

1. Amazon Nova 2 Family: AWS re:Invent 2025’s Flagship AI Models

The biggest headline was undoubtedly the Amazon Nova 2 model family. AWS didn’t just iterate — they introduced an entirely new generation of foundation models spanning text, speech, and multimodal capabilities, all with a 1 million token context window.

Nova 2 Lite is the cost-efficiency play at just $0.0003 per 1K input tokens and $0.0025 per 1K output tokens. For context, that’s roughly 10x cheaper than comparable models from competitors, making it viable for high-volume production workloads where every token counts.

Nova 2 Pro sits in the performance tier at $0.00125 per 1K input tokens and $0.01 per 1K output tokens, targeting enterprise applications that need both speed and accuracy. The Pro model consistently outperforms its predecessor across reasoning, coding, and instruction-following benchmarks.
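Because both tiers bill input and output tokens separately per 1K, estimating a workload’s cost is a simple weighted sum. Here is a minimal sketch using hypothetical placeholder rates and an assumed workload of 10,000 requests a day at 2,000 input and 500 output tokens each. To compare real tiers, swap in the per-1K rates quoted above or AWS’s current price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Per-1K-token rates in USD (placeholders, not AWS's published prices)."""
    input_per_1k: float
    output_per_1k: float


def request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: scale each token count to thousands, then apply its rate."""
    return ((input_tokens / 1000) * pricing.input_per_1k
            + (output_tokens / 1000) * pricing.output_per_1k)


def monthly_cost(pricing: TokenPricing, requests_per_day: int, input_tokens: int,
                 output_tokens: int, days: int = 30) -> float:
    """Total cost of a steady workload made of identical requests."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_day * days


if __name__ == "__main__":
    # Hypothetical tiers and workload, for illustration only.
    tiers = {
        "budget": TokenPricing(input_per_1k=0.001, output_per_1k=0.002),
        "premium": TokenPricing(input_per_1k=0.002, output_per_1k=0.010),
    }
    for name, tier in tiers.items():
        total = monthly_cost(tier, requests_per_day=10_000, input_tokens=2_000, output_tokens=500)
        print(f"{name}: ${total:,.2f} per month")
```

In both Nova 2 tiers, output tokens cost more than input tokens. As a result, response length is often the bigger cost lever.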

Nova Sonic introduces real-time speech-to-speech capabilities at $0.003 per 1K speech input tokens. This isn’t just speech recognition bolted onto a text model — it’s native bidirectional audio processing that understands tone, cadence, and context. For anyone building voice-first applications, this changes the economics entirely.

Nova Omni is the multimodal powerhouse, processing text, images, audio, and video within a single unified model. The 1M token context window means you can feed it hours of video content or thousands of pages of documents and get coherent, contextual responses.
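How much text is 1 million tokens? Here is a back-of-the-envelope sketch. It assumes two common rules of thumb: about 0.75 English words per token and 250 to 500 words per page. Neither figure comes from AWS, and Nova’s tokenizer may behave differently.

```python
def pages_that_fit(context_tokens: int, words_per_token: float = 0.75,
                   words_per_page: int = 500) -> int:
    """Rough number of text pages that fit in a context window.

    Both ratios are generic rules of thumb for English prose (assumptions,
    not Nova-specific figures), so treat the result as an order of magnitude.
    """
    words = context_tokens * words_per_token
    return int(words // words_per_page)


if __name__ == "__main__":
    for wpp in (250, 500):
        print(f"{wpp} words/page -> {pages_that_fit(1_000_000, words_per_page=wpp)} pages")
```

Under those assumptions, the window holds roughly 1,500 to 3,000 pages of plain prose. Images, audio, and video also consume tokens, so mixed-media inputs fit less.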

2. Amazon Bedrock AgentCore: The Control Plane for AI Agents

If Nova 2 provides the brains, Bedrock AgentCore provides the nervous system. Its new capabilities address what has been the biggest enterprise blocker for AI adoption: governance and control. AgentCore now offers policy controls that let organizations define exactly what their AI agents can and cannot do, quality evaluations that score agent outputs before they reach users, and episodic memory that lets agents learn from past interactions.
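Policy controls matter because they are enforced outside the model, so a persuasive prompt cannot talk its way past them. The sketch below is a hypothetical, framework-agnostic illustration of that idea: an allow-list plus a per-tool argument limit, both checked before any tool call runs. `ToolCall`, `AgentPolicy`, and `execute` are invented names and are not part of AgentCore’s API. AgentCore’s actual policy language may look quite different.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    tool: str
    args: dict


@dataclass
class AgentPolicy:
    """Hypothetical allow-list policy (illustrative, not the AgentCore Policy API)."""
    allowed_tools: set[str]
    # Optional per-tool cap on an "amount" argument, e.g. refund size.
    max_amounts: dict[str, float] = field(default_factory=dict)

    def check(self, call: ToolCall) -> tuple[bool, str]:
        if call.tool not in self.allowed_tools:
            return False, f"tool '{call.tool}' is not allowed"
        limit = self.max_amounts.get(call.tool)
        if limit is not None and call.args.get("amount", 0) > limit:
            return False, f"amount exceeds limit of {limit}"
        return True, "ok"


def execute(call: ToolCall, policy: AgentPolicy) -> str:
    """Run a tool call only if the policy permits it; otherwise refuse deterministically."""
    allowed, reason = policy.check(call)
    if not allowed:
        return f"blocked: {reason}"
    return f"executed {call.tool}"  # a real agent would dispatch to the tool here


if __name__ == "__main__":
    policy = AgentPolicy({"lookup_order", "issue_refund"}, {"issue_refund": 100.0})
    print(execute(ToolCall("issue_refund", {"amount": 250}), policy))
```

The check runs on every call, whatever the model generated. That determinism is what separates a policy from an instruction in the prompt.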

The bidirectional streaming capability is particularly noteworthy: agents can now maintain real-time conversations while simultaneously executing background tasks, making them feel far more natural and responsive. AWS also added 18 new open-weight models to Bedrock, bringing the total to nearly 100 serverless models available on the platform. That model diversity means teams can match the right model to the right task without managing any infrastructure.
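At heart, keeping a conversation going while work runs in the background is a concurrency pattern. Here is a toy illustration using Python’s standard `asyncio`. It does not use AgentCore’s streaming API. `background_task`, `converse`, and `session` are hypothetical names, and the sleeps stand in for real I/O.

```python
import asyncio


async def background_task(log: list[str]) -> str:
    """Stand-in for slow work the agent kicked off (hypothetical)."""
    log.append("task started")
    await asyncio.sleep(0.05)  # simulated I/O, e.g. calling an external service
    log.append("task finished")
    return "report ready"


async def converse(messages: list[str], log: list[str]) -> None:
    """Keep replying to the user while the background task is in flight."""
    for msg in messages:
        log.append(f"reply to: {msg}")
        await asyncio.sleep(0.01)  # yield control so the background task can progress


async def session(messages: list[str]) -> list[str]:
    log: list[str] = []
    task = asyncio.create_task(background_task(log))  # start work without blocking
    await converse(messages, log)
    log.append(await task)  # collect the result once it completes
    return log


if __name__ == "__main__":
    for line in asyncio.run(session(["hi", "status?"])):
        print(line)
```

Replies keep arriving while the task is still running, and its result is picked up when it completes.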

What makes AgentCore especially compelling is the episodic memory system. Unlike stateless AI assistants that forget everything between sessions, agents built on AgentCore can reference previous interactions, learn from past mistakes, and build contextual understanding over time. For customer service applications, this means an agent that remembers a customer’s previous issues. For development workflows, it means an agent that understands your codebase conventions and team preferences.
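Episodic memory boils down to recording what happened in each interaction and retrieving the relevant episodes next time. Below is a deliberately simple, hypothetical sketch, not the AgentCore Memory API. Episodes are stored per user, capped in number, and recalled by keyword. A production system would more likely retrieve by embedding similarity and summarize episodes before storing them.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    summary: str   # what happened in the interaction
    outcome: str   # e.g. "resolved" or "escalated"


class EpisodicMemory:
    """Hypothetical per-user episode store (illustrative, not the AgentCore Memory API)."""

    def __init__(self, max_episodes: int = 50) -> None:
        self.max_episodes = max_episodes
        self._episodes: dict[str, list[Episode]] = {}

    def record(self, user_id: str, episode: Episode) -> None:
        episodes = self._episodes.setdefault(user_id, [])
        episodes.append(episode)
        if len(episodes) > self.max_episodes:
            episodes.pop(0)  # evict the oldest episode to bound memory growth

    def recall(self, user_id: str, keyword: str, limit: int = 3) -> list[Episode]:
        """Most recent matching episodes first (naive case-insensitive keyword match)."""
        matches = [e for e in self._episodes.get(user_id, [])
                   if keyword.lower() in e.summary.lower()]
        return list(reversed(matches))[:limit]

    def as_context(self, user_id: str, keyword: str) -> str:
        """Render recalled episodes as text an agent could prepend to its prompt."""
        return "\n".join(f"- {e.summary} ({e.outcome})" for e in self.recall(user_id, keyword))
```

For example, a support agent could call `as_context(customer_id, "billing")` before answering a billing question, so the customer does not have to repeat their history.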

3. Reinforcement Fine-Tuning: 66% Accuracy Gains Without Massive Datasets

This one flew under the radar for many attendees, but it might be the most practically impactful announcement for ML engineers. AWS introduced reinforcement fine-tuning for Bedrock models that achieved a 66% accuracy improvement over base models — without requiring large labeled datasets.

Traditional supervised fine-tuning typically requires thousands of carefully curated, labeled examples. Reinforcement fine-tuning uses reward signals instead, meaning you define what “good” looks like through outcome-based feedback rather than manually labeling every training example. For domain-specific applications (legal document analysis, medical imaging interpretation, financial risk assessment), this dramatically lowers the barrier to creating specialized AI that actually works in production.
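The practical difference is that you write a scoring rule rather than a gold answer for every example. Here is a minimal sketch of such a reward function, assuming a hypothetical risk-classification task whose model output is JSON with a `label` field. The rubric and field name are assumptions, and the exact interface Bedrock expects for reward functions is not shown.

```python
import json


def reward(response: str, expected_label: str) -> float:
    """Score one model output by its outcome (hypothetical rubric).

    1.0 -> valid JSON object whose "label" matches the expected outcome
    0.2 -> valid JSON object with a "label" field, but the wrong value
    0.0 -> unparseable output, or JSON without a "label" field
    """
    try:
        parsed = json.loads(response)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(parsed, dict) or "label" not in parsed:
        return 0.0
    return 1.0 if parsed["label"] == expected_label else 0.2


if __name__ == "__main__":
    candidates = ['{"label": "high_risk"}', '{"label": "low_risk"}', "High risk, probably."]
    for c in candidates:
        print(f"{reward(c, 'high_risk'):.1f}  {c}")
```

Partial credit for well-formed but wrong answers gives a smoother signal than a strict pass/fail. In reinforcement fine-tuning generally, the model is updated to favor responses that earn higher scores.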

4. Kiro Autonomous Agent and Nova Act: AI That Actually Does Things

Garman’s keynote theme came alive with two practical agent announcements. The Kiro autonomous agent is AWS’s coding agent that can work on up to 10 tasks concurrently, maintain persistent memory across sessions, and operate across multiple repositories. Unlike simple code completion tools, Kiro understands project context, can plan multi-step implementations, and executes them autonomously.

Nova Act takes agentic AI to the browser with over 90% task reliability. This browser automation agent can navigate web interfaces, fill out forms, extract information, and complete workflows — all through natural language instructions. The 90%+ reliability figure is significant because previous browser automation approaches typically hovered around 60-70% success rates, making them impractical for production use. Nova Act crosses the threshold where you can actually trust it with real tasks.
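Why does the jump from roughly 60-70% to 90%+ matter so much? Here is a small sketch of the arithmetic, using 65% as the midpoint of the older range. It assumes each attempt succeeds independently with the same probability and that a failed attempt can be detected and retried. Real failures are often correlated, so treat these numbers as optimistic.

```python
def success_with_retries(p: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds."""
    return 1 - (1 - p) ** attempts


def expected_failures(p: float, tasks: int, attempts: int = 1) -> float:
    """Expected number of tasks still failing after all attempts, out of `tasks`."""
    return tasks * (1 - success_with_retries(p, attempts))


if __name__ == "__main__":
    for p in (0.65, 0.90):
        print(f"p={p:.2f}: one try -> {expected_failures(p, 1000):.1f} failures per 1,000 tasks, "
              f"two tries -> {expected_failures(p, 1000, 2):.1f}")
```

At 65% per attempt, about 120 of every 1,000 tasks still fail even after one retry. At 90%, about 10 do, which is far easier to handle with a human review queue.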

[Image: AWS re:Invent 2025 AI service announcements overview]

5. Amazon SageMaker HyperPod: Checkpointless Training Changes Everything

Training large AI models is expensive, and a huge chunk of that cost comes from downtime — hardware failures, node replacements, and the overhead of checkpointing. SageMaker HyperPod’s new checkpointless training feature reduces downtime by over 80% through elastic training that automatically adapts to cluster changes without stopping the training job.

In traditional setups, if a node fails during a multi-day training run, the job rolls back to the last checkpoint and every step since then has to be recomputed. Checkpointing more often limits that loss, but each save adds I/O overhead and storage cost. HyperPod’s approach keeps the training state distributed across the cluster in a way that survives individual node failures. For organizations training foundation models or large domain-specific models, this translates directly to faster iteration cycles and lower compute bills. Consider a 72-hour training run: an 80% reduction in downtime could save tens of thousands of dollars in wasted compute and, across repeated runs, shave days off the development timeline.
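To see where a figure like “tens of thousands of dollars” can come from, here is the arithmetic as a sketch. Every input is a hypothetical assumption, not an AWS figure:

- 4 node failures during the run
- hourly checkpoints, so each failure loses about half an hour of progress on average
- 2 hours to replace the node and reload state
- a cluster billed at $2,500 per hour

Checkpoint write overhead is ignored.

```python
def checkpoint_downtime_hours(failures: int, checkpoint_interval_h: float,
                              restart_h: float) -> float:
    """Expected hours lost under checkpoint/restart recovery.

    A failure at a uniformly random moment loses, on average, half a checkpoint
    interval of progress, plus the time to replace the node and reload state.
    """
    return failures * (checkpoint_interval_h / 2 + restart_h)


def savings(downtime_h: float, cluster_cost_per_h: float,
            reduction: float = 0.80) -> tuple[float, float]:
    """Hours and dollars recovered if downtime shrinks by `reduction`."""
    hours = downtime_h * reduction
    return hours, hours * cluster_cost_per_h


if __name__ == "__main__":
    # Hypothetical 72-hour run; all inputs are illustrative assumptions.
    lost = checkpoint_downtime_hours(failures=4, checkpoint_interval_h=1.0, restart_h=2.0)
    hours, dollars = savings(lost, cluster_cost_per_h=2_500.0)
    print(f"downtime {lost:.1f} h, recovered {hours:.1f} h, about ${dollars:,.0f}")
```

With these inputs, the run loses 10 hours, and an 80% cut recovers 8 of them, about $20,000. The figure scales linearly with failure count and hourly cluster cost.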

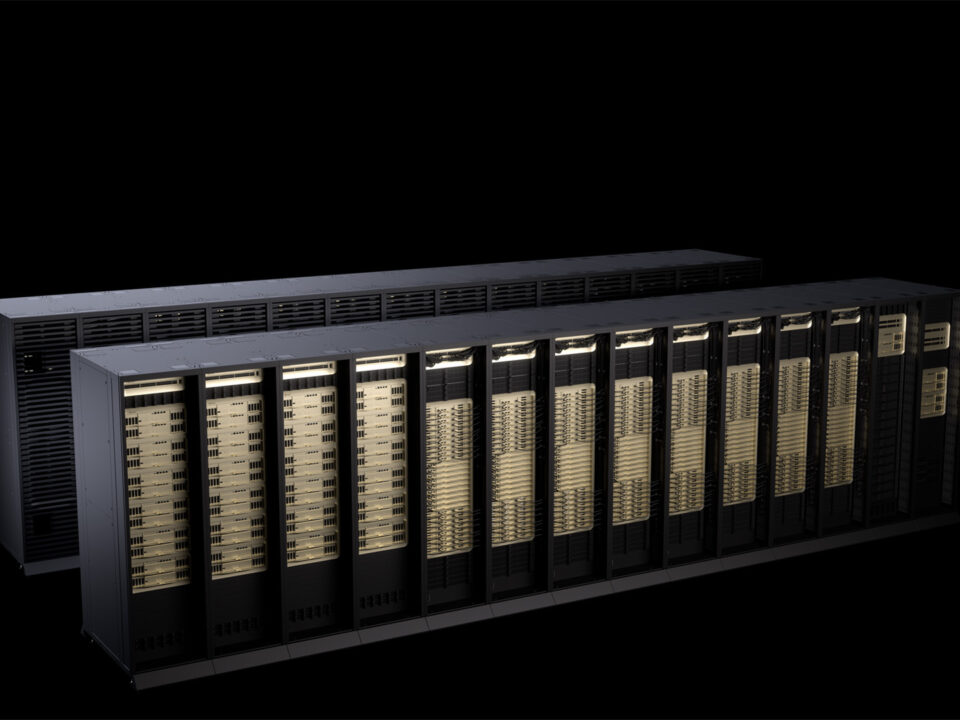

6. Trainium3 and P6e GB300: The Silicon Wars Intensify

AWS is playing both sides of the AI chip game, and playing them well. Trainium3 is Amazon’s first 3nm AI training chip, and the specs are impressive: 144 chips per UltraServer with up to 50% cost reduction compared to previous-generation training infrastructure. The 3nm process node means better performance per watt, which matters enormously at data center scale.

Simultaneously, AWS announced P6e instances powered by NVIDIA GB300 GPUs for customers who prefer the NVIDIA ecosystem. This dual-track strategy gives customers genuine choice — use Amazon’s custom silicon for maximum cost efficiency, or stick with NVIDIA for ecosystem compatibility and existing code portability. As TechCrunch noted, this positions AWS uniquely in the competitive landscape against Google and Azure, both of which are more narrowly focused on specific chip strategies.

7. Amazon S3 Vectors GA: Vector Search at S3 Scale and Pricing

The final announcement that caught my attention was Amazon S3 Vectors reaching general availability. The numbers speak for themselves: 2 billion vectors per index (a 40x increase), approximately 100ms query latency, and up to 90% cost reduction compared to specialized vector databases.

This is a strategic move that threatens the entire standalone vector database market. By embedding vector search capabilities directly into S3 — the world’s most widely used object storage — AWS eliminates the need for separate vector database infrastructure for many use cases. If your RAG pipeline or semantic search application is already running on AWS, the migration path is straightforward and the cost savings are substantial. For startups building AI-powered search, this removes an entire infrastructure component from the stack. The pricing model follows S3’s pay-per-use approach, so you’re not paying for provisioned capacity you don’t need — a stark contrast to dedicated vector database services that charge based on cluster size regardless of utilization.
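Conceptually, a vector index answers one question: which stored embeddings are closest to this query embedding? The toy sketch below answers it with exact, brute-force cosine similarity over an in-memory dict. It is not the S3 Vectors API. A service working at the 2-billion-vector scale would typically rely on index structures rather than scanning every vector per query. The keys and three-dimensional vectors are made up; real embeddings have hundreds or thousands of dimensions.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], index: dict[str, list[float]],
          k: int = 2) -> list[tuple[str, float]]:
    """Exact nearest neighbours by cosine similarity, best match first."""
    scored = [(key, cosine(query, vec)) for key, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    docs = {
        "refund-policy": [0.9, 0.1, 0.0],
        "shipping-times": [0.1, 0.9, 0.0],
        "returns-faq": [0.8, 0.2, 0.1],
    }
    for key, score in top_k([1.0, 0.0, 0.0], docs):
        print(f"{score:.3f}  {key}")
```

In a RAG pipeline, the keys returned here would point to the document chunks passed to the model as context.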

What This Means for Builders in 2026

Looking at these seven announcements together, a clear narrative emerges. AWS is building an end-to-end AI platform that addresses every layer of the stack: custom silicon (Trainium3) for training efficiency, foundation models (Nova 2) for intelligence, agent frameworks (Bedrock AgentCore, Kiro, Nova Act) for autonomous operation, and storage (S3 Vectors) for the data layer that feeds it all.

The most significant shift isn’t any single product — it’s the move from “AI as a feature” to “AI as an agent.” Every major announcement was oriented around making AI systems that can act independently, with appropriate guardrails, rather than just respond to prompts. For developers, platform engineers, and technical leaders, the message is clear: 2026 will be the year of production-grade AI agents, and AWS is betting its platform on that future.

Whether you’re exploring Nova 2 for cost-efficient inference, evaluating Trainium3 for training workloads, or building your first autonomous agent with Bedrock AgentCore, the barrier to entry has never been lower. The real question isn’t whether to adopt these tools — it’s how quickly you can integrate them into your existing workflows before your competitors do.

Building AI-powered systems or need help navigating cloud architecture decisions like these? Sean Kim offers tech consulting for AI infrastructure, automation pipelines, and cloud-native workflows.
