Samsung Galaxy Watch Ultra Review: The $649 Titanium Smartwatch That Finally Makes Wear OS Worth Wearing

August 15, 2025

Convolution Reverb Showdown: Space Designer vs Altiverb vs Free IR Loaders in 2025

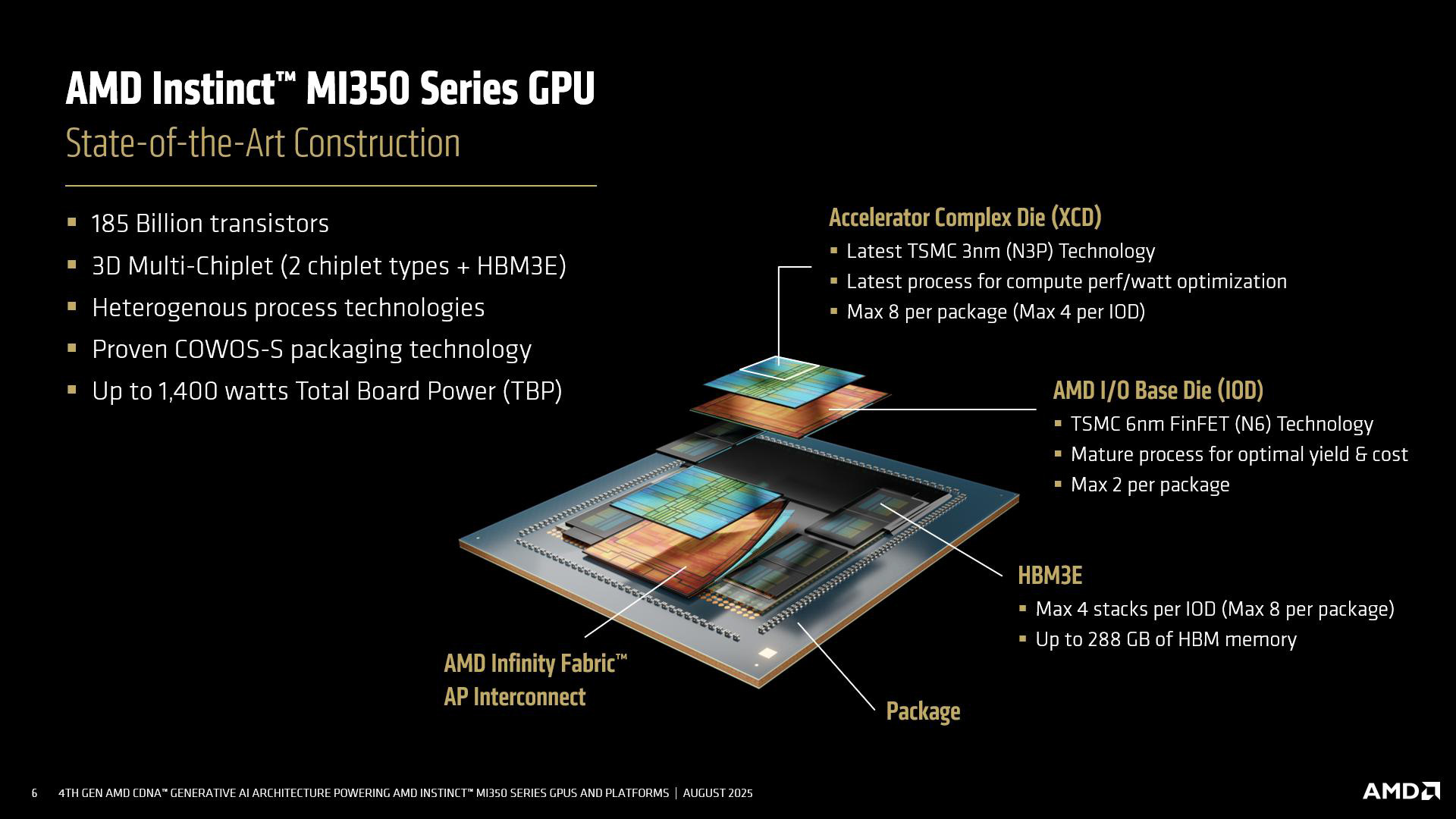

August 18, 2025185 billion transistors. 288 gigabytes of HBM3e memory. 10 petaflops of AI compute. Those aren’t projections from a roadmap slide — AMD just laid bare the full technical details of the Instinct MI350 at Hot Chips 2025, and the numbers demand attention.

AMD Instinct MI350: CDNA 4 Architecture Deep Dive at Hot Chips

At the annual Hot Chips symposium in August 2025, AMD architects presented the most comprehensive technical breakdown yet of the CDNA 4 architecture (as detailed by ServeTheHome) powering the new Instinct MI350 series. This wasn’t another marketing keynote — it was a silicon-level deep dive that revealed exactly how AMD plans to close the gap with NVIDIA in the data center AI accelerator market.

The AMD Instinct MI350 represents a generational leap from the MI300 series. Built on TSMC’s N3P process node for the compute dies and N6 for the I/O dies, the MI350 uses a sophisticated 3D chiplet design. Up to eight Accelerator Complex Dies (XCDs) are stacked on top of a pair of I/O dies using advanced CoWoS-S packaging, creating a 185 billion transistor behemoth that pushes the boundaries of semiconductor engineering.

MI350X vs MI355X: Two Variants for Different Data Center Needs

AMD is shipping the MI350 in two configurations. The MI350X targets air-cooled deployments with a 1,000W TDP and a peak engine clock of 2.2 GHz. The MI355X pushes further with liquid cooling, hitting 2.4 GHz at 1,400W — a serious power envelope, but one that AMD argues delivers unprecedented performance density.

Both variants pack 256 compute units housing 16,384 stream processors and 1,024 matrix engines. Each of the eight XCDs contains 32 CDNA 4-based compute units with 4MB of L2 cache that remains coherent across all XCDs. The memory subsystem features eight stacks of 12-Hi HBM3e memory, delivering 288GB of capacity at 8.0 TB/s of bandwidth — a massive upgrade over the MI300X’s 192GB HBM3 at 5.3 TB/s.

Performance That Puts AMD Instinct MI350 on the Map

The raw performance numbers from AMD’s Hot Chips presentation are staggering. The MI355X delivers 2.5 PFLOPS of matrix FP16/BF16 performance — a 1.9x improvement over the MI300X. At FP8 precision, which is increasingly standard for AI inference, the MI350 hits 5.0 PFLOPS, again roughly doubling the previous generation.

But the headline figure is the new MXFP6 and MXFP4 data type support. CDNA 4 introduces hardware-native FP6 and FP4 computation for the first time, enabling 10 PFLOPS of AI throughput — a capability that simply didn’t exist on previous hardware. AMD claims this translates to a 35x inference performance leap over the MI300 when running models like Llama 3.1 405B, and a 4x generational improvement in overall AI compute.

Taking on NVIDIA: How MI350 Stacks Up Against GB200

AMD didn’t shy away from competitive comparisons at Hot Chips. The company claims the MI350 delivers 2.1x higher compute output versus NVIDIA’s GB200 SXM — a bold assertion that, if validated in independent benchmarks, would mark a significant shift in the AI accelerator landscape. The Infinity Fabric 4 interconnect offers 2 TB/s more bandwidth than the IF3 used in MI300, and supports flexible NUMA configurations that could give AMD an edge in multi-GPU scaling scenarios.

The platform story matters too. The MI350X is designed as a drop-in upgrade for existing MI300/MI325 systems, lowering the barrier for data centers that have already invested in AMD’s ecosystem. Rack densities reach 96-128 GPUs per liquid-cooled rack and 64 GPUs per air-cooled configuration. AMD’s ROCm 7 software stack accompanies the hardware launch with optimizations for both inference and training workloads.

The Roadmap: MI400 in 2026 and Beyond

Perhaps most telling about AMD’s ambitions, the Hot Chips presentation also outlined the future. The MI400 series, based on CDNA 5 architecture and manufactured on a 2nm/3nm process, is slated for 2026 with promises of up to 10x more performance for AI frontier models. Further out, the MI500 on CDNA 6 with full 2nm processing is also on the roadmap. AMD is clearly committing to an annual cadence of accelerator upgrades, mirroring the aggressive pace set by NVIDIA.

The AI accelerator market has long been NVIDIA’s to lose, but the AMD Instinct MI350 represents AMD’s most credible challenge yet. With 185 billion transistors, 10 PFLOPS of AI compute, and aggressive pricing from partners, the Hot Chips 2025 presentation signals that the days of a single-vendor monopoly in AI hardware may be numbered. For data center operators evaluating their next GPU procurement cycle, the MI350 demands a seat at the table.

Whether AMD can deliver on these promises at scale — and whether ROCm 7 can close the software ecosystem gap with CUDA — will determine if these impressive specs translate into real-world adoption. But one thing is clear: Hot Chips 2025 just made the AI accelerator race a lot more interesting.

Get weekly AI, music, and tech trends delivered to your inbox.