The Democratization of Immersive Audio

For years, Dolby Atmos music production was the exclusive domain of well-funded studios with six-figure monitoring setups and experienced spatial audio engineers. In 2026, that’s no longer the case.

AI-powered tools have fundamentally changed the equation, enabling independent producers to create immersive mixes that genuinely rival studio-engineered results. From text-to-music generators with spatial awareness to intelligent stem separation tools that simplify object-based mixing, the barriers to entry have never been lower.

As someone who has spent nearly three decades in audio engineering and music production, I can tell you: this is the most significant shift in production accessibility since the rise of affordable DAWs in the early 2000s.

Why Dolby Atmos Matters More Than Ever

Before diving into the AI tools, let’s set the context. Dolby Atmos isn’t just a nice-to-have anymore — it’s becoming a commercial expectation.

Major labels like Atlantic and RCA now regularly require both stereo and Atmos masters for new releases. Apple Music, Tidal, and Amazon Music all prominently feature spatial audio content. The audience for immersive music is growing rapidly, and producers who can deliver Atmos mixes have a clear competitive advantage.

The challenge has always been the learning curve and equipment cost. That’s exactly where AI is making its biggest impact.

The AI-Powered Atmos Workflow: A Step-by-Step Guide

Here’s a practical breakdown of how AI tools fit into a modern Dolby Atmos production workflow in 2026.

Step 1: AI-Assisted Composition with Spatial Awareness

The newest generation of AI music generators doesn’t just create flat stereo tracks; it generates music with spatial characteristics baked in. Tools like Soundverse AI allow you to describe music using spatial language in your prompts.

For example, a prompt like “ambient electronic track with synth waves moving across the stereo field, deep sub-bass centered, and atmospheric textures panning overhead” gives the AI spatial context to work with. The key insight here is that prompt clarity matters — the more specific your spatial descriptions, the better the starting material for your Atmos mix.
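If you write a lot of these prompts, it can help to keep the spatial description of each element separate from the style description and assemble the prompt from parts. Here is a minimal sketch of that idea. `SpatialElement` and `build_spatial_prompt` are hypothetical names made up for this illustration; they are not part of Soundverse AI or any other tool, and the output is just a string you would paste into whatever generator you use.

```python
from dataclasses import dataclass


@dataclass
class SpatialElement:
    """One musical element plus a plain-language description of where it sits or moves."""
    name: str       # e.g. "deep sub-bass"
    placement: str  # e.g. "centered"


def build_spatial_prompt(style: str, elements: list[SpatialElement]) -> str:
    """Join a style description and per-element placements into one prompt string."""
    parts = [f"{e.name} {e.placement}" for e in elements]
    if not parts:
        return style
    if len(parts) == 1:
        detail = parts[0]
    elif len(parts) == 2:
        detail = " and ".join(parts)
    else:
        detail = ", ".join(parts[:-1]) + ", and " + parts[-1]
    return f"{style} with {detail}"


prompt = build_spatial_prompt(
    "ambient electronic track",
    [
        SpatialElement("synth waves", "moving across the stereo field"),
        SpatialElement("deep sub-bass", "centered"),
        SpatialElement("atmospheric textures", "panning overhead"),
    ],
)
print(prompt)
```

Run as written, this reproduces the example prompt quoted above. The point of the structure is only that every element is forced to carry a placement, which makes it harder to forget the spatial half of the description.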

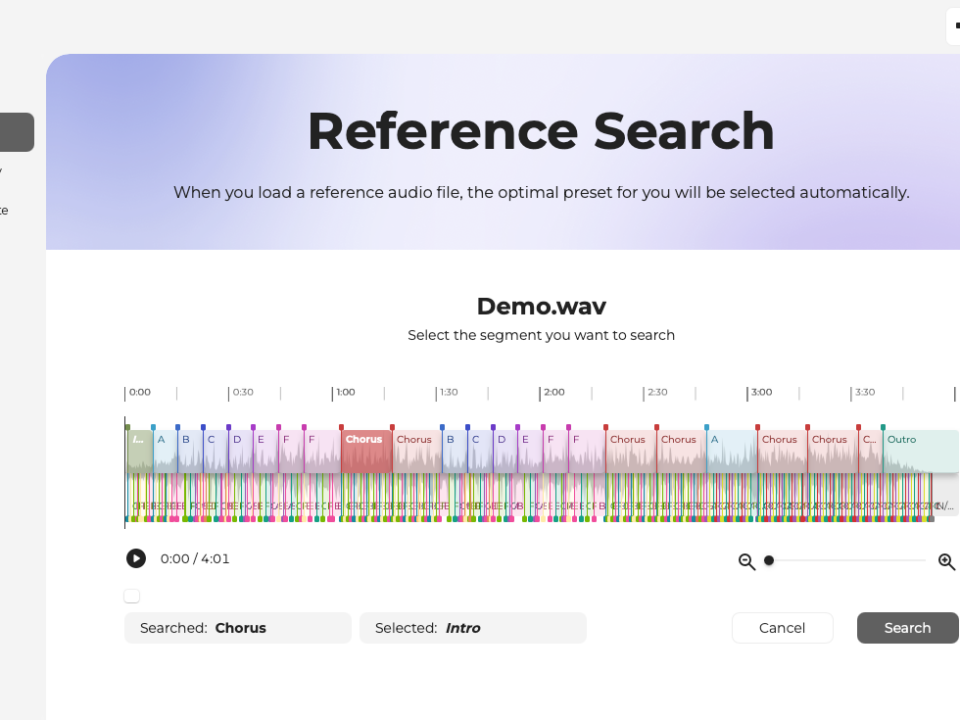

Step 2: AI Stem Separation for Object-Based Mixing

Dolby Atmos is fundamentally an object-based audio format. Each sound element can be independently positioned and moved in 3D space. This means you need clean, isolated stems — and AI has made this dramatically easier.

Modern AI stem separators can isolate:

- Vocals — clean separation even from dense mixes

- Drums — individual kit pieces (kick, snare, hats, overheads)

- Bass — clean low-end isolation

- Melodic instruments — keys, guitars, synths

- Ambient/effects layers — reverb tails, atmospheric textures

This gives you the granular control needed to place each element as a separate Atmos object — something that previously required recording stems separately or spending hours manually processing tracks.
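One quick sanity check before you start placing separated stems as objects is a null test: sum the stems, subtract the result from the original mix, and see how much is left over. The sketch below shows the arithmetic on plain Python lists of samples. The function names and the synthetic sine-wave "stems" are assumptions for illustration; in practice you would load the samples from your exported audio files with whatever library you prefer. Note that this only tells you whether the stems add back up to the mix. It says nothing about bleed between stems, which you still need to judge by ear.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of float samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def residual_db(mix, stems):
    """Level of (mix minus the sum of all stems) relative to the mix, in dB.

    More negative means the stems add back up to the original more closely.
    """
    if any(len(stem) != len(mix) for stem in stems):
        raise ValueError("all stems must have the same length as the mix")
    mix_rms = rms(mix)
    if mix_rms == 0.0:
        raise ValueError("mix is silent")
    residual = [m - sum(stem[i] for stem in stems) for i, m in enumerate(mix)]
    res_rms = rms(residual)
    if res_rms == 0.0:
        return float("-inf")  # perfect null
    return 20.0 * math.log10(res_rms / mix_rms)


# Synthetic stand-ins for real audio: one second at 48 kHz.
RATE = 48_000
vocals = [0.5 * math.sin(2 * math.pi * 440 * n / RATE) for n in range(RATE)]
bass = [0.5 * math.sin(2 * math.pi * 55 * n / RATE) for n in range(RATE)]
mix = [v + b for v, b in zip(vocals, bass)]

# A "leaky" separation that lost 10% of the bass level.
leaky_bass = [0.9 * b for b in bass]

print(residual_db(mix, [vocals, bass]))        # stems that sum back exactly
print(residual_db(mix, [vocals, leaky_bass]))  # stems that lost some energy
```

A residual that is only a few dB below the mix suggests the separator discarded or altered a lot of material, which is worth knowing before you commit to an object layout.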

Step 3: Intelligent Spatial Positioning

Once you have your stems, AI tools can suggest optimal spatial positions based on the frequency content, dynamics, and musical role of each element. Think of it as an AI assistant that understands spatial audio principles and can give you a solid starting point for your object placement.

Common AI-suggested spatial strategies include:

- Center anchoring for lead vocals and bass

- Width expansion for harmonic instruments

- Height channels for atmospheric elements and reverb

- Dynamic movement paths for transitional effects
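To make the strategies above concrete, here is a small rule-based sketch of what a "starting point" suggestion could look like: a lookup from a stem's musical role to a default position, plus a straight-line movement path between two positions. Everything in it is an assumption for illustration. The role names, the coordinate convention (x for left to right, y for rear to front, z for height) and the default values are mine, not the output of any AI tool or the panner API of any DAW, and a real assistant would presumably analyze the audio rather than rely on a label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    x: float  # -1.0 = hard left, 1.0 = hard right
    y: float  # -1.0 = rear, 1.0 = front
    z: float  # 0.0 = ear level, 1.0 = overhead
    strategy: str


# Hypothetical defaults, loosely following the four strategies in the list.
DEFAULTS = {
    "lead_vocal": Placement(0.0, 1.0, 0.0, "center anchor"),
    "bass": Placement(0.0, 1.0, 0.0, "center anchor"),
    "keys": Placement(-0.7, 0.8, 0.0, "width expansion"),
    "guitar": Placement(0.7, 0.8, 0.0, "width expansion"),
    "ambience": Placement(0.0, 0.0, 1.0, "height layer"),
    "reverb": Placement(0.0, -0.3, 0.8, "height layer"),
}

# Unknown roles go front and center until you place them by ear.
FALLBACK = Placement(0.0, 1.0, 0.0, "unclassified, place by ear")


def suggest_placement(role: str) -> Placement:
    """Return the default starting position for a stem role."""
    return DEFAULTS.get(role, FALLBACK)


def movement_path(start: Placement, end: Placement, steps: int):
    """Evenly spaced (x, y, z) points on a straight line from start to end."""
    if steps < 2:
        raise ValueError("a path needs at least two points")
    path = []
    for i in range(steps):
        t = i / (steps - 1)
        path.append((
            start.x + t * (end.x - start.x),
            start.y + t * (end.y - start.y),
            start.z + t * (end.z - start.z),
        ))
    return path


for role in ("lead_vocal", "keys", "ambience", "kazoo"):
    print(role, suggest_placement(role))

left = Placement(-1.0, 0.0, 0.0, "sweep start")
right = Placement(1.0, 0.0, 0.0, "sweep end")
print(movement_path(left, right, 3))
```

Treat the numbers as placeholders. The useful part is the shape of the workflow: a predictable default per role, and an explicit fallback for anything the rules don't recognize.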

Step 4: The Hybrid Finish — AI Meets Human Expertise

Here’s where the experienced engineer’s ear still matters enormously. AI gives you a starting point, but the final spatial mix requires human judgment about:

- Emotional impact — how spatial positioning affects the listener’s emotional response

- Translation — ensuring the mix works on headphones, soundbars, and full speaker arrays

- Dynamic range — managing the spatial dynamics across the entire song

- Genre conventions — understanding how spatial expectations differ between classical, pop, electronic, and hip-hop

The best Atmos mixes in 2026 come from this hybrid approach: AI handles the heavy lifting of stem separation and initial positioning, while the engineer brings creative vision and quality control.

Essential Tools for AI-Powered Atmos Production

Here’s a practical toolkit for producers looking to start creating Dolby Atmos content with AI assistance:

| Tool | Function | Best For |
|---|---|---|
| Logic Pro | Native Atmos authoring with AI Session Players | Apple ecosystem producers |
| Cubase | Enhanced VariAudio + Atmos renderer | Detailed vocal processing in immersive mixes |
| Soundverse AI | Text-to-music with spatial prompts | Quick spatial composition sketches |
| Dolby Atmos Renderer | Official monitoring and export | Final QC and delivery |
| AI Stem Separators | Isolate individual elements | Preparing stems for object-based mixing |

Best Practices for AI-Assisted Atmos Production

Based on my experience integrating these tools into professional workflows, here are the practices that consistently produce the best results:

- Record at high sample rates — Advanced microphone setups paired with specialized interfaces can capture at 192kHz/24-bit, giving AI tools more data to work with

- Use spatial language in AI prompts — Don’t just describe the music; describe how it should move and exist in space

- Maintain loop consistency across multi-speaker mappings — When using AI-generated loops, ensure they translate cleanly across different Atmos speaker configurations

- Adopt a hybrid workflow — Combine AI-generated elements with dedicated Atmos software like Logic Pro or Cubase for the final mix

- Test on multiple playback systems — Atmos content must translate across headphones, soundbars, and full immersive speaker arrays
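On the first point, it's worth knowing what high sample rates cost in disk space before you commit a whole session to them. The arithmetic for uncompressed PCM is simple: sample rate times bytes per sample times channels times seconds. The sketch below works it through. The 48 kHz comparison rate, the 12-stem count and the 4-minute song length are hypothetical figures I picked for the example, not numbers from any specification.

```python
def bytes_per_minute(sample_rate_hz: int, bit_depth: int, channels: int = 1) -> int:
    """Size of one minute of uncompressed PCM audio, in bytes."""
    bytes_per_sample = bit_depth // 8
    return sample_rate_hz * bytes_per_sample * channels * 60


hi_res = bytes_per_minute(192_000, 24)   # one mono stem at 192 kHz/24-bit
standard = bytes_per_minute(48_000, 24)  # same stem at 48 kHz/24-bit (assumed comparison)

print(hi_res)             # 34560000 bytes, about 34.6 MB per minute
print(standard)           # 8640000 bytes, about 8.6 MB per minute
print(hi_res / standard)  # 4.0

# Hypothetical session: 12 mono stems, 4 minutes long, all at 192 kHz/24-bit.
session_bytes = hi_res * 12 * 4
print(session_bytes / 1e9)  # roughly 1.66 GB
```

So recording at 192 kHz carries four times the data of the same material at 48 kHz. Whether that extra data actually helps a given AI tool is something to test on your own material.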

The Business Case: Why Producers Should Care

Beyond the creative possibilities, there’s a compelling business argument for learning AI-assisted Atmos production:

- Growing demand — Labels increasingly require Atmos masters alongside stereo

- Premium rates — Atmos mixing commands higher rates than stereo mixing

- Streaming prominence — Apple Music and Tidal actively promote spatial audio content

- Lower barrier to entry — AI tools mean you don’t need a $100K monitoring setup to get started

- Future-proofing — As AR/VR grows, spatial audio skills become increasingly valuable

Looking Ahead: What’s Next for AI and Immersive Audio

The convergence of AI and immersive audio is still in its early stages. Here’s what I expect to see in the next 12-18 months:

- Real-time AI Atmos mixing — AI that can spatialize a live performance in real time

- Personalized spatial audio — AI that adapts the spatial mix based on listener preferences and playback environment

- AI-generated binaural rendering — More accurate headphone-based Atmos experiences through AI head tracking

- Cross-platform spatial consistency — AI ensuring your Atmos mix sounds optimal across every playback device

Final Thoughts

The combination of AI tools and Dolby Atmos represents one of the most exciting developments in audio production this decade. For independent producers, this is an unprecedented opportunity — the tools that were once locked behind expensive studios and specialized knowledge are now accessible to anyone willing to learn.

My advice? Start now. Experiment with AI stem separation on your existing projects. Try spatializing a track using your DAW’s Atmos tools. The learning curve has never been gentler, and the rewards for early movers in immersive audio are substantial.

The future of music is spatial, and AI is your ticket in.
