January 29, 2026

The AI developer tools landscape of 2026 was rewritten in two weeks flat. GitHub Copilot shipped full agent mode on January 14, ClickHouse announced a $400M mega-round and swallowed Langfuse on January 16, and Cursor’s Background Agents now write code while you sleep. If you build software for a living, the ground beneath your feet looks nothing like it did six months ago, and the pace is only accelerating.

The Great IDE Wars: AI Developer Tools Are Redefining How We Code in 2026

The IDE market has turned into an all-out battlefield. Three contenders are fighting for developer mindshare, and each one has placed a very different bet on what agentic coding should look like.

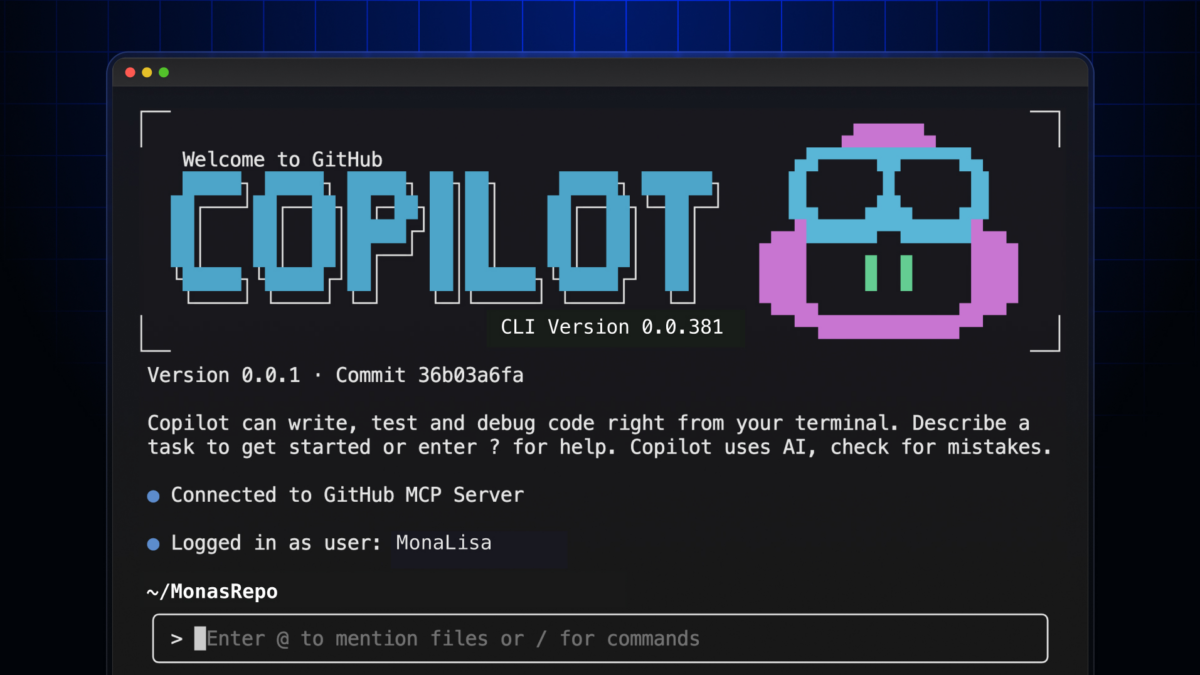

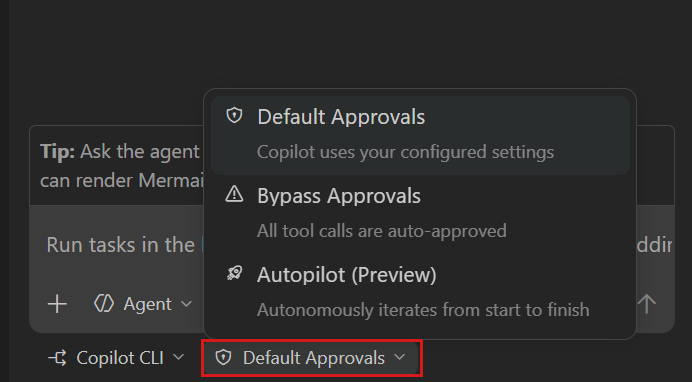

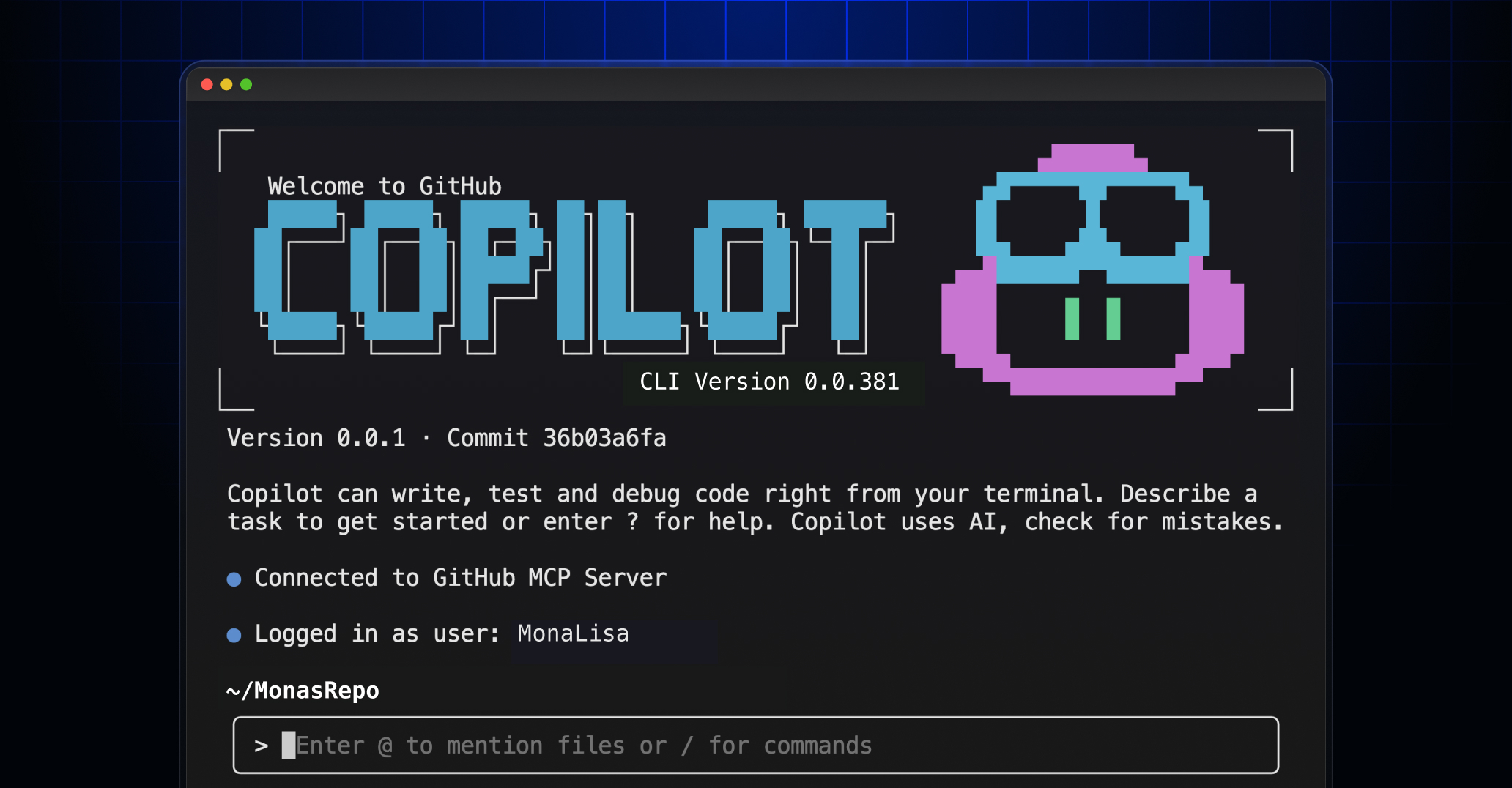

GitHub Copilot: Agent Mode Goes Live

On January 14, GitHub shipped a massive Copilot CLI update that fundamentally changes what “AI pair programmer” means. The headline features: built-in agents for Explore, Task, Plan, and Code-review workflows. GPT-5 mini and GPT-4.1 are now available as model options. Auto-compaction kicks in at 95% token usage, so long sessions don’t just crash anymore. And new scripting flags mean you can bake Copilot directly into your CI/CD pipelines.
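To make the 95% trigger concrete, here is a minimal sketch of threshold-based compaction. It is not Copilot’s implementation, which I haven’t seen. The `Session` class, the four-characters-per-token estimate, and the placeholder summary are all assumptions made up for illustration.

```python
from dataclasses import dataclass, field

COMPACTION_THRESHOLD = 0.95  # the 95% figure quoted above


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: roughly 4 characters per token."""
    return max(1, len(text) // 4)


@dataclass
class Session:
    context_limit: int  # hypothetical context window size, in tokens
    messages: list[str] = field(default_factory=list)

    def used_tokens(self) -> int:
        return sum(estimate_tokens(m) for m in self.messages)

    def add(self, message: str) -> bool:
        """Append a message; return True if compaction was triggered."""
        self.messages.append(message)
        if self.used_tokens() >= COMPACTION_THRESHOLD * self.context_limit:
            self.compact()
            return True
        return False

    def compact(self, keep_recent: int = 2) -> None:
        """Swap older messages for a one-line placeholder summary."""
        old = self.messages[:-keep_recent]
        recent = self.messages[-keep_recent:]
        if not old:
            return  # nothing old enough to fold away
        summary = f"[summary of {len(old)} earlier messages]"
        self.messages = [summary, *recent]
```

A real tool would presumably ask the model to write the summary. The point here is only the shape of the loop: measure usage after every message and fold the oldest context away before the window overflows.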

The pricing remains aggressive — $10/month for individuals, $19 for business, $39 for enterprise. GitHub is clearly betting that distribution wins: every VS Code user already has it one click away. The addition of a web fetch tool is particularly noteworthy — agents can now pull real-time documentation, API references, and stack traces from the web mid-conversation, dramatically reducing the context-switching that kills developer flow.

Cursor: Background Agents Change the Game

Cursor has taken the boldest swing of the three. Their Background Agents run on remote servers, not your laptop. You describe a task, close your lid, and come back to a pull request. It’s the closest thing we have to autonomous software development, and it’s available today from the Pro tier ($20/month) up to the Business tier ($200/month, aimed at teams with compliance needs).

The pitch is simple: stop waiting for your AI to finish. Run multiple agents in parallel. Review their output like you’d review a junior developer’s code. The workflow isn’t “AI assists you” — it’s “AI works for you.” For teams juggling multiple microservices or maintaining large monorepos, this changes the math entirely. A single developer can now spin up agents for dependency upgrades, test coverage improvements, and documentation updates simultaneously — work that would have taken a full sprint now happens overnight.
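The fan-out pattern is easy to picture in code. The sketch below uses Python’s `asyncio` and a hypothetical `run_agent` coroutine that sleeps instead of doing real work. It says nothing about how Cursor schedules its agents; it only shows several tasks running concurrently with the results collected for review.

```python
import asyncio


async def run_agent(task: str, seconds: float) -> str:
    """Hypothetical agent: sleeps to simulate remote work, then reports."""
    await asyncio.sleep(seconds)
    return f"PR ready: {task}"


async def run_all(tasks: dict[str, float]) -> list[str]:
    # gather() runs the coroutines concurrently and returns results
    # in the same order the tasks were passed in.
    return await asyncio.gather(
        *(run_agent(name, seconds) for name, seconds in tasks.items())
    )


if __name__ == "__main__":
    results = asyncio.run(
        run_all(
            {
                "dependency upgrades": 0.03,
                "test coverage improvements": 0.02,
                "documentation updates": 0.01,
            }
        )
    )
    for line in results:
        print(line)
```

Total wall-clock time is roughly that of the slowest task rather than the sum of all three, which is the whole appeal of running agents in parallel.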

Windsurf: Cascade Goes Full Agentic

Windsurf’s Cascade feature offers a different flavor of agentic development. Where Cursor focuses on background execution, Windsurf emphasizes persistent context and deep codebase understanding. Cascade maintains awareness across your entire project, making multi-file edits that feel less like search-and-replace and more like refactoring from a developer who actually understands your architecture. At $15/month, it’s the value play in this three-way race.

Here’s the real question: does it matter which one “wins”? I don’t think so. The bigger story is that all three have converged on the same conclusion — the future of coding isn’t autocomplete, it’s agents. The IDE is becoming an orchestration layer for AI workers.

ClickHouse Acquires Langfuse: Why AI Observability Is the New Must-Have Infrastructure

On January 16, ClickHouse announced a $400 million Series D at a $15 billion valuation — and in the same breath, revealed it had acquired Langfuse, the open-source LLM observability platform. This isn’t just a database company getting bigger. It’s a signal that the AI infrastructure stack is consolidating fast.

Why does this matter? Because as AI agents become production-critical — not just coding assistants but customer-facing services, internal automation, decision-making pipelines — you need to see what they’re doing. Langfuse provides tracing, evaluation, and monitoring for LLM applications. Think of it as Datadog for your AI stack.

The numbers tell the story: ClickHouse now has 2,000+ paying customers including 19 of the Fortune 50. ARR grew over 250% year-over-year. And critically, Langfuse stays MIT open-source. ClickHouse isn’t buying Langfuse to lock it down — they’re buying it because observability data is the perfect workload for a columnar database optimized for analytics at scale.

For developers, the practical takeaway is clear: if you’re shipping LLM-powered features without observability, you’re flying blind. Langfuse (or a similar tool) should be in your stack alongside your vector database and your model provider.

What makes the ClickHouse-Langfuse combination particularly interesting is the data pipeline it creates. Every LLM call generates trace data — latency, token counts, prompt-response pairs, evaluation scores. That data needs to be stored, queried, and analyzed at speed. ClickHouse’s columnar architecture can ingest millions of trace events per second and return analytical queries in milliseconds. It’s a natural fit that neither company could fully deliver alone.
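To show what that trace data looks like, here is a small standard-library sketch that wraps a model call and records one event per invocation. It deliberately does not use the Langfuse SDK. `TraceEvent`, `traced`, `fake_model`, and the whitespace token count are all hypothetical stand-ins.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class TraceEvent:
    prompt: str
    response: str
    latency_ms: float
    prompt_tokens: int
    response_tokens: int


def count_tokens(text: str) -> int:
    """Whitespace split as a crude stand-in for a real tokenizer."""
    return len(text.split())


def traced(
    model_call: Callable[[str], str], sink: list[TraceEvent]
) -> Callable[[str], str]:
    """Wrap a model call so every invocation appends a TraceEvent to sink."""

    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        response = model_call(prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        sink.append(
            TraceEvent(
                prompt=prompt,
                response=response,
                latency_ms=latency_ms,
                prompt_tokens=count_tokens(prompt),
                response_tokens=count_tokens(response),
            )
        )
        return response

    return wrapper


def fake_model(prompt: str) -> str:
    """Hypothetical model: returns a canned reply instead of calling an API."""
    return "looks good to me"
```

In production the `sink` would be a client that ships events to an observability backend instead of a Python list, but the fields are the ones listed above: latency, token counts, and the prompt-response pair.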

The API Pricing Race: Costs Are Plummeting and That Changes Everything

Remember when GPT-4 API calls cost $25-60 per million tokens? Those days are gone. GPT-5.2 now runs at $1.75 per million input tokens and $14 per million output tokens. Input pricing is down more than 90% against even the low end of that old range, and output pricing has fallen by roughly three-quarters against the high end, all in barely two years. Anthropic’s Claude models are winning roughly 70% of enterprise head-to-head evaluations, which adds serious pricing pressure across the board.

This pricing collapse has a cascading effect. Features that were economically unviable six months ago — running an AI agent on every pull request, real-time code review on every commit, natural language interfaces for internal tools — are now table stakes. The cost barrier to agentic development has essentially evaporated.

For indie developers and small teams, this is transformative. You can build sophisticated AI-powered applications without burning through your runway on API bills. The constraint has shifted from “can we afford the tokens” to “can we build the orchestration.”

Consider the math: running a code review agent on every pull request at GPT-4 prices would have cost a 10-person team roughly $3,000-5,000 per month. At current GPT-5.2 rates, the same workload costs under $300. That’s the difference between a “nice to have” and a default part of your development workflow. And with models like Claude continuing to push the quality ceiling while maintaining competitive pricing, the value proposition only improves.
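Here is that arithmetic as a back-of-the-envelope script. The GPT-5.2 rates are the ones quoted earlier. Everything else is an assumption I picked to land in the same ballpark: the old rates take $25 and $60 from the quoted range as input and output prices, and the workload (400 reviews a month, 300,000 input tokens and 12,500 output tokens per review) is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


def monthly_cost(
    rates: Rates,
    reviews: int,
    input_tokens_per_review: int,
    output_tokens_per_review: int,
) -> float:
    """Monthly spend in USD for a fixed review workload."""
    input_millions = reviews * input_tokens_per_review / 1_000_000
    output_millions = reviews * output_tokens_per_review / 1_000_000
    return (
        input_millions * rates.input_per_million
        + output_millions * rates.output_per_million
    )


# Assumed old rates: low and high ends of the "$25-60" range quoted above.
GPT4_ASSUMED = Rates(input_per_million=25.0, output_per_million=60.0)
# Current rates as quoted above.
GPT52 = Rates(input_per_million=1.75, output_per_million=14.0)

# Hypothetical workload for a 10-person team.
REVIEWS_PER_MONTH = 400
INPUT_TOKENS_PER_REVIEW = 300_000
OUTPUT_TOKENS_PER_REVIEW = 12_500

if __name__ == "__main__":
    for label, rates in (("old", GPT4_ASSUMED), ("new", GPT52)):
        cost = monthly_cost(
            rates,
            REVIEWS_PER_MONTH,
            INPUT_TOKENS_PER_REVIEW,
            OUTPUT_TOKENS_PER_REVIEW,
        )
        print(f"{label}: ${cost:,.2f} per month")
```

Under these assumptions the workload comes to $3,300 a month at the old rates and $280 at the new ones. Because the workload is input-heavy, the steep drop in input pricing does most of the work; an output-heavy workload would see a smaller saving.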

The Agentic Development Paradigm: From Protocols to Production

The biggest structural shift happening right now isn’t any single tool — it’s the emergence of standards for how AI agents communicate and collaborate. Google’s Agent-to-Agent (A2A) protocol, which merged with the Agent Communication Protocol, has been donated to the Linux Foundation with backing from 150+ organizations. Anthropic’s Model Context Protocol (MCP) is rapidly becoming the standard for how agents connect to tools and data sources.
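For a feel of what “connecting to tools” means on the wire: MCP messages are JSON-RPC 2.0, and as I read the specification a tool invocation is a `tools/call` request carrying the tool name and its arguments. The sketch below only builds that request. The tool name `search_docs` and its `query` argument are hypothetical, and transport, initialization, and response handling are left out.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects each request to carry one.
_request_ids = count(1)


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # "search_docs" is a made-up tool for illustration.
    print(tool_call_request("search_docs", {"query": "rate limits"}))
```

The value of the standard is that any agent able to emit this message can use any server that understands it, without a custom integration per tool.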

The Linux Foundation’s new Agentic AI Foundation is the clearest sign yet that agentic development is moving from experimental to enterprise-grade. When you see foundations forming around protocols, you know the technology has crossed the chasm from “cool demo” to “production infrastructure.”

What does this mean in practice? Enterprise adoption of agentic AI is accelerating, with development timelines reportedly shrinking by 30-50% in organizations that have fully integrated these tools. Multi-agent orchestration, where specialized agents handle research, writing, testing, and deployment in coordinated workflows, is becoming the new standard for software teams that want to stay competitive.
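Stripped to its skeleton, a coordinated workflow like that is a chain of specialized stages passing shared state along. The sketch below is a toy version under that assumption. The four stage functions are hypothetical placeholders for real agents, and a production orchestrator would add retries, branching, and the observability discussed earlier.

```python
from typing import Callable

# A stage takes the shared state and returns an updated copy.
Stage = Callable[[dict], dict]


def research(state: dict) -> dict:
    return {**state, "notes": f"notes on {state['topic']}"}


def write(state: dict) -> dict:
    return {**state, "draft": f"draft from {state['notes']}"}


def check(state: dict) -> dict:
    # Placeholder "testing" agent: passes if a non-empty draft exists.
    return {**state, "passed": bool(state.get("draft"))}


def deploy(state: dict) -> dict:
    # Only ship work that passed the check stage.
    return {**state, "deployed": state["passed"]}


def run_pipeline(stages: list[Stage], state: dict) -> dict:
    """Run each stage in order, feeding its output to the next one."""
    for stage in stages:
        state = stage(state)
    return state


if __name__ == "__main__":
    final = run_pipeline([research, write, check, deploy], {"topic": "MCP"})
    print(final)
```

Each stage only reads and extends the shared state, so you can swap one agent for another without touching the rest of the chain.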

What This Means for Developers Building Right Now

I’ve been building with these tools daily — this blog itself runs on a multi-agent pipeline where specialized AI agents handle research, content generation, image processing, and publishing in a coordinated workflow using MCP. Here’s what I’ve learned from the trenches:

- Pick your IDE based on workflow, not hype. If you need background execution and parallel agents, Cursor is hard to beat. If you want deep codebase context at a lower price, Windsurf Cascade is excellent. If you’re already embedded in the GitHub ecosystem and need CI/CD integration, Copilot’s new agent mode is the natural choice.

- Invest in observability early. The ClickHouse-Langfuse acquisition isn’t just industry news — it’s a reminder that production AI systems need monitoring. Set up tracing before you scale, not after something breaks in production.

- Design for agents, not for prompts. The A2A protocol and MCP standardization mean your architecture should support agent-to-agent communication. Build modular, tool-equipped agents rather than monolithic prompt chains.

- Ride the pricing wave. The 90%+ drop in API costs means you should be re-evaluating every “too expensive” idea from last year. That AI-powered feature you shelved? It’s probably viable now.

The January 2026 announcements aren’t just incremental updates. They represent a phase transition in how software gets built. The developers who thrive won’t be the ones who pick the “right” tool — they’ll be the ones who build systems that leverage all of these tools together, with proper observability, standardized protocols, and an architecture designed for agentic collaboration.

Whether you’re a solo developer building your next SaaS or a team lead managing a fleet of AI agents, the message is the same: the infrastructure is ready, the costs are down, and the standards are forming. The only question left is what you’re going to build with it.
