Why AI Will Transform Music Production in 2026 — And What Producers Need to Know

March 10, 2026


Day two of GDC 2026, and the announcements are already hitting hard. Microsoft just embedded machine learning directly into the DirectX graphics pipeline. Tencent unveiled an AI engine that generates 3D assets from a single text prompt. And in less than a week, Jensen Huang takes the GTC 2026 stage in San Jose. Welcome to the 2026 AI developer conference season, the most consequential stretch of tech announcements this year.

After 28 years in the music and audio industry, I’ve watched plenty of “paradigm shifts” come and go. But what’s unfolding this March is different. AI isn’t being bolted onto creative tools as a novelty feature anymore — it’s being woven into the fundamental infrastructure. From GPU-native machine learning to multi-agent development workflows, this conference season is drawing the blueprint for how every creative professional will work in the next five years.

GDC 2026 Live: The AI Developer Conference 2026 Highlights So Far

The Game Developers Conference is running March 9-13 at San Francisco’s Moscone Center, and this year’s AI focus is unprecedented. Here are the announcements that matter most.

Microsoft DirectX ML: Machine Learning Goes Native in the Graphics Pipeline

Microsoft’s DirectX innovations at GDC 2026 could reshape real-time graphics as we know them. The headline features include Cooperative Vectors in Shader Model 6.9, DirectX Linear Algebra for ML workloads directly within the graphics pipeline, and a Compute Graph Compiler that enables native ML model execution on GPU hardware. A DXR 2.0 preview is also slated for summer 2026.

Why does this matter beyond gaming? Until now, AI inference in real-time applications required separate processing pipelines, adding latency and complexity. With ML integrated natively into the rendering pipeline, neural rendering becomes a first-class citizen. For audio professionals like me, this same architectural shift could eventually bring GPU-accelerated ML to spatial audio processing and real-time sound design — the implications extend far beyond game graphics.

Tencent AI Summit: HY 3D Engine and Multi-Agent Scene Reasoning

Tencent Games is hosting its AI Summit today (March 10) at GDC, showcasing the HY 3D AI Creation Engine — a tool that generates production-quality 3D assets from text descriptions, images, or rough sketches. The system includes multi-agent scene layout reasoning, meaning AI agents can collaboratively design entire environments, not just individual objects. Over 20 sessions cover how major studios are deploying AI in actual production pipelines.

Unity also announced AI Gateway integration with MCP (Model Context Protocol), accelerating the move toward agentic workflows inside game engines. The pattern is clear: AI agents are no longer external tools — they’re becoming embedded collaborators within the development environment itself.

The Developer Sentiment Paradox: Using AI While Fearing It

Perhaps the most revealing data point from GDC 2026 comes from the State of the Game Industry survey. Among 2,300+ developers polled, 52% said generative AI has a negative impact on the industry, a sharp jump from 30% last year. Yet 36% are actively using AI tools at work, with ChatGPT dominating at 74% adoption among AI users. A full 81% use AI for research and brainstorming, while visual artists remain the most opposed group at 64%.

This paradox defines the current moment perfectly. Developers are adopting AI tools while simultaneously worrying about their broader implications. It’s not hypocrisy — it’s the messy reality of a technology transition. The tools are too useful to ignore, but the concerns about creative displacement are too real to dismiss.

NVIDIA GTC 2026 Preview: The Main Event Is Next Week

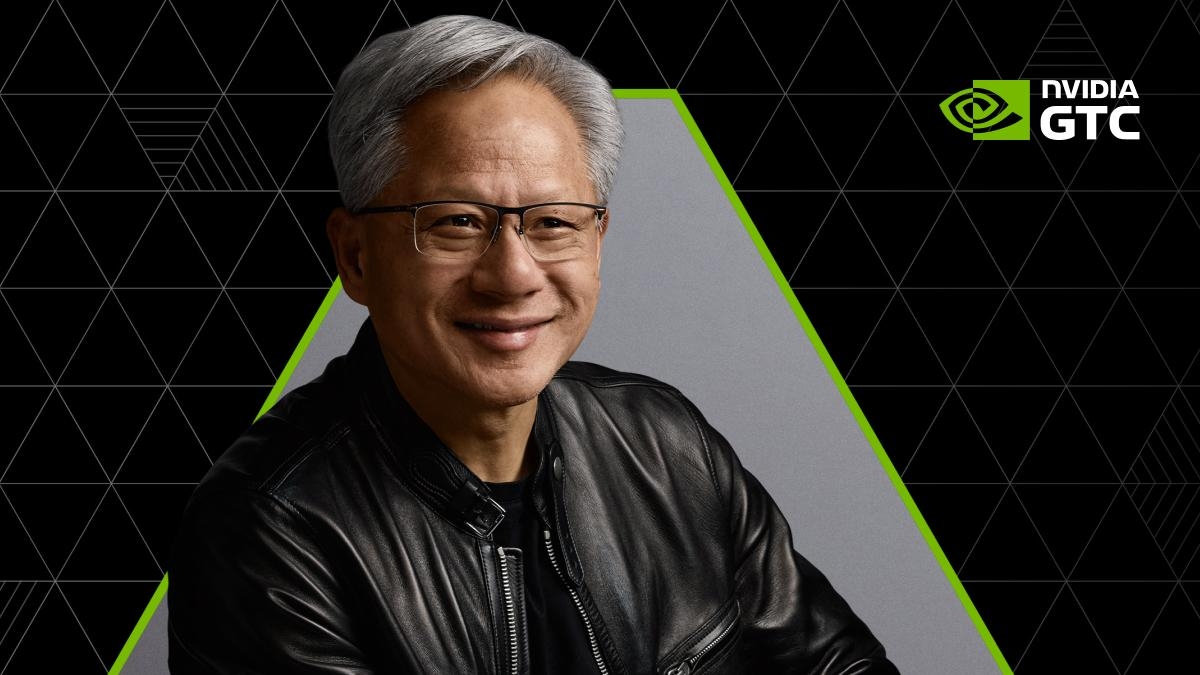

If GDC is showing us how AI integrates into creative production, next week’s NVIDIA GTC 2026 (March 16-19 in San Jose) will reveal the infrastructure powering it all. Jensen Huang’s keynote on March 16 at SAP Center is the most anticipated tech presentation of the month, with over 30,000 attendees from 190 countries expected and more than 700 sessions on the schedule.

The confirmed speaker lineup alone signals the scale of this event: Perplexity CEO Aravind Srinivas, LangChain CEO Harrison Chase, and Mistral AI CEO Arthur Mensch are all presenting. The announced focus areas — agentic AI, physical AI, and AI factories — suggest we’ll see announcements about new hardware architectures and software platforms that will define AI compute for the next generation.

For anyone building AI-powered workflows — whether in game development, music production, video, or enterprise software — Jensen’s keynote is must-watch. NVIDIA has consistently used GTC to debut hardware and platforms that reshape entire industries. Last year’s announcements are still rippling through every creative sector.

Why This AI Developer Conference 2026 Season Matters for Every Creative Professional

Three trends from this conference season deserve your attention regardless of your specific field. First, GPU-native ML is going mainstream. Microsoft’s DirectX ML integration proves that machine learning is graduating from a separate layer to a core component of the rendering pipeline. This same pattern will reach audio, video, and every other real-time creative tool.

Second, agentic workflows are becoming production-ready. Unity’s MCP integration and Tencent’s multi-agent scene reasoning aren’t research demos — they’re shipping tools. The era of AI agents that work alongside you inside your creative applications is arriving faster than most people expected.

Third, the industry sentiment gap is widening but instructive. The fact that adoption is rising even as skepticism grows tells us something important: the professionals who learn to work with these tools effectively will have a significant competitive advantage, precisely because many of their peers are choosing to resist.

What to Watch This Week and Next

GDC continues through Friday, March 13. Key remaining sessions include NVIDIA’s AI video generation tools for game development and major studio presentations on AI production pipelines. Then all eyes shift to San Jose for Jensen Huang’s GTC keynote on Monday, March 16, where new AI hardware and software platform announcements are widely anticipated.

The throughline connecting both conferences is unmistakable: AI is transitioning from experimental technology to essential production infrastructure. Whether you’re a game developer, audio engineer, visual artist, or any kind of creative professional, the tools being unveiled this month will shape your workflow for years to come. The question isn’t whether to pay attention — it’s whether you’ll adapt early enough to benefit from the shift.

What GTC 2026 Could Mean for Creative Professionals

Jensen Huang’s GTC keynote, on March 16 this year, typically sets the technical roadmap for the next 18 months of GPU computing. Based on leaked session titles and developer previews, this year’s focus appears centered on what NVIDIA is calling “Omniverse Agents”: AI systems that can operate across multiple creative applications simultaneously.

The implications for audio professionals are particularly intriguing. Current AI audio tools like AIVA or Boomy operate in isolation, requiring manual export and import between different stages of production. But if NVIDIA’s multi-application agent architecture delivers on its promise, we could see AI assistants that understand your entire creative workflow — from initial composition in your DAW to final mastering and distribution.

Expected Hardware Announcements and Performance Implications

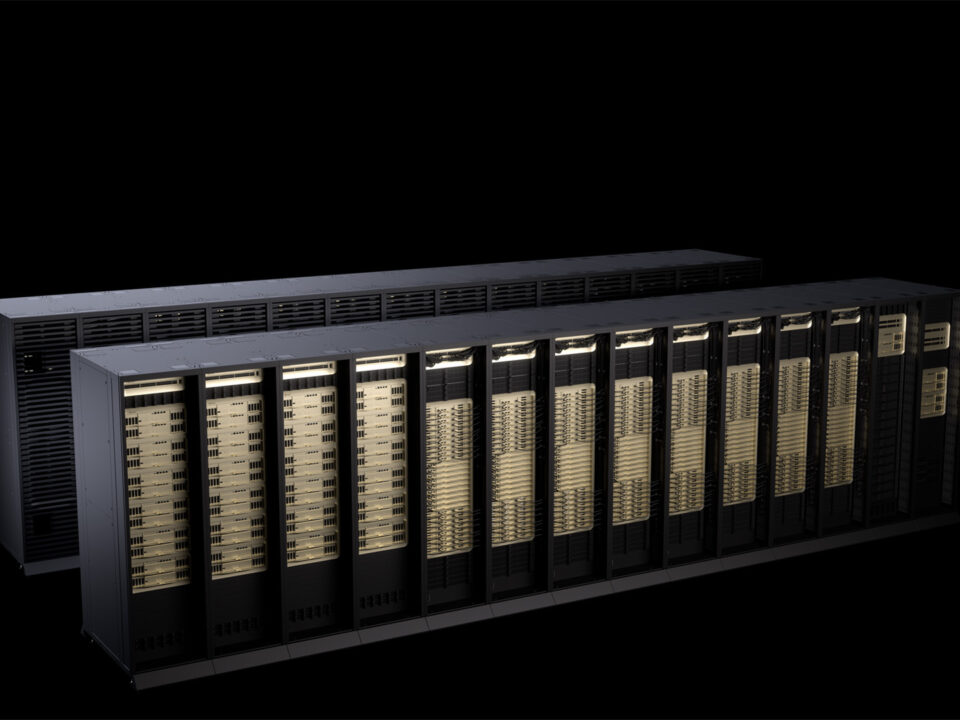

Industry sources suggest NVIDIA will announce the RTX 5090 Ti with dedicated AI inference cores, potentially delivering 2.5x the ML performance of current RTX 4090 cards. More importantly for developers, the rumored “Grace Hopper Studio” workstation could bring data center-class AI capabilities to individual creators — we’re talking about 128GB of unified memory and native support for models up to 70 billion parameters.

For context, most current creative AI workflows are limited to models under 7 billion parameters due to consumer hardware constraints. A 10x jump in accessible model complexity could fundamentally change what’s possible in real-time creative applications. In audio production specifically, this could enable AI that understands musical structure, emotional context, and production aesthetics at a level that’s simply not possible with today’s consumer hardware.
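To put the 7-billion and 70-billion parameter figures in perspective, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need at common numeric precisions. The function names are mine, the estimate deliberately ignores activations, caches, and runtime overhead, and the 128GB figure is the rumored spec quoted above rather than a confirmed one.

```python
# Rough estimate of the memory needed just to hold model weights.
# Assumption: activations, caches, and framework overhead are ignored,
# so real-world requirements would be higher than these numbers.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Return weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM[precision] / 1e9


def fits(params_billions: float, precision: str, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory budget."""
    return weight_memory_gb(params_billions, precision) <= memory_gb


if __name__ == "__main__":
    for size in (7, 70):
        for precision in ("fp16", "int8", "int4"):
            gb = weight_memory_gb(size, precision)
            ok = fits(size, precision, 128)  # 128 GB is the rumored figure
            print(f"{size}B @ {precision}: {gb:.1f} GB, fits in 128 GB: {ok}")
```

Under these assumptions, a 70B model at 16-bit precision needs about 140 GB for weights alone, so it would only fit in 128 GB after quantization; at 8-bit it would need about 70 GB.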

Technical Deep Dive: Infrastructure Changes Driving the AI Shift

The real story at both conferences isn’t the flashy demos — it’s the fundamental infrastructure changes happening beneath the surface. Three technical shifts are converging to enable this new generation of AI-native creative tools.

Memory Architecture and Bandwidth Improvements

Microsoft’s DirectX ML implementation relies heavily on Cooperative Vectors, a Shader Model 6.9 feature that lets shaders issue hardware-accelerated matrix-vector operations, so small neural networks can be evaluated inside the same shaders that do traditional graphics work, on the same data. This avoids the round trips and memory copies that previously created bottlenecks between AI processing and real-time rendering.

- Current RTX 4090: 1008 GB/s memory bandwidth, separate pools for compute and graphics

- Rumored RTX 5090 Ti: 1500+ GB/s with unified memory architecture

- Grace Hopper Studio: 3200 GB/s with CPU/GPU memory coherency

In practical terms, this means AI-assisted real-time audio processing becomes viable at professional sample rates and buffer sizes. Current AI audio plugins often require 512- or 1024-sample buffers to avoid dropouts. With unified memory architectures, 64-sample buffers become realistic for neural processing, which is the difference between AI as a creative brainstorming tool and AI as a performance instrument.
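To see why buffer size matters so much, the sketch below converts buffer sizes into the time window a plugin has to finish its work. The 48 kHz sample rate and the 5 ms inference time are assumptions chosen for illustration, and the function names are hypothetical.

```python
# Time window offered by one audio buffer, and whether a neural
# processing step could finish inside that window.
# Assumptions: 48 kHz sample rate; the inference time is made up.


def buffer_latency_ms(buffer_samples: int, sample_rate_hz: int = 48_000) -> float:
    """Duration of one buffer in milliseconds."""
    return buffer_samples / sample_rate_hz * 1000.0


def fits_realtime(inference_ms: float, buffer_samples: int,
                  sample_rate_hz: int = 48_000) -> bool:
    """True if processing finishes before the next buffer is due."""
    return inference_ms < buffer_latency_ms(buffer_samples, sample_rate_hz)


if __name__ == "__main__":
    for size in (64, 512, 1024):
        print(f"{size:>4} samples -> {buffer_latency_ms(size):.2f} ms per buffer")
    # A hypothetical 5 ms inference step fits a 512-sample buffer
    # but misses the deadline of a 64-sample buffer.
    print(fits_realtime(5.0, 512), fits_realtime(5.0, 64))
```

At 48 kHz, a 64-sample buffer leaves about 1.33 ms per block, compared with about 10.67 ms at 512 samples and 21.33 ms at 1024, so moving to small buffers shrinks the processing budget by roughly a factor of eight or more.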

Model Context Protocol and Agent Orchestration

Unity’s AI Gateway builds on the Model Context Protocol (MCP), an open standard for connecting AI models to external tools and data sources, and Tencent’s multi-agent scene reasoning points toward the same kind of coordination between agents. This isn’t just about technical interoperability. It’s about enabling AI systems that understand your entire creative process, not just individual tools.

Consider a typical music production workflow: composition, arrangement, sound design, mixing, and mastering. Today’s AI tools tackle each stage in isolation. With MCP-based agent orchestration, an AI assistant could track your creative decisions across all stages, understanding how your compositional choices in Logic Pro should influence the AI mastering chain in Ozone, for example.
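The idea of decisions carrying across stages can be sketched in a few lines. To be clear, this is not the MCP SDK or the protocol itself: it is a plain in-process illustration, and every name in it (`SessionContext`, the agent functions, the loudness targets) is hypothetical.

```python
# Toy illustration of stage-to-stage context sharing.
# NOT an MCP implementation: all classes, functions, and values here
# are hypothetical and exist only to show the idea.
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class SessionContext:
    """Shared record of the decisions made at each production stage."""
    decisions: Dict[str, Dict[str, Any]] = field(default_factory=dict)

    def record(self, stage: str, **choices: Any) -> None:
        self.decisions.setdefault(stage, {}).update(choices)

    def lookup(self, stage: str, key: str, default: Any = None) -> Any:
        return self.decisions.get(stage, {}).get(key, default)


def composition_agent(ctx: SessionContext) -> None:
    # Early creative choices are written into the shared context.
    ctx.record("composition", genre="ambient", tempo_bpm=72)


def mastering_agent(ctx: SessionContext) -> None:
    # A later stage reads earlier choices instead of starting blind.
    genre = ctx.lookup("composition", "genre", "unknown")
    # Illustrative targets only, not mastering recommendations.
    target_lufs = -16.0 if genre == "ambient" else -10.0
    ctx.record("mastering", target_lufs=target_lufs)


def run_pipeline(agents: List[Callable[[SessionContext], None]],
                 ctx: Optional[SessionContext] = None) -> SessionContext:
    """Run each stage in order against one shared context."""
    if ctx is None:
        ctx = SessionContext()
    for agent in agents:
        agent(ctx)
    return ctx


if __name__ == "__main__":
    session = run_pipeline([composition_agent, mastering_agent])
    print(session.decisions)
```

The design point is the shared context object: the mastering stage changes its behavior based on what the composition stage recorded, which is the cross-stage awareness that isolated tools lack.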

Industry Analysis: Winners and Losers in the AI Creative Tools Race

The companies making moves during this AI developer conference season reveal a lot about who’s positioning for long-term dominance in AI-powered creative tools. The patterns emerging from GDC and the anticipated GTC announcements suggest some clear strategic divides.

Platform vs. Tools: Different Approaches to AI Integration

Microsoft and NVIDIA are betting on platform-level integration — building AI capabilities into the fundamental computing infrastructure that all creative applications rely on. Meanwhile, companies like Tencent and Adobe are focusing on application-specific AI tools that deliver immediate user value but potentially create vendor lock-in.

From a developer perspective, the platform approach offers more flexibility but requires deeper technical expertise. DirectX ML means any application can potentially access GPU-accelerated AI inference, but developers need to implement their own models and interfaces. Tencent’s HY 3D engine provides a complete solution for 3D asset generation, but ties developers to Tencent’s specific AI models and workflow assumptions.

For audio professionals, this divide is already visible. Native Instruments and Ableton are building AI features directly into their DAWs, creating integrated but proprietary workflows. Meanwhile, companies like iZotope are developing AI plugins that work across multiple host applications but with less deep integration. The platform-level improvements from Microsoft and NVIDIA could enable a third approach: AI-native audio applications that aren’t constrained by existing DAW architectures.

Performance Benchmarks and Real-World Implications

Based on the technical specifications revealed at GDC, we can make some educated projections about performance improvements. Tencent’s HY 3D engine reportedly generates production-ready 3D models in under 30 seconds on current RTX 4090 hardware. If the rumored 2.5x ML speedup of the RTX 5090 Ti carried over directly, that would drop to roughly 12 seconds, and to under 10 with further software optimization, which is fast enough for real-time creative iteration rather than batch processing.
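As a quick sanity check on projections like this, the sketch below assumes generation time scales inversely with ML throughput, which is a simplification, and plugs in the 30-second baseline and the rumored 2.5x figure mentioned earlier. The function names are hypothetical.

```python
# Back-of-the-envelope speedup arithmetic.
# Assumption: generation time scales inversely with ML throughput,
# which real workloads only approximate.


def projected_seconds(baseline_seconds: float, speedup: float) -> float:
    """Projected generation time after a given throughput speedup."""
    return baseline_seconds / speedup


def required_speedup(baseline_seconds: float, target_seconds: float) -> float:
    """Speedup needed to bring the baseline down to the target time."""
    return baseline_seconds / target_seconds


if __name__ == "__main__":
    print(f"30 s at 2.5x -> {projected_seconds(30.0, 2.5):.1f} s")
    print(f"Speedup needed for 10 s: {required_speedup(30.0, 10.0):.1f}x")
```

Under that assumption, a 2.5x speedup takes a 30-second job to 12 seconds, while reaching 10 seconds would need a 3x improvement, so part of the gain would have to come from somewhere other than raw hardware throughput.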

The audio equivalent would be AI-generated stems, instrument separation, or even full arrangements that render fast enough to use during live performance. We’re approaching the threshold where AI becomes a real-time creative instrument rather than a post-production tool. That fundamental shift — from AI as a utility to AI as a performance medium — could reshape how we think about musical expression and live creativity.
