$46.7 billion in a single quarter. That’s not a typo: that’s NVIDIA’s Q2 FY2026 revenue, and it tells you everything you need to know about the 2025 AI chip shortage. Supply is improving. Demand is growing faster. And if you’re waiting in line for Blackwell GPUs, you might want to sit down for this.

The AI Chip Shortage 2025 Paradox: Record Revenue, Record Backlogs

Here’s the paradox that defines the AI chip shortage 2025 landscape: NVIDIA just posted its best quarter ever, Blackwell data center revenue grew 17% quarter-over-quarter, and production yields have improved significantly after early design fixes. By every manufacturing metric, supply is getting better.

Yet Blackwell GPUs remain sold out through mid-2026. New orders placed today won’t ship for 12+ months. The backlog sits at approximately 3.6 million units. Lead times for data center GPUs across the board — NVIDIA, AMD, and boutique chip makers — range from 36 to 52 weeks.

So what’s actually happening? Let’s break down what improved, what didn’t, and what this means for anyone trying to build AI infrastructure right now.

NVIDIA Q2 FY2026: The Numbers Behind the AI Chip Shortage

NVIDIA’s Q2 FY2026 earnings report (covering the quarter ending July 2025) painted a picture of extraordinary growth:

- Total revenue: $46.7 billion (+56% year-over-year)

- Data Center revenue: $41.1 billion (+56% YoY) — data center alone is now larger than NVIDIA’s total revenue was just two years ago

- Blackwell data center revenue: grew 17% sequentially, indicating the production ramp is working

- Q3 guidance: $54.0 billion (±2%), suggesting even more acceleration ahead

These numbers confirm that Blackwell chips are shipping in volume. The B200 and GB200 GPUs that struggled with initial yield issues in late 2024 and early 2025 have overcome those hurdles. NVIDIA specifically noted that a mask-level design change resolved the yield problems, and production has been scaling steadily through 2025.

But context matters. NVIDIA described demand as “extraordinary” — a word they keep using quarter after quarter. Every major cloud provider (AWS, Azure, Google Cloud), every sovereign AI initiative, and an increasingly long list of enterprise customers are all competing for the same pool of GPUs.

The Blackwell Cabinet Problem: Forecast Cuts Tell the Real Story

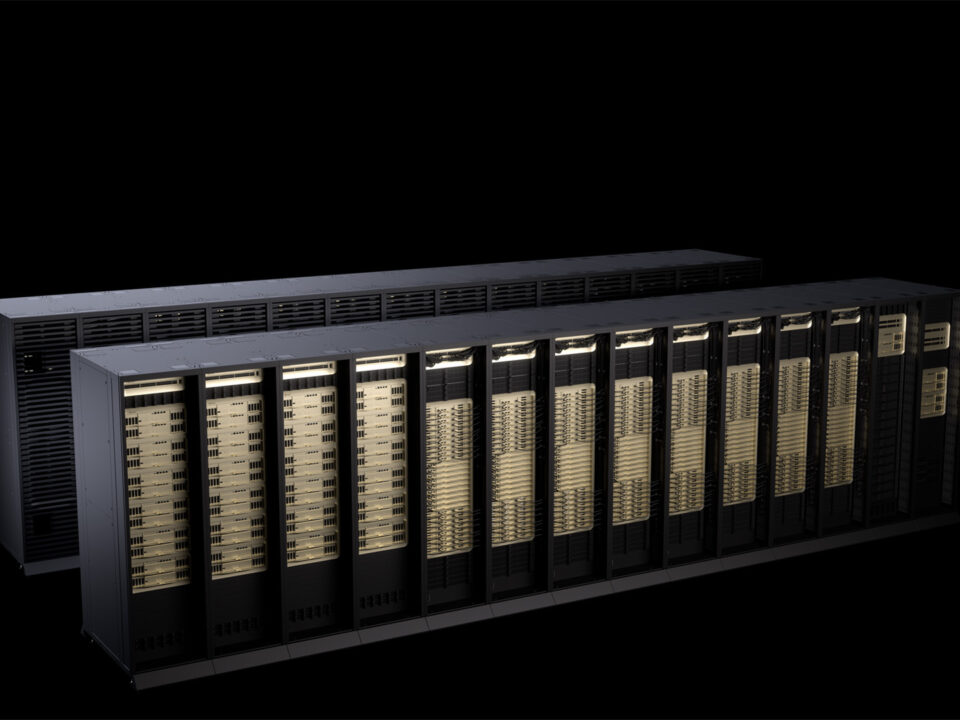

If you want to understand the gap between “supply is improving” and “you still can’t get GPUs,” look at the NVL72 cabinet situation. These are NVIDIA’s flagship AI infrastructure products — complete racks packed with 72 Blackwell GPUs, liquid cooling, and NVLink interconnects. They’re what hyperscalers actually buy.

Industry analysts have cut their 2025 shipment forecasts in half. Original projections called for 50,000 to 80,000 NVL72 units shipping in 2025. The revised forecast: 25,000 to 35,000 units. That’s not a minor adjustment — it’s a 50%+ reduction.
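To put a number on that cut, here is a quick sketch of the arithmetic in Python. The figures are the forecast ranges quoted above; the helper name `reduction_pct` is only an illustrative choice.

```python
def reduction_pct(original: float, revised: float) -> float:
    """Percentage drop from the original value to the revised value."""
    return (original - revised) / original * 100


# Forecast ranges for 2025 NVL72 shipments, as quoted in the article.
original_low, original_high = 50_000, 80_000
revised_low, revised_high = 25_000, 35_000

low_cut = reduction_pct(original_low, revised_low)
high_cut = reduction_pct(original_high, revised_high)
mid_cut = reduction_pct(
    (original_low + original_high) / 2,
    (revised_low + revised_high) / 2,
)

print(f"Low end of range:  {low_cut:.1f}% reduction")
print(f"High end of range: {high_cut:.1f}% reduction")
print(f"Midpoint of range: {mid_cut:.1f}% reduction")
```

Comparing like with like, the cut works out to 50% at the low end of the range and a little over 56% at the high end.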

Why the cut? It’s not that NVIDIA can’t make the chips. It’s everything else:

- TSMC CoWoS packaging bottleneck: Advanced chip packaging remains the critical constraint. Even wafers fabricated at TSMC’s Arizona facility are being shipped back to Taiwan for CoWoS packaging because the U.S. packaging capacity isn’t ready yet

- HBM memory shortage: High Bandwidth Memory suppliers (SK Hynix, Samsung, Micron) have seen inventory drop from 13-17 weeks to just 2-4 weeks. There simply isn’t enough HBM to go around

- DRAM price surge: Memory prices have jumped 50% in some categories, reflecting the supply-demand imbalance

- Cabinet assembly complexity: Hon Hai (Foxconn) controls roughly 52% of GB200/GB300 cabinet assembly, creating concentration risk in the supply chain

The AI Chip Shortage 2025 Bottleneck Map

Think of it as a pipeline with multiple chokepoints. NVIDIA has widened the GPU fabrication pipe significantly. But the CoWoS packaging pipe, the HBM supply pipe, and the cabinet assembly pipe are all narrower than the demand pipe. Fix one bottleneck and the next one downstream becomes the new constraint.
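One way to picture the chokepoint effect is a toy model in which deliverable output is capped by the narrowest stage. The capacity and demand numbers below are made-up relative units, not real supply data, and the `Stage` class and helper names are hypothetical.

```python
from dataclasses import dataclass, replace
from typing import List, Sequence


@dataclass(frozen=True)
class Stage:
    name: str
    capacity: float  # relative units per quarter (illustrative only)


def bottleneck(stages: Sequence[Stage]) -> Stage:
    """Return the stage with the smallest capacity."""
    return min(stages, key=lambda s: s.capacity)


def deliverable(stages: Sequence[Stage], demand: float) -> float:
    """Output is limited by demand or by the narrowest stage, whichever is lower."""
    return min(demand, bottleneck(stages).capacity)


def widen(stages: Sequence[Stage], name: str, new_capacity: float) -> List[Stage]:
    """Return a copy of the pipeline with one stage's capacity changed."""
    return [replace(s, capacity=new_capacity) if s.name == name else s for s in stages]


# ASSUMPTION: all numbers here are invented to illustrate the idea.
pipeline = [
    Stage("GPU fabrication", 100),
    Stage("CoWoS packaging", 60),
    Stage("HBM supply", 70),
    Stage("Cabinet assembly", 65),
]
demand = 120

first = bottleneck(pipeline)
before = deliverable(pipeline, demand)

# Fix the first constraint and see which stage limits output next.
fixed_pipeline = widen(pipeline, first.name, 90)
second = bottleneck(fixed_pipeline)
after = deliverable(fixed_pipeline, demand)

print(f"Constraint: {first.name}, deliverable: {before}")
print(f"After fix:  {second.name}, deliverable: {after}")
```

In this sketch, raising packaging capacity from 60 to 90 lifts deliverable output only from 60 to 65, because cabinet assembly becomes the new limit while demand stays at 120.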

Who’s Feeling the Pain — And Who’s Adapting

The 2025 AI chip shortage doesn’t affect everyone equally. Hyperscalers like Microsoft, Google, and Amazon, companies spending $50-80 billion annually on AI infrastructure, have pre-negotiated allocations. They’re still GPU-constrained, but they’re at the front of the line.

The real pain is hitting three groups:

- Mid-tier cloud providers and AI startups: Companies without billion-dollar purchase agreements face 36-52 week lead times and limited allocation. Some are turning to AMD MI300X as an alternative, though supply there is constrained too

- Sovereign AI initiatives: Countries building national AI compute infrastructure (UAE, Saudi Arabia, Singapore, France) are competing with the private sector for the same limited supply

- Enterprise AI deployments: Companies wanting to run AI workloads on-premises rather than in the cloud face the longest waits and highest costs

Adaptation strategies are emerging. Some companies are optimizing inference workloads to run on fewer GPUs. Others are exploring custom silicon (Google TPUs, Amazon Trainium, Microsoft Maia). And there’s growing interest in inference-optimized chips that offer better performance-per-dollar for deployment, even if they can’t match Blackwell for training.

The HBM Crisis: The Bottleneck Behind the Bottleneck

While most coverage of the 2025 AI chip shortage focuses on GPU fabrication, the real story might be happening in the memory supply chain. High Bandwidth Memory (HBM) has become the silent chokepoint that few outside the semiconductor industry fully appreciate.

Each Blackwell B200 GPU requires multiple stacks of HBM3E memory, and a lot of it. The GB200 configuration pairs two B200 GPUs with a Grace CPU, requiring even more HBM per unit. A single NVL72 cabinet holds 72 of those GPUs (36 GB200 units), so multiply the per-GPU memory by 72 and you begin to understand the scale of memory demand.
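A rough back-of-the-envelope sketch of that multiplication follows. The 72 GPUs per cabinet and the 25,000 to 35,000 cabinet forecast come from this article. The per-GPU HBM capacity is an illustrative assumption, since the article does not state it, so swap in whatever figure applies to the part you care about.

```python
GPUS_PER_NVL72 = 72  # from the article

# ASSUMPTION: illustrative per-GPU HBM capacity in GB, not stated in the article.
ASSUMED_HBM_GB_PER_GPU = 192


def hbm_gb(cabinets: int,
           gpus_per_cabinet: int = GPUS_PER_NVL72,
           hbm_gb_per_gpu: int = ASSUMED_HBM_GB_PER_GPU) -> int:
    """Total HBM in GB needed to populate the given number of cabinets."""
    return cabinets * gpus_per_cabinet * hbm_gb_per_gpu


per_cabinet_gb = hbm_gb(1)
forecast_low_gb = hbm_gb(25_000)   # low end of the revised 2025 forecast
forecast_high_gb = hbm_gb(35_000)  # high end of the revised 2025 forecast

print(f"One cabinet:      {per_cabinet_gb / 1_000:.1f} TB of HBM")
print(f"25,000 cabinets:  {forecast_low_gb / 1_000_000:.1f} PB of HBM")
print(f"35,000 cabinets:  {forecast_high_gb / 1_000_000:.1f} PB of HBM")
```

Under that assumed capacity, a single cabinet needs close to 14 TB of HBM, and the revised shipment forecast implies hundreds of petabytes for NVL72 cabinets alone.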

SK Hynix, Samsung, and Micron are the three companies that produce HBM at scale. Their combined inventory levels have plummeted from a comfortable 13-17 weeks of supply to just 2-4 weeks. That’s essentially running on fumes. Any disruption — a yield issue, a power outage, a logistics delay — could halt GPU production entirely, regardless of how many GPU dies NVIDIA and TSMC can produce.

The memory price impact is already visible. DRAM prices have surged 50% in some categories as HBM production consumes an outsized share of DRAM wafer capacity. Every HBM chip produced means fewer standard DRAM chips, creating ripple effects across the entire computing industry — from servers to smartphones to gaming consoles.

The Alternative Chip Landscape

The persistent GPU shortage is accelerating the alternative silicon market. AMD’s MI300X has gained traction with some cloud providers, though AMD faces its own supply constraints with TSMC. Google’s TPU v5p offers compelling performance for specific workloads within Google Cloud. Amazon’s Trainium2 chips are purpose-built for training on AWS. And Microsoft’s Maia 100 represents another hyperscaler building custom silicon to reduce NVIDIA dependency.

Startups are carving out niches too. Cerebras offers wafer-scale computing for specific training workloads. Groq focuses on inference with its LPU (Language Processing Unit) architecture. SambaNova targets enterprise AI deployment. None of these alternatives match Blackwell’s raw training performance, but for inference, where most production AI workloads actually run, they are becoming genuinely viable competitors.

The Seasonal Factor: Why September 2025 Is a Pressure Point

This week’s Apple iPhone event and the upcoming AES Convention remind us that the semiconductor supply chain serves multiple masters. While AI chips and smartphone processors use different designs, they compete for some of the same foundry capacity, packaging resources, and advanced memory.

Apple’s new A-series chips for iPhone consume significant TSMC N3 capacity. TSMC is arguably the most critical single point of failure in the entire global semiconductor supply chain — and they’re running near maximum utilization. Every wafer allocated to iPhone is a wafer not available for something else in the advanced node pipeline.

Meanwhile, the broader AI infrastructure buildout shows no signs of slowing. NVIDIA’s $54 billion Q3 guidance represents a 15.6% sequential jump from Q2. The company is essentially telling the market: everything we can make, we will sell immediately.
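For readers who want to check the guidance math, here is a small sketch. It uses only the Q2 revenue, the Q3 guidance midpoint, and the ±2% band quoted in this article; the variable names are illustrative.

```python
Q2_REVENUE_B = 46.7      # Q2 FY2026 revenue, $ billions (from the article)
Q3_GUIDANCE_B = 54.0     # Q3 guidance midpoint, $ billions (from the article)
GUIDANCE_BAND = 0.02     # plus or minus 2% (from the article)


def sequential_growth_pct(previous: float, current: float) -> float:
    """Quarter-over-quarter growth as a percentage."""
    return (current / previous - 1) * 100


guide_low = Q3_GUIDANCE_B * (1 - GUIDANCE_BAND)
guide_high = Q3_GUIDANCE_B * (1 + GUIDANCE_BAND)

growth_mid = sequential_growth_pct(Q2_REVENUE_B, Q3_GUIDANCE_B)
growth_low = sequential_growth_pct(Q2_REVENUE_B, guide_low)
growth_high = sequential_growth_pct(Q2_REVENUE_B, guide_high)

print(f"Guidance range: ${guide_low:.2f}B to ${guide_high:.2f}B")
print(f"Implied growth: {growth_low:.1f}% to {growth_high:.1f}% "
      f"(midpoint {growth_mid:.1f}%)")
```

Even the bottom of the guidance band implies sequential growth of more than 13%.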

When Does the Shortage End?

The honest answer: not in 2025, and probably not in the first half of 2026.

TSMC is aggressively expanding CoWoS packaging capacity, with new facilities coming online through 2025 and into 2026. HBM producers are investing billions in new production lines. NVIDIA’s Blackwell Ultra (the next iteration) is already ramping, which will add capacity but also create new demand.

The fundamental challenge is that AI compute demand is growing faster than the semiconductor industry can expand supply. Every efficiency improvement in AI models (smaller models, better inference optimization) is offset by new use cases and larger training runs.

Industry analysts suggest that supply-demand balance might be reached in late 2026 or early 2027 — but only if demand growth moderates, which is far from guaranteed.
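Why the caveat about demand matters can be shown with a toy compounding model. Every number below is hypothetical (starting levels, growth rates, and the function name `quarters_to_balance`); the sketch only illustrates that supply catches up when it grows faster than demand, and never does when the two grow at the same rate.

```python
from typing import Optional


def quarters_to_balance(supply: float,
                        demand: float,
                        supply_growth: float,
                        demand_growth: float,
                        max_quarters: int = 40) -> Optional[int]:
    """Quarters until supply >= demand, or None if not reached within max_quarters."""
    for quarter in range(max_quarters + 1):
        if supply >= demand:
            return quarter
        supply *= 1 + supply_growth
        demand *= 1 + demand_growth
    return None


# ASSUMPTION: invented relative units and growth rates, for illustration only.
start_supply, start_demand = 60.0, 100.0

# Demand keeps pace with supply growth: the gap never closes.
no_moderation = quarters_to_balance(start_supply, start_demand, 0.15, 0.15)

# Demand growth moderates to 5% per quarter: supply eventually catches up.
with_moderation = quarters_to_balance(start_supply, start_demand, 0.15, 0.05)

print(f"Demand growing as fast as supply: {no_moderation}")
print(f"Demand growth moderating:         {with_moderation} quarters")
```

With these made-up inputs, balance arrives after six quarters once demand growth slows, and not at all within ten years if it does not.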

What This Means for the AI Industry

The 2025 AI chip shortage is reshaping the industry in ways that will persist long after supply catches up:

- Efficiency is the new currency. Companies that can achieve more with fewer GPUs have a structural advantage. Expect continued focus on model optimization, quantization, and inference efficiency

- Multi-vendor strategies are becoming essential. Relying solely on NVIDIA is a business risk. AMD, Intel, Google, Amazon, and startups like Cerebras and Groq are all viable alternatives for specific workloads

- Geopolitics and supply chain resilience matter more than ever. The concentration of advanced packaging in Taiwan, HBM in South Korea, and assembly in China creates geopolitical risk that every AI company must factor into planning

- The cost of AI compute will remain elevated. GPU cloud pricing reflects the shortage, and on-premises deployments require significant capital and patience

The bottom line: NVIDIA Blackwell supply is genuinely improving. Production is ramping, yields are up, and record revenue proves that chips are shipping. But demand from every corner of the AI economy (training, inference, sovereign compute, enterprise) is growing even faster. The 2025 AI chip shortage is getting better in absolute terms and worse in relative terms. For anyone planning AI infrastructure, the strategy remains the same: order early, diversify suppliers, and optimize every GPU cycle you can get.
