
May 5, 2025


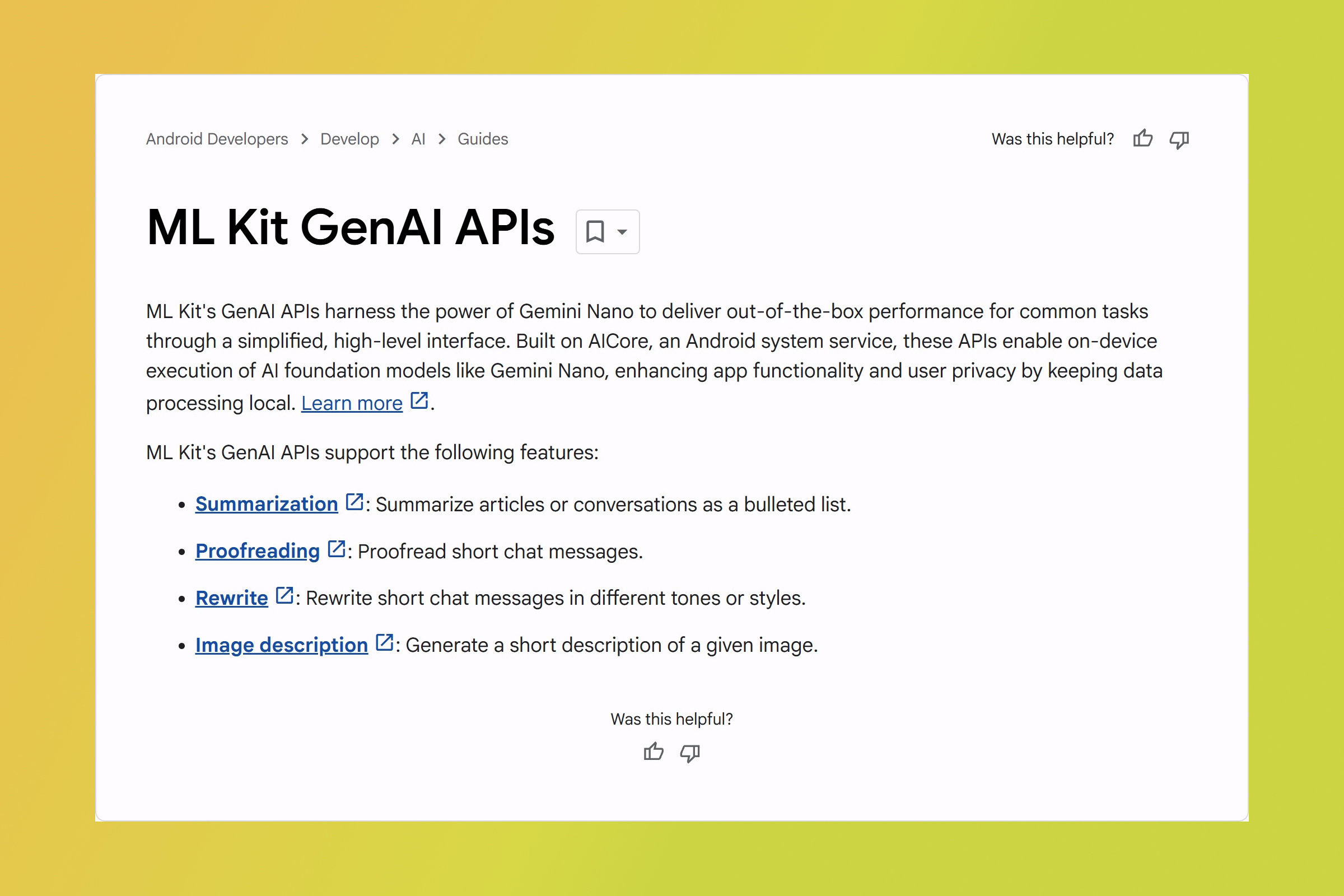

Google just democratized on-device AI for Android, and most people missed it. At Google I/O 2025, the company quietly shipped four production-ready ML Kit GenAI APIs powered by Gemini Nano. They let third-party apps on supported devices summarize text, proofread documents, rewrite content, and describe images entirely on-device, with no cloud inference fees and no data leaving the phone. This is the Google I/O 2025 Android 16 AI moment developers have been waiting for.

Google I/O 2025 Android 16 AI: Why On-Device Changes Everything

For years, running generative AI on a smartphone meant sending your data to a cloud server, paying per-token fees, and praying for a fast connection. Google’s new ML Kit GenAI APIs flip that model entirely. Powered by Gemini Nano — Google’s smallest and most efficient large language model — these APIs process everything locally on the device’s neural processing unit.

The implications are massive. A medical note-taking app can summarize patient records without ever transmitting sensitive health data. An email client can proofread your messages in airplane mode. A content creation tool can rewrite paragraphs without burning through API credits. Privacy isn’t a feature here — it’s the architecture.

As the Android Developers Blog detailed, Google designed these APIs with a layered architecture: specialized LoRA (Low-Rank Adaptation) adapter models sit on top of the Gemini Nano base model, each fine-tuned for its specific task with optimized inference parameters including temperature, top-K sampling, and batch size.
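The specific values Google tuned for each task aren’t given here, but temperature and top-K are standard decoding knobs. The plain-Python sketch below shows the generic technique. It is not ML Kit code and does not use Google’s settings, and `top_k_sample` is a hypothetical helper. The sampler keeps the K most likely tokens, rescales their scores by temperature, and then samples from the resulting softmax.

```python
import math
import random


def top_k_sample(logits, k, temperature, rng):
    """Pick a token index from `logits` using top-K filtering plus temperature.

    Generic decoding sketch; not Gemini Nano's actual decoder or tuned values.
    """
    if k < 1:
        raise ValueError("k must be >= 1")
    if temperature <= 0:
        # Temperature 0 is conventionally treated as greedy decoding.
        return max(range(len(logits)), key=lambda i: logits[i])
    # Keep only the k highest-scoring candidate tokens.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Temperature < 1 sharpens the distribution; > 1 flattens it.
    scaled = [logits[i] / temperature for i in top]
    # Numerically stable softmax over the surviving candidates.
    peak = max(scaled)
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    probs = [w / total for w in weights]
    return rng.choices(top, weights=probs, k=1)[0]


logits = [2.0, 0.5, 1.5, -1.0, 0.1]
rng = random.Random(0)
samples = [top_k_sample(logits, k=2, temperature=0.7, rng=rng) for _ in range(1000)]
# With k=2, only the two highest-scoring tokens (0 and 2) can ever be chosen.
print(sorted(set(samples)))  # prints [0, 2]
```

Lower temperature and smaller K make output more deterministic. That tends to suit tasks like proofreading, where you want the single most likely correction rather than creative variety.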

The Four APIs: What They Actually Do

Google launched four distinct capabilities, each available as a high-level API that delivers quality results without requiring prompt engineering or model fine-tuning from developers:

1. Summarization

The summarization API can condense documents of up to about 3,000 English words into concise summaries. It supports both streaming and non-streaming workflows, which gives developers flexibility in how they present results. After LoRA fine-tuning, Google’s internal benchmark score rose from 77.2 to 92.1. That is a 14.9-point jump, or about 19% relative, and it makes on-device summarization a credible alternative to cloud-based services for many apps. Language support currently includes English, Japanese, and Korean.
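Below is a plain-Python sketch of the two workflows, plus a pre-check against the roughly 3,000-word input limit. `fake_summarizer` is a hypothetical stand-in that streams canned chunks instead of calling a model. The whitespace word count only approximates how the real API measures input length.

```python
from typing import Callable, Iterator

# Input ceiling cited for the summarization API (about 3,000 English words).
MAX_SUMMARY_INPUT_WORDS = 3_000


def fits_on_device(text: str, limit: int = MAX_SUMMARY_INPUT_WORDS) -> bool:
    """Rough pre-check: whitespace word count against the documented limit."""
    return len(text.split()) <= limit


def fake_summarizer(text: str) -> Iterator[str]:
    """Hypothetical stand-in for an on-device summarizer that streams output.

    It just yields the first sentence in two pieces; a real model generates text.
    """
    first = text.split(".")[0].strip() + "."
    mid = len(first) // 2
    yield first[:mid]
    yield first[mid:]


def summarize_streaming(text: str, on_chunk: Callable[[str], None]) -> None:
    """Streaming workflow: hand each partial chunk to the UI as it arrives."""
    for chunk in fake_summarizer(text):
        on_chunk(chunk)


def summarize_blocking(text: str) -> str:
    """Non-streaming workflow: wait for the complete summary, then return it."""
    return "".join(fake_summarizer(text))


doc = "On-device models keep data local. They also work offline."
if fits_on_device(doc):
    shown = []
    summarize_streaming(doc, shown.append)  # a UI would render each chunk incrementally
    print(shown)
    print(summarize_blocking(doc))          # prints On-device models keep data local.
```

Streaming suits chat-style UIs where perceived latency matters. The blocking form is simpler when the summary feeds another step, such as a notification.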

2. Proofreading

Beyond simple spell-checking, this API catches grammatical errors, awkward phrasing, and stylistic inconsistencies. Benchmark scores improved from 84.3 to 90.2 after fine-tuning. It currently supports seven languages, making it useful for multilingual apps serving global audiences.
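An app can surface proofreading results by diffing the original text against the corrected version and highlighting only what changed. This plain-Python sketch uses the standard-library `difflib`. The corrected string is typed in by hand and stands in for what an on-device proofreading call might return. `proofreading_suggestions` is a hypothetical helper.

```python
import difflib


def proofreading_suggestions(original: str, corrected: str) -> list[tuple[str, str]]:
    """Return word-level (before, after) pairs an app could highlight.

    `corrected` is assumed to come from an on-device proofreading call;
    here it is supplied by hand so the sketch runs anywhere.
    """
    a, b = original.split(), corrected.split()
    matcher = difflib.SequenceMatcher(a=a, b=b)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # "replace", "insert", or "delete"
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


print(proofreading_suggestions("Their going to the libary tomorow.",
                               "They're going to the library tomorrow."))
# prints [('Their', "They're"), ('libary tomorow.', 'library tomorrow.')]
```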

3. Rewriting

The rewriting API can rephrase text while preserving meaning — useful for content creation, accessibility features, or tone adjustment. Fine-tuning pushed scores from 79.5 to 84.1. Like proofreading, it supports seven languages.

4. Image Description

This is the standout addition. The previous AI Edge SDK was text-only; these new APIs can process images too. The image description API generates alt text and detailed descriptions of visual content — a game-changer for accessibility. Benchmark scores hit 92.3 after fine-tuning (up from 86.9). Currently English-only, but the accessibility implications alone make it significant.
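Here is the arithmetic behind those fine-tuning gains, computed from the before/after scores Google reported for each task. It confirms the roughly 19% relative gain for summarization. It also shows that the other three tasks improved by roughly 6% to 7% relative. The benchmark’s scale isn’t specified, so treat these as relative comparisons only.

```python
# Before/after internal benchmark scores reported for each ML Kit GenAI task.
SCORES = {
    "summarization": (77.2, 92.1),
    "proofreading": (84.3, 90.2),
    "rewriting": (79.5, 84.1),
    "image_description": (86.9, 92.3),
}


def relative_gain_pct(before: float, after: float) -> float:
    """Relative improvement in percent: (after - before) / before * 100."""
    return (after - before) / before * 100


for task, (before, after) in SCORES.items():
    gain = relative_gain_pct(before, after)
    print(f"{task:18} +{after - before:4.1f} pts  ({gain:.1f}% relative)")
```

Summarization gains 14.9 points (19.3%), proofreading 5.9 (7.0%), rewriting 4.6 (5.8%), and image description 5.4 (6.2%).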

From Pixel-Only to Ecosystem-Wide: Device Support Explodes

Here’s where it gets interesting for the broader Android ecosystem. Developer access to Gemini Nano through the experimental AI Edge SDK was initially limited to the Pixel 9 series. As Android Authority reported, device support for the ML Kit GenAI APIs has now expanded dramatically to include:

- Samsung Galaxy S25 series

- OnePlus 13

- Xiaomi 15

- Motorola Razr 60 Ultra

- HONOR Magic 7

- Other supported devices built on MediaTek Dimensity, Qualcomm Snapdragon, and Google Tensor platforms

This isn’t a lab experiment anymore. Flagship devices from Samsung, OnePlus, Xiaomi, Motorola, and HONOR all support these APIs. Developers can therefore build on-device AI features knowing they’ll reach a large and growing share of high-end Android users.
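Support still depends on the handset, so an app that ships these features needs a fallback path. ML Kit GenAI lets apps check whether a feature is available on the current device. The enum below only models that idea. Its state names are illustrative, so verify the real constants in the ML Kit documentation. `choose_backend` is a hypothetical routing policy written in plain Python.

```python
from enum import Enum, auto


class FeatureStatus(Enum):
    """Illustrative availability states; not the actual ML Kit constants."""
    UNAVAILABLE = auto()   # device or chipset not supported
    DOWNLOADABLE = auto()  # supported, but model assets not yet on the device
    DOWNLOADING = auto()   # assets are being fetched
    AVAILABLE = auto()     # ready for on-device inference


def choose_backend(status: FeatureStatus, cloud_allowed: bool) -> str:
    """Hypothetical policy for where a request should run on this device."""
    if status is FeatureStatus.AVAILABLE:
        return "on_device"
    if status in (FeatureStatus.DOWNLOADABLE, FeatureStatus.DOWNLOADING):
        # Supported hardware: use the cloud meanwhile if policy permits,
        # otherwise wait for the model download to finish.
        return "cloud" if cloud_allowed else "wait_for_download"
    # Unsupported hardware: fall back to the cloud or hide the feature.
    return "cloud" if cloud_allowed else "disabled"


print(choose_backend(FeatureStatus.UNAVAILABLE, cloud_allowed=False))  # prints disabled
```

For privacy-sensitive apps, `cloud_allowed=False` means the feature simply disappears on unsupported phones instead of silently sending data off-device.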

ML Kit GenAI vs. AI Edge SDK: The Upgrade Path

For developers already experimenting with Gemini Nano, the distinction matters. The older AI Edge SDK remains experimental and is limited to text-only processing. The new ML Kit GenAI APIs are in beta but production-ready, with several critical upgrades:

- Image support: AI Edge SDK was text-only; ML Kit GenAI handles both text and images

- High-level abstractions: No prompt engineering needed — the APIs deliver quality results out of the box

- Broader device support: Beyond Pixel to Samsung, OnePlus, Xiaomi, and more

- Maturity: ML Kit GenAI is in beta and intended for production apps, while the AI Edge SDK remains experimental

InfoQ noted that the implementation stacks specialized LoRA adapter models on the Gemini Nano base model, meaning Google can continue improving individual task performance without updating the entire base model — a smart architectural decision for long-term maintainability.
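The general LoRA technique is simple to state. The base weight matrix W stays frozen, and each task adds a low-rank product B·A scaled by alpha/r. This plain-Python sketch uses toy 2x2 matrices and hypothetical rank-1 adapters. It shows why replacing one task’s adapter leaves the base model and the other adapters untouched. It illustrates LoRA in general, not Google’s implementation.

```python
Matrix = list[list[float]]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Naive matrix product of x (n x m) and y (m x p)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def apply_lora(base: Matrix, a: Matrix, b: Matrix, alpha: float) -> Matrix:
    """Effective weight W' = W + (alpha / r) * B @ A; `base` is never modified.

    Shapes: W is d x k, B is d x r, A is r x k, and the rank r is small.
    """
    r = len(a)
    delta = matmul(b, a)
    scale = alpha / r
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


# One frozen base weight shared by every task (2x2 here for readability).
BASE: Matrix = [[1.0, 0.0], [0.0, 1.0]]

# Hypothetical per-task rank-1 adapters, stored as (B, A): B is 2x1, A is 1x2.
ADAPTERS = {
    "summarize": ([[1.0], [0.0]], [[0.5, 0.5]]),
    "proofread": ([[0.0], [1.0]], [[0.0, 2.0]]),
}


def weights_for(task: str) -> Matrix:
    b, a = ADAPTERS[task]
    return apply_lora(BASE, a, b, alpha=1.0)


# Shipping an improved proofreading adapter replaces one small entry;
# BASE and the summarization adapter stay exactly as they were.
ADAPTERS["proofread"] = ([[0.0], [1.0]], [[0.0, 3.0]])
print(weights_for("summarize"))  # prints [[1.5, 0.5], [0.0, 1.0]]
print(weights_for("proofread"))  # prints [[1.0, 0.0], [0.0, 4.0]]
```

At real model sizes, an adapter holds only (d + k) × r numbers per adapted matrix instead of d × k. That gap is what makes per-task updates cheap to ship.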

Firebase AI Logic: When On-Device Isn’t Enough

Google isn’t pretending on-device AI solves everything. For complex use cases that exceed Gemini Nano’s capabilities, Google I/O 2025 also introduced Firebase AI Logic, which provides access to more powerful cloud models including Gemini Pro, Gemini Flash, and Imagen.

The smart play for developers is a hybrid approach. Use Gemini Nano for routine tasks (summarization, proofreading, basic rewrites) that benefit from privacy and from skipping the network round trip. Then escalate to cloud models via Firebase AI Logic for tasks requiring deeper reasoning, longer context windows, or image generation. This tiered architecture keeps costs low while preserving access to more capable models when a task needs them.
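Here is a minimal plain-Python sketch of that tiered routing policy. Several pieces are assumptions for illustration:

- the task names and the `Request` fields
- the rule that oversized sensitive documents are split locally rather than sent to the cloud
- the string labels, which stand in for actual Gemini Nano and Firebase AI Logic calls

The 3,000-word ceiling is the summarization limit cited earlier in this article.

```python
from dataclasses import dataclass

# Hypothetical labels for the four tasks the ML Kit GenAI APIs cover.
ON_DEVICE_TASKS = {"summarize", "proofread", "rewrite", "describe_image"}
# Summarization input ceiling cited in this article (about 3,000 English words).
MAX_SUMMARY_WORDS = 3_000


@dataclass
class Request:
    task: str                        # e.g. "summarize" or "generate_image"
    text: str = ""
    contains_sensitive_data: bool = False


def route(req: Request) -> str:
    """Hypothetical policy: prefer Gemini Nano, escalate to the cloud when needed."""
    if req.task not in ON_DEVICE_TASKS:
        # Image generation, deep reasoning, long context: beyond Gemini Nano.
        return "cloud"
    too_long = req.task == "summarize" and len(req.text.split()) > MAX_SUMMARY_WORDS
    if not too_long:
        return "gemini_nano"
    # Over the on-device limit: keep sensitive text local by splitting it
    # into chunks first; anything else can go to a larger cloud model.
    return "split_then_gemini_nano" if req.contains_sensitive_data else "cloud"


print(route(Request("proofread", "Teh quick brown fox.")))  # prints gemini_nano
print(route(Request("generate_image")))                     # prints cloud
```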

Beyond AI: Android 16’s Other Big Moves

While AI dominated the headlines, TechCrunch covered several other significant Android 16 announcements at Google I/O 2025:

- Live Updates: Real-time notification surfaces for ride-sharing, delivery tracking, and navigation

- Professional media and camera features: Enhanced APIs for pro-grade photo and video capture

- Desktop windowing: Multi-window support for large-screen devices and desktop modes

- Accessibility enhancements: Major improvements complementing the new image description API

- Androidify sample app: Demonstrates transforming selfies into unique Android robots using both on-device and cloud AI

Sean’s Take: What This Means for the AI Landscape

I’ve been building automated AI pipelines for over a year now, and here’s what strikes me about this announcement: Google is making on-device AI boring — in the best possible way. When ML Kit GenAI APIs deliver 90+ benchmark scores for summarization and image description without any prompt engineering, that’s not a research paper. That’s infrastructure.

From a practical standpoint, the zero-cloud-cost angle is the real story. I run multiple AI-powered automation systems, and API costs add up fast. For any mobile developer processing thousands of user requests daily, the math changes dramatically when summarization and proofreading carry no per-inference API fee. Google essentially turned what was a recurring OpEx line item into a one-time integration effort.
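The back-of-the-envelope calculator below makes that per-inference math concrete. The workload (5,000 requests a day at 1,500 tokens each) and the $0.50-per-million-token price are hypothetical placeholders, not quotes for any real model. Plug in your own numbers.

```python
def monthly_cloud_cost(requests_per_day: int, tokens_per_request: int,
                       usd_per_million_tokens: float, days: int = 30) -> float:
    """Metered API cost: total tokens / 1,000,000 * price. All inputs are assumptions."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical workload and price, for illustration only.
cloud = monthly_cloud_cost(requests_per_day=5_000, tokens_per_request=1_500,
                           usd_per_million_tokens=0.50)
on_device = 0.0  # no per-call API fee
print(f"cloud: ${cloud:,.2f}/month vs on-device: ${on_device:.2f}/month in API fees")
# prints cloud: $112.50/month vs on-device: $0.00/month in API fees
```

On-device inference still draws on the user’s battery and compute. What disappears is the metered bill, which grows linearly with request volume.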

The LoRA adapter architecture is also worth watching. By stacking task-specific adapters on top of the base Gemini Nano model, Google can ship improvements to individual tasks — better summarization, more accurate proofreading — without pushing a full model update to every device. As someone who works with fine-tuned models daily, I know how much faster iteration cycles become when you can update the adapter layer independently. This design decision tells me Google is playing a long game with on-device AI, not just shipping a demo.

My prediction: within 12 months, on-device summarization and proofreading will be table-stakes features that users expect in every text-heavy Android app, the same way spell-check became invisible. The developers who integrate these APIs now will have a head start when user expectations shift.

What Developers Should Do Right Now

If you’re building for Android, the action items are clear. First, audit your app for any feature that currently sends text to a cloud API for processing, such as summarization, grammar checking, or content rewriting. Those are immediate candidates for migration to the ML Kit GenAI APIs. Second, explore the image description API for accessibility improvements. Auto-generated alt text is a UX win, and alt text itself is an accessibility compliance requirement in many markets. Third, design your AI architecture with the hybrid model in mind: Gemini Nano for routine on-device tasks, Firebase AI Logic for complex cloud processing.

The privacy-first, offline-capable AI layer that Google just shipped carries no per-request fees, and it isn’t a nice-to-have anymore. It’s the new baseline for Android development, and it’s available today on flagship devices from Google, Samsung, OnePlus, Xiaomi, Motorola, and HONOR.

Building AI-powered systems or need help architecting on-device vs. cloud AI strategies for your project? Sean Kim brings 28+ years of tech and production experience to every consultation.
