Apple M5 Chip: 6 Performance Estimates Before WWDC 2025 Changes Everything

June 3, 2025

Summer NAMM 2025: 7 Best Budget Gear Announcements Under $300

June 4, 2025Three lines of Swift code. That’s all it takes to run Apple’s 3-billion parameter language model entirely on-device — no API keys, no cloud costs, no internet connection required. WWDC 2025 Core ML announcements fundamentally changed how developers interact with machine learning on Apple platforms.

While the tech world was busy debating cloud AI pricing, Apple quietly dropped a developer toolkit that makes on-device intelligence genuinely accessible. The new Foundation Models framework, major Core ML enhancements, and the maturing MLX ecosystem represent Apple’s clearest statement yet: the future of AI is on the device, not in the cloud.

Foundation Models Framework: Apple Intelligence in Three Lines of Code

The headline feature of WWDC 2025’s machine learning announcements is the Foundation Models framework. For the first time, developers get direct programmatic access to the same on-device large language model that powers Apple Intelligence — and the barrier to entry is remarkably low.

import FoundationModels

let session = LanguageModelSession()

let response = try await session.sendMessage("Summarize this article")That’s not a simplified example — it’s the actual implementation. The LanguageModelSession API handles model loading, inference optimization, and memory management automatically. No API keys to manage, no usage limits to track, no monthly bills to worry about.

The 3B Model Under the Hood

According to Apple’s machine learning research team, the on-device model packs approximately 3 billion parameters compressed to just 2 bits per weight using Quantization-Aware Training (QAT). The architecture features a 5:3 depth ratio design where key-value caches are shared between blocks, reducing memory usage by 37.5% compared to traditional implementations.

- Context length: Up to 65,000 tokens — enough for substantial document processing

- Language support: 15 languages out of the box

- KV cache: 8-bit quantization for efficient memory use

- Performance: Competitive with Qwen-2.5-3B across all supported languages, matching some 4B models in English

Guided Generation and @Generable: Type-Safe AI Outputs

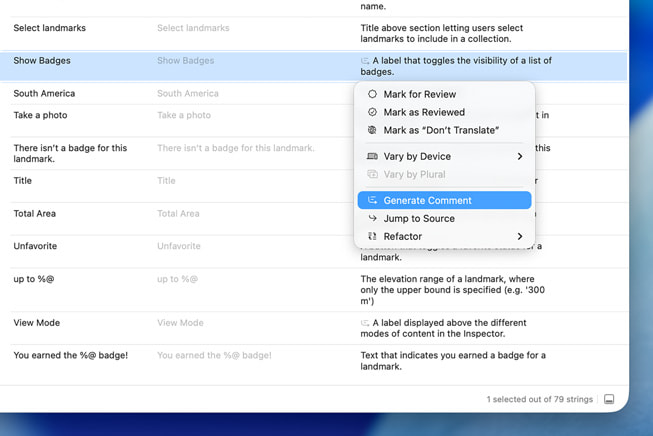

One of the most developer-friendly innovations is guided generation. Instead of parsing JSON strings and hoping the model follows your schema, developers can now use the @Generable macro to define structured outputs directly in Swift types. The framework customizes model decoding at the token level to prevent structural errors — no JSON schema handling needed.

The companion @Guide macro adds natural language annotations to properties, giving the model precise instructions on how to populate each field. Combined with streaming support, developers can build responsive UIs that progressively display generated content.

Tool Calling: Extending the Model’s Reach

The Foundation Models framework includes a Swift protocol-based tool calling system. Developers define tools that the model can invoke to access live data — weather, calendar events, app-specific databases — and the framework automatically handles parallel and serial invocations with source citation for fact-checking.

Practical use cases are compelling: an education app generating personalized quizzes from a user’s notes without any cloud API costs, or an outdoors app adding natural language search that works offline in remote areas.

WWDC 2025 Core ML Upgrades: MLTensor, Model Functions, and Stateful Inference

While the Foundation Models framework grabbed headlines, the Core ML updates at WWDC 2025 are equally significant for developers working with custom models. Three changes stand out.

MLTensor: A Familiar API for Array Operations

The new MLTensor type provides an efficient API for multi-dimensional array operations executed directly on Apple silicon. If you’ve used PyTorch tensors or NumPy arrays, the interface will feel immediately familiar — but with automatic hardware optimization across CPU, GPU, and Neural Engine. MLTensor supports common math and transformation operations typical of machine learning workflows, and these operations leverage Apple silicon’s compute capabilities for high-performance execution without requiring developers to manually target specific hardware.

Multi-Function Models

Core ML models can now hold multiple functions within a single model file. This is critical for LLM deployment — you might have a base model function, multiple LoRA adapter functions, and specialized task functions all packaged together. The runtime manages resource allocation and switching between functions efficiently.

Stateful Inference: The LLM Game Changer

Previously, Core ML treated every model as a pure stateless function: inputs in, outputs out. For LLM token generation, this meant copying large KV cache states back and forth between the model and calling code on every iteration — a significant performance bottleneck.

The new state management system lets models maintain internal state across inference iterations. For LLM workloads, this eliminates the copy overhead entirely, resulting in noticeably faster token generation. Combined with Core ML Tools’ improved compression techniques — more granular and composable weight compression for LLMs and diffusion models — running sophisticated models on-device becomes genuinely practical.

MLX: Apple’s Open-Source ML Framework Grows Up

The MLX framework, Apple’s open-source machine learning library designed specifically for Apple silicon, received significant attention at WWDC 2025. MLX exploits the unified memory architecture of Apple silicon — CPU and GPU share physical memory, eliminating the copy overhead that plagues traditional GPU computing.

- Languages: Python, Swift, C++, and C with community bindings

- Fine-tuning: Train and fine-tune models directly on Apple silicon with full memory sharing

- Distributed learning: Scale training across multiple Apple silicon devices

- Model ecosystem: Hundreds of frontier models available on the Hugging Face MLX community

For developers who want more control than the Foundation Models framework offers — or who need to run custom architectures — MLX bridges the gap between cloud-based training and on-device deployment. You can fine-tune a model on your Mac, convert it with Core ML Tools, and deploy it on iPhone, all within Apple’s ecosystem.

Beyond Core ML: The Broader ML API Ecosystem

WWDC 2025 also brought updates to Apple’s higher-level ML APIs that many developers use without even thinking about the underlying models. For developers who need finer-grained control, Apple also introduced the BNNS Graph Builder — a new API for creating operation graphs optimized for real-time CPU execution with strict latency and memory constraints. This is particularly relevant for audio processing apps and real-time signal pipelines where GPU scheduling overhead is unacceptable.

SpeechAnalyzer: Next-Generation Speech-to-Text

The new SpeechAnalyzer API replaces the aging SFSpeechRecognizer with a faster, more flexible speech-to-text model optimized for long-form and distant audio — lectures, meetings, conversations. It runs entirely on-device with minimal code, making it practical for apps that process extended audio sessions.

Vision Framework: Document Recognition and More

The Vision framework now includes document recognition that groups different document structures for easier processing, plus lens smudge detection for camera-based apps. With over 30 APIs for image analysis, the framework covers everything from text recognition to body pose estimation.

Xcode 26: AI-Powered Development

Xcode 26 integrates AI directly into the development workflow. The AI Assistant provides full method stub completion, SwiftUI view generation, test suggestions for edge-case detection, and auto-generated inline documentation. Developers can also use ChatGPT directly in the sidebar — or run local models on Apple silicon Macs for completely private coding assistance.

The Privacy Equation: Why On-Device Matters for Developers

Every ML announcement at WWDC 2025 reinforced a single architectural philosophy: compute on the device whenever possible. For developers, this translates into concrete advantages beyond privacy.

- Zero marginal cost: Foundation Models framework inference is free — no per-token charges, no rate limits, no surprise bills

- Offline capability: Apps work in airplane mode, remote areas, or spotty network conditions

- Latency: No network round-trips mean faster perceived response times

- Regulatory compliance: User data never leaves the device, simplifying GDPR, HIPAA, and similar compliance requirements

- User trust: “Processed on your device” is increasingly a competitive feature, not just a privacy checkbox

Apple’s server-side model — using a novel Parallel-Track Mixture-of-Experts architecture trained on 14 trillion text tokens — handles tasks that exceed on-device capabilities through Private Cloud Compute, but the clear direction is to push as much intelligence to the edge as possible.

What This Means for Your Next Project

If you’re building for Apple platforms, WWDC 2025 fundamentally expands what’s possible without a cloud backend. The Foundation Models framework alone eliminates the biggest barrier to ML adoption — cost and complexity. Core ML’s stateful inference and MLTensor make custom model deployment practical. And MLX gives researchers and advanced developers a genuine alternative to cloud-based training.

The developers who move fastest on these APIs will have a significant head start. An education app with offline AI tutoring, a health app with private on-device analysis, a creative tool with responsive AI assistance — these are no longer aspirational ideas. With WWDC 2025’s toolkit, they’re weekend projects.

For audio developers specifically, the combination of SpeechAnalyzer’s improved distant-audio recognition, BNNS Graph’s real-time processing capabilities, and the Foundation Models framework’s text generation opens up workflows that previously required multiple cloud services. Imagine a DAW plugin that transcribes vocal takes, generates lyric suggestions, and tags sessions with AI-powered metadata — all running locally on an M-series Mac with zero latency and zero recurring costs.

Apple’s WWDC 2025 ML stack isn’t revolutionary in any single component — competitors offer larger models, more flexible APIs, and broader hardware support. But the integration story is uniquely compelling. From Xcode’s AI assistant to Foundation Models to Core ML to Metal, the entire pipeline lives within one ecosystem, one memory architecture, and one privacy model. For developers already invested in Apple platforms, that coherence is the real advantage.

Building automation pipelines or integrating AI into your development workflow? Sean Kim has been shipping AI-powered production systems since before it was trendy.

Get weekly AI, music, and tech trends delivered to your inbox.