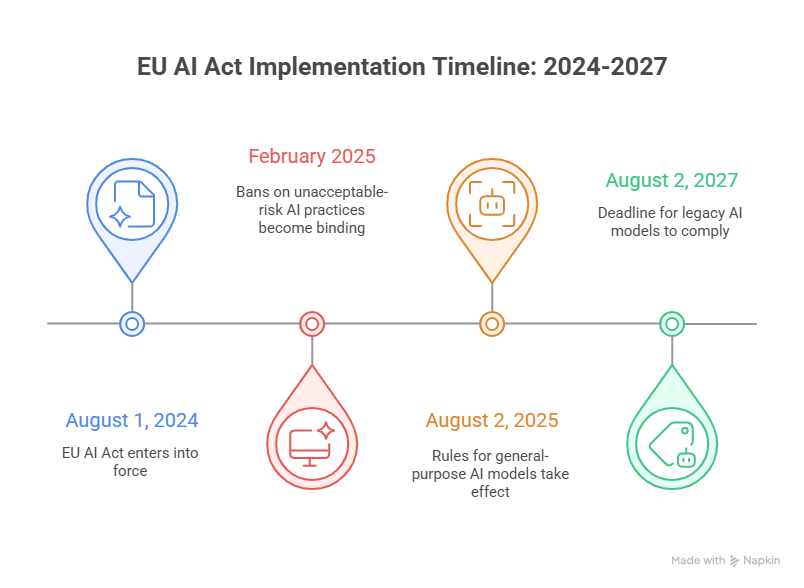

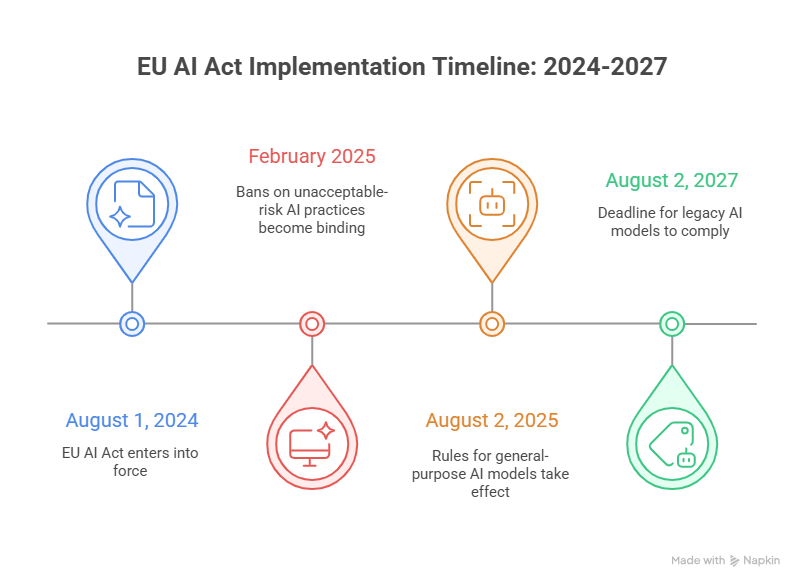

July 24, 2025

€35 million. That’s the price tag the European Union just slapped on companies caught running banned AI systems after August 2, 2025. And if you think that number sounds steep, consider this: for the biggest tech companies, the fine can instead reach 7% of global annual turnover, which for a company like Google could mean tens of billions. Enforcement of the EU AI Act has officially begun, and July was the month everything got real.

EU AI Act Enforcement 2025: The July Turning Point

On July 10, 2025, the European Commission published the final General-Purpose AI (GPAI) Code of Practice, a document that nearly 1,000 participants helped draft over months of intense negotiations. Roughly three weeks later, on August 2, the first wave of actual penalties kicks in. This is not a drill, and it is not another vague regulatory framework sitting on a shelf. The EU AI Act’s 2025 enforcement timeline is now a countdown clock.

The Code of Practice covers three chapters: Transparency, Copyright, and Safety & Security. Together, they form the voluntary compliance framework that GPAI model providers can adopt to demonstrate conformity with the EU AI Act’s obligations. The European Commission and the AI Board confirmed on August 1, 2025 that the Code serves as an adequate voluntary tool—meaning signatories get a presumption of conformity.

Who Signed, Who Didn’t—and Why It Matters

Here’s where the politics get interesting. The GPAI Code of Practice isn’t mandatory—yet. But signing it grants companies a presumption of compliance, essentially a regulatory shield. And the responses from Big Tech have been revealing.

Full signatories (all three chapters): Google, Microsoft, OpenAI, Anthropic, and Amazon all signed on. These companies are betting that voluntary compliance now is cheaper and smarter than fighting enforcement later. By signing all three chapters—Transparency, Copyright, and Safety & Security—they get the broadest presumption of conformity.

Partial signatory: Elon Musk’s xAI signed only the Safety & Security chapter, declining the Transparency and Copyright sections. This is a calculated move—xAI gets some regulatory cover on the safety front while avoiding commitments on how it handles training data and copyright, areas where Grok’s data sourcing from X (formerly Twitter) could face scrutiny.

Declined entirely: Meta refused to sign any chapter of the Code. This is arguably the boldest stance—and the riskiest. With Llama models distributed globally and Meta’s open-source approach to AI, the company appears to be betting that it can demonstrate compliance through alternative means. But without the presumption of conformity, Meta’s GPAI models will face closer regulatory scrutiny from the EU AI Office.

The 8 Prohibited AI Practices: What’s Now Illegal

Starting August 2, 2025, the EU AI Act’s list of prohibited AI practices carries actual teeth. Violate these and you face the maximum penalty tier: up to €35 million or 7% of global annual turnover, whichever is higher. The eight banned categories are:

- Social scoring: AI systems that evaluate or classify people based on their social behavior or personality characteristics, where the resulting score leads to unjustified or disproportionate detrimental treatment. The ban is not limited to governments

- Emotion recognition in workplaces and schools: AI that infers emotions of employees or students, except for medical or safety purposes

- Untargeted scraping of facial images: Building facial recognition databases by scraping images from the internet or CCTV without consent

- Subliminal manipulation: AI designed to distort behavior through techniques beyond a person’s consciousness that may cause significant harm

- Exploitation of vulnerabilities: AI targeting people based on age, disability, or social/economic situation to materially distort their decisions

- Biometric categorization for sensitive attributes: Using biometric data to categorize people by race, political opinions, sexual orientation, or religious beliefs

- Predictive policing based solely on profiling: AI that assesses the risk of a person committing a crime based only on profiling or personality traits

- Real-time remote biometric identification in public spaces: Prohibited for law enforcement, except in narrowly defined situations (missing children, imminent threats, serious crime suspects)

These aren’t theoretical concerns. Clearview AI’s scraping of facial images, scoring schemes modeled on China’s social credit system, and emotion detection tools used in hiring processes would all fall squarely under the prohibitions. Companies operating in the EU need to audit their AI systems now, not after August 2.

The Three-Tier Penalty Structure

The EU didn’t just pick one fine amount. The Article 99 penalty structure is tiered based on severity:

- Tier 1 — Prohibited practices: Up to €35 million or 7% of global annual turnover. This is the maximum, reserved for the eight banned categories listed above.

- Tier 2 — Other AI Act violations: Up to €15 million or 3% of global turnover. This covers non-compliance with high-risk AI system requirements, transparency obligations, and GPAI model rules.

- Tier 3 — Providing misleading information: Up to €7.5 million or 1% of global turnover. This is for companies that give incorrect or incomplete information to regulators.

For context, 7% of Alphabet’s 2024 revenue would be approximately $24 billion. That’s not a rounding error—it’s an existential-level penalty designed to make even the largest tech companies take notice.
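
To make the arithmetic concrete, here is a minimal Python sketch of the three ceilings. It assumes the "whichever is higher" rule applies to every tier (the article states it explicitly only for Tier 1) and does not model any adjusted treatment for SMEs or startups. The names `PenaltyTier`, `TIERS`, and `max_fine_eur` are hypothetical, and the turnover figures are illustrative round numbers in euros, not real company financials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    fixed_cap_eur: int  # fixed ceiling in euros
    turnover_bps: int   # turnover share in basis points (700 = 7%)


# Figures taken from the three tiers listed above.
TIERS = {
    "prohibited_practice": PenaltyTier(35_000_000, 700),
    "other_violation": PenaltyTier(15_000_000, 300),
    "misleading_information": PenaltyTier(7_500_000, 100),
}


def max_fine_eur(tier: str, global_turnover_eur: int) -> int:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share.

    Assumption: "whichever is higher" applies to all three tiers.
    """
    t = TIERS[tier]
    # Integer math in basis points avoids floating-point rounding noise.
    turnover_based = global_turnover_eur * t.turnover_bps // 10_000
    return max(t.fixed_cap_eur, turnover_based)


# Hypothetical turnovers, chosen only for illustration.
print(max_fine_eur("prohibited_practice", 350_000_000_000))  # 24500000000
print(max_fine_eur("prohibited_practice", 100_000_000))      # 35000000
```

For a company with 350 billion in turnover the percentage dominates, while for one with 100 million the fixed €35 million cap is the binding number. That gap is why the percentage clause matters so much more to Big Tech than the headline figure.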

GPAI Model Obligations: What Changes for AI Providers

Beyond the prohibited practices, August 2025 also activates obligations specifically for general-purpose AI model providers. If you develop or distribute a GPAI model in the EU market, you now need to:

- Maintain and provide technical documentation about your model’s training process

- Establish a policy to comply with EU copyright law, including the text and data mining opt-out mechanism

- Publish a sufficiently detailed summary of training data content

- For models with systemic risk: conduct adversarial testing, track and report serious incidents, and ensure adequate cybersecurity protections
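
One way a compliance team might track the list above is as a simple checklist keyed on whether a model is classed as having systemic risk. This is only a sketch: the function name `obligations_for` and the wording of each entry are hypothetical shorthand for the bullets above, not terms from the Act, and deciding whether a model actually carries systemic risk is a legal question this code does not answer.

```python
# Obligations that apply to every GPAI model provider, per the list above.
BASE_OBLIGATIONS = [
    "technical documentation of the training process",
    "copyright compliance policy (including text and data mining opt-out)",
    "public summary of training data content",
]

# Extra obligations for models with systemic risk, per the last bullet above.
SYSTEMIC_RISK_OBLIGATIONS = [
    "adversarial testing",
    "serious incident tracking and reporting",
    "adequate cybersecurity protections",
]


def obligations_for(has_systemic_risk: bool) -> list[str]:
    """Return the checklist for a model; the systemic-risk flag is an input, not derived."""
    extra = SYSTEMIC_RISK_OBLIGATIONS if has_systemic_risk else []
    return BASE_OBLIGATIONS + extra


for item in obligations_for(has_systemic_risk=True):
    print("[ ]", item)
```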

The AI literacy obligations have actually been in effect since February 2025. Organizations deploying AI systems must ensure their staff has sufficient AI literacy to understand the capabilities and limitations of the tools they’re using. According to a survey from SD Worx, many European companies were still scrambling to meet this requirement as of mid-2025.

What This Means for Tech Companies Right Now

The practical implications break down differently depending on where you sit in the AI ecosystem:

For GPAI model providers (OpenAI, Google, Anthropic, etc.): Sign the Code of Practice if you haven’t already. The presumption of conformity it grants is valuable insurance. If you’re like Meta and choosing not to sign, you need an alternative compliance strategy documented and ready to present to the EU AI Office.

For companies deploying AI systems: Audit every AI tool in your stack. Do any of your systems touch the eight prohibited categories? Emotion detection in HR software, biometric categorization in customer segmentation, predictive tools that could be classified as social scoring—all need immediate review. The AI literacy requirement is already active, so training programs should be in place.
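
A first-pass audit can be as simple as tagging each tool in your inventory and flagging any that touch a prohibited category. The sketch below assumes you maintain such tags yourself. The category keys, the `flag_for_review` function, and the example inventory are all hypothetical, and a flag means "send to legal review", not "this system is illegal".

```python
# Hypothetical shorthand keys for the eight prohibited categories listed earlier.
PROHIBITED_CATEGORIES = {
    "social_scoring",
    "workplace_emotion_recognition",
    "untargeted_face_scraping",
    "subliminal_manipulation",
    "vulnerability_exploitation",
    "sensitive_biometric_categorization",
    "profiling_only_predictive_policing",
    "realtime_remote_biometric_id",
}


def flag_for_review(inventory: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each system to the prohibited categories its tags overlap with.

    Systems with no overlap are left out of the result.
    """
    flagged = {}
    for system, tags in inventory.items():
        hits = sorted(tags & PROHIBITED_CATEGORIES)
        if hits:
            flagged[system] = hits
    return flagged


# Example inventory with made-up system names and tags.
inventory = {
    "hr_video_screener": {"workplace_emotion_recognition", "resume_parsing"},
    "support_chatbot": {"customer_support"},
}
print(flag_for_review(inventory))
```

The value here is less the code than the discipline: the audit only works if every system in the stack is actually listed and honestly tagged.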

For startups and smaller AI companies: The penalty structure scales, but €7.5 million is still a company-killer for most startups. The good news is that the Code of Practice was designed with proportionality in mind. Smaller providers face less burdensome documentation requirements, but they still can’t operate prohibited systems.

For companies outside the EU: If your AI products or services reach EU users, you’re in scope. The AI Act applies based on where the impact is felt, not where the company is headquartered. This is the same extraterritorial approach that made GDPR a global standard.

The Bigger Picture: Europe’s AI Regulation Gamble

Whether the EU AI Act becomes the global gold standard for AI regulation—the way GDPR did for privacy—or an innovation tax that drives AI development elsewhere is the trillion-dollar question. The July 2025 GPAI Code of Practice and August enforcement deadline represent the first real test of whether this regulatory framework can work in practice.

The fact that most major AI companies signed the Code voluntarily is encouraging. It suggests the industry sees compliance as manageable and strategically smart. Meta’s refusal and xAI’s partial participation will be interesting test cases for how the EU AI Office handles non-signatories. If enforcement is weak, the entire framework loses credibility. If it’s heavy-handed, it risks the “innovation tax” narrative.

From my perspective running a company that works at the intersection of AI and creative technology, the practical impact is already being felt. Clients are asking about AI compliance in contracts. Vendors are updating terms of service. Legal teams that never thought about artificial intelligence are suddenly scrambling to understand what “general-purpose AI model” means under EU law. The compliance burden is real, but so is the opportunity for companies that get ahead of it—positioning themselves as trustworthy AI providers in a market that increasingly values regulatory clarity over the fastest deployment cycle.

One thing is certain: the era of building AI systems first and asking regulatory questions later is over in Europe. The EU AI Act’s 2025 enforcement deadlines are real, the fines are massive, and the prohibited practices list leaves little room for ambiguity. Every company touching AI, from the model builders to the enterprises deploying chatbots, needs a compliance strategy. Not next quarter. Now.
