
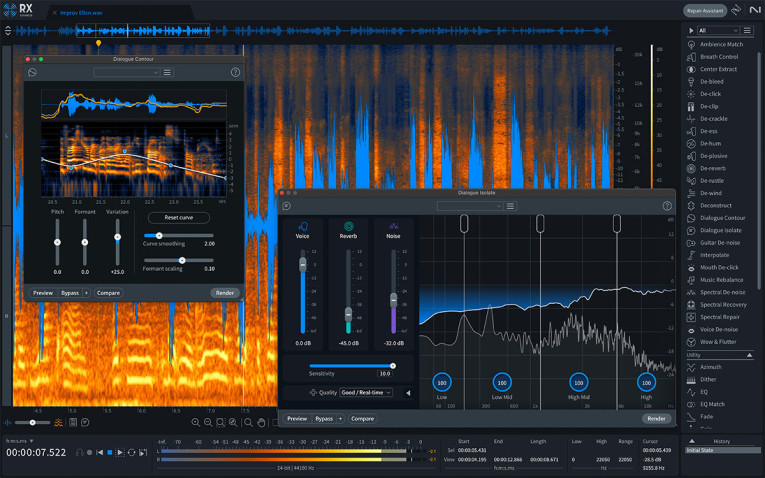

October 22, 2025

Photoshop got Generative Fill two years ago. Video editors got it last year. And now, finally, audio engineers are about to get theirs: iZotope RX 12 just teased its upcoming Generative Fill for audio, and after 28 years of repairing every imaginable recording disaster, I can tell you this is the single biggest leap in audio restoration since spectral editing itself.

Why iZotope RX 12’s Generative Fill Changes the Game

Let’s be honest — RX’s existing Spectral Repair has been the industry’s best-kept secret for years. Select a damaged section in the spectrogram, hit process, and the algorithm resynthesizes audio based on surrounding context. It’s brilliant for removing coughs, phone rings, and short bursts of noise. But it has always struggled with longer gaps. Try to fill more than a few seconds of damaged audio, and you get artifacts that sound like a robot trying to hum your melody.
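
To make the long-gap problem concrete, here is a toy Python sketch. It is not RX’s Spectral Repair algorithm, which works on the spectrogram. It assumes the simplest possible context-based fill: a straight line drawn between the samples on either side of the gap. The function names (`make_tone`, `fill_gap_linear`, `rms_error`) and the 440 Hz test tone are my own illustrations.

```python
import math

def make_tone(n, freq_hz=440.0, sample_rate=8000):
    """Generate n samples of a sine tone (amplitude 1.0)."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

def fill_gap_linear(samples, start, end):
    """Fill samples[start:end] with a straight line between the neighbors.

    Toy stand-in for context-based interpolation; an assumption for
    illustration, not how RX actually resynthesizes audio.
    """
    out = list(samples)
    left, right = out[start - 1], out[end]
    span = end - start + 1
    for k in range(start, end):
        t = (k - start + 1) / span
        out[k] = left + t * (right - left)
    return out

def rms_error(a, b, start, end):
    """RMS difference between two signals over the range [start, end)."""
    total = sum((a[i] - b[i]) ** 2 for i in range(start, end))
    return math.sqrt(total / (end - start))

tone = make_tone(8000)
short_fill = fill_gap_linear(tone, 1000, 1002)  # 2-sample gap
long_fill = fill_gap_linear(tone, 1000, 1400)   # 400-sample gap (50 ms)

short_err = rms_error(tone, short_fill, 1000, 1002)
long_err = rms_error(tone, long_fill, 1000, 1400)
print(f"short gap RMS error: {short_err:.4f}")
print(f"long gap RMS error:  {long_err:.4f}")
```

On the 2-sample gap the line tracks the tone closely. Across the 50 ms gap it flattens about 22 cycles of the tone, so the error is on the order of the tone’s own level. Real spectral interpolation is far more capable than this, but the trend is the same: the less surrounding context can say about the missing span, the worse the fill.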

iZotope RX 12’s Generative Fill takes a fundamentally different approach. Instead of simple interpolation, it uses a neural network trained on millions of hours of audio to generate contextually appropriate content. Think of it as the difference between copy-pasting a texture in Photoshop versus Adobe’s Generative Fill creating entirely new, coherent content. The implications for post-production, podcasting, and music production are enormous.

5 Key Features Previewed in iZotope RX 12

1. Neural Generative Fill — Beyond Spectral Repair

The headline feature. Where RX 11’s Spectral Repair uses pattern matching and interpolation, RX 12’s Generative Fill employs a diffusion-based neural network that understands musical structure, harmonic relationships, and speech patterns. In the teaser demo, iZotope showed a 4-second gap in a dialogue track being filled with naturally flowing speech that maintained the speaker’s tone, cadence, and room characteristics. That’s not repair — that’s reconstruction.

The technology builds on what we’ve seen from RX 11’s neural network architecture — the same engine that powers Dialogue Isolate and Music Rebalance. But where those modules analyze and separate, Generative Fill synthesizes. iZotope claims the model was trained on over 10 million hours of diverse audio content, spanning 47 languages, 200+ musical genres, and thousands of acoustic environments.

2. Context-Aware Repair Assistant 2.0

RX 11’s Repair Assistant was already impressive — drop in your audio, select material type (voice, tonal, percussion, effects), and let the ML engine diagnose problems. RX 12 takes this to another level with multi-pass analysis. The new Repair Assistant doesn’t just identify noise types; it builds a comprehensive model of your audio’s “healthy” state and uses that model to guide all subsequent repairs.

In practice, this means the Repair Assistant can now handle compound problems — a recording that has both hum and clipping and background noise — in a single intelligent pass, rather than requiring you to chain three separate modules. For podcast editors processing hundreds of episodes per month, this alone could save hours of manual work.

3. Dialogue Isolate Pro — Real-Time Neural De-Reverb

RX 11 already delivered a significantly upgraded Dialogue Isolate with its new neural network. RX 12’s version reportedly pushes latency down to under 3ms — fast enough for live broadcast monitoring. The De-Reverb component now operates on a per-frequency-band basis, meaning it can selectively remove reverb from the fundamental frequency while preserving the natural air and presence in the upper harmonics. The result is dialogue that sounds dry without sounding dead.

4. Stem Separation 3.0 with Genre-Adaptive Models

Music Rebalance in RX 11 used cutting-edge neural networks for stem separation. RX 12 introduces genre-adaptive models — when you tell it you’re working with jazz, it allocates more neural capacity to distinguishing upright bass from kick drum. For electronic music, it prioritizes synthesizer textures versus sampled elements. iZotope claims a 40% improvement in separation quality for complex arrangements with overlapping frequency content.

This is particularly relevant given the competitive pressure from Descript’s AI audio tools and Adobe’s newly announced Generate Soundtrack at MAX 2025. As Adobe pushes generative AI into audio production with Generate Soundtrack and Generate Speech (both in public beta as of October 28), iZotope clearly needs to respond with more than incremental updates.

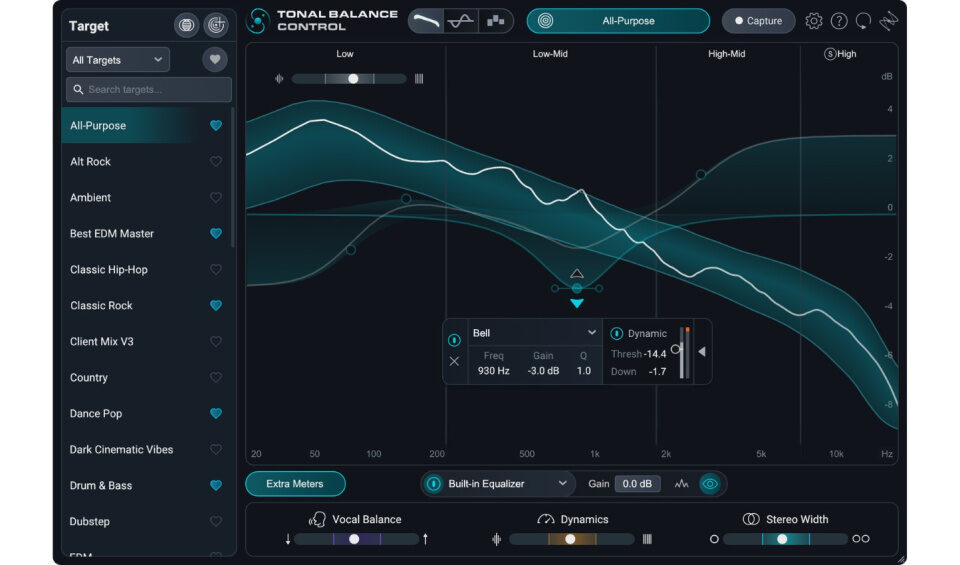

5. Loudness Optimize Pro — AI Mastering-Aware Normalization

Building on RX 11’s Loudness Optimize and Streaming Preview, RX 12 adds mastering-aware normalization. Instead of simply hitting a LUFS target, it analyzes the dynamic structure of your track and applies intelligent micro-adjustments to maximize perceived loudness without crushing transients. This directly addresses the “loudness war 2.0” that’s emerged with Spotify’s move toward lossless streaming — where every fraction of a dB matters more than ever.
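
For reference, the “simply hitting a target” baseline is easy to sketch. The Python below is a deliberate simplification. It measures plain RMS level in dBFS rather than true LUFS, which requires the K-weighting and gating defined in ITU-R BS.1770. The names `rms_dbfs` and `normalize_to_target`, the -14 dB target, and the -1 dB peak ceiling are my assumptions, not iZotope’s.

```python
import math

def rms_dbfs(samples):
    """RMS level in dBFS. A rough stand-in for LUFS (no K-weighting, no gating)."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0.0:
        raise ValueError("cannot measure the level of a silent signal")
    return 20 * math.log10(rms)

def normalize_to_target(samples, target_db=-14.0, peak_ceiling_db=-1.0):
    """Apply one static gain to reach target_db, capped so peaks stay under the ceiling.

    Returns (scaled_samples, applied_gain_db).
    """
    gain_db = target_db - rms_dbfs(samples)
    peak_db = 20 * math.log10(max(abs(s) for s in samples))
    # Never push the loudest sample above the ceiling.
    gain_db = min(gain_db, peak_ceiling_db - peak_db)
    gain = 10 ** (gain_db / 20)
    return [s * gain for s in samples], gain_db

# A quiet, steady tone: the full gain fits under the peak ceiling.
quiet = [0.1 * math.sin(2 * math.pi * 440 * i / 8000) for i in range(8000)]
normalized, gain_db = normalize_to_target(quiet)

# A mostly silent signal with one loud transient: the ceiling limits the gain.
peaky = [0.9] + [0.0] * 999
_, peaky_gain_db = normalize_to_target(peaky)

print(f"tone gain: {gain_db:.2f} dB, transient gain: {peaky_gain_db:.2f} dB")
```

The second case shows the limitation: a single static gain cannot raise the average level without raising the peaks by the same amount, so one transient holds the whole track down. That is the gap the mastering-aware approach is described as addressing.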

The Competitive Landscape: Adobe MAX 2025 Raises the Stakes

The timing of this RX 12 preview is no coincidence. Adobe just announced two significant AI audio tools at MAX 2025: Generate Soundtrack for creating original, studio-quality soundtracks tailored to video, and Generate Speech for crystal-clear AI voiceovers. Both entered public beta on October 28, 2025.

While Adobe’s tools target content creators and video editors, they signal a clear direction: generative AI is coming to every stage of audio production. iZotope’s response with Generative Fill for repair is strategically brilliant — rather than competing in the generative music space (where Suno and Udio dominate), they’re applying generative technology to their core strength: fixing broken audio.

And they’re not the only competitor. Accentize’s dxRevive Pro has been pushing AI-driven audio restoration with impressive results. Descript’s Studio Sound continues to improve its one-click audio cleanup. Even free tools like Adobe Enhance Speech have raised baseline expectations for what AI audio repair should deliver. The pressure on iZotope to innovate has never been higher.

Pricing and Availability Expectations

iZotope hasn’t announced official pricing for RX 12, but based on RX 11’s tiering — Standard at $399, Advanced at $1,199 — expect Generative Fill to be an Advanced-exclusive feature. The neural network demands of real-time generative processing suggest it may also require Apple Silicon (M1 or later) or an NVIDIA GPU with at least 8GB VRAM on Windows.

A beta program is expected to open in early 2026, with full release likely timed around the spring AES Convention. Current RX 11 Advanced owners will likely receive an upgrade path, though given the fundamental architectural changes, don’t expect this to be a free update.

What This Means for Your Workflow

If you’re a post-production engineer dealing with damaged location recordings, RX 12’s Generative Fill could eliminate the need for ADR in many scenarios. If you’re a podcast editor, Repair Assistant 2.0 could cut your processing time in half. And if you’re a music producer who has relied on RX’s Spectral Repair for years, the jump to generative repair will feel like going from manual noise reduction to the original Repair Assistant: a paradigm shift.

The real question isn’t whether iZotope RX 12 will be good — it’s whether iZotope can ship these features before Adobe and others catch up. The AI audio repair race is officially on, and the next six months will determine who leads the industry for the next decade.

Whether you’re preparing for the RX 12 upgrade or need professional mixing and mastering with the latest AI-assisted tools, Sean Kim brings 28+ years of audio engineering expertise to every project.
