
November 28, 2025

Five open source AI models dropped in November 2025, and at least three of them outperform proprietary alternatives that cost 10x more to run. If you blinked, you missed a month that may have permanently tilted the AI power balance toward open source.

From a 32B reasoning model that traces every output back to its training data to a 1-trillion-parameter agentic model, trained for $4.6 million, that chains 300 tool calls without breaking a sweat, November wasn’t just busy. It was a turning point. Here are the five open source AI releases from November 2025 that developers, researchers, and builders need on their radar right now.

1. OLMo 3 — The Most Transparent Open Source AI Model Ever Built

On November 20, the Allen Institute for AI (Ai2) released OLMo 3, and it set a new standard for what “open source” actually means in AI. While most companies release weights and call it open, Ai2 published everything: weights, training data, training pipeline, evaluation code, and every checkpoint along the way.

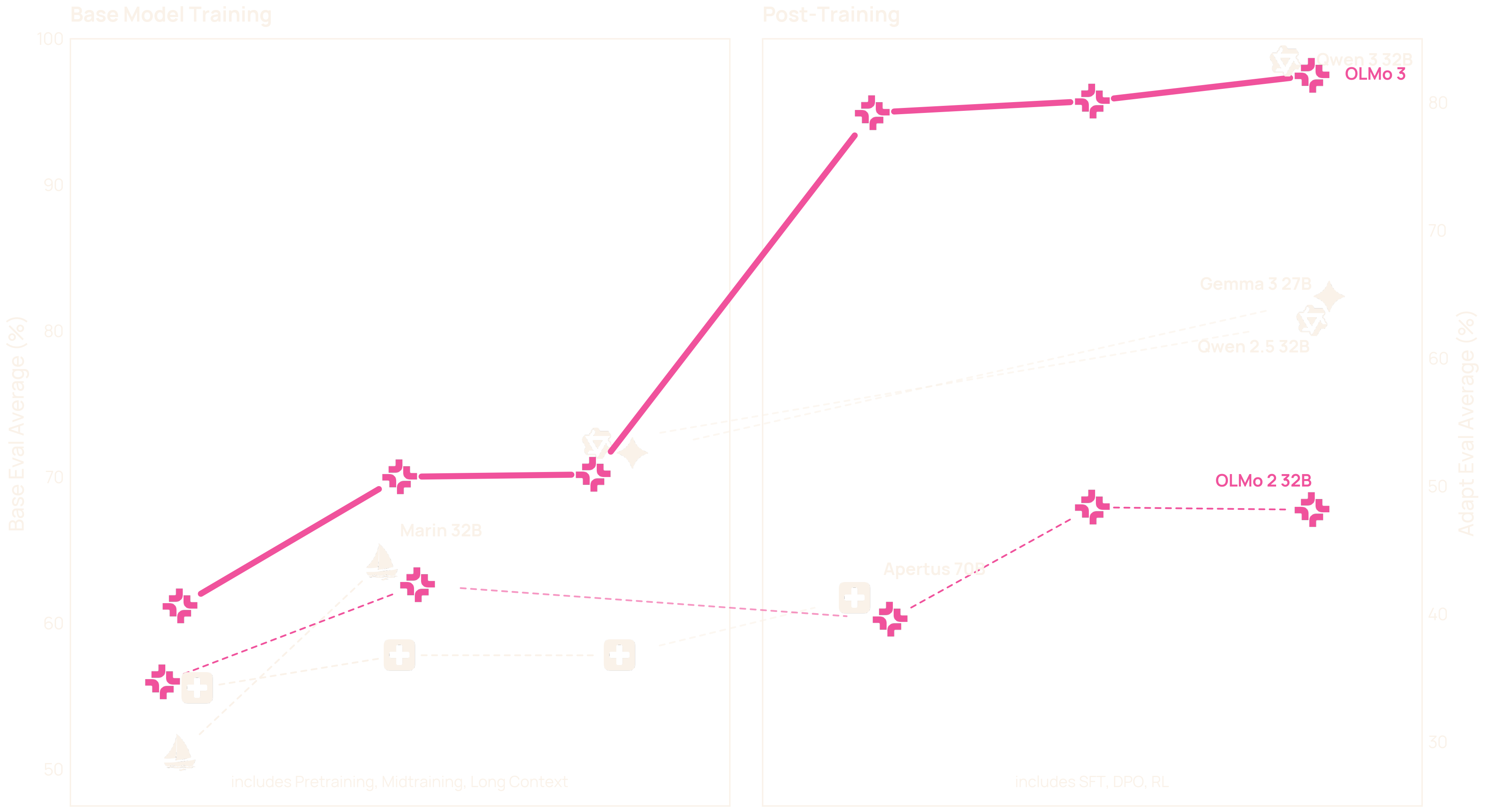

OLMo 3 comes in 7B and 32B parameter variants, both supporting a 65,536-token context window. The models were pretrained on Dolma 3, a massive 9.3-trillion-token corpus drawn from web pages, science PDFs processed with olmOCR, codebases, math problems, and encyclopedic text. The result? A model that’s 2.5x more efficient to train than Meta’s Llama 3.1, based on GPU-hours per token.

But the real game-changer is OlmoTrace — an interpretability tool that lets users see exactly how outputs connect to specific training data. For researchers investigating bias, failure modes, or data contamination, this is unprecedented. The Think variant adds explicit reasoning chains for math, code, and general problem solving, matching Qwen 3 and Gemma 3 at the 32B scale.

Why it matters: OLMo 3 proves that full transparency doesn’t mean sacrificing performance. If you’re building anything where auditability matters — healthcare, legal, finance — this should be your starting point.

2. Kimi K2 Thinking — The $4.6M Agentic Sleeper Hit

Moonshot AI dropped Kimi K2 Thinking on November 6, and the numbers are staggering. This 1-trillion-parameter Mixture-of-Experts model was trained for just $4.6 million — a fraction of what comparable proprietary models cost — and it can autonomously execute 200 to 300 sequential tool calls without human intervention.

K2 Thinking isn’t just another reasoning model. It’s the first open source “thinking agent” — a model designed from the ground up with a “model as agent” philosophy. It reasons coherently across hundreds of steps, auto-selects from available tools, and generates detailed reasoning chains before delivering solutions.
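To make the “model as agent” idea concrete, here is a minimal sketch of a capped tool-calling loop. It is not Moonshot AI’s implementation or API: `stub_model`, the two toy tools, and `AgentState` are hypothetical stand-ins, and the only number taken from the article is the 300-call ceiling.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

MAX_TOOL_CALLS = 300  # upper end of the 200-300 range quoted in the article

# Hypothetical toy tools; a real agent would expose search, code execution, etc.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add_one": lambda x: str(int(x) + 1),
    "double": lambda x: str(int(x) * 2),
}


@dataclass
class AgentState:
    goal: int
    value: str = "1"
    trace: List[Tuple[str, str]] = field(default_factory=list)


def stub_model(state: AgentState) -> Optional[str]:
    """Stand-in for the model: choose the next tool, or None when finished."""
    current = int(state.value)
    if current >= state.goal:
        return None
    return "double" if current * 2 <= state.goal else "add_one"


def run_agent(goal: int, max_calls: int = MAX_TOOL_CALLS) -> AgentState:
    """Loop: ask the model for a tool, run it, record the step, repeat."""
    state = AgentState(goal=goal)
    for _ in range(max_calls):
        tool_name = stub_model(state)
        if tool_name is None:  # the model decided it is done
            break
        state.value = TOOLS[tool_name](state.value)
        state.trace.append((tool_name, state.value))
    return state
```

Calling `run_agent(10)` walks from 1 to 10 in five tool calls. The hard cap matters because it bounds cost and prevents runaway loops even if the model never signals completion.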

The benchmark results back up the hype: 44.9% on HLE (with tools), 60.2% on BrowseComp, and 71.3% on SWE-Bench Verified. That SWE-Bench score puts it in direct competition with top closed-source models for real-world software engineering tasks. For developers building autonomous AI workflows, K2 Thinking is the open source option they’ve been waiting for.

3. DeepSeek Math V2 — Gold Medal Math for $294K

DeepSeek continued its tradition of punching far above its weight class. Released on November 25, DeepSeek Math V2 reached gold-medal-level performance on the IMO 2025 problems, scoring 35 out of 42 points, and scored 118 out of 120 on the Putnam 2024 exam. The training cost? Just $294,000.

The model introduces a hybrid thinking/non-thinking mode that lets users control computational effort — essentially a “thinking budget” that balances accuracy against inference cost. This is huge for production deployments where you need Olympic-level math reasoning on some queries but fast, cheap responses on others.
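One way a production service might apply a “thinking budget” is to route each query to a mode before calling the model. The sketch below is purely illustrative: `InferencePlan`, `plan_for`, the keyword list, and the 8,192-token default are all assumptions, not part of any DeepSeek API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferencePlan:
    thinking: bool             # whether to request explicit reasoning
    max_reasoning_tokens: int  # budget spent on reasoning, 0 if disabled


# Hypothetical markers that suggest a query deserves the expensive mode.
HARD_MARKERS = ("prove", "show that", "olympiad", "putnam")


def plan_for(query: str, budget_tokens: int = 8192) -> InferencePlan:
    """Pick thinking mode for hard-looking queries, fast mode otherwise."""
    text = query.lower()
    if any(marker in text for marker in HARD_MARKERS):
        return InferencePlan(thinking=True, max_reasoning_tokens=budget_tokens)
    return InferencePlan(thinking=False, max_reasoning_tokens=0)
```

A keyword heuristic is the crudest possible router; a real deployment would more likely use a small classifier or let the caller set the budget explicitly.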

Released under the MIT license with full weights on Hugging Face, DeepSeek Math V2 further cements the argument that open source AI doesn’t just compete with proprietary models — it wins gold medals against them. Literally.

4. IBM Granite 4.0 Nano — Enterprise AI in Your Browser

IBM took a different approach with Granite 4.0 Nano, targeting the edge and on-device frontier. The Nano family ranges from 350 million to 1.5 billion parameters, released under the Apache 2.0 license — meaning unrestricted commercial use, modification, and redistribution.

What makes Granite 4.0 Nano special is the hybrid SSM (State Space Model) + Transformer architecture based on Mamba-2. The 350M variant runs comfortably in a web browser. The 1.5B models need just 6-8GB of VRAM. Both inherit capabilities from the full Granite 4.0 training recipe on 15+ trillion tokens.
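For a rough sense of where these memory figures come from, the weights-only footprint is simply parameter count times bytes per parameter. The helper below is a back-of-the-envelope sketch; the function name and the precision table are assumptions for illustration, and it ignores activations, cache state, and runtime overhead.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for name, params in [("350M", 350e6), ("1.5B", 1.5e9)]:
        for dtype in ("fp16", "int8"):
            print(f"{name} @ {dtype}: {weight_memory_gb(params, dtype):.2f} GB")
```

At 16-bit precision the 1.5B weights come to about 3 GB and the 350M weights to about 0.7 GB, so a 6-8GB figure for the larger model leaves headroom for everything beyond the weights.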

For enterprise users, IBM added cryptographic signing, ISO 42001 certification, and full provenance tracking. This isn’t a research toy — it’s a production-ready, privacy-first AI that runs on smartphones and laptops without ever touching a cloud server. With Black Friday driving edge AI adoption conversations, Granite 4.0 Nano is perfectly timed.

5. Qwen 2.5 — The Quiet Multilingual Juggernaut

Alibaba’s Qwen 2.5 family didn’t have a single dramatic launch date — it was a rolling series of updates throughout November that collectively made it the most downloaded open source model family on Hugging Face. The 72B Instruct variant leads multilingual benchmarks, with a 25-35% month-over-month jump in downloads.

Qwen 2.5’s strength is breadth. The family covers dense and Mixture-of-Experts architectures, vision and omni models, coding specialists, and embedding/reranker models. For multilingual applications — especially CJK languages — Qwen 2.5 has become the default choice. Internal testing showed it solved more word problems cleanly than expected with chain-of-thought disabled, and hallucinated units less frequently than competitors.

The GitHub star growth has been the steepest since late summer, and enterprise adoption is accelerating. If you’re building anything multilingual and need an open source foundation, Qwen 2.5 72B is the benchmark to beat.

The Bigger Picture: How November 2025 Changed Open Source AI

Looking at these five releases together, a clear pattern emerges. Open source AI in November 2025 isn’t just catching up to proprietary models — it’s differentiating in ways closed models can’t match:

- Full transparency (OLMo 3) — not just weights, but the entire pipeline

- Agentic capabilities (Kimi K2 Thinking) — 300 tool calls, autonomous workflows

- Cost efficiency (DeepSeek Math V2) — gold medal performance for under $300K

- Edge deployment (Granite 4.0 Nano) — enterprise AI in a browser

- Multilingual dominance (Qwen 2.5) — the broadest model family available

For developers and organizations evaluating their AI stack this Black Friday season, the message is clear: the best open source AI models aren’t just free alternatives. They’re often the better technical choice. The question isn’t whether to adopt open source AI — it’s which of these models fits your use case best.

