A single NVIDIA GPU now costs over $60,000, and you still can’t get one until mid-2026. The AI chip landscape in 2025 has turned into the most consequential hardware war in a generation. One company controls over 80% of the market, a challenger just shipped a chip with more memory than NVIDIA’s B200, a former giant is in freefall, and the world’s biggest tech companies are building their own silicon from scratch. Here’s where every major player stands in October 2025, and what it means for anyone building with AI.

NVIDIA: Blackwell B200 Cements an Unshakeable Lead

Let’s start with the elephant in the room. NVIDIA commands 80–90% of the AI accelerator market as of October 2025, and the Blackwell B200 architecture is a big reason why that number isn’t shrinking anytime soon.

The B200 packs 208 billion transistors and 192GB of HBM3e memory onto a single GPU. Training throughput is 3.7–4x that of the H100, which was already the industry benchmark. Inference gains are even more dramatic, thanks to the second-generation Transformer Engine and native FP4 support. But the most telling metric might be the simplest one: pricing sits at $60,000–$70,000 per GPU, and NVIDIA is sold out through mid-2026. Microsoft, Oracle, Meta, and the other major hyperscalers have placed orders in the hundreds of thousands.

Meanwhile, the H100 and H200 remain the workhorses of production AI infrastructure. The vast majority of cloud AI workloads today still run on Hopper-generation chips. Used H100s trade for $20,000+ on secondary markets — a GPU that launched at $30,000 just two years ago. NVIDIA’s real moat, though, isn’t any single chip. It’s CUDA. Over a decade of software ecosystem investment means that switching costs remain astronomically high, even when competitors offer compelling hardware.

AMD MI355X: The First Real Contender in the 2025 AI Chip Landscape

AMD has been threatening to challenge NVIDIA in AI for years. In October 2025, that threat finally became real. The MI355X reached general availability this month, and the numbers are hard to ignore.

The headline spec: 288GB of HBM3E memory with 8 TB/s bandwidth. That’s 50% more memory than NVIDIA’s B200. For large language model inference, where memory capacity directly determines what models you can serve and at what batch sizes, this advantage is massive. In MLPerf benchmarks, the MI355X achieves near-parity with NVIDIA on most training and inference workloads — something no AMD chip has come close to before.
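To make the capacity argument concrete, here is a rough sketch of how much accelerator memory a model's weights alone would need at different precisions. The model sizes and helper names are illustrative assumptions, not figures from AMD or NVIDIA, and the estimate ignores KV cache, activations, and runtime overhead, all of which push real requirements higher.

```python
# Back-of-envelope check: do a model's weights fit on one accelerator?
# Capacities come from the article; model sizes are hypothetical examples.

BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}

HBM_CAPACITY_GB = {"B200": 192, "MI355X": 288}


def weight_footprint_gb(params_billions: float, precision: str) -> float:
    """Memory for weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits_on_one_gpu(params_billions: float, precision: str, gpu: str) -> bool:
    """True if the weights alone fit; KV cache and activations are ignored."""
    return weight_footprint_gb(params_billions, precision) <= HBM_CAPACITY_GB[gpu]


if __name__ == "__main__":
    for size in (70, 120, 200):  # hypothetical model sizes, billions of params
        for gpu in HBM_CAPACITY_GB:
            need = weight_footprint_gb(size, "fp16")
            print(f"{size}B @ fp16 needs {need:.0f} GB -> fits on {gpu}: "
                  f"{fits_on_one_gpu(size, 'fp16', gpu)}")
```

Under these assumptions, a hypothetical 120B-parameter model at FP16 needs about 240GB for weights, which would fit in 288GB but not in 192GB. That is the kind of case where extra capacity lets you avoid splitting a model across two devices.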

The software story has improved dramatically too. ROCm 7, released in September 2025, delivers native MI350/MI325X support, FP4 and FP8 format optimizations, and claims 3.5x inference and 3x training improvements over ROCm 6. Day-one integration with PyTorch and vLLM means developers can actually use these chips without rewriting their entire stack. It’s still not CUDA-level polish, but the gap has narrowed considerably.

The business momentum backs it up. AMD’s data center revenue hit $4.3 billion in Q3 2025, and the company has secured a partnership with OpenAI. For cost-conscious enterprises and cloud providers looking for leverage against NVIDIA’s pricing power, AMD is now a genuinely viable option.

Intel Gaudi 3: A Cautionary Tale

Intel’s AI accelerator story in 2025 is one of missed opportunities and retreating ambitions. The Gaudi 3 shipment target was slashed by roughly 30%, dropping from 300,000–350,000 units to 200,000–250,000. The company also withdrew its $500 million sales forecast entirely, a stunning admission for what was supposed to be Intel’s flagship AI product.
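The 30% figure is a rounded one. As a quick check, the sketch below computes the cut at each end of the reported range; the function name is just an illustrative helper.

```python
# Percentage cut implied by the old and new Gaudi 3 shipment targets.
# Unit counts are the ranges reported in the article.

def percent_cut(before: int, after: int) -> float:
    """Reduction from `before` to `after`, as a percentage of `before`."""
    return (before - after) / before * 100


old_low, old_high = 300_000, 350_000
new_low, new_high = 200_000, 250_000

cut_low_end = percent_cut(old_low, new_low)      # low end of each range
cut_high_end = percent_cut(old_high, new_high)   # high end of each range

if __name__ == "__main__":
    print(f"Low end:  {cut_low_end:.1f}% cut")
    print(f"High end: {cut_high_end:.1f}% cut")
```

With these numbers the cut works out to roughly 29% to 33% depending on which end of the range you compare, which is consistent with the headline figure.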

The internal situation is even more concerning. The co-founders of Habana Labs — the Israeli startup Intel acquired in 2019 specifically for AI chip development — left Intel in September 2025. The next-generation Falcon Shores chip was scrapped entirely. Intel’s share of the AI accelerator market sits at roughly 8.7%, and the trajectory is downward.

The one bright spot is edge AI. Intel’s Lunar Lake NPU delivers 48 TOPS (tera operations per second), making it competitive for on-device AI workloads. Intel may have lost the data center AI war, but with billions of PCs still shipping with Intel silicon, the client-side NPU market remains a meaningful opportunity. It’s a very different business from competing with NVIDIA for hyperscaler contracts, but it’s something.

Custom Silicon: Big Tech Builds Its Own Chips

Perhaps the most significant trend in the 2025 AI chip landscape isn’t about any single company. It’s the collective move by every major tech platform to build custom AI silicon. This is no longer R&D experimentation. It’s production-scale deployment.

Google TPU v5p: Up to 9,000-Chip Pods

Google is the pioneer of custom AI silicon, having deployed TPUs since 2016. The latest TPU v5p can be configured into pods of up to 9,000 chips, creating supercomputing clusters purpose-built for Gemini model training. These are also available to external customers through Google Cloud, providing a genuine NVIDIA-free path for large-scale AI workloads. Google’s decade-plus head start in custom silicon gives it an ecosystem advantage that newer entrants struggle to match.

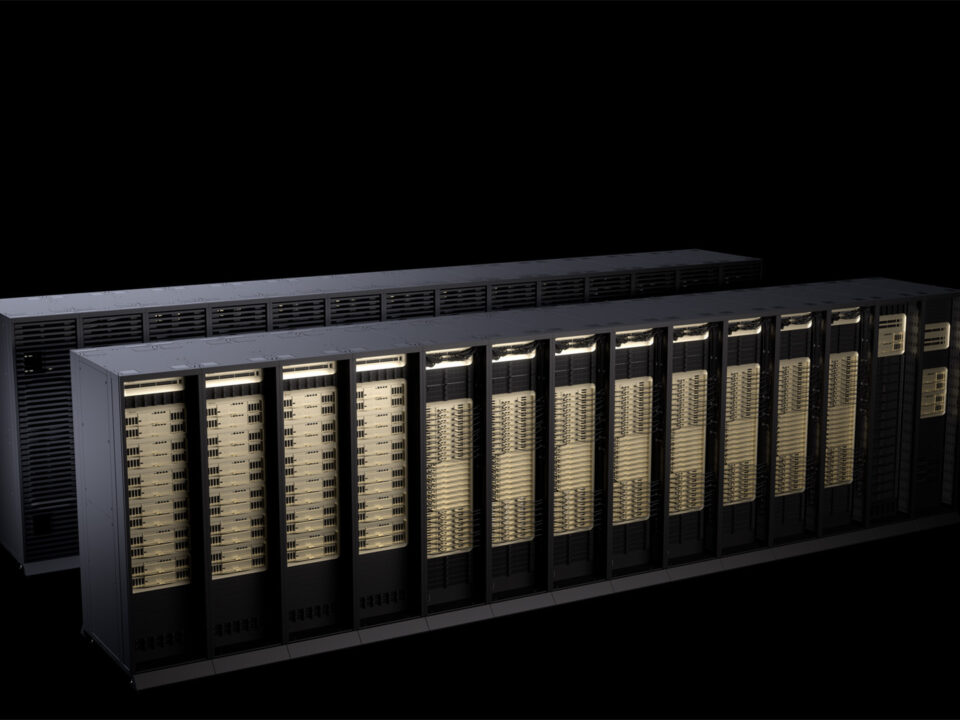

Amazon Trainium2: Project Rainier Goes Live with 500K Chips

Amazon’s Project Rainier activated in October 2025, deploying approximately 500,000 Trainium2 chips at an Indiana data center facility. This massive cluster is dedicated to training Anthropic’s Claude models, with each chip delivering 1.3 PFLOPS at FP8 precision. AWS plans to scale beyond 1 million chips, simultaneously reducing its own NVIDIA dependency while offering customers cheaper AI compute through its cloud platform.
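For a sense of scale, the per-chip and chip-count figures above can be multiplied out into a theoretical cluster peak. This is only a sketch: the helper names are illustrative, and the 40% utilization value is a placeholder assumption, not a reported number for Project Rainier.

```python
# Peak aggregate FP8 throughput for a Trainium2 cluster (article figures).
CHIPS = 500_000
PFLOPS_PER_CHIP_FP8 = 1.3


def aggregate_exaflops(chips: int, pflops_per_chip: float) -> float:
    """Theoretical peak in EFLOPS (1 EFLOPS = 1,000 PFLOPS)."""
    return chips * pflops_per_chip / 1_000


def sustained_exaflops(peak_eflops: float, utilization: float) -> float:
    """Scale peak by an assumed utilization between 0 and 1."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_eflops * utilization


if __name__ == "__main__":
    peak = aggregate_exaflops(CHIPS, PFLOPS_PER_CHIP_FP8)
    # 0.4 is a placeholder assumption, not a measured Rainier number.
    print(f"Peak: {peak:.0f} EFLOPS, at 40% utilization: "
          f"{sustained_exaflops(peak, 0.4):.0f} EFLOPS")
```

The peak comes to about 650 EFLOPS at FP8. Real training runs sustain only a fraction of peak, so the utilization parameter is there to keep the estimate honest.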

Microsoft Maia 100 and Meta MTIA

Microsoft’s Maia 100 chip is currently deployed for internal workloads only, and the next-generation Maia 200 has been delayed. Its market impact remains limited until Microsoft makes it available to Azure customers.

Meta’s MTIA program is more aggressive. The MTIA 300 is already in production for ranking and recommendation workloads, and the roadmap extends through MTIA 400, 450, and 500 in partnership with Broadcom. From the 300 to the 500, HBM bandwidth increases 4.5x and compute increases 25x. At Meta Connect in September, the company unveiled the Ray-Ban Meta Display ($800), which pairs with the Neural Band, an sEMG wristband for gesture control. Wearable AI devices like these ultimately depend on the kind of massive inference infrastructure these custom chips provide.
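The two MTIA roadmap multipliers are worth reading together, because compute is growing much faster than memory bandwidth. A minimal sketch of that ratio, using only the relative figures quoted above (no absolute MTIA specs are assumed):

```python
# How the compute-to-bandwidth balance shifts from MTIA 300 to MTIA 500,
# using only the relative multipliers quoted in the article.
COMPUTE_GROWTH = 25.0
HBM_BANDWIDTH_GROWTH = 4.5


def compute_per_bandwidth_growth(compute_x: float, bandwidth_x: float) -> float:
    """Factor by which compute available per unit of bandwidth increases."""
    return compute_x / bandwidth_x


ratio = compute_per_bandwidth_growth(COMPUTE_GROWTH, HBM_BANDWIDTH_GROWTH)

if __name__ == "__main__":
    print(f"Compute per unit of HBM bandwidth grows about {ratio:.1f}x")
```

If those multipliers hold, a workload would need roughly 5.6x more arithmetic per byte fetched to stay compute-bound on the MTIA 500 rather than bandwidth-bound.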

Apple M5: Redefining On-Device AI

Announced just two days ago on October 15, Apple’s M5 chip takes a fundamentally different approach to AI silicon. Rather than building standalone accelerators for data centers, Apple embedded a Neural Accelerator directly into each GPU core, delivering 4x the peak GPU compute for AI workloads compared to M4. Combined with a 16-core Neural Engine and 153 GB/s unified memory bandwidth, the M5 establishes a new benchmark for on-device AI performance in laptops and tablets.
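Memory bandwidth matters for on-device language models because token generation is often bandwidth-bound. A common rule of thumb, used here as an assumption, is that producing each token requires reading every weight once, so bandwidth divided by model size gives a rough ceiling on single-stream speed. The 8B-parameter, 4-bit model below is a hypothetical example, not an Apple benchmark.

```python
# Upper bound on single-stream decode speed when generation is limited by
# memory bandwidth: each token requires reading all weights once.
M5_BANDWIDTH_GB_S = 153.0  # unified memory bandwidth from the article


def model_size_gb(params_billions: float, bits_per_weight: int) -> float:
    """Weight size in GB for a dense model at the given quantization."""
    return params_billions * bits_per_weight / 8


def max_tokens_per_second(bandwidth_gb_s: float, size_gb: float) -> float:
    """Bandwidth-bound ceiling; real throughput will be lower."""
    return bandwidth_gb_s / size_gb


if __name__ == "__main__":
    # Hypothetical example: an 8B-parameter model quantized to 4 bits.
    size = model_size_gb(8, 4)
    print(f"{size:.1f} GB of weights -> at most "
          f"{max_tokens_per_second(M5_BANDWIDTH_GB_S, size):.1f} tokens/s")
```

For that hypothetical model the ceiling is about 38 tokens per second. Actual results depend on the runtime, the quantization scheme, and how much bandwidth the rest of the system is using.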

This matters immediately for creative professionals. Adobe MAX 2025 (October 28–30) will debut Firefly Image Model 5 with native 4MP generation, AI Audio and Video tools, AI Assistants in Photoshop and Express, and Project Moonlight — a unified AI system across Adobe’s suite. Running these features locally rather than in the cloud requires exactly the kind of neural processing power the M5 delivers.

The Scoreboard: Who’s Winning Each Segment

Let’s break down the competitive landscape across four categories as of October 2025.

Data Center Training: NVIDIA remains dominant. The B200’s raw performance and CUDA’s ecosystem lock-in make it nearly irreplaceable for frontier model training. Google’s TPU v5p and Amazon’s Trainium2 are viable for their own workloads, but external developers still gravitate to NVIDIA.

Data Center Inference: This is where the landscape is shifting fastest. AMD’s MI355X, with its 288GB memory advantage and improving ROCm software, offers genuine cost-performance advantages for serving large models. Meta’s MTIA is rapidly replacing GPUs for its own recommendation systems. For enterprises optimizing inference costs, the NVIDIA monopoly is cracking.

Edge and On-Device AI: Apple’s M5 and Intel’s Lunar Lake NPU (48 TOPS) compete here. As demand explodes for local AI execution — avoiding cloud costs and latency — this segment’s strategic importance is growing rapidly. Apple’s ecosystem integration gives it a clear advantage in consumer devices.

Custom Silicon Overall: 2025 marks the transition from experimentation to production. Amazon’s 500K-chip deployment, Google’s 9,000-chip pods, and Meta’s four-generation roadmap represent structural challenges to NVIDIA’s dominance. These chips won’t replace NVIDIA for general-purpose AI development anytime soon, but they’re optimized for the specific workloads that consume the most compute — and those workloads are growing fastest.

What This Means for You

The AI chip war of 2025 isn’t an abstract industry story — it directly affects the cost, performance, and availability of every AI product and service you use. NVIDIA’s supply constraints mean higher cloud computing costs for everyone. AMD’s emergence means those costs will eventually come down. Custom silicon means that Google Cloud, AWS, and Azure will increasingly offer non-NVIDIA options that are cheaper and sometimes faster for specific workloads.

For developers and enterprises building AI applications, the days of defaulting to NVIDIA for everything are ending. The options are multiplying: an AMD MI355X-based inference pipeline on ROCm 7, AWS Trainium2 instances for cost-efficient training, or Apple M5 for on-device AI processing. More competition means lower prices, more innovation, and better tools for everyone building with AI. The chip war is just getting started, and we’re all going to benefit from it.
