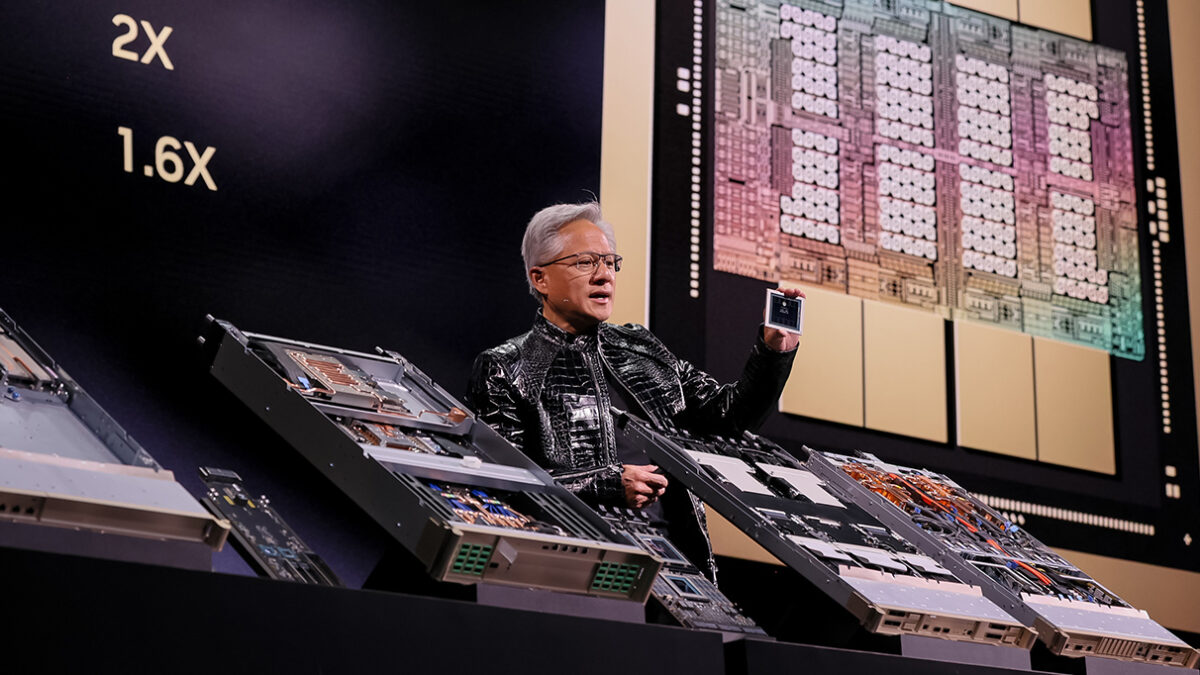

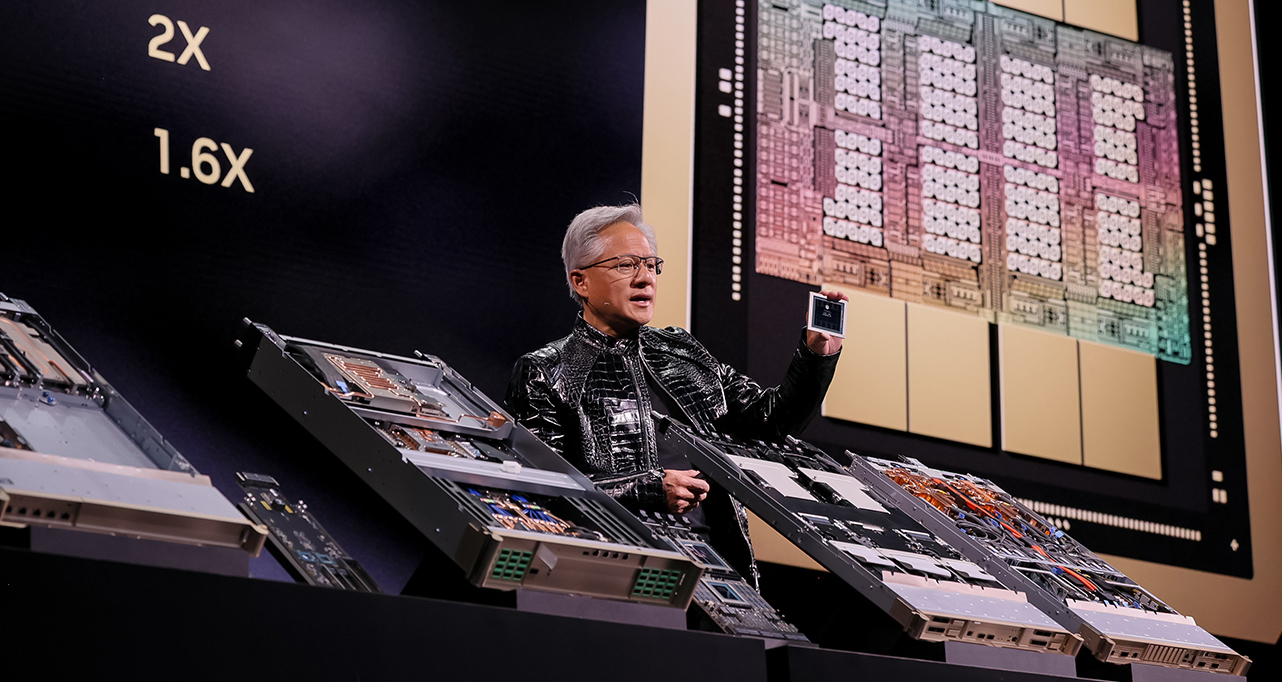

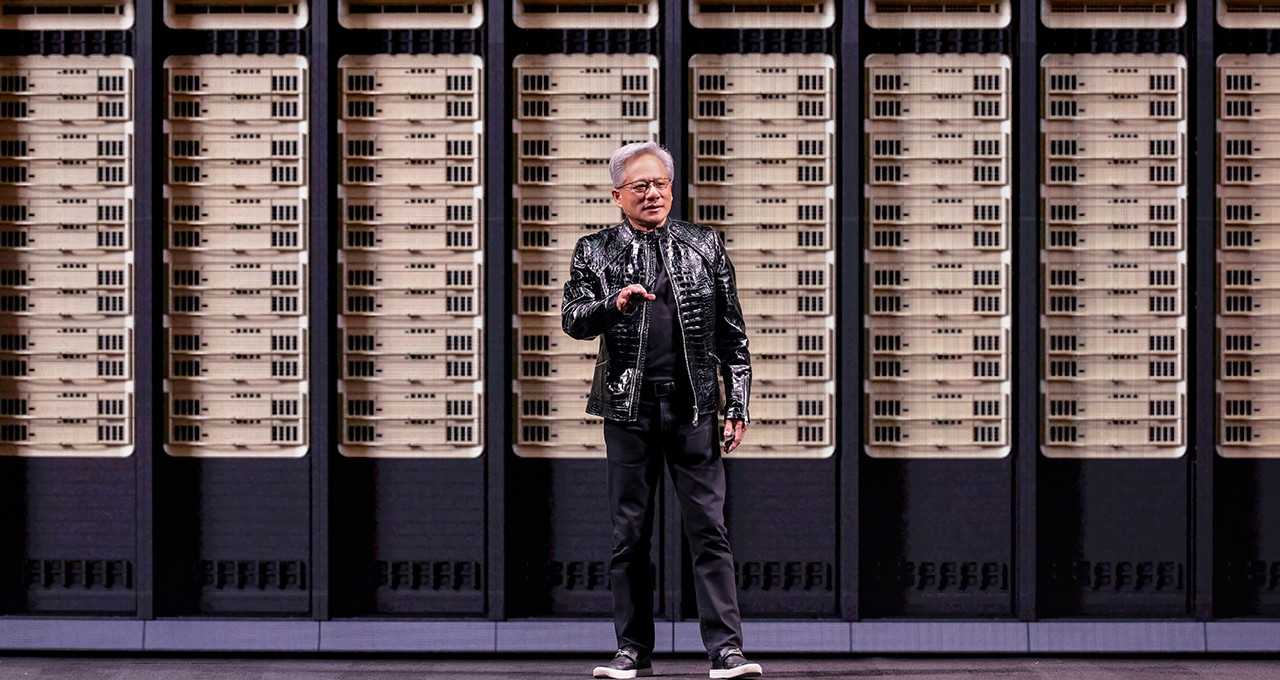

January 5, 2026

$1 trillion in AI hardware sales by 2027. That’s not a wild prediction from some anonymous analyst; it’s the number Jensen Huang himself is reportedly backing. And on January 5th, the NVIDIA CEO takes the stage at CES 2026 in Las Vegas, where the NVIDIA CES 2026 keynote is shaping up to be far more than a product showcase. Based on leaks, insider reports, and official teasers, here’s everything we expect to see, and why it matters.

The Vera Rubin Platform — Blackwell’s Successor Is Coming

The headline announcement at the NVIDIA CES 2026 keynote is widely expected to be the Vera Rubin platform — the successor to Blackwell and NVIDIA’s most ambitious AI chip architecture to date. According to industry insiders, this 6-chip AI platform will feature 88 Olympus cores and pack a staggering 227 billion transistors.

The performance numbers, if accurate, are jaw-dropping. Sources suggest 50 petaflops in NVFP4 precision, with token processing costs reduced to one-tenth of Blackwell’s. That 10x cost reduction isn’t just a spec sheet flex — it fundamentally changes the economics of enterprise AI deployment. When inference costs drop by an order of magnitude, use cases that were financially unviable suddenly become mainstream.
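To make the "one-tenth" figure concrete, here is a minimal back-of-the-envelope sketch. The workload size and the baseline price per million tokens are hypothetical values picked purely for illustration; only the 10x ratio comes from the rumored specs.

```python
def monthly_inference_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Return the monthly bill in dollars for a given token volume."""
    return tokens_per_month / 1_000_000 * price_per_million


# Hypothetical workload and baseline price (assumptions, not reported figures).
TOKENS_PER_MONTH = 5_000_000_000  # 5 billion tokens per month
BASELINE_PRICE = 2.00             # assumed dollars per million tokens on Blackwell
RUMORED_REDUCTION = 10            # the rumored "one-tenth" token cost

baseline = monthly_inference_cost(TOKENS_PER_MONTH, BASELINE_PRICE)
reduced = monthly_inference_cost(TOKENS_PER_MONTH, BASELINE_PRICE / RUMORED_REDUCTION)

print(f"Baseline: ${baseline:,.0f}/month")
print(f"At one-tenth token cost: ${reduced:,.0f}/month")
```

Whatever the real prices turn out to be, the bill scales linearly with price per token, so a workload that was out of budget at the assumed baseline would fit in a tenth of that spend.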

Manufacturing and assembly are getting a radical overhaul too. Where Blackwell systems reportedly took about 2 hours to assemble, Vera Rubin is rumored to cut that down to just 5 minutes. The cooling architecture shifts to 80% liquid cooling, optimizing data center efficiency at scale. These aren’t just engineering improvements — they’re supply chain and deployment speed advantages that matter when you’re racing to build AI infrastructure. NVIDIA’s official blog has already been dropping hints about the CES special presentation.

DLSS 4.5 — The Gift Gamers Didn’t Expect

Here’s the twist for consumer-focused audiences: no new consumer GPUs are expected at the main keynote. But don’t close the tab yet. NVIDIA is rumored to unveil DLSS 4.5, and the upgrade is substantial. The key feature is Dynamic Multi Frame Generation, which introduces a 6X MFG (Multi Frame Generation) mode alongside a 2nd-generation transformer model for Super Resolution.

What does this mean in practice? Existing RTX 40 and 50 series owners could see dramatic frame rate improvements through a software update alone — no new hardware required. NVIDIA’s strategy of delivering performance gains through software rather than forcing GPU upgrades is a smart play that keeps the installed base loyal while the company focuses its silicon on the data center gold rush.
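A rough sketch of the frame-rate arithmetic follows. It assumes that a "6X" mode means each rendered frame yields six displayed frames (one rendered plus five generated), by analogy with how existing Multi Frame Generation modes are described, and it ignores the overhead of generating frames. Both are assumptions, not confirmed details of DLSS 4.5.

```python
def displayed_fps(rendered_fps: float, mfg_multiplier: int) -> float:
    """Displayed frame rate if each rendered frame yields `mfg_multiplier` frames.

    Assumes an ideal case with no frame-generation overhead.
    """
    if mfg_multiplier < 1:
        raise ValueError("multiplier must be at least 1")
    return rendered_fps * mfg_multiplier


def generated_share(mfg_multiplier: int) -> float:
    """Fraction of displayed frames that are generated rather than rendered."""
    if mfg_multiplier < 1:
        raise ValueError("multiplier must be at least 1")
    return (mfg_multiplier - 1) / mfg_multiplier


# Hypothetical example: a game rendering 40 frames per second natively.
for multiplier in (2, 4, 6):
    fps = displayed_fps(40, multiplier)
    share = generated_share(multiplier)
    print(f"{multiplier}X: {fps:.0f} fps displayed, {share:.0%} of frames generated")
```

Under these assumptions, five of every six displayed frames in a 6X mode would be generated, which is why image quality and latency will matter as much as the headline frame rate.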

The 2nd-generation transformer model for Super Resolution is particularly interesting from a technical standpoint. DLSS 4 already replaced CNN-based upscaling with a transformer model; compared with CNNs, transformer architectures can capture more complex spatial relationships, resulting in more accurate texture reconstruction and detail preservation. For gamers pushing 4K resolution, a second-generation model could mean a further improvement in visual fidelity without the traditional performance penalty. It’s the kind of advancement that makes you wonder whether NVIDIA’s AI research division is now delivering more value to gamers than its hardware engineering team.

6 Open Domain Models — NVIDIA’s AI Model Strategy Evolves

Perhaps the most strategically significant announcement expected at CES 2026 is NVIDIA’s release of six domain-specific open models. This signals a deliberate shift from general-purpose AI to vertical specialization:

- Clara — Healthcare: medical imaging analysis and drug discovery acceleration

- Earth-2 — Climate simulation: digital twin Earth for weather and climate modeling

- Nemotron — Reasoning: enterprise AI agent capabilities

- Cosmos — Robotics: physical world simulation and understanding

- GR00T — Embodied AI: foundation model for humanoid robots

- Alpamayo — Autonomous driving: open reasoning model for self-driving

The Alpamayo R1 model deserves special attention. According to Tom’s Hardware, this open reasoning model for autonomous driving is expected to be integrated into the Mercedes-Benz CLA. When a model designed for self-driving reasoning ships in a production vehicle from a major automaker, we’re looking at a genuine inflection point — not a demo, not a concept, but real-world deployment.

The decision to make these models open is equally significant. NVIDIA is positioning itself not just as a chip vendor, but as a full-stack AI platform provider. Open models drive adoption, adoption drives compute demand, compute demand sells GPUs. It’s a flywheel strategy, and a potentially devastating one for competitors.

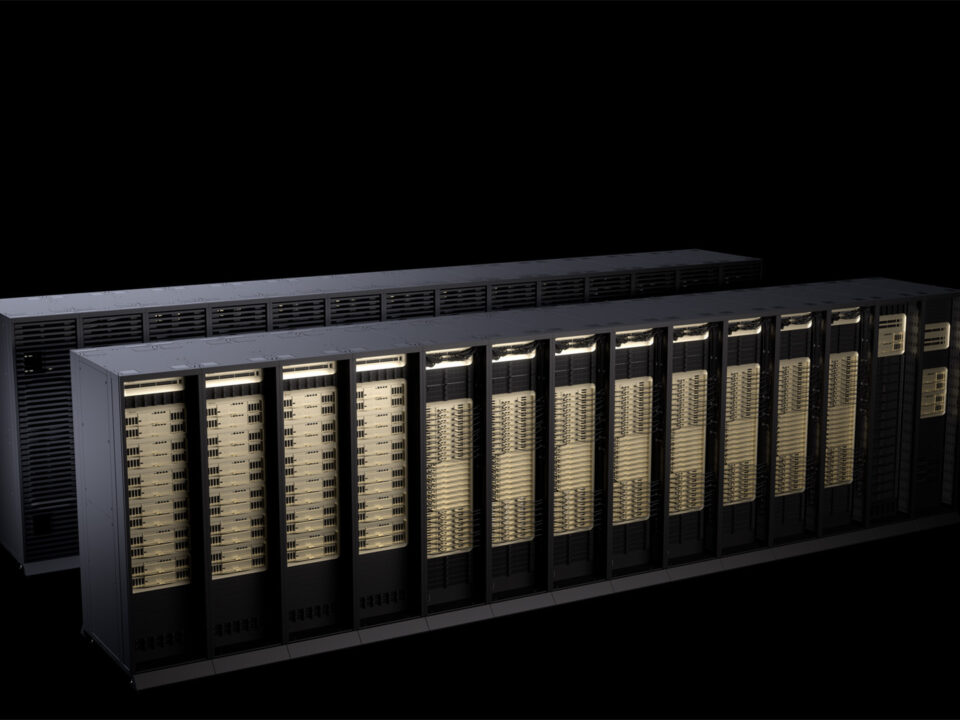

DGX Spark — Desktop-Scale AI Research Gets a Major Boost

Not everything at the NVIDIA CES 2026 keynote is expected to be data-center scale. The DGX Spark desktop system is rumored to receive a 2.6x performance improvement over its predecessor. For individual researchers, small labs, and startups that can’t afford (or don’t need) cloud-scale infrastructure, this brings serious AI capability to the desktop.

The trend is clear: AI development is democratizing at the hardware level. When a desktop system can handle workloads that required a server rack two years ago, the barrier to entry for AI research and development drops dramatically. NVIDIA knows that today’s startup researcher is tomorrow’s enterprise customer.

Consider the implications: a university research lab that currently relies on expensive cloud compute credits could potentially run their experiments locally, iterating faster and at lower cost. Independent AI developers could prototype and test models without monthly cloud bills eating into their budgets. The DGX Spark isn’t competing with NVIDIA’s data center products — it’s creating the next generation of customers who will eventually need those larger systems.

Partnership Ecosystem — Siemens, Boston Dynamics, Franka, Mercedes

No NVIDIA keynote is complete without the partner parade, and CES 2026 is expected to feature some heavy hitters. Reports suggest live demonstrations with Siemens (industrial automation expansion), Boston Dynamics and Franka (physical AI and robotics), and Mercedes-Benz (autonomous driving integration).

The Boston Dynamics and Franka collaborations are particularly noteworthy. These partnerships would represent some of the first large-scale implementations of NVIDIA’s GR00T platform and Cosmos simulation model in actual physical robots. Robots that understand the physical world, learn in simulation, and execute in reality — this is Jensen Huang’s “physical AI” thesis coming to life. As TechRadar’s coverage notes, the AI and robotics focus at this year’s CES is unprecedented.

The Siemens partnership expansion is equally telling. Industrial automation powered by AI represents one of the largest addressable markets in the world. When NVIDIA’s AI chips are embedded in manufacturing lines, supply chains, and industrial processes, the company’s reach extends far beyond the tech sector into the physical economy. This is NVIDIA’s play to become as essential to industrial infrastructure as Intel once was to personal computing.

The $1 Trillion Question — Where AI Hardware Is Headed

Jensen Huang reportedly expects $1 trillion in AI hardware sales through 2027. With model parameters growing 10x annually and the global tech industry shifting an estimated $100 trillion in investments toward AI, the demand for compute infrastructure isn’t slowing — it’s accelerating. The Vera Rubin platform’s 10x token cost reduction provides the economic foundation that justifies this massive investment cycle.

It’s worth noting that consumer GPU announcements will likely come through a separate GeForce On community update rather than the main keynote. The fact that NVIDIA is dedicating its CES main stage entirely to enterprise AI and infrastructure tells you everything about where the company’s strategic priorities lie in 2026.

To put the scale in perspective: if model parameters really are growing 10x year-over-year, compute capacity has to grow at a comparable exponential pace. Every new foundation model, every enterprise AI deployment, every autonomous vehicle on the road needs more silicon. The companies that control the compute layer control the AI economy, and right now NVIDIA is estimated to control roughly 80% of the AI training chip market. The Vera Rubin platform is designed to extend and deepen that dominance for the next generation.
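As a quick illustration of how fast 10x annual growth compounds, the sketch below projects a model size forward a few years. The starting size of one trillion parameters is a hypothetical round number, and the 10x rate is the reported figure taken at face value.

```python
def projected_parameters(start: float, growth_per_year: float, years: int) -> float:
    """Compound a starting parameter count by a fixed yearly growth factor."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return start * growth_per_year ** years


START_PARAMS = 1e12  # hypothetical starting point: one trillion parameters
GROWTH = 10          # reported 10x year-over-year growth, taken at face value

for year in range(4):
    params = projected_parameters(START_PARAMS, GROWTH, year)
    print(f"Year {year}: {params:.0e} parameters")
```

Three years of 10x growth is a 1,000x increase, which helps explain why a one-time 10x drop in token cost is necessary but does not end the need for more hardware.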

What to Watch at the NVIDIA CES 2026 Keynote

Here’s a quick cheat sheet for the NVIDIA CES 2026 keynote — the key announcements to watch for:

- Vera Rubin platform: Rumored performance, cost, and assembly-time gains over Blackwell

- 6 open domain models: The shift from general-purpose to specialized AI

- DLSS 4.5: Free performance gains for existing RTX owners

- Alpamayo R1 + Mercedes-Benz: Autonomous driving’s new paradigm

- DGX Spark 2.6x upgrade: Desktop-scale AI research gets serious

- Physical AI partnerships: Boston Dynamics, Franka, Siemens on stage

- $1 trillion projection: The scale and direction of AI infrastructure investment

When Jensen Huang steps onto that Las Vegas stage on January 5th, we’ll be watching the opening chapter of AI’s next era. The leaks and rumors alone paint a picture of transformation that extends from data centers to desktops, from healthcare to highways. The real question isn’t what NVIDIA will announce — it’s whether the rest of the industry can keep up. CES 2026 is shaping up to be the defining event that sets the direction for the entire tech landscape in the year ahead.
