
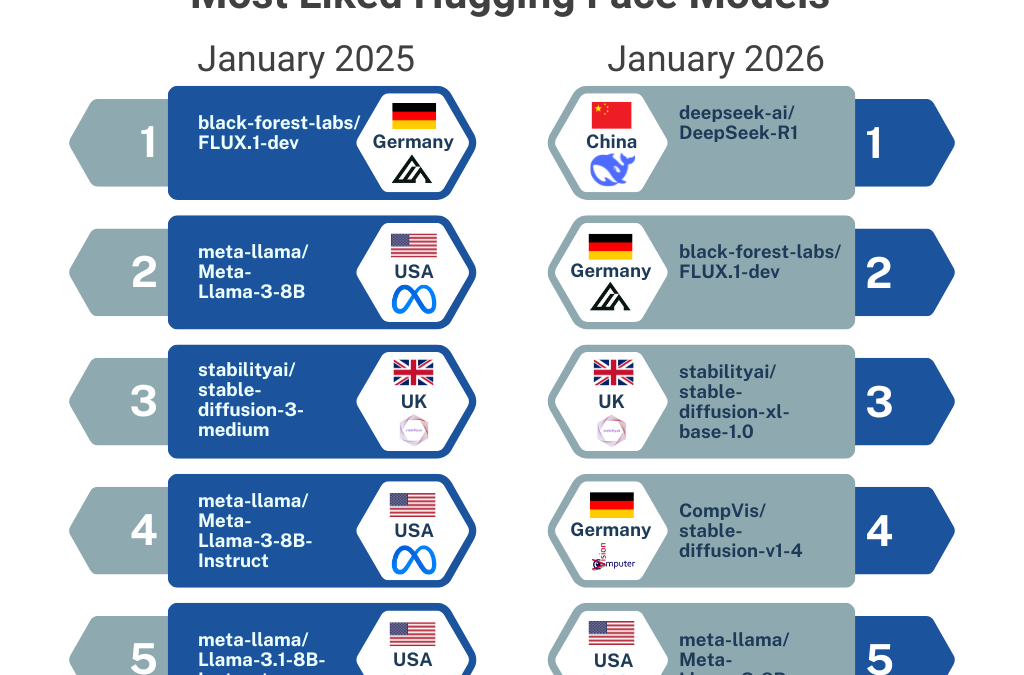

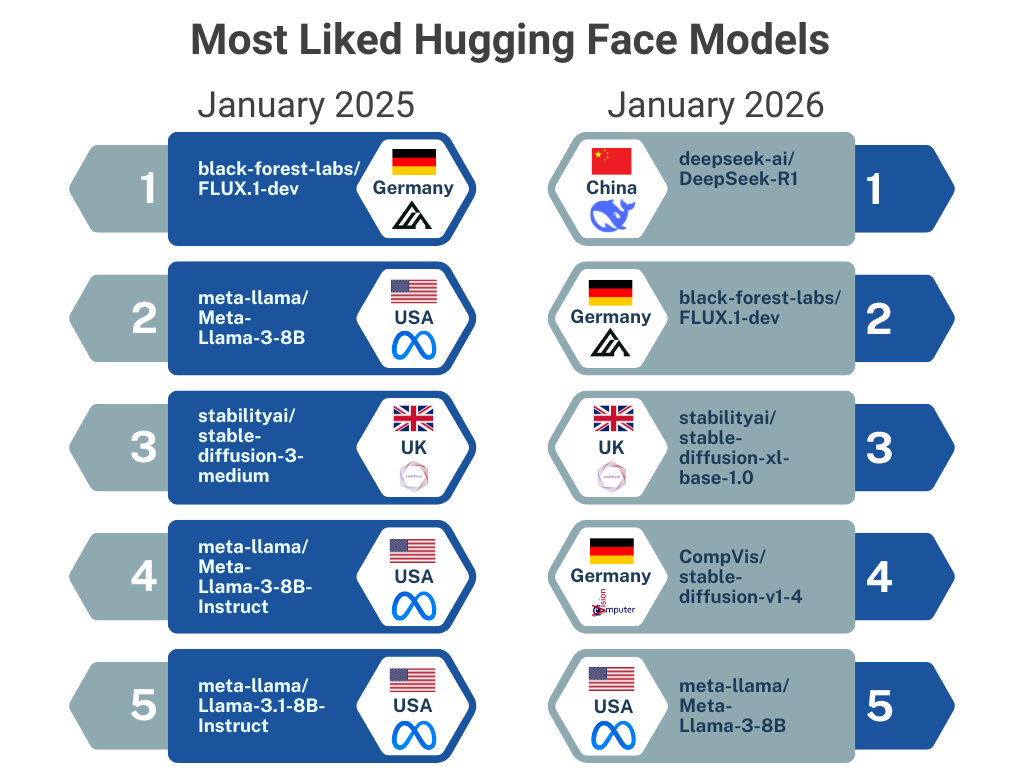

January 23, 2026

One year ago, DeepSeek R1 dropped like a grenade into the open-source AI world. Today, the Hugging Face open model rankings 2026 tell a story nobody predicted: Chinese AI labs now command roughly 15% of global model share, up from barely 1% in late 2024. The leaderboard’s top spots? All occupied by descendants of a single technique that didn’t change a single model weight. Let’s unpack what happened.

The DeepSeek Moment: One Year Later

On January 20, 2026, Hugging Face published a retrospective blog post titled “One Year Since the DeepSeek Moment,” and the numbers are striking. DeepSeek R1, released exactly one year prior, has become the most liked model in Hugging Face history. But the real story isn’t about a single model. It’s about what that model triggered across an entire ecosystem.

When DeepSeek open-sourced its R1 reasoning model in January 2025, it proved that a relatively cost-efficient approach (the DeepSeek-V3 base model was trained for roughly $5.5 million) could rival proprietary giants. That signal was heard loud and clear in Beijing, Shenzhen, and Hangzhou. Within twelve months, the ripple effects reshaped the Hugging Face open model rankings 2026 in ways that make the old US-dominated leaderboard look like ancient history.

Qwen’s Staggering Rise: 700M+ Downloads and 113K Derivatives

If DeepSeek lit the fuse, Alibaba’s Qwen family built the rocket. As of January 2026, Qwen has surpassed 700 million downloads on Hugging Face, making it by far the most downloaded open model family on the platform. Even more telling: over 113,000 derivative models have been built on top of Qwen checkpoints. That’s not just popularity — it’s ecosystem dominance.

To put this in perspective, Qwen has become to open-source LLMs what Linux became to operating systems: the base layer that everyone else builds on. Fine-tuners, researchers, startups, and enterprises worldwide are choosing Qwen as their foundation. The derivative count alone tells you that Qwen isn’t just being downloaded — it’s being actively extended, adapted, and deployed in production.

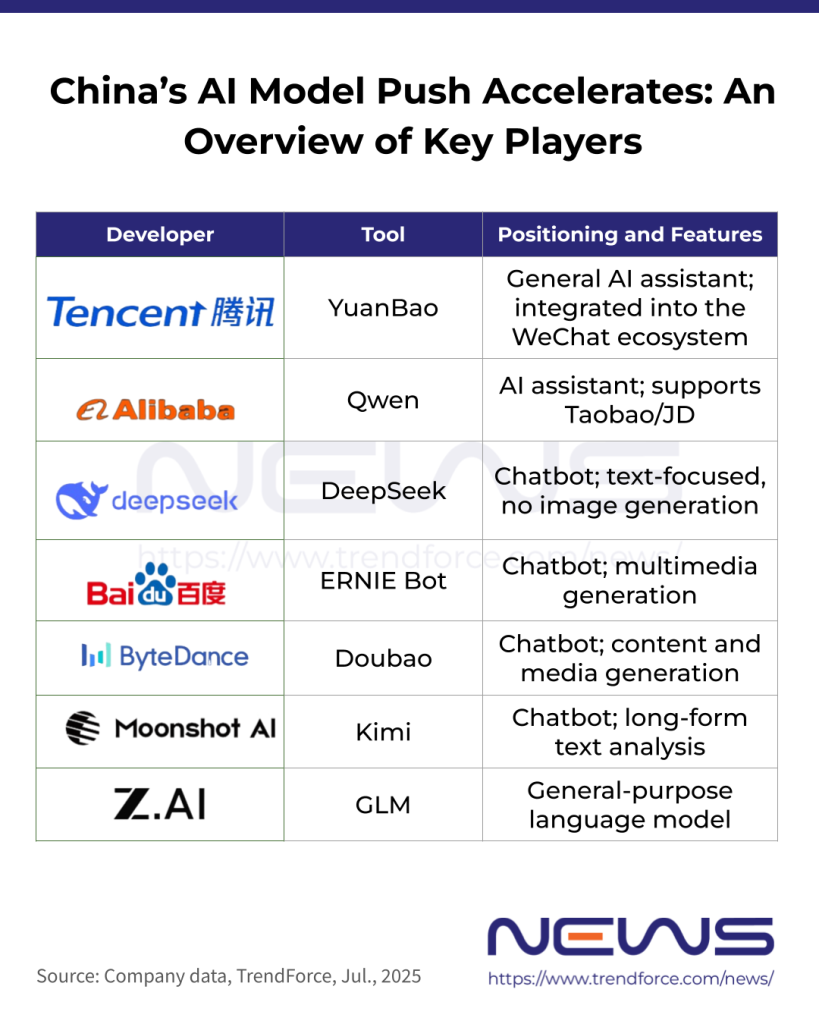

The Chinese AI Surge: From 1% to 15% Global Share

According to TrendForce’s January 2026 report, Chinese AI models hit approximately 15% of global share by November 2025 — a 15x increase from the roughly 1% they held in late 2024. That’s one of the fastest market share shifts in AI history.

The growth wasn’t limited to established players. Hugging Face’s data reveals some jaw-dropping trajectories:

- Baidu went from zero to over 100 model releases on Hugging Face

- ByteDance and Tencent saw 8-9x growth in their Hugging Face presence

- DeepSeek itself continued to push boundaries with its open-source philosophy

- Alibaba’s Qwen became the single most downloaded model family globally

What’s driving this? A combination of government policy encouraging open-source AI, fierce domestic competition, and the realization that open models serve as powerful developer acquisition channels. When your model is the one everyone fine-tunes, you own the ecosystem — even without charging for the weights.

The RYS-XLarge Hack: Topping the Leaderboard Without Changing a Single Weight

Here’s where the Hugging Face open model rankings 2026 story takes a fascinating twist. As of January 2026, the top four models on the Open LLM Leaderboard are all descendants of a single architectural experiment called RYS-XLarge — and the technique behind it is almost absurdly simple.

RYS-XLarge takes Qwen2-72B and duplicates seven middle layers (layers 45-51), inflating the parameter count from 72B to 78B. That’s it. No retraining. No weight modifications. No new data. Just layer duplication. Yet this simple trick produced a model that scored 44.75 on the leaderboard, and when other researchers fine-tuned on top of it, the scores climbed further. Three of those descendants:

- MaziyarPanahi/calme-3.2-instruct-78b: 52.08 (top score)

- MaziyarPanahi/calme-3.1-instruct-78b: 51.29

- dfurman/CalmeRys-78B-Orpo-v0.1: 51.23
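As a quick arithmetic check on the scores listed above, the short sketch below computes each derivative’s gain over the 44.75 reported for RYS-XLarge. The scores are taken from this article; the variable names are illustrative only.

```python
# Leaderboard scores as quoted in this article.
BASELINE = 44.75  # RYS-XLarge

scores = {
    "MaziyarPanahi/calme-3.2-instruct-78b": 52.08,
    "MaziyarPanahi/calme-3.1-instruct-78b": 51.29,
    "dfurman/CalmeRys-78B-Orpo-v0.1": 51.23,
}

# Absolute gain in leaderboard points over the layer-duplicated base.
gains = {name: round(score - BASELINE, 2) for name, score in scores.items()}

for name, gain in gains.items():
    relative = 100 * gain / BASELINE  # gain as a percentage of the baseline score
    print(f"{name}: +{gain:.2f} points ({relative:.1f}% relative)")
```

The gains land between roughly 6.5 and 7.3 points, which is the part of the final score attributable to fine-tuning rather than to layer duplication itself.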

The MuSR benchmark saw a remarkable 17.72% improvement from the layer duplication alone. This raises provocative questions about what our benchmarks are actually measuring: if duplicating layers without any training can boost scores this dramatically, are we testing intelligence — or testing our ability to game the evaluation?
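To make the layer duplication behind RYS-XLarge concrete, here is a minimal sketch of the resulting layer order. It is not the RYS-XLarge author’s code. It assumes that Qwen2-72B has 80 decoder layers, that the duplicated block runs immediately after the original block, and that parameters scale roughly with layer count (embeddings are ignored). The function name `duplicated_layer_order` is hypothetical.

```python
def duplicated_layer_order(num_layers: int, start: int, end: int) -> list[int]:
    """Return layer indices for a stack where layers start..end (inclusive) run twice.

    No weights change: the repeated indices point at the same original layers.
    """
    if not (0 <= start <= end < num_layers):
        raise ValueError("duplicated block must lie inside the layer stack")
    original = list(range(num_layers))
    block = original[start:end + 1]
    # Layers up to `end`, then the block again, then the remaining layers.
    return original[:end + 1] + block + original[end + 1:]


NUM_LAYERS = 80  # assumption: decoder depth of Qwen2-72B
order = duplicated_layer_order(NUM_LAYERS, start=45, end=51)

# Rough size estimate, assuming parameters scale with the number of layers.
approx_params_b = 72 * len(order) / NUM_LAYERS

print(len(order))                 # 87 layers after duplication
print(round(approx_params_b, 1))  # 78.3, in line with the quoted ~78B
```

Under these assumptions, seven extra layers out of 80 account for the jump from 72B to roughly 78B parameters, which matches the figures quoted above.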

Open-R1: Hugging Face’s Bid to Reproduce the DeepSeek Pipeline

Recognizing DeepSeek’s impact, Hugging Face launched the Open-R1 project — an ambitious initiative to fully reproduce the DeepSeek-R1 training pipeline in the open. Led by Elie Bakouch, Leandro von Werra, and Lewis Tunstall, the project follows a three-step roadmap:

- Step 1: Distill reasoning datasets from DeepSeek-R1 outputs

- Step 2: Replicate the reinforcement learning pipeline, specifically the GRPO (Group Relative Policy Optimization) approach

- Step 3: Implement the full multi-stage training procedure
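The core idea behind GRPO in Step 2 can be sketched in a few lines: several completions are sampled for the same prompt, and each one is scored relative to the rest of its group instead of against a learned value model. The snippet below is an illustrative sketch, not Open-R1’s implementation. The function name, the example rewards, and the use of the population standard deviation are assumptions.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize rewards within one group of completions for the same prompt.

    Each advantage is (reward - group mean) / (group std + eps), so completions
    that beat their siblings get a positive signal and the rest a negative one.
    """
    if not rewards:
        raise ValueError("need at least one reward")
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; real implementations may differ
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled answers (1.0 = correct, 0.0 = wrong).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

When every completion in a group receives the same reward, all advantages come out as zero, so that prompt contributes no learning signal. The `eps` term is there only to avoid division by zero in that case.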

Open-R1 matters because DeepSeek published the weights and a technical report but not the training data or code. By making the entire pipeline reproducible, Hugging Face aims to democratize not just the model, but the methodology. That’s the difference between giving someone a fish and teaching them to fish, except in this case the fish is a 671B Mixture-of-Experts reasoning model.

Beyond LLMs: NVIDIA Cosmos and Red Hat AI Enter the Scene

January 2026 isn’t just about language models. NVIDIA released several models to Hugging Face including Cosmos Predict 2.5 (world foundation models), Reason 2 (reasoning), and Isaac GR00T N1.6 (humanoid robotics). Meanwhile, Red Hat shipped AI 3.3 with validated model batches, signaling that enterprise adoption of open models is moving from experiment to production.

As Red Hat’s State of Open Source AI Models report highlights, 2025 also saw IBM release Granite 4 with ISO 42001 certification and OpenAI’s surprising gpt-oss release (120B/20B parameter variants). The Hugging Face open model rankings 2026 reflect a world where open-source isn’t just competitive — it’s the default development paradigm.

The Bigger Picture: From Model Wars to System Wars

Perhaps the most insightful observation from Hugging Face’s retrospective series is that competition has fundamentally shifted. It’s no longer about which model scores highest on a benchmark (as the RYS-XLarge saga demonstrates, benchmarks are fragile). The real competition has moved to system-level capabilities: ecosystems, application frameworks, infrastructure, and deployment pipelines.

Think about it this way: Qwen’s 113,000 derivatives matter more than any single benchmark score. DeepSeek’s cultural impact matters more than its parameter count. The Chinese labs’ collective 15% market share matters more than any individual model release. We’ve entered an era where the winner isn’t the best model — it’s the best platform.

Hugging Face Open Model Rankings 2026: What This Means Going Forward

The Hugging Face open model rankings 2026 paint a clear picture: the open-source AI landscape has become genuinely multipolar. Here’s what to watch for in the months ahead:

- Benchmark reform: The RYS-XLarge layer duplication trick exposed weaknesses in current evaluation. Expect new, harder-to-game benchmarks

- Chinese lab acceleration: With 15% share and climbing, expect ByteDance, Baidu, and Tencent to become as familiar as Meta and Google in the open-source world

- Enterprise open-source: Red Hat’s validated batches and IBM’s certified models signal that CIOs are finally ready to deploy open models in production

- Reasoning model proliferation: Open-R1 and similar projects will make DeepSeek-class reasoning accessible to everyone

One year ago, DeepSeek showed that open-source could match proprietary performance at a fraction of the cost. Now, the ecosystem that moment spawned is rewriting the rules of AI development itself. The question is no longer whether open models can compete — it’s whether closed models can survive the flood.
