Meta Llama 4 Scout: How 17B Parameters and a 10M Token Window Rewrote Enterprise AI Economics in 10 Months

February 9, 2026

Loudness Mastering Streaming Platforms: The Complete 2026 LUFS Standards Guide

February 10, 2026Remember waiting six months just to get your hands on an H100? NVIDIA just flipped the board again. The NVIDIA Blackwell B300, which began shipping in January 2026, is now entering full volume production — and the rules of the AI infrastructure game are changing completely. With 288GB of HBM3e per GPU, 15 PFLOPS of dense FP4 compute, and 60,000 rack shipments projected for 2026, let’s break down exactly what these numbers mean for the industry.

NVIDIA Blackwell B300 Core Specs: The 288GB HBM3e Advantage

The headline number for the B300 (officially dubbed ‘Blackwell Ultra’) is memory. Each GPU packs 288GB of HBM3e in a 12-high stack configuration — a 55.6% increase over the B200’s 180GB. Memory bandwidth reaches 8TB/s, up from B200’s 7.7TB/s. This isn’t just an incremental spec bump. It fundamentally changes what’s possible: trillion-parameter frontier models can now be fine-tuned and served from a single node, without the complex model parallelism that was previously required.

Compute performance tells an equally dramatic story. The B300 delivers 15 PFLOPS of dense FP4, making it 1.5x faster than the B200. FP8 comes in at 7,000 TFLOPS, FP16 at 3,500 TFLOPS, and FP8 throughput runs approximately 3.5x higher than the H200. In real-world LLM inference benchmarks, the B300 shows 11-15x throughput improvement over the H100. For Llama 70B specifically, published benchmarks report approximately 100,000 tokens per second in FP8 and 150,000+ tokens per second in FP4.

Training workloads see significant gains as well. In NVIDIA’s internal MLPerf-style benchmarks, GPT-4-class model training throughput improved by 35% over the B200, while LLM serving tokens-per-second jumped 45-50%. The 1,400W TDP per GPU is substantial, but the performance-per-watt ratio still represents an efficiency improvement over the previous generation. Each watt consumed delivers more useful compute than its predecessor.

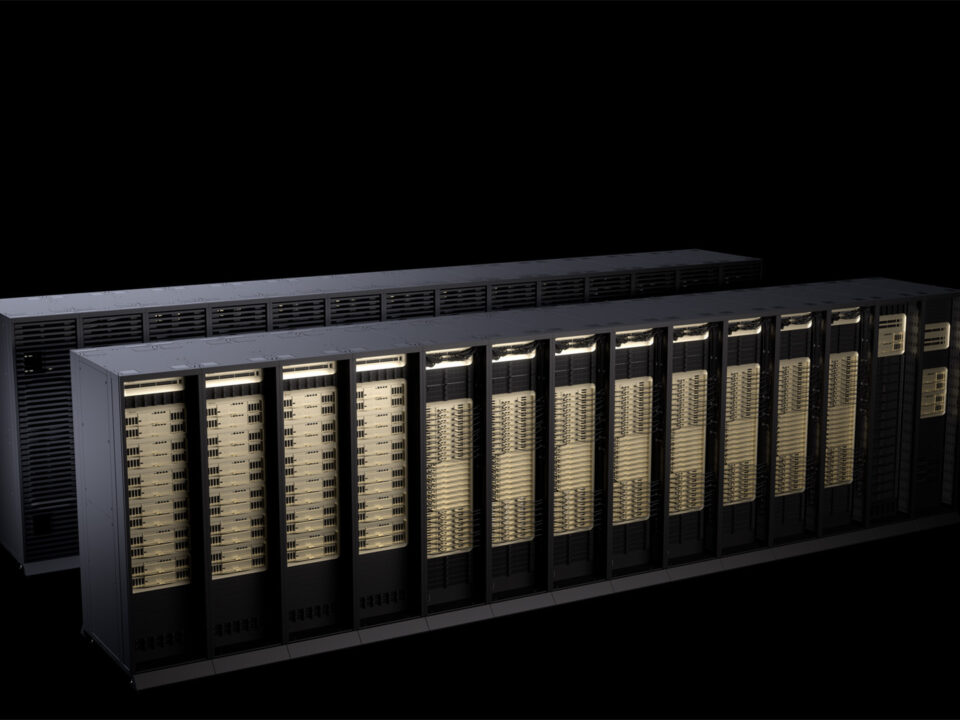

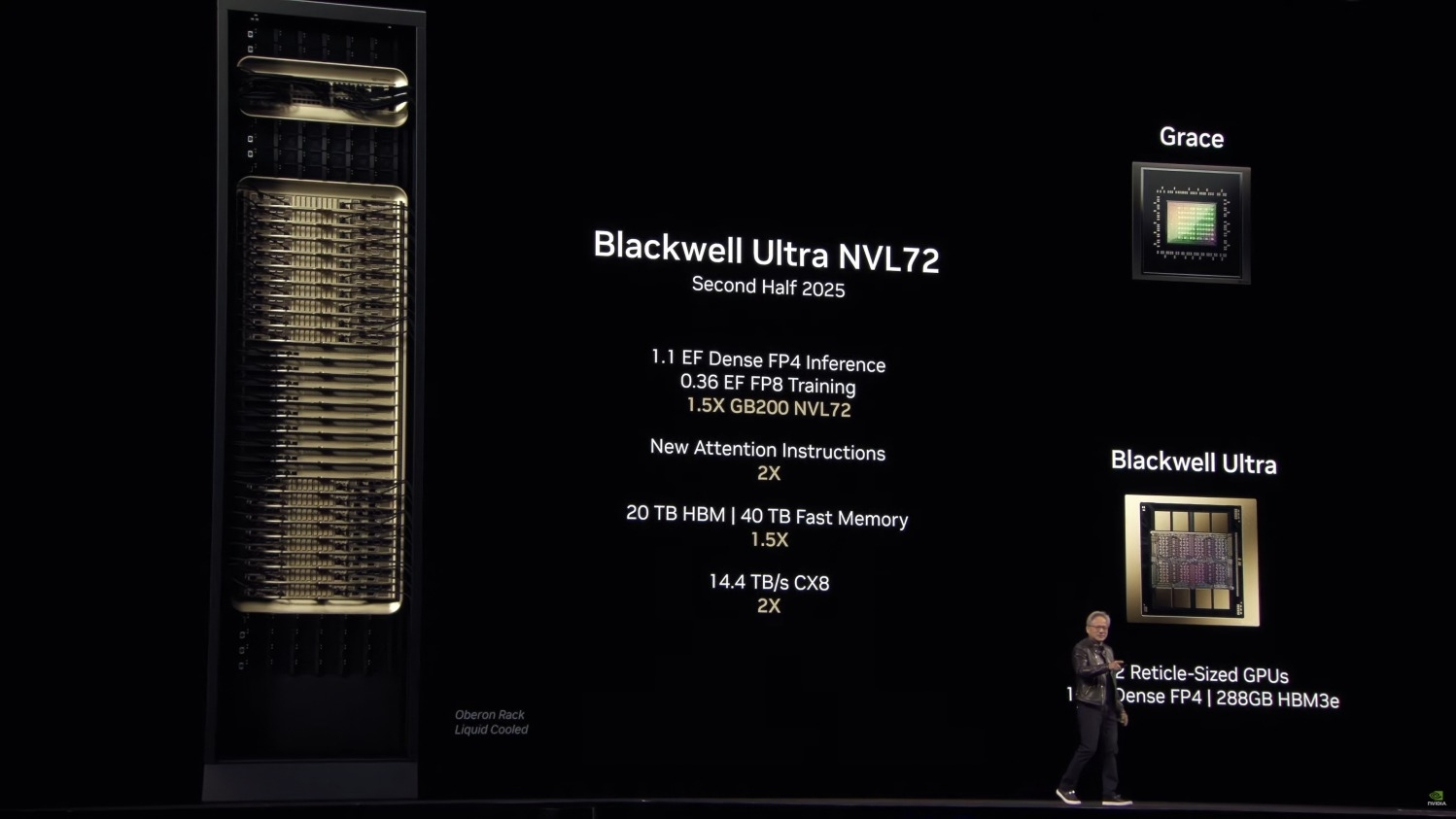

GB300 NVL72: 1.1 Exaflops in a Single Rack

Individual GPU performance matters, but the real paradigm shift happens at the system level. The GB300 NVL72 integrates 72 Blackwell Ultra GPUs and 36 Arm-based Grace CPUs into a single fully liquid-cooled rack. The result is a staggering 1.1 exaflops of FP4 compute from one rack. To put that in perspective, this level of compute was the domain of national-lab supercomputers just a few years ago. Now it fits in a single server rack that a cloud provider can deploy at scale.

The interconnect fabric is equally impressive. NVLink 5 provides 1.8TB/s of inter-GPU bandwidth, enabling the kind of tight coupling needed for multi-GPU training on massive models. ConnectX-8 networking doubles the previous generation at 1.6T, supporting the scale-out requirements of modern AI clusters. The DGX B300 system, built around an 8-GPU configuration paired with Intel Xeon 6776P processors, delivers 2.1TB of total GPU memory. Every B300 system requires Direct Liquid Cooling (DLC) — at 11.2kW for an 8-GPU system before CPUs and networking, air cooling is physically impossible at this power density.

Production Ramp: 60,000 Racks Projected for 2026

The production numbers paint a clear picture of unprecedented scale. According to Morgan Stanley’s projections, demand for NVIDIA AI server cabinets will surge from approximately 28,000 units in 2025 to at least 60,000 units in 2026 — more than doubling year-over-year. GB300 shipments specifically are projected to increase 129% compared to the previous generation. NVIDIA improved production yields by reverting to the older Bianca board configuration instead of the newer Cordelia design, a strategic decision that simplified manufacturing complexity and allowed suppliers like Foxconn to better manage supply chain requirements.

The supply chain is ramping rapidly on multiple fronts. Foxconn (Hon Hai) became the first manufacturer to complete mass production and delivery of both GB200 and GB300 full cabinets, establishing the production template for other ODM partners. Quanta, Wistron, and Wiwynn have all achieved NVIDIA Certified Systems certification and joined the production effort, adding critical manufacturing capacity. Meanwhile, TSMC is producing 4NP process silicon at scale in its Fab 21 facility in Arizona, significantly de-risking the North American supply chain. This carries geopolitical significance beyond pure manufacturing — with US-China tensions affecting semiconductor trade, domestic production provides strategic supply stability.

The HBM Supply War: What 2026’s Sold-Out Allocation Means

The critical bottleneck for B300 production isn’t the GPU die itself — it’s HBM3e memory. SK Hynix CFO Kim Jae-joon stated it bluntly: “We have already sold out our entire 2026 HBM supply.” Samsung and Micron are in identical positions, with their 2025-2026 HBM production fully contracted. Each B300 GPU requires 12-high HBM3e stacks, and the yields on these high-density packages aren’t perfect yet, compounding the supply constraint. The packaging complexity means that even small yield improvements translate into significant production volume gains.

TSMC’s CoWoS (Chip-on-Wafer-on-Substrate) packaging capacity is expanding to 95,000 wafers per month by 2026, but that may still fall short of demand. This supply-demand imbalance paradoxically strengthens NVIDIA’s market position. Cloud providers that secure B300 allocation can command premium pricing — early cloud rates range from $2.90 to $18.00 per GPU per hour, with significant variance based on contract terms and commitment levels. A DGX B300 system (8 GPUs) costs approximately $300,000, with hyperscaler volume pricing around $250,000. For comparison, H200 instances currently run $2.50-3.80/hr, while H100s have settled at $1.49-2.99/hr as they become more widely available.

B300 vs B200 vs H100: The Generational Leap in Numbers

To truly appreciate the B300’s position, it helps to see the generational progression side by side. The H100 launched with 80GB of HBM3 and 3,958 TFLOPS of FP8 performance. The B200 jumped to 180GB of HBM3e with roughly 9,000 TFLOPS of FP4, marking NVIDIA’s first serious push into 4-bit inference at scale. Now the B300 pushes to 288GB of HBM3e and 15,000 TFLOPS of FP4 — meaning that in just two generations, NVIDIA has increased GPU memory by 3.6x and FP4 compute by approximately 1.67x over the B200.

The memory bandwidth story follows a similar trajectory: H100’s 3.35TB/s grew to B200’s 7.7TB/s and now B300’s 8TB/s. While the B200-to-B300 bandwidth jump is modest at 3.9%, the memory capacity increase is where the real value lies. Running a 70B parameter model in FP16 requires roughly 140GB of memory just for weights — comfortably within the B300’s 288GB envelope but tight on a B200’s 180GB when you account for KV cache and activation memory during inference. For 175B+ parameter models, the B300’s memory headroom becomes genuinely transformative.

Power consumption has also escalated: H100 drew 700W, B200 pulled 1,000W, and B300 now demands 1,400W. But efficiency gains mean the B300 delivers roughly 10.7 TFLOPS per watt of FP4, compared to B200’s approximately 9 TFLOPS per watt. The cost per inference token continues to drop with each generation, even as absolute hardware costs rise. This is the fundamental dynamic driving AI infrastructure investment: the economics improve even as the hardware gets more expensive.

What the NVIDIA Blackwell B300 Ramp Means for Creators and Developers

The B300 production ramp represents a genuine step change in AI infrastructure accessibility, even if individual access to B300 hardware remains expensive. That massive 288GB memory pool enables fine-tuning and inference on model sizes that were previously impractical on a single GPU. Running a Llama 70B-class model with high batch throughput in real time becomes straightforward, and the 11-15x inference throughput improvement over H100 directly translates to better quality and lower latency for AI-powered services — from music generation and video processing to text generation and code completion.

Hyperscalers including Microsoft, Amazon, and Meta are driving mass adoption, which will steadily improve the price-performance ratio of cloud-based AI services through 2026. The downstream effects will be felt by anyone building AI-powered products: faster model serving, larger context windows, more sophisticated inference pipelines — all at gradually decreasing costs. For individual studios and small teams, cloud access remains the practical path, since harnessing B300 performance directly requires liquid cooling infrastructure and substantial power delivery that’s impractical outside of data center environments.

The data center transformation required for B300 deployment is itself a significant industry shift. Traditional air-cooled facilities simply cannot support the thermal density of Blackwell Ultra hardware. Cloud providers and enterprises are investing heavily in liquid cooling retrofits and purpose-built facilities, creating a ripple effect across the data center construction and cooling equipment industries. This infrastructure investment cycle will take years to fully play out, but it’s already underway at major hyperscaler facilities worldwide. For smaller organizations, this means that the cloud providers who invest early in B300-ready infrastructure will have a significant competitive advantage in attracting AI workloads over the coming quarters.

The competitive dynamics of the GPU cloud market suggest that B300 instance pricing should trend downward in the second half of 2026, as more capacity comes online and the next-generation Vera Rubin architecture (expected late 2026) begins to capture attention and investment. This generational handoff could make B300 compute even more cost-effective for end users. If you’re planning to leverage AI for music production pipelines, video generation, large language model applications, or any compute-intensive creative workflow, the second half of 2026 looks like the optimal entry point for accessing this level of performance at a reasonable price.

Get weekly AI, music, and tech trends delivered to your inbox.